Xiting Zhao

Collecting Large-Scale Robotic Datasets on a High-Speed Mobile Platform

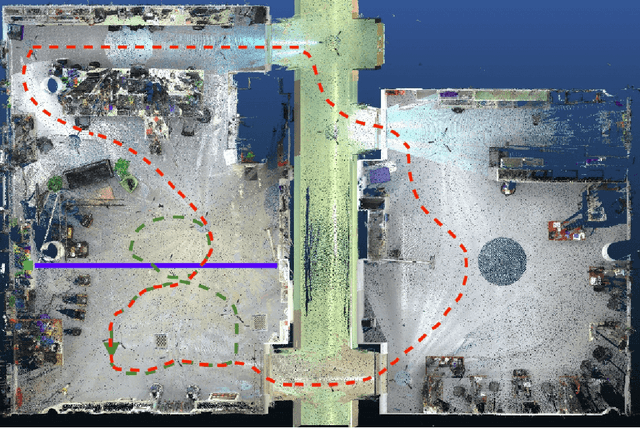

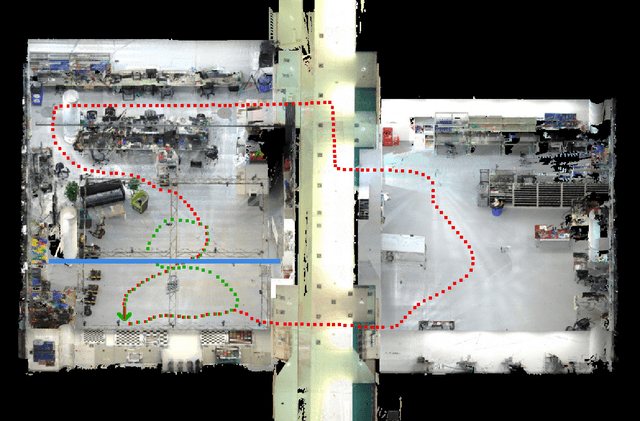

Aug 01, 2024

Abstract: Mobile robotics datasets are essential for robotics research, for example on Simultaneous Localization and Mapping (SLAM). The ShanghaiTech Mapping Robot was therefore constructed; it features a multitude of high-performance sensors and a 16-node cluster to collect all this data. That robot is based on a Clearpath Husky mobile base with a maximum speed of 1 meter per second. This is fine for indoor datasets, but collecting large-scale outdoor datasets requires a faster platform. This system paper introduces our high-speed mobile platform for data collection. The mapping robot is secured on a rear-steered flatbed car with maximum field of view. Additionally, two encoders collect odometry data from two of the car wheels, and an external sensor plate houses a down-looking RGB and event camera. With this setup, a dataset of more than 10 km in the underground parking garage and outdoors on our campus was collected and is published with this paper.
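As a rough illustration of how two wheel encoders can yield odometry, the following is a minimal sketch, not the authors' implementation. It assumes the two encoders sit on the left and right wheels of one non-steered axle, so a differential-style model applies; the abstract does not say which wheels are instrumented. The function name and all parameters (`ticks_per_rev`, `wheel_radius_m`, `track_width_m`) are hypothetical.

```python
import math


def wheel_odometry_step(ticks_left, ticks_right, ticks_per_rev,
                        wheel_radius_m, track_width_m):
    """Convert encoder tick increments into (distance, heading change).

    Assumption: both encoders are on the same non-steered axle, so the
    mean wheel travel approximates forward motion and the difference,
    divided by the track width, approximates the change in heading.
    """
    # Distance travelled by the wheel rim per encoder tick.
    metres_per_tick = 2.0 * math.pi * wheel_radius_m / ticks_per_rev
    d_left = ticks_left * metres_per_tick
    d_right = ticks_right * metres_per_tick
    distance = (d_left + d_right) / 2.0
    delta_heading = (d_right - d_left) / track_width_m  # radians
    return distance, delta_heading


if __name__ == "__main__":
    # Hypothetical values: 1000 ticks per revolution, 0.1 m wheel radius,
    # 1.2 m track width.
    print(wheel_odometry_step(500, 520, 1000, 0.1, 1.2))
```

Integrating these increments over time gives a dead-reckoned trajectory that drifts with wheel slip, which is why such odometry is normally used alongside the other sensors rather than on its own.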

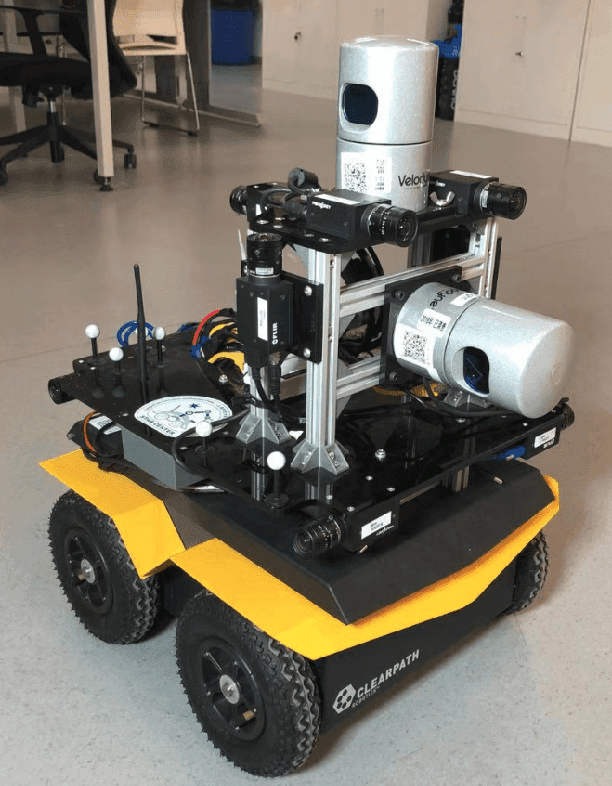

ShanghaiTech Mapping Robot is All You Need: Robot System for Collecting Universal Ground Vehicle Datasets

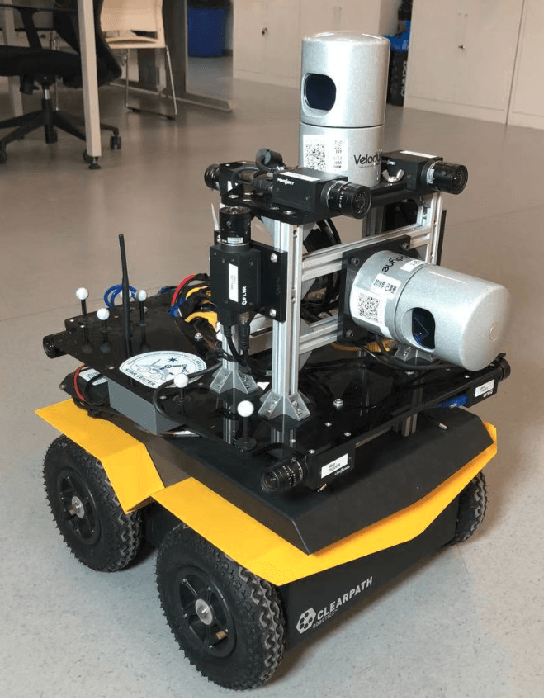

Jun 24, 2024

Abstract: This paper presents the ShanghaiTech Mapping Robot, a state-of-the-art unmanned ground vehicle (UGV) designed for collecting comprehensive multi-sensor datasets to support research in robotics, computer vision, and autonomous driving. The robot is equipped with a wide array of sensors including RGB cameras, RGB-D cameras, event-based cameras, IR cameras, LiDARs, mmWave radars, IMUs, ultrasonic range finders, and a GNSS RTK receiver. The sensor suite is integrated onto a specially designed mechanical structure with a centralized power system and a synchronization mechanism to ensure spatial and temporal alignment of the sensor data. A 16-node on-board computing cluster handles sensor control, data collection, and storage. We describe the hardware and software architecture of the robot in detail and discuss the calibration procedures for the various sensors. The capabilities of the platform are demonstrated through an extensive dataset collected in diverse real-world environments. To facilitate research, we make the dataset publicly available along with the associated robot sensor calibration data. Performance evaluations on a set of standard perception and localization tasks showcase the potential of the dataset to support developments in robot autonomy.

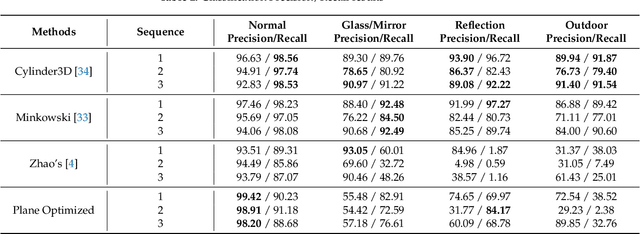

Detection and Utilization of Reflections in LiDAR Scans Through Plane Optimization and Plane SLAM

Jun 15, 2024

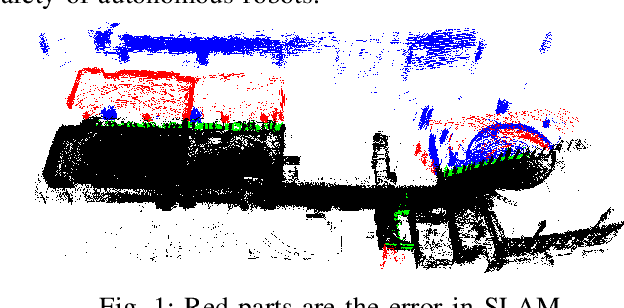

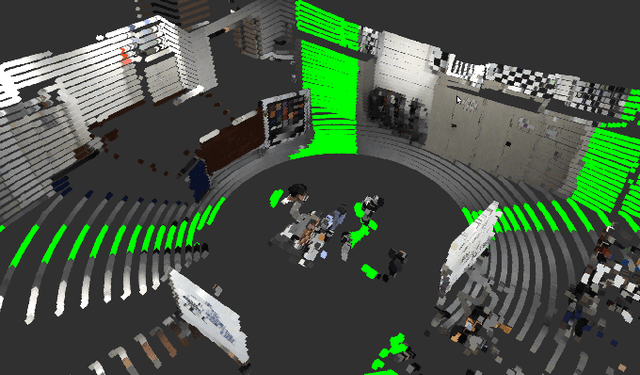

Abstract: In LiDAR sensing, glass, mirrors, and other materials often cause inconsistent readings, because a laser beam may report the distance to the glass, the distance to an object behind the glass, or the distance to a reflected object. This causes problems in robotics and 3D reconstruction, especially for localization, mapping, and thus navigation. With dual-return LiDARs and other methods, one can detect the glass plane and classify the points in a single scan. In this work we go one step further and construct a global, optimized map of reflective planes, in order to then classify all LiDAR readings at the end. As our experiments show, this approach provides superior classification accuracy compared to the single-scan approach. The code and data for this work are available as open source online.
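To make the three cases above concrete, here is a minimal sketch of classifying one LiDAR return against a known reflective plane. It is an illustration under stated assumptions, not the paper's method: the plane is treated as infinite and given as `normal · x + offset = 0` in the sensor frame, the sensor sits at the origin, and the names `classify_return` and `tol` are hypothetical. A return beyond the plane may be either an object seen through the glass or a reflected object; separating those two needs more information than this sketch uses.

```python
import math


def ray_plane_range(direction, normal, offset):
    """Range at which a ray from the origin hits the plane
    normal . x + offset = 0, or None if it never does."""
    denom = sum(n * d for n, d in zip(normal, direction))
    if abs(denom) < 1e-9:
        return None  # ray is parallel to the plane
    t = -offset / denom
    return t if t > 0 else None  # plane lies behind the sensor


def classify_return(point, normal, offset, tol=0.05):
    """Label a measured point relative to the plane along its own ray."""
    measured = math.sqrt(sum(c * c for c in point))
    if measured == 0.0:
        raise ValueError("point coincides with the sensor origin")
    direction = tuple(c / measured for c in point)
    plane_range = ray_plane_range(direction, normal, offset)
    if plane_range is None:
        return "no_intersection"
    if abs(measured - plane_range) <= tol:
        return "on_plane"  # the beam reported the glass itself
    if measured < plane_range:
        return "in_front"  # ordinary obstacle before the glass
    return "beyond_plane"  # seen through the glass, or a reflection


if __name__ == "__main__":
    # Hypothetical glass pane at x = 2 m.
    for p in [(2.0, 0.0, 0.0), (1.0, 0.0, 0.0), (5.0, 0.0, 0.0)]:
        print(p, classify_return(p, (1.0, 0.0, 0.0), -2.0))
```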

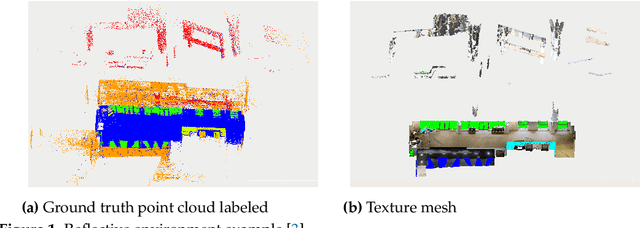

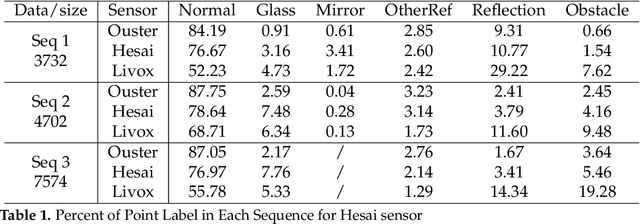

3DRef: 3D Dataset and Benchmark for Reflection Detection in RGB and Lidar Data

Mar 11, 2024

Abstract: Reflective surfaces present a persistent challenge for reliable 3D mapping and perception in robotics and autonomous systems. However, existing reflection datasets and benchmarks remain limited to sparse 2D data. This paper introduces the first large-scale 3D reflection detection dataset containing more than 50,000 aligned samples of multi-return Lidar, RGB images, and 2D/3D semantic labels across diverse indoor environments with various reflections. Textured 3D ground truth meshes enable automatic point cloud labeling to provide precise ground truth annotations. Detailed benchmarks evaluate three Lidar point cloud segmentation methods, as well as current state-of-the-art image segmentation networks for glass and mirror detection. The proposed dataset advances reflection detection by providing a comprehensive testbed with precise global alignment, multi-modal data, and diverse reflective objects and materials. It will drive future research towards reliable reflection detection. The dataset is publicly available at http://3dref.github.io

Advanced Mapping Robot and High-Resolution Dataset

Jul 23, 2020

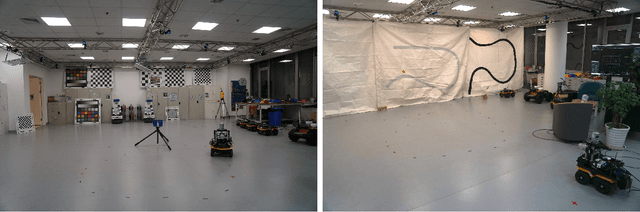

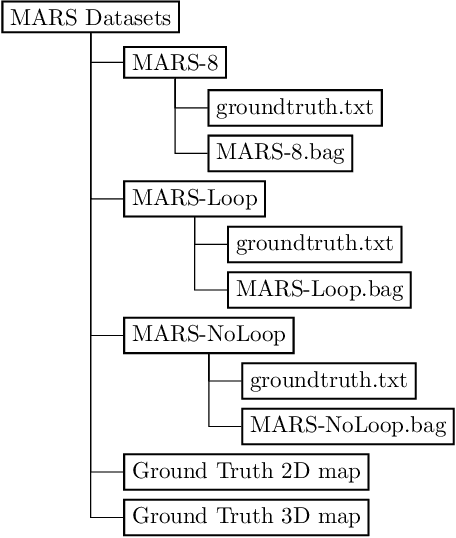

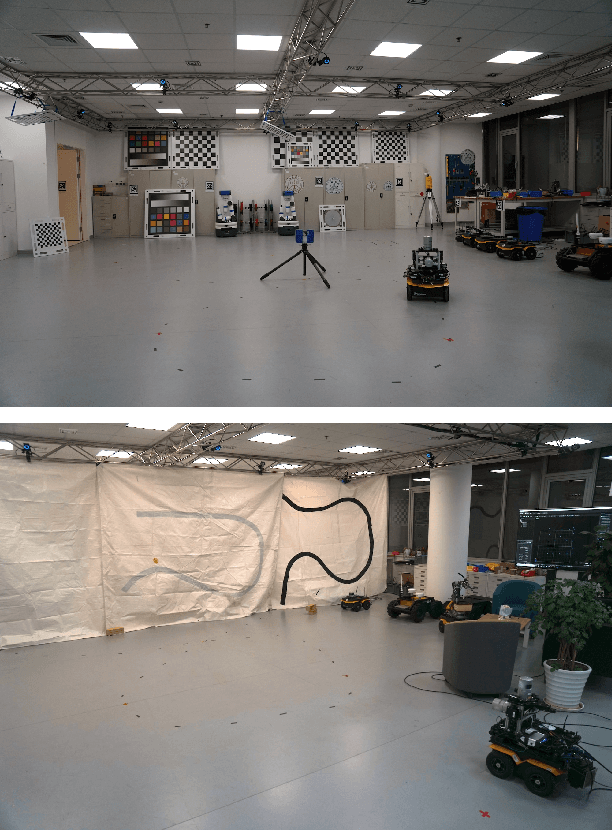

Abstract: This paper presents a fully hardware-synchronized mapping robot with support for a hardware-synchronized external tracking system, for highly precise timing and localization. Nine high-resolution cameras and two 32-beam 3D Lidars were used along with a professional, static 3D scanner for ground truth map collection. With all the sensors calibrated on the mapping robot, three datasets are collected to evaluate the performance of mapping algorithms within a room and between rooms. Based on these datasets we generate maps and trajectory data, which are then fed into evaluation algorithms. We provide the datasets for download, and the mapping and evaluation procedures are designed to be easily reproducible for maximum comparability. We have also conducted a survey of available robotics-related datasets and compiled a table of those datasets and a number of their properties.

Mapping with Reflection -- Detection and Utilization of Reflection in 3D Lidar Scans

Sep 27, 2019

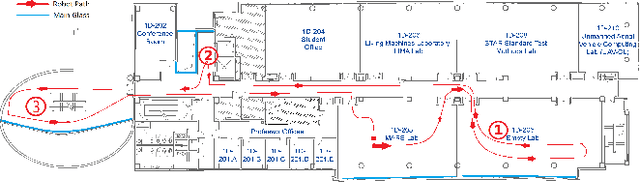

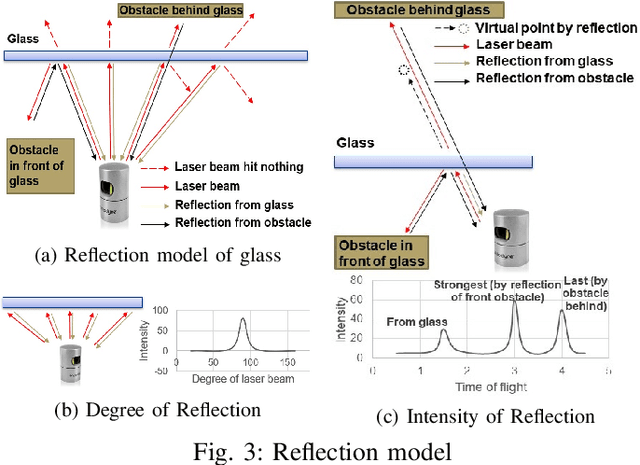

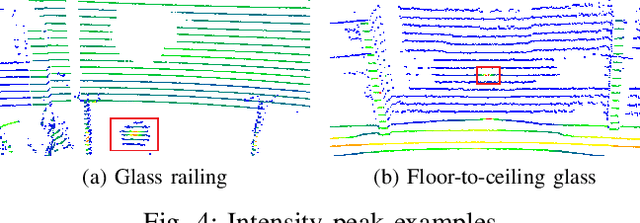

Abstract: This paper presents a method to detect reflections with 3D light detection and ranging (Lidar) and to use them to map the back side of objects. The method uses several approaches to analyze the point cloud, including intensity peak detection, dual return detection, plane fitting, and boundary finding. These approaches can classify the point cloud and detect the reflections in it. By mirroring the reflection points on the detected window pane and adding classification labels to the points, we can improve the map quality in a Simultaneous Localization and Mapping (SLAM) framework.
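The mirroring step mentioned above amounts to reflecting a point across the detected plane. The following is a minimal sketch of that geometry only, assuming the pane is already available as a plane `normal · x + offset = 0`; the function name `mirror_point` and this plane parameterization are illustrative choices, not taken from the paper.

```python
import math


def mirror_point(point, normal, offset):
    """Reflect a 3D point across the plane normal . x + offset = 0.

    The normal does not need to be unit length; it is normalized here.
    """
    length = math.sqrt(sum(c * c for c in normal))
    if length == 0.0:
        raise ValueError("plane normal must be non-zero")
    unit = tuple(c / length for c in normal)
    # Signed distance from the point to the plane.
    signed_dist = sum(u * p for u, p in zip(unit, point)) + offset / length
    # Move the point twice that distance back through the plane.
    return tuple(p - 2.0 * signed_dist * u for p, u in zip(point, unit))


if __name__ == "__main__":
    # Hypothetical pane at x = 1 m: a reflected point that appears at
    # x = 3 m behind the pane maps to x = -1 m on the sensor side.
    print(mirror_point((3.0, 1.0, 2.0), (1.0, 0.0, 0.0), -1.0))
```

Applying the function twice returns the original point, which is a convenient sanity check when testing plane estimates.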

Towards Generation and Evaluation of Comprehensive Mapping Robot Datasets

May 23, 2019

Abstract: This paper presents a fully hardware-synchronized mapping robot with support for a hardware-synchronized external tracking system, for highly precise timing and localization. We also employ a professional, static 3D scanner for ground truth map collection. Three datasets are generated to evaluate the performance of mapping algorithms within a room and between rooms. Based on these datasets we generate maps and trajectory data, which are then fed into evaluation algorithms. The mapping and evaluation procedures are designed to be easily reproducible for maximum comparability. Finally, we draw several conclusions about the tested SLAM algorithms.
