Tony Quek

DiffPO: Diffusion-styled Preference Optimization for Efficient Inference-Time Alignment of Large Language Models

Mar 06, 2025Abstract:Inference-time alignment provides an efficient alternative for aligning LLMs with humans. However, these approaches still face challenges, such as limited scalability due to policy-specific value functions and latency during the inference phase. In this paper, we propose a novel approach, Diffusion-styled Preference Optimization (\model), which provides an efficient and policy-agnostic solution for aligning LLMs with humans. By directly performing alignment at sentence level, \model~avoids the time latency associated with token-level generation. Designed as a plug-and-play module, \model~can be seamlessly integrated with various base models to enhance their alignment. Extensive experiments on AlpacaEval 2, MT-bench, and HH-RLHF demonstrate that \model~achieves superior alignment performance across various settings, achieving a favorable trade-off between alignment quality and inference-time latency. Furthermore, \model~demonstrates model-agnostic scalability, significantly improving the performance of large models such as Llama-3-70B.

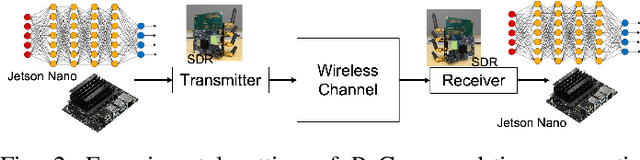

Diffusion-Driven Semantic Communication for Generative Models with Bandwidth Constraints

Jul 26, 2024Abstract:Diffusion models have been extensively utilized in AI-generated content (AIGC) in recent years, thanks to the superior generation capabilities. Combining with semantic communications, diffusion models are used for tasks such as denoising, data reconstruction, and content generation. However, existing diffusion-based generative models do not consider the stringent bandwidth limitation, which limits its application in wireless communication. This paper introduces a diffusion-driven semantic communication framework with advanced VAE-based compression for bandwidth-constrained generative model. Our designed architecture utilizes the diffusion model, where the signal transmission process through the wireless channel acts as the forward process in diffusion. To reduce bandwidth requirements, we incorporate a downsampling module and a paired upsampling module based on a variational auto-encoder with reparameterization at the receiver to ensure that the recovered features conform to the Gaussian distribution. Furthermore, we derive the loss function for our proposed system and evaluate its performance through comprehensive experiments. Our experimental results demonstrate significant improvements in pixel-level metrics such as peak signal to noise ratio (PSNR) and semantic metrics like learned perceptual image patch similarity (LPIPS). These enhancements are more profound regarding the compression rates and SNR compared to deep joint source-channel coding (DJSCC).

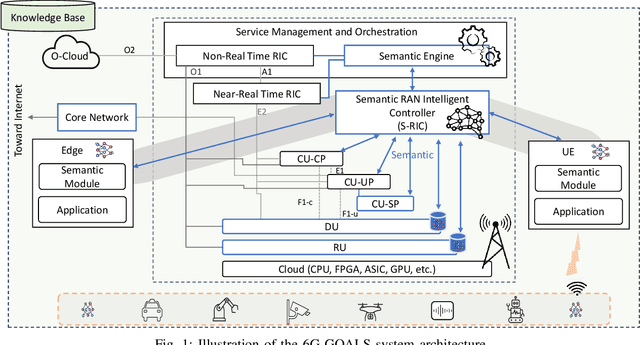

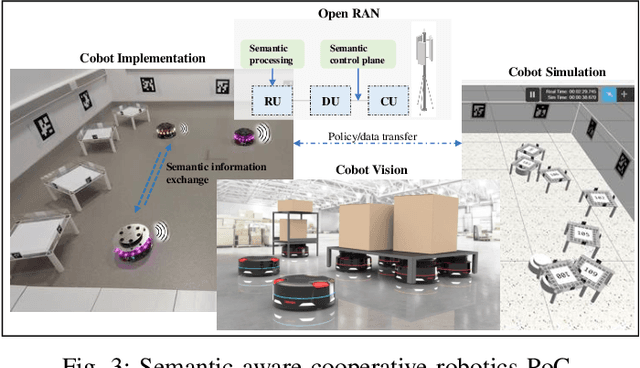

Goal-Oriented and Semantic Communication in 6G AI-Native Networks: The 6G-GOALS Approach

Feb 12, 2024

Abstract:Recent advances in AI technologies have notably expanded device intelligence, fostering federation and cooperation among distributed AI agents. These advancements impose new requirements on future 6G mobile network architectures. To meet these demands, it is essential to transcend classical boundaries and integrate communication, computation, control, and intelligence. This paper presents the 6G-GOALS approach to goal-oriented and semantic communications for AI-Native 6G Networks. The proposed approach incorporates semantic, pragmatic, and goal-oriented communication into AI-native technologies, aiming to facilitate information exchange between intelligent agents in a more relevant, effective, and timely manner, thereby optimizing bandwidth, latency, energy, and electromagnetic field (EMF) radiation. The focus is on distilling data to its most relevant form and terse representation, aligning with the source's intent or the destination's objectives and context, or serving a specific goal. 6G-GOALS builds on three fundamental pillars: i) AI-enhanced semantic data representation, sensing, compression, and communication, ii) foundational AI reasoning and causal semantic data representation, contextual relevance, and value for goal-oriented effectiveness, and iii) sustainability enabled by more efficient wireless services. Finally, we illustrate two proof-of-concepts implementing semantic, goal-oriented, and pragmatic communication principles in near-future use cases. Our study covers the project's vision, methodologies, and potential impact.

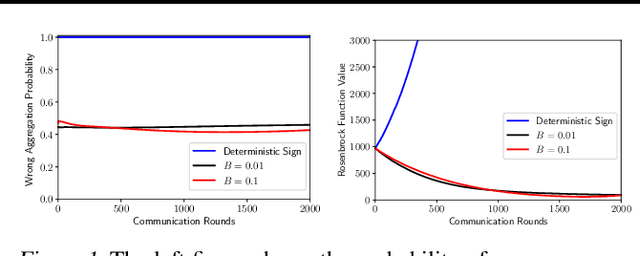

Magnitude Matters: Fixing SIGNSGD Through Magnitude-Aware Sparsification in the Presence of Data Heterogeneity

Feb 19, 2023

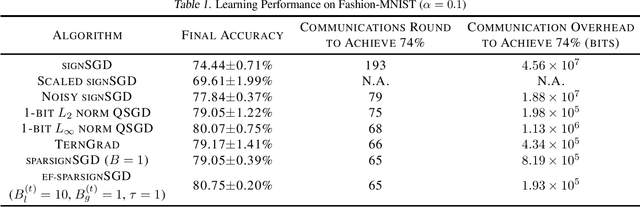

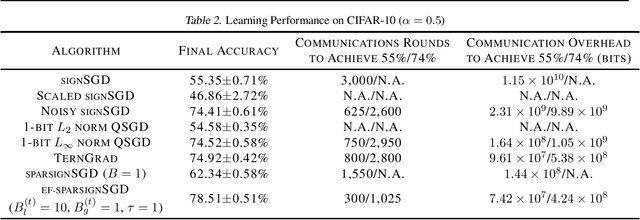

Abstract:Communication overhead has become one of the major bottlenecks in the distributed training of deep neural networks. To alleviate the concern, various gradient compression methods have been proposed, and sign-based algorithms are of surging interest. However, SIGNSGD fails to converge in the presence of data heterogeneity, which is commonly observed in the emerging federated learning (FL) paradigm. Error feedback has been proposed to address the non-convergence issue. Nonetheless, it requires the workers to locally keep track of the compression errors, which renders it not suitable for FL since the workers may not participate in the training throughout the learning process. In this paper, we propose a magnitude-driven sparsification scheme, which addresses the non-convergence issue of SIGNSGD while further improving communication efficiency. Moreover, the local update scheme is further incorporated to improve the learning performance, and the convergence of the proposed method is established. The effectiveness of the proposed scheme is validated through experiments on Fashion-MNIST, CIFAR-10, and CIFAR-100 datasets.

On the $f$-Differential Privacy Guarantees of Discrete-Valued Mechanisms

Feb 19, 2023

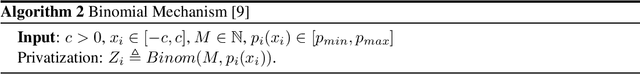

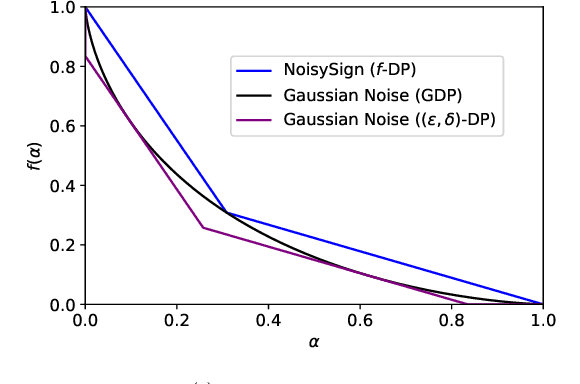

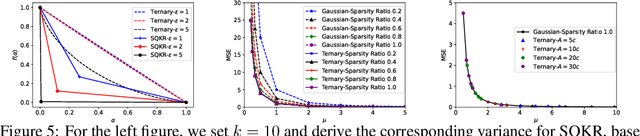

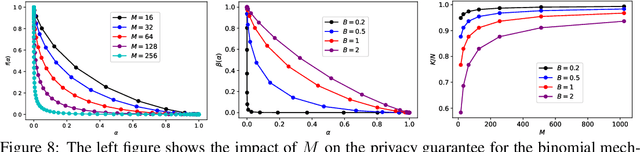

Abstract:We consider a federated data analytics problem in which a server coordinates the collaborative data analysis of multiple users with privacy concerns and limited communication capability. The commonly adopted compression schemes introduce information loss into local data while improving communication efficiency, and it remains an open question whether such discrete-valued mechanisms provide any privacy protection. Considering that differential privacy has become the gold standard for privacy measures due to its simple implementation and rigorous theoretical foundation, in this paper, we study the privacy guarantees of discrete-valued mechanisms with finite output space in the lens of $f$-differential privacy (DP). By interpreting the privacy leakage as a hypothesis testing problem, we derive the closed-form expression of the tradeoff between type I and type II error rates, based on which the $f$-DP guarantees of a variety of discrete-valued mechanisms, including binomial mechanisms, sign-based methods, and ternary-based compressors, are characterized. We further investigate the Byzantine resilience of binomial mechanisms and ternary compressors and characterize the tradeoff among differential privacy, Byzantine resilience, and communication efficiency. Finally, we discuss the application of the proposed method to differentially private stochastic gradient descent in federated learning.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge