Tengfei Xing

Global Stress Generation and Spatiotemporal Super-Resolution Physics-Informed Operator under Dynamic Loading for Two-Phase Random Materials

Apr 26, 2025

Abstract:Material stress analysis is a critical aspect of material design and performance optimization. Under dynamic loading, the global stress evolution in materials exhibits complex spatiotemporal characteristics, especially in two-phase random materials (TRMs). Failure of such materials is often associated with stress concentration, and the phase boundaries are key locations where stress concentration occurs. In practical engineering applications, the spatiotemporal resolution of acquired microstructural data and its dynamic stress evolution is often limited. This poses challenges for deep learning methods in generating high-resolution spatiotemporal stress fields, particularly for accurately capturing stress concentration regions. In this study, we propose a framework for global stress generation and spatiotemporal super-resolution in TRMs under dynamic loading. First, we introduce a diffusion model-based approach, named Spatiotemporal Stress Diffusion (STS-diffusion), for generating global spatiotemporal stress data. This framework incorporates a Space-Time U-Net (STU-net), and we systematically investigate the impact of different attention positions on model accuracy. Next, we develop a physics-informed network for spatiotemporal super-resolution, termed the Spatiotemporal Super-Resolution Physics-Informed Operator (ST-SRPINN). The proposed ST-SRPINN is an unsupervised learning method. The influence of data-driven and physics-informed loss function weights on model accuracy is explored in detail. Benefiting from physics-based constraints, ST-SRPINN requires only low-resolution stress field data during training and can upscale the spatiotemporal resolution of stress fields to arbitrary magnifications.

Predicting Stress in Two-phase Random Materials and Super-Resolution Method for Stress Images by Embedding Physical Information

Apr 26, 2025

Abstract:Stress analysis is an important part of material design. For materials with complex microstructures, such as two-phase random materials (TRMs), material failure is often accompanied by stress concentration. Phase interfaces in two-phase materials are critical locations for stress concentration, so keeping the stress prediction error low at phase boundaries is crucial. In practical engineering, the pixel resolution of acquired material microstructure images is limited, which limits the resolution of stress images generated by deep learning methods and makes it difficult to observe stress concentration regions. Existing Image Super-Resolution (ISR) technologies are all based on data-driven supervised learning. However, stress images obey natural physical constraints, which suggest a new approach to ISR. In this study, we constructed a stress prediction framework for TRMs. First, the framework uses a proposed Multiple Compositions U-net (MC U-net) to predict stress in low-resolution material microstructures. By considering the phase interface information of the microstructure, the MC U-net effectively reduces the excessive prediction errors at phase boundaries. Second, a Mixed Physics-Informed Neural Network (MPINN) based method for stress ISR (SRPINN) was proposed. By introducing physical constraints, the new method does not require paired stress images for training and can increase the resolution of stress images to any multiple. This enables a multiscale analysis of the stress concentration regions at phase boundaries. Finally, we performed stress analysis on TRMs with different phase volume fractions and loading states through transfer learning. The results show that the proposed stress prediction framework has satisfactory accuracy and generalization ability.

MapVision: CVPR 2024 Autonomous Grand Challenge Mapless Driving Tech Report

Jun 14, 2024

Abstract:Autonomous driving without high-definition (HD) maps demands a higher level of active scene understanding. In this competition, the organizers provided the multi-perspective camera images and standard-definition (SD) maps to explore the boundaries of scene reasoning capabilities. We found that most existing algorithms construct Bird's Eye View (BEV) features from these multi-perspective images and use multi-task heads to delineate road centerlines, boundary lines, pedestrian crossings, and other areas. However, these algorithms perform poorly at the far end of roads and struggle when the primary subject in the image is occluded. Therefore, in this competition, we not only used multi-perspective images as input but also incorporated SD maps to address this issue. We employed map encoder pre-training to enhance the network's geometric encoding capabilities and utilized YOLOX to improve traffic element detection precision. Additionally, for area detection, we innovatively introduced LDTR and auxiliary tasks to achieve higher precision. As a result, our final OLUS score is 0.58.

LDTR: Transformer-based Lane Detection with Anchor-chain Representation

Mar 21, 2024

Abstract:Despite recent advances in lane detection methods, scenarios with limited or no visual clues of lanes due to factors such as lighting conditions and occlusion remain challenging and crucial for automated driving. Moreover, current lane representations require complex post-processing and struggle with specific instances. Inspired by the DETR architecture, we propose LDTR, a transformer-based model to address these issues. Lanes are modeled with a novel anchor-chain, regarding a lane as a whole from the beginning, which enables LDTR to handle special lanes inherently. To enhance lane instance perception, LDTR incorporates a novel multi-referenced deformable attention module to distribute attention around the object. Additionally, LDTR incorporates two line IoU algorithms to improve convergence efficiency and employs a Gaussian heatmap auxiliary branch to enhance model representation capability during training. To evaluate lane detection models, we rely on Fréchet distance, parameterized F1-score, and additional synthetic metrics. Experimental results demonstrate that LDTR achieves state-of-the-art performance on well-known datasets.
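
The abstract names Fréchet distance as an evaluation metric but does not say which variant is used. As a rough illustration only, the sketch below computes the discrete Fréchet distance between two lanes represented as point lists. The function name `discrete_frechet` and the point-list representation are assumptions made for this example, not details taken from the paper.

```python
import math


def discrete_frechet(p, q):
    """Discrete Frechet distance between two polylines.

    p and q are non-empty lists of (x, y) points. This is a generic
    dynamic-programming sketch, not the evaluation code of LDTR.
    """
    n, m = len(p), len(q)
    if n == 0 or m == 0:
        raise ValueError("both polylines need at least one point")

    # ca[i][j] holds the coupling distance for prefixes p[:i+1] and q[:j+1].
    ca = [[0.0] * m for _ in range(n)]
    for i in range(n):
        for j in range(m):
            d = math.dist(p[i], q[j])
            if i == 0 and j == 0:
                ca[i][j] = d
            elif i == 0:
                ca[i][j] = max(ca[0][j - 1], d)
            elif j == 0:
                ca[i][j] = max(ca[i - 1][0], d)
            else:
                best_prev = min(ca[i - 1][j], ca[i - 1][j - 1], ca[i][j - 1])
                ca[i][j] = max(best_prev, d)
    return ca[n - 1][m - 1]


if __name__ == "__main__":
    predicted = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
    ground_truth = [(0.0, 1.0), (1.0, 1.0), (2.0, 1.0)]
    print(discrete_frechet(predicted, ground_truth))
```

Unlike a point-wise average error, this measure respects the ordering of points along each curve, which is one reason it is a natural fit for comparing lanes.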

CANet: Curved Guide Line Network with Adaptive Decoder for Lane Detection

Apr 23, 2023

Abstract:Lane detection is challenging due to complicated on-road scenarios and line deformation from different camera perspectives. Many solutions have been proposed, but they cannot handle corner lanes well. To address this problem, this paper proposes a new top-down deep learning lane detection approach, CANet. Each lane instance first produces a heat-map response on a U-shaped curved guide line at the global semantic level, so that the corresponding features of each lane are aggregated at the response point. CANet then obtains the heat-map response of the entire lane through conditional convolution, and finally decodes the point set describing each lane via an adaptive decoder. The experimental results show that CANet reaches SOTA in different metrics. Our code will be released soon.

S4OD: Semi-Supervised learning for Single-Stage Object Detection

Apr 09, 2022

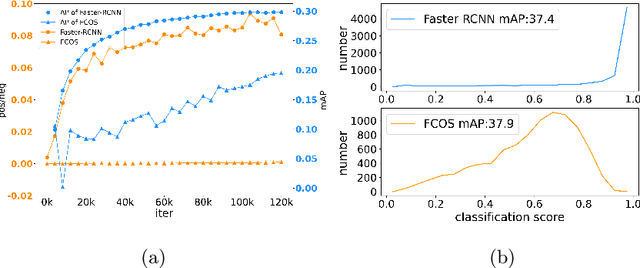

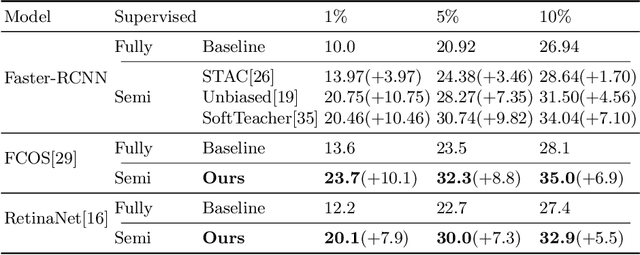

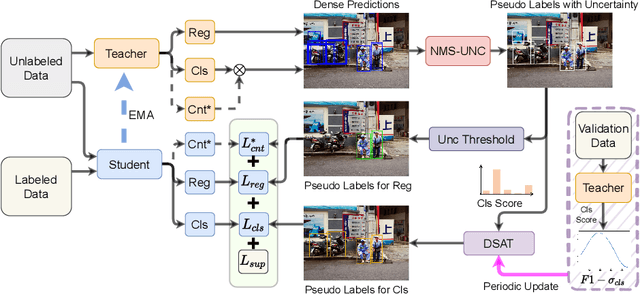

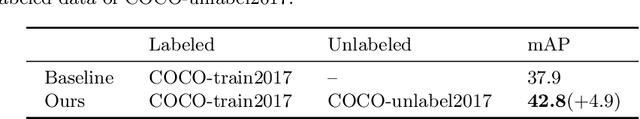

Abstract:Single-stage detectors suffer from extreme foreground-background class imbalance, while two-stage detectors do not. Therefore, in semi-supervised object detection, two-stage detectors can deliver remarkable performance by only selecting high-quality pseudo labels based on classification scores. However, directly applying this strategy to single-stage detectors would aggravate the class imbalance because fewer positive samples remain. Thus, single-stage detectors have to consider both the quality and the quantity of pseudo labels simultaneously. In this paper, we design a dynamic self-adaptive threshold (DSAT) strategy in the classification branch, which can automatically select pseudo labels to achieve an optimal trade-off between quality and quantity. Besides, to assess the regression quality of pseudo labels in single-stage detectors, we propose a module to compute the regression uncertainty of boxes based on Non-Maximum Suppression. By leveraging only 10% labeled data from COCO, our method achieves 35.0% AP with an anchor-free detector (FCOS) and 32.9% with an anchor-based detector (RetinaNet).

2nd Place Solution for VisDA 2021 Challenge -- Universally Domain Adaptive Image Recognition

Oct 27, 2021

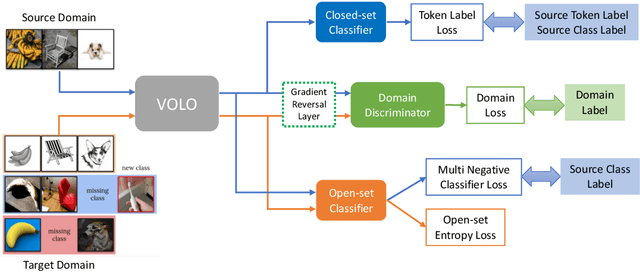

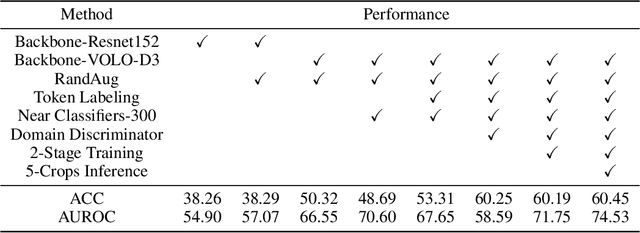

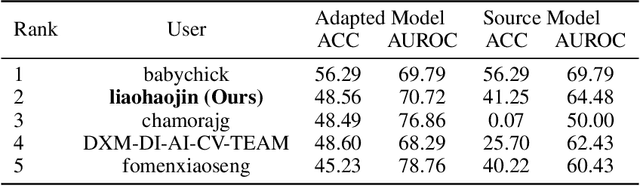

Abstract:The Visual Domain Adaptation (VisDA) 2021 Challenge calls for unsupervised domain adaptation (UDA) methods that can deal with both input distribution shift and label set variance between the source and target domains. In this report, we introduce a universal domain adaptation (UniDA) method by aggregating several popular feature extraction and domain adaptation schemes. First, we utilize VOLO, a Transformer-based architecture with state-of-the-art performance in several visual tasks, as the backbone to extract effective feature representations. Second, we modify the open-set classifier of OVANet to recognize the unknown class with competitive accuracy and robustness. As shown on the leaderboard, our proposed UniDA method ranks 2nd with 48.56% ACC and 70.72% AUROC in the VisDA 2021 Challenge.

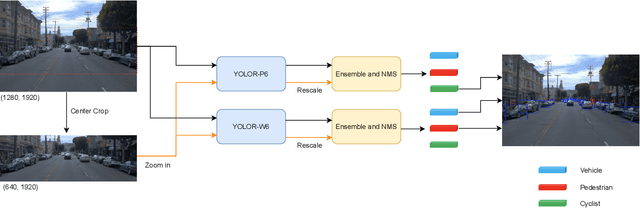

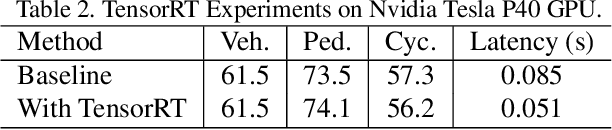

2nd Place Solution for Waymo Open Dataset Challenge -- Real-time 2D Object Detection

Jun 16, 2021

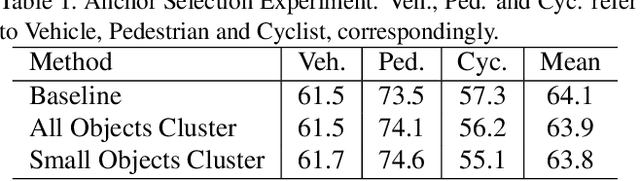

Abstract:In an autonomous driving system, it is essential to recognize vehicles, pedestrians and cyclists from images. Besides high prediction accuracy, the requirement of real-time running brings new challenges for convolutional network models. In this report, we introduce a real-time method to detect 2D objects from images. We aggregate several popular one-stage object detectors and train models with a variety of input strategies independently, to yield better performance for accurate multi-scale detection of each category, especially for small objects. For model acceleration, we leverage TensorRT to optimize the inference time of our detection pipeline. As shown on the leaderboard, our proposed detection framework ranks 2nd with 75.00% L1 mAP and 69.72% L2 mAP in the real-time 2D detection track of the Waymo Open Dataset Challenges, while achieving a latency of 45.8 ms/frame on an Nvidia Tesla V100 GPU.

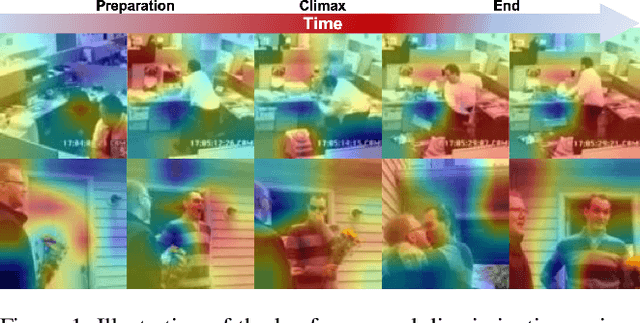

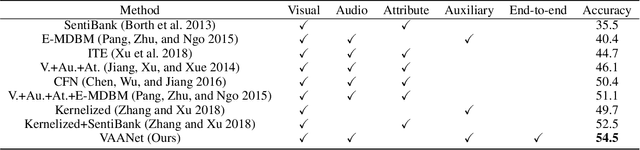

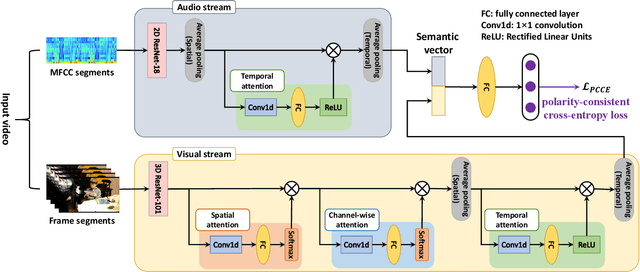

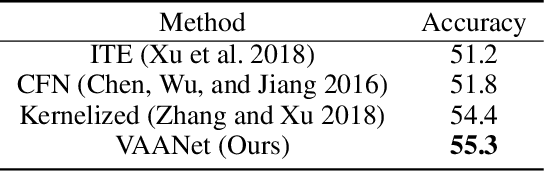

An End-to-End Visual-Audio Attention Network for Emotion Recognition in User-Generated Videos

Feb 12, 2020

Abstract:Emotion recognition in user-generated videos plays an important role in human-centered computing. Existing methods mainly employ a traditional two-stage shallow pipeline, i.e., extracting visual and/or audio features and training classifiers. In this paper, we propose to recognize video emotions in an end-to-end manner based on convolutional neural networks (CNNs). Specifically, we develop a deep Visual-Audio Attention Network (VAANet), a novel architecture that integrates spatial, channel-wise, and temporal attentions into a visual 3D CNN and temporal attentions into an audio 2D CNN. Further, we design a special classification loss, i.e., polarity-consistent cross-entropy loss, based on the polarity-emotion hierarchy constraint to guide the attention generation. Extensive experiments conducted on the challenging VideoEmotion-8 and Ekman-6 datasets demonstrate that the proposed VAANet outperforms the state-of-the-art approaches for video emotion recognition. Our source code is released at: https://github.com/maysonma/VAANet.
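
The abstract states only that the polarity-consistent cross-entropy loss is built on the polarity-emotion hierarchy; it does not give the formula. The sketch below shows one plausible form under stated assumptions: ordinary cross-entropy, scaled up by a factor `1 + lam` whenever the predicted emotion falls in a different polarity group than the true emotion. The function name `pcce_loss`, the `polarity` lookup list, and the penalty weight `lam` are all hypothetical choices for this example; the linked repository is the authoritative source for the actual loss.

```python
import math


def pcce_loss(logits, labels, polarity, lam=0.5):
    """Cross-entropy with an extra penalty on polarity mismatches.

    logits:   list of per-sample score lists, one score per emotion class
    labels:   list of true class indices
    polarity: polarity[c] is the polarity group of class c (e.g. 0 or 1)
    lam:      assumed penalty weight; not a value from the paper
    """
    total = 0.0
    for row, y in zip(logits, labels):
        # Numerically stable log-sum-exp for the softmax normalizer.
        m = max(row)
        log_z = m + math.log(sum(math.exp(v - m) for v in row))
        ce = log_z - row[y]

        # Penalize only when the predicted class has the wrong polarity.
        pred = max(range(len(row)), key=row.__getitem__)
        penalty = lam if polarity[pred] != polarity[y] else 0.0
        total += (1.0 + penalty) * ce
    return total / len(labels)


if __name__ == "__main__":
    # Four hypothetical classes: two in polarity group 0, two in group 1.
    polarity = [0, 0, 1, 1]
    same_polarity = pcce_loss([[0.0, 2.0, 0.0, 0.0]], [0], polarity, lam=1.0)
    cross_polarity = pcce_loss([[0.0, 0.0, 2.0, 0.0]], [0], polarity, lam=1.0)
    print(same_polarity, cross_polarity)
```

In this form, a wrong prediction that stays within the correct polarity costs the same as plain cross-entropy, while a prediction that crosses polarity costs more, which is the kind of hierarchy-aware pressure the abstract describes.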
