Jigar Doshi

NurtureNet: A Multi-task Video-based Approach for Newborn Anthropometry

May 09, 2024

Abstract: Malnutrition among newborns is a top public health concern in developing countries. Identification and subsequent growth monitoring are key to successful interventions. However, this is challenging in rural communities, where health systems tend to be inaccessible and under-equipped, with poor adherence to protocol. Our goal is to equip health workers and public health systems with a solution for contactless newborn anthropometry in the community. We propose NurtureNet, a multi-task model that fuses visual information (a video taken with a low-cost smartphone) with tabular inputs to regress multiple anthropometry estimates, including weight, length, head circumference, and chest circumference. We show that the visual proxy tasks of segmentation and keypoint prediction further improve performance. We establish the efficacy of the model through several experiments and achieve a relative error of 3.9% and a mean absolute error of 114.3 g for weight estimation. Compressing the model to 15 MB also allows offline deployment on low-cost smartphones.
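The abstract reports weight accuracy as a mean absolute error in grams and as a relative error in percent. The sketch below shows how such metrics are commonly computed; it assumes "relative error" means the mean absolute percentage error, which the abstract does not confirm, and the function name and sample weights are hypothetical.

```python
def weight_errors(true_g, pred_g):
    """Return (mean absolute error in grams, mean relative error in percent).

    Assumption: "relative error" is taken here to mean the mean absolute
    percentage error; the paper's exact definition may differ.
    """
    if not true_g or len(true_g) != len(pred_g):
        raise ValueError("inputs must be non-empty and of equal length")
    abs_err = [abs(p - t) for t, p in zip(true_g, pred_g)]
    mae = sum(abs_err) / len(abs_err)
    # Per-newborn error relative to the true weight (assumed > 0), averaged.
    rel_pct = 100.0 * sum(e / t for e, t in zip(abs_err, true_g)) / len(abs_err)
    return mae, rel_pct


# Hypothetical ground-truth and predicted weights in grams.
mae, rel = weight_errors([3000, 2500, 3200], [2900, 2600, 3200])
```

Reporting both metrics is useful because a fixed error in grams matters more for a low-birth-weight newborn than for a heavier one; the relative error captures that difference.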

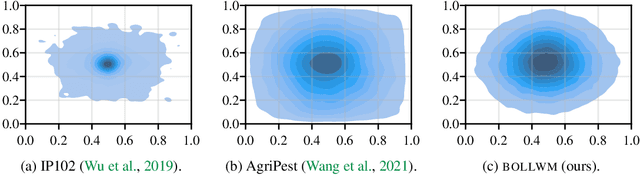

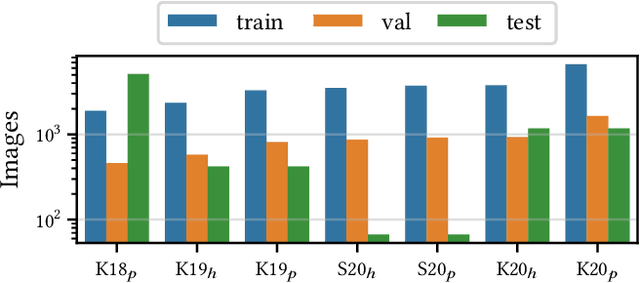

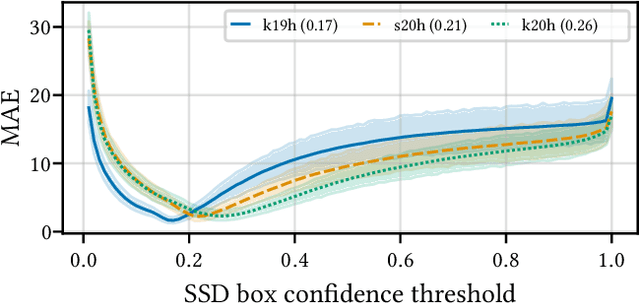

BOLLWM: A real-world dataset for bollworm pest monitoring from cotton fields in India

Apr 03, 2023

Abstract: This paper presents a dataset of agricultural pest images captured over five years by thousands of smallholder farmers and farming extension workers across India. The dataset has been used to support a mobile application that relies on artificial intelligence to assist farmers with pest management decisions. The data came from a mix of organized data collection and less controlled mobile application usage. This makes the dataset unique within the pest detection community, exhibiting a number of characteristics that place it closer to non-agricultural object detection datasets. This not only makes the dataset applicable to future pest management applications but also opens the door to a wide variety of other research agendas.

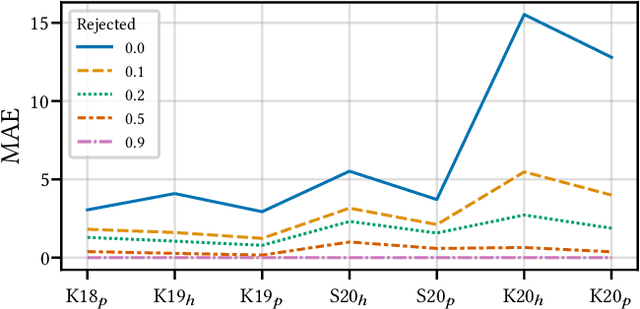

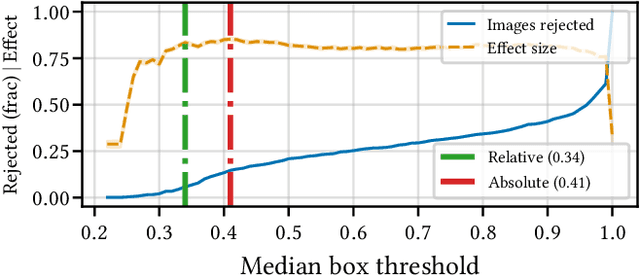

A Case for Rejection in Low Resource ML Deployment

Aug 15, 2022

Abstract: Building reliable AI decision support systems requires a robust training dataset, in terms of both quantity and diversity. Obtaining such datasets can be difficult in resource-limited settings or for applications in early stages of deployment. Sample rejection is one way to work around this challenge; however, much of the existing work in this area is ill-suited to such scenarios. This paper substantiates that position and proposes a simple solution as a proof-of-concept baseline.
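The abstract does not describe the proposed baseline itself. For readers unfamiliar with sample rejection, one common generic approach (not necessarily the paper's) is to reject any input whose maximum softmax probability falls below a threshold and defer it to a human. The threshold of 0.8 and the function names below are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_or_reject(logits, threshold=0.8):
    """Return the predicted class index, or None to reject the sample.

    Generic max-softmax-probability rejection; the threshold is a
    hypothetical value that would be tuned on held-out data.
    """
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return best if probs[best] >= threshold else None
```

A confident input such as `predict_or_reject([4.0, 0.0, 0.0])` is accepted as class 0, while an ambiguous one such as `predict_or_reject([1.0, 0.9, 0.0])` is rejected. The abstract's argument is that methods of this kind can be ill-suited when training data is scarce, which is the gap the paper targets.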

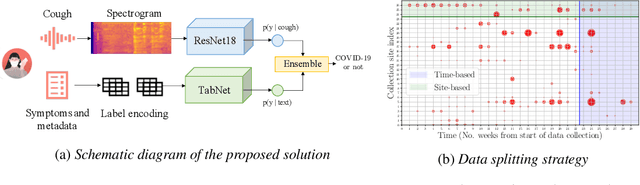

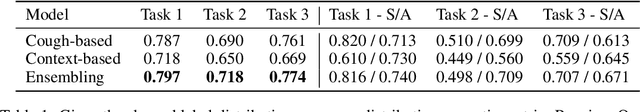

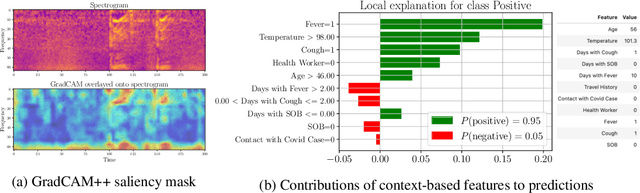

Impact of data-splits on generalization: Identifying COVID-19 from cough and context

Jun 05, 2021

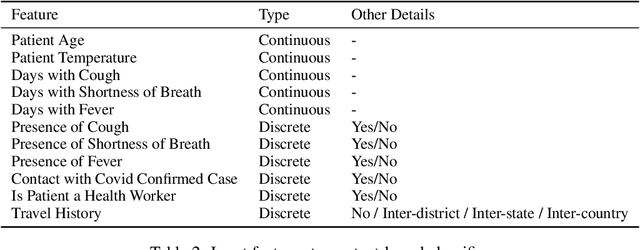

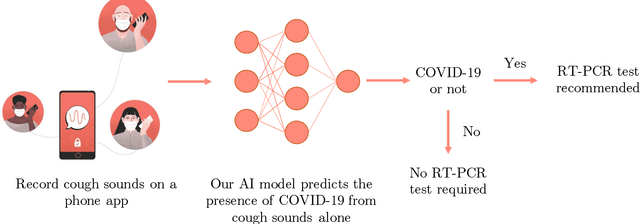

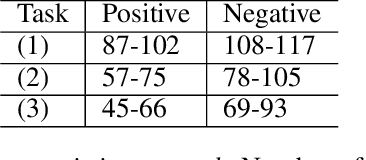

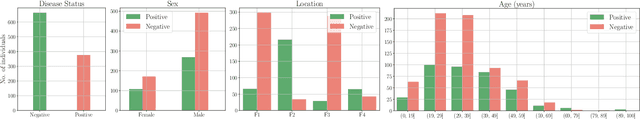

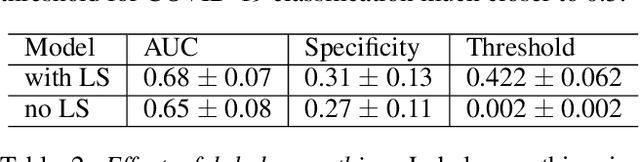

Abstract: Rapidly scaling screening, testing, and quarantine has been shown to be an effective strategy to combat the COVID-19 pandemic. We consider the application of deep learning techniques to distinguish individuals with COVID-19 from those without, using data acquirable from a phone. Using cough and context (symptoms and metadata) represents one such promising approach. Several independent works in this direction have shown promising results. However, none of them report performance across clinically relevant data splits, specifically splits where the development and test sets are separated in time (retrospective validation) and across sites (broad validation). Although there is meaningful generalization across these splits, the performance varies significantly (by up to 0.1 AUC). In addition, we study the performance for symptomatic and asymptomatic individuals across these three splits. Finally, we show that our model focuses on meaningful features of the input: cough bouts for cough and relevant symptoms for context. The code and checkpoints are available at https://github.com/WadhwaniAI/cough-against-covid
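The two clinically relevant splits named in the abstract can be illustrated with a short sketch. It assumes each record carries a collection date and a site identifier; the field names, cutoff date, and site labels are hypothetical and not taken from the released code.

```python
from datetime import date


def temporal_split(records, cutoff):
    """Retrospective validation: develop on records dated before `cutoff`,
    test on records dated on or after it."""
    dev = [r for r in records if r["date"] < cutoff]
    test = [r for r in records if r["date"] >= cutoff]
    return dev, test


def site_split(records, test_sites):
    """Broad validation: hold out every record collected at `test_sites`."""
    dev = [r for r in records if r["site"] not in test_sites]
    test = [r for r in records if r["site"] in test_sites]
    return dev, test


# Hypothetical records; field names are illustrative only.
records = [
    {"id": 1, "date": date(2020, 4, 1), "site": "A"},
    {"id": 2, "date": date(2020, 5, 1), "site": "B"},
    {"id": 3, "date": date(2020, 6, 1), "site": "A"},
    {"id": 4, "date": date(2020, 7, 1), "site": "C"},
]
time_dev, time_test = temporal_split(records, cutoff=date(2020, 6, 1))
site_dev, site_test = site_split(records, test_sites={"C"})
```

Unlike a random split, neither function lets the model see test-period or test-site data during development, which is why performance on these splits is a more realistic estimate of deployment behaviour.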

Multi-class segmentation under severe class imbalance: A case study in roof damage assessment

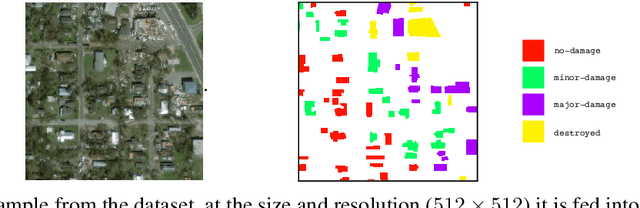

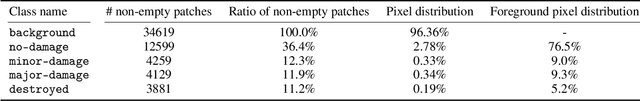

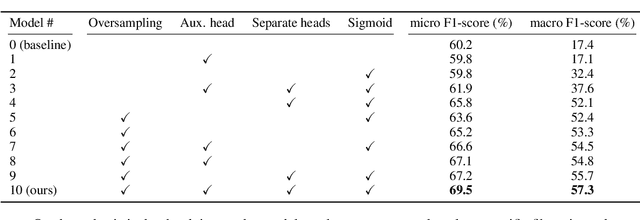

Oct 14, 2020

Abstract: The task of roof damage classification and segmentation from overhead imagery presents unique challenges. In this work, we address the challenge posed by strong class imbalance. We propose four distinct techniques that aim to mitigate this problem. Through a new scheme that feeds data to the network by oversampling the minority classes, together with three network architecture improvements, we boost the macro-averaged F1-score of a model by 39.9 percentage points, improving segmentation performance, especially on the minority classes.
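The abstract does not detail the oversampling scheme. A minimal sketch of the general idea, assuming each training tile is tagged with a single label (for example, its rarest damage class), draws tiles with probability inversely proportional to their class frequency so that every class contributes equally in expectation. The function names and labels are hypothetical.

```python
import random
from collections import Counter


def oversampling_weights(labels):
    """Per-sample weights inversely proportional to class frequency,
    so each class has the same total weight."""
    counts = Counter(labels)
    return [1.0 / counts[y] for y in labels]


def sample_batch(indices, labels, batch_size, rng=random):
    """Draw a batch of tile indices with replacement, favouring rare classes."""
    weights = oversampling_weights(labels)
    return rng.choices(indices, weights=weights, k=batch_size)


# Hypothetical tile labels: three common tiles, one rare "severe" tile.
labels = ["none", "none", "none", "severe"]
batch = sample_batch(list(range(len(labels))), labels, batch_size=8,
                     rng=random.Random(0))
```

Sampling with replacement means the rare tile is repeated many times per epoch, so this is usually paired with augmentation to limit overfitting. The macro-averaged F1-score reported in the abstract weights every class equally, which is why improvements on minority classes move it so strongly.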

Cough Against COVID: Evidence of COVID-19 Signature in Cough Sounds

Sep 23, 2020

Abstract: Testing capacity for COVID-19 remains a challenge globally due to the lack of adequate supplies, trained personnel, and sample-processing equipment. These problems are even more acute in rural and underdeveloped regions. We demonstrate that solicited-cough sounds collected over a phone, when analysed by our AI model, carry a statistically significant signal indicative of COVID-19 status (AUC 0.72, t-test, p < 0.01, 95% CI 0.61-0.83). This holds true for asymptomatic patients as well. Towards this, we collect the largest known (to date) dataset of microbiologically confirmed COVID-19 cough sounds, from 3,621 individuals. When used as a triage step within an overall testing protocol, enabling risk stratification of individuals before confirmatory tests, our tool can increase the testing capacity of a healthcare system by 43% at a disease prevalence of 5%, without additional supplies, trained personnel, or physical infrastructure.
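To make the triage argument concrete, the sketch below uses a deliberately simplified model that is an assumption, not the paper's calculation: everyone is screened by the cough model, only flagged individuals receive a confirmatory test, and screening itself is not capacity-limited. The operating point is hypothetical, so the result is not meant to reproduce the 43% figure.

```python
def triage_capacity_gain(prevalence, sensitivity, specificity):
    """Relative increase in the number of people a fixed confirmatory-test
    budget can cover when a triage step refers only flagged individuals.

    Simplified model (assumption): everyone is triaged, only those the triage
    flags receive a confirmatory test, and triage is not capacity-limited.
    """
    # Fraction of screened people flagged: true positives + false positives.
    referral = prevalence * sensitivity + (1 - prevalence) * (1 - specificity)
    if referral <= 0:
        raise ValueError("triage must refer a positive fraction of people")
    # With C tests, C / referral people can be screened instead of C.
    return 1.0 / referral - 1.0


def missed_positive_rate(prevalence, sensitivity):
    """Fraction of all screened people who are positive but not referred."""
    return prevalence * (1 - sensitivity)


# Hypothetical operating point; not taken from the paper.
gain = triage_capacity_gain(prevalence=0.05, sensitivity=0.9, specificity=0.3)
```

The trade-off is explicit in this model: lowering the referral fraction raises capacity, but any loss of sensitivity increases the share of positives who never reach a confirmatory test.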

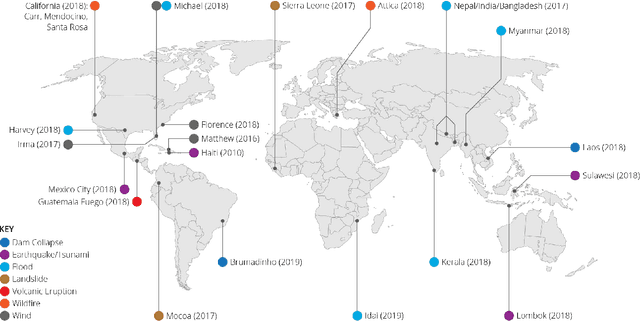

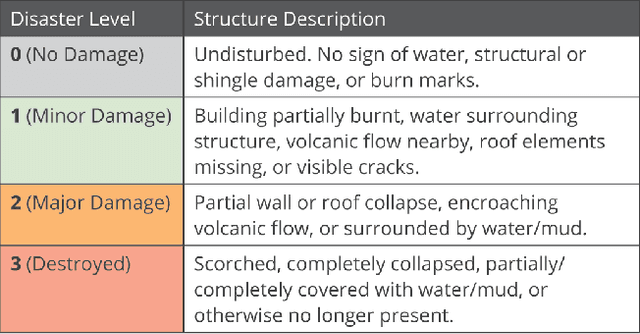

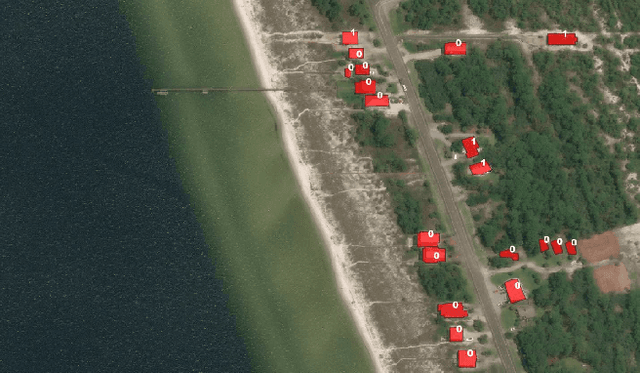

xBD: A Dataset for Assessing Building Damage from Satellite Imagery

Nov 21, 2019

Abstract: We present xBD, a new, large-scale dataset for the advancement of change detection and building damage assessment for humanitarian assistance and disaster recovery research. Natural disaster response requires an accurate understanding of damaged buildings in an affected region. Current response strategies require in-person damage assessments within 24-48 hours of a disaster. Massive potential exists for using aerial imagery combined with computer vision algorithms to assess damage and reduce the potential danger to human life. In collaboration with multiple disaster response agencies, xBD provides pre- and post-event satellite imagery across a variety of disaster events with building polygons, ordinal labels of damage level, and corresponding satellite metadata. Furthermore, the dataset contains bounding boxes and labels for environmental factors such as fire, water, and smoke. xBD is the largest building damage assessment dataset to date, containing 850,736 building annotations across 45,362 km² of imagery.

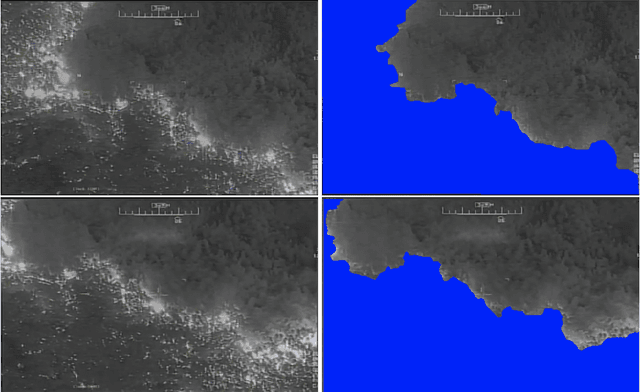

FireNet: Real-time Segmentation of Fire Perimeter from Aerial Video

Oct 14, 2019

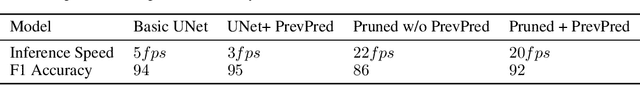

Abstract: In this paper, we share our approach to real-time segmentation of the fire perimeter from aerial full-motion infrared video. We start by describing the problem from a humanitarian aid and disaster response perspective. Specifically, we explain the importance of the problem, how it is currently resolved, and how our machine learning approach improves it. To test our models, we annotate a large-scale dataset of 400,000 frames with guidance from domain experts. Finally, we share our approach, currently deployed in production, with an inference speed of 20 frames per second and an F1 score of 92.

From Satellite Imagery to Disaster Insights

Dec 17, 2018

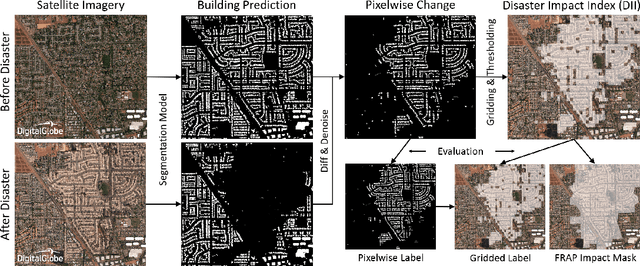

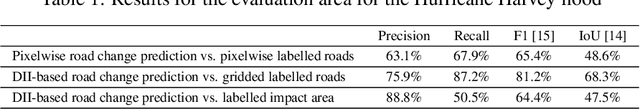

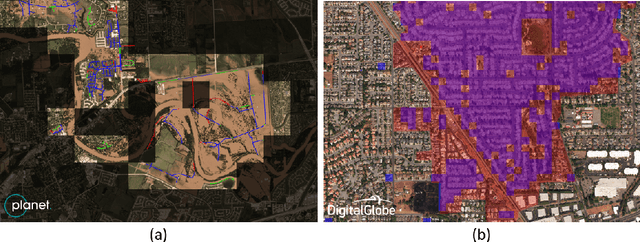

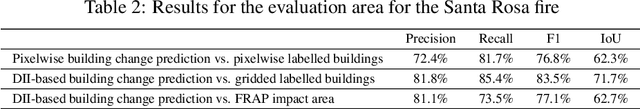

Abstract: The use of satellite imagery has become increasingly popular for disaster monitoring and response. After a disaster, it is important to prioritize rescue operations and disaster response and to coordinate relief efforts. These have to be carried out quickly and efficiently, since resources are often limited in disaster-affected areas and it is extremely important to identify the areas of maximum damage. However, most existing disaster mapping efforts are manual, which is time-consuming and often leads to erroneous results. To address these issues, we propose a framework for change detection using Convolutional Neural Networks (CNNs) on satellite images, whose output can then be thresholded and clustered into grids to find the areas most severely affected by a disaster. We also present a novel metric, the Disaster Impact Index (DII), and use it to quantify the impact of two natural disasters: the Hurricane Harvey flood and the Santa Rosa fire. Our framework achieves a top F1 score of 81.2% on the gridded flood dataset and 83.5% on the gridded fire dataset.
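One plausible reading of the thresholding-and-gridding step is sketched below under stated assumptions: a per-pixel change-probability map (from any change-detection model) is thresholded, and each square grid cell is scored by its fraction of changed pixels so that cells can be ranked by severity. The threshold, cell size, and function names are hypothetical, and the sketch does not attempt to reproduce the Disaster Impact Index, whose definition is not given in the abstract.

```python
def grid_damage_fraction(change_prob, threshold=0.5, cell=2):
    """Threshold a per-pixel change map (list of rows) and return, for each
    cell x cell grid square, the fraction of pixels marked as changed."""
    rows, cols = len(change_prob), len(change_prob[0])
    grid = []
    for r0 in range(0, rows, cell):
        grid_row = []
        for c0 in range(0, cols, cell):
            # Edge cells may be smaller than cell x cell; min() handles that.
            block = [change_prob[r][c] >= threshold
                     for r in range(r0, min(r0 + cell, rows))
                     for c in range(c0, min(c0 + cell, cols))]
            grid_row.append(sum(block) / len(block))
        grid.append(grid_row)
    return grid


def most_affected(grid, k=1):
    """Return (row, col) indices of the k grid cells with the highest score."""
    cells = [(v, r, c) for r, row in enumerate(grid) for c, v in enumerate(row)]
    return [(r, c) for v, r, c in sorted(cells, reverse=True)[:k]]


# Hypothetical 4x4 change-probability map.
change = [
    [0.9, 0.9, 0.1, 0.1],
    [0.9, 0.9, 0.1, 0.1],
    [0.1, 0.1, 0.1, 0.1],
    [0.1, 0.1, 0.6, 0.2],
]
grid = grid_damage_fraction(change)
```

Aggregating to grid cells trades spatial detail for a coarser map that is easier to use when prioritizing where to send relief teams.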
