Ben Talbot

Retrospectives on the Embodied AI Workshop

Oct 17, 2022

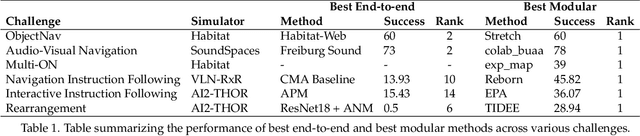

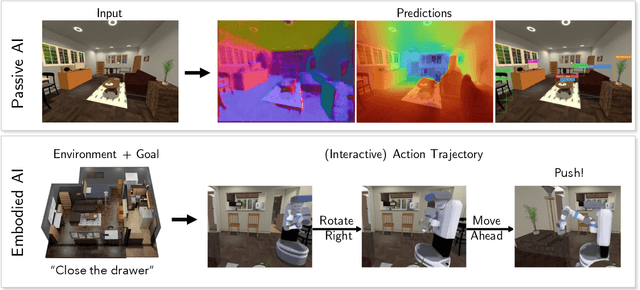

Abstract: We present a retrospective on the state of Embodied AI research. Our analysis focuses on 13 challenges presented at the Embodied AI Workshop at CVPR. These challenges are grouped into three themes: (1) visual navigation, (2) rearrangement, and (3) embodied vision-and-language. We discuss the dominant datasets within each theme, evaluation metrics for the challenges, and the performance of state-of-the-art models. We highlight commonalities between top approaches to the challenges and identify potential future directions for Embodied AI research.

Zero-Shot Uncertainty-Aware Deployment of Simulation Trained Policies on Real-World Robots

Dec 10, 2021

Abstract: While deep reinforcement learning (RL) agents have demonstrated incredible potential in attaining dexterous behaviours for robotics, they tend to make errors when deployed in the real world due to mismatches between the training and execution environments. In contrast, the classical robotics community have developed a range of controllers that can safely operate across most states in the real world given their explicit derivation. These controllers, however, lack the dexterity required for complex tasks given limitations in analytical modelling and approximations. In this paper, we propose Bayesian Controller Fusion (BCF), a novel uncertainty-aware deployment strategy that combines the strengths of deep RL policies and traditional handcrafted controllers. In this framework, we can perform zero-shot sim-to-real transfer, where our uncertainty-based formulation allows the robot to reliably act within out-of-distribution states by leveraging the handcrafted controller while gaining the dexterity of the learned system otherwise. We show promising results on two real-world continuous control tasks, where BCF outperforms both the standalone policy and controller, surpassing what either can achieve independently. A supplementary video demonstrating our system is provided at https://bit.ly/bcf_deploy.

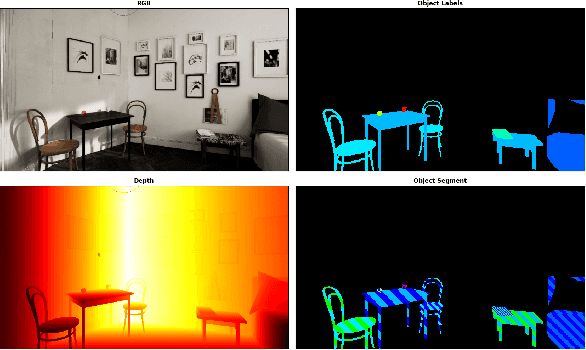

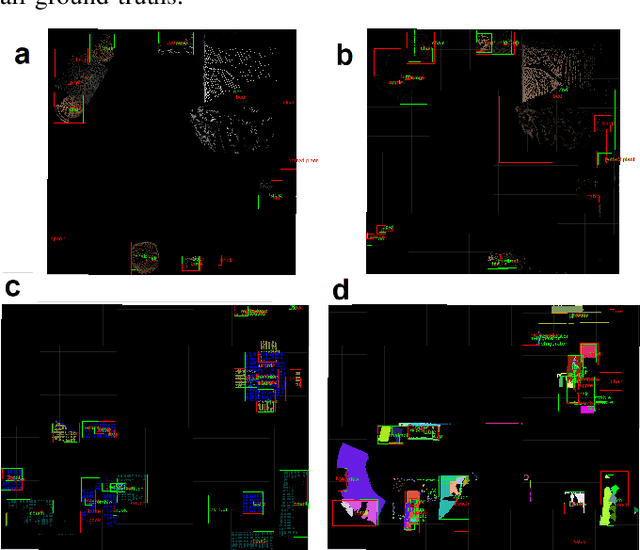

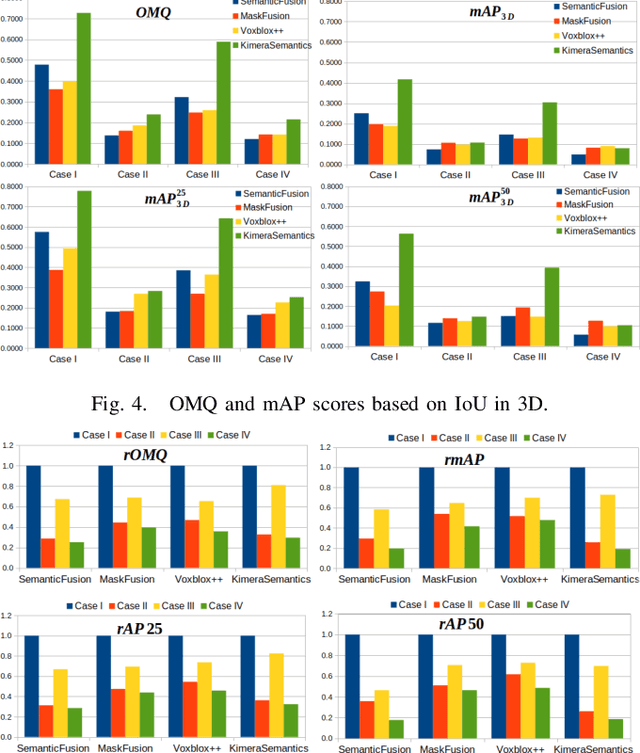

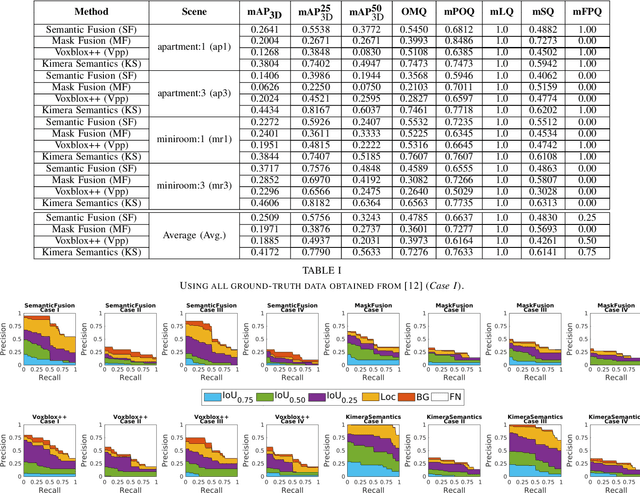

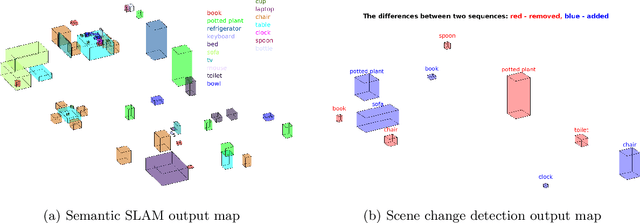

Evaluating the Impact of Semantic Segmentation and Pose Estimation on Dense Semantic SLAM

Sep 16, 2021

Abstract: Recent Semantic SLAM methods combine classical geometry-based estimation with deep learning-based object detection or semantic segmentation. In this paper we evaluate the quality of semantic maps generated by state-of-the-art class- and instance-aware dense semantic SLAM algorithms whose code is publicly available, and explore the impact that both semantic segmentation and pose estimation have on the quality of semantic maps. We obtain these results by providing algorithms with ground-truth pose and/or semantic segmentation data available from simulated environments. We establish that semantic segmentation is the largest source of error through our experiments, dropping mAP and OMQ performance by up to 74.3% and 71.3% respectively.

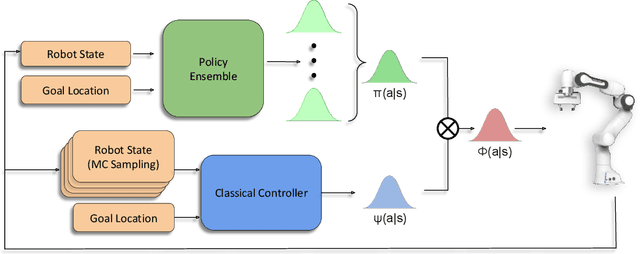

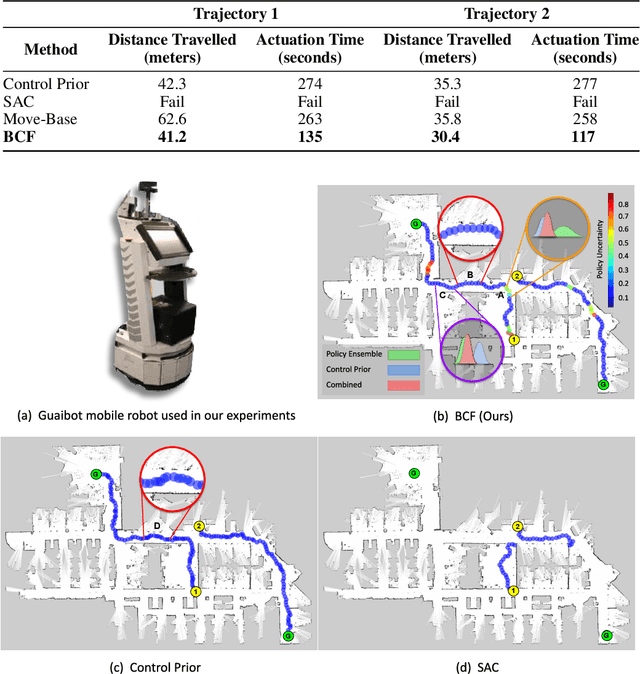

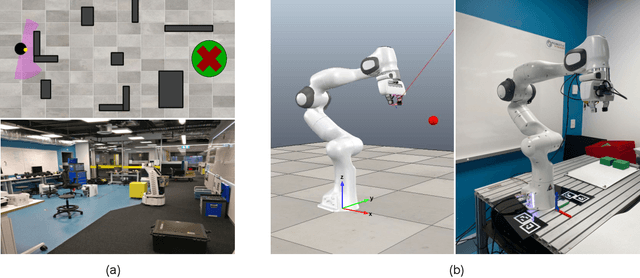

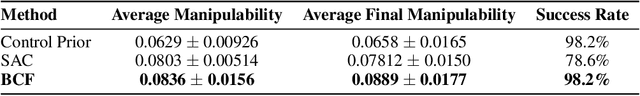

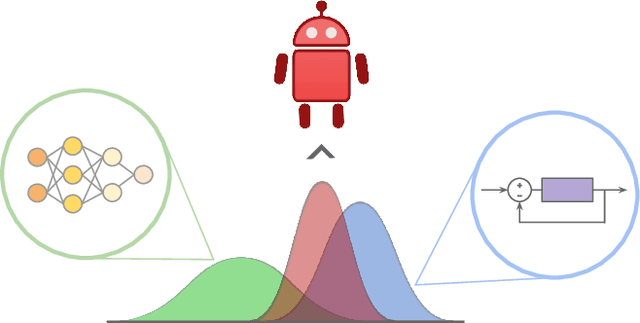

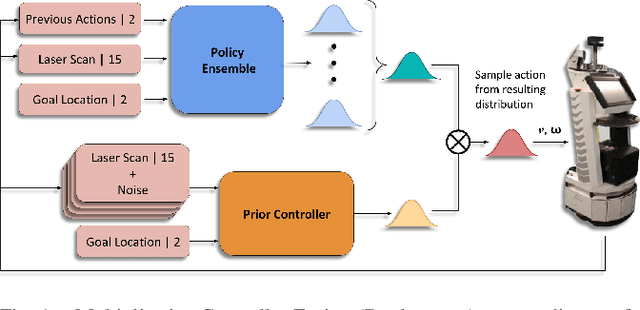

Bayesian Controller Fusion: Leveraging Control Priors in Deep Reinforcement Learning for Robotics

Jul 22, 2021

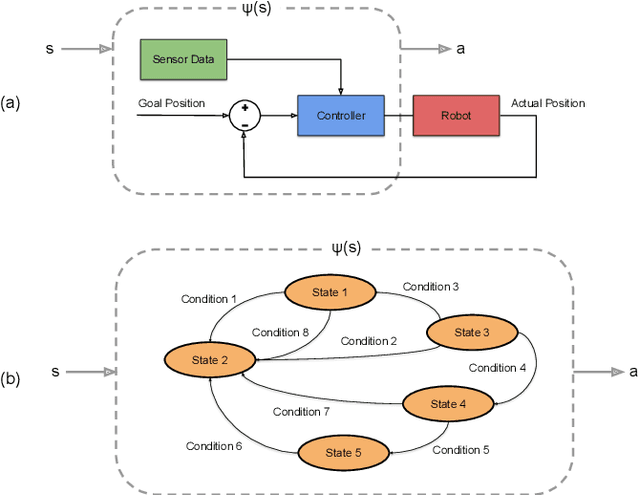

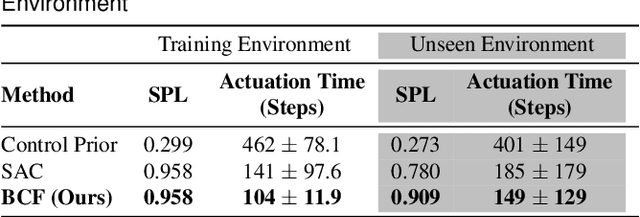

Abstract: We present Bayesian Controller Fusion (BCF): a hybrid control strategy that combines the strengths of traditional hand-crafted controllers and model-free deep reinforcement learning (RL). BCF thrives in the robotics domain, where reliable but suboptimal control priors exist for many tasks, but RL from scratch remains unsafe and data-inefficient. By fusing uncertainty-aware distributional outputs from each system, BCF arbitrates control between them, exploiting their respective strengths. We study BCF on two real-world robotics tasks: navigation in a vast and long-horizon environment, and a complex reaching task that involves manipulability maximisation. For both of these domains, there exist simple handcrafted controllers that can solve the task at hand in a risk-averse manner but do not necessarily exhibit the optimal solution given limitations in analytical modelling, controller miscalibration and task variation. As exploration is naturally guided by the prior in the early stages of training, BCF accelerates learning, while substantially improving beyond the performance of the control prior as the policy gains more experience. More importantly, given the risk-aversity of the control prior, BCF ensures safe exploration and deployment, where the control prior naturally dominates the action distribution in states unknown to the policy. We additionally show BCF's applicability to the zero-shot sim-to-real setting and its ability to deal with out-of-distribution states in the real world. BCF is a promising approach for combining the complementary strengths of deep RL and traditional robotic control, surpassing what either can achieve independently. The code and supplementary video material are made publicly available at https://krishanrana.github.io/bcf.
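
As a rough illustration of what "fusing uncertainty-aware distributional outputs" can look like, the sketch below takes the normalised product of two one-dimensional Gaussian action distributions, which amounts to a precision-weighted average. This is a minimal sketch under stated assumptions, not the authors' implementation: the abstract does not specify the fusion rule, and the function name `fuse_gaussians` and its parameters are hypothetical.

```python
import math


def fuse_gaussians(mu_policy, sigma_policy, mu_prior, sigma_prior):
    """Fuse two 1-D Gaussian action distributions by their normalised product.

    Hypothetical helper for illustration only. The source with the smaller
    standard deviation (higher confidence) dominates the fused mean.
    """
    # Precision is the inverse variance of each distribution.
    prec_policy = 1.0 / sigma_policy ** 2
    prec_prior = 1.0 / sigma_prior ** 2

    # The product of two Gaussians has summed precision and a
    # precision-weighted mean.
    fused_var = 1.0 / (prec_policy + prec_prior)
    fused_mu = fused_var * (mu_policy * prec_policy + mu_prior * prec_prior)
    return fused_mu, math.sqrt(fused_var)


# An uncertain policy (large sigma) paired with a confident control prior:
# the fused action stays close to the prior's action.
mu, sigma = fuse_gaussians(mu_policy=1.0, sigma_policy=10.0,
                           mu_prior=0.0, sigma_prior=0.1)
```

Under this rule, a policy that reports high uncertainty in an unfamiliar state contributes little to the fused action, which matches the behaviour the abstract describes of the control prior dominating in states unknown to the policy.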

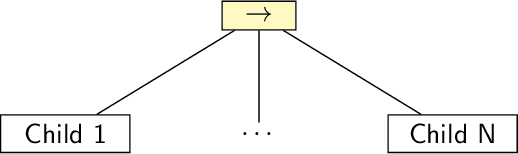

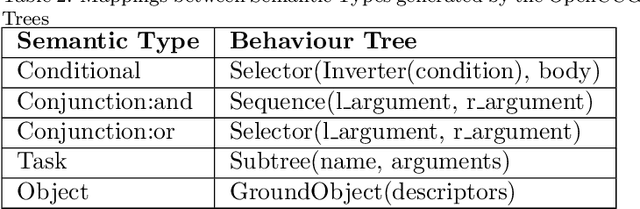

Learning and Executing Re-usable Behaviour Trees from Natural Language Instruction

Jun 03, 2021

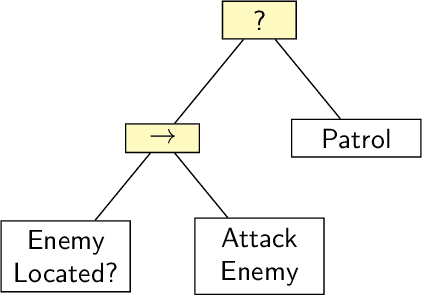

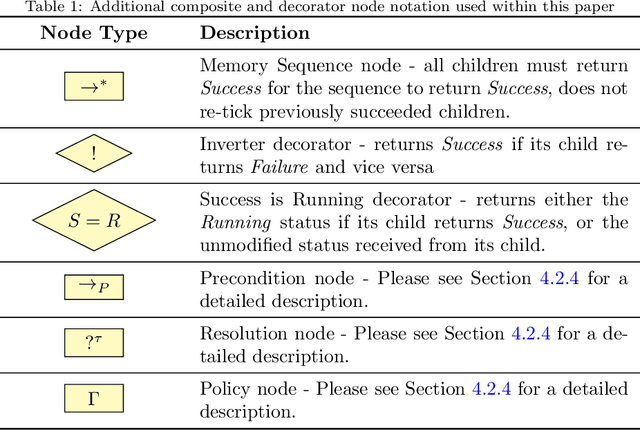

Abstract: Domestic and service robots have the potential to transform industries such as health care and small-scale manufacturing, as well as the homes in which we live. However, due to the overwhelming variety of tasks these robots will be expected to complete, providing generic out-of-the-box solutions that meet the needs of every possible user is clearly intractable. To address this problem, robots must not only be capable of learning how to complete novel tasks at run-time, but the solutions to these tasks must also be informed by the needs of the user. In this paper we demonstrate how behaviour trees, a well-established control architecture in the fields of gaming and robotics, can be used in conjunction with natural language instruction to provide a robust and modular control architecture for instructing autonomous agents to learn and perform novel complex tasks. We also show how behaviour trees generated using our approach can be generalised to novel scenarios, and can be re-used in future learning episodes to create increasingly complex behaviours. We validate this work against an existing corpus of natural language instructions, and demonstrate the application of our approach on a simulated robot solving a toy problem, as well as on two distinct real-world robot platforms which, respectively, complete a block sorting scenario and a patrol scenario.

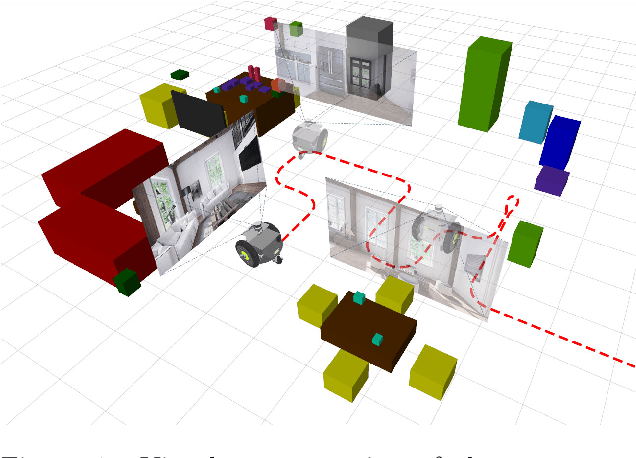

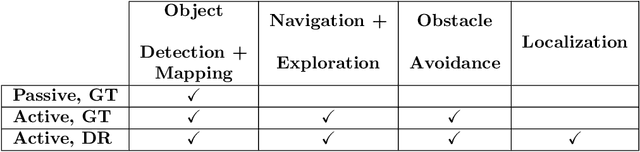

The Robotic Vision Scene Understanding Challenge

Sep 11, 2020

Abstract: Being able to explore an environment and understand the location and type of all objects therein is important for indoor robotic platforms that must interact closely with humans. However, it is difficult to evaluate progress in this area due to a lack of standardized testing, which is limited by the need for active robot agency and perfect object ground-truth. To help provide a standard for testing scene understanding systems, we present a new robot vision scene understanding challenge using simulation to enable repeatable experiments with active robot agency. We provide two challenging task types, three difficulty levels, five simulated environments and a new evaluation measure for evaluating 3D cuboid object maps. Our aim is to drive state-of-the-art research in scene understanding through enabling evaluation and comparison of active robotic vision systems.

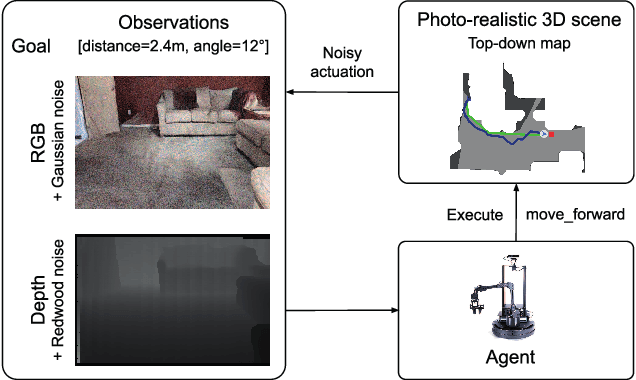

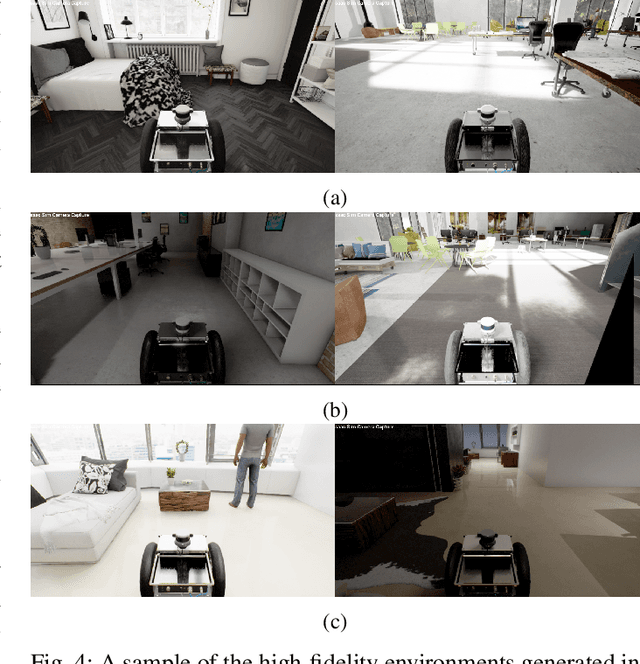

BenchBot: Evaluating Robotics Research in Photorealistic 3D Simulation and on Real Robots

Aug 03, 2020

Abstract: We introduce BenchBot, a novel software suite for benchmarking the performance of robotics research across both photorealistic 3D simulations and real robot platforms. BenchBot provides a simple interface to the sensorimotor capabilities of a robot when solving robotics research problems; an interface that is consistent regardless of whether the target platform is simulated or a real robot. In this paper we outline the BenchBot system architecture, and explore the parallels between its user-centric design and an ideal research development process devoid of tangential robot engineering challenges. The paper describes the research benefits of using the BenchBot system, including: enhanced capacity to focus solely on research problems, direct quantitative feedback to inform research development, tools for deriving comprehensive performance characteristics, and submission formats which promote sharability and repeatability of research outcomes. BenchBot is publicly available (http://benchbot.org), and we encourage its use in the research community for comprehensively evaluating the simulated and real world performance of novel robotic algorithms.

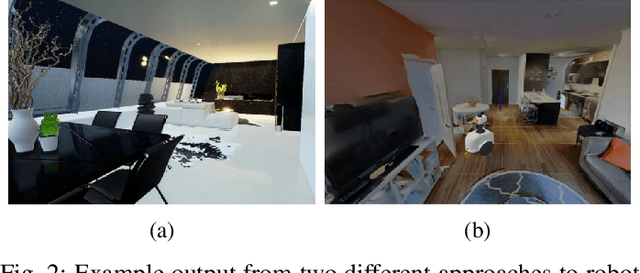

Multiplicative Controller Fusion: A Hybrid Navigation Strategy For Deployment in Unknown Environments

Mar 13, 2020

Abstract: Learning-based approaches often outperform hand-coded algorithmic solutions for many problems in robotics. However, learning long-horizon tasks on real robot hardware can be intractable, and transferring a learned policy from simulation to reality is still extremely challenging. We present a novel approach to model-free reinforcement learning that can leverage existing sub-optimal solutions as an algorithmic prior during training and deployment. During training, our gated fusion approach enables the prior to guide the initial stages of exploration, increasing sample-efficiency and enabling learning from sparse long-horizon reward signals. Importantly, the policy can learn to improve beyond the performance of the sub-optimal prior since the prior's influence is annealed gradually. During deployment, the policy's uncertainty provides a reliable strategy for transferring a simulation-trained policy to the real world by falling back to the prior controller in uncertain states. We show the efficacy of our Multiplicative Controller Fusion approach on the task of robot navigation and demonstrate safe transfer from simulation to the real world without any fine tuning. The code for this project is made publicly available at https://sites.google.com/view/mcf-nav/home.
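
The idea of a prior that guides early exploration and is then annealed away can be sketched as a simple gate between the prior's action and the policy's action. This is a minimal sketch, not the method from the paper: the linear schedule, the function names `prior_gate_probability` and `gated_action`, and the `anneal_steps` parameter are all assumptions made for illustration, since the abstract does not state the gating function or the annealing schedule.

```python
import random


def prior_gate_probability(step, anneal_steps):
    """Probability of using the prior's action at a given training step.

    Assumed linear schedule: decays from 1.0 at step 0 to 0.0 once
    `anneal_steps` have elapsed.
    """
    if anneal_steps <= 0:
        return 0.0
    return max(0.0, 1.0 - step / anneal_steps)


def gated_action(policy_action, prior_action, step, anneal_steps, rng=random):
    """Pick the prior's action with the annealed probability, else the policy's."""
    if rng.random() < prior_gate_probability(step, anneal_steps):
        return prior_action
    return policy_action


# Early in training the prior drives exploration; after annealing finishes,
# the learned policy acts on its own.
early = gated_action(policy_action=0.8, prior_action=0.2, step=0, anneal_steps=1000)
late = gated_action(policy_action=0.8, prior_action=0.2, step=5000, anneal_steps=1000)
```

Because the gate probability reaches zero, the policy is not capped at the prior's performance, which is the property the abstract highlights.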

Robot Navigation in Unseen Spaces using an Abstract Map

Jan 31, 2020

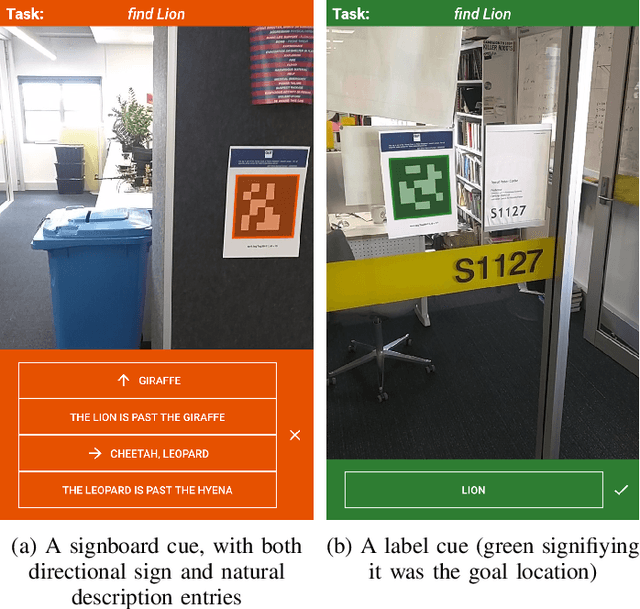

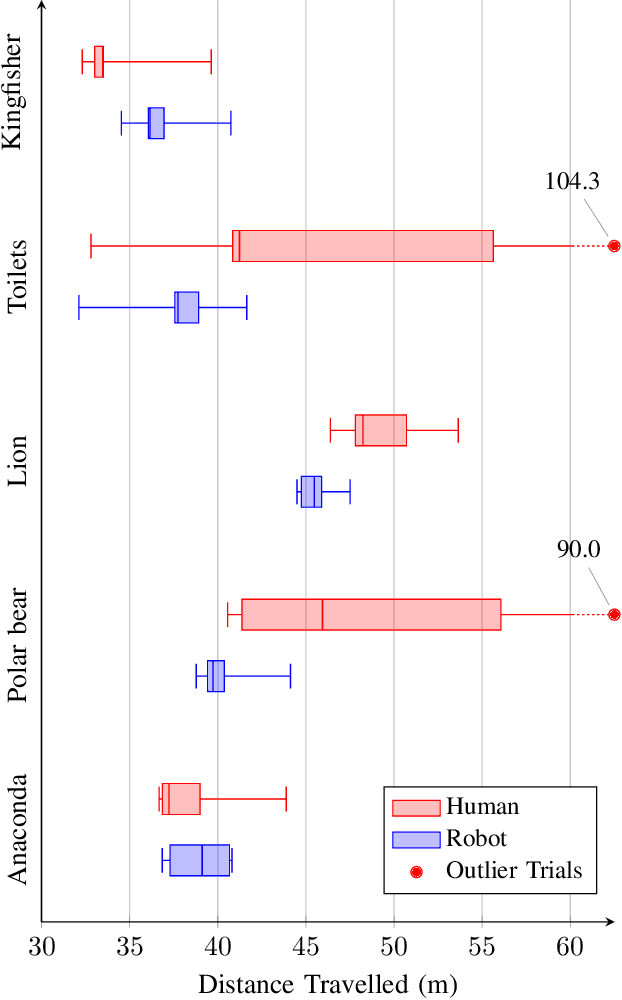

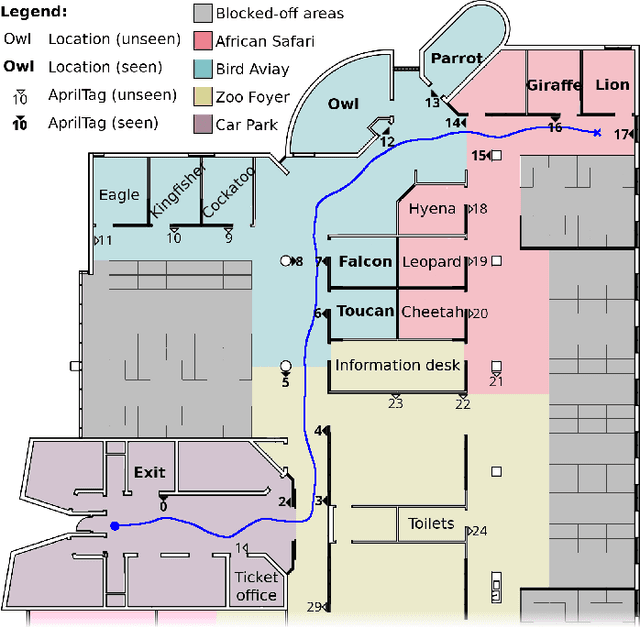

Abstract: Human navigation in built environments depends on symbolic spatial information which has unrealised potential to enhance robot navigation capabilities. Information sources such as labels, signs, maps, planners, spoken directions, and navigational gestures communicate a wealth of spatial information to the navigators of built environments; a wealth of information that robots typically ignore. We present a robot navigation system that uses the same symbolic spatial information employed by humans to purposefully navigate in unseen built environments with a level of performance comparable to humans. The navigation system uses a novel data structure called the abstract map to imagine malleable spatial models for unseen spaces from spatial symbols. Sensorimotor perceptions from a robot are then employed to provide purposeful navigation to symbolic goal locations in the unseen environment. We show how a dynamic system can be used to create malleable spatial models for the abstract map, and provide an open source implementation to encourage future work in the area of symbolic navigation. Symbolic navigation performance of humans and a robot is evaluated in a real-world built environment. The paper concludes with a qualitative analysis of human navigation strategies, providing further insights into how the symbolic navigation capabilities of robots in unseen built environments can be improved in the future.

Residual Reactive Navigation: Combining Classical and Learned Navigation Strategies For Deployment in Unknown Environments

Sep 24, 2019

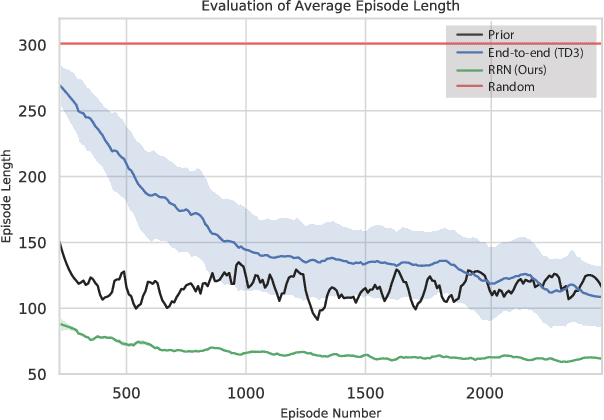

Abstract: In this work we focus on improving the efficiency and generalisation of learned navigation strategies when transferred from their training environment to previously unseen ones. We present an extension of the residual reinforcement learning framework from the robotic manipulation literature and adapt it to the vast and unstructured environments that mobile robots can operate in. The concept is based on learning a residual control effect to add to a typical sub-optimal classical controller in order to close the performance gap, whilst guiding the exploration process during training for improved data efficiency. We exploit this tight coupling and propose a novel deployment strategy, switching Residual Reactive Navigation (sRRN), which yields efficient trajectories whilst probabilistically switching to a classical controller in cases of high policy uncertainty. Our approach achieves improved performance over end-to-end alternatives and can be incorporated as part of a complete navigation stack for cluttered indoor navigation tasks in the real world. The code and training environment for this project are made publicly available at https://github.com/krishanrana/2D_SRRN.
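
The residual formulation and the fallback to the classical controller can be sketched in a few lines. This is a simplified illustration rather than the paper's method: the abstract describes the switch as probabilistic and does not give the rule, so the hard threshold used here, along with the names `residual_action`, `switching_action`, `policy_uncertainty` and `threshold`, are assumptions.

```python
def residual_action(classical_action, residual):
    """Residual control: the learned term is added to the classical command."""
    return classical_action + residual


def switching_action(classical_action, residual, policy_uncertainty, threshold):
    """Fall back to the classical controller when the policy is too uncertain.

    Assumption: a hard threshold on a scalar uncertainty estimate stands in
    for the probabilistic switching rule, which the abstract does not specify.
    """
    if policy_uncertainty > threshold:
        return classical_action
    return residual_action(classical_action, residual)


# Confident policy: the residual correction is applied.
confident = switching_action(0.5, 0.25, policy_uncertainty=0.1, threshold=0.3)
# Uncertain policy: only the classical controller's command is used.
uncertain = switching_action(0.5, 0.25, policy_uncertainty=0.9, threshold=0.3)
```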
