Zhiyuan Zhang

Bench2Drive-VL: Benchmarks for Closed-Loop Autonomous Driving with Vision-Language Models

Apr 01, 2026

Abstract: With the rise of vision-language models (VLMs), their application to autonomous driving (VLM4AD) has gained significant attention. Meanwhile, in autonomous driving, closed-loop evaluation has become widely recognized as a more reliable validation method than open-loop evaluation, as it can evaluate the performance of the model under cumulative errors and out-of-distribution inputs. However, existing VLM4AD benchmarks evaluate the model's scene understanding ability in an open-loop setting, i.e., via static question-answer (QA) datasets. This kind of evaluation fails to assess a VLM's performance under out-of-distribution states that rarely appear in human-collected datasets. To this end, we present Bench2Drive-VL, an extension of Bench2Drive that brings closed-loop evaluation to VLM-based driving, which introduces: (1) DriveCommenter, a closed-loop generator that automatically generates diverse, behavior-grounded question-answer pairs for all driving situations in CARLA, including severe off-route and off-road deviations previously unassessable in simulation. (2) A unified protocol and interface that allows modern VLMs to be directly plugged into the Bench2Drive closed-loop environment to compare with traditional agents. (3) A flexible reasoning and control framework, supporting multi-format visual inputs and configurable graph-based chain-of-thought execution. (4) A complete development ecosystem. Together, these components form a comprehensive closed-loop benchmark for VLM4AD. All code and annotated datasets are open-sourced.

Composer 2 Technical Report

Mar 25, 2026

Abstract: Composer 2 is a specialized model designed for agentic software engineering. The model demonstrates strong long-term planning and coding intelligence while maintaining the ability to efficiently solve problems for interactive use. The model is trained in two phases: first, continued pretraining to improve the model's knowledge and latent coding ability, followed by large-scale reinforcement learning to improve end-to-end coding performance through stronger reasoning, accurate multi-step execution, and coherence on long-horizon realistic coding problems. We develop infrastructure to support training in the same Cursor harness that is used by the deployed model, with equivalent tools and structure, and use environments that match real problems closely. To measure the ability of the model on increasingly difficult tasks, we introduce a benchmark derived from real software engineering problems in large codebases including our own. Composer 2 is a frontier-level coding model and demonstrates a process for training strong domain-specialized models. On our CursorBench evaluations the model achieves a major improvement in accuracy compared to previous Composer models (61.3). On public benchmarks the model scores 61.7 on Terminal-Bench and 73.7 on SWE-bench Multilingual in our harness, comparable to state-of-the-art systems.

TacVLA: Contact-Aware Tactile Fusion for Robust Vision-Language-Action Manipulation

Mar 13, 2026

Abstract: Vision-Language-Action (VLA) models have demonstrated significant advantages in robotic manipulation. However, their reliance on vision and language often leads to suboptimal performance in tasks involving visual occlusion, fine-grained manipulation, and physical contact. To address these challenges, we propose TacVLA, a fine-tuned VLA model that incorporates tactile modalities into the transformer-based policy to enhance fine-grained manipulation capabilities. Specifically, we introduce a contact-aware gating mechanism that selectively activates tactile tokens only when contact is detected, enabling adaptive multimodal fusion while avoiding irrelevant tactile interference. The fused visual, language, and tactile tokens are jointly processed within the transformer architecture to strengthen cross-modal grounding during contact-rich interaction. Extensive experiments on constraint-locked disassembly, in-box picking, and robustness evaluations demonstrate that our model outperforms baselines, improving the success rate by an average of 20% in disassembly and 60% in in-box picking, with a 2.1x improvement in scenarios with visual occlusion. Videos are available at https://sites.google.com/view/tacvla and code will be released.
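The gating idea in the abstract can be illustrated with a minimal sketch. Everything here is an assumption made for illustration and is not taken from the TacVLA paper or code: the names `gate_tactile_tokens` and `fuse_tokens`, the scalar `contact_force` signal, the threshold-based contact detector, and the choice to zero out tactile tokens when no contact is detected.

```python
import numpy as np

def gate_tactile_tokens(tactile_tokens, contact_force, threshold=0.5):
    """Pass tactile tokens through only when contact is detected.

    Hypothetical detector: contact is declared when a scalar force
    reading exceeds `threshold`. Without contact the tokens are zeroed.
    """
    contact = bool(contact_force > threshold)
    gate = 1.0 if contact else 0.0
    return gate * tactile_tokens, contact

def fuse_tokens(visual, language, tactile, contact_force, threshold=0.5):
    """Concatenate visual, language, and gated tactile tokens along the
    sequence axis, ready for a transformer policy (not shown here)."""
    gated, contact = gate_tactile_tokens(tactile, contact_force, threshold)
    return np.concatenate([visual, language, gated], axis=0), contact
```

With 4 visual, 3 language, and 2 tactile tokens, the fused sequence always has 9 tokens; the last 2 are all zeros whenever the force reading is at or below the threshold.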

CONTACT: CONtact-aware TACTile Learning for Robotic Disassembly

Mar 09, 2026

Abstract: Robotic disassembly involves contact-rich interactions in which successful manipulation depends not only on geometric alignment but also on force-dependent state transitions. While vision-based policies perform well in structured settings, their reliability often degrades in tight-tolerance, contact-dominated, or deformable scenarios. In this work, we systematically investigate the role of tactile sensing in robotic disassembly through both simulation and real-world experiments. We construct five rigid-body disassembly tasks in simulation with increasing geometric constraints and extraction difficulty. We further design five real-world tasks, including three rigid and two deformable scenarios, to evaluate contact-dependent manipulation. Within a unified learning framework, we compare three sensing configurations: Vision Only, Vision + tactile RGB (TacRGB), and Vision + tactile force field (TacFF). Across both simulation and real-world experiments, TacFF-based policies consistently achieve the highest success rates, with particularly notable gains in contact-dependent and deformable settings. Notably, naive fusion of TacRGB and TacFF underperforms either modality alone, indicating that simple concatenation can dilute task-relevant force information. Our results show that tactile sensing plays a critical, task-dependent role in robotic disassembly, with structured force-field representations being particularly effective in contact-dominated scenarios.

EquiBim: Learning Symmetry-Equivariant Policy for Bimanual Manipulation

Mar 09, 2026

Abstract: Robotic imitation learning has achieved impressive success in learning complex manipulation behaviors from demonstrations. However, many existing robot learning methods do not explicitly account for the physical symmetries of robotic systems, often resulting in asymmetric or inconsistent behaviors under symmetric observations. This limitation is particularly pronounced in dual-arm manipulation, where bilateral symmetry is inherent to both the robot morphology and the structure of many tasks. In this paper, we introduce EquiBim, a symmetry-equivariant policy learning framework for bimanual manipulation that enforces bilateral equivariance between observations and actions during training. Our approach formulates physical symmetry as a group action on both observation and action spaces, and imposes an equivariance constraint on policy predictions under symmetric transformations. The framework is model-agnostic and can be seamlessly integrated into a wide range of imitation learning pipelines with diverse observation modalities and action representations, including point cloud-based and image-based policies, as well as both end-effector-space and joint-space parameterizations. We evaluate EquiBim on RoboTwin, a dual-arm robotic platform with symmetric kinematics, across diverse observation and action configurations in simulation. We further validate the approach on a real-world dual-arm system. Across both simulation and physical experiments, our method consistently improves performance and robustness under distribution shifts. These results suggest that explicitly enforcing physical symmetry provides a simple yet effective inductive bias for bimanual robot learning.
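For readers unfamiliar with the term, the equivariance constraint described in the abstract can be written in the standard form below. The notation is assumed for illustration and is not taken from the paper: $\pi$ is the policy, $o$ an observation, $G = \{e, s\}$ the two-element group generated by the left-right mirror $s$, and $\rho_{\mathcal{O}}$, $\rho_{\mathcal{A}}$ the group actions on observations and actions. The penalty in the second line is one common way to impose such a constraint during training; whether EquiBim uses this exact form is not stated in the abstract.

```latex
% Equivariance: transforming the observation transforms the action accordingly
\pi\bigl(\rho_{\mathcal{O}}(g)\, o\bigr) = \rho_{\mathcal{A}}(g)\, \pi(o),
\qquad \forall g \in G

% One possible soft penalty for the mirror element s (an assumption)
\mathcal{L}_{\mathrm{equi}} = \mathbb{E}_{o}
\Bigl\| \pi\bigl(\rho_{\mathcal{O}}(s)\, o\bigr) - \rho_{\mathcal{A}}(s)\, \pi(o) \Bigr\|_2^2
```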

EquiForm: Noise-Robust SE(3)-Equivariant Policy Learning from 3D Point Clouds

Jan 24, 2026

Abstract: Visual imitation learning with 3D point clouds has advanced robotic manipulation by providing geometry-aware, appearance-invariant observations. However, point cloud-based policies remain highly sensitive to sensor noise, pose perturbations, and occlusion-induced artifacts, which distort geometric structure and break the equivariance assumptions required for robust generalization. Existing equivariant approaches primarily encode symmetry constraints into neural architectures, but do not explicitly correct noise-induced geometric deviations or enforce equivariant consistency in learned representations. We introduce EquiForm, a noise-robust SE(3)-equivariant policy learning framework for point cloud-based manipulation. EquiForm formalizes how noise-induced geometric distortions lead to equivariance deviations in observation-to-action mappings, and introduces a geometric denoising module to restore consistent 3D structure under noisy or incomplete observations. In addition, we propose a contrastive equivariant alignment objective that enforces representation consistency under both rigid transformations and noise perturbations. Built upon these components, EquiForm forms a flexible policy learning pipeline that integrates noise-robust geometric reasoning with modern generative models. We evaluate EquiForm on 16 simulated tasks and 4 real-world manipulation tasks across diverse objects and scene layouts. Compared to state-of-the-art point cloud imitation learning methods, EquiForm achieves an average improvement of 17.2% in simulation and 28.1% in real-world experiments, demonstrating strong noise robustness and spatial generalization.

EmbryoDiff: A Conditional Diffusion Framework with Multi-Focal Feature Fusion for Fine-Grained Embryo Developmental Stage Recognition

Nov 14, 2025

Abstract: Identification of fine-grained embryo developmental stages during In Vitro Fertilization (IVF) is crucial for assessing embryo viability. Although recent deep learning methods have achieved promising accuracy, existing discriminative models fail to utilize the distributional prior of embryonic development to improve accuracy. Moreover, their reliance on single-focal information leads to incomplete embryonic representations, making them susceptible to feature ambiguity under cell occlusions. To address these limitations, we propose EmbryoDiff, a two-stage diffusion-based framework that formulates the task as a conditional sequence denoising process. Specifically, we first train and freeze a frame-level encoder to extract robust multi-focal features. In the second stage, we introduce a Multi-Focal Feature Fusion Strategy that aggregates information across focal planes to construct a 3D-aware morphological representation, effectively alleviating ambiguities arising from cell occlusions. Building on this fused representation, we derive complementary semantic and boundary cues and design a Hybrid Semantic-Boundary Condition Block to inject them into the diffusion-based denoising process, enabling accurate embryonic stage classification. Extensive experiments on two benchmark datasets show that our method achieves state-of-the-art results. Notably, with only a single denoising step, our model obtains the best average test performance, reaching 82.8% and 81.3% accuracy on the two datasets, respectively.

Private-RAG: Answering Multiple Queries with LLMs while Keeping Your Data Private

Nov 10, 2025

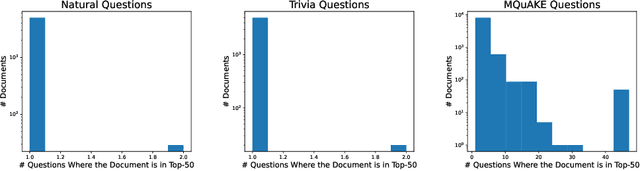

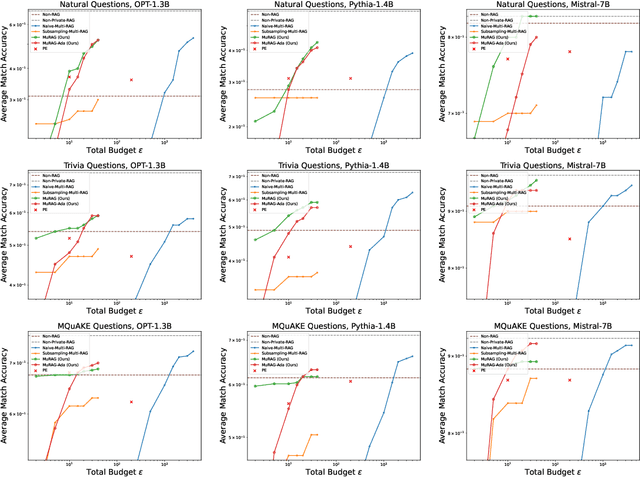

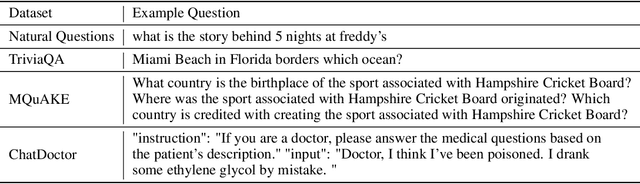

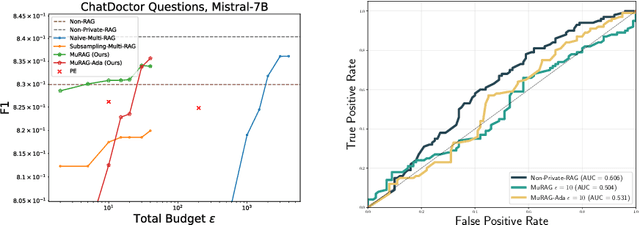

Abstract: Retrieval-augmented generation (RAG) enhances large language models (LLMs) by retrieving documents from an external corpus at inference time. When this corpus contains sensitive information, however, unprotected RAG systems are at risk of leaking private information. Prior work has introduced differential privacy (DP) guarantees for RAG, but only in single-query settings, which fall short of realistic usage. In this paper, we study the more practical multi-query setting and propose two DP-RAG algorithms. The first, MURAG, leverages an individual privacy filter so that the accumulated privacy loss only depends on how frequently each document is retrieved rather than the total number of queries. The second, MURAG-ADA, further improves utility by privately releasing query-specific thresholds, enabling more precise selection of relevant documents. Our experiments across multiple LLMs and datasets demonstrate that the proposed methods scale to hundreds of queries within a practical DP budget ($\varepsilon\approx10$), while preserving meaningful utility.
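The per-document accounting idea behind an individual privacy filter can be sketched as follows. This is a simplified illustration under stated assumptions, not the MURAG algorithm: the class `IndividualPrivacyFilter`, the function `retrieve`, the fixed per-retrieval cost, and the additive budget are all hypothetical, and the sketch omits the noise and privacy analysis that an actual DP mechanism requires. It only shows why the charge depends on how often a document is retrieved, not on how many queries are asked.

```python
from collections import defaultdict

class IndividualPrivacyFilter:
    """Tracks privacy spend per document (hypothetical, simplified)."""

    def __init__(self, budget, cost_per_retrieval):
        self.budget = budget
        self.cost = cost_per_retrieval
        self.spent = defaultdict(float)

    def is_active(self, doc_id):
        # A document stays eligible while one more retrieval fits its budget.
        return self.spent[doc_id] + self.cost <= self.budget + 1e-12

    def charge(self, doc_id):
        self.spent[doc_id] += self.cost

def retrieve(scores, privacy_filter, k):
    """Pick the top-k documents among those with remaining budget and
    charge only the documents that were actually retrieved."""
    active = [d for d in scores if privacy_filter.is_active(d)]
    top = sorted(active, key=lambda d: scores[d], reverse=True)[:k]
    for d in top:
        privacy_filter.charge(d)
    return top
```

In this sketch, a document that is never retrieved is never charged, so asking many queries that touch different documents does not exhaust any single document's budget.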

Super LiDAR Reflectance for Robotic Perception

Aug 14, 2025

Abstract: Conventionally, human intuition often defines vision as a modality of passive optical sensing, while active optical sensing is typically regarded as measuring rather than the default modality of vision. However, the situation is now changing: sensor technologies and data-driven paradigms empower active optical sensing to redefine the boundaries of vision, ushering in a new era of active vision. Light Detection and Ranging (LiDAR) sensors capture reflectance from object surfaces, which remains invariant under varying illumination conditions, showcasing significant potential in robotic perception tasks such as detection, recognition, segmentation, and Simultaneous Localization and Mapping (SLAM). These applications often rely on dense sensing capabilities, typically achieved by high-resolution, expensive LiDAR sensors. A key challenge with low-cost LiDARs lies in the sparsity of scan data, which limits their broader application. To address this limitation, this work introduces an innovative framework for generating dense LiDAR reflectance images from sparse data, leveraging the unique attributes of non-repeating scanning LiDAR (NRS-LiDAR). We tackle critical challenges, including reflectance calibration and the transition from static to dynamic scene domains, facilitating the reconstruction of dense reflectance images in real-world settings. The key contributions of this work include a comprehensive dataset for LiDAR reflectance image densification, a densification network tailored for NRS-LiDAR, and diverse applications such as loop closure and traffic lane detection using the generated dense reflectance images.

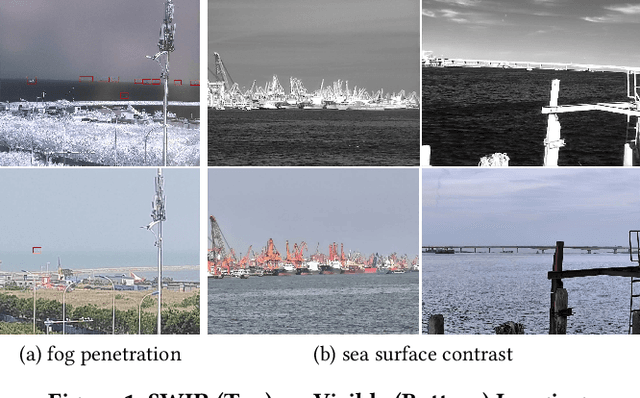

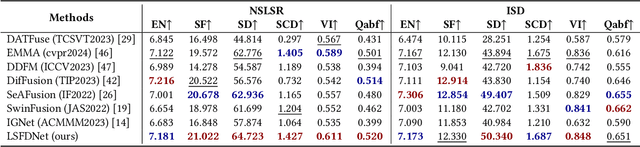

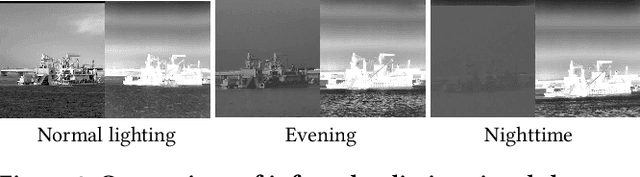

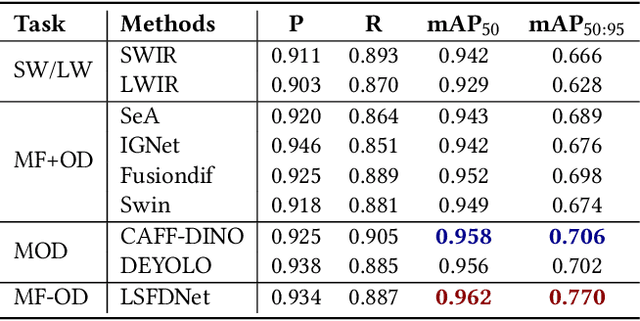

LSFDNet: A Single-Stage Fusion and Detection Network for Ships Using SWIR and LWIR

Jul 28, 2025

Abstract: Traditional ship detection methods primarily rely on single-modal approaches, such as visible or infrared images, which limit their application in complex scenarios involving varying lighting conditions and heavy fog. To address this issue, we explore the advantages of short-wave infrared (SWIR) and long-wave infrared (LWIR) in ship detection and propose a novel single-stage image fusion detection algorithm called LSFDNet. This algorithm leverages feature interaction between the image fusion and object detection subtask networks, achieving remarkable detection performance and generating visually impressive fused images. To further improve the saliency of objects in the fused images and improve the performance of the downstream detection task, we introduce the Multi-Level Cross-Fusion (MLCF) module. This module combines object-sensitive fused features from the detection task and aggregates features across multiple modalities, scales, and tasks to obtain more semantically rich fused features. Moreover, we utilize the position prior from the detection task in the Object Enhancement (OE) loss function, further increasing the retention of object semantics in the fused images. The detection task also utilizes preliminary fused features from the fusion task to complement SWIR and LWIR features, thereby enhancing detection performance. Additionally, we have established a Nearshore Ship Long-Short Wave Registration (NSLSR) dataset to train effective SWIR and LWIR image fusion and detection networks, bridging a gap in this field. We validated the superiority of our proposed single-stage fusion detection algorithm on two datasets. The source code and dataset are available at https://github.com/Yanyin-Guo/LSFDNet
