Michael J. Curry

Aligned Textual Scoring Rules

Jul 08, 2025

Abstract:Scoring rules elicit probabilistic predictions from a strategic agent by scoring the prediction against a ground truth state. A scoring rule is proper if, from the agent's perspective, reporting the true belief maximizes the expected score. With the development of language models, Wu and Hartline (2024) propose a reduction from textual information elicitation to numerical (i.e., probabilistic) information elicitation, which achieves provable properness for textual elicitation. However, not all proper scoring rules are well aligned with human preference over text. Our paper designs the Aligned Scoring Rule (ASR) for text by minimizing the mean squared error between a proper scoring rule and a reference score (e.g., a human score). Our experiments show that our ASR outperforms previous methods in aligning with human preference while maintaining properness.
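To make the definition of properness concrete, the following minimal sketch checks numerically that two classical proper scoring rules for a binary event (the quadratic and logarithmic rules) are maximized in expectation by reporting the true belief. It does not implement the ASR or the textual reduction; the function names and the grid search are illustrative assumptions.

```python
import math

def quadratic_score(report, outcome):
    """Quadratic (Brier-style) score for a binary event; higher is better."""
    # report: stated probability that the event occurs; outcome: 1 or 0.
    return 1.0 - (outcome - report) ** 2

def log_score(report, outcome):
    """Logarithmic score: log of the probability assigned to what happened."""
    return math.log(report if outcome == 1 else 1.0 - report)

def expected_score(rule, belief, report):
    """Expected score of `report` when the agent believes P(event) = belief."""
    return belief * rule(report, 1) + (1.0 - belief) * rule(report, 0)

def best_report(rule, belief, grid_size=99):
    """Grid-search the report in (0, 1) that maximizes the expected score."""
    grid = [(i + 1) / (grid_size + 1) for i in range(grid_size)]
    return max(grid, key=lambda r: expected_score(rule, belief, r))

# For a proper rule, the best report coincides with the true belief.
best_quadratic = best_report(quadratic_score, belief=0.7)
best_log = best_report(log_score, belief=0.3)
```

Both searches should return the belief itself (up to the grid resolution), which is what properness requires.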

Truthful Aggregation of LLMs with an Application to Online Advertising

May 09, 2024

Abstract:We address the challenge of aggregating the preferences of multiple agents over LLM-generated replies to user queries, where agents might modify or exaggerate their preferences. New agents may participate for each new query, making fine-tuning LLMs on these preferences impractical. To overcome these challenges, we propose an auction mechanism that operates without fine-tuning or access to model weights. This mechanism is designed to provably converge to the output of the optimally fine-tuned LLM as computational resources are increased. The mechanism can also incorporate contextual information about the agents when available, which significantly accelerates its convergence. A well-designed payment rule ensures that truthful reporting is the optimal strategy for all agents, while also promoting an equity property by aligning each agent's utility with her contribution to social welfare, an essential feature for the mechanism's long-term viability. While our approach can be applied whenever monetary transactions are permissible, our flagship application is in online advertising. In this context, advertisers try to steer LLM-generated responses towards their brand interests, while the platform aims to maximize advertiser value and ensure user satisfaction. Experimental results confirm that our mechanism not only converges efficiently to the optimally fine-tuned LLM but also significantly boosts advertiser value and platform revenue, all with minimal computational overhead.

Optimal Automated Market Makers: Differentiable Economics and Strong Duality

Feb 14, 2024

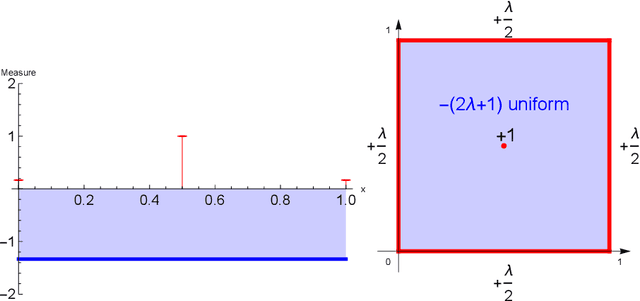

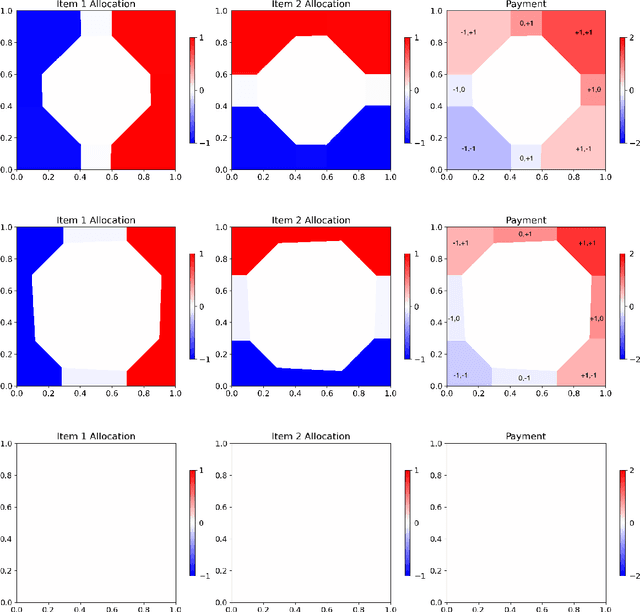

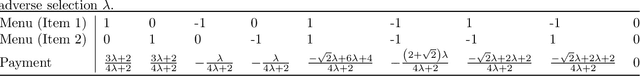

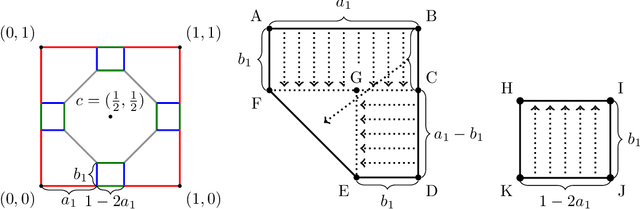

Abstract:The role of a market maker is to simultaneously offer to buy and sell quantities of goods, often a financial asset such as a share, at specified prices. An automated market maker (AMM) is a mechanism that offers to trade according to some predetermined schedule; the best choice of this schedule depends on the market maker's goals. The literature on the design of AMMs has mainly focused on prediction markets with the goal of information elicitation. More recent work motivated by DeFi has focused instead on the goal of profit maximization, but considering only a single type of good (traded with a numeraire), including under adverse selection (Milionis et al. 2022). Optimal market making in the presence of multiple goods, including the possibility of complex bundling behavior, is not well understood. In this paper, we show that finding an optimal market maker is dual to an optimal transport problem, with specific geometric constraints on the transport plan in the dual. We show that optimal mechanisms for multiple goods and under adverse selection can take advantage of bundling, both by offering improved prices for bundled purchases and sales and by sometimes accepting payment "in kind." We present conjectures of optimal mechanisms in additional settings which show further complex behavior. From a methodological perspective, we make essential use of the tools of differentiable economics to generate conjectures of optimal mechanisms, and give a proof-of-concept for the use of such tools in guiding theoretical investigations.

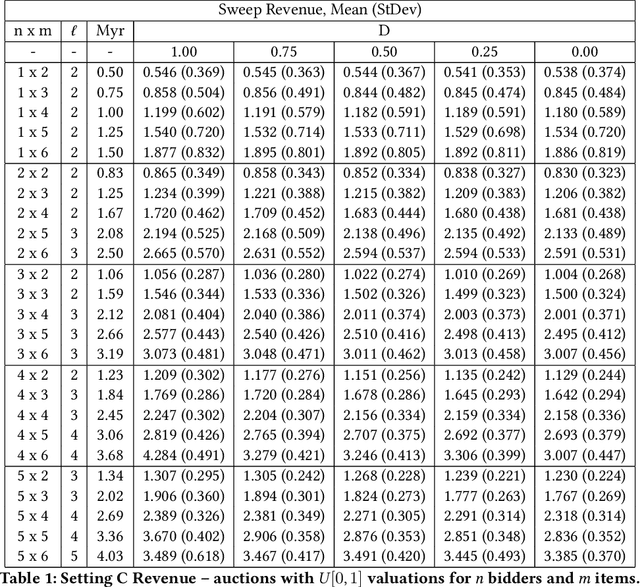

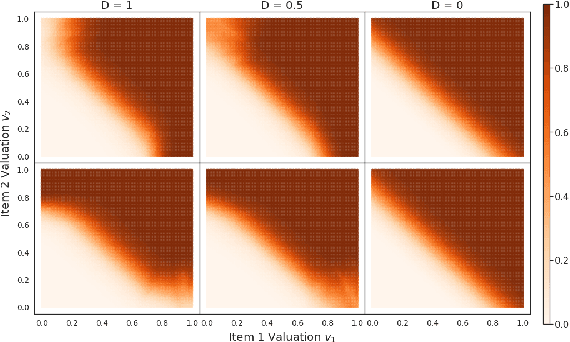

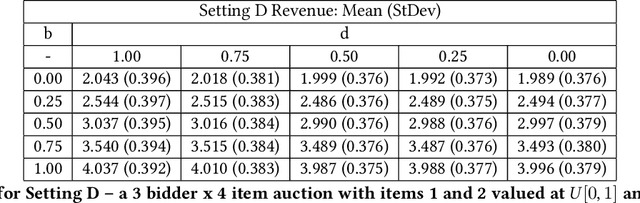

Learning Revenue-Maximizing Auctions With Differentiable Matching

Jun 15, 2021

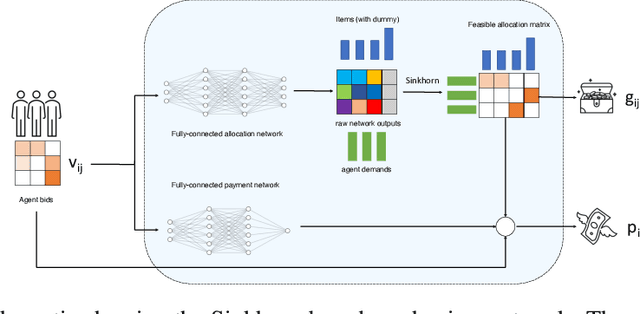

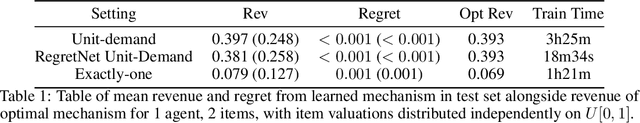

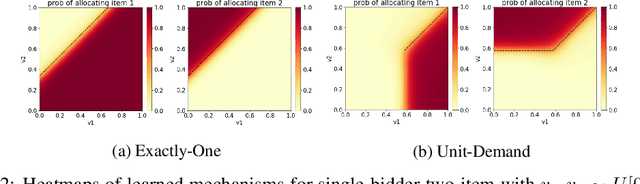

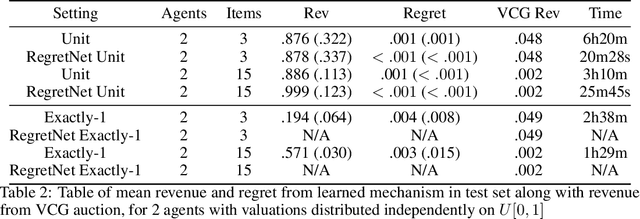

Abstract:We propose a new architecture to approximately learn incentive compatible, revenue-maximizing auctions from sampled valuations. Our architecture uses the Sinkhorn algorithm to perform a differentiable bipartite matching which allows the network to learn strategyproof revenue-maximizing mechanisms in settings not learnable by the previous RegretNet architecture. In particular, our architecture is able to learn mechanisms in settings without free disposal where each bidder must be allocated exactly some number of items. In experiments, we show our approach successfully recovers multiple known optimal mechanisms and high-revenue, low-regret mechanisms in larger settings where the optimal mechanism is unknown.
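As a rough illustration of the Sinkhorn step mentioned in the abstract, the sketch below alternately normalizes the rows and columns of a positive matrix until it is approximately doubly stochastic, which gives a "soft" matching that is differentiable in the input scores. This is only the generic Sinkhorn iteration in plain Python, not the paper's architecture; the `scores` matrix, the `temperature` parameter, and the function name are assumptions made for illustration.

```python
import math

def sinkhorn(scores, temperature=1.0, n_iters=100):
    """Turn a square score matrix into an approximately doubly stochastic one."""
    n = len(scores)
    # Subtract the largest score before exponentiating for numerical stability;
    # every entry of the resulting matrix is strictly positive.
    top = max(max(row) for row in scores)
    mat = [[math.exp((s - top) / temperature) for s in row] for row in scores]
    for _ in range(n_iters):
        # Row step: make every row sum to 1.
        normalized = []
        for row in mat:
            row_total = sum(row)
            normalized.append([v / row_total for v in row])
        mat = normalized
        # Column step: make every column sum to 1.
        col_totals = [sum(mat[i][j] for i in range(n)) for j in range(n)]
        mat = [[mat[i][j] / col_totals[j] for j in range(n)] for i in range(n)]
    return mat

# Hypothetical bidder-by-item scores (rows: bidders, columns: items).
scores = [[2.0, 1.0, 0.0],
          [1.0, 1.5, 0.5],
          [0.0, 0.5, 1.0]]
soft_matching = sinkhorn(scores)
```

Lowering `temperature` pushes the result closer to a hard one-to-one matching, typically at the cost of more iterations to converge.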

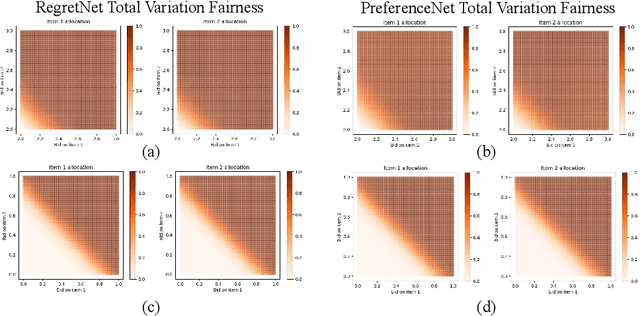

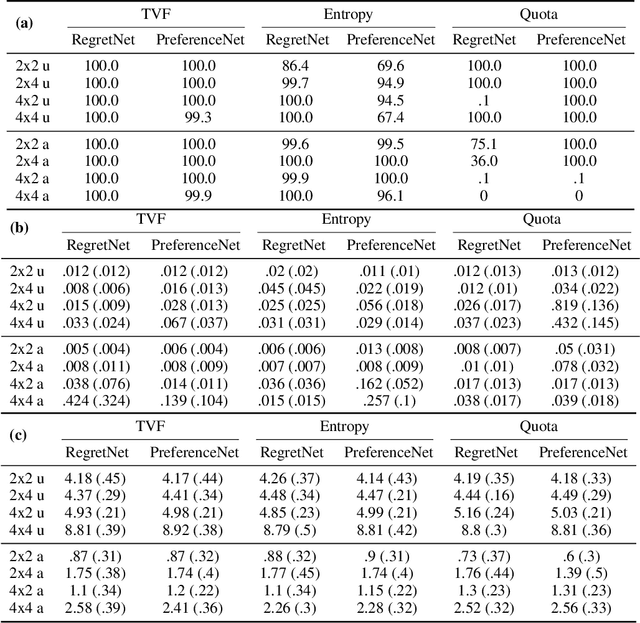

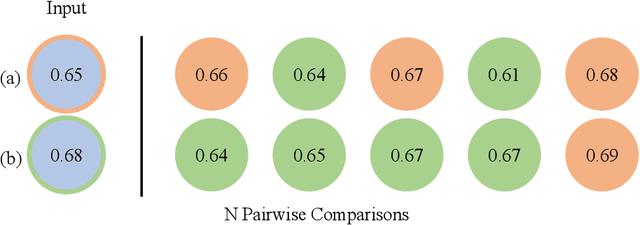

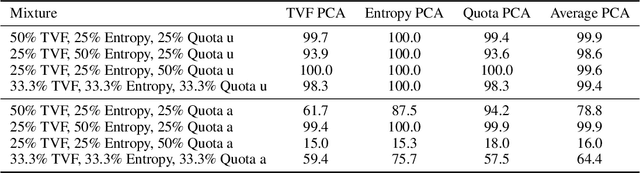

PreferenceNet: Encoding Human Preferences in Auction Design with Deep Learning

Jun 06, 2021

Abstract:The design of optimal auctions is a problem of interest in economics, game theory and computer science. Despite decades of effort, strategyproof, revenue-maximizing auction designs are still not known outside of restricted settings. However, recent methods using deep learning have shown some success in approximating optimal auctions, recovering several known solutions and outperforming strong baselines when optimal auctions are not known. In addition to maximizing revenue, auction mechanisms may also seek to encourage socially desirable constraints such as allocation fairness or diversity. However, these philosophical notions are neither standardized nor given widely accepted formal definitions. In this paper, we propose PreferenceNet, an extension of existing neural-network-based auction mechanisms to encode constraints using (potentially human-provided) exemplars of desirable allocations. In addition, we introduce a new metric to evaluate an auction allocation's adherence to such socially desirable constraints and demonstrate that our proposed method is competitive with current state-of-the-art neural-network-based auction designs. We validate our approach through human subject research and show that we are able to effectively capture real human preferences. Our code is available at https://github.com/neeharperi/PreferenceNet

ProportionNet: Balancing Fairness and Revenue for Auction Design with Deep Learning

Oct 13, 2020

Abstract:The design of revenue-maximizing auctions with strong incentive guarantees is a core concern of economic theory. Computational auctions enable online advertising, sourcing, spectrum allocation, and myriad financial markets. Analytic progress in this space is notoriously difficult; since Myerson's 1981 work characterizing single-item optimal auctions, there has been limited progress outside of restricted settings. A recent paper by Dütting et al. circumvents analytic difficulties by applying deep learning techniques to, instead, approximate optimal auctions. In parallel, new research from Ilvento et al. and other groups has developed notions of fairness in the context of auction design. Inspired by these advances, in this paper, we extend techniques for approximating auctions using deep learning to address concerns of fairness while maintaining high revenue and strong incentive guarantees.

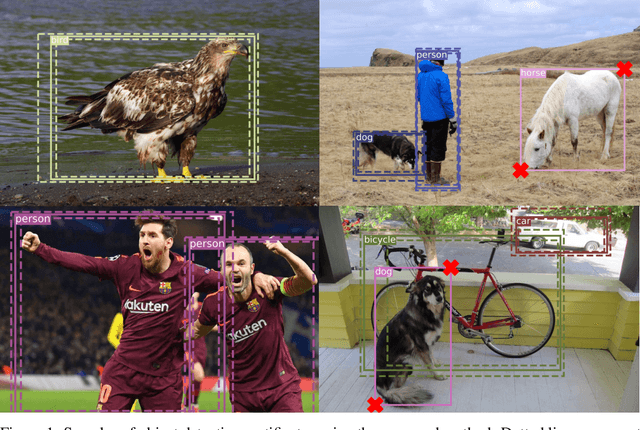

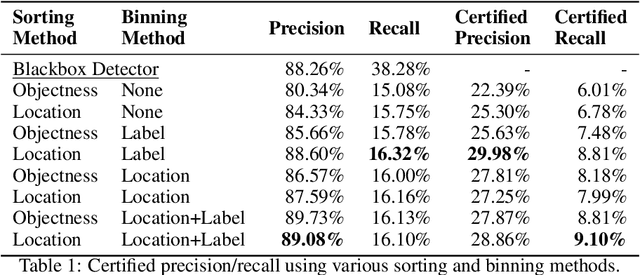

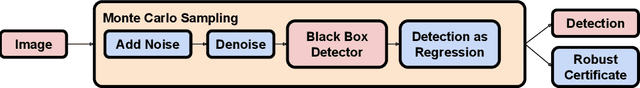

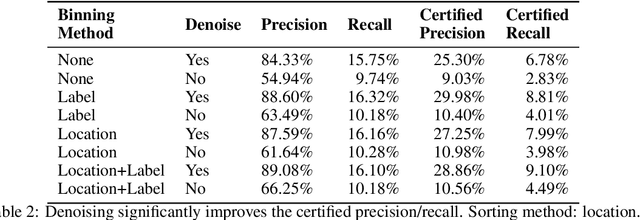

Detection as Regression: Certified Object Detection by Median Smoothing

Jul 07, 2020

Abstract:Despite the vulnerability of object detectors to adversarial attacks, very few defenses are known to date. While adversarial training can improve the empirical robustness of image classifiers, a direct extension to object detection is very expensive. This work is motivated by recent progress on certified classification by randomized smoothing. We start by presenting a reduction from object detection to a regression problem. Then, to enable certified regression, where standard mean smoothing fails, we propose median smoothing, which is of independent interest. We obtain the first model-agnostic, training-free, and certified defense for object detection against $\ell_2$-bounded attacks.
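The contrast between mean and median smoothing can be sketched with a Monte Carlo estimate: evaluate a regression function at Gaussian-perturbed copies of the input and aggregate the outputs. The toy function below returns an extreme value on a small region, which is one intuition (assumed here, not taken from the paper) for why the mean is fragile for unbounded regression outputs while the median is not. The sketch computes no certificate, and all names and parameters are hypothetical.

```python
import random
import statistics

def smooth(f, x, reducer, sigma=0.25, n_samples=1001, seed=0):
    """Aggregate f over Gaussian perturbations of x with the given reducer."""
    rng = random.Random(seed)
    outputs = []
    for _ in range(n_samples):
        noisy = [xi + rng.gauss(0.0, sigma) for xi in x]
        outputs.append(f(noisy))
    return reducer(outputs)

def spiky_regressor(x):
    """Toy regressor: the identity on x[0], except for a huge spike when x[0] > 0.5."""
    return 1e6 if x[0] > 0.5 else x[0]

x0 = [0.0]
# Same seed, so both estimates aggregate the same noisy evaluations.
smoothed_mean = smooth(spiky_regressor, x0, statistics.mean)
smoothed_median = smooth(spiky_regressor, x0, statistics.median)
```

With these settings a small fraction of noisy samples lands in the spike region: they dominate `smoothed_mean` but leave `smoothed_median` close to the unperturbed value.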

Certifying Strategyproof Auction Networks

Jun 15, 2020

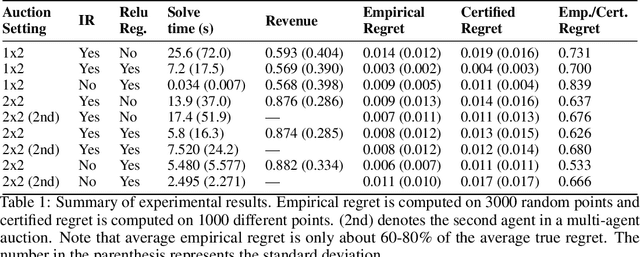

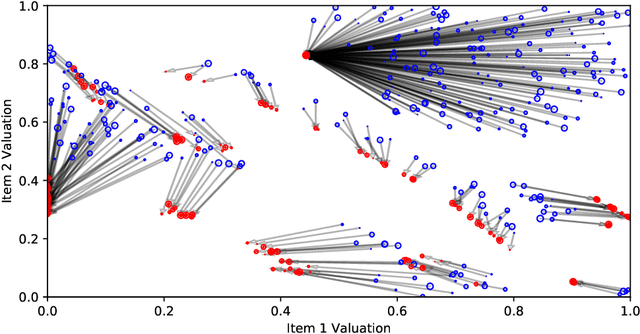

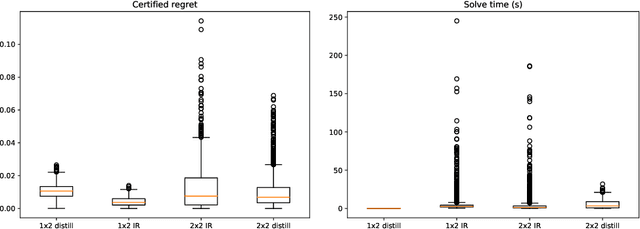

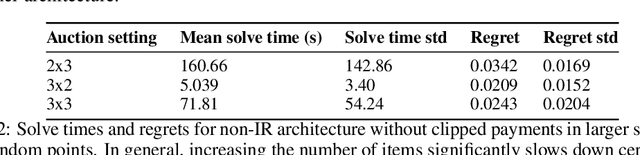

Abstract:Optimal auctions maximize a seller's expected revenue subject to individual rationality and strategyproofness for the buyers. Myerson's seminal work in 1981 settled the case of auctioning a single item; however, subsequent decades of work have yielded little progress moving beyond a single item, leaving the design of revenue-maximizing auctions as a central open problem in the field of mechanism design. A recent thread of work in "differentiable economics" has used tools from modern deep learning to instead learn good mechanisms. We focus on the RegretNet architecture, which can represent auctions with arbitrary numbers of items and participants; it is trained to be empirically strategyproof, but the property is never exactly verified, leaving potential loopholes for market participants to exploit. We propose ways to explicitly verify strategyproofness under a particular valuation profile using techniques from the neural network verification literature. Doing so requires making several modifications to the RegretNet architecture in order to represent it exactly in an integer program. We train our network and produce certificates in several settings, including settings for which the optimal strategyproof mechanism is not known.

Headless Horseman: Adversarial Attacks on Transfer Learning Models

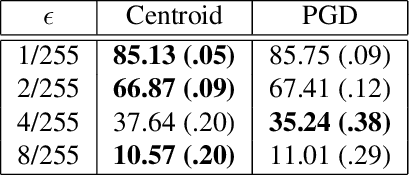

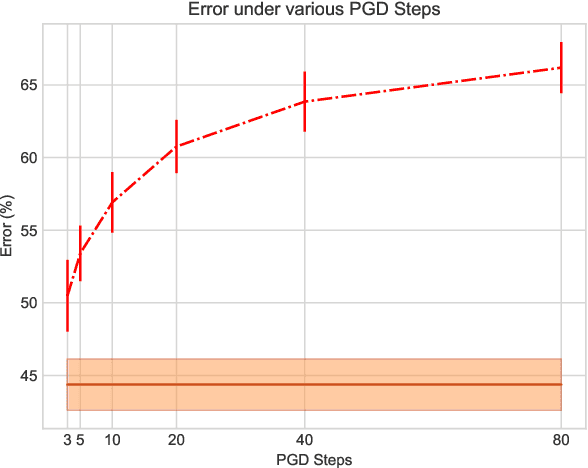

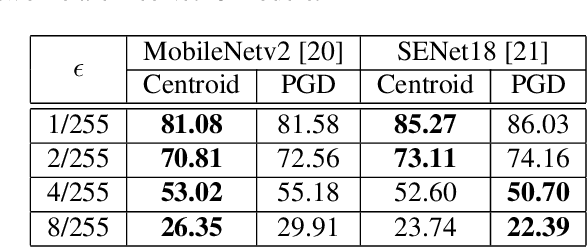

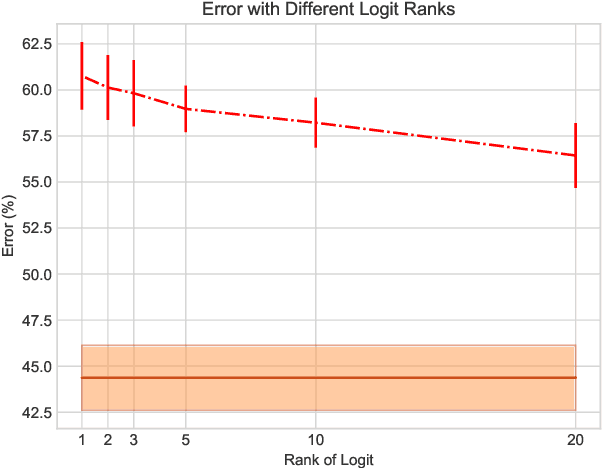

Apr 20, 2020

Abstract:Transfer learning facilitates the training of task-specific classifiers using pre-trained models as feature extractors. We present a family of transferable adversarial attacks against such classifiers, generated without access to the classification head; we call these headless attacks. We first demonstrate successful transfer attacks against a victim network using only its feature extractor. This motivates the introduction of a label-blind adversarial attack. This transfer attack method does not require any information about the class-label space of the victim. Our attack lowers the accuracy of a ResNet18 trained on CIFAR10 by over 40%.

Mix and Match: Markov Chains & Mixing Times for Matching in Rideshare

Nov 30, 2019

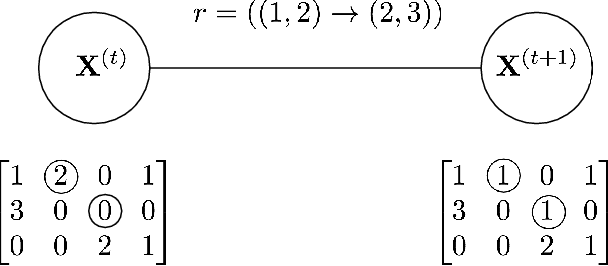

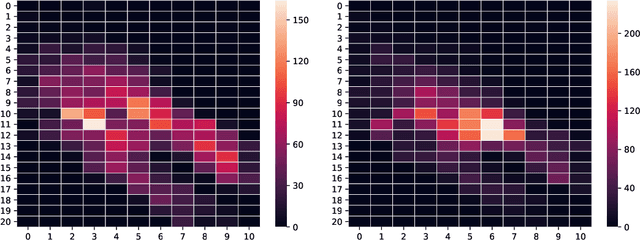

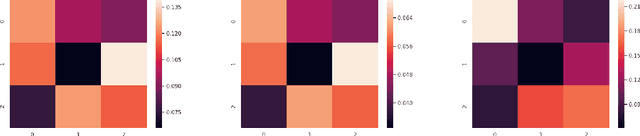

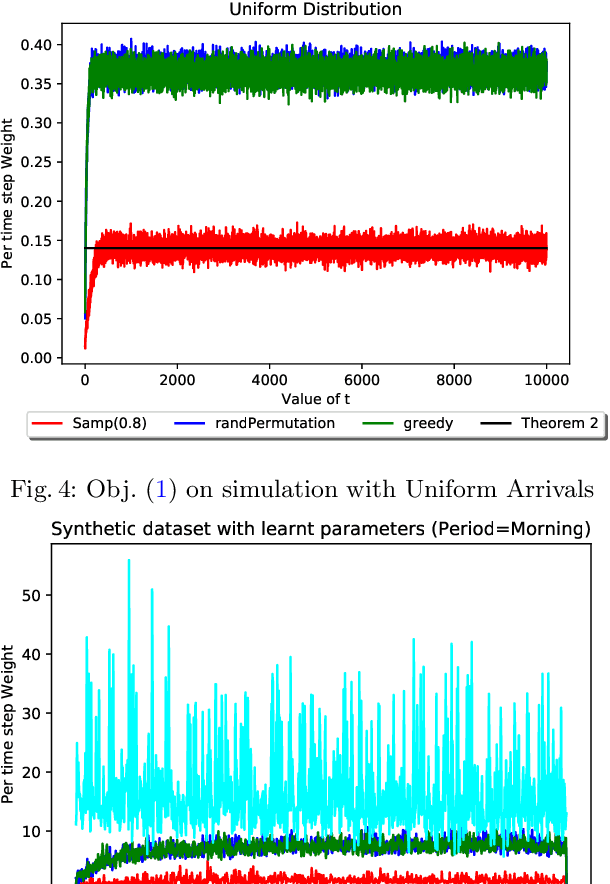

Abstract:Rideshare platforms such as Uber and Lyft dynamically dispatch drivers to match riders' requests. We model the dispatching process in rideshare as a Markov chain that takes into account the geographic mobility of both drivers and riders over time. Prior work explores dispatch policies in the limit of such Markov chains; we characterize when this limit assumption is valid, under a variety of natural dispatch policies. We give explicit bounds on convergence in general, and exact (including constants) convergence rates for special cases. Then, on simulated and real transit data, we show that our bounds characterize convergence rates, even when the necessary theoretical assumptions are relaxed. Additionally, these policies compare well against a standard reinforcement learning algorithm which optimizes for profit without any convergence properties.
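The notion of convergence to the limit of a Markov chain can be illustrated on a toy example: measure the total variation distance between the distribution after t steps and the stationary distribution, and report the first t at which it falls below a tolerance from every starting state. The three-state chain below is a made-up example, not the rideshare model from the paper, and the function names are assumptions.

```python
def step(dist, P):
    """One step of the chain: row vector `dist` times transition matrix `P`."""
    n = len(P)
    return [sum(dist[i] * P[i][j] for i in range(n)) for j in range(n)]

def total_variation(p, q):
    """Total variation distance between two distributions on the same states."""
    return 0.5 * sum(abs(a - b) for a, b in zip(p, q))

def mixing_time(P, stationary, eps, max_steps=10_000):
    """Smallest t at which every point-mass start is within eps of stationary."""
    n = len(P)
    # The worst-case starting distribution is a point mass, so track all n of them.
    dists = [[1.0 if j == i else 0.0 for j in range(n)] for i in range(n)]
    for t in range(max_steps + 1):
        if max(total_variation(d, stationary) for d in dists) <= eps:
            return t
        dists = [step(d, P) for d in dists]
    return None  # did not mix within max_steps

# Hypothetical lazy random walk on three locations.
P = [[0.50, 0.50, 0.00],
     [0.25, 0.50, 0.25],
     [0.00, 0.50, 0.50]]
stationary = [0.25, 0.50, 0.25]
t_loose = mixing_time(P, stationary, eps=0.3)
t_tight = mixing_time(P, stationary, eps=0.01)
```

A tighter tolerance requires more steps; for this chain the distance halves with each step after the first, reflecting its second-largest eigenvalue of 0.5.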
