Ki Myung Brian Lee

Kernel-SDF: An Open-Source Library for Real-Time Signed Distance Function Estimation using Kernel Regression

Mar 31, 2026

Abstract:Accurate and efficient environment representation is crucial for robotic applications such as motion planning, manipulation, and navigation. Signed distance functions (SDFs) have emerged as a powerful representation for encoding distance to obstacle boundaries, enabling efficient collision checking and trajectory optimization. However, existing SDF reconstruction methods are limited when it comes to large-scale, uncertainty-aware SDF estimation from streaming sensor data. Voxel-based approaches are limited by fixed resolution and lack uncertainty quantification, neural network methods require significant training time, and Gaussian process (GP) methods struggle with scalability, sign estimation, and uncertainty calibration. In this letter, we develop an open-source library, Kernel-SDF, which uses kernel regression to learn SDFs with calibrated uncertainty quantification in real time. Our approach consists of a front-end that learns a continuous occupancy field via kernel regression, and a back-end that estimates an accurate SDF via GP regression using samples from the surface boundaries found by the front-end. Kernel-SDF provides accurate SDF values, SDF gradients, SDF uncertainty, and mesh construction in real time. Evaluation results show that Kernel-SDF achieves higher accuracy than existing methods while maintaining real-time performance, making it suitable for robotics applications that require reliable, uncertainty-aware geometric information.
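The front-end idea (a continuous occupancy field from kernel regression, then surface samples where it crosses the boundary) can be illustrated in 1-D. This is a minimal sketch assuming a Nadaraya-Watson estimator with a Gaussian kernel and a 0.5 occupancy level for the surface; `kernel_occupancy`, `surface_crossings`, and `bandwidth` are hypothetical names, not the Kernel-SDF API, and the GP back-end that turns boundary samples into an SDF is not reproduced here.

```python
import math

def kernel_occupancy(x, samples, bandwidth=0.2):
    """Nadaraya-Watson estimate of occupancy probability at x.

    samples: list of (position, label) pairs, label 1.0 = occupied, 0.0 = free.
    A Gaussian kernel is assumed here; the library's actual kernel may differ.
    """
    num = 0.0
    den = 0.0
    for xi, yi in samples:
        w = math.exp(-0.5 * ((x - xi) / bandwidth) ** 2)
        num += w * yi
        den += w
    return num / den if den > 0.0 else 0.5  # no evidence -> uninformative 0.5

def surface_crossings(samples, lo, hi, steps=200, level=0.5):
    """Locate boundary points where the estimated occupancy crosses `level`."""
    xs = [lo + (hi - lo) * i / steps for i in range(steps + 1)]
    occ = [kernel_occupancy(x, samples) for x in xs]
    crossings = []
    for i in range(steps):
        a, b = occ[i] - level, occ[i + 1] - level
        if a == 0.0:
            crossings.append(xs[i])
        elif a * b < 0.0:
            # Linear interpolation between the two bracketing grid points.
            t = a / (a - b)
            crossings.append(xs[i] + t * (xs[i + 1] - xs[i]))
    return crossings
```

With free samples on [0, 0.9] and occupied samples on [1.0, 1.9], the single crossing lands near 0.95; in the described pipeline, points like this would feed the GP back-end.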

PhysGraph: Physically-Grounded Graph-Transformer Policies for Bimanual Dexterous Hand-Tool-Object Manipulation

Mar 02, 2026

Abstract:Bimanual dexterous manipulation for tool use remains a formidable challenge in robotics due to the high-dimensional state space and complicated contact dynamics. Existing methods naively represent the entire system state as a single configuration vector, disregarding the rich structural and topological information inherent to articulated hands. We present PhysGraph, a physically-grounded graph transformer policy designed explicitly for challenging bimanual hand-tool-object manipulation. Unlike prior work, we represent the bimanual system as a kinematic graph and introduce per-link tokenization to preserve fine-grained local state information. We propose a physically-grounded bias generator that injects structural priors directly into the attention mechanism, including kinematic spatial distance, dynamic contact states, geometric proximity, and anatomical properties. This allows the policy to reason explicitly about physical interactions rather than learning them implicitly from sparse rewards. Extensive experiments show that PhysGraph significantly outperforms the ManipTrans baseline in manipulation precision and task success rate while using only 51% of ManipTrans's parameters. Furthermore, the inherent topological flexibility of our architecture enables qualitative zero-shot transfer to unseen tool/object geometries, and the architecture is general enough to be trained on three robotic hands (Shadow, Allegro, Inspire).
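Injecting a structural prior into attention usually means adding a bias matrix to the attention logits, softmax(QK^T/sqrt(d) + B)V. The sketch below shows only the kinematic-distance term, assuming (hypothetically) a bias of -beta times the hop count between links in the kinematic tree; the contact, proximity, and anatomical terms of the actual bias generator, and names such as `kinematic_bias` and `beta`, are illustrative assumptions rather than PhysGraph's implementation.

```python
import math
from collections import deque

def hop_distances(num_nodes, edges):
    """All-pairs shortest-path hop counts on an undirected kinematic graph (BFS)."""
    adj = [[] for _ in range(num_nodes)]
    for a, b in edges:
        adj[a].append(b)
        adj[b].append(a)
    dist = []
    for s in range(num_nodes):
        d = [math.inf] * num_nodes
        d[s] = 0
        q = deque([s])
        while q:
            u = q.popleft()
            for v in adj[u]:
                if d[v] == math.inf:
                    d[v] = d[u] + 1
                    q.append(v)
        dist.append(d)
    return dist

def kinematic_bias(dist, beta=1.0):
    # Hypothetical prior: links far apart in the tree attend to each other less.
    return [[-beta * d for d in row] for row in dist]

def biased_attention(queries, keys, values, bias):
    """Single-head attention with an additive bias: softmax(QK^T / sqrt(d) + B) V."""
    dim = len(queries[0])
    out = []
    for i, q in enumerate(queries):
        logits = [sum(qa * ka for qa, ka in zip(q, k)) / math.sqrt(dim) + bias[i][j]
                  for j, k in enumerate(keys)]
        m = max(logits)  # subtract the max for a numerically stable softmax
        w = [math.exp(l - m) for l in logits]
        z = sum(w)
        out.append([sum(w[j] * values[j][c] for j in range(len(values))) / z
                    for c in range(len(values[0]))])
    return out
```

With all-zero queries and keys, the attention weights are determined by the bias alone, so a link in a three-link chain attends most to itself and least to the far end.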

Learning Scene-Level Signed Directional Distance Function with Ellipsoidal Priors and Neural Residuals

Mar 25, 2025

Abstract:Dense geometric environment representations are critical for autonomous mobile robot navigation and exploration. Recent work shows that implicit continuous representations of occupancy, signed distance, or radiance learned using neural networks offer advantages in reconstruction fidelity, efficiency, and differentiability over explicit discrete representations based on meshes, point clouds, and voxels. In this work, we explore a directional formulation of signed distance, called signed directional distance function (SDDF). Unlike signed distance function (SDF) and similar to neural radiance fields (NeRF), SDDF has a position and viewing direction as input. Like SDF and unlike NeRF, SDDF directly provides distance to the observed surface along the direction, rather than integrating along the view ray, allowing efficient view synthesis. To learn and predict scene-level SDDF efficiently, we develop a differentiable hybrid representation that combines explicit ellipsoid priors and implicit neural residuals. This approach allows the model to effectively handle large distance discontinuities around obstacle boundaries while preserving the ability for dense high-fidelity prediction. We show that SDDF is competitive with the state-of-the-art neural implicit scene models in terms of reconstruction accuracy and rendering efficiency, while allowing differentiable view prediction for robot trajectory optimization.
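An ellipsoid prior gives a closed-form directional distance: the distance along a view direction v from a point p is the smallest non-negative root t of the ray-ellipsoid quadratic. The sketch below assumes axis-aligned ellipsoids, a unit direction, and a residual that is simply added to the nearest prior hit; `ray_ellipsoid_distance`, `sddf_prediction`, and the `residual` callback (standing in for the neural network) are hypothetical and do not reproduce the paper's exact formulation or sign convention.

```python
import math

def ray_ellipsoid_distance(p, v, center, radii):
    """Distance t >= 0 along unit direction v from p to an axis-aligned ellipsoid surface.

    Solves sum(((p + t v - c) / r)^2) = 1; returns math.inf if the ray misses.
    """
    a = sum((vi / ri) ** 2 for vi, ri in zip(v, radii))
    b = 2.0 * sum((pi - ci) * vi / ri ** 2 for pi, ci, vi, ri in zip(p, center, v, radii))
    c = sum(((pi - ci) / ri) ** 2 for pi, ci, ri in zip(p, center, radii)) - 1.0
    disc = b * b - 4.0 * a * c
    if disc < 0.0:
        return math.inf
    sq = math.sqrt(disc)
    for t in sorted(((-b - sq) / (2.0 * a), (-b + sq) / (2.0 * a))):
        if t >= 0.0:
            return t
    return math.inf

def sddf_prediction(p, v, ellipsoids, residual=lambda p, v: 0.0):
    """Hybrid prediction: nearest ellipsoid hit along v plus a learned correction."""
    prior = min(ray_ellipsoid_distance(p, v, c, r) for c, r in ellipsoids)
    return prior + residual(p, v) if math.isfinite(prior) else prior
```

Because the prior already jumps when the ray switches from one ellipsoid to another, the residual only has to model smooth corrections, which is one way to read the abstract's claim about handling large distance discontinuities.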

DynaGSLAM: Real-Time Gaussian-Splatting SLAM for Online Rendering, Tracking, Motion Predictions of Moving Objects in Dynamic Scenes

Mar 15, 2025

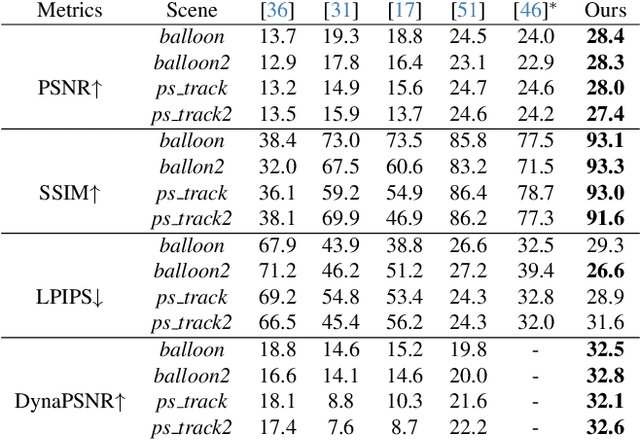

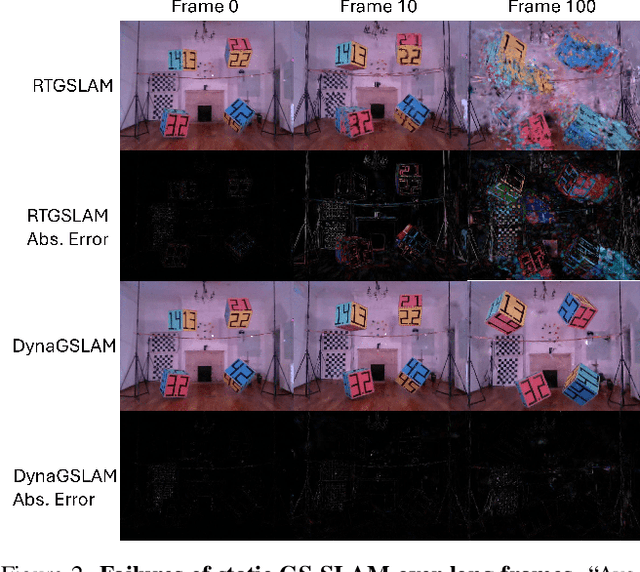

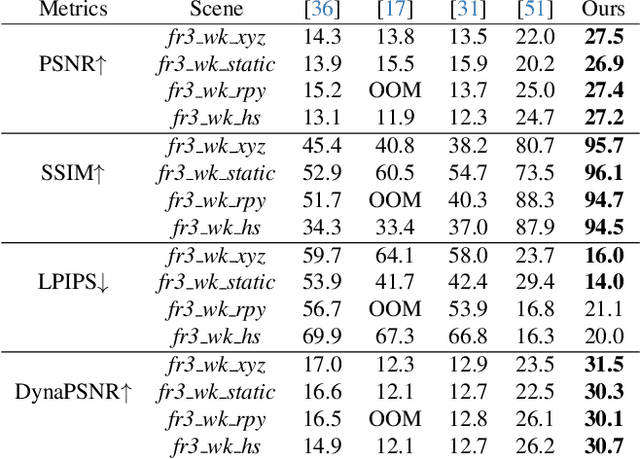

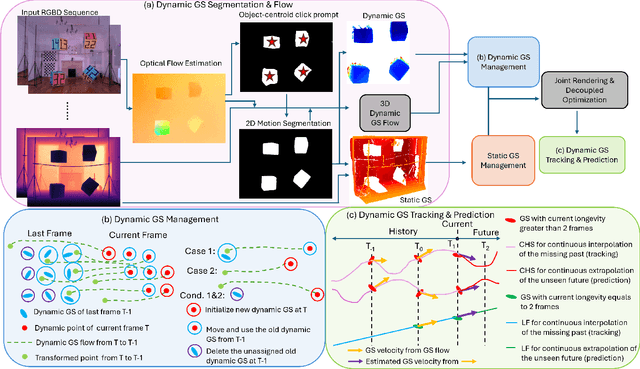

Abstract:Simultaneous Localization and Mapping (SLAM) is one of the most important environment-perception and navigation algorithms for computer vision, robotics, and autonomous cars/drones. Hence, high-quality and fast mapping is a fundamental problem. With the advent of 3D Gaussian Splatting (3DGS) as an explicit representation with excellent rendering quality and speed, state-of-the-art (SOTA) works have introduced GS to SLAM. Compared to classical point-cloud SLAM, GS-SLAM generates photometric information by learning from input camera views and synthesizes unseen views with high-quality textures. However, these GS-SLAM methods fail when moving objects in the scene violate the static assumption of bundle adjustment. The failed updates of moving Gaussians affect the static Gaussians and contaminate the full map over long sequences of frames. Although some concurrent works consider moving objects in GS-SLAM, they simply detect and remove the moving regions from GS rendering ("anti" dynamic GS-SLAM), so only the static background benefits from GS. To this end, we propose "DynaGSLAM", the first real-time GS-SLAM that achieves high-quality online GS rendering, tracking, and motion prediction of moving objects in dynamic scenes while jointly estimating accurate ego motion. DynaGSLAM outperforms SOTA static and "anti" dynamic GS-SLAM on three real dynamic datasets, while remaining speed- and memory-efficient in practice.

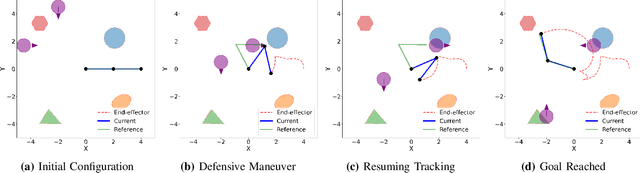

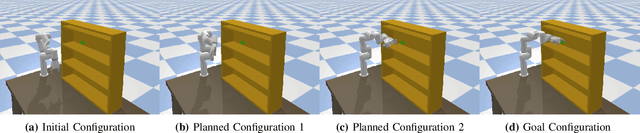

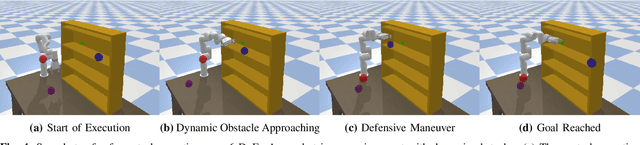

Neural Configuration-Space Barriers for Manipulation Planning and Control

Mar 06, 2025

Abstract:Planning and control for high-dimensional robot manipulators in cluttered, dynamic environments require both computational efficiency and robust safety guarantees. Inspired by recent advances in learning configuration-space distance functions (CDFs) as robot body representations, we propose a unified framework for motion planning and control that formulates safety constraints as CDF barriers. A CDF barrier approximates the local free configuration space, substantially reducing the number of collision-checking operations during motion planning. However, learning a CDF barrier with a neural network and relying on online sensor observations introduce uncertainties that must be considered during control synthesis. To address this, we develop a distributionally robust CDF barrier formulation for control that explicitly accounts for modeling errors and sensor noise without assuming a known underlying distribution. Simulations and hardware experiments on a 6-DoF xArm manipulator show that our neural CDF barrier formulation enables efficient planning and robust real-time safe control in cluttered and dynamic environments, relying only on onboard point-cloud observations.
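A barrier constraint on a configuration-space distance can be enforced by a safety filter that minimally modifies a nominal command. The sketch below is the textbook closed-form solution of the single-constraint quadratic program min ||u - u_ref||^2 subject to grad_h . u + alpha h >= margin, assuming single-integrator joint dynamics q_dot = u and a barrier h such as a CDF value minus a clearance. The fixed `margin` is a hypothetical stand-in for the paper's distributionally robust tightening, which is not reproduced here, and `cbf_filter` is an illustrative name.

```python
def cbf_filter(u_ref, h, grad_h, alpha=1.0, margin=0.0):
    """Minimally modify u_ref so that grad_h . u + alpha * h - margin >= 0.

    Assumes single-integrator joint dynamics q_dot = u and a single barrier h(q),
    e.g. a CDF value minus a safety threshold. Closed-form projection onto the
    half-space defined by the linearised barrier condition.
    """
    slack = sum(g * u for g, u in zip(grad_h, u_ref)) + alpha * h - margin
    if slack >= 0.0:
        return list(u_ref)  # nominal command is already safe
    norm_sq = sum(g * g for g in grad_h)
    if norm_sq == 0.0:
        raise ValueError("barrier gradient vanishes; constraint cannot be enforced")
    lam = -slack / norm_sq
    return [u + lam * g for u, g in zip(u_ref, grad_h)]
```

For example, a command of [-1, 0] heading into an obstacle with h = 0.1 and grad_h = [1, 0] is reduced to about [-0.1, 0], which makes the barrier condition hold with equality.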

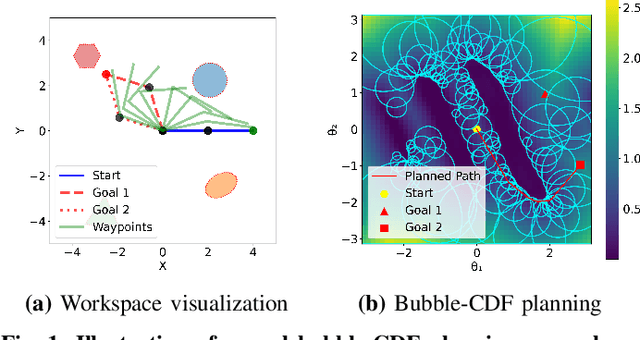

Safe Bubble Cover for Motion Planning on Distance Fields

Aug 23, 2024

Abstract:We consider the problem of planning collision-free trajectories on distance fields. Our key observation is that querying a distance field at one configuration reveals a region of safe space whose radius is given by the distance value, obviating the need for additional collision checking within the safe region. We refer to such regions as safe bubbles, and show that safe bubbles can be obtained from any Lipschitz-continuous safety constraint. Inspired by sampling-based planning algorithms, we present three algorithms for constructing a safe bubble cover of free space, named bubble roadmap (BRM), rapidly exploring bubble graph (RBG), and expansive bubble graph (EBG). The bubble sampling algorithms are combined with a hierarchical planning method that first computes a discrete path of bubbles, followed by a continuous path within the bubbles computed via convex optimization. Experimental results show that the bubble-based methods yield up to 5-10 times cost reduction relative to conventional baselines while simultaneously reducing computational effort by orders of magnitude.
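The safe-bubble radius follows from the Lipschitz property: if d is L-Lipschitz and d(q) > 0, then d(q') >= d(q) - L||q' - q|| > 0 whenever ||q' - q|| < d(q)/L. The sketch below implements only this primitive plus the two geometric tests a bubble graph needs; the BRM, RBG, and EBG sampling strategies and the convex-optimization stage are not reproduced, and the function names are hypothetical.

```python
import math

def safe_bubble(q, distance_fn, lipschitz=1.0):
    """Return (center, radius) of a ball guaranteed collision-free.

    If the safety constraint d is L-Lipschitz and d(q) > 0, then d(q') > 0 for every
    q' with ||q' - q|| < d(q) / L, so no further collision checks are needed inside it.
    """
    d = distance_fn(q)
    return (tuple(q), max(d, 0.0) / lipschitz)

def bubbles_overlap(b1, b2):
    """Two bubbles can be joined by a graph edge if their open balls intersect."""
    (c1, r1), (c2, r2) = b1, b2
    return math.dist(c1, c2) < r1 + r2

def segment_in_bubble(a, b, bubble):
    """A ball is convex, so a segment lies inside it iff both endpoints do."""
    c, r = bubble
    return math.dist(a, c) < r and math.dist(b, c) < r
```

For a unit-disc obstacle at the origin with d(q) = ||q|| - 1, a query at (3, 0) certifies a bubble of radius 2; a segment from (3, 0) to (1, 0) reaches the obstacle boundary and is correctly rejected.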

Decentralised Active Perception in Continuous Action Spaces for the Coordinated Escort Problem

May 03, 2023

Abstract:We consider the coordinated escort problem, where a decentralised team of supporting robots implicitly assists the mission of higher-value principal robots. The defining challenge is how to evaluate the effect of supporting robots' actions on the principal robots' mission. To capture this effect, we define two novel auxiliary reward functions for supporting robots, called satisfaction improvement and satisfaction entropy, which compute the improvement in the probability of mission success and the uncertainty thereof, respectively. Given these reward functions, we coordinate the entire team of principal and supporting robots using the decentralised cross entropy method (Dec-CEM), a new extension of CEM to multi-agent systems based on the product distribution approximation. In a simulated object avoidance scenario, our planning framework demonstrates up to a two-fold improvement in task satisfaction over conventional decoupled information gathering. The significance of our results is that they introduce a new family of algorithmic problems that will enable important new practical applications of heterogeneous multi-robot systems.
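The product distribution approximation lets each agent keep its own sampling distribution while the team is scored jointly. The sketch below simulates that structure in a single process: each agent holds an independent 1-D Gaussian, joint actions are sampled as a product, elites are chosen on a shared team reward, and each agent refits only its own marginal. Message passing between agents, the satisfaction-based rewards, and the names `dec_cem` and `team_reward` are assumptions for illustration, not the paper's Dec-CEM implementation.

```python
import random
import statistics

def dec_cem(team_reward, num_agents, iters=30, samples=64, elite_frac=0.2, seed=0):
    """Cross-entropy method over a product of per-agent 1-D Gaussians.

    Each agent keeps its own (mean, std); joint actions are sampled independently
    per agent, scored with a shared team reward, and every agent refits its own
    marginal to the elite joint samples. Communication is abstracted away here.
    """
    rng = random.Random(seed)
    means = [0.0] * num_agents
    stds = [1.0] * num_agents
    n_elite = max(2, int(elite_frac * samples))  # stdev needs at least two points
    for _ in range(iters):
        batch = [[rng.gauss(means[i], stds[i]) for i in range(num_agents)]
                 for _ in range(samples)]
        batch.sort(key=team_reward, reverse=True)
        elites = batch[:n_elite]
        for i in range(num_agents):
            column = [joint[i] for joint in elites]
            means[i] = statistics.fmean(column)
            stds[i] = max(statistics.stdev(column), 1e-3)  # floor keeps some exploration
    return means
```

On a separable quadratic team reward with optimum (0.5, -0.5), the per-agent means converge close to the joint optimum even though no agent ever represents the joint distribution explicitly.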

Topological Trajectory Prediction with Homotopy Classes

Jan 24, 2023

Abstract:Trajectory prediction in a cluttered environment is key to many important robotics tasks such as autonomous navigation. However, there are an infinite number of possible trajectories to consider. To simplify the space of trajectories under consideration, we utilise homotopy classes to partition the space into countably many mathematically equivalent classes. All members within a class demonstrate identical high-level motion with respect to the environment, e.g., travelling above or below an obstacle. This allows high-level prediction of a trajectory in terms of a sparse label identifying its homotopy class. We therefore present a light-weight learning framework based on variable-order Markov processes to learn and predict homotopy classes and thus high-level agent motion. By informing a Gaussian Mixture Model (GMM) with our homotopy class predictions, we see great improvements in low-level trajectory prediction compared to a naive GMM on a real dataset.
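Once trajectories are reduced to sequences of discrete homotopy symbols, a variable-order Markov model predicts the next symbol from the longest previously seen context. The sketch below uses simple longest-context backoff with frequency counts; the symbol encoding (for instance, one symbol per obstacle-side crossing), the `VariableOrderMarkov` class, and `max_order` are hypothetical, and the paper's exact variable-order model may use a different smoothing or context-selection rule.

```python
from collections import Counter, defaultdict

class VariableOrderMarkov:
    """Longest-context-match predictor over discrete symbols.

    Each symbol could stand for, e.g., passing a particular side of an obstacle; a
    trajectory's homotopy class is then summarised by its symbol word. This simple
    backoff scheme is an illustrative assumption, not the paper's exact model.
    """

    def __init__(self, max_order=3):
        self.max_order = max_order
        self.counts = defaultdict(Counter)  # context tuple -> next-symbol counts

    def fit(self, sequences):
        for seq in sequences:
            for i, sym in enumerate(seq):
                for k in range(self.max_order + 1):
                    if i - k < 0:
                        break
                    self.counts[tuple(seq[i - k:i])][sym] += 1
        return self

    def predict(self, history):
        """Most likely next symbol using the longest context seen in training."""
        for k in range(min(self.max_order, len(history)), -1, -1):
            context = tuple(history[len(history) - k:])
            if context in self.counts:
                return self.counts[context].most_common(1)[0][0]
        return None
```

The predicted symbol, and hence the predicted homotopy class, could then select or weight GMM components for low-level trajectory prediction, as the abstract describes.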

Motion planning in task space with Gromov-Hausdorff approximations

Sep 11, 2022

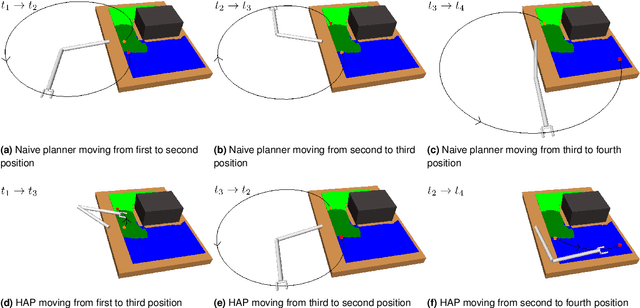

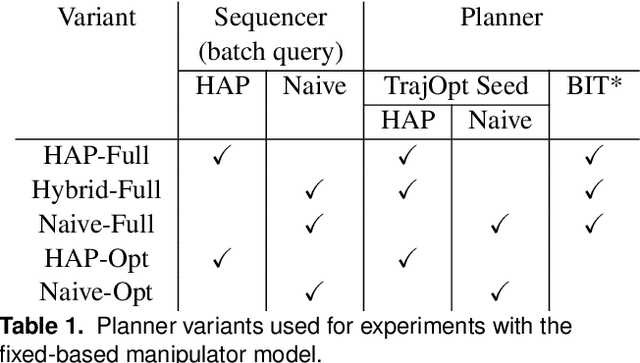

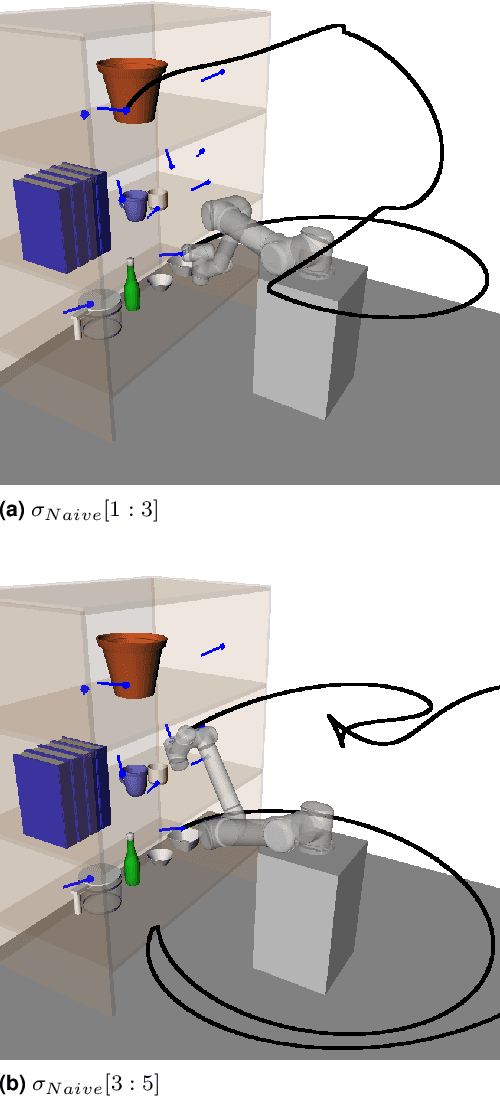

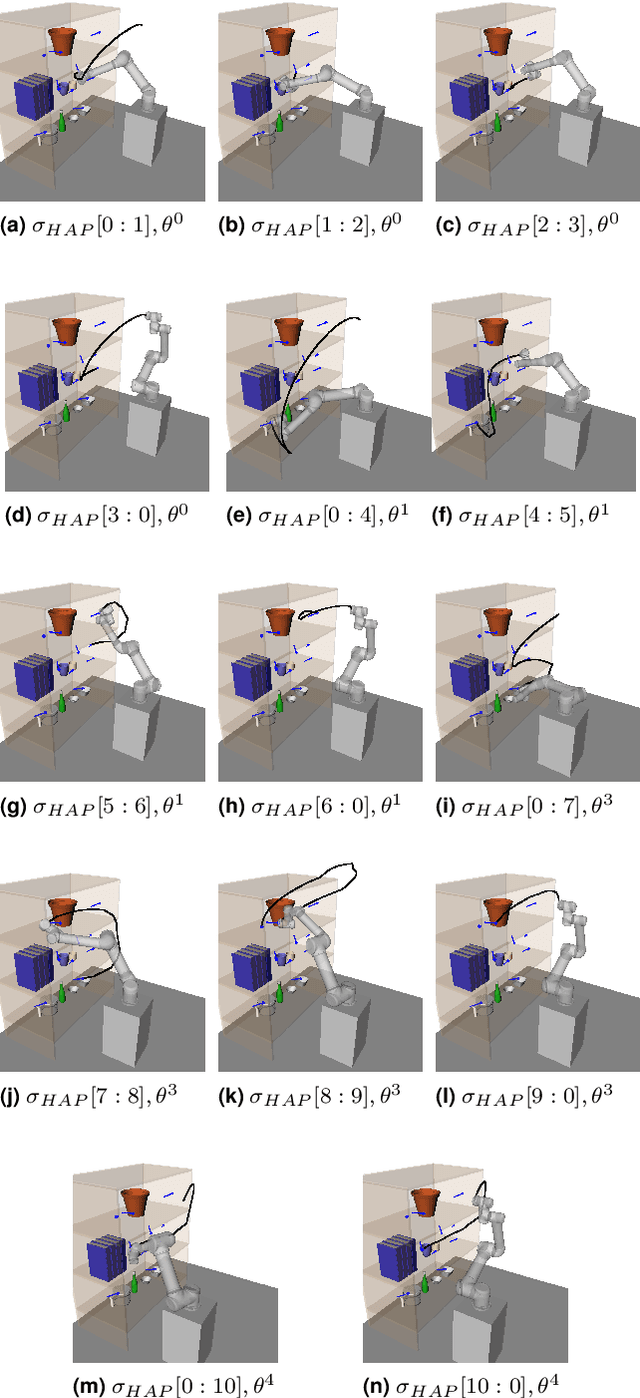

Abstract:Applications of industrial robotic manipulators such as cobots can require efficient online motion planning in environments that have a combination of static and non-static obstacles. Existing general purpose planning methods often produce poor quality solutions when available computation time is restricted, or fail to produce a solution entirely. We propose a new motion planning framework designed to operate in a user-defined task space, as opposed to the robot's workspace, that intentionally trades off workspace generality for planning and execution time efficiency. Our framework automatically constructs trajectory libraries that are queried online, similar to previous methods that exploit offline computation. Importantly, our method also offers bounded suboptimality guarantees on trajectory length. The key idea is to establish approximate isometries known as $\epsilon$-Gromov-Hausdorff approximations such that points that are close by in task space are also close in configuration space. These bounding relations further imply that trajectories can be smoothly concatenated, which enables our framework to address batch-query scenarios where the objective is to find a minimum length sequence of trajectories that visit an unordered set of goals. We evaluate our framework in simulation with several kinematic configurations, including a manipulator mounted to a mobile base. Results demonstrate that our method achieves feasible real-time performance for practical applications and suggest interesting opportunities for extending its capabilities.
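In the standard metric-geometry definition, a map f from X to Y is an epsilon-Gromov-Hausdorff approximation (epsilon-isometry) if its distortion, max over pairs of |d_X(x, x') - d_Y(f(x), f(x'))|, is at most epsilon and f(X) is an epsilon-net of Y. The sketch below checks both conditions on finite samples; here `points` would be task-space samples, `f` a hypothetical map to configurations (for example, a chosen IK solution), and `targets` a sample of the configuration-space region. These names are illustrative and not the framework's API.

```python
import itertools
import math

def distortion(points, f, dx=math.dist, dy=math.dist):
    """dis(f) = max over pairs |d_X(x, x') - d_Y(f(x), f(x'))| on a finite sample."""
    worst = 0.0
    for a, b in itertools.combinations(points, 2):
        worst = max(worst, abs(dx(a, b) - dy(f(a), f(b))))
    return worst

def is_eps_gh_approximation(points, f, targets, eps, dx=math.dist, dy=math.dist):
    """Check dis(f) <= eps and that f(points) is an eps-net of the sampled target set."""
    if distortion(points, f, dx, dy) > eps:
        return False
    images = [f(p) for p in points]
    return all(min(dy(y, fy) for fy in images) <= eps for y in targets)
```

A bound of this kind is what lets closeness in task space imply closeness in configuration space, which underlies the suboptimality and trajectory-concatenation arguments in the abstract.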

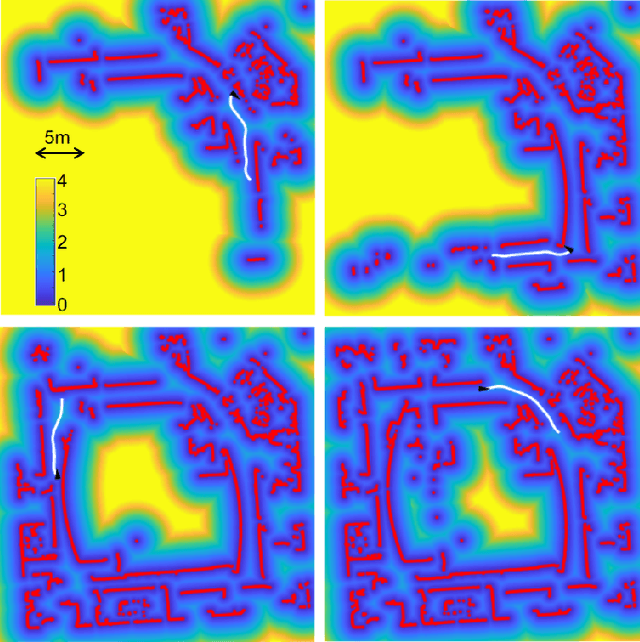

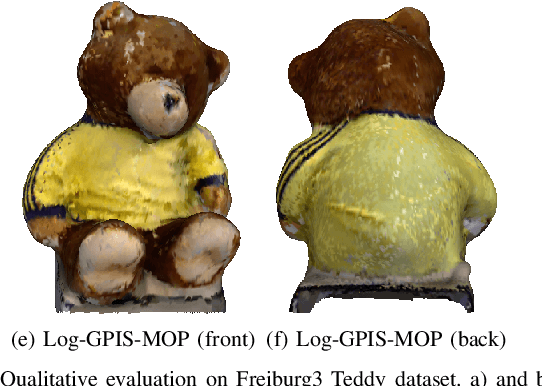

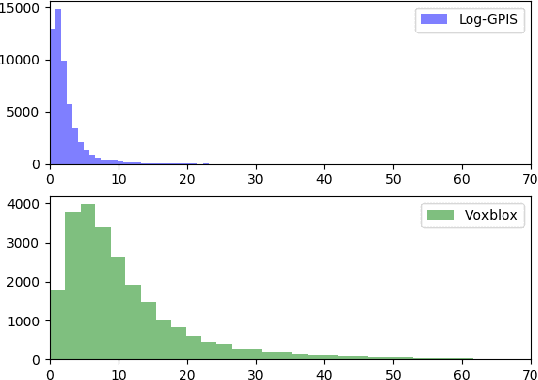

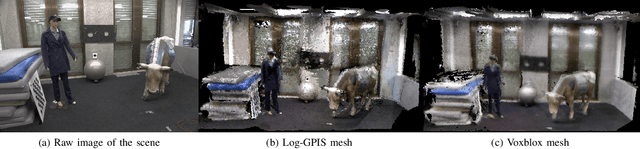

Log-GPIS-MOP: A Unified Representation for Mapping, Odometry and Planning

Jun 19, 2022

Abstract:Whereas conventional robotic systems require a dedicated scene representation for each task, this paper demonstrates that a unified representation can be used directly for multiple key tasks. We propose the Log-Gaussian Process Implicit Surface for Mapping, Odometry and Planning (Log-GPIS-MOP): a probabilistic framework for surface reconstruction, localisation and navigation based on a unified representation. Our framework applies a logarithmic transformation to a Gaussian Process Implicit Surface (GPIS) formulation to recover a global representation that accurately captures the Euclidean distance field with gradients and, at the same time, the implicit surface. By directly estimating the distance field and its gradient through Log-GPIS inference, the proposed incremental odometry technique computes the optimal alignment of an incoming frame and fuses it globally to produce a map. Concurrently, an optimisation-based planner computes a safe collision-free path using the same Log-GPIS surface representation. We validate the proposed framework on simulated and real datasets in 2D and 3D and benchmark it against state-of-the-art approaches. Our experiments show that Log-GPIS-MOP produces competitive results in sequential odometry, surface mapping and obstacle avoidance.
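The logarithmic transformation can be seen with a small GP example. Assuming (for illustration) the exponential kernel k(r) = exp(-lam r), fit a GP mean o(x) to the value 1 at surface points, then take d(x) = -ln(o(x)) / lam; for a single surface point this recovers the Euclidean distance exactly, and with several points it is an approximation that can underestimate between them. The kernel choice, `lam`, `noise`, and the helper names are assumptions, not the exact Log-GPIS-MOP formulation, and gradients and odometry are omitted.

```python
import math

def _solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting (small dense systems)."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def log_gpis_distance(surface_pts, lam=10.0, noise=1e-6):
    """Return d(x) = -ln(o(x)) / lam, where o is the GP mean fitted to o = 1 on the surface.

    Uses the exponential kernel k(r) = exp(-lam * r), for which -ln(k(r)) / lam = r.
    """
    n = len(surface_pts)
    k = [[math.exp(-lam * math.dist(p, q)) + (noise if i == j else 0.0)
          for j, q in enumerate(surface_pts)] for i, p in enumerate(surface_pts)]
    alpha = _solve(k, [1.0] * n)  # GP weights for targets o = 1 at every surface point

    def distance(x):
        occ = sum(a * math.exp(-lam * math.dist(x, p)) for a, p in zip(alpha, surface_pts))
        return -math.log(occ) / lam if occ > 0.0 else math.inf
    return distance
```

Because the same fitted field answers distance queries anywhere, one representation can serve mapping, alignment, and planning, which is the unification the abstract argues for.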
