Jeffrey Delmerico

Spatial Computing and Intuitive Interaction: Bringing Mixed Reality and Robotics Together

Feb 03, 2022

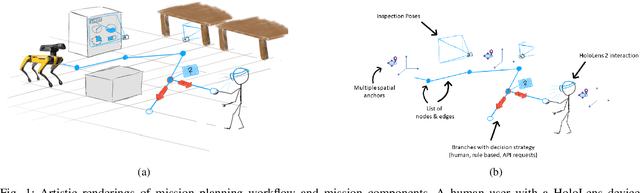

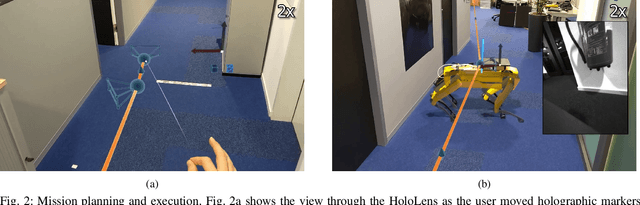

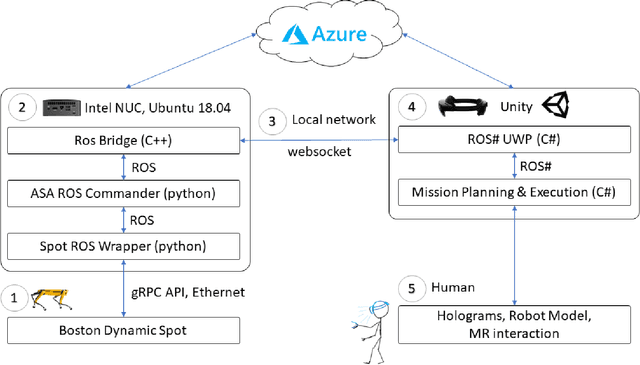

Abstract: Spatial computing -- the ability of devices to be aware of their surroundings and to represent this digitally -- offers novel capabilities in human-robot interaction. In particular, the combination of spatial computing and egocentric sensing on mixed reality devices enables them to capture and understand human actions and translate these to actions with spatial meaning, which offers exciting new possibilities for collaboration between humans and robots. This paper presents several human-robot systems that utilize these capabilities to enable novel robot use cases: mission planning for inspection, gesture-based control, and immersive teleoperation. These works demonstrate the power of mixed reality as a tool for human-robot interaction, and the potential of spatial computing and mixed reality to drive the future of human-robot interaction.

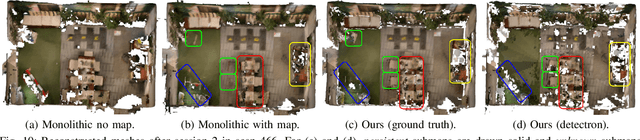

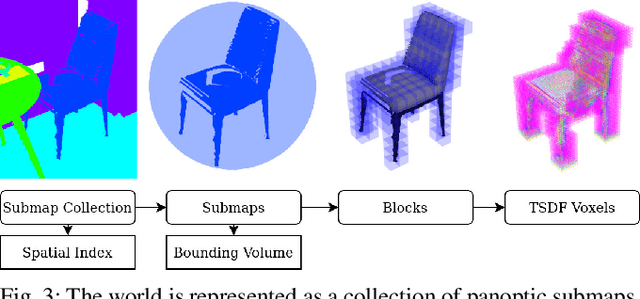

Panoptic Multi-TSDFs: a Flexible Representation for Online Multi-resolution Volumetric Mapping and Long-term Dynamic Scene Consistency

Sep 21, 2021

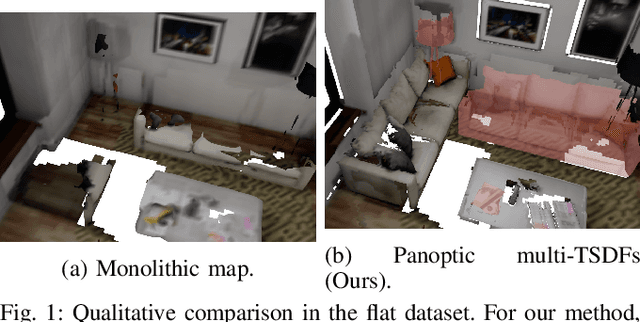

Abstract: For robotic interaction in an environment shared with multiple agents, accessing a volumetric and semantic map of the scene is crucial. However, such environments are inevitably subject to long-term changes, which the map representation needs to account for. To this end, we propose panoptic multi-TSDFs, a novel representation for multi-resolution volumetric mapping over long periods of time. By leveraging high-level information for 3D reconstruction, our proposed system allocates high resolution only where needed. In addition, through reasoning on the object level, semantic consistency over time is achieved. This makes it possible to maintain up-to-date reconstructions with high accuracy while improving coverage by incorporating and fusing previous data. We show in thorough experimental validations that our map representation can be efficiently constructed, maintained, and queried during online operation, and that the presented approach can operate robustly on real depth sensors using non-optimized panoptic segmentation as input.

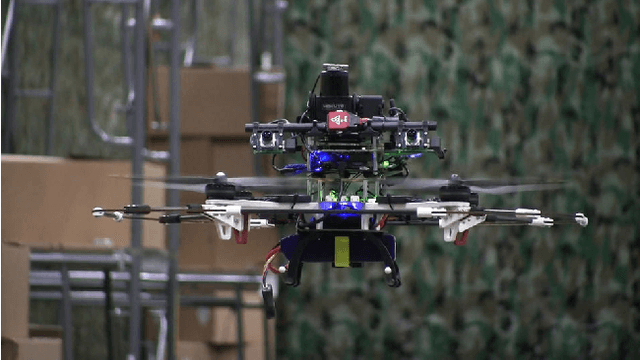

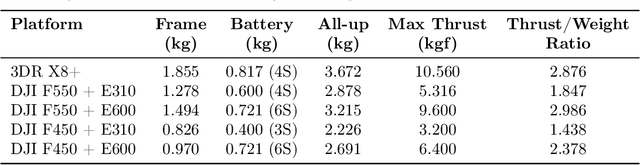

Fast, Autonomous Flight in GPS-Denied and Cluttered Environments

Dec 06, 2017

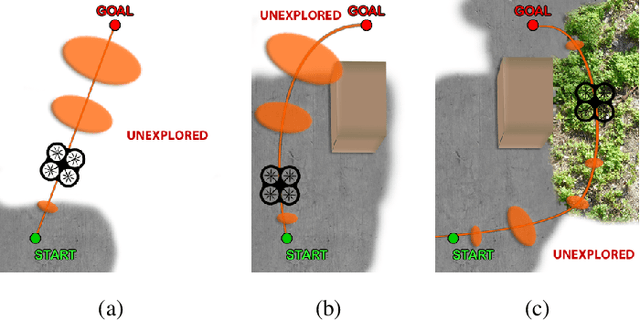

Abstract: One of the most challenging tasks for a flying robot is to autonomously navigate between target locations quickly and reliably while avoiding obstacles in its path, and with little to no a priori knowledge of the operating environment. This challenge is addressed in the present paper. We describe the system design and software architecture of our proposed solution, and showcase how all the distinct components can be integrated to enable smooth robot operation. We provide critical insight on hardware and software component selection and development, and present results from extensive experimental testing in real-world warehouse environments. Experimental testing reveals that our proposed solution can deliver fast and robust aerial robot autonomous navigation in cluttered, GPS-denied environments.

Perception-aware Path Planning

Feb 10, 2017

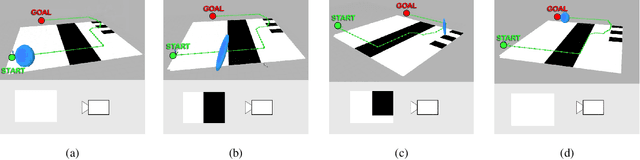

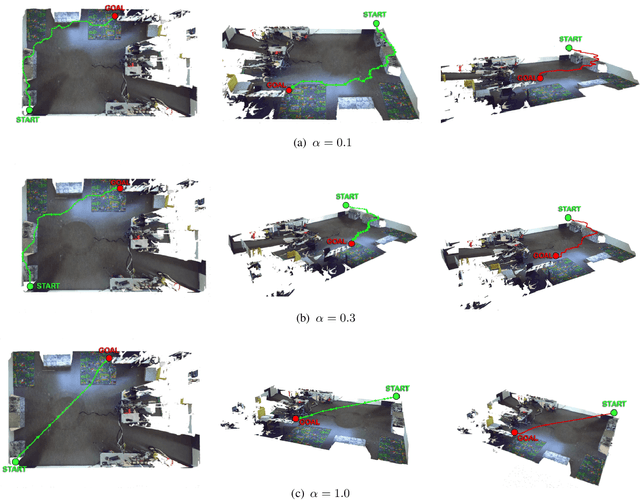

Abstract: In this paper, we give a double twist to the problem of planning under uncertainty. State-of-the-art planners seek to minimize the localization uncertainty by only considering the geometric structure of the scene. In this paper, we argue that motion planning for vision-controlled robots should be perception aware in that the robot should also favor texture-rich areas to minimize the localization uncertainty during a goal-reaching task. Thus, we describe how to optimally incorporate the photometric information (i.e., texture) of the scene, in addition to the geometric one, to compute the uncertainty of vision-based localization during path planning. To avoid the caveats of feature-based localization systems (i.e., dependence on feature type and user-defined thresholds), we use dense, direct methods. This allows us to compute the localization uncertainty directly from the intensity values of every pixel in the image. We also describe how to compute trajectories online, considering also scenarios with no prior knowledge about the map. The proposed framework is general and can easily be adapted to different robotic platforms and scenarios. The effectiveness of our approach is demonstrated with extensive experiments in both simulated and real-world environments using a vision-controlled micro aerial vehicle.