Haiping Zhu

Towards Efficient and Robust Linguistic Emotion Diagnosis for Mental Health via Multi-Agent Instruction Refinement

Jan 20, 2026Abstract:Linguistic expressions of emotions such as depression, anxiety, and trauma-related states are pervasive in clinical notes, counseling dialogues, and online mental health communities, and accurate recognition of these emotions is essential for clinical triage, risk assessment, and timely intervention. Although large language models (LLMs) have demonstrated strong generalization ability in emotion analysis tasks, their diagnostic reliability in high-stakes, context-intensive medical settings remains highly sensitive to prompt design. Moreover, existing methods face two key challenges: emotional comorbidity, in which multiple intertwined emotional states complicate prediction, and inefficient exploration of clinically relevant cues. To address these challenges, we propose APOLO (Automated Prompt Optimization for Linguistic Emotion Diagnosis), a framework that systematically explores a broader and finer-grained prompt space to improve diagnostic efficiency and robustness. APOLO formulates instruction refinement as a Partially Observable Markov Decision Process and adopts a multi-agent collaboration mechanism involving Planner, Teacher, Critic, Student, and Target roles. Within this closed-loop framework, the Planner defines an optimization trajectory, while the Teacher-Critic-Student agents iteratively refine prompts to enhance reasoning stability and effectiveness, and the Target agent determines whether to continue optimization based on performance evaluation. Experimental results show that APOLO consistently improves diagnostic accuracy and robustness across domain-specific and stratified benchmarks, demonstrating a scalable and generalizable paradigm for trustworthy LLM applications in mental healthcare.

LLMSeR: Enhancing Sequential Recommendation via LLM-based Data Augmentation

Mar 16, 2025Abstract:Sequential Recommender Systems (SRS) have become a cornerstone of online platforms, leveraging users' historical interaction data to forecast their next potential engagement. Despite their widespread adoption, SRS often grapple with the long-tail user dilemma, resulting in less effective recommendations for individuals with limited interaction records. The advent of Large Language Models (LLMs), with their profound capability to discern semantic relationships among items, has opened new avenues for enhancing SRS through data augmentation. Nonetheless, current methodologies encounter obstacles, including the absence of collaborative signals and the prevalence of hallucination phenomena.In this work, we present LLMSeR, an innovative framework that utilizes Large Language Models (LLMs) to generate pseudo-prior items, thereby improving the efficacy of Sequential Recommender Systems (SRS). To alleviate the challenge of insufficient collaborative signals, we introduce the Semantic Interaction Augmentor (SIA), a method that integrates both semantic and collaborative information to comprehensively augment user interaction data. Moreover, to weaken the adverse effects of hallucination in SRS, we develop the Adaptive Reliability Validation (ARV), a validation technique designed to assess the reliability of the generated pseudo items. Complementing these advancements, we also devise a Dual-Channel Training strategy, ensuring seamless integration of data augmentation into the SRS training process.Extensive experiments conducted with three widely-used SRS models demonstrate the generalizability and efficacy of LLMSeR.

A Semantic Mention Graph Augmented Model for Document-Level Event Argument Extraction

Mar 12, 2024

Abstract:Document-level Event Argument Extraction (DEAE) aims to identify arguments and their specific roles from an unstructured document. The advanced approaches on DEAE utilize prompt-based methods to guide pre-trained language models (PLMs) in extracting arguments from input documents. They mainly concentrate on establishing relations between triggers and entity mentions within documents, leaving two unresolved problems: a) independent modeling of entity mentions; b) document-prompt isolation. To this end, we propose a semantic mention Graph Augmented Model (GAM) to address these two problems in this paper. Firstly, GAM constructs a semantic mention graph that captures relations within and between documents and prompts, encompassing co-existence, co-reference and co-type relations. Furthermore, we introduce an ensembled graph transformer module to address mentions and their three semantic relations effectively. Later, the graph-augmented encoder-decoder module incorporates the relation-specific graph into the input embedding of PLMs and optimizes the encoder section with topology information, enhancing the relations comprehensively. Extensive experiments on the RAMS and WikiEvents datasets demonstrate the effectiveness of our approach, surpassing baseline methods and achieving a new state-of-the-art performance.

Generalized Category Discovery with Large Language Models in the Loop

Dec 18, 2023

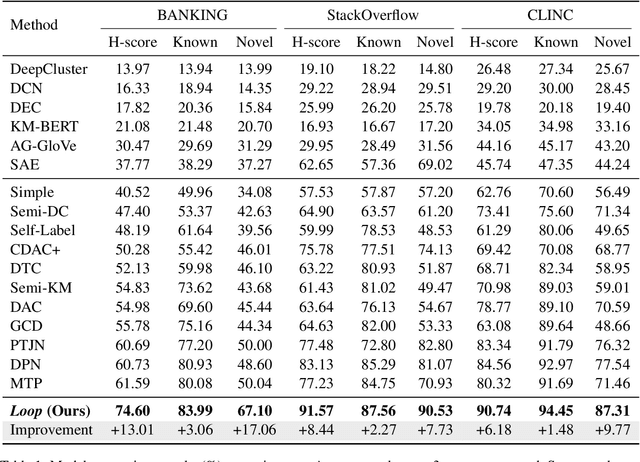

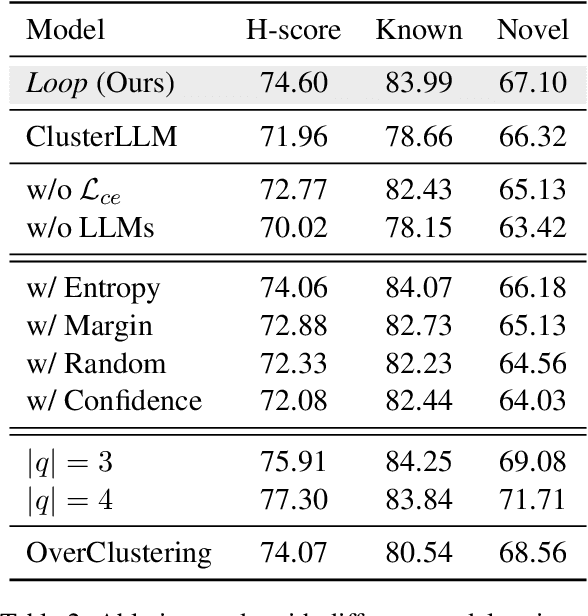

Abstract:Generalized Category Discovery (GCD) is a crucial task that aims to recognize both known and novel categories from a set of unlabeled data by utilizing a few labeled data with only known categories. Due to the lack of supervision and category information, current methods usually perform poorly on novel categories and struggle to reveal semantic meanings of the discovered clusters, which limits their applications in the real world. To mitigate above issues, we propose Loop, an end-to-end active-learning framework that introduces Large Language Models (LLMs) into the training loop, which can boost model performance and generate category names without relying on any human efforts. Specifically, we first propose Local Inconsistent Sampling (LIS) to select samples that have a higher probability of falling to wrong clusters, based on neighborhood prediction consistency and entropy of cluster assignment probabilities. Then we propose a Scalable Query strategy to allow LLMs to choose true neighbors of the selected samples from multiple candidate samples. Based on the feedback from LLMs, we perform Refined Neighborhood Contrastive Learning (RNCL) to pull samples and their neighbors closer to learn clustering-friendly representations. Finally, we select representative samples from clusters corresponding to novel categories to allow LLMs to generate category names for them. Extensive experiments on three benchmark datasets show that Loop outperforms SOTA models by a large margin and generates accurate category names for the discovered clusters. We will release our code and data after publication.

Meta Ordinal Regression Forest for Medical Image Classification with Ordinal Labels

Mar 15, 2022

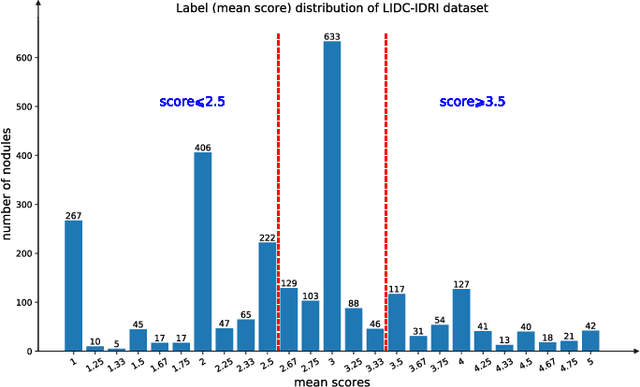

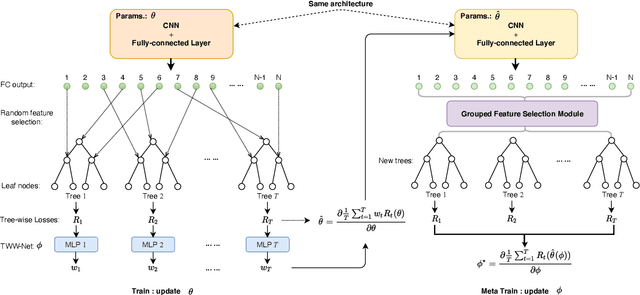

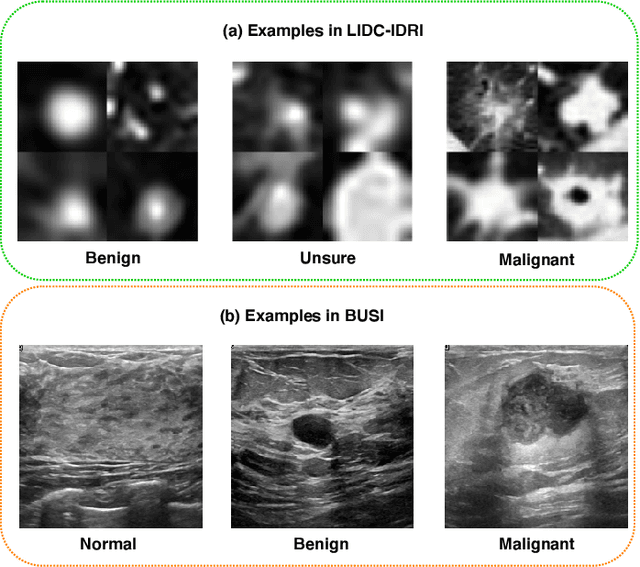

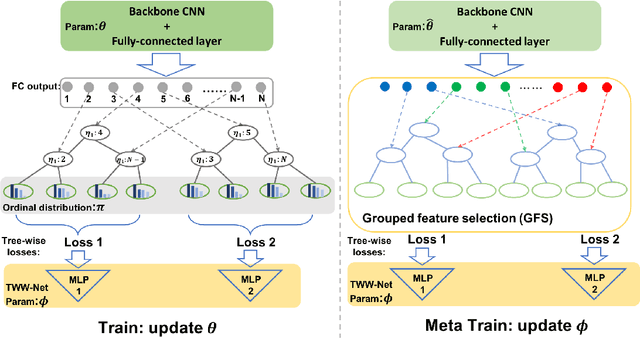

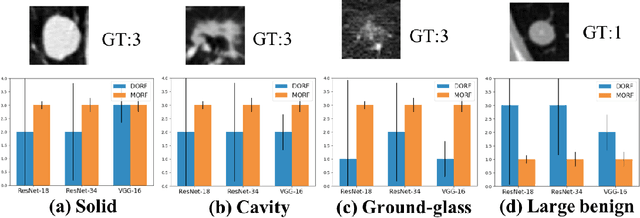

Abstract:The performance of medical image classification has been enhanced by deep convolutional neural networks (CNNs), which are typically trained with cross-entropy (CE) loss. However, when the label presents an intrinsic ordinal property in nature, e.g., the development from benign to malignant tumor, CE loss cannot take into account such ordinal information to allow for better generalization. To improve model generalization with ordinal information, we propose a novel meta ordinal regression forest (MORF) method for medical image classification with ordinal labels, which learns the ordinal relationship through the combination of convolutional neural network and differential forest in a meta-learning framework. The merits of the proposed MORF come from the following two components: a tree-wise weighting net (TWW-Net) and a grouped feature selection (GFS) module. First, the TWW-Net assigns each tree in the forest with a specific weight that is mapped from the classification loss of the corresponding tree. Hence, all the trees possess varying weights, which is helpful for alleviating the tree-wise prediction variance. Second, the GFS module enables a dynamic forest rather than a fixed one that was previously used, allowing for random feature perturbation. During training, we alternatively optimize the parameters of the CNN backbone and TWW-Net in the meta-learning framework through calculating the Hessian matrix. Experimental results on two medical image classification datasets with ordinal labels, i.e., LIDC-IDRI and Breast Ultrasound Dataset, demonstrate the superior performances of our MORF method over existing state-of-the-art methods.

Meta Ordinal Regression Forest For Learning with Unsure Lung Nodules

Dec 07, 2020

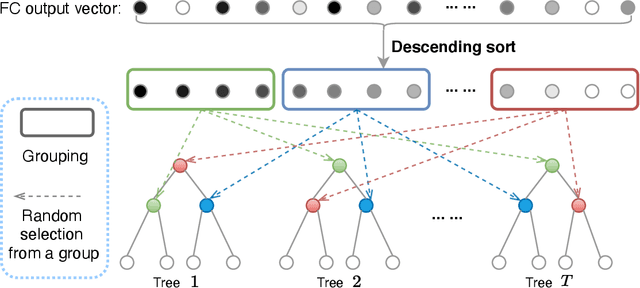

Abstract:Deep learning-based methods have achieved promising performance in early detection and classification of lung nodules, most of which discard unsure nodules and simply deal with a binary classification -- malignant vs benign. Recently, an unsure data model (UDM) was proposed to incorporate those unsure nodules by formulating this problem as an ordinal regression, showing better performance over traditional binary classification. To further explore the ordinal relationship for lung nodule classification, this paper proposes a meta ordinal regression forest (MORF), which improves upon the state-of-the-art ordinal regression method, deep ordinal regression forest (DORF), in three major ways. First, MORF can alleviate the biases of the predictions by making full use of deep features while DORF needs to fix the composition of decision trees before training. Second, MORF has a novel grouped feature selection (GFS) module to re-sample the split nodes of decision trees. Last, combined with GFS, MORF is equipped with a meta learning-based weighting scheme to map the features selected by GFS to tree-wise weights while DORF assigns equal weights for all trees. Experimental results on the LIDC-IDRI dataset demonstrate superior performance over existing methods, including the state-of-the-art DORF.

Deep Ordinal Regression Forests

Aug 07, 2020

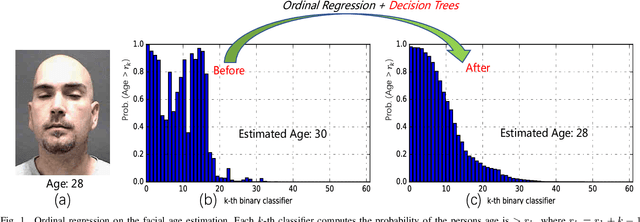

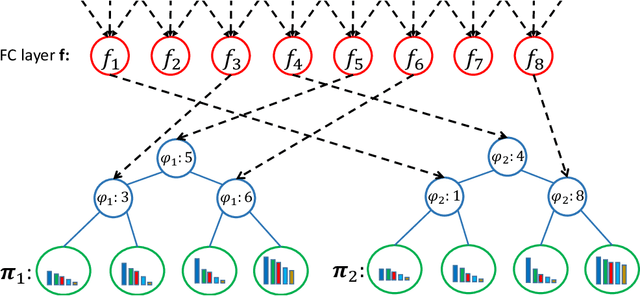

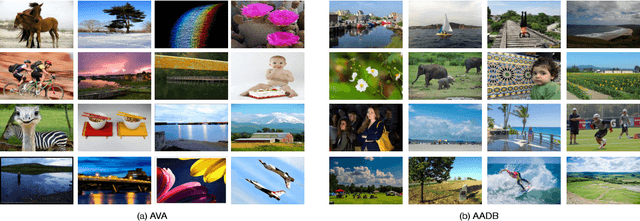

Abstract:Ordinal regression is a type of regression techniques used for predicting an ordinal variable. Recent methods formulate an ordinal regression problem as a series of binary classification problems. Such methods cannot ensure the global ordinal relationship is preserved since the relationships among different binary classifiers are neglected. We propose a novel ordinal regression approach called Deep Ordinal Regression Forests (DORFs), which is constructed with the differentiable decision trees for obtaining precise and stable global ordinal relationships. The advantages of the proposed DORFs are twofold. First, instead of learning a series of binary classifiers independently, the proposed method learns an ordinal distribution for ordinal regression. Second, the differentiable decision trees can be trained together with the ordinal distribution in an end-to-end manner. The effectiveness of the proposed DORFs is verified on two ordinal regression tasks, i.e., facial age estimation and image aesthetic assessment, showing significant improvements and better stability over the state-of-the-art ordinal regression methods.

Look globally, age locally: Face aging with an attention mechanism

Oct 24, 2019

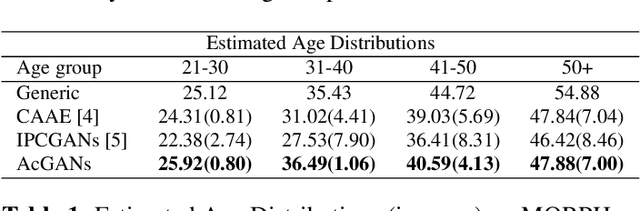

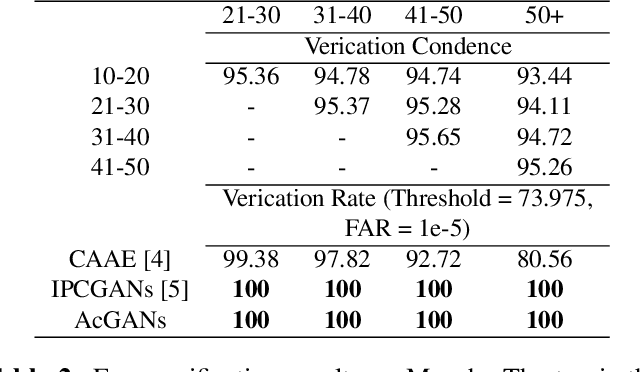

Abstract:Face aging is of great importance for cross-age recognition and entertainment-related applications. Recently, conditional generative adversarial networks (cGANs) have achieved impressive results for face aging. Existing cGANs-based methods usually require a pixel-wise loss to keep the identity and background consistent. However, minimizing the pixel-wise loss between the input and synthesized images likely resulting in a ghosted or blurry face. To address this deficiency, this paper introduces an Attention Conditional GANs (AcGANs) approach for face aging, which utilizes attention mechanism to only alert the regions relevant to face aging. In doing so, the synthesized face can well preserve the background information and personal identity without using the pixel-wise loss, and the ghost artifacts and blurriness can be significantly reduced. Based on the benchmarked dataset Morph, both qualitative and quantitative experiment results demonstrate superior performance over existing algorithms in terms of image quality, personal identity, and age accuracy.

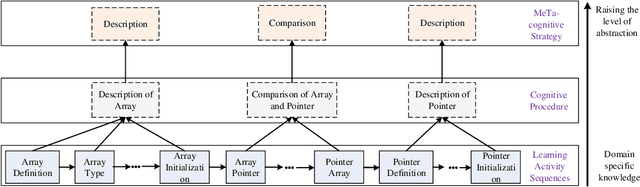

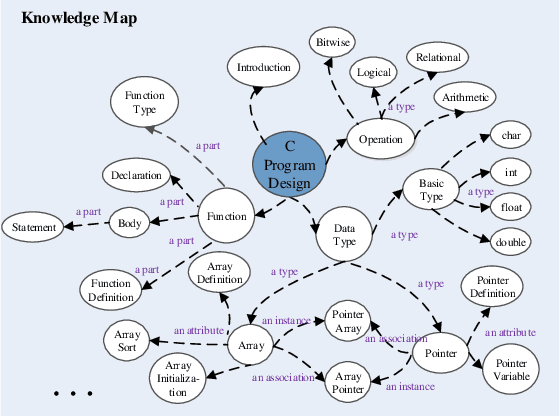

Modeling e-Learners' Cognitive and Metacognitive Strategy in Comparative Question Solving

Jun 04, 2019

Abstract:Cognitive and metacognitive strategy had demonstrated a significant role in self-regulated learning (SRL), and an appropriate use of strategies is beneficial to effective learning or question-solving tasks during a human-computer interaction process. This paper proposes a novel method combining Knowledge Map (KM) based data mining technique with Thinking Map (TM) to detect learner's cognitive and metacognitive strategy in the question-solving scenario. In particular, a graph-based mining algorithm is designed to facilitate our proposed method, which can automatically map cognitive strategy to metacognitive strategy with raising abstraction level, and make the cognitive and metacognitive process viewable, which acts like a reverse engineering engine to explain how a learner thinks when solving a question. Additionally, we develop an online learning environment system for participants to learn and record their behaviors. To corroborate the effectiveness of our approach and algorithm, we conduct experiments recruiting 173 postgraduate and undergraduate students, and they were asked to complete a question-solving task, such as "What are similarities and differences between array and pointer?" from "The C Programming Language" course and "What are similarities and differences between packet switching and circuit switching?" from "Computer Network Principle" course. The mined strategies patterns results are encouraging and supported well our proposed method.

Ordinal Distribution Regression for Gait-based Age Estimation

May 27, 2019

Abstract:Computer vision researchers prefer to estimate the age from face images due to informative facial features. Estimating the age from face images becomes challenging when people are far away from camcorders or occluded. As the unique biometric feature that can be perceived efficiently at a distance, gait can be an alternative way to predict the age in case that face images are not available. However, existing gait-based classification or regression methods ignore the ordinal relationship of different ages, which is an important clue to the age estimation. In this paper, we proposes an ordinal distribution regression with a global and local convolutional neural network for gait-based age estimation. Specifically, we decompose the gait-based age regression into a series of binary classifications to incorporate the ordinal information of the age. Then an ordinal distribution loss is proposed to take inner relationship among these classifications into account by penalizing the distribution discrepancy between the estimated and the ground-truth. In addition, our neural network consists of a global and three local sub-networks, which is capable of learning the global structure and more local details from head, body and feet of gait, respectively. By comparing with the state-of-the-art methods of gait-based age estimation, this paper highlights, experimentally, that the proposed approach has a better predictive performance on the OULP-Age dataset.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge