Yu Shu

Voila: Voice-Language Foundation Models for Real-Time Autonomous Interaction and Voice Role-Play

May 05, 2025Abstract:A voice AI agent that blends seamlessly into daily life would interact with humans in an autonomous, real-time, and emotionally expressive manner. Rather than merely reacting to commands, it would continuously listen, reason, and respond proactively, fostering fluid, dynamic, and emotionally resonant interactions. We introduce Voila, a family of large voice-language foundation models that make a step towards this vision. Voila moves beyond traditional pipeline systems by adopting a new end-to-end architecture that enables full-duplex, low-latency conversations while preserving rich vocal nuances such as tone, rhythm, and emotion. It achieves a response latency of just 195 milliseconds, surpassing the average human response time. Its hierarchical multi-scale Transformer integrates the reasoning capabilities of large language models (LLMs) with powerful acoustic modeling, enabling natural, persona-aware voice generation -- where users can simply write text instructions to define the speaker's identity, tone, and other characteristics. Moreover, Voila supports over one million pre-built voices and efficient customization of new ones from brief audio samples as short as 10 seconds. Beyond spoken dialogue, Voila is designed as a unified model for a wide range of voice-based applications, including automatic speech recognition (ASR), Text-to-Speech (TTS), and, with minimal adaptation, multilingual speech translation. Voila is fully open-sourced to support open research and accelerate progress toward next-generation human-machine interactions.

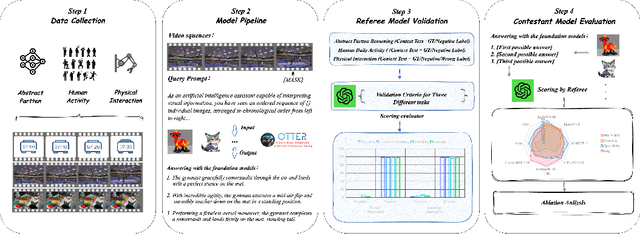

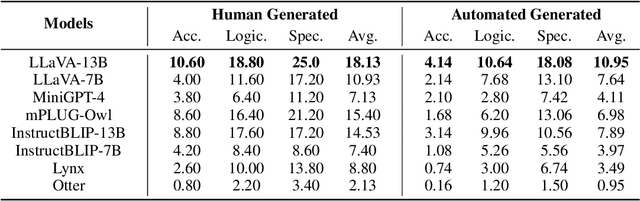

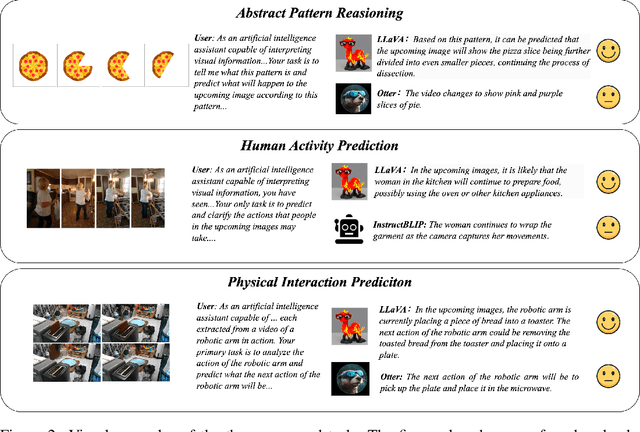

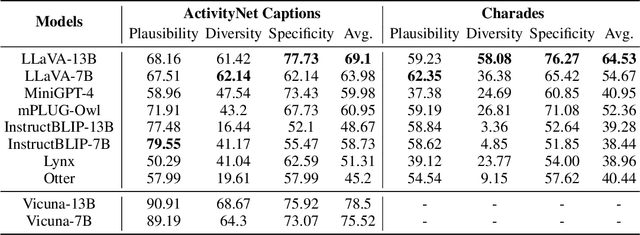

Benchmarking Sequential Visual Input Reasoning and Prediction in Multimodal Large Language Models

Oct 20, 2023

Abstract:Multimodal large language models (MLLMs) have shown great potential in perception and interpretation tasks, but their capabilities in predictive reasoning remain under-explored. To address this gap, we introduce a novel benchmark that assesses the predictive reasoning capabilities of MLLMs across diverse scenarios. Our benchmark targets three important domains: abstract pattern reasoning, human activity prediction, and physical interaction prediction. We further develop three evaluation methods powered by large language model to robustly quantify a model's performance in predicting and reasoning the future based on multi-visual context. Empirical experiments confirm the soundness of the proposed benchmark and evaluation methods via rigorous testing and reveal pros and cons of current popular MLLMs in the task of predictive reasoning. Lastly, our proposed benchmark provides a standardized evaluation framework for MLLMs and can facilitate the development of more advanced models that can reason and predict over complex long sequence of multimodal input.

AutoAgents: A Framework for Automatic Agent Generation

Oct 15, 2023

Abstract:Large language models (LLMs) have enabled remarkable advances in automated task-solving with multi-agent systems. However, most existing LLM-based multi-agent approaches rely on predefined agents to handle simple tasks, limiting the adaptability of multi-agent collaboration to different scenarios. Therefore, we introduce AutoAgents, an innovative framework that adaptively generates and coordinates multiple specialized agents to build an AI team according to different tasks. Specifically, AutoAgents couples the relationship between tasks and roles by dynamically generating multiple required agents based on task content and planning solutions for the current task based on the generated expert agents. Multiple specialized agents collaborate with each other to efficiently accomplish tasks. Concurrently, an observer role is incorporated into the framework to reflect on the designated plans and agents' responses and improve upon them. Our experiments on various benchmarks demonstrate that AutoAgents generates more coherent and accurate solutions than the existing multi-agent methods. This underscores the significance of assigning different roles to different tasks and of team cooperation, offering new perspectives for tackling complex tasks. The repository of this project is available at https://github.com/Link-AGI/AutoAgents.

LLaSM: Large Language and Speech Model

Sep 16, 2023

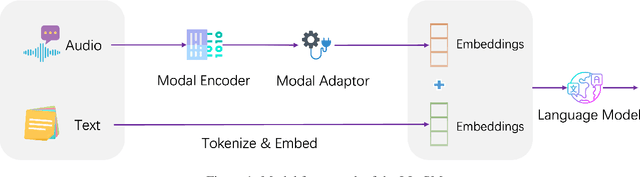

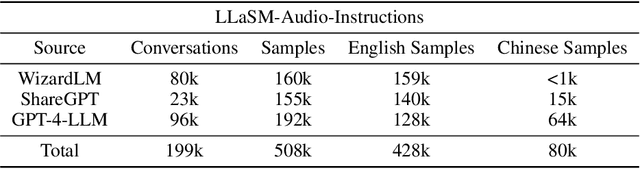

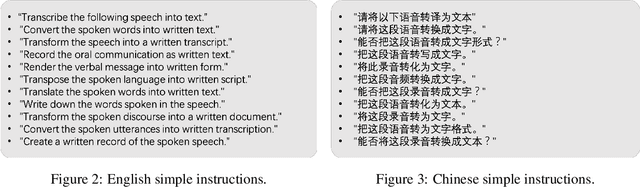

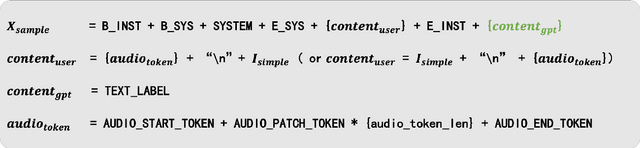

Abstract:Multi-modal large language models have garnered significant interest recently. Though, most of the works focus on vision-language multi-modal models providing strong capabilities in following vision-and-language instructions. However, we claim that speech is also an important modality through which humans interact with the world. Hence, it is crucial for a general-purpose assistant to be able to follow multi-modal speech-and-language instructions. In this work, we propose Large Language and Speech Model (LLaSM). LLaSM is an end-to-end trained large multi-modal speech-language model with cross-modal conversational abilities, capable of following speech-and-language instructions. Our early experiments show that LLaSM demonstrates a more convenient and natural way for humans to interact with artificial intelligence. Specifically, we also release a large Speech Instruction Following dataset LLaSM-Audio-Instructions. Code and demo are available at https://github.com/LinkSoul-AI/LLaSM and https://huggingface.co/spaces/LinkSoul/LLaSM. The LLaSM-Audio-Instructions dataset is available at https://huggingface.co/datasets/LinkSoul/LLaSM-Audio-Instructions.

Chinese Open Instruction Generalist: A Preliminary Release

Apr 25, 2023

Abstract:Instruction tuning is widely recognized as a key technique for building generalist language models, which has attracted the attention of researchers and the public with the release of InstructGPT~\citep{ouyang2022training} and ChatGPT\footnote{\url{https://chat.openai.com/}}. Despite impressive progress in English-oriented large-scale language models (LLMs), it is still under-explored whether English-based foundation LLMs can perform similarly on multilingual tasks compared to English tasks with well-designed instruction tuning and how we can construct the corpora needed for the tuning. To remedy this gap, we propose the project as an attempt to create a Chinese instruction dataset by various methods adapted to the intrinsic characteristics of 4 sub-tasks. We collect around 200k Chinese instruction tuning samples, which have been manually checked to guarantee high quality. We also summarize the existing English and Chinese instruction corpora and briefly describe some potential applications of the newly constructed Chinese instruction corpora. The resulting \textbf{C}hinese \textbf{O}pen \textbf{I}nstruction \textbf{G}eneralist (\textbf{COIG}) corpora are available in Huggingface\footnote{\url{https://huggingface.co/datasets/BAAI/COIG}} and Github\footnote{\url{https://github.com/BAAI-Zlab/COIG}}, and will be continuously updated.

P-ODN: Prototype based Open Deep Network for Open Set Recognition

May 06, 2019

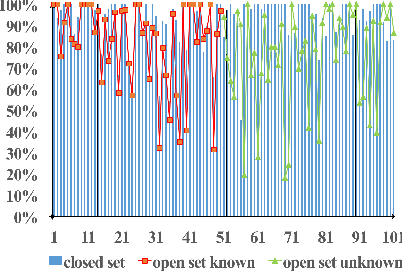

Abstract:Most of the existing recognition algorithms are proposed for closed set scenarios, where all categories are known beforehand. However, in practice, recognition is essentially an open set problem. There are categories we know called "knowns", and there are more we do not know called "unknowns". Enumerating all categories beforehand is never possible, consequently it is infeasible to prepare sufficient training samples for those unknowns. Applying closed set recognition methods will naturally lead to unseen-category errors. To address this problem, we propose the prototype based Open Deep Network (P-ODN) for open set recognition tasks. Specifically, we introduce prototype learning into open set recognition. Prototypes and prototype radiuses are trained jointly to guide a CNN network to derive more discriminative features. Then P-ODN detects the unknowns by applying a multi-class triplet thresholding method based on the distance metric between features and prototypes. Manual labeling the unknowns which are detected in the previous process as new categories. Predictors for new categories are added to the classification layer to "open" the deep neural networks to incorporate new categories dynamically. The weights of new predictors are initialized exquisitely by applying a distances based algorithm to transfer the learned knowledge. Consequently, this initialization method speed up the fine-tuning process and reduce the samples needed to train new predictors. Extensive experiments show that P-ODN can effectively detect unknowns and needs only few samples with human intervention to recognize a new category. In the real world scenarios, our method achieves state-of-the-art performance on the UCF11, UCF50, UCF101 and HMDB51 datasets.

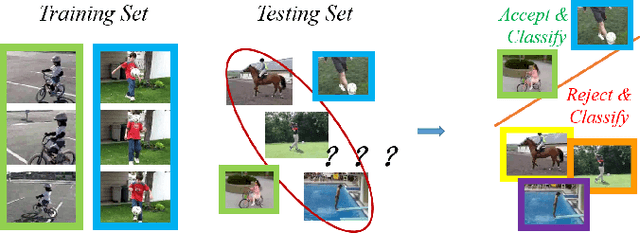

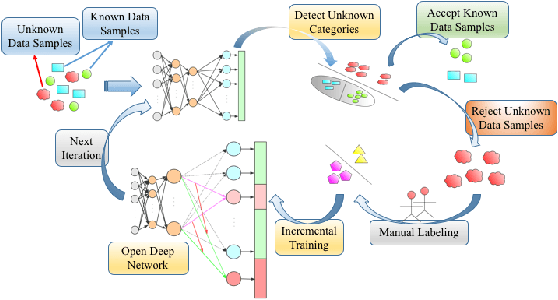

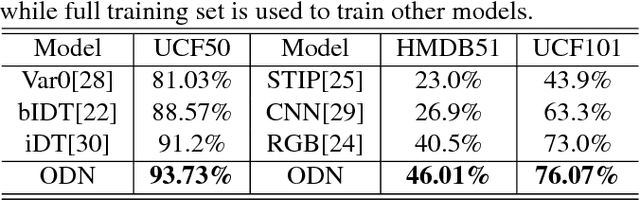

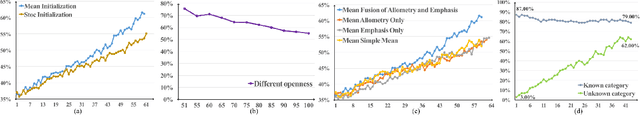

ODN: Opening the Deep Network for Open-set Action Recognition

Jan 23, 2019

Abstract:In recent years, the performance of action recognition has been significantly improved with the help of deep neural networks. Most of the existing action recognition works hold the \textit{closed-set} assumption that all action categories are known beforehand while deep networks can be well trained for these categories. However, action recognition in the real world is essentially an \textit{open-set} problem, namely, it is impossible to know all action categories beforehand and consequently infeasible to prepare sufficient training samples for those emerging categories. In this case, applying closed-set recognition methods will definitely lead to unseen-category errors. To address this challenge, we propose the Open Deep Network (ODN) for the open-set action recognition task. Technologically, ODN detects new categories by applying a multi-class triplet thresholding method, and then dynamically reconstructs the classification layer and "opens" the deep network by adding predictors for new categories continually. In order to transfer the learned knowledge to the new category, two novel methods, Emphasis Initialization and Allometry Training, are adopted to initialize and incrementally train the new predictor so that only few samples are needed to fine-tune the model. Extensive experiments show that ODN can effectively detect and recognize new categories with little human intervention, thus applicable to the open-set action recognition tasks in the real world. Moreover, ODN can even achieve comparable performance to some closed-set methods.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge