Xiaowei Mao

Spatial-Temporal Feedback Diffusion Guidance for Controlled Traffic Imputation

Jan 08, 2026

Abstract:Imputing missing values in spatial-temporal traffic data is essential for intelligent transportation systems. Among advanced imputation methods, score-based diffusion models have demonstrated competitive performance. These models generate data by reversing a noising process, using observed values as conditional guidance. However, existing diffusion models typically apply a uniform guidance scale across both spatial and temporal dimensions, which is inadequate for nodes with high missing data rates. Sparse observations provide insufficient conditional guidance, causing the generative process to drift toward the learned prior distribution rather than closely following the conditional observations, resulting in suboptimal imputation performance. To address this, we propose FENCE, a spatial-temporal feedback diffusion guidance method designed to adaptively control guidance scales during imputation. First, FENCE introduces a dynamic feedback mechanism that adjusts the guidance scale based on the posterior likelihood approximations. The guidance scale is increased when generated values diverge from observations and reduced when alignment improves, preventing overcorrection. Second, because alignment to observations varies across nodes and denoising steps, a global guidance scale for all nodes is suboptimal. FENCE computes guidance scales at the cluster level by grouping nodes based on their attention scores, leveraging spatial-temporal correlations to provide more accurate guidance. Experimental results on real-world traffic datasets show that FENCE significantly enhances imputation accuracy.
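The feedback idea in this abstract (raise the guidance scale when generated values drift from the observations, lower it when they realign) can be illustrated with a minimal sketch. This is not the FENCE update rule, which the abstract does not specify: the function name `update_guidance_scale`, the use of mean squared error on observed entries in place of a posterior likelihood approximation, and the `gain`, `min_scale`, and `max_scale` parameters are all assumptions made for illustration.

```python
def update_guidance_scale(scale, generated, observed, mask, prev_error,
                          gain=0.5, min_scale=0.0, max_scale=10.0):
    """One hypothetical feedback step; returns (new_scale, current_error).

    generated, observed: equal-length lists of floats for one node or cluster.
    mask: 1 where a value was actually observed, 0 where it is missing.
    prev_error: the error returned by the previous denoising step.
    """
    # Measure misalignment on observed entries only (assumed error measure).
    diffs = [(g - o) ** 2 for g, o, m in zip(generated, observed, mask) if m]
    error = sum(diffs) / len(diffs) if diffs else 0.0

    # Error grew -> stronger guidance; error shrank -> weaker guidance.
    new_scale = scale + gain * (error - prev_error)

    # Clamp so the scale cannot run away in either direction.
    new_scale = max(min_scale, min(max_scale, new_scale))
    return new_scale, error
```

Calling this once per denoising step and per node cluster, with `prev_error` carried over from the previous step, would give each cluster its own scale trajectory, which is the cluster-level behaviour the abstract describes.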

RIPCN: A Road Impedance Principal Component Network for Probabilistic Traffic Flow Forecasting

Dec 25, 2025

Abstract:Accurate traffic flow forecasting is crucial for intelligent transportation services such as navigation and ride-hailing. In such applications, uncertainty estimation in forecasting is important because it helps evaluate traffic risk levels, assess forecast reliability, and provide timely warnings. As a result, probabilistic traffic flow forecasting (PTFF) has gained significant attention, as it produces both point forecasts and uncertainty estimates. However, existing PTFF approaches still face two key challenges: (1) how to uncover and model the causes of traffic flow uncertainty for reliable forecasting, and (2) how to capture the spatiotemporal correlations of uncertainty for accurate prediction. To address these challenges, we propose RIPCN, a Road Impedance Principal Component Network that integrates domain-specific transportation theory with spatiotemporal principal component learning for PTFF. RIPCN introduces a dynamic impedance evolution network that captures directional traffic transfer patterns driven by road congestion level and flow variability, revealing the direct causes of uncertainty and enhancing both reliability and interpretability. In addition, a principal component network is designed to forecast the dominant eigenvectors of future flow covariance, enabling the model to capture spatiotemporal uncertainty correlations. This design allows for accurate and efficient uncertainty estimation while also improving point prediction performance. Experimental results on real-world datasets show that our approach outperforms existing probabilistic forecasting methods.

Towards An Efficient and Effective En Route Travel Time Estimation Framework

Apr 05, 2025

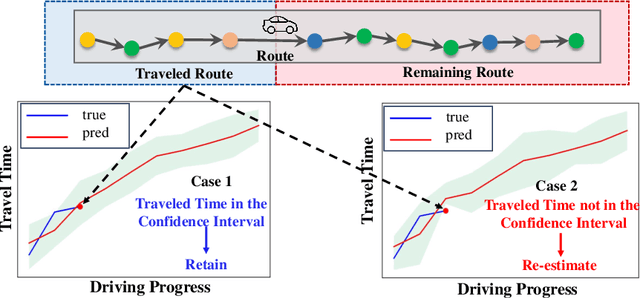

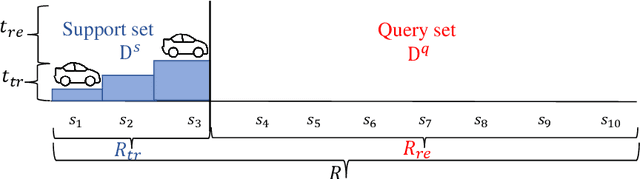

Abstract:En route travel time estimation (ER-TTE) focuses on predicting the travel time of the remaining route. Existing ER-TTE methods always re-estimate, which significantly hinders real-time performance, especially under the computational demands of simultaneous user requests. This results in delays and reduced responsiveness in ER-TTE services. To address these challenges, we propose U-ERTTE, a general and efficient framework that combines an Uncertainty-Guided Decision mechanism (UGD) with Fine-Tuning with Meta-Learning (FTML). UGD quantifies the uncertainty and provides confidence intervals for the entire route. It re-estimates only when the actual travel time deviates from the predicted confidence intervals, thereby improving the efficiency of ER-TTE. To keep the confidence intervals accurate, and to keep predictions accurate when re-estimation is needed, FTML is employed to train the model, enabling it to learn general driving patterns as well as task-specific features. Extensive experiments on two large-scale real datasets demonstrate that the U-ERTTE framework significantly enhances inference speed and throughput while maintaining high effectiveness. Our code is available at https://github.com/shenzekai/U-ERTTE
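The selective re-estimation described here can be sketched as a simple interval check. This is an illustration, not the U-ERTTE code: the function names and the representation of a trip as a list of checkpoints, each with an observed elapsed time and a predicted interval, are assumptions.

```python
def needs_reestimate(actual_elapsed, lower, upper):
    """Return True when the observed travel time leaves the predicted interval."""
    return not (lower <= actual_elapsed <= upper)


def count_reestimates(checkpoints):
    """Count how many checkpoints would trigger a re-estimation.

    checkpoints: list of (actual_elapsed, lower, upper) tuples, one per
    point along the route where the trip's progress is checked (hypothetical
    layout chosen for this sketch).
    """
    return sum(1 for actual, lo, hi in checkpoints
               if needs_reestimate(actual, lo, hi))
```

A baseline that always re-estimates would run the model at every checkpoint; under this rule it runs only at the checkpoints counted above, which is where the efficiency gain would come from.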

DRL4AOI: A DRL Framework for Semantic-aware AOI Segmentation in Location-Based Services

Dec 06, 2024

Abstract:In Location-Based Services (LBS), such as food delivery, a fundamental task is segmenting Areas of Interest (AOIs), which aims to partition urban geographical space into non-overlapping regions. Traditional AOI segmentation algorithms primarily rely on road networks to partition urban areas. While promising in modeling the geo-semantics, road network-based models overlook the service-semantic goals (e.g., workload equality) in LBS. In this paper, we point out that the AOI segmentation problem can be naturally formulated as a Markov Decision Process (MDP), which gradually chooses a nearby AOI for each grid in the current AOI's border. Based on the MDP, we present the first attempt to generalize Deep Reinforcement Learning (DRL) for AOI segmentation, leading to a novel DRL-based framework called DRL4AOI. The DRL4AOI framework introduces different service-semantic goals in a flexible way by treating them as rewards that guide the AOI generation. To evaluate the effectiveness of DRL4AOI, we develop and release an AOI segmentation system. We also present a representative implementation of DRL4AOI, TrajRL4AOI, for AOI segmentation in the logistics service. It introduces a Double Deep Q-learning Network (DDQN) to gradually optimize the AOI generation for two specific semantic goals: i) trajectory modularity, i.e., maximizing the tightness of the trajectory connections within an AOI and the sparsity of connections between AOIs, and ii) matchness with the road network, i.e., maximizing the matchness between AOIs and the road network. Quantitative and qualitative experiments conducted on synthetic and real-world data demonstrate the effectiveness and superiority of our method. The code and system are publicly available at https://github.com/Kogler7/AoiOpt.

DiffLight: A Partial Rewards Conditioned Diffusion Model for Traffic Signal Control with Missing Data

Oct 31, 2024

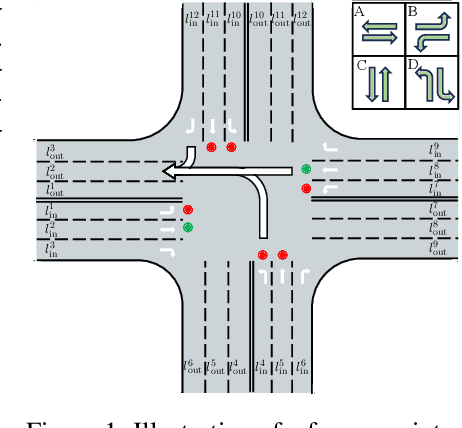

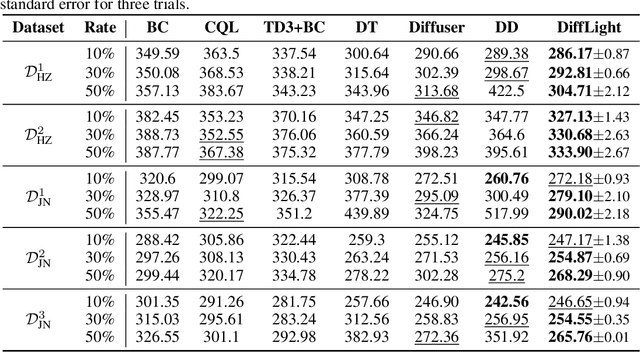

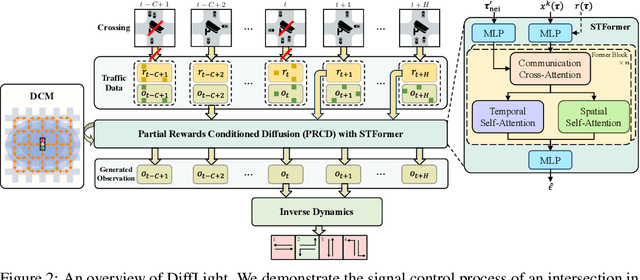

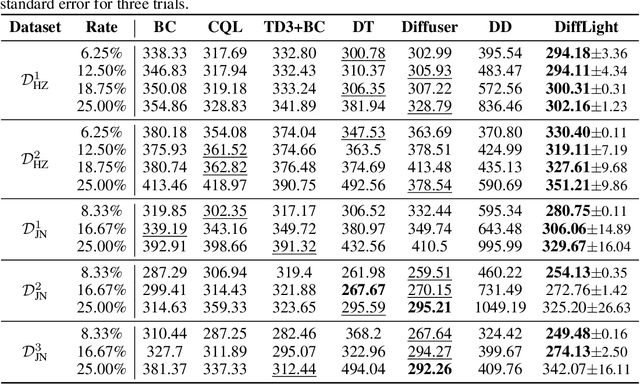

Abstract:The application of reinforcement learning in traffic signal control (TSC) has been extensively researched and yielded notable achievements. However, most existing works for TSC assume that traffic data from all surrounding intersections is fully and continuously available through sensors. In real-world applications, this assumption often fails due to sensor malfunctions or data loss, making TSC with missing data a critical challenge. To meet the needs of practical applications, we introduce DiffLight, a novel conditional diffusion model for TSC under data-missing scenarios in the offline setting. Specifically, we integrate two essential sub-tasks, i.e., traffic data imputation and decision-making, by leveraging a Partial Rewards Conditioned Diffusion (PRCD) model to prevent missing rewards from interfering with the learning process. Meanwhile, to effectively capture the spatial-temporal dependencies among intersections, we design a Spatial-Temporal transFormer (STFormer) architecture. In addition, we propose a Diffusion Communication Mechanism (DCM) to promote better communication and control performance under data-missing scenarios. Extensive experiments on five datasets with various data-missing scenarios demonstrate that DiffLight is an effective controller to address TSC with missing data. The code of DiffLight is released at https://github.com/lokol5579/DiffLight-release.

DutyTTE: Deciphering Uncertainty in Origin-Destination Travel Time Estimation

Aug 23, 2024

Abstract:Uncertainty quantification in travel time estimation (TTE) aims to estimate the confidence interval for travel time, given the origin (O), destination (D), and departure time (T). Accurately quantifying this uncertainty requires generating the most likely path and assessing travel time uncertainty along the path. This involves two main challenges: 1) Predicting a path that aligns with the ground truth, and 2) modeling the impact of travel time in each segment on overall uncertainty under varying conditions. We propose DutyTTE to address these challenges. For the first challenge, we introduce a deep reinforcement learning method to improve alignment between the predicted path and the ground truth, providing more accurate travel time information from road segments to improve TTE. For the second challenge, we propose a mixture of experts guided uncertainty quantification mechanism to better capture travel time uncertainty for each segment under varying contexts. Additionally, we calibrate our results using Hoeffding's upper-confidence bound to provide statistical guarantees for the estimated confidence intervals. Extensive experiments on two real-world datasets demonstrate the superiority of our proposed method.
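For readers unfamiliar with the calibration tool named here, the sketch below computes a Hoeffding upper confidence bound for the mean of bounded samples: with probability at least 1 - delta, the true mean is at most the empirical mean plus (high - low) * sqrt(ln(1/delta) / (2n)). How DutyTTE applies the bound to its intervals is not stated in the abstract, so the function name and the choice of inputs are assumptions.

```python
import math


def hoeffding_upper_bound(samples, low, high, delta):
    """Upper confidence bound on the mean of i.i.d. samples in [low, high].

    Holds with probability at least 1 - delta by Hoeffding's inequality.
    """
    n = len(samples)
    if n == 0:
        raise ValueError("at least one sample is required")
    if not 0.0 < delta < 1.0:
        raise ValueError("delta must lie strictly between 0 and 1")
    empirical_mean = sum(samples) / n
    # The radius shrinks as 1/sqrt(n) and grows as delta gets smaller.
    radius = (high - low) * math.sqrt(math.log(1.0 / delta) / (2.0 * n))
    return empirical_mean + radius
```

One plausible use, stated as an assumption: treat each sample as a 0/1 indicator of whether a held-out trip fell outside its predicted interval, so the bound gives a high-probability cap on the miscoverage rate.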

STD-LLM: Understanding Both Spatial and Temporal Properties of Spatial-Temporal Data with LLMs

Jul 12, 2024

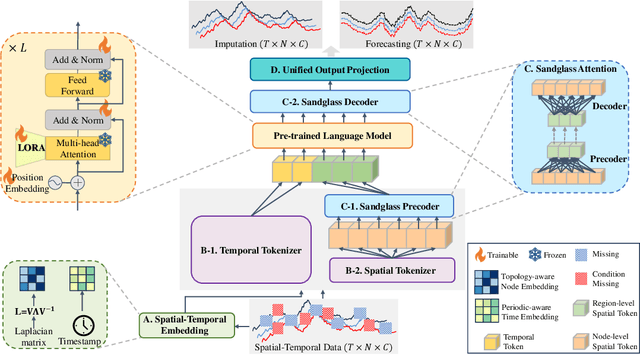

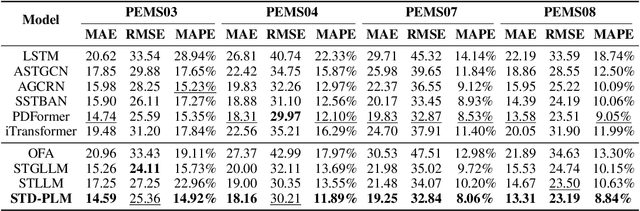

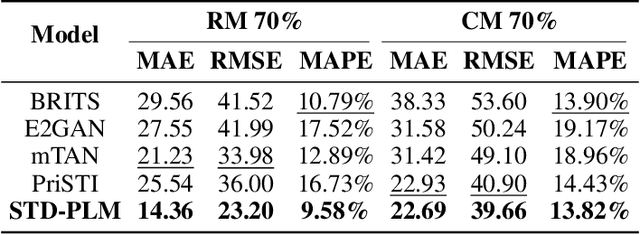

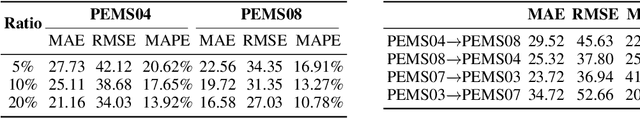

Abstract:Spatial-temporal forecasting and imputation are important for real-world dynamic systems such as intelligent transportation, urban planning, and public health. Most existing methods are tailored for individual forecasting or imputation tasks but are not designed for both. Additionally, they are less effective for zero-shot and few-shot learning. While large language models (LLMs) have exhibited strong pattern recognition and reasoning abilities across various tasks, including few-shot and zero-shot learning, their development in understanding spatial-temporal data has been constrained by insufficient modeling of complex correlations such as the temporal correlations, spatial connectivity, non-pairwise and high-order spatial-temporal correlations within data. In this paper, we propose STD-LLM for understanding both spatial and temporal properties of Spatial-Temporal Data with LLMs, which is capable of implementing both spatial-temporal forecasting and imputation tasks. STD-LLM understands spatial-temporal correlations via explicitly designed spatial and temporal tokenizers as well as virtual nodes. Topology-aware node embeddings are designed for LLMs to comprehend and exploit the topology structure of data. Additionally, to capture the non-pairwise and higher-order correlations, we design a hypergraph learning module for LLMs, which can enhance the overall performance and improve efficiency. Extensive experiments demonstrate that STD-LLM exhibits strong performance and generalization capabilities across the forecasting and imputation tasks on various datasets. Moreover, STD-LLM achieves promising results on both few-shot and zero-shot learning tasks.

A Survey on Service Route and Time Prediction in Instant Delivery: Taxonomy, Progress, and Prospects

Sep 03, 2023

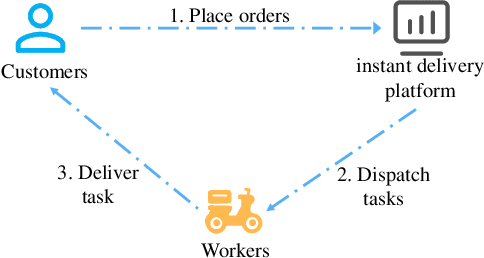

Abstract:Instant delivery services, such as food delivery and package delivery, have achieved explosive growth in recent years by providing customers with daily-life convenience. An emerging research area within these services is service Route&Time Prediction (RTP), which aims to estimate the future service route as well as the arrival time of a given worker. As one of the most crucial tasks on these service platforms, RTP is central to enhancing user satisfaction and trimming operational expenditures. Despite a plethora of algorithms developed to date, there is no systematic, comprehensive survey to guide researchers in this domain. To fill this gap, our work presents the first comprehensive survey that methodically categorizes recent advances in service route and time prediction. We start by defining the RTP challenge and then delve into the metrics that are often employed. Following that, we scrutinize the existing RTP methodologies, presenting a novel taxonomy of them. We categorize these methods based on three criteria: (i) type of task, subdivided into only-route prediction, only-time prediction, and joint route&time prediction; (ii) model architecture, which encompasses sequence-based and graph-based models; and (iii) learning paradigm, including Supervised Learning (SL) and Deep Reinforcement Learning (DRL). Finally, we highlight the limitations of current research and suggest prospective avenues. We believe that the taxonomy, progress, and prospects introduced in this paper can significantly promote the development of this field.

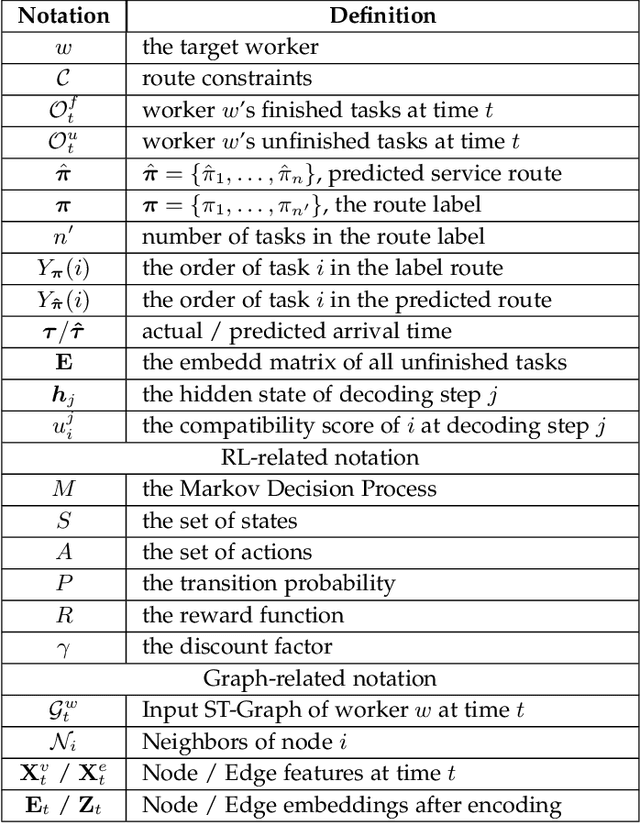

DRL4Route: A Deep Reinforcement Learning Framework for Pick-up and Delivery Route Prediction

Jul 30, 2023

Abstract:Pick-up and Delivery Route Prediction (PDRP), which aims to estimate the future service route of a worker given his current task pool, has received rising attention in recent years. Deep neural networks based on supervised learning have emerged as the dominant model for the task because of their powerful ability to capture workers' behavior patterns from massive historical data. Though promising, they fail to introduce the non-differentiable test criteria into the training process, leading to a mismatch between training and test criteria, which considerably reduces their performance when applied in practical systems. To tackle the above issue, we present the first attempt to generalize Reinforcement Learning (RL) to the route prediction task, leading to a novel RL-based framework called DRL4Route. It combines the behavior-learning abilities of previous deep learning models with the non-differentiable objective optimization ability of reinforcement learning. DRL4Route can serve as a plug-and-play component to boost the existing deep learning models. Based on the framework, we further implement a model named DRL4Route-GAE for PDRP in logistic service. It follows the actor-critic architecture, which is equipped with a Generalized Advantage Estimator that can balance the bias and variance of the policy gradient estimates, thus achieving a better policy. Extensive offline experiments and the online deployment show that DRL4Route-GAE improves Location Square Deviation (LSD) by 0.9%-2.7%, and Accuracy@3 (ACC@3) by 2.4%-3.2% over existing methods on the real-world dataset.
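The Generalized Advantage Estimator mentioned above has a standard recursive form, sketched here in plain Python. This is the textbook computation, not code from DRL4Route-GAE; the function name, argument layout, and default `gamma` and `lam` values are assumptions.

```python
def generalized_advantage_estimates(rewards, values, gamma=0.99, lam=0.95):
    """Compute GAE advantages for one trajectory.

    rewards: list of T rewards r_0 .. r_{T-1}.
    values:  list of T + 1 critic estimates V(s_0) .. V(s_T); the last entry
             is the bootstrap value (use 0.0 for a terminal state).
    """
    if len(values) != len(rewards) + 1:
        raise ValueError("values must have exactly one more entry than rewards")
    advantages = [0.0] * len(rewards)
    running = 0.0
    # Walk backwards so each step can reuse the advantage of the next step.
    for t in reversed(range(len(rewards))):
        # One-step temporal-difference error.
        delta = rewards[t] + gamma * values[t + 1] - values[t]
        # Exponentially weighted sum of future TD errors.
        running = delta + gamma * lam * running
        advantages[t] = running
    return advantages
```

Setting `lam=0` reduces each advantage to the one-step TD error (lower variance, more bias), while `lam=1` sums all discounted TD errors (less bias, higher variance), which is the bias-variance trade-off the abstract refers to.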

LaDe: The First Comprehensive Last-mile Delivery Dataset from Industry

Jun 19, 2023

Abstract:Real-world last-mile delivery datasets are crucial for research in logistics, supply chain management, and spatio-temporal data mining. Despite a plethora of algorithms developed to date, no widely accepted, publicly available last-mile delivery dataset exists to support research in this field. In this paper, we introduce LaDe, the first publicly available last-mile delivery dataset with millions of packages from the industry. LaDe has three unique characteristics: (1) Large-scale. It involves 10,677k packages of 21k couriers over 6 months of real-world operation. (2) Comprehensive information. It offers original package information, such as its location and time requirements, as well as task-event information, which records when and where the courier is while events such as task-accept and task-finish events happen. (3) Diversity. The dataset includes data from various scenarios, including package pick-up and delivery, and from multiple cities, each with its unique spatio-temporal patterns due to their distinct characteristics such as populations. We verify LaDe on three tasks by running several classical baseline models per task. We believe that the large-scale, comprehensive, diverse feature of LaDe can offer unparalleled opportunities to researchers in the supply chain community, data mining community, and beyond. The dataset homepage is publicly available at https://huggingface.co/datasets/Cainiao-AI/LaDe.
