Wenjing Jia

Versatile and Efficient Medical Image Super-Resolution Via Frequency-Gated Mamba

Oct 31, 2025Abstract:Medical image super-resolution (SR) is essential for enhancing diagnostic accuracy while reducing acquisition cost and scanning time. However, modeling both long-range anatomical structures and fine-grained frequency details with low computational overhead remains challenging. We propose FGMamba, a novel frequency-aware gated state-space model that unifies global dependency modeling and fine-detail enhancement into a lightweight architecture. Our method introduces two key innovations: a Gated Attention-enhanced State-Space Module (GASM) that integrates efficient state-space modeling with dual-branch spatial and channel attention, and a Pyramid Frequency Fusion Module (PFFM) that captures high-frequency details across multiple resolutions via FFT-guided fusion. Extensive evaluations across five medical imaging modalities (Ultrasound, OCT, MRI, CT, and Endoscopic) demonstrate that FGMamba achieves superior PSNR/SSIM while maintaining a compact parameter footprint ($<$0.75M), outperforming CNN-based and Transformer-based SOTAs. Our results validate the effectiveness of frequency-aware state-space modeling for scalable and accurate medical image enhancement.

SAGS: Self-Adaptive Alias-Free Gaussian Splatting for Dynamic Surgical Endoscopic Reconstruction

Oct 31, 2025Abstract:Surgical reconstruction of dynamic tissues from endoscopic videos is a crucial technology in robot-assisted surgery. The development of Neural Radiance Fields (NeRFs) has greatly advanced deformable tissue reconstruction, achieving high-quality results from video and image sequences. However, reconstructing deformable endoscopic scenes remains challenging due to aliasing and artifacts caused by tissue movement, which can significantly degrade visualization quality. The introduction of 3D Gaussian Splatting (3DGS) has improved reconstruction efficiency by enabling a faster rendering pipeline. Nevertheless, existing 3DGS methods often prioritize rendering speed while neglecting these critical issues. To address these challenges, we propose SAGS, a self-adaptive alias-free Gaussian splatting framework. We introduce an attention-driven, dynamically weighted 4D deformation decoder, leveraging 3D smoothing filters and 2D Mip filters to mitigate artifacts in deformable tissue reconstruction and better capture the fine details of tissue movement. Experimental results on two public benchmarks, EndoNeRF and SCARED, demonstrate that our method achieves superior performance in all metrics of PSNR, SSIM, and LPIPS compared to the state of the art while also delivering better visualization quality.

JointSplat: Probabilistic Joint Flow-Depth Optimization for Sparse-View Gaussian Splatting

Jun 04, 2025Abstract:Reconstructing 3D scenes from sparse viewpoints is a long-standing challenge with wide applications. Recent advances in feed-forward 3D Gaussian sparse-view reconstruction methods provide an efficient solution for real-time novel view synthesis by leveraging geometric priors learned from large-scale multi-view datasets and computing 3D Gaussian centers via back-projection. Despite offering strong geometric cues, both feed-forward multi-view depth estimation and flow-depth joint estimation face key limitations: the former suffers from mislocation and artifact issues in low-texture or repetitive regions, while the latter is prone to local noise and global inconsistency due to unreliable matches when ground-truth flow supervision is unavailable. To overcome this, we propose JointSplat, a unified framework that leverages the complementarity between optical flow and depth via a novel probabilistic optimization mechanism. Specifically, this pixel-level mechanism scales the information fusion between depth and flow based on the matching probability of optical flow during training. Building upon the above mechanism, we further propose a novel multi-view depth-consistency loss to leverage the reliability of supervision while suppressing misleading gradients in uncertain areas. Evaluated on RealEstate10K and ACID, JointSplat consistently outperforms state-of-the-art (SOTA) methods, demonstrating the effectiveness and robustness of our proposed probabilistic joint flow-depth optimization approach for high-fidelity sparse-view 3D reconstruction.

S2AFormer: Strip Self-Attention for Efficient Vision Transformer

May 28, 2025Abstract:Vision Transformer (ViT) has made significant advancements in computer vision, thanks to its token mixer's sophisticated ability to capture global dependencies between all tokens. However, the quadratic growth in computational demands as the number of tokens increases limits its practical efficiency. Although recent methods have combined the strengths of convolutions and self-attention to achieve better trade-offs, the expensive pairwise token affinity and complex matrix operations inherent in self-attention remain a bottleneck. To address this challenge, we propose S2AFormer, an efficient Vision Transformer architecture featuring novel Strip Self-Attention (SSA). We design simple yet effective Hybrid Perception Blocks (HPBs) to effectively integrate the local perception capabilities of CNNs with the global context modeling of Transformer's attention mechanisms. A key innovation of SSA lies in its reducing the spatial dimensions of $K$ and $V$ while compressing the channel dimensions of $Q$ and $K$. This design significantly reduces computational overhead while preserving accuracy, striking an optimal balance between efficiency and effectiveness. We evaluate the robustness and efficiency of S2AFormer through extensive experiments on multiple vision benchmarks, including ImageNet-1k for image classification, ADE20k for semantic segmentation, and COCO for object detection and instance segmentation. Results demonstrate that S2AFormer achieves significant accuracy gains with superior efficiency in both GPU and non-GPU environments, making it a strong candidate for efficient vision Transformers.

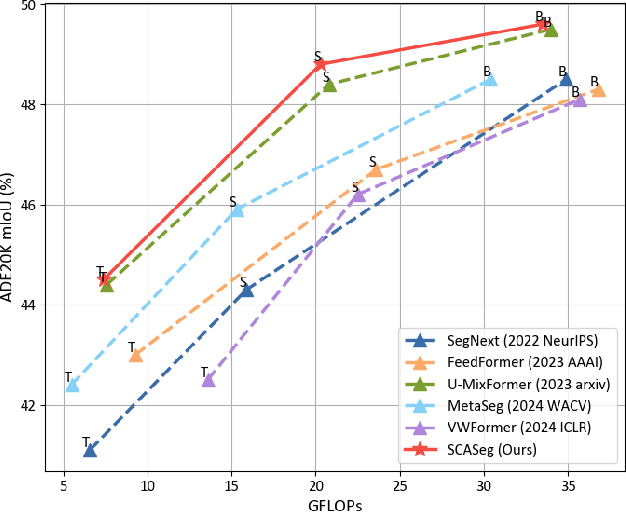

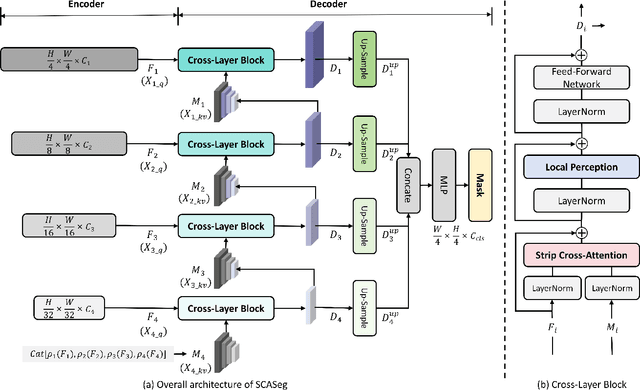

SCASeg: Strip Cross-Attention for Efficient Semantic Segmentation

Nov 26, 2024

Abstract:The Vision Transformer (ViT) has achieved notable success in computer vision, with its variants extensively validated across various downstream tasks, including semantic segmentation. However, designed as general-purpose visual encoders, ViT backbones often overlook the specific needs of task decoders, revealing opportunities to design decoders tailored to efficient semantic segmentation. This paper proposes Strip Cross-Attention (SCASeg), an innovative decoder head explicitly designed for semantic segmentation. Instead of relying on the simple conventional skip connections, we employ lateral connections between the encoder and decoder stages, using encoder features as Queries for the cross-attention modules. Additionally, we introduce a Cross-Layer Block that blends hierarchical feature maps from different encoder and decoder stages to create a unified representation for Keys and Values. To further boost computational efficiency, SCASeg compresses queries and keys into strip-like patterns to optimize memory usage and inference speed over the traditional vanilla cross-attention. Moreover, the Cross-Layer Block incorporates the local perceptual strengths of convolution, enabling SCASeg to capture both global and local context dependencies across multiple layers. This approach facilitates effective feature interaction at different scales, improving the overall performance. Experiments show that the adaptable decoder of SCASeg produces competitive performance across different setups, surpassing leading segmentation architectures on all benchmark datasets, including ADE20K, Cityscapes, COCO-Stuff 164k, and Pascal VOC2012, even under varying computational limitations.

ReviveDiff: A Universal Diffusion Model for Restoring Images in Adverse Weather Conditions

Sep 27, 2024

Abstract:Images captured in challenging environments--such as nighttime, foggy, rainy weather, and underwater--often suffer from significant degradation, resulting in a substantial loss of visual quality. Effective restoration of these degraded images is critical for the subsequent vision tasks. While many existing approaches have successfully incorporated specific priors for individual tasks, these tailored solutions limit their applicability to other degradations. In this work, we propose a universal network architecture, dubbed "ReviveDiff", which can address a wide range of degradations and bring images back to life by enhancing and restoring their quality. Our approach is inspired by the observation that, unlike degradation caused by movement or electronic issues, quality degradation under adverse conditions primarily stems from natural media (such as fog, water, and low luminance), which generally preserves the original structures of objects. To restore the quality of such images, we leveraged the latest advancements in diffusion models and developed ReviveDiff to restore image quality from both macro and micro levels across some key factors determining image quality, such as sharpness, distortion, noise level, dynamic range, and color accuracy. We rigorously evaluated ReviveDiff on seven benchmark datasets covering five types of degrading conditions: Rainy, Underwater, Low-light, Smoke, and Nighttime Hazy. Our experimental results demonstrate that ReviveDiff outperforms the state-of-the-art methods both quantitatively and visually.

MacFormer: Semantic Segmentation with Fine Object Boundaries

Aug 11, 2024

Abstract:Semantic segmentation involves assigning a specific category to each pixel in an image. While Vision Transformer-based models have made significant progress, current semantic segmentation methods often struggle with precise predictions in localized areas like object boundaries. To tackle this challenge, we introduce a new semantic segmentation architecture, ``MacFormer'', which features two key components. Firstly, using learnable agent tokens, a Mutual Agent Cross-Attention (MACA) mechanism effectively facilitates the bidirectional integration of features across encoder and decoder layers. This enables better preservation of low-level features, such as elementary edges, during decoding. Secondly, a Frequency Enhancement Module (FEM) in the decoder leverages high-frequency and low-frequency components to boost features in the frequency domain, benefiting object boundaries with minimal computational complexity increase. MacFormer is demonstrated to be compatible with various network architectures and outperforms existing methods in both accuracy and efficiency on benchmark datasets ADE20K and Cityscapes under different computational constraints.

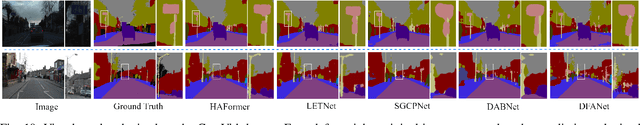

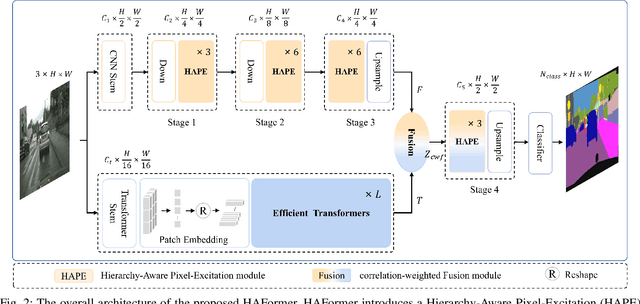

HAFormer: Unleashing the Power of Hierarchy-Aware Features for Lightweight Semantic Segmentation

Jul 11, 2024

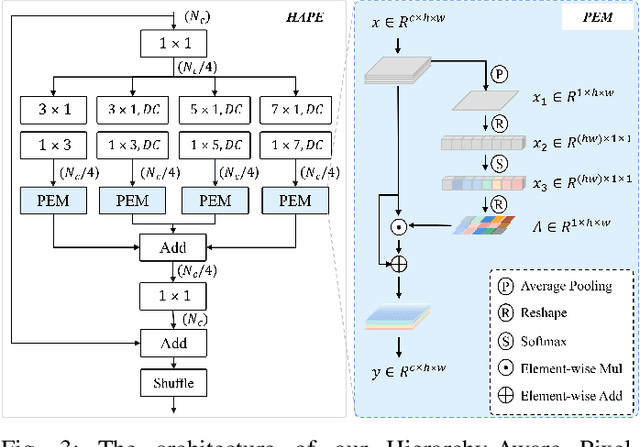

Abstract:Both Convolutional Neural Networks (CNNs) and Transformers have shown great success in semantic segmentation tasks. Efforts have been made to integrate CNNs with Transformer models to capture both local and global context interactions. However, there is still room for enhancement, particularly when considering constraints on computational resources. In this paper, we introduce HAFormer, a model that combines the hierarchical features extraction ability of CNNs with the global dependency modeling capability of Transformers to tackle lightweight semantic segmentation challenges. Specifically, we design a Hierarchy-Aware Pixel-Excitation (HAPE) module for adaptive multi-scale local feature extraction. During the global perception modeling, we devise an Efficient Transformer (ET) module streamlining the quadratic calculations associated with traditional Transformers. Moreover, a correlation-weighted Fusion (cwF) module selectively merges diverse feature representations, significantly enhancing predictive accuracy. HAFormer achieves high performance with minimal computational overhead and compact model size, achieving 74.2% mIoU on Cityscapes and 71.1% mIoU on CamVid test datasets, with frame rates of 105FPS and 118FPS on a single 2080Ti GPU. The source codes are available at https://github.com/XU-GITHUB-curry/HAFormer.

Millimeter Wave Radar-based Human Activity Recognition for Healthcare Monitoring Robot

May 03, 2024Abstract:Healthcare monitoring is crucial, especially for the daily care of elderly individuals living alone. It can detect dangerous occurrences, such as falls, and provide timely alerts to save lives. Non-invasive millimeter wave (mmWave) radar-based healthcare monitoring systems using advanced human activity recognition (HAR) models have recently gained significant attention. However, they encounter challenges in handling sparse point clouds, achieving real-time continuous classification, and coping with limited monitoring ranges when statically mounted. To overcome these limitations, we propose RobHAR, a movable robot-mounted mmWave radar system with lightweight deep neural networks for real-time monitoring of human activities. Specifically, we first propose a sparse point cloud-based global embedding to learn the features of point clouds using the light-PointNet (LPN) backbone. Then, we learn the temporal pattern with a bidirectional lightweight LSTM model (BiLiLSTM). In addition, we implement a transition optimization strategy, integrating the Hidden Markov Model (HMM) with Connectionist Temporal Classification (CTC) to improve the accuracy and robustness of the continuous HAR. Our experiments on three datasets indicate that our method significantly outperforms the previous studies in both discrete and continuous HAR tasks. Finally, we deploy our system on a movable robot-mounted edge computing platform, achieving flexible healthcare monitoring in real-world scenarios.

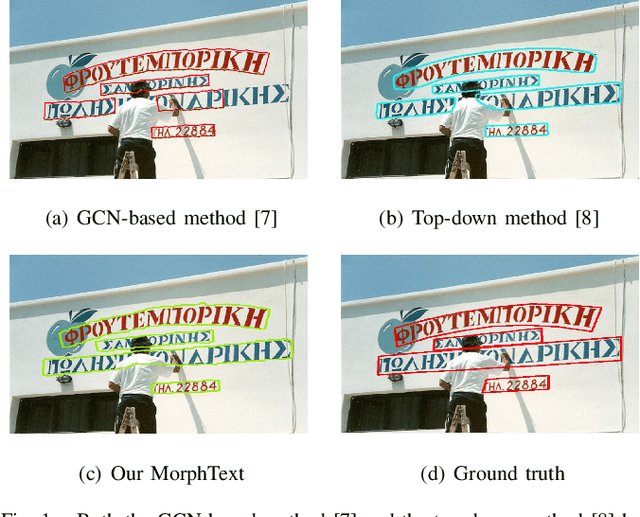

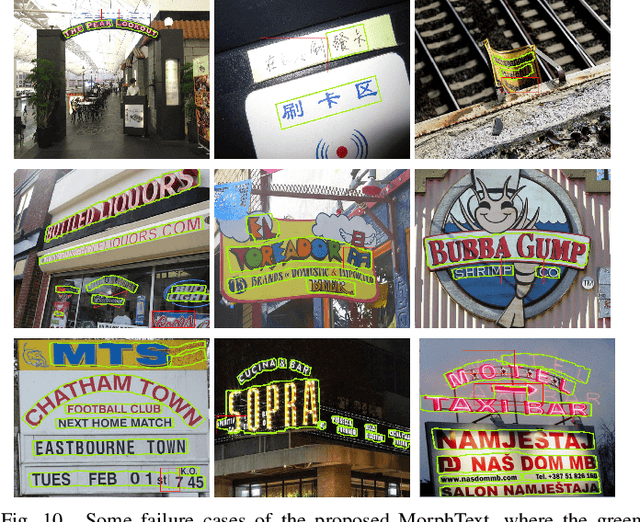

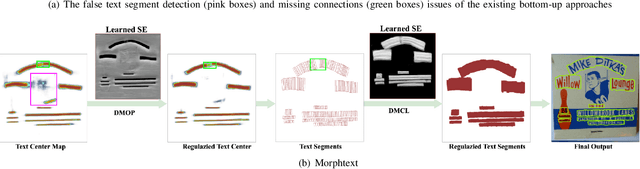

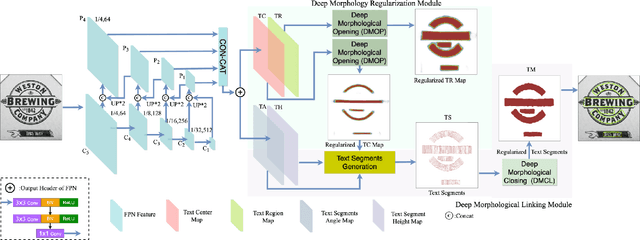

MorphText: Deep Morphology Regularized Arbitrary-shape Scene Text Detection

Apr 26, 2024

Abstract:Bottom-up text detection methods play an important role in arbitrary-shape scene text detection but there are two restrictions preventing them from achieving their great potential, i.e., 1) the accumulation of false text segment detections, which affects subsequent processing, and 2) the difficulty of building reliable connections between text segments. Targeting these two problems, we propose a novel approach, named ``MorphText", to capture the regularity of texts by embedding deep morphology for arbitrary-shape text detection. Towards this end, two deep morphological modules are designed to regularize text segments and determine the linkage between them. First, a Deep Morphological Opening (DMOP) module is constructed to remove false text segment detections generated in the feature extraction process. Then, a Deep Morphological Closing (DMCL) module is proposed to allow text instances of various shapes to stretch their morphology along their most significant orientation while deriving their connections. Extensive experiments conducted on four challenging benchmark datasets (CTW1500, Total-Text, MSRA-TD500 and ICDAR2017) demonstrate that our proposed MorphText outperforms both top-down and bottom-up state-of-the-art arbitrary-shape scene text detection approaches.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge