Weihang Liang

Designing a Wayfinding Robot for People with Visual Impairments

Feb 17, 2023

Abstract:People with visual impairments (PwVI) often have difficulties navigating through unfamiliar indoor environments. However, current wayfinding tools are fairly limited. In this short paper, we present our in-progress work on a wayfinding robot for PwVI. The robot takes an audio command from the user that specifies the intended destination. Then, the robot autonomously plans a path to navigate to the goal. We use sensors to estimate the real-time position of the user, which is fed to the planner to improve the safety and comfort of the user. In addition, the robot describes the surroundings to the user periodically to prevent disorientation and potential accidents. We demonstrate the feasibility of our design in a public indoor environment. Finally, we analyze the limitations of our current design, as well as our insights and future work. A demonstration video can be found at https://youtu.be/BS9r5bkIass.

Structural Attention-Based Recurrent Variational Autoencoder for Highway Vehicle Anomaly Detection

Jan 09, 2023

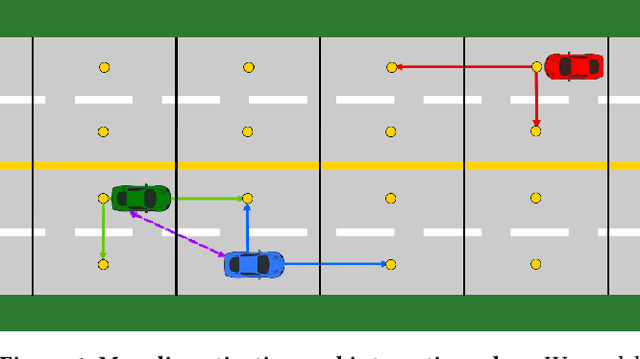

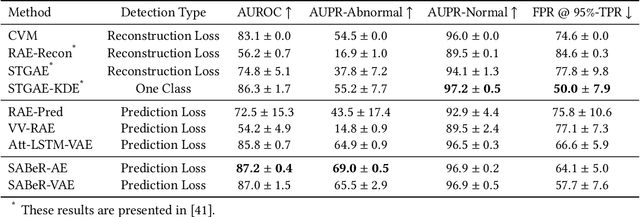

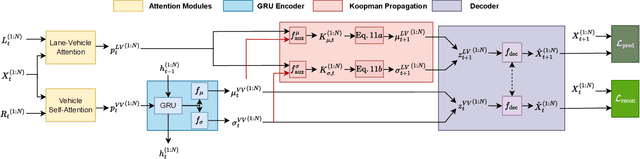

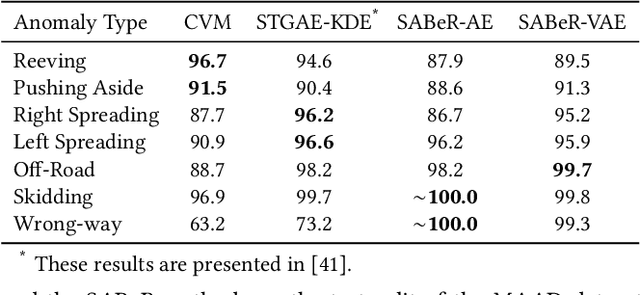

Abstract:In autonomous driving, detection of abnormal driving behaviors is essential to ensure the safety of vehicle controllers. Prior works in vehicle anomaly detection have shown that modeling interactions between agents improves detection accuracy, but certain abnormal behaviors where structured road information is paramount are poorly identified, such as wrong-way and off-road driving. We propose a novel unsupervised framework for highway anomaly detection named Structural Attention-based Recurrent VAE (SABeR-VAE), which explicitly uses the structure of the environment to aid anomaly identification. Specifically, we use a vehicle self-attention module to learn the relations among vehicles on a road, and a separate lane-vehicle attention module to model the importance of permissible lanes to aid in trajectory prediction. Conditioned on the attention modules' outputs, a recurrent encoder-decoder architecture with a stochastic Koopman operator-propagated latent space predicts the next states of vehicles. Our model is trained end-to-end to minimize prediction loss on normal vehicle behaviors, and is deployed to detect anomalies in (ab)normal scenarios. By combining the heterogeneous vehicle and lane information, SABeR-VAE and its deterministic variant, SABeR-AE, improve abnormal AUPR by 18% and 25% respectively on the simulated MAAD highway dataset. Furthermore, we show that the learned Koopman operator in SABeR-VAE enforces interpretable structure in the variational latent space. The results of our method indeed show that modeling environmental factors is essential to detecting a diverse set of anomalies in deployment.
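The detection step described in this abstract (train a predictor on normal behavior, then flag trajectories it predicts poorly) can be illustrated with a minimal sketch. This is not the SABeR-VAE model: the predictor is assumed to exist already, and the function names, the max-error scoring rule, and the average-precision estimate of AUPR are illustrative assumptions rather than details taken from the paper.

```python
import math


def prediction_error(predicted, observed):
    """Euclidean distance between a predicted and an observed state."""
    return math.dist(predicted, observed)


def anomaly_score(predicted_traj, observed_traj):
    """Score a trajectory by its worst one-step prediction error.

    Assumption: a predictor trained only on normal driving produces
    larger errors on abnormal driving, so a higher score is more anomalous.
    """
    return max(
        prediction_error(p, o) for p, o in zip(predicted_traj, observed_traj)
    )


def average_precision(scores, labels):
    """Estimate the area under the precision-recall curve.

    labels: 1 for abnormal, 0 for normal. Ties in scores are not
    treated specially in this sketch.
    """
    total_positives = sum(labels)
    if total_positives == 0:
        raise ValueError("at least one abnormal example is required")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits = 0
    precision_sum = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            precision_sum += hits / rank  # precision at this recall step
    return precision_sum / total_positives
```

Using the maximum error over a trajectory makes a single badly predicted step enough to raise the score; averaging the errors is an equally plausible choice.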

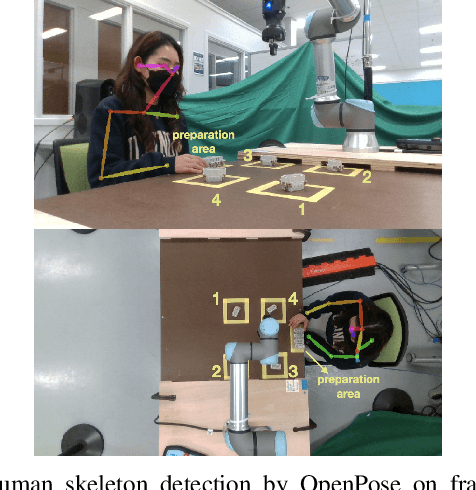

Seamless Interaction Design with Coexistence and Cooperation Modes for Robust Human-Robot Collaboration

Jun 09, 2022

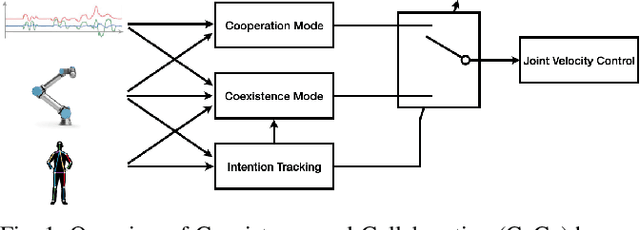

Abstract:A robot needs multiple interaction modes to robustly collaborate with a human in complicated industrial tasks. We develop a Coexistence-and-Cooperation (CoCo) human-robot collaboration system. Coexistence mode enables the robot to work with the human on different sub-tasks independently in a shared space. Cooperation mode enables the robot to follow human guidance and recover from failures. A human intention tracking algorithm takes both human and robot motion measurements as input and switches between the interaction modes. We demonstrate the effectiveness of the CoCo system in a use case analogous to a real-world multi-step assembly task.

Hierarchical Intention Tracking for Robust Human-Robot Collaboration in Industrial Assembly Tasks

Mar 17, 2022

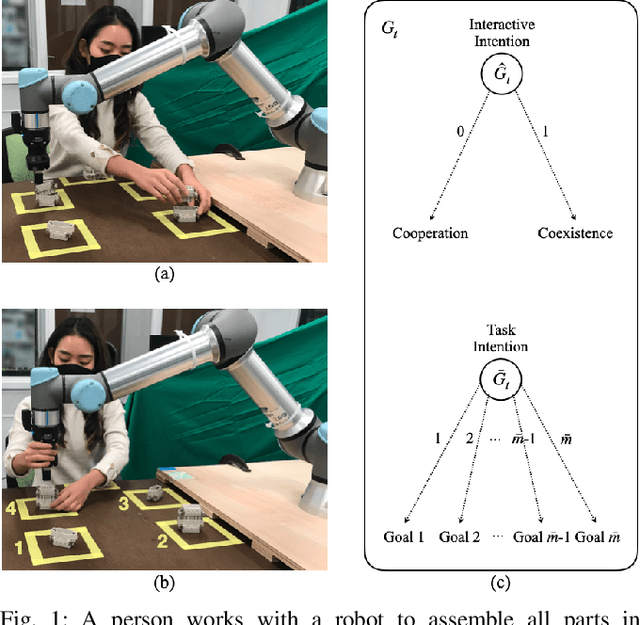

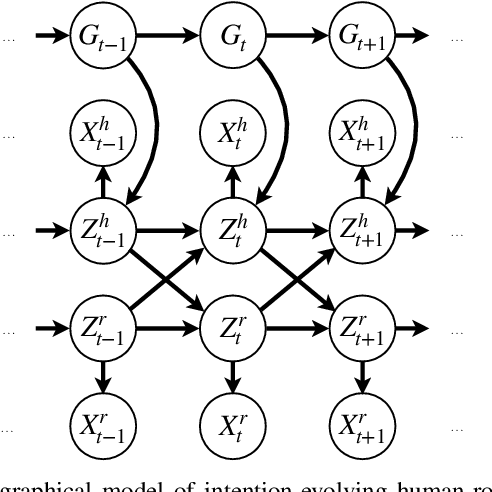

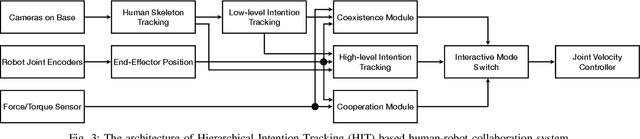

Abstract:Collaborative robots require effective intention estimation to safely and smoothly work with humans in less structured tasks such as industrial assembly. During these tasks, human intention continuously changes across multiple steps, and is composed of a hierarchy including high-level interactive intention and low-level task intention. Thus, we propose the concept of intention tracking and introduce a collaborative robot system with a hierarchical framework that concurrently tracks intentions at both levels by observing force/torque measurements, robot state sequences, and tracked human trajectories. The high-level intention estimate enables the robot to both (1) safely avoid collision with the human to minimize interruption and (2) cooperatively approach the human and help recover from an assembly failure through admittance control. The low-level intention estimate provides the robot with task-specific information (e.g., which part the human is working on) for concurrent task execution. We implement the system on a UR5e robot, and demonstrate robust, seamless and ergonomic collaboration between the human and the robot in an assembly use case through an ablative pilot study.
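Admittance control, mentioned in this abstract, maps a measured external force to a commanded motion through a virtual mass-damper model, M·a + D·v = F. The sketch below integrates that model in one dimension with explicit Euler steps. The function name and all parameter values are hypothetical and are not taken from the paper's UR5e implementation.

```python
def admittance_step(velocity, force, mass, damping, dt):
    """Advance the virtual mass-damper model M*a + D*v = F by one time step.

    Returns the new commanded velocity. Explicit Euler integration is
    used, which is stable here only while dt * damping / mass < 2.
    """
    acceleration = (force - damping * velocity) / mass
    return velocity + acceleration * dt


def simulate(force, mass, damping, dt, steps, velocity=0.0):
    """Apply a constant external force for a number of steps."""
    for _ in range(steps):
        velocity = admittance_step(velocity, force, mass, damping, dt)
    return velocity
```

Under a constant push the commanded velocity settles at force / damping, so a larger virtual damping makes the robot feel stiffer to the person guiding it.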

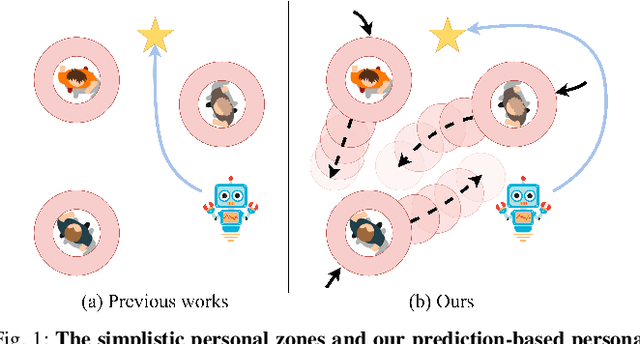

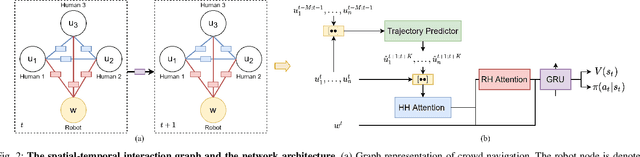

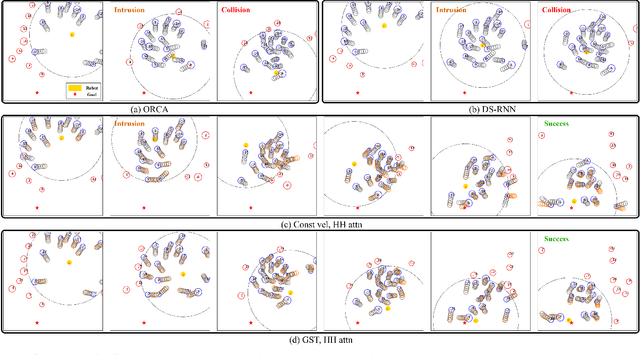

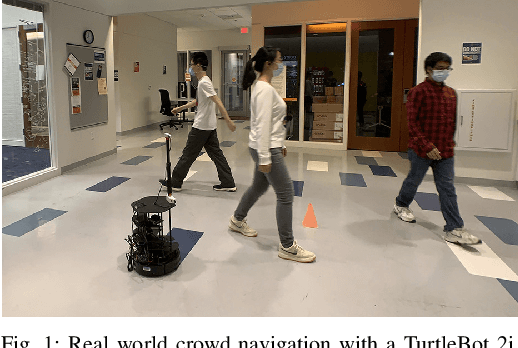

Socially Aware Robot Crowd Navigation with Interaction Graphs and Human Trajectory Prediction

Mar 03, 2022

Abstract:We study the problem of safe and socially aware robot navigation in dense and interactive human crowds. Previous works use simplified methods to model the personal spaces of pedestrians and ignore the social compliance of the robot behaviors. In this paper, we provide a more accurate representation of personal zones of walking pedestrians with their future trajectories. The predicted personal zones are incorporated into a reinforcement learning framework to prevent the robot from intruding into the personal zones. To learn socially aware navigation policies, we propose a novel recurrent graph neural network with attention mechanisms to capture the interactions among agents through space and time. We demonstrate that our method enables the robot to achieve good navigation performance and non-invasiveness in challenging crowd navigation scenarios. We successfully transfer the policy learned in the simulator to a real-world TurtleBot 2i.
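One simple way to picture a personal zone built from predicted trajectories is as a set of circles around a pedestrian's current and predicted future positions, with a reward penalty when the robot enters any of them. The sketch below follows that reading. The circular zone shape, the radius, the penalty value, and the function names are assumptions for illustration, not the paper's reward definition.

```python
import math


def intrudes_personal_zone(robot_xy, predicted_positions, radius):
    """True if the robot is inside a circle of the given radius around any
    current or predicted pedestrian position."""
    return any(math.dist(robot_xy, p) < radius for p in predicted_positions)


def intrusion_penalty(robot_xy, pedestrians, radius=0.5, penalty=-1.0):
    """Sum a fixed penalty over every pedestrian whose zone is intruded.

    pedestrians: one list of (x, y) positions per pedestrian, current
    position first, followed by predicted future positions.
    """
    intrusions = sum(
        intrudes_personal_zone(robot_xy, trajectory, radius)
        for trajectory in pedestrians
    )
    return penalty * intrusions
```

In a reinforcement learning setup, a term like this would be added to the per-step reward so that the policy learns to stay clear of where pedestrians are about to walk, not only where they are now.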

Decentralized Structural-RNN for Robot Crowd Navigation with Deep Reinforcement Learning

Nov 09, 2020

Abstract:Safe and efficient navigation through human crowds is an essential capability for mobile robots. Previous work on robot crowd navigation assumes that the dynamics of all agents are known and well-defined. In addition, the performance of previous methods deteriorates in partially observable environments and environments with dense crowds. To tackle these problems, we propose decentralized structural-Recurrent Neural Network (DS-RNN), a novel network that reasons about spatial and temporal relationships for robot decision making in crowd navigation. We train our network with model-free deep reinforcement learning without any expert supervision. We demonstrate that our model outperforms previous methods and successfully transfer the policy learned in the simulator to a real-world TurtleBot 2i.
