Tara Javidi

Convex and Non-convex Federated Learning with Stale Stochastic Gradients: Diminishing Step Size is All You Need

Mar 03, 2026Abstract:We propose a general framework for distributed stochastic optimization under delayed gradient models. In this setting, $n$ local agents leverage their own data and computation to assist a central server in minimizing a global objective composed of agents' local cost functions. Each agent is allowed to transmit stochastic-potentially biased and delayed-estimates of its local gradient. While a prior work has advocated delay-adaptive step sizes for stochastic gradient descent (SGD) in the presence of delays, we demonstrate that a pre-chosen diminishing step size is sufficient and matches the performance of the adaptive scheme. Moreover, our analysis establishes that diminishing step sizes recover the optimal SGD rates for nonconvex and strongly convex objectives.

$κ$-Explorer: A Unified Framework for Active Model Estimation in MDPs

Feb 23, 2026Abstract:In tabular Markov decision processes (MDPs) with perfect state observability, each trajectory provides active samples from the transition distributions conditioned on state-action pairs. Consequently, accurate model estimation depends on how the exploration policy allocates visitation frequencies in accordance with the intrinsic complexity of each transition distribution. Building on recent work on coverage-based exploration, we introduce a parameterized family of decomposable and concave objective functions $U_κ$ that explicitly incorporate both intrinsic estimation complexity and extrinsic visitation frequency. Moreover, the curvature $κ$ provides a unified treatment of various global objectives, such as the average-case and worst-case estimation error objectives. Using the closed-form characterization of the gradient of $U_κ$, we propose $κ$-Explorer, an active exploration algorithm that performs Frank-Wolfe-style optimization over state-action occupancy measures. The diminishing-returns structure of $U_κ$ naturally prioritizes underexplored and high-variance transitions, while preserving smoothness properties that enable efficient optimization. We establish tight regret guarantees for $κ$-Explorer and further introduce a fully online and computationally efficient surrogate algorithm for practical use. Experiments on benchmark MDPs demonstrate that $κ$-Explorer provides superior performance compared to existing exploration strategies.

3D Scene Rendering with Multimodal Gaussian Splatting

Feb 19, 2026Abstract:3D scene reconstruction and rendering are core tasks in computer vision, with applications spanning industrial monitoring, robotics, and autonomous driving. Recent advances in 3D Gaussian Splatting (GS) and its variants have achieved impressive rendering fidelity while maintaining high computational and memory efficiency. However, conventional vision-based GS pipelines typically rely on a sufficient number of camera views to initialize the Gaussian primitives and train their parameters, typically incurring additional processing cost during initialization while falling short in conditions where visual cues are unreliable, such as adverse weather, low illumination, or partial occlusions. To cope with these challenges, and motivated by the robustness of radio-frequency (RF) signals to weather, lighting, and occlusions, we introduce a multimodal framework that integrates RF sensing, such as automotive radar, with GS-based rendering as a more efficient and robust alternative to vision-only GS rendering. The proposed approach enables efficient depth prediction from only sparse RF-based depth measurements, yielding a high-quality 3D point cloud for initializing Gaussian functions across diverse GS architectures. Numerical tests demonstrate the merits of judiciously incorporating RF sensing into GS pipelines, achieving high-fidelity 3D scene rendering driven by RF-informed structural accuracy.

From Relative Entropy to Minimax: A Unified Framework for Coverage in MDPs

Jan 17, 2026Abstract:Targeted and deliberate exploration of state--action pairs is essential in reward-free Markov Decision Problems (MDPs). More precisely, different state-action pairs exhibit different degree of importance or difficulty which must be actively and explicitly built into a controlled exploration strategy. To this end, we propose a weighted and parameterized family of concave coverage objectives, denoted by $U_ρ$, defined directly over state--action occupancy measures. This family unifies several widely studied objectives within a single framework, including divergence-based marginal matching, weighted average coverage, and worst-case (minimax) coverage. While the concavity of $U_ρ$ captures the diminishing return associated with over-exploration, the simple closed form of the gradient of $U_ρ$ enables an explicit control to prioritize under-explored state--action pairs. Leveraging this structure, we develop a gradient-based algorithm that actively steers the induced occupancy toward a desired coverage pattern. Moreover, we show that as $ρ$ increases, the resulting exploration strategy increasingly emphasizes the least-explored state--action pairs, recovering worst-case coverage behavior in the limit.

ActiveInitSplat: How Active Image Selection Helps Gaussian Splatting

Mar 10, 2025

Abstract:Gaussian splatting (GS) along with its extensions and variants provides outstanding performance in real-time scene rendering while meeting reduced storage demands and computational efficiency. While the selection of 2D images capturing the scene of interest is crucial for the proper initialization and training of GS, hence markedly affecting the rendering performance, prior works rely on passively and typically densely selected 2D images. In contrast, this paper proposes `ActiveInitSplat', a novel framework for active selection of training images for proper initialization and training of GS. ActiveInitSplat relies on density and occupancy criteria of the resultant 3D scene representation from the selected 2D images, to ensure that the latter are captured from diverse viewpoints leading to better scene coverage and that the initialized Gaussian functions are well aligned with the actual 3D structure. Numerical tests on well-known simulated and real environments demonstrate the merits of ActiveInitSplat resulting in significant GS rendering performance improvement over passive GS baselines, in the widely adopted LPIPS, SSIM, and PSNR metrics.

Active Sampling and Gaussian Reconstruction for Radio Frequency Radiance Field

Dec 11, 2024

Abstract:Radio-frequency (RF) Radiance Field reconstruction is a challenging problem. The difficulty lies in the interactions between the propagating signal and objects, such as reflections and diffraction, which are hard to model precisely, especially when the shapes and materials of the objects are unknown. Previously, a neural network-based method was proposed to reconstruct the RF Radiance Field, showing promising results. However, this neural network-based method has some limitations: it requires a large number of samples for training and is computationally expensive. Additionally, the neural network only provides the predicted mean of the RF Radiance Field and does not offer an uncertainty model. In this work, we propose a training-free Gaussian reconstruction method for RF Radiance Field. Our method demonstrates that the required number of samples is significantly smaller compared to the neural network-based approach. Furthermore, we introduce an uncertainty model that provides confidence estimates for predictions at any selected position in the scene. We also combine the Gaussian reconstruction method with active sampling, which further reduces the number of samples needed to achieve the same performance. Finally, we explore the potential benefits of our method in a quasi-dynamic setting, showcasing its ability to adapt to changes in the scene without requiring the entire process to be repeated.

Trojan Cleansing with Neural Collapse

Nov 19, 2024

Abstract:Trojan attacks are sophisticated training-time attacks on neural networks that embed backdoor triggers which force the network to produce a specific output on any input which includes the trigger. With the increasing relevance of deep networks which are too large to train with personal resources and which are trained on data too large to thoroughly audit, these training-time attacks pose a significant risk. In this work, we connect trojan attacks to Neural Collapse, a phenomenon wherein the final feature representations of over-parameterized neural networks converge to a simple geometric structure. We provide experimental evidence that trojan attacks disrupt this convergence for a variety of datasets and architectures. We then use this disruption to design a lightweight, broadly generalizable mechanism for cleansing trojan attacks from a wide variety of different network architectures and experimentally demonstrate its efficacy.

Coverage Analysis for Digital Cousin Selection -- Improving Multi-Environment Q-Learning

Nov 13, 2024Abstract:Q-learning is widely employed for optimizing various large-dimensional networks with unknown system dynamics. Recent advancements include multi-environment mixed Q-learning (MEMQ) algorithms, which utilize multiple independent Q-learning algorithms across multiple, structurally related but distinct environments and outperform several state-of-the-art Q-learning algorithms in terms of accuracy, complexity, and robustness. We herein conduct a comprehensive probabilistic coverage analysis to ensure optimal data coverage conditions for MEMQ algorithms. First, we derive upper and lower bounds on the expectation and variance of different coverage coefficients (CC) for MEMQ algorithms. Leveraging these bounds, we develop a simple way of comparing the utilities of multiple environments in MEMQ algorithms. This approach appears to be near optimal versus our previously proposed partial ordering approach. We also present a novel CC-based MEMQ algorithm to improve the accuracy and complexity of existing MEMQ algorithms. Numerical experiments are conducted using random network graphs with four different graph properties. Our algorithm can reduce the average policy error (APE) by 65% compared to partial ordering and is 95% faster than the exhaustive search. It also achieves 60% less APE than several state-of-the-art reinforcement learning and prior MEMQ algorithms. Additionally, we numerically verify the theoretical results and show their scalability with the action-space size.

Primal-Dual Continual Learning: Stability and Plasticity through Lagrange Multipliers

Sep 29, 2023

Abstract:Continual learning is inherently a constrained learning problem. The goal is to learn a predictor under a \emph{no-forgetting} requirement. Although several prior studies formulate it as such, they do not solve the constrained problem explicitly. In this work, we show that it is both possible and beneficial to undertake the constrained optimization problem directly. To do this, we leverage recent results in constrained learning through Lagrangian duality. We focus on memory-based methods, where a small subset of samples from previous tasks can be stored in a replay buffer. In this setting, we analyze two versions of the continual learning problem: a coarse approach with constraints at the task level and a fine approach with constraints at the sample level. We show that dual variables indicate the sensitivity of the optimal value with respect to constraint perturbations. We then leverage this result to partition the buffer in the coarse approach, allocating more resources to harder tasks, and to populate the buffer in the fine approach, including only impactful samples. We derive sub-optimality bounds, and empirically corroborate our theoretical results in various continual learning benchmarks. We also discuss the limitations of these methods with respect to the amount of memory available and the number of constraints involved in the optimization problem.

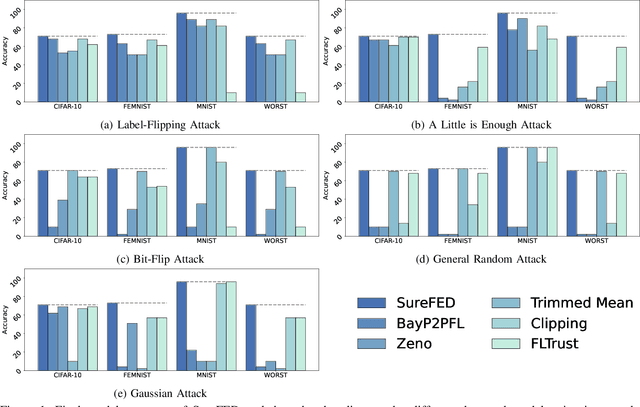

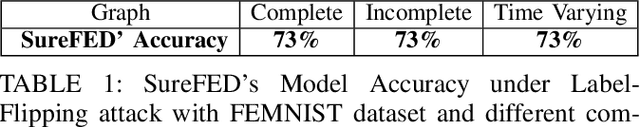

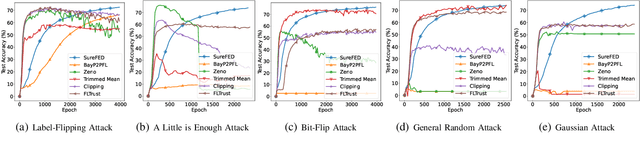

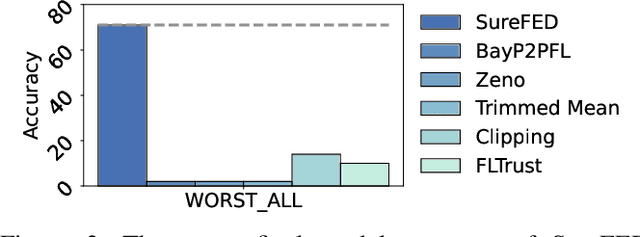

SABRE: Robust Bayesian Peer-to-Peer Federated Learning

Aug 04, 2023

Abstract:We introduce SABRE, a novel framework for robust variational Bayesian peer-to-peer federated learning. We analyze the robustness of the known variational Bayesian peer-to-peer federated learning framework (BayP2PFL) against poisoning attacks and subsequently show that BayP2PFL is not robust against those attacks. The new SABRE aggregation methodology is then devised to overcome the limitations of the existing frameworks. SABRE works well in non-IID settings, does not require the majority of the benign nodes over the compromised ones, and even outperforms the baseline algorithm in benign settings. We theoretically prove the robustness of our algorithm against data / model poisoning attacks in a decentralized linear regression setting. Proof-of-Concept evaluations on benchmark data from image classification demonstrate the superiority of SABRE over the existing frameworks under various poisoning attacks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge