Ninad Jadhav

Decentralized Vision-Based Autonomous Aerial Wildlife Monitoring

Aug 20, 2025

Abstract:Wildlife field operations demand efficient parallel deployment methods to identify and interact with specific individuals, enabling simultaneous collective behavioral analysis, and health and safety interventions. Previous robotics solutions approach the problem from the herd perspective, or are manually operated and limited in scale. We propose a decentralized vision-based multi-quadrotor system for wildlife monitoring that is scalable, low-bandwidth, and sensor-minimal (single onboard RGB camera). Our approach enables robust identification and tracking of large species in their natural habitat. We develop novel vision-based coordination and tracking algorithms designed for dynamic, unstructured environments without reliance on centralized communication or control. We validate our system through real-world experiments, demonstrating reliable deployment in diverse field conditions.

WiSER-X: Wireless Signals-based Efficient Decentralized Multi-Robot Exploration without Explicit Information Exchange

Dec 27, 2024

Abstract:We introduce a Wireless Signal based Efficient multi-Robot eXploration (WiSER-X) algorithm applicable to a decentralized team of robots exploring an unknown environment with communication bandwidth constraints. WiSER-X relies only on local inter-robot relative position estimates, which can be obtained by exchanging signal pings from onboard sensors such as WiFi or Ultra-Wide Band, among others, to inform the exploration decisions of individual robots and minimize redundant coverage overlaps. Furthermore, WiSER-X enables asynchronous termination without requiring a shared map between the robots. It also adapts to heterogeneous robot behaviors and even complete failures in an unknown environment while ensuring complete coverage. Simulations show that WiSER-X leads to 58% lower overlap than a zero-information-sharing baseline (algorithm-1) and only 23% more overlap than a full-information-sharing baseline (algorithm-2).

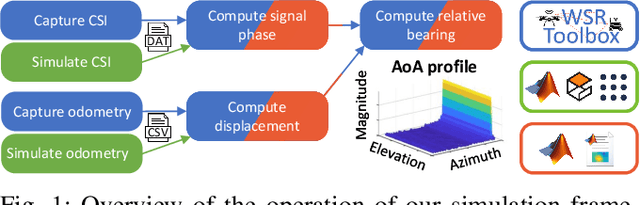

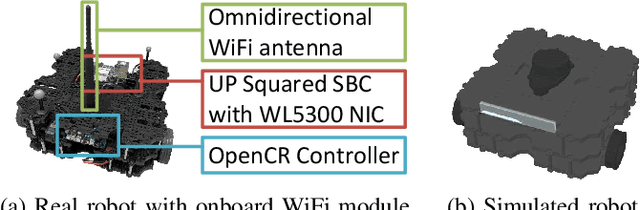

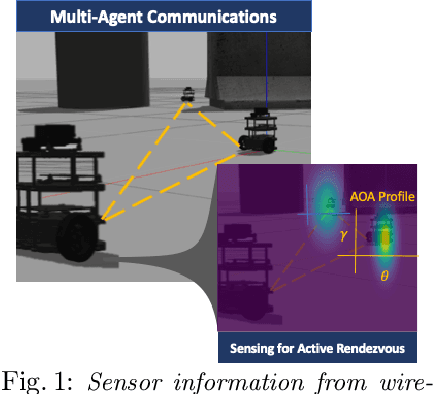

WiFi-CSI Sensing and Bearing Estimation in Multi-Robot Systems: An Open-Source Simulation Framework

Oct 02, 2024

Abstract:Development and testing of multi-robot systems employing wireless signal-based sensing requires access to suitable hardware, such as channel monitoring WiFi transceivers, which can pose significant limitations. The WiFi Sensor for Robotics (WSR) toolbox, introduced by Jadhav et al. in 2022, provides a novel solution by using WiFi Channel State Information (CSI) to compute relative bearing between robots. The toolbox leverages the amplitude and phase of WiFi signals and creates virtual antenna arrays by exploiting the motion of mobile robots, eliminating the need for physical antenna arrays. However, the WSR toolbox's reliance on obsolete WiFi transceiver hardware has limited its operability and accessibility, hindering broader application and development of relevant tools. We present an open-source simulation framework that replicates the WSR toolbox's capabilities using Gazebo and Matlab. By simulating WiFi-CSI data collection, our framework emulates the behavior of mobile robots equipped with the WSR toolbox, enabling precise bearing estimation without physical hardware. We validate the framework through experiments with both simulated and real Turtlebot3 robots, showing a close match between the obtained CSI data and the resulting bearing estimates. This work provides a virtual environment for developing and testing WiFi-CSI-based multi-robot localization without relying on physical hardware. All code and experimental setup information are publicly available at https://github.com/BrendanxP/CSI-Simulation-Framework

Follow Anything: Open-set detection, tracking, and following in real-time

Aug 10, 2023

Abstract:Tracking and following objects of interest is critical to several robotics use cases, ranging from industrial automation to logistics and warehousing, to healthcare and security. In this paper, we present a robotic system to detect, track, and follow any object in real-time. Our approach, dubbed "follow anything" (FAn), is an open-vocabulary and multimodal model -- it is not restricted to concepts seen at training time and can be applied to novel classes at inference time using text, images, or click queries. Leveraging rich visual descriptors from large-scale pre-trained models (foundation models), FAn can detect and segment objects by matching multimodal queries (text, images, clicks) against an input image sequence. These detected and segmented objects are tracked across image frames, all while accounting for occlusion and object re-emergence. We demonstrate FAn on a real-world robotic system (a micro aerial vehicle) and report its ability to seamlessly follow the objects of interest in a real-time control loop. FAn can be deployed on a laptop with a lightweight (6-8 GB) graphics card, achieving a throughput of 6-20 frames per second. To enable rapid adoption, deployment, and extensibility, we open-source all our code on our project webpage at https://github.com/alaamaalouf/FollowAnything . We also encourage the reader to watch our 5-minute explainer video at https://www.youtube.com/watch?v=6Mgt3EPytrw .

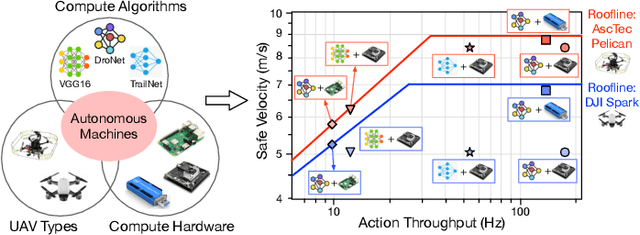

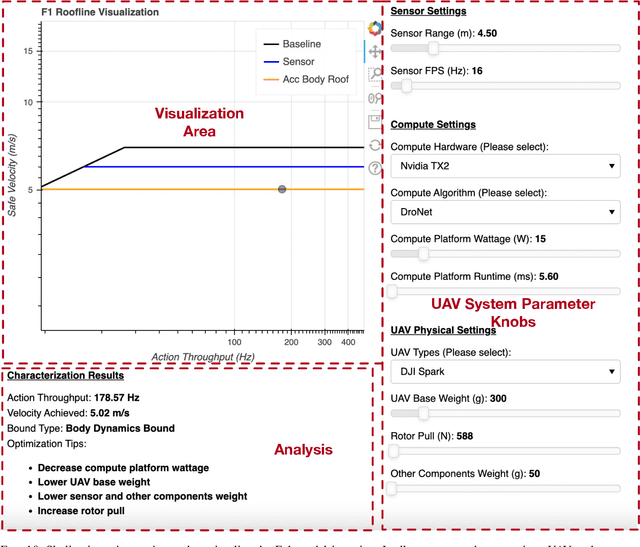

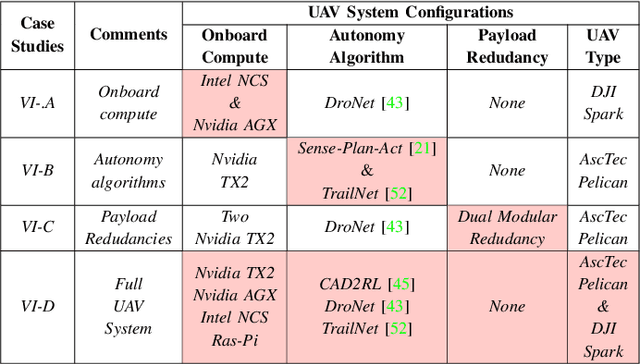

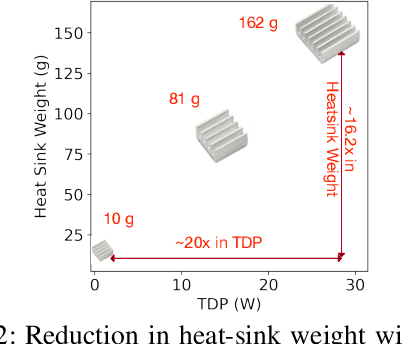

Roofline Model for UAVs: A Bottleneck Analysis Tool for Onboard Compute Characterization of Autonomous Unmanned Aerial Vehicles

Apr 22, 2022

Abstract:We introduce an early-phase bottleneck analysis and characterization model called the F-1 for designing computing systems that target autonomous Unmanned Aerial Vehicles (UAVs). The model provides insights by exploiting the fundamental relationships between various components in the autonomous UAV, such as sensor, compute, and body dynamics. To guarantee safe operation while maximizing the performance (e.g., velocity) of the UAV, the compute, sensor, and other mechanical properties must be carefully selected or designed. The F-1 model provides visual insights that can aid a system architect in understanding the optimal compute design or selection for autonomous UAVs. The model is experimentally validated using real UAVs, and the error is between 5.1% and 9.5% compared to real-world flight tests. An interactive web-based tool for the F-1 model called Skyline is available free of charge at: https://bit.ly/skyline-tool

Toolbox Release: A WiFi-Based Relative Bearing Sensor for Robotics

Sep 24, 2021

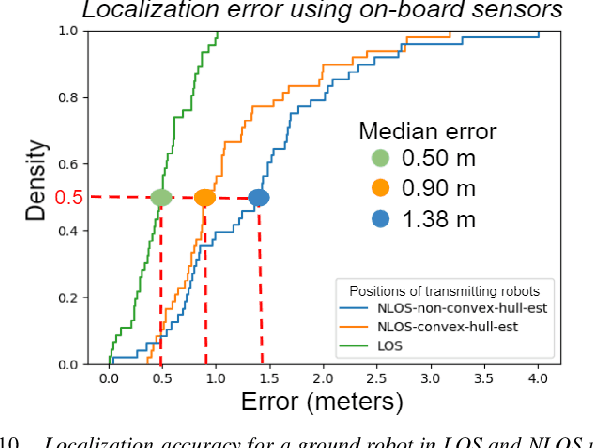

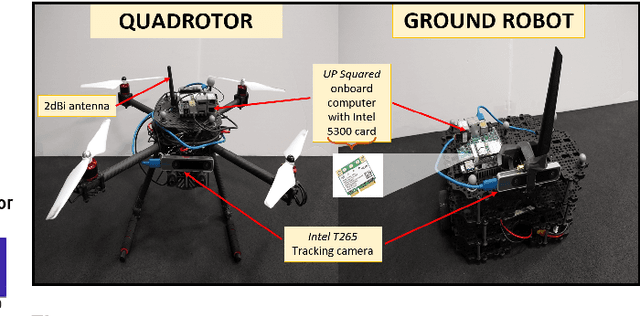

Abstract:This paper presents the WiFi-Sensor-for-Robotics (WSR) toolbox, an open-source C++ framework. It enables robots in a team to obtain relative bearing to each other, even in non-line-of-sight (NLOS) settings, which is a very challenging problem in robotics. It does so by analyzing the phase of their communicated WiFi signals as the robots traverse the environment. This capability, based on the theory developed in our prior works, is made available for the first time as an open-source tool. It is motivated by the lack of easily deployable solutions that use robots' local resources (e.g., WiFi) for sensing in NLOS. This has implications for localization, ad-hoc robot networks, and security in multi-robot teams, among others. The toolbox is designed for distributed and online deployment on robot platforms using commodity hardware and on-board sensors. We also release datasets demonstrating its performance in NLOS and line-of-sight (LOS) settings for a multi-robot localization use case. Empirical results show that the bearing estimation from our toolbox achieves mean accuracy of 5.10 degrees. This leads to a median error of 0.5m and 0.9m for localization in LOS and NLOS settings respectively, in a hardware deployment in an indoor office environment.

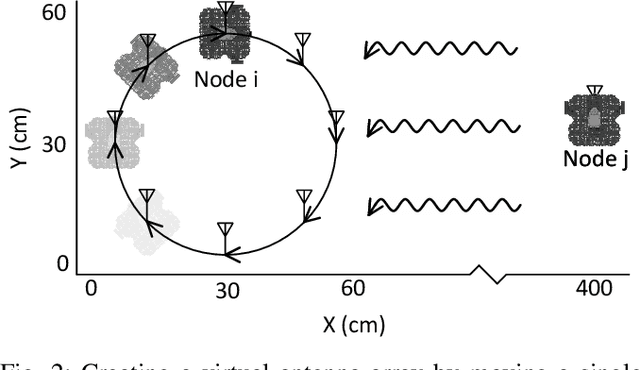

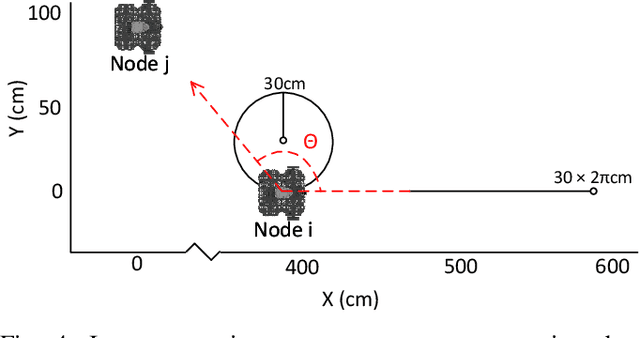

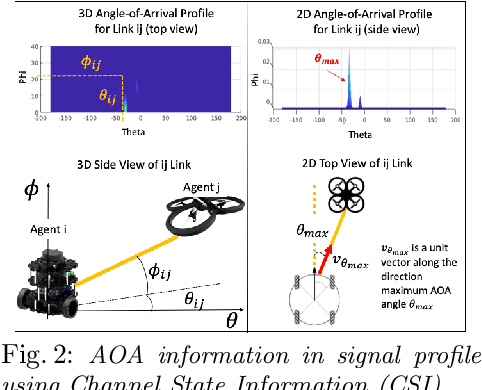

WSR: A WiFi Sensor for Collaborative Robotics

Dec 08, 2020

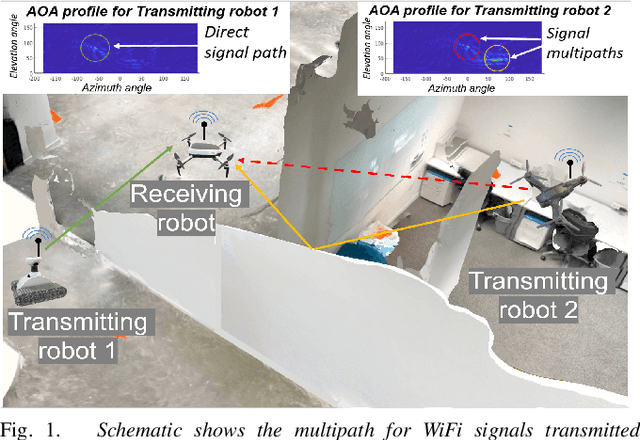

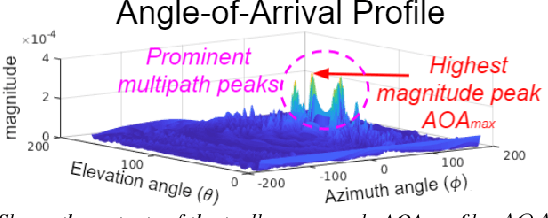

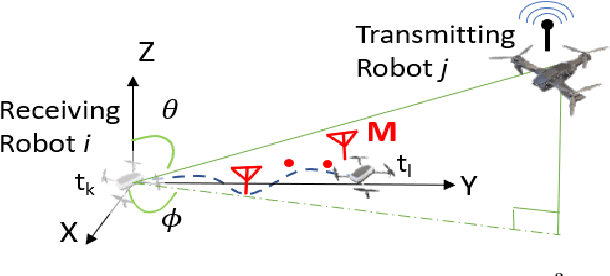

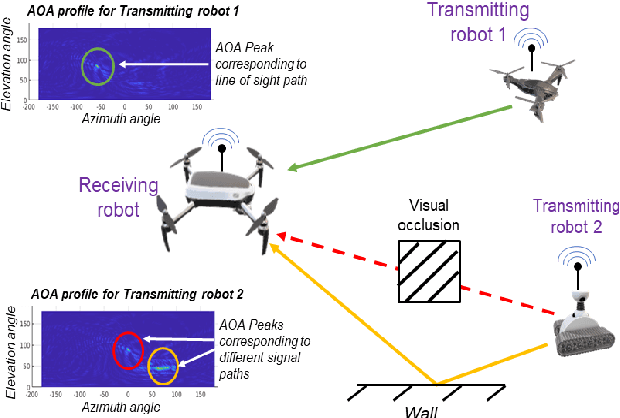

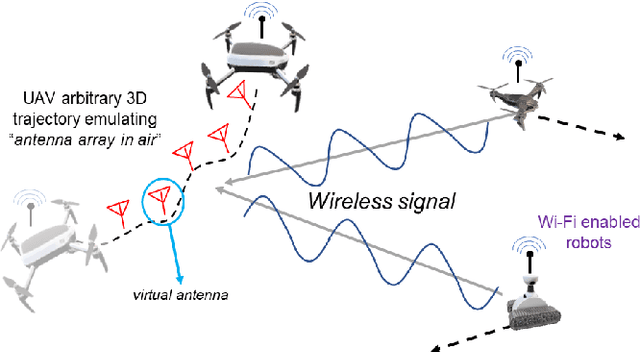

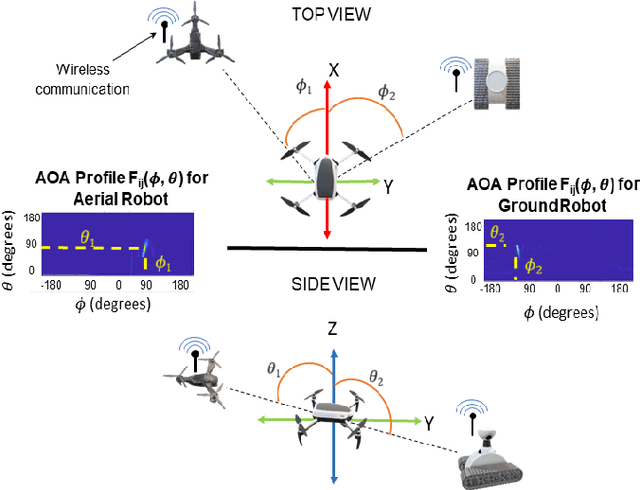

Abstract:In this paper we derive a new capability for robots to measure relative direction, or Angle-of-Arrival (AOA), to other robots operating in non-line-of-sight and unmapped environments with occlusions, without requiring external infrastructure. We do so by capturing all of the paths that a WiFi signal traverses as it travels from a transmitting to a receiving robot, which we term an AOA profile. The key intuition is to "emulate antenna arrays in the air" as the robots move in 3D space, a method akin to Synthetic Aperture Radar (SAR). The main contributions include development of i) a framework to accommodate arbitrary 3D trajectories, as well as continuous mobility of all robots, while computing AOA profiles and ii) an accompanying analysis that provides a lower bound on variance of AOA estimation as a function of robot trajectory geometry based on the Cramér-Rao Bound. This is a critical distinction from previous work on SAR that restricts robot mobility to prescribed motion patterns, does not generalize to 3D space, and/or requires transmitting robots to be static during data acquisition periods. Our method results in more accurate AOA profiles and thus better AOA estimation, and formally characterizes this observation as the informativeness of the trajectory; a computable quantity for which we derive a closed form. All theoretical developments are substantiated by extensive simulation and hardware experiments. We also show that our formulation can be used with an off-the-shelf trajectory estimation sensor. Finally, we demonstrate the performance of our system on a multi-robot dynamic rendezvous task.
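
To make the "antenna arrays in the air" intuition concrete, the following is a minimal illustrative sketch, not the paper's implementation. It assumes a simplified 2D, noise-free, single-path, far-field setting with a static transmitter, and uses a standard Bartlett-style beamformer over the receiver's trajectory. The wavelength, the arc trajectory, the phase sign convention, and all function names are assumptions introduced here for illustration; the paper's framework handles arbitrary 3D trajectories and moving transmitters.

```python
import cmath
import math

WAVELENGTH = 0.06  # metres; roughly a 5 GHz WiFi carrier (assumed value)


def steering_phase(pos, angle):
    """Far-field phase at 2D position `pos` for a plane wave from `angle` (radians)."""
    x, y = pos
    return 2 * math.pi / WAVELENGTH * (x * math.cos(angle) + y * math.sin(angle))


def simulate_channel(positions, true_angle):
    """Ideal single-path channel samples collected along the trajectory."""
    return [cmath.exp(1j * steering_phase(p, true_angle)) for p in positions]


def aoa_profile(positions, channel, n_angles=360):
    """Bartlett-style profile: coherent sum of samples against each candidate angle."""
    angles = [2 * math.pi * i / n_angles - math.pi for i in range(n_angles)]
    profile = []
    for a in angles:
        s = sum(h * cmath.exp(-1j * steering_phase(p, a))
                for p, h in zip(positions, channel))
        profile.append(abs(s) ** 2)
    return angles, profile


def estimate_bearing(positions, channel, n_angles=360):
    """Return the candidate angle (radians) at which the profile peaks."""
    angles, profile = aoa_profile(positions, channel, n_angles)
    best = max(range(len(profile)), key=profile.__getitem__)
    return angles[best]


# Hypothetical trajectory: 40 samples along a half circle of radius 0.3 m.
positions = [(0.3 * math.cos(k * math.pi / 40), 0.3 * math.sin(k * math.pi / 40))
             for k in range(40)]
true_angle = math.radians(50.0)
channel = simulate_channel(positions, true_angle)
estimate = estimate_bearing(positions, channel)
print(f"estimated bearing: {math.degrees(estimate):.1f} degrees")
```

In this toy setting each trajectory sample plays the role of one element of a virtual antenna array, so a curved path yields an unambiguous peak in the profile. With multipath, the profile would show several peaks, which is the information the abstract calls an AOA profile.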

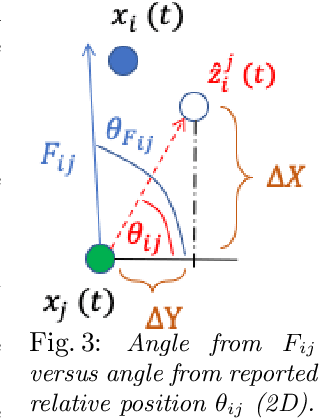

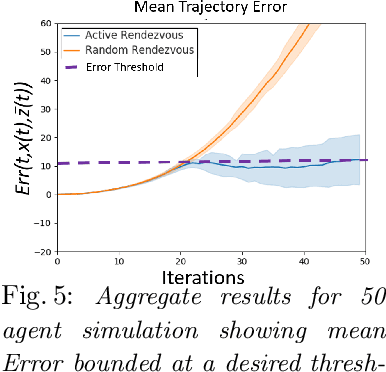

Active Rendezvous for Multi-Robot Pose Graph Optimization using Sensing over Wi-Fi

Jul 12, 2019

Abstract:We present a novel framework for collaboration amongst a team of robots performing Pose Graph Optimization (PGO) that addresses two important challenges for multi-robot SLAM: that of enabling information exchange "on-demand" via active rendezvous, and that of rejecting outlier measurements with high probability. Our key insight is to exploit relative position data present in the communication channel between agents, as an independent measurement in PGO. We show that our algorithmic and experimental framework for integrating Channel State Information (CSI) over the communication channels, with multi-agent PGO, addresses the two open challenges of enabling information exchange and rejecting outliers. Our presented framework is distributed and applicable in low-lighting or featureless environments where traditional sensors often fail. We present extensive experimental results on actual robots showing both the use of active rendezvous resulting in error reduction by 6X as compared to randomly occurring rendezvous and the use of CSI observations providing a reduction in ground truth pose estimation errors of 32%. These results demonstrate the promise of using a combination of multi-robot coordination and CSI to address challenges in multi-agent localization and mapping -- providing an important step towards integrating communication as a novel sensor for SLAM tasks.
