Nicky Zimmerman

Dalle Molle Institute for Artificial Intelligence, University of Bonn

SignScene: Visual Sign Grounding for Mapless Navigation

Feb 13, 2026

Abstract: Navigational signs enable humans to navigate unfamiliar environments without maps. This work studies how robots can similarly exploit signs for mapless navigation in the open world. A central challenge lies in interpreting signs: real-world signs are diverse and complex, and their abstract semantic contents need to be grounded in the local 3D scene. We formalize this as sign grounding, the problem of mapping semantic instructions on signs to corresponding scene elements and navigational actions. Recent Vision-Language Models (VLMs) offer the semantic common-sense and reasoning capabilities required for this task, but are sensitive to how spatial information is represented. We propose SignScene, a sign-centric spatial-semantic representation that captures navigation-relevant scene elements and sign information, and presents them to VLMs in a form conducive to effective reasoning. We evaluate our grounding approach on a dataset of 114 queries collected across nine diverse environment types, achieving 88% grounding accuracy and significantly outperforming baselines. Finally, we demonstrate that it enables real-world mapless navigation on a Spot robot using only signs.

Human-Inspired Long-Term Indoor Localization in Human-Oriented Environment

Oct 16, 2024

Abstract: Lifelong localization is crucial for enabling the autonomy of service robots. In this paper, we present an overview of our past research on long-term localization and mapping, exploiting geometric priors such as floor plans and integrating textual and semantic information. Our approach was validated on challenging sequences spanning many months, and we released open-source implementations.

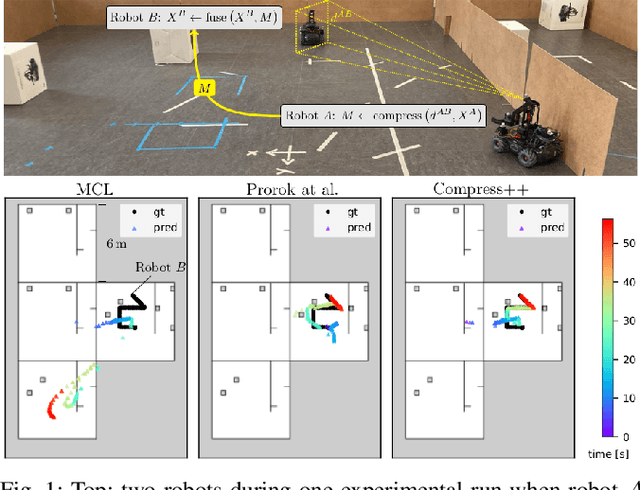

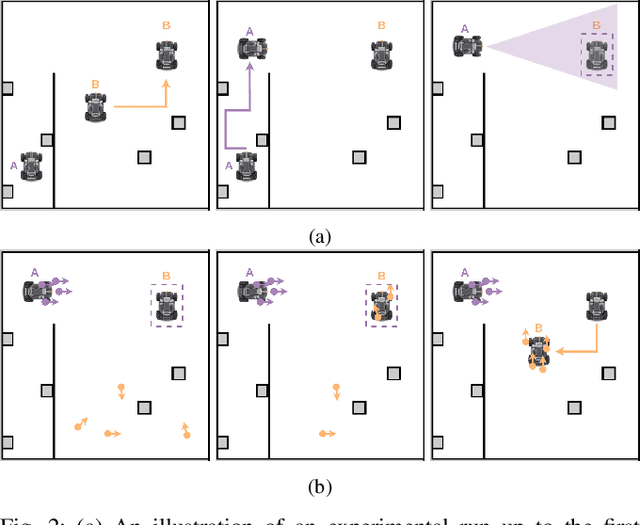

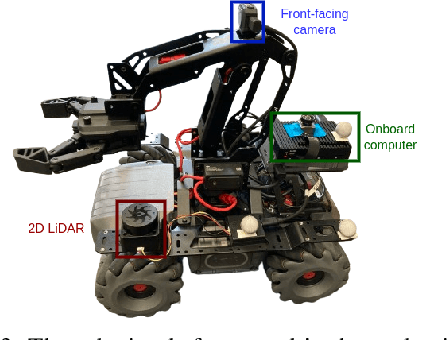

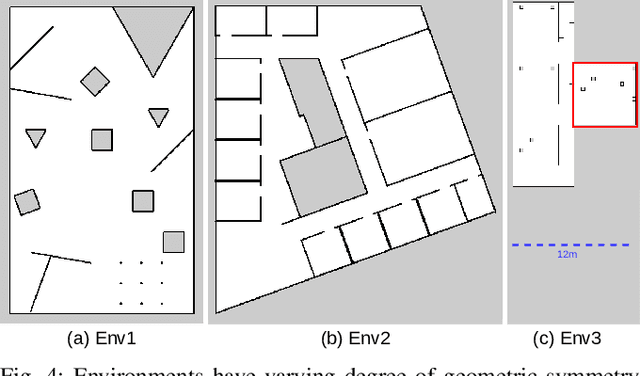

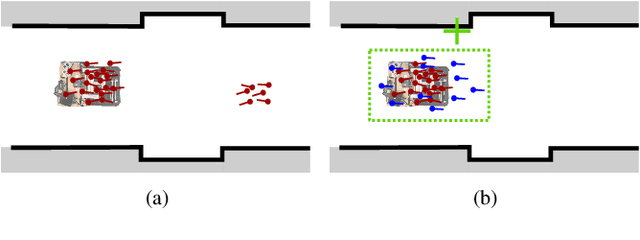

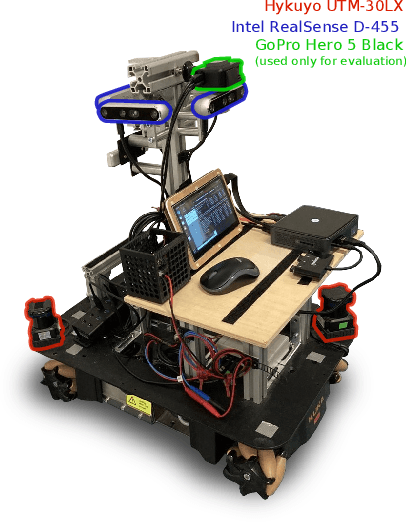

Resource-Aware Collaborative Monte Carlo Localization with Distribution Compression

Apr 02, 2024

Abstract: Global localization is essential in enabling robot autonomy, and collaborative localization is key for multi-robot systems. In this paper, we address the task of collaborative global localization under computational and communication constraints. We propose a method which reduces the amount of information exchanged and the computational cost. We also analyze, implement and open-source seminal approaches, which we believe to be a valuable contribution to the community. We exploit techniques for distribution compression in near-linear time, with error guarantees. We evaluate our approach and the implemented baselines on multiple challenging scenarios, simulated and real-world. Our approach can run online on an onboard computer. We release an open-source C++/ROS2 implementation of our approach, as well as the baselines.

Fully Onboard Low-Power Localization with Semantic Sensor Fusion on a Nano-UAV using Floor Plans

Oct 19, 2023

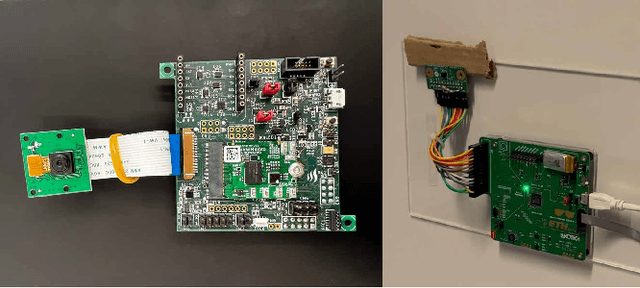

Abstract: Nano-sized unmanned aerial vehicles (UAVs) are well suited to indoor applications and to operation in close proximity to humans. To enable autonomy, the nano-UAV must be able to self-localize in its operating environment. This is a particularly challenging task due to the limited sensing and compute resources on board. This work presents an online and onboard approach for localization in floor plans annotated with semantic information. Unlike sensor-based maps, floor plans are readily available and do not increase the cost and time of deployment. To overcome the difficulty of localizing in sparse maps, the proposed approach fuses geometric information from miniaturized time-of-flight sensors and semantic cues. The semantic information is extracted from images by deploying a state-of-the-art object detection model on a high-performance multi-core microcontroller onboard the drone, consuming only 2.5mJ per frame and executing in 38ms. In our evaluation, we globally localize in a real-world office environment, achieving a 90% success rate. We also release an open-source implementation of our work.

Flexible and Fully Quantized Ultra-Lightweight TinyissimoYOLO for Ultra-Low-Power Edge Systems

Jul 14, 2023

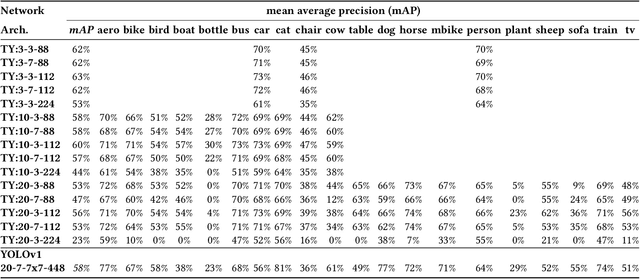

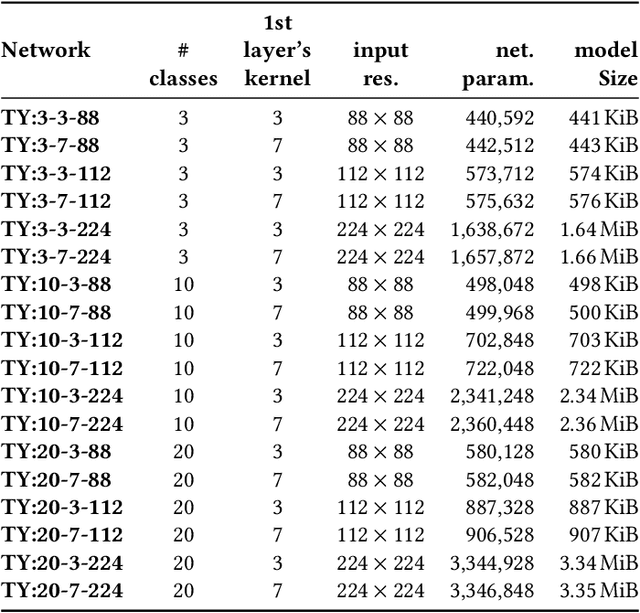

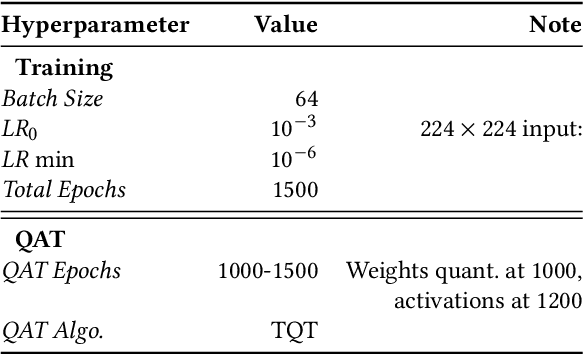

Abstract: This paper deploys and explores variants of TinyissimoYOLO, a highly flexible and fully quantized ultra-lightweight object detection network designed for edge systems with a power envelope of a few milliwatts. With experimental measurements, we present a comprehensive characterization of the network's detection performance, exploring the impact of various parameters, including input resolution, number of object classes, and hidden layer adjustments. We deploy variants of TinyissimoYOLO on state-of-the-art ultra-low-power extreme edge platforms, presenting an in-depth comparison of latency, energy efficiency, and their ability to efficiently parallelize the workload. In particular, the paper presents a comparison between a novel parallel RISC-V processor (GAP9 from Greenwaves) with and without use of its on-chip hardware accelerator, an ARM Cortex-M7 core (STM32H7 from ST Microelectronics), two ARM Cortex-M4 cores (STM32L4 from STM and Apollo4b from Ambiq), and a multi-core platform with a CNN hardware accelerator (Analog Devices MAX78000). Experimental results show that the GAP9's hardware accelerator achieves the lowest inference latency and energy at 2.12ms and 150uJ respectively, which is around 2x faster and 20% more efficient than the next best platform, the MAX78000. The hardware accelerator of GAP9 can even run an increased resolution version of TinyissimoYOLO with 112x112 pixels and 10 detection classes within 3.2ms, consuming 245uJ. To showcase the competitiveness of a versatile general-purpose system, we also deployed and profiled a multi-core implementation on GAP9 at different operating points, achieving 11.3ms with the lowest-latency configuration and 490uJ with the most energy-efficient configuration. With this paper, we demonstrate the suitability and flexibility of TinyissimoYOLO on state-of-the-art detection datasets for real-time ultra-low-power edge inference.

Long-Term Indoor Localization with Metric-Semantic Mapping using a Floor Plan Prior

Mar 20, 2023

Abstract: Object-based maps are relevant for scene understanding since they integrate geometric and semantic information of the environment, allowing autonomous robots to robustly localize and interact with objects. In this paper, we address the task of constructing a metric-semantic map for the purpose of long-term object-based localization. We exploit 3D object detections from monocular RGB frames both for the object-based map construction and for globally localizing in the constructed map. To tailor the approach to a target environment, we propose an efficient way of generating 3D annotations to finetune the 3D object detection model. We evaluate our map construction in an office building, and test our long-term localization approach on challenging sequences recorded in the same environment over nine months. The experiments suggest that our approach is suitable for constructing metric-semantic maps, and that our localization approach is robust to long-term changes. Both the mapping algorithm and the localization pipeline can run online on an onboard computer. We will release an open-source C++/ROS implementation of our approach.

Fully On-board Low-Power Localization with Multizone Time-of-Flight Sensors on Nano-UAVs

Nov 25, 2022

Abstract: Nano-size unmanned aerial vehicles (UAVs) hold enormous potential to perform autonomous operations in complex environments, such as inspection, monitoring or data collection. Moreover, their small size allows safe operation close to humans and agile flight. An important part of autonomous flight is localization, which is a computationally intensive task, especially on a nano-UAV that usually has strong constraints in sensing, processing and memory. This work presents a real-time localization approach with low element-count multizone range sensors for resource-constrained nano-UAVs. The proposed approach is based on a novel miniature 64-zone time-of-flight sensor from ST Microelectronics and a RISC-V-based parallel ultra-low-power processor, to enable accurate and low-latency Monte Carlo Localization on-board. Experimental evaluation using a nano-UAV open platform demonstrated that the proposed solution is capable of localizing on a 31.2m$^2$ map with 0.15m accuracy and a success rate above 95%. The achieved accuracy is sufficient for localization in common indoor environments. We analyze tradeoffs in using full and half-precision floating point numbers as well as a quantized map and evaluate the accuracy and memory footprint across the design space. Experimental evaluation shows that parallelizing the execution for 8 RISC-V cores brings a 7x speedup and allows us to execute the algorithm on-board in real-time with a latency of 0.2-30ms (depending on the number of particles), while only increasing the overall drone power consumption by 3-7%. Finally, we provide an open-source implementation of our approach.

IR-MCL: Implicit Representation-Based Online Global Localization

Oct 06, 2022

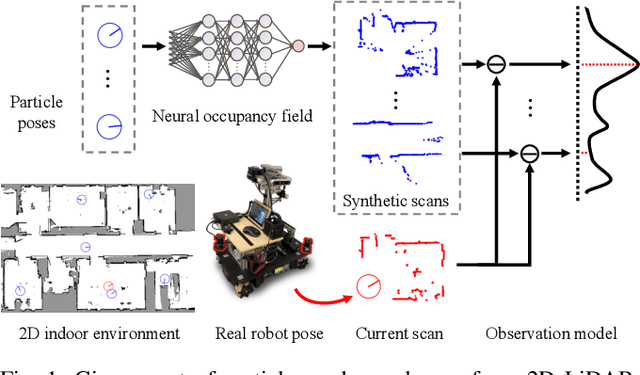

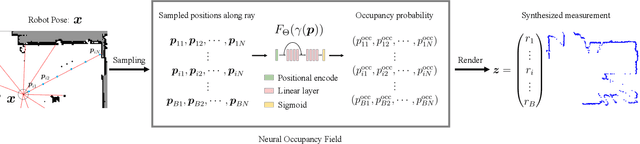

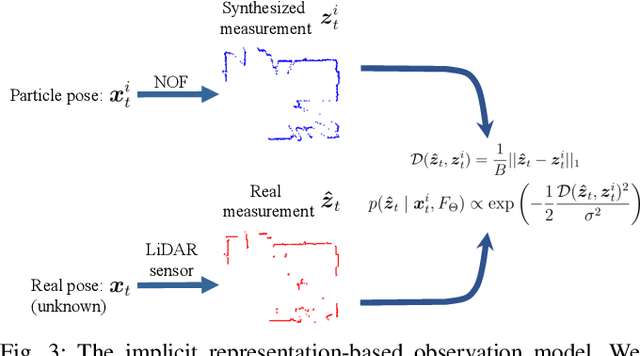

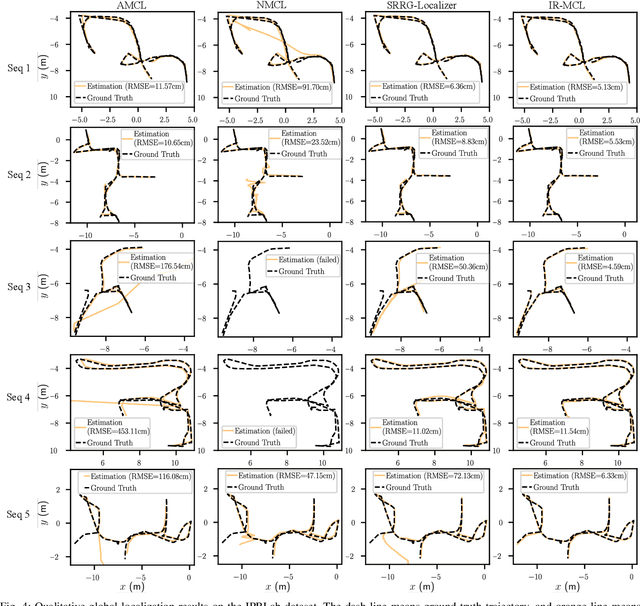

Abstract: Determining the state of a mobile robot is an essential building block of robot navigation systems. In this paper, we address the problem of estimating the robot's pose in an indoor environment using 2D LiDAR data and investigate how modern environment models can improve gold-standard Monte-Carlo localization (MCL) systems. We propose a neural occupancy field (NOF) to implicitly represent the scene using a neural network. With the pretrained network, we can synthesize 2D LiDAR scans for an arbitrary robot pose through volume rendering. Based on the implicit representation, we can obtain the similarity between a synthesized and actual scan as an observation model and integrate it into an MCL system to perform accurate localization. We evaluate our approach on five sequences of a self-recorded dataset and three publicly available datasets. We show that we can accurately and efficiently localize a robot using our approach, surpassing the localization performance of state-of-the-art methods. The experiments suggest that the presented implicit representation is able to predict more accurate 2D LiDAR scans, leading to an improved observation model for our particle filter-based localization. The code of our approach is released at: https://github.com/PRBonn/ir-mcl.
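
The scan-similarity observation model described in this abstract can be illustrated with a minimal sketch. This is not the authors' implementation: `toy_render_scan` is a hypothetical stand-in for rendering a scan from the neural occupancy field (here, a 1D corridor with two walls), and the Gaussian-style likelihood, the `sigma` value, and the low-variance resampler are assumptions chosen for illustration only.

```python
import math
import random

def toy_render_scan(x, corridor_length=10.0):
    """Hypothetical renderer: ranges to the back and front wall of a 1D corridor."""
    return [x, corridor_length - x]

def scan_likelihood(expected, observed, sigma=0.5):
    """Assumed similarity measure: Gaussian of the mean absolute range error."""
    err = sum(abs(e - o) for e, o in zip(expected, observed)) / len(observed)
    return math.exp(-0.5 * (err / sigma) ** 2)

def update_weights(particles, weights, observed, render_scan, sigma=0.5):
    """Reweight each particle by how well its synthesized scan matches the real one."""
    new = [w * scan_likelihood(render_scan(p), observed, sigma)
           for p, w in zip(particles, weights)]
    total = sum(new)
    if total == 0.0:
        # All likelihoods underflowed: fall back to uniform weights.
        return [1.0 / len(new)] * len(new)
    return [w / total for w in new]

def low_variance_resample(particles, weights, rng=random):
    """Systematic (low-variance) resampling over normalized weights."""
    n = len(particles)
    step = 1.0 / n
    u = rng.uniform(0.0, step)
    out, cum, i = [], weights[0], 0
    for k in range(n):
        target = u + k * step
        # Advance to the particle whose cumulative weight covers the target.
        while target > cum and i < n - 1:
            i += 1
            cum += weights[i]
        out.append(particles[i])
    return out

# Usage: three pose hypotheses along the corridor, robot actually at x = 4.0.
particles = [1.0, 4.0, 7.0]
weights = [1.0 / 3] * 3
observed = toy_render_scan(4.0)
weights = update_weights(particles, weights, observed, toy_render_scan)
resampled = low_variance_resample(particles, weights, random.Random(0))
```

In this toy setup the particle at the true pose receives almost all of the weight after one update, so resampling concentrates the particle set there. A real system would add a motion model and render scans from the learned map instead of the toy corridor.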

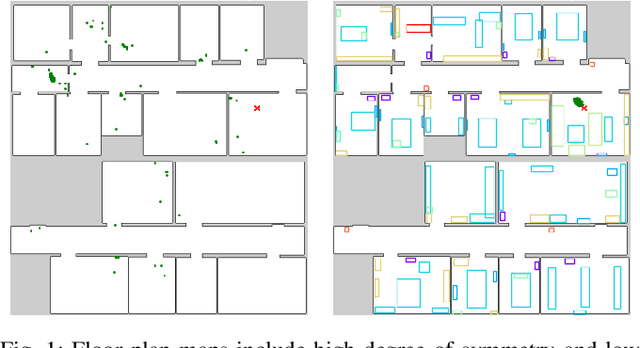

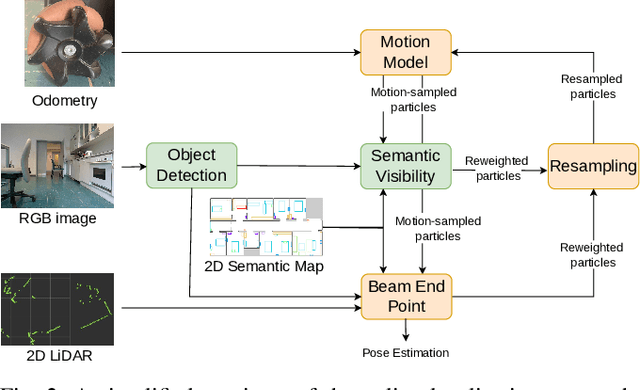

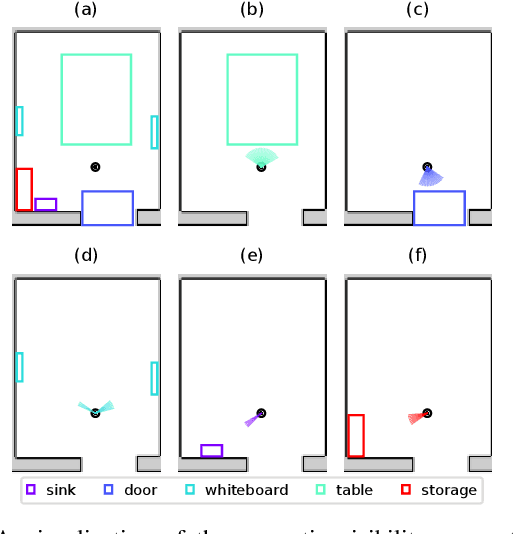

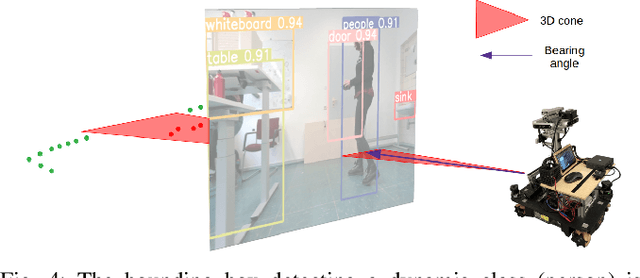

Long-Term Localization using Semantic Cues in Floor Plan Maps

Oct 04, 2022

Abstract: Lifelong localization in a given map is an essential capability for autonomous service robots. In this paper, we consider the task of long-term localization in a changing indoor environment given sparse CAD floor plans. The commonly used pre-built maps from the robot sensors may increase the cost and time of deployment. Furthermore, their detailed nature requires that they are updated when significant changes occur. We address the difficulty of localization when the correspondence between the map and the observations is low due to the sparsity of the CAD map and the changing environment. To overcome both challenges, we propose to exploit semantic cues that are commonly present in human-oriented spaces. These semantic cues can be detected using RGB cameras by utilizing object detection, and are matched against an easy-to-update, abstract semantic map. The semantic information is integrated into a Monte Carlo localization framework using a particle filter that operates on 2D LiDAR scans and camera data. We provide a long-term localization solution and a semantic map format for environments that undergo changes to their interior structure and for which detailed geometric maps are not available. We evaluate our localization framework on multiple challenging indoor scenarios in an office environment, taken weeks apart. The experiments suggest that our approach is robust to structural changes and can run on an onboard computer. We released an open-source implementation of our approach, written in C++ together with a ROS wrapper.

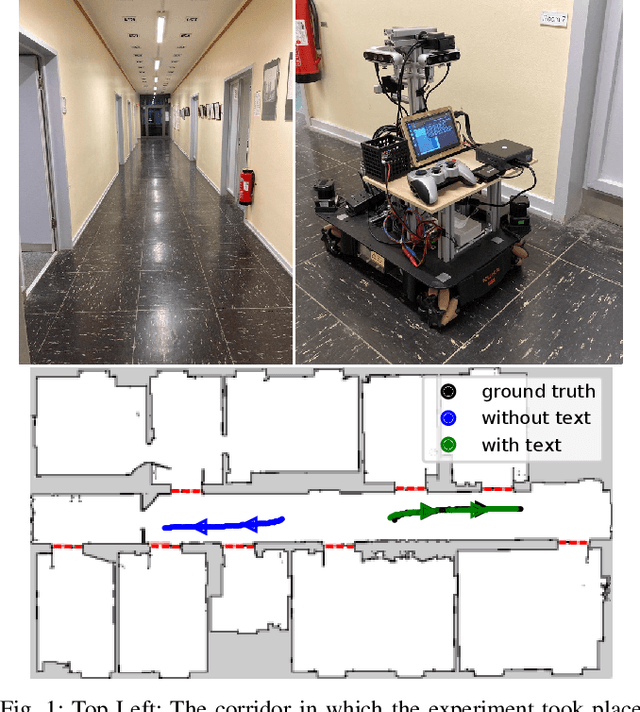

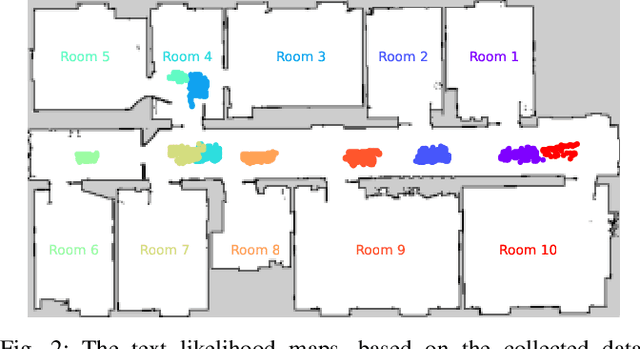

Robust Onboard Localization in Changing Environments Exploiting Text Spotting

Mar 23, 2022

Abstract: Robust localization in a given map is a crucial component of most autonomous robots. In this paper, we address the problem of localizing in an indoor environment that changes and where prominent structures have no correspondence in the map built at a different point in time. To overcome the discrepancy between the map and the observed environment caused by such changes, we exploit human-readable localization cues to assist localization. These cues are readily available in most facilities and can be detected using RGB camera images by utilizing text spotting. We integrate these cues into a Monte Carlo localization framework using a particle filter that operates on 2D LiDAR scans and camera data. In this way, we provide a robust localization solution for environments with structural changes and dynamics caused by walking humans. We evaluate our localization framework on multiple challenging indoor scenarios in an office environment. The experiments suggest that our approach is robust to structural changes and can run on an onboard computer. We release an open-source implementation of our approach (upon paper acceptance), which uses off-the-shelf text spotting, written in C++ with a ROS wrapper.
