Hanna Müller

BatDeck -- Ultra Low-power Ultrasonic Ego-velocity Estimation and Obstacle Avoidance on Nano-drones

Dec 13, 2024

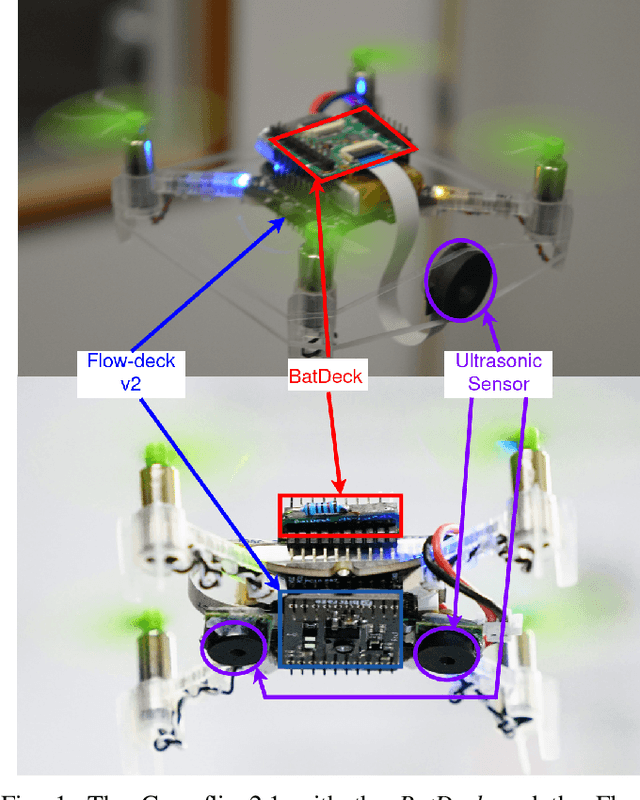

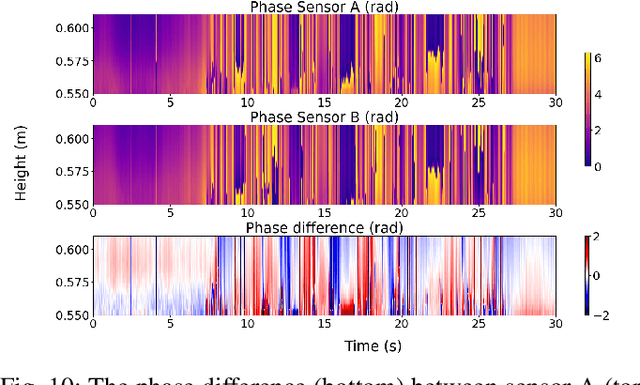

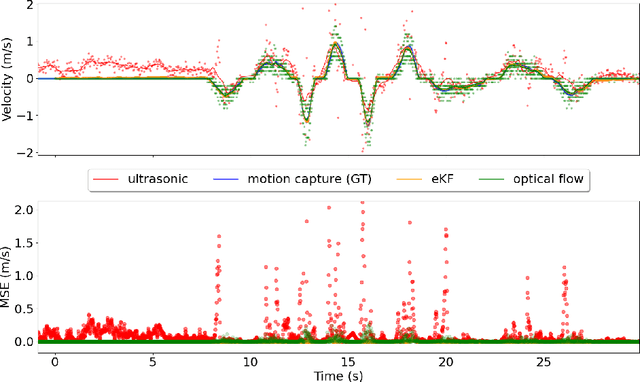

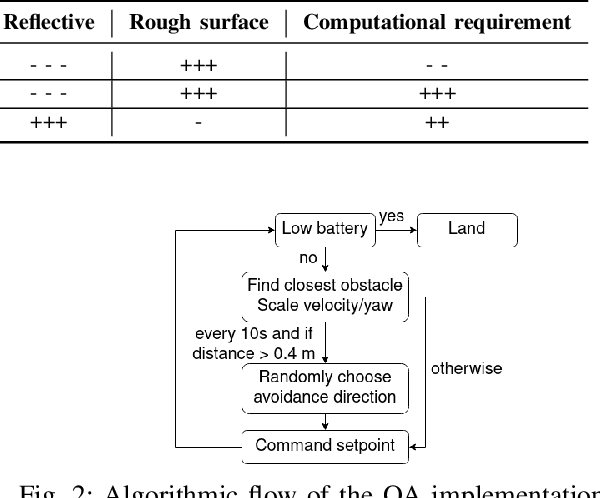

Abstract: Nano-drones, with their small, lightweight design, are ideal for confined-space rescue missions and inherently safe for human interaction. However, their limited payload restricts the critical sensing needed for ego-velocity estimation and obstacle detection to single-beam laser-based time-of-flight (ToF) and low-resolution optical sensors. Although those sensors have demonstrated good performance, they fail in some complex real-world scenarios, especially when facing transparent or reflective surfaces (ToF sensors) or when visual features are lacking (optical-flow sensors). Taking inspiration from bats, this paper proposes a novel two-way ranging-based method for ego-velocity estimation and obstacle avoidance based on downward- and forward-facing ultra-low-power ultrasonic sensors, which improve performance when the drone faces reflective materials or navigates in complete darkness. Our results demonstrate that our new sensing system achieves a mean square error of 0.019 m/s on ego-velocity estimation and allows exploration for a flight time of 8 minutes while covering 136 m on average in a challenging environment with transparent and reflective obstacles. We also compare ultrasonic and laser-based ToF sensing techniques for obstacle avoidance, as well as optical-flow and ultrasonic-based techniques for ego-velocity estimation, showing how these systems and methods can complement each other to enhance the robustness of nano-drone operations.
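As a rough illustration of the two-way ranging idea mentioned in the abstract, the sketch below converts a round-trip ultrasonic echo time into a range and differences two successive ranges into a closing speed. It is not the paper's estimator: the function names, the speed of sound (343 m/s, assumed for air at about 20 °C), and the sample timings are all hypothetical.

```python
# Minimal sketch, NOT the BatDeck algorithm: generic echo ranging plus a
# finite-difference speed estimate. All names and values are illustrative.

SPEED_OF_SOUND_M_S = 343.0  # assumption: dry air at roughly 20 degrees C


def echo_range_m(round_trip_s, c=SPEED_OF_SOUND_M_S):
    """Distance to the reflector; the pulse travels out and back, hence / 2."""
    return c * round_trip_s / 2.0


def closing_speed_m_s(range_prev_m, range_now_m, dt_s):
    """Speed toward the reflector (positive when the range shrinks)."""
    return (range_prev_m - range_now_m) / dt_s


# Hypothetical echoes measured 0.1 s apart.
r0 = echo_range_m(0.0100)   # 10.0 ms round trip
r1 = echo_range_m(0.0099)   # 9.9 ms round trip
v = closing_speed_m_s(r0, r1, dt_s=0.1)

print(f"range 0: {r0:.4f} m, range 1: {r1:.4f} m, closing speed: {v:.4f} m/s")
```

A practical estimator would also have to filter measurement noise and handle missed echoes, which this sketch ignores.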

BatDeck: Advancing Nano-drone Navigation with Low-power Ultrasound-based Obstacle Avoidance

Mar 25, 2024

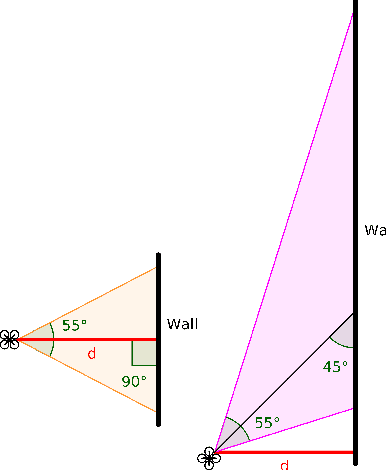

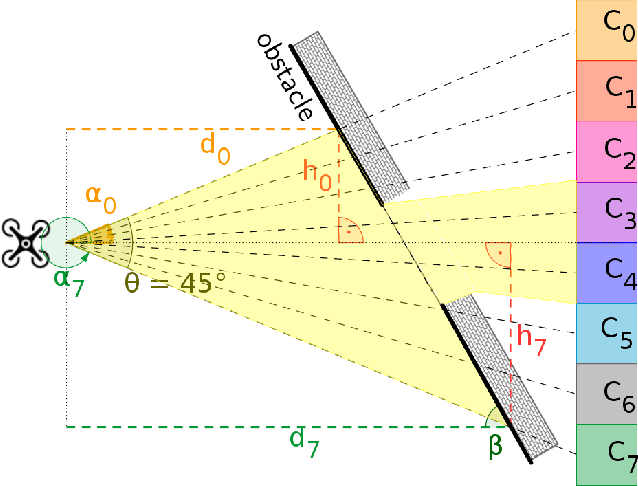

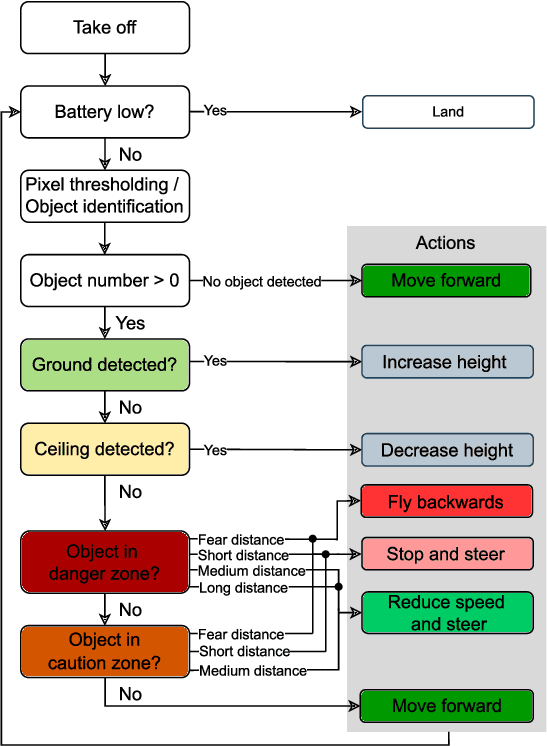

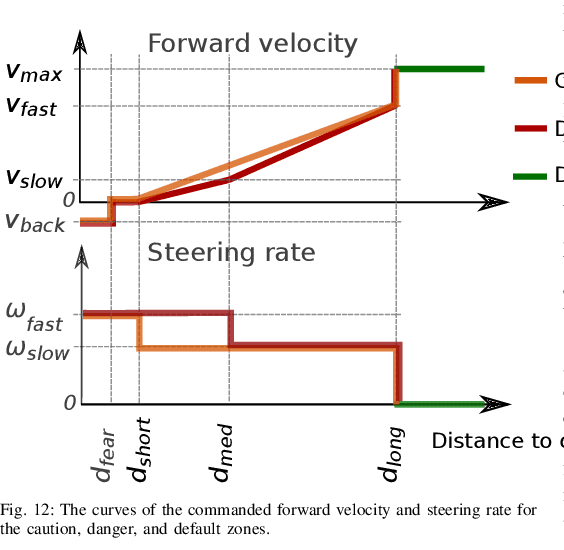

Abstract: Nano-drones, distinguished by their agility, minimal weight, and cost-effectiveness, are particularly well suited for exploration in confined, cluttered, and narrow spaces. Recognizing transparent, highly reflective, or absorbing materials, such as glass and metallic surfaces, is challenging, as classical sensors, such as cameras or laser rangers, often do not detect them. Inspired by bats, which can fly at high speeds in complete darkness with the help of ultrasound, this paper introduces *BatDeck*, a pioneering sensor deck employing a lightweight and low-power ultrasonic sensor for autonomous nano-drone navigation. The paper first provides insights into the sensor characteristics, highlighting the influence of motor noise on the ultrasound readings, and then presents the results of extensive experimental tests of obstacle avoidance (OA) in a diverse environment. Results show that *BatDeck* allows exploration for a flight time of 8 minutes while covering 136 m on average before crashing in a challenging environment with transparent and reflective obstacles, proving the effectiveness of ultrasonic sensors for OA on nano-drones.

Fully Onboard Low-Power Localization with Semantic Sensor Fusion on a Nano-UAV using Floor Plans

Oct 19, 2023

Abstract: Nano-sized unmanned aerial vehicles (UAVs) are well suited for indoor applications and for operation in close proximity to humans. To enable autonomy, the nano-UAV must be able to self-localize in its operating environment. This is a particularly challenging task due to the limited sensing and compute resources on board. This work presents an online and onboard approach for localization in floor plans annotated with semantic information. Unlike sensor-based maps, floor plans are readily available and do not increase the cost and time of deployment. To overcome the difficulty of localizing in sparse maps, the proposed approach fuses geometric information from miniaturized time-of-flight sensors with semantic cues. The semantic information is extracted from images by deploying a state-of-the-art object detection model on a high-performance multi-core microcontroller onboard the drone, consuming only 2.5 mJ per frame and executing in 38 ms. In our evaluation, we globally localize in a real-world office environment, achieving a 90% success rate. We also release an open-source implementation of our work.
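The two inference figures quoted in the abstract (2.5 mJ per frame, 38 ms per frame) can be combined into an average power and an upper bound on frame rate. The short calculation below assumes that the quoted energy is spent over exactly the quoted execution time and that frames run back to back; neither assumption is stated in the abstract, and the variable names are illustrative.

```python
# Back-of-the-envelope check under stated assumptions (see lead-in).
ENERGY_PER_FRAME_J = 2.5e-3   # 2.5 mJ per frame, from the abstract
LATENCY_S = 38e-3             # 38 ms per frame, from the abstract

# Average power while the detector is running: energy divided by time.
avg_power_w = ENERGY_PER_FRAME_J / LATENCY_S

# Highest achievable frame rate if inference runs back to back.
max_fps = 1.0 / LATENCY_S

print(f"average inference power: {avg_power_w * 1e3:.1f} mW")
print(f"maximum frame rate:      {max_fps:.1f} frame/s")
```

Under these assumptions the detector draws on the order of tens of milliwatts while active.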

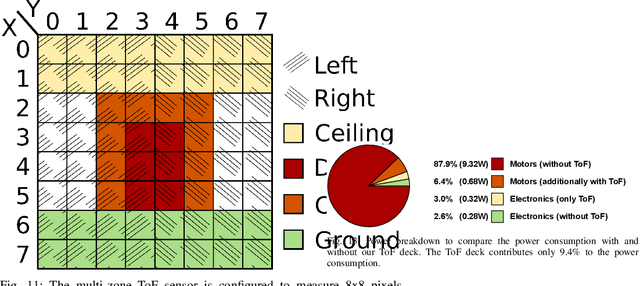

Fully On-board Low-Power Localization with Multizone Time-of-Flight Sensors on Nano-UAVs

Nov 25, 2022

Abstract: Nano-size unmanned aerial vehicles (UAVs) hold enormous potential to perform autonomous operations in complex environments, such as inspection, monitoring, or data collection. Moreover, their small size allows safe operation close to humans and agile flight. An important part of autonomous flight is localization, which is a computationally intensive task, especially on a nano-UAV, which usually has strong constraints in sensing, processing, and memory. This work presents a real-time localization approach with low element-count multizone range sensors for resource-constrained nano-UAVs. The proposed approach is based on a novel miniature 64-zone time-of-flight sensor from STMicroelectronics and a RISC-V-based parallel ultra-low-power processor, enabling accurate and low-latency Monte Carlo Localization on board. Experimental evaluation using an open nano-UAV platform demonstrated that the proposed solution is capable of localizing on a 31.2 m$^2$ map with 0.15 m accuracy and a success rate above 95%. The achieved accuracy is sufficient for localization in common indoor environments. We analyze tradeoffs in using full- and half-precision floating-point numbers as well as a quantized map, and evaluate the accuracy and memory footprint across the design space. Experimental evaluation shows that parallelizing the execution across 8 RISC-V cores brings a 7x speedup and allows us to execute the algorithm on board in real time with a latency of 0.2-30 ms (depending on the number of particles), while only increasing the overall drone power consumption by 3-7%. Finally, we provide an open-source implementation of our approach.
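To put the reported 7x speedup on 8 cores in perspective, the sketch below computes the parallel efficiency and, assuming Amdahl's law is an adequate model (an assumption made here, not a claim from the paper), the fraction of the workload that would have to be parallelizable. The function names are hypothetical.

```python
# Illustrative arithmetic only; Amdahl's law is an assumed model here.

def parallel_efficiency(speedup, cores):
    """Speedup achieved per core, relative to ideal linear scaling."""
    return speedup / cores


def amdahl_parallel_fraction(speedup, cores):
    """Solve S = 1 / ((1 - p) + p / n) for the parallel fraction p."""
    return (1.0 - 1.0 / speedup) / (1.0 - 1.0 / cores)


eff = parallel_efficiency(7.0, 8)
p = amdahl_parallel_fraction(7.0, 8)

print(f"parallel efficiency:       {eff:.1%}")
print(f"implied parallel fraction: {p:.4f}")
```

Under this model, a 7x speedup on 8 cores corresponds to roughly 98% of the runtime being parallelizable.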

Robust and Efficient Depth-based Obstacle Avoidance for Autonomous Miniaturized UAVs

Aug 26, 2022

Abstract: Nano-size drones hold enormous potential to explore unknown and complex environments. Their small size makes them agile and safe for operation close to humans and allows them to navigate through narrow spaces. However, their tiny size and payload restrict the possibilities for on-board computation and sensing, making fully autonomous flight extremely challenging. The first step towards full autonomy is reliable obstacle avoidance, which has proven to be technically challenging by itself in a generic indoor environment. Current approaches utilize vision-based or 1-dimensional sensors to support nano-drone perception algorithms. This work presents a lightweight obstacle avoidance system based on a novel millimeter-form-factor, 64-pixel multi-zone Time-of-Flight (ToF) sensor and a generalized model-free control policy. Reported in-field tests are based on the Crazyflie 2.1, extended by a custom multi-zone ToF deck, featuring a total flight mass of 35 g. The algorithm uses only 0.3% of the on-board processing power (210 µs execution time) at a frame rate of 15 fps, providing an excellent foundation for many future applications. Less than 10% of the total drone power is needed to operate the proposed perception system, including both lifting and operating the sensor. The presented autonomous nano-size drone reaches 100% reliability at 0.5 m/s in a generic and previously unexplored indoor environment. The proposed system is released open-source with an extensive dataset including ToF and gray-scale camera data, coupled with UAV position ground truth from motion capture.
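The 0.3% processing figure can be cross-checked from the other two numbers in the abstract, assuming the algorithm runs once per sensor frame on a single core (an assumption made for this check; the variable names are illustrative).

```python
# Sanity check: fraction of processor time spent on obstacle avoidance,
# assuming one 210 microsecond run per frame at 15 frames per second.
EXEC_TIME_S = 210e-6     # execution time per frame, from the abstract
FRAME_RATE_HZ = 15.0     # sensor frame rate, from the abstract

utilization = EXEC_TIME_S * FRAME_RATE_HZ   # busy seconds per second

print(f"processor utilization: {utilization:.3%}")
```

The result, about 0.3%, agrees with the utilization stated in the abstract.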

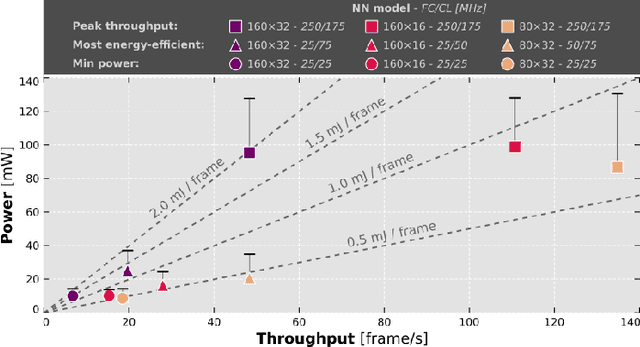

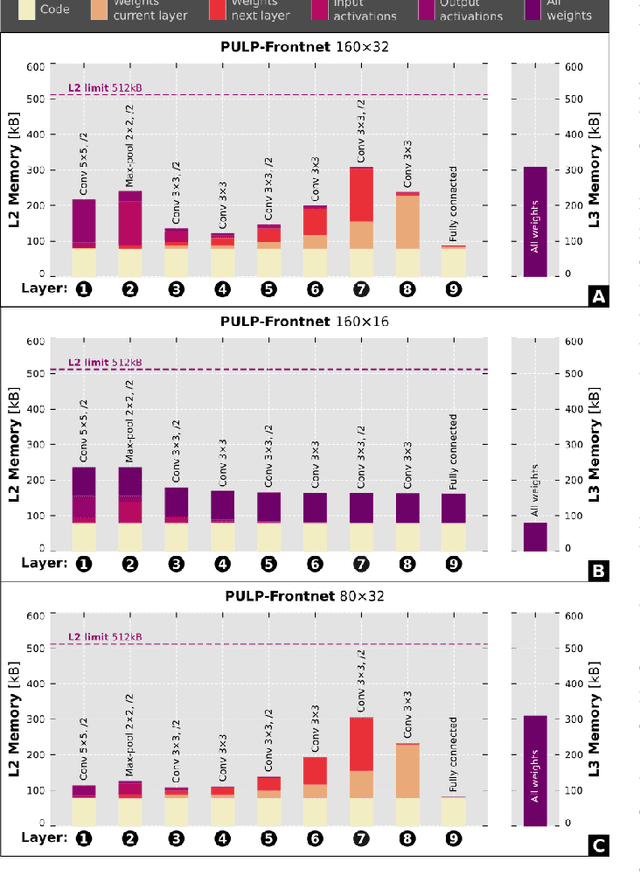

Fully Onboard AI-powered Human-Drone Pose Estimation on Ultra-low Power Autonomous Flying Nano-UAVs

Mar 19, 2021

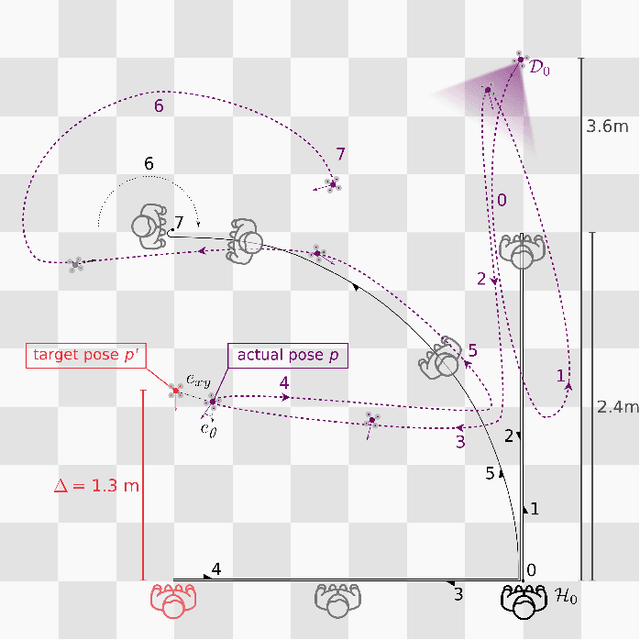

Abstract: Artificial intelligence-powered pocket-sized air robots have the potential to revolutionize the Internet-of-Things ecosystem, acting as autonomous, unobtrusive, and ubiquitous smart sensors. With a form factor of a few cm$^{2}$, nano-sized unmanned aerial vehicles (UAVs) are a natural fit for indoor human-drone interaction missions, such as the pose estimation task we address in this work. However, this scenario is challenged by the nano-UAVs' limited payload and computational power, which relegate the onboard brain to the sub-100 mW microcontroller-unit class. Our work stands at the intersection of the novel parallel ultra-low-power (PULP) architectural paradigm and our general development methodology for deep neural network (DNN) visual pipelines, i.e., covering everything from perception to control. Addressing the DNN model design, from training and dataset augmentation to 8-bit quantization and deployment, we demonstrate how a PULP-based processor, aboard a nano-UAV, is sufficient for the real-time execution (up to 135 frame/s) of our novel DNN, called PULP-Frontnet. We showcase how, by scaling our model's memory and computational requirements, we can significantly improve the onboard inference (top energy efficiency of 0.43 mJ/frame) with no compromise in the quality of results vs. a resource-unconstrained baseline (i.e., a full-precision DNN). Field experiments demonstrate top-notch closed-loop autonomous navigation capability with a heavily resource-constrained 27-gram Crazyflie 2.1 nano-quadrotor. Compared against the control performance achieved using an ideal sensing setup, onboard relative pose inference yields excellent drone behavior in terms of median absolute errors, both positional (onboard: 41 cm, ideal: 26 cm) and angular (onboard: 3.7$^{\circ}$, ideal: 4.1$^{\circ}$).
