Jia Xue

University of Toronto

hBert + BiasCorp -- Fighting Racism on the Web

Apr 06, 2021

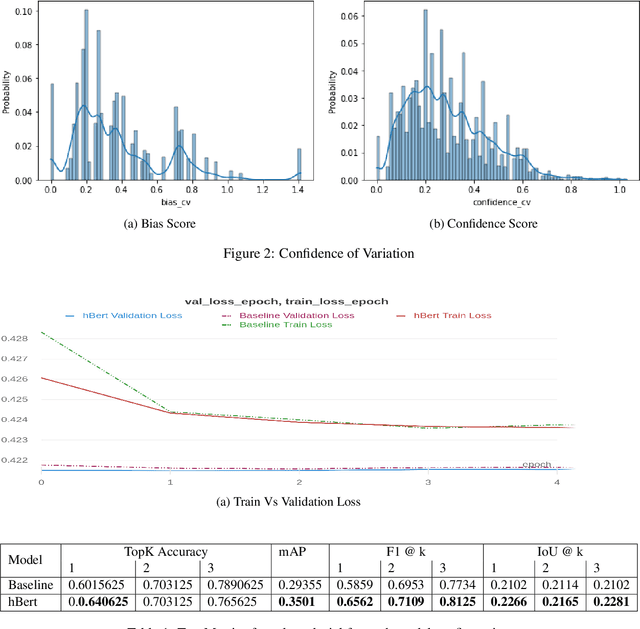

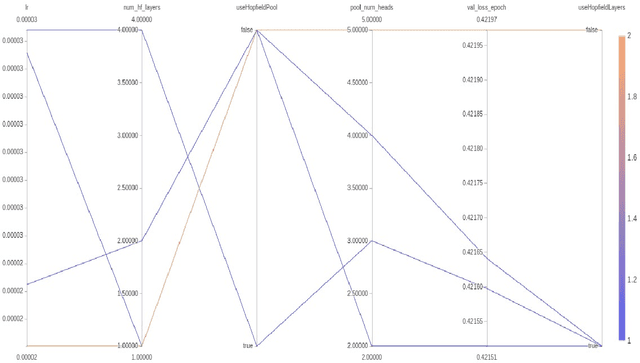

Abstract:Subtle and overt racism is still present both in physical and online communities today and has impacted many lives in different segments of the society. In this short piece of work, we present how we're tackling this societal issue with Natural Language Processing. We are releasing BiasCorp, a dataset containing 139,090 comments and news segment from three specific sources - Fox News, BreitbartNews and YouTube. The first batch (45,000 manually annotated) is ready for publication. We are currently in the final phase of manually labeling the remaining dataset using Amazon Mechanical Turk. BERT has been used widely in several downstream tasks. In this work, we present hBERT, where we modify certain layers of the pretrained BERT model with the new Hopfield Layer. hBert generalizes well across different distributions with the added advantage of a reduced model complexity. We are also releasing a JavaScript library and a Chrome Extension Application, to help developers make use of our trained model in web applications (say chat application) and for users to identify and report racially biased contents on the web respectively.

Angular Luminance for Material Segmentation

Sep 22, 2020

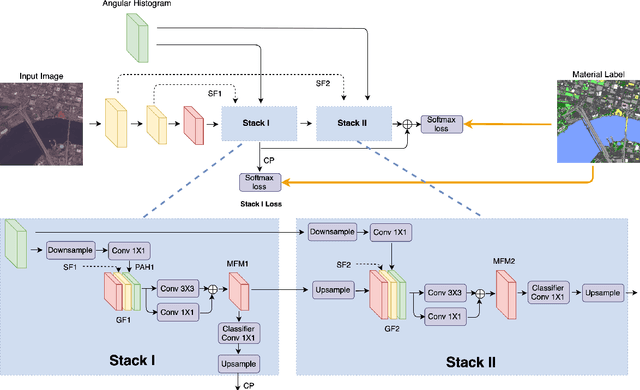

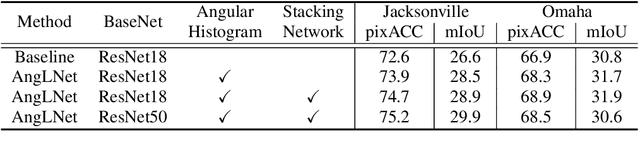

Abstract:Moving cameras provide multiple intensity measurements per pixel, yet often semantic segmentation, material recognition, and object recognition do not utilize this information. With basic alignment over several frames of a moving camera sequence, a distribution of intensities over multiple angles is obtained. It is well known from prior work that luminance histograms and the statistics of natural images provide a strong material recognition cue. We utilize per-pixel {\it angular luminance distributions} as a key feature in discriminating the material of the surface. The angle-space sampling in a multiview satellite image sequence is an unstructured sampling of the underlying reflectance function of the material. For real-world materials there is significant intra-class variation that can be managed by building a angular luminance network (AngLNet). This network combines angular reflectance cues from multiple images with spatial cues as input to fully convolutional networks for material segmentation. We demonstrate the increased performance of AngLNet over prior state-of-the-art in material segmentation from satellite imagery.

* IEEE International Geoscience and Remote Sensing Symposium (IGARSS) 2020

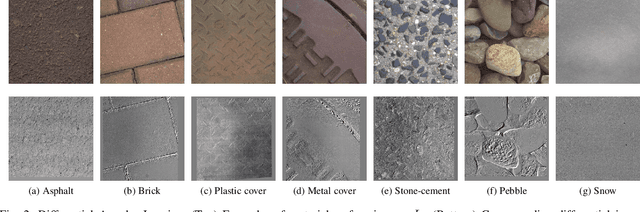

Differential Viewpoints for Ground Terrain Material Recognition

Sep 22, 2020

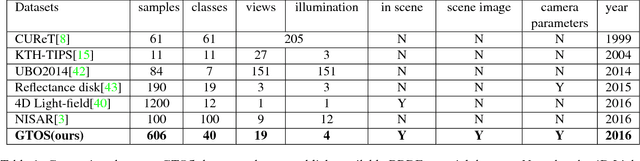

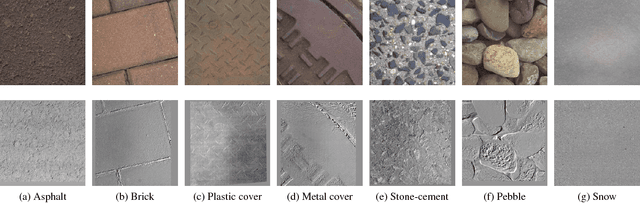

Abstract:Computational surface modeling that underlies material recognition has transitioned from reflectance modeling using in-lab controlled radiometric measurements to image-based representations based on internet-mined single-view images captured in the scene. We take a middle-ground approach for material recognition that takes advantage of both rich radiometric cues and flexible image capture. A key concept is differential angular imaging, where small angular variations in image capture enables angular-gradient features for an enhanced appearance representation that improves recognition. We build a large-scale material database, Ground Terrain in Outdoor Scenes (GTOS) database, to support ground terrain recognition for applications such as autonomous driving and robot navigation. The database consists of over 30,000 images covering 40 classes of outdoor ground terrain under varying weather and lighting conditions. We develop a novel approach for material recognition called texture-encoded angular network (TEAN) that combines deep encoding pooling of RGB information and differential angular images for angular-gradient features to fully leverage this large dataset. With this novel network architecture, we extract characteristics of materials encoded in the angular and spatial gradients of their appearance. Our results show that TEAN achieves recognition performance that surpasses single view performance and standard (non-differential/large-angle sampling) multiview performance.

* IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI). arXiv admin note: substantial text overlap with arXiv:1612.02372

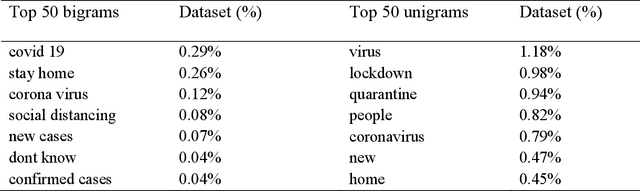

Twitter discussions and emotions about COVID-19 pandemic: a machine learning approach

Jun 19, 2020

Abstract:The objective of the study is to examine coronavirus disease (COVID-19) related discussions, concerns, and sentiments that emerged from tweets posted by Twitter users. We analyze 4 million Twitter messages related to the COVID-19 pandemic using a list of 25 hashtags such as "coronavirus," "COVID-19," "quarantine" from March 1 to April 21 in 2020. We use a machine learning approach, Latent Dirichlet Allocation (LDA), to identify popular unigram, bigrams, salient topics and themes, and sentiments in the collected Tweets. Popular unigrams include "virus," "lockdown," and "quarantine." Popular bigrams include "COVID-19," "stay home," "corona virus," "social distancing," and "new cases." We identify 13 discussion topics and categorize them into five different themes, such as "public health measures to slow the spread of COVID-19," "social stigma associated with COVID-19," "coronavirus news cases and deaths," "COVID-19 in the United States," and "coronavirus cases in the rest of the world". Across all identified topics, the dominant sentiments for the spread of coronavirus are anticipation that measures that can be taken, followed by a mixed feeling of trust, anger, and fear for different topics. The public reveals a significant feeling of fear when they discuss the coronavirus new cases and deaths than other topics. The study shows that Twitter data and machine learning approaches can be leveraged for infodemiology study by studying the evolving public discussions and sentiments during the COVID-19. Real-time monitoring and assessment of the Twitter discussion and concerns can be promising for public health emergency responses and planning. Already emerged pandemic fear, stigma, and mental health concerns may continue to influence public trust when there occurs a second wave of COVID-19 or a new surge of the imminent pandemic.

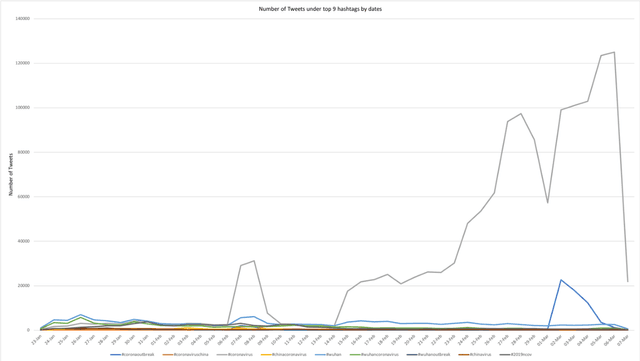

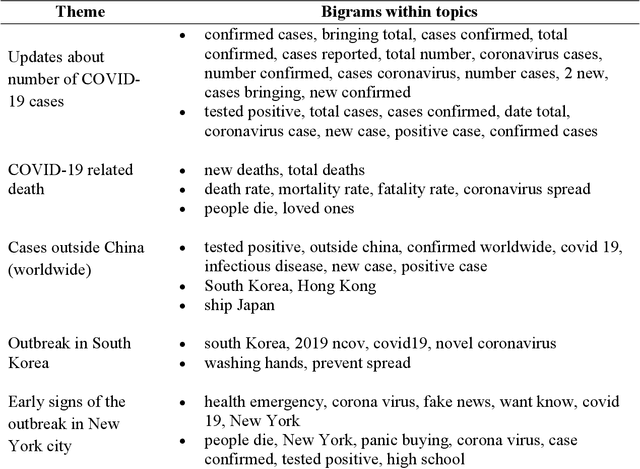

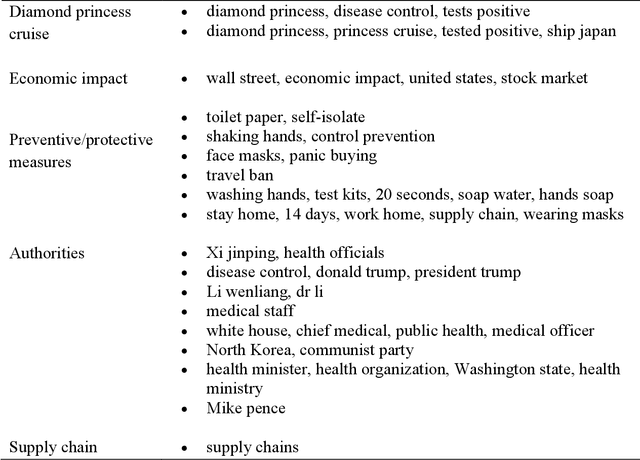

Machine learning on Big Data from Twitter to understand public reactions to COVID-19

May 22, 2020

Abstract:The study aims to understand Twitter users' discussions and reactions about the COVID-19. We use machine learning techniques to analyze about 1.8 million Tweets messages related to coronavirus collected from January 20th to March 7th, 2020. A total of salient 11 topics are identified and then categorized into 10 themes, such as "cases outside China (worldwide)," "COVID-19 outbreak in South Korea," "early signs of the outbreak in New York," "Diamond Princess cruise," "economic impact," "Preventive/Protective measures," "authorities," and "supply chain". Results do not reveal treatment and/or symptoms related messages as a prevalent topic on Twitter. We also run sentiment analysis and the results show that trust for the authorities remained a prevalent emotion, but mixed feelings of trust for authorities, fear for the outbreak, and anticipation for the potential preventive measures will be taken are identified. Implications and limitations of the study are also discussed.

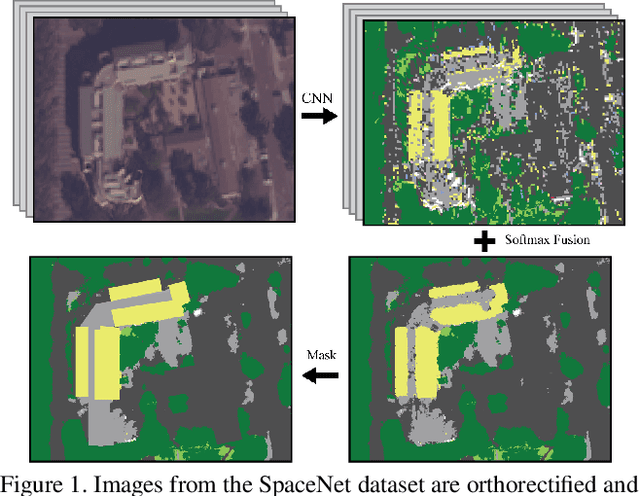

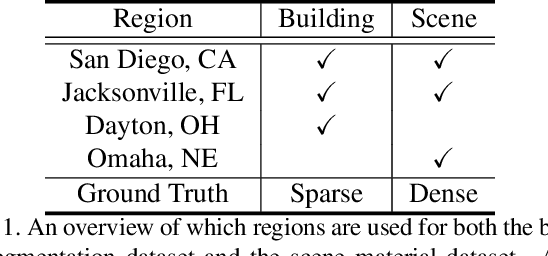

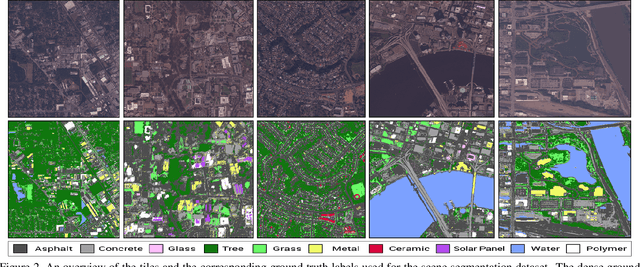

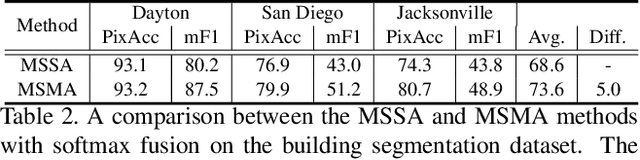

Material Segmentation of Multi-View Satellite Imagery

Apr 17, 2019

Abstract:Material recognition methods use image context and local cues for pixel-wise classification. In many cases only a single image is available to make a material prediction. Image sequences, routinely acquired in applications such as mutliview stereo, can provide a sampling of the underlying reflectance functions that reveal pixel-level material attributes. We investigate multi-view material segmentation using two datasets generated for building material segmentation and scene material segmentation from the SpaceNet Challenge satellite image dataset. In this paper, we explore the impact of multi-angle reflectance information by introducing the \textit{reflectance residual encoding}, which captures both the multi-angle and multispectral information present in our datasets. The residuals are computed by differencing the sparse-sampled reflectance function with a dictionary of pre-defined dense-sampled reflectance functions. Our proposed reflectance residual features improves material segmentation performance when integrated into pixel-wise and semantic segmentation architectures. At test time, predictions from individual segmentations are combined through softmax fusion and refined by building segment voting. We demonstrate robust and accurate pixelwise segmentation results using the proposed material segmentation pipeline.

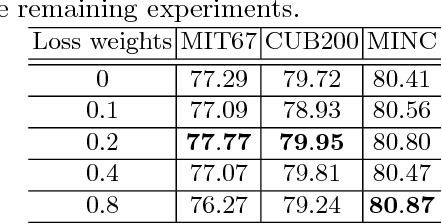

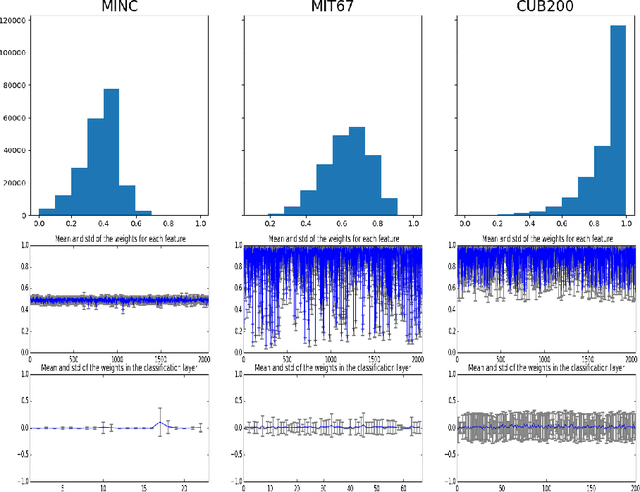

Gated Transfer Network for Transfer Learning

Oct 30, 2018

Abstract:Deep neural networks have led to a series of breakthroughs in computer vision given sufficient annotated training datasets. For novel tasks with limited labeled data, the prevalent approach is to transfer the knowledge learned in the pre-trained models to the new tasks by fine-tuning. Classic model fine-tuning utilizes the fact that well trained neural networks appear to learn cross domain features. These features are treated equally during transfer learning. In this paper, we explore the impact of feature selection in model fine-tuning by introducing a transfer module, which assigns weights to features extracted from pre-trained models. The proposed transfer module proves the importance of feature selection for transferring models from source to target domains. It is shown to significantly improve upon fine-tuning results with only marginal extra computational cost. We also incorporate an auxiliary classifier as an extra regularizer to avoid over-fitting. Finally, we build a Gated Transfer Network (GTN) based on our transfer module and achieve state-of-the-art results on six different tasks.

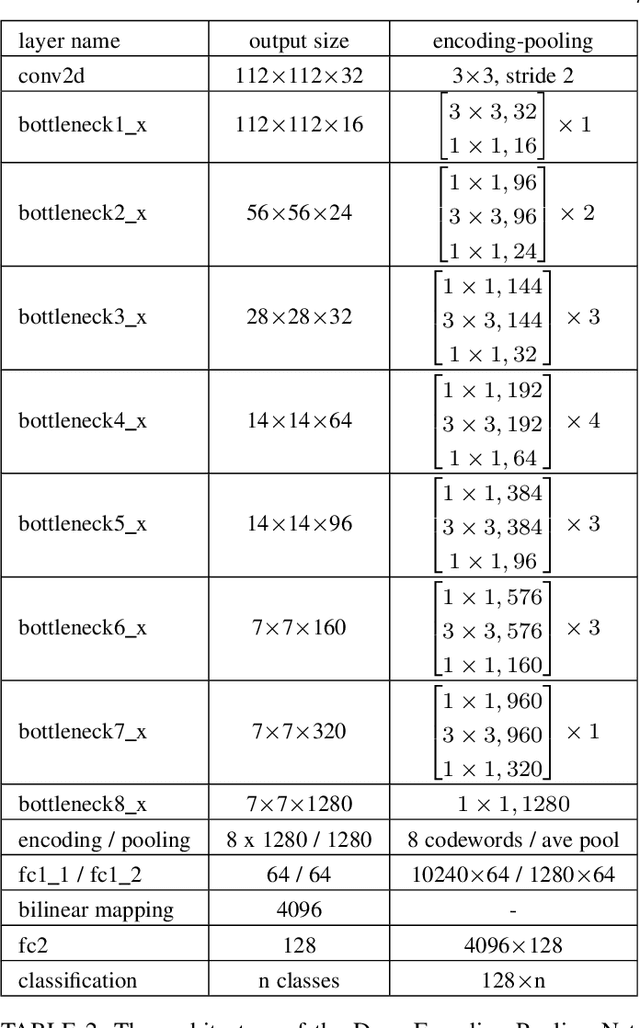

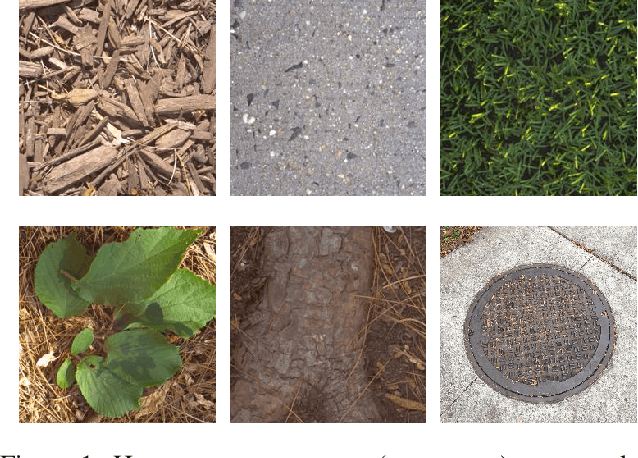

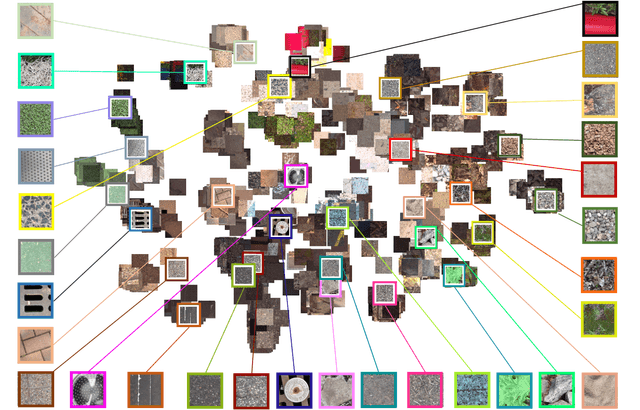

Deep Texture Manifold for Ground Terrain Recognition

Apr 03, 2018

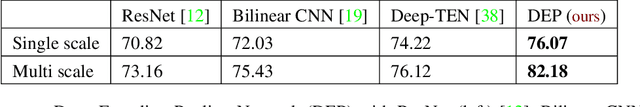

Abstract:We present a texture network called Deep Encoding Pooling Network (DEP) for the task of ground terrain recognition. Recognition of ground terrain is an important task in establishing robot or vehicular control parameters, as well as for localization within an outdoor environment. The architecture of DEP integrates orderless texture details and local spatial information and the performance of DEP surpasses state-of-the-art methods for this task. The GTOS database (comprised of over 30,000 images of 40 classes of ground terrain in outdoor scenes) enables supervised recognition. For evaluation under realistic conditions, we use test images that are not from the existing GTOS dataset, but are instead from hand-held mobile phone videos of similar terrain. This new evaluation dataset, GTOS-mobile, consists of 81 videos of 31 classes of ground terrain such as grass, gravel, asphalt and sand. The resultant network shows excellent performance not only for GTOS-mobile, but also for more general databases (MINC and DTD). Leveraging the discriminant features learned from this network, we build a new texture manifold called DEP-manifold. We learn a parametric distribution in feature space in a fully supervised manner, which gives the distance relationship among classes and provides a means to implicitly represent ambiguous class boundaries. The source code and database are publicly available.

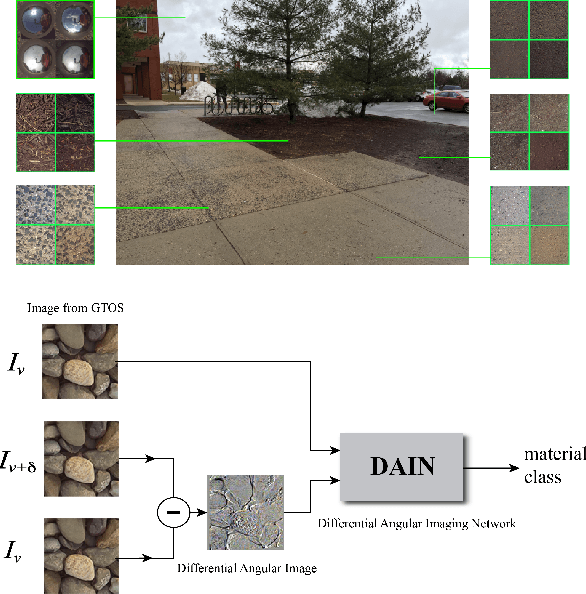

Differential Angular Imaging for Material Recognition

Jul 14, 2017

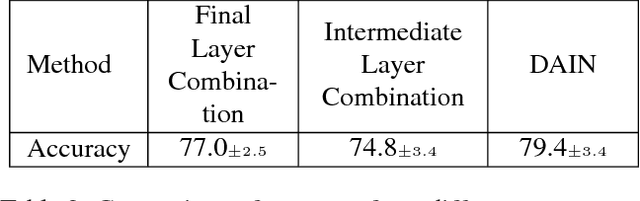

Abstract:Material recognition for real-world outdoor surfaces has become increasingly important for computer vision to support its operation "in the wild." Computational surface modeling that underlies material recognition has transitioned from reflectance modeling using in-lab controlled radiometric measurements to image-based representations based on internet-mined images of materials captured in the scene. We propose to take a middle-ground approach for material recognition that takes advantage of both rich radiometric cues and flexible image capture. We realize this by developing a framework for differential angular imaging, where small angular variations in image capture provide an enhanced appearance representation and significant recognition improvement. We build a large-scale material database, Ground Terrain in Outdoor Scenes (GTOS) database, geared towards real use for autonomous agents. The database consists of over 30,000 images covering 40 classes of outdoor ground terrain under varying weather and lighting conditions. We develop a novel approach for material recognition called a Differential Angular Imaging Network (DAIN) to fully leverage this large dataset. With this novel network architecture, we extract characteristics of materials encoded in the angular and spatial gradients of their appearance. Our results show that DAIN achieves recognition performance that surpasses single view or coarsely quantized multiview images. These results demonstrate the effectiveness of differential angular imaging as a means for flexible, in-place material recognition.

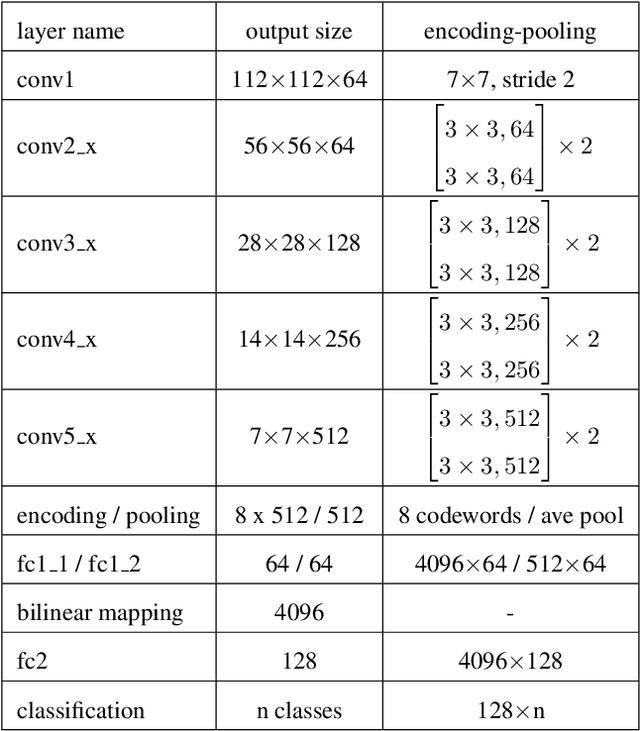

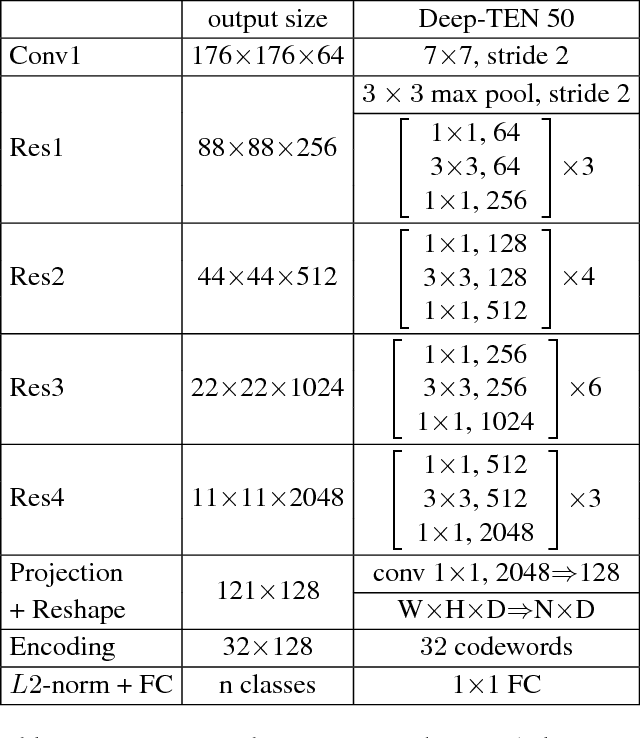

Deep TEN: Texture Encoding Network

Dec 08, 2016

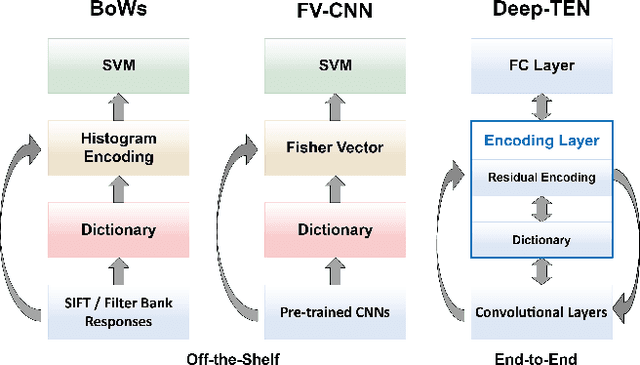

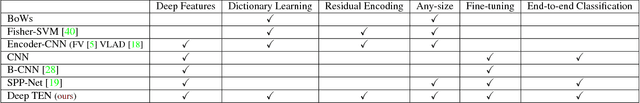

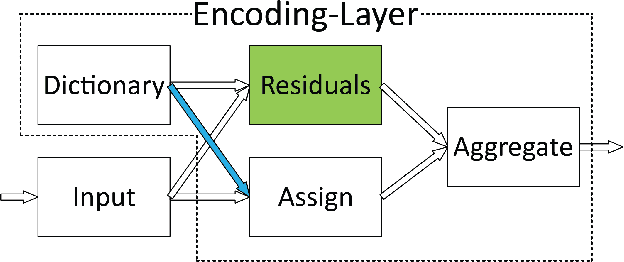

Abstract:We propose a Deep Texture Encoding Network (Deep-TEN) with a novel Encoding Layer integrated on top of convolutional layers, which ports the entire dictionary learning and encoding pipeline into a single model. Current methods build from distinct components, using standard encoders with separate off-the-shelf features such as SIFT descriptors or pre-trained CNN features for material recognition. Our new approach provides an end-to-end learning framework, where the inherent visual vocabularies are learned directly from the loss function. The features, dictionaries and the encoding representation for the classifier are all learned simultaneously. The representation is orderless and therefore is particularly useful for material and texture recognition. The Encoding Layer generalizes robust residual encoders such as VLAD and Fisher Vectors, and has the property of discarding domain specific information which makes the learned convolutional features easier to transfer. Additionally, joint training using multiple datasets of varied sizes and class labels is supported resulting in increased recognition performance. The experimental results show superior performance as compared to state-of-the-art methods using gold-standard databases such as MINC-2500, Flickr Material Database, KTH-TIPS-2b, and two recent databases 4D-Light-Field-Material and GTOS. The source code for the complete system are publicly available.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge