Hongyi Li

Loosely-Structured Software: Engineering Context, Structure, and Evolution Entropy in Runtime-Rewired Multi-Agent Systems

Mar 16, 2026Abstract:As LLM-based multi-agent systems (MAS) become more autonomous, their free-form interactions increasingly dominate system behavior. However, scaling the number of agents often amplifies context pressure, coordination errors, and system drift. It is well known that building robust MAS requires more than prompt tuning or increased model intelligence. It necessitates engineering discipline focused on architecture to manage complexity under uncertainty. We characterize agentic software by a core property: \emph{runtime generation and evolution under uncertainty}. Drawing upon and extending software engineering experience, especially object-oriented programming, this paper introduces \emph{Loosely-Structured Software (LSS)}, a new class of software systems that shifts the engineering focus from constructing deterministic logic to managing the runtime entropy generated by View-constructed programming, semantic-driven self-organization, and endogenous evolution. To make this entropy governable, we introduce design principles under a three-layer engineering framework: \emph{View/Context Engineering} to manage the execution environment and maintain task-relevant Views, \emph{Structure Engineering} to organize dynamic binding over artifacts and agents, and \emph{Evolution Engineering} to govern the lifecycle of self-rewriting artifacts. Building on this framework, we develop LSS design patterns as semantic control blocks that stabilize fluid, inference-mediated interactions while preserving agent adaptability. Together, these abstractions improve the \emph{designability}, \emph{scalability}, and \emph{evolvability} of agentic infrastructure. We provide basic experimental validation of key mechanisms, demonstrating the effectiveness of LSS.

Hinge Regression Tree: A Newton Method for Oblique Regression Tree Splitting

Feb 05, 2026Abstract:Oblique decision trees combine the transparency of trees with the power of multivariate decision boundaries, but learning high-quality oblique splits is NP-hard, and practical methods still rely on slow search or theory-free heuristics. We present the Hinge Regression Tree (HRT), which reframes each split as a non-linear least-squares problem over two linear predictors whose max/min envelope induces ReLU-like expressive power. The resulting alternating fitting procedure is exactly equivalent to a damped Newton (Gauss-Newton) method within fixed partitions. We analyze this node-level optimization and, for a backtracking line-search variant, prove that the local objective decreases monotonically and converges; in practice, both fixed and adaptive damping yield fast, stable convergence and can be combined with optional ridge regularization. We further prove that HRT's model class is a universal approximator with an explicit $O(δ^2)$ approximation rate, and show on synthetic and real-world benchmarks that it matches or outperforms single-tree baselines with more compact structures.

Rotation-Robust Regression with Convolutional Model Trees

Jan 08, 2026Abstract:We study rotation-robust learning for image inputs using Convolutional Model Trees (CMTs) [1], whose split and leaf coefficients can be structured on the image grid and transformed geometrically at deployment time. In a controlled MNIST setting with a rotation-invariant regression target, we introduce three geometry-aware inductive biases for split directions -- convolutional smoothing, a tilt dominance constraint, and importance-based pruning -- and quantify their impact on robustness under in-plane rotations. We further evaluate a deployment-time orientation search that selects a discrete rotation maximizing a forest-level confidence proxy without updating model parameters. Orientation search improves robustness under severe rotations but can be harmful near the canonical orientation when confidence is misaligned with correctness. Finally, we observe consistent trends on MNIST digit recognition implemented as one-vs-rest regression, highlighting both the promise and limitations of confidence-based orientation selection for model-tree ensembles.

START: Traversing Sparse Footholds with Terrain Reconstruction

Dec 15, 2025

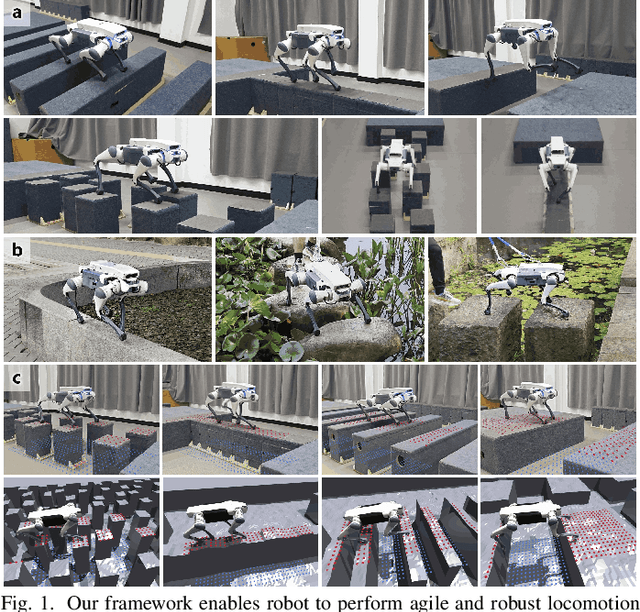

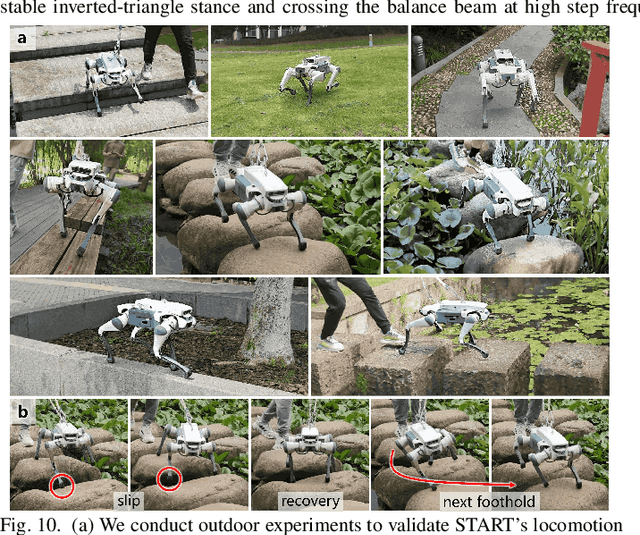

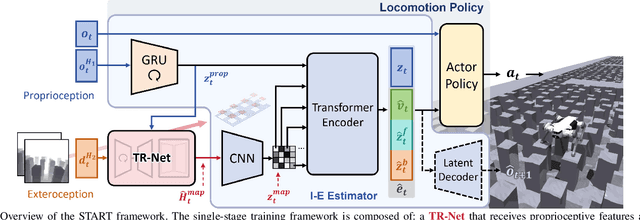

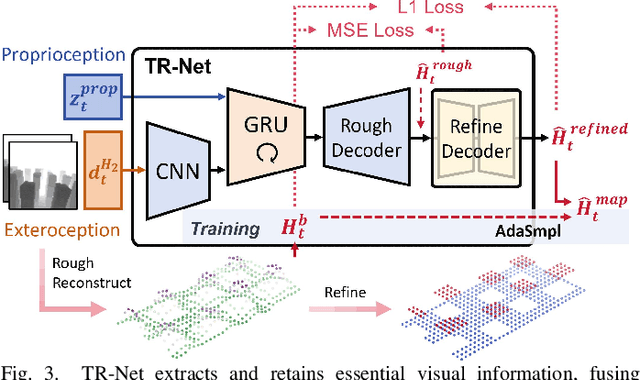

Abstract:Traversing terrains with sparse footholds like legged animals presents a promising yet challenging task for quadruped robots, as it requires precise environmental perception and agile control to secure safe foot placement while maintaining dynamic stability. Model-based hierarchical controllers excel in laboratory settings, but suffer from limited generalization and overly conservative behaviors. End-to-end learning-based approaches unlock greater flexibility and adaptability, but existing state-of-the-art methods either rely on heightmaps that introduce noise and complex, costly pipelines, or implicitly infer terrain features from egocentric depth images, often missing accurate critical geometric cues and leading to inefficient learning and rigid gaits. To overcome these limitations, we propose START, a single-stage learning framework that enables agile, stable locomotion on highly sparse and randomized footholds. START leverages only low-cost onboard vision and proprioception to accurately reconstruct local terrain heightmap, providing an explicit intermediate representation to convey essential features relevant to sparse foothold regions. This supports comprehensive environmental understanding and precise terrain assessment, reducing exploration cost and accelerating skill acquisition. Experimental results demonstrate that START achieves zero-shot transfer across diverse real-world scenarios, showcasing superior adaptability, precise foothold placement, and robust locomotion.

LHT: Statistically-Driven Oblique Decision Trees for Interpretable Classification

May 07, 2025Abstract:We introduce the Learning Hyperplane Tree (LHT), a novel oblique decision tree model designed for expressive and interpretable classification. LHT fundamentally distinguishes itself through a non-iterative, statistically-driven approach to constructing splitting hyperplanes. Unlike methods that rely on iterative optimization or heuristics, LHT directly computes the hyperplane parameters, which are derived from feature weights based on the differences in feature expectations between classes within each node. This deterministic mechanism enables a direct and well-defined hyperplane construction process. Predictions leverage a unique piecewise linear membership function within leaf nodes, obtained via local least-squares fitting. We formally analyze the convergence of the LHT splitting process, ensuring that each split yields meaningful, non-empty partitions. Furthermore, we establish that the time complexity for building an LHT up to depth $d$ is $O(mnd)$, demonstrating the practical feasibility of constructing trees with powerful oblique splits using this methodology. The explicit feature weighting at each split provides inherent interpretability. Experimental results on benchmark datasets demonstrate LHT's competitive accuracy, positioning it as a practical, theoretically grounded, and interpretable alternative in the landscape of tree-based models. The implementation of the proposed method is available at https://github.com/Hongyi-Li-sz/LHT_model.

SATA: Safe and Adaptive Torque-Based Locomotion Policies Inspired by Animal Learning

Feb 18, 2025

Abstract:Despite recent advances in learning-based controllers for legged robots, deployments in human-centric environments remain limited by safety concerns. Most of these approaches use position-based control, where policies output target joint angles that must be processed by a low-level controller (e.g., PD or impedance controllers) to compute joint torques. Although impressive results have been achieved in controlled real-world scenarios, these methods often struggle with compliance and adaptability when encountering environments or disturbances unseen during training, potentially resulting in extreme or unsafe behaviors. Inspired by how animals achieve smooth and adaptive movements by controlling muscle extension and contraction, torque-based policies offer a promising alternative by enabling precise and direct control of the actuators in torque space. In principle, this approach facilitates more effective interactions with the environment, resulting in safer and more adaptable behaviors. However, challenges such as a highly nonlinear state space and inefficient exploration during training have hindered their broader adoption. To address these limitations, we propose SATA, a bio-inspired framework that mimics key biomechanical principles and adaptive learning mechanisms observed in animal locomotion. Our approach effectively addresses the inherent challenges of learning torque-based policies by significantly improving early-stage exploration, leading to high-performance final policies. Remarkably, our method achieves zero-shot sim-to-real transfer. Our experimental results indicate that SATA demonstrates remarkable compliance and safety, even in challenging environments such as soft/slippery terrain or narrow passages, and under significant external disturbances, highlighting its potential for practical deployments in human-centric and safety-critical scenarios.

Learning Hyperplane Tree: A Piecewise Linear and Fully Interpretable Decision-making Framework

Jan 15, 2025

Abstract:This paper introduces a novel tree-based model, Learning Hyperplane Tree (LHT), which outperforms state-of-the-art (SOTA) tree models for classification tasks on several public datasets. The structure of LHT is simple and efficient: it partitions the data using several hyperplanes to progressively distinguish between target and non-target class samples. Although the separation is not perfect at each stage, LHT effectively improves the distinction through successive partitions. During testing, a sample is classified by evaluating the hyperplanes defined in the branching blocks and traversing down the tree until it reaches the corresponding leaf block. The class of the test sample is then determined using the piecewise linear membership function defined in the leaf blocks, which is derived through least-squares fitting and fuzzy logic. LHT is highly transparent and interpretable--at each branching block, the contribution of each feature to the classification can be clearly observed.

JailPO: A Novel Black-box Jailbreak Framework via Preference Optimization against Aligned LLMs

Dec 20, 2024Abstract:Large Language Models (LLMs) aligned with human feedback have recently garnered significant attention. However, it remains vulnerable to jailbreak attacks, where adversaries manipulate prompts to induce harmful outputs. Exploring jailbreak attacks enables us to investigate the vulnerabilities of LLMs and further guides us in enhancing their security. Unfortunately, existing techniques mainly rely on handcrafted templates or generated-based optimization, posing challenges in scalability, efficiency and universality. To address these issues, we present JailPO, a novel black-box jailbreak framework to examine LLM alignment. For scalability and universality, JailPO meticulously trains attack models to automatically generate covert jailbreak prompts. Furthermore, we introduce a preference optimization-based attack method to enhance the jailbreak effectiveness, thereby improving efficiency. To analyze model vulnerabilities, we provide three flexible jailbreak patterns. Extensive experiments demonstrate that JailPO not only automates the attack process while maintaining effectiveness but also exhibits superior performance in efficiency, universality, and robustness against defenses compared to baselines. Additionally, our analysis of the three JailPO patterns reveals that attacks based on complex templates exhibit higher attack strength, whereas covert question transformations elicit riskier responses and are more likely to bypass defense mechanisms.

General-purpose Dataflow Model with Neuromorphic Primitives

Aug 02, 2024

Abstract:Neuromorphic computing exhibits great potential to provide high-performance benefits in various applications beyond neural networks. However, a general-purpose program execution model that aligns with the features of neuromorphic computing is required to bridge the gap between program versatility and neuromorphic hardware efficiency. The dataflow model offers a potential solution, but it faces high graph complexity and incompatibility with neuromorphic hardware when dealing with control flow programs, which decreases the programmability and performance. Here, we present a dataflow model tailored for neuromorphic hardware, called neuromorphic dataflow, which provides a compact, concise, and neuromorphic-compatible program representation for control logic. The neuromorphic dataflow introduces "when" and "where" primitives, which restructure the view of control. The neuromorphic dataflow embeds these primitives in the dataflow schema with the plasticity inherited from the spiking algorithms. Our method enables the deployment of general-purpose programs on neuromorphic hardware with both programmability and plasticity, while fully utilizing the hardware's potential.

i-Octree: A Fast, Lightweight, and Dynamic Octree for Proximity Search

Sep 15, 2023

Abstract:Establishing the correspondences between newly acquired points and historically accumulated data (i.e., map) through nearest neighbors search is crucial in numerous robotic applications.However, static tree data structures are inadequate to handle large and dynamically growing maps in real-time.To address this issue, we present the i-Octree, a dynamic octree data structure that supports both fast nearest neighbor search and real-time dynamic updates, such as point insertion, deletion, and on-tree down-sampling. The i-Octree is built upon a leaf-based octree and has two key features: a local spatially continuous storing strategy that allows for fast access to points while minimizing memory usage, and local on-tree updates that significantly reduce computation time compared to existing static or dynamic tree structures.The experiments show that i-Octree surpasses state-of-the-art methods by reducing run-time by over 50% on real-world open datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge