Hong Qu

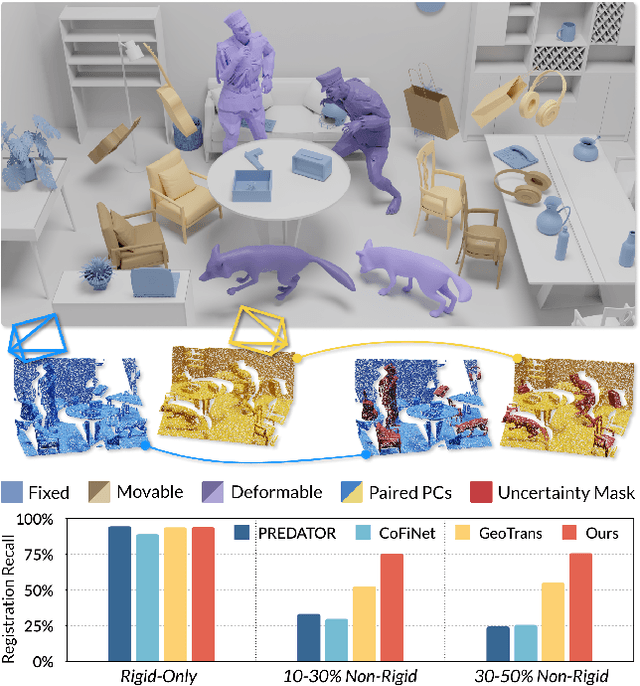

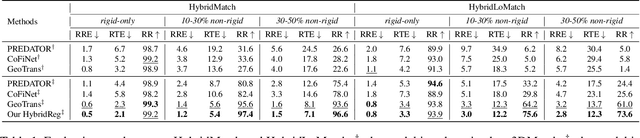

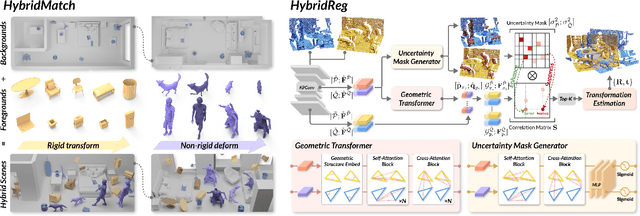

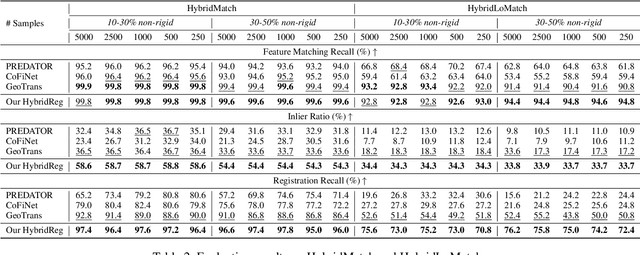

HybridReg: Robust 3D Point Cloud Registration with Hybrid Motions

Mar 10, 2025

Abstract:Scene-level point cloud registration is very challenging when considering dynamic foregrounds. Existing indoor datasets mostly assume rigid motions, so the trained models cannot robustly handle scenes with non-rigid motions. On the other hand, non-rigid datasets are mainly object-level, so the trained models cannot generalize well to complex scenes. This paper presents HybridReg, a new approach to 3D point cloud registration that learns an uncertainty mask to account for hybrid motions: rigid for backgrounds and non-rigid/rigid for instance-level foregrounds. First, we build a scene-level 3D registration dataset, namely HybridMatch, designed specifically with strategies to arrange diverse deforming foregrounds in a controllable manner. Second, we account for different motion types and formulate a mask-learning module to alleviate the interference of deforming outliers. Third, we exploit a simple yet effective negative log-likelihood loss that uses uncertainty to guide feature extraction and correlation computation. To the best of our knowledge, HybridReg is the first work that exploits hybrid motions for robust point cloud registration. Extensive experiments show HybridReg's strengths, leading it to achieve state-of-the-art performance on both widely-used indoor and outdoor datasets.
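The abstract above does not state the form of its negative log-likelihood loss. As a rough illustration only, the sketch below uses a common Gaussian uncertainty-weighted NLL, which is an assumption and not necessarily the HybridReg loss. The function name `gaussian_nll` and its parameters are hypothetical. It shows how a predicted uncertainty can reduce the penalty on a large residual, such as one from a deforming outlier.

```python
import math

def gaussian_nll(residual, log_sigma):
    """NLL of a residual under N(0, sigma^2), with the constant term dropped.

    Assumed form for illustration; not taken from the paper.
    """
    sigma_sq = math.exp(2.0 * log_sigma)
    return 0.5 * residual ** 2 / sigma_sq + log_sigma

# The same large residual, scored with low and with high predicted uncertainty.
confident = gaussian_nll(residual=2.0, log_sigma=0.0)  # sigma = 1
uncertain = gaussian_nll(residual=2.0, log_sigma=1.0)  # sigma = e

# A small residual: claiming high uncertainty is now the more expensive choice.
inlier_confident = gaussian_nll(residual=0.1, log_sigma=0.0)
inlier_uncertain = gaussian_nll(residual=0.1, log_sigma=1.0)
```

The `log_sigma` term keeps a model from declaring every point uncertain: raising the uncertainty lowers the residual term but adds a direct cost.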

MLPs Compass: What is learned when MLPs are combined with PLMs?

Jan 03, 2024

Abstract:While Transformer-based pre-trained language models (PLMs) and their variants exhibit strong semantic representation capabilities, how much information is gained from the additional components of PLMs remains an open question in this field. Motivated by recent efforts showing that Multilayer Perceptron (MLP) modules achieve robust structural capture capabilities, even outperforming Graph Neural Networks (GNNs), this paper aims to quantify whether simple MLPs can further enhance the already potent ability of PLMs to capture linguistic information. Specifically, we design a simple yet effective probing framework containing MLP components based on the BERT structure and conduct extensive experiments encompassing 10 probing tasks spanning three distinct linguistic levels. The experimental results demonstrate that MLPs can indeed enhance the comprehension of linguistic structure by PLMs. Our research provides interpretable and valuable insights into crafting variations of PLMs utilizing MLPs for tasks that emphasize diverse linguistic structures.

Enhancing Document-level Event Argument Extraction with Contextual Clues and Role Relevance

Oct 20, 2023

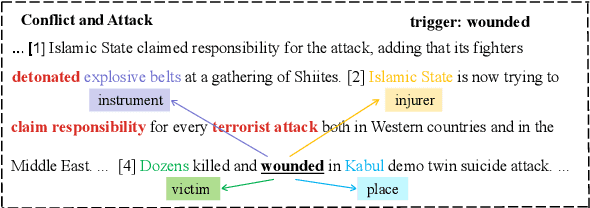

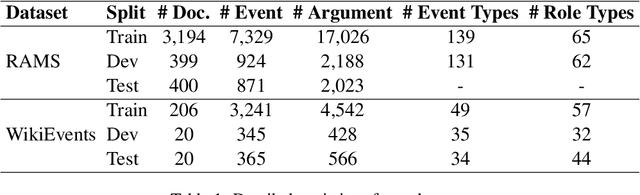

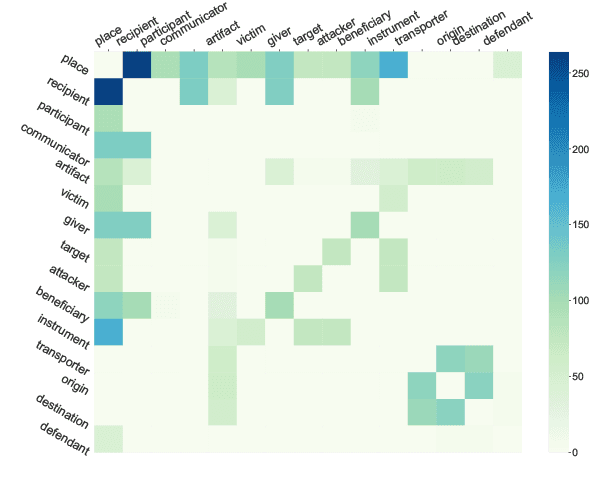

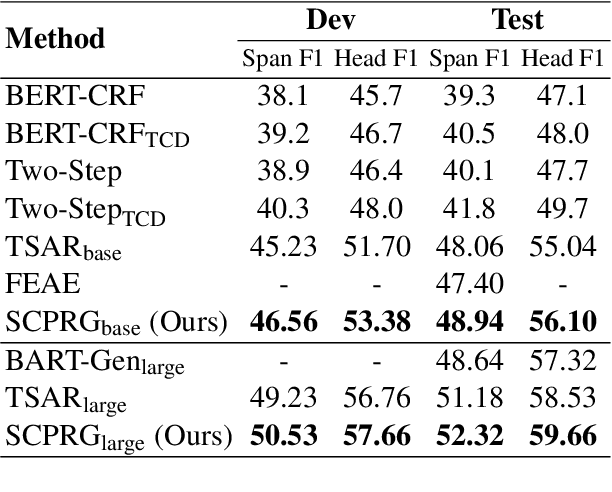

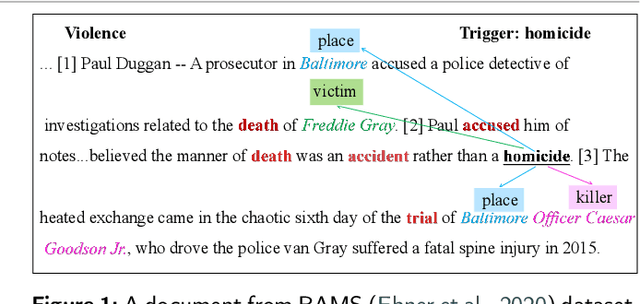

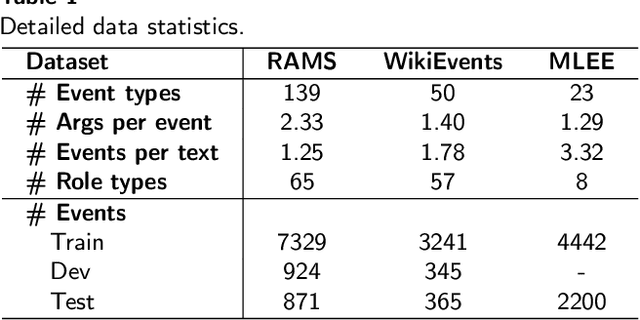

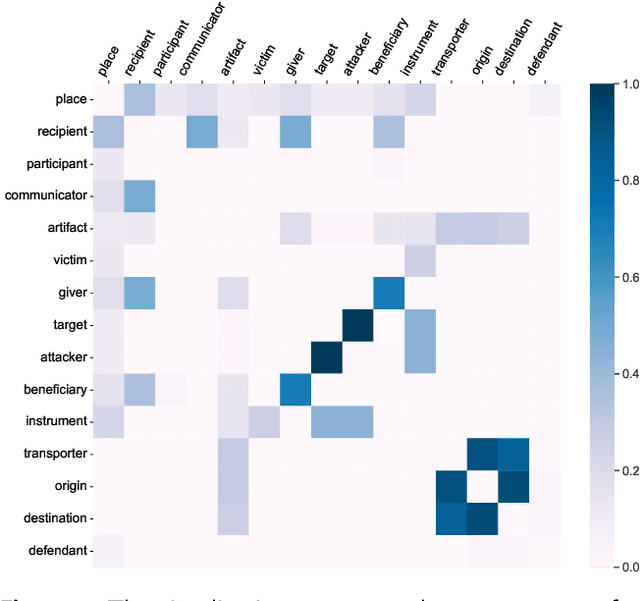

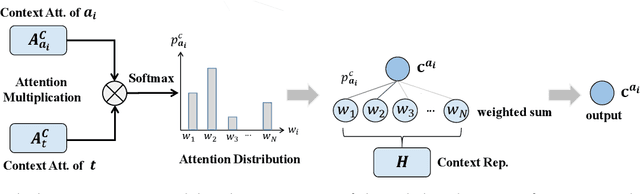

Abstract:Document-level event argument extraction poses new challenges of long input and cross-sentence inference compared to its sentence-level counterpart. However, most prior works focus on capturing the relations between candidate arguments and the event trigger in each event, ignoring two crucial points: a) non-argument contextual clue information; b) the relevance among argument roles. In this paper, we propose an SCPRG (Span-trigger-based Contextual Pooling and latent Role Guidance) model, which contains two novel and effective modules for the above problem. The Span-Trigger-based Contextual Pooling (STCP) module adaptively selects and aggregates the information of non-argument clue words based on the context attention weights of specific argument-trigger pairs from the pre-trained model. The Role-based Latent Information Guidance (RLIG) module constructs latent role representations, makes them interact through role-interactive encoding to capture semantic relevance, and merges them into candidate arguments. Both STCP and RLIG are compact and transplantable: they introduce no more than 1% new parameters compared with the base model and can be easily applied to other event extraction models. Experiments on two public datasets show that our SCPRG outperforms previous state-of-the-art methods, with 1.13 F1 and 2.64 F1 improvements on RAMS and WikiEvents respectively. Further analyses illustrate the interpretability of our model.

Revisiting Graph Meaning Representations through Decoupling Contextual Representation Learning and Structural Information Propagation

Oct 15, 2023

Abstract:In the field of natural language understanding, the intersection of neural models and graph meaning representations (GMRs) remains a compelling area of research. Despite the growing interest, a critical gap persists in understanding the exact influence of GMRs, particularly concerning relation extraction tasks. Addressing this, we introduce DAGNN-plus, a simple and parameter-efficient neural architecture designed to decouple contextual representation learning from structural information propagation. Coupled with various sequence encoders and GMRs, this architecture provides a foundation for systematic experimentation on two English and two Chinese datasets. Our empirical analysis utilizes four different graph formalisms and nine parsers. The results yield a nuanced understanding of GMRs, showing improvements in three out of the four datasets, particularly favoring English over Chinese due to highly accurate parsers. Interestingly, GMRs appear less effective in literary-domain datasets compared to general-domain datasets. These findings lay the groundwork for better-informed design of GMRs and parsers to improve relation classification, which is expected to tangibly impact the future trajectory of natural language understanding research.

CARLG: Leveraging Contextual Clues and Role Correlations for Improving Document-level Event Argument Extraction

Oct 08, 2023

Abstract:Document-level event argument extraction (EAE) is a crucial but challenging subtask in information extraction. Most existing approaches focus on the interaction between arguments and event triggers, ignoring two critical points: the information of contextual clues and the semantic correlations among argument roles. In this paper, we propose the CARLG model, which consists of two modules: Contextual Clues Aggregation (CCA) and Role-based Latent Information Guidance (RLIG), effectively leveraging contextual clues and role correlations for improving document-level EAE. The CCA module adaptively captures and integrates contextual clues by utilizing context attention weights from a pre-trained encoder. The RLIG module captures semantic correlations through role-interactive encoding and provides valuable information guidance with latent role representation. Notably, our CCA and RLIG modules are compact, transplantable and efficient: they introduce no more than 1% new parameters and can be easily added to other span-based methods with a significant performance boost. Extensive experiments on the RAMS, WikiEvents, and MLEE datasets demonstrate the superiority of the proposed CARLG model. It outperforms previous state-of-the-art approaches by 1.26 F1, 1.22 F1, and 1.98 F1, respectively, while reducing the inference time by 31%. Furthermore, we provide detailed experimental analyses based on the performance gains and illustrate the interpretability of our model.

Improving Image Captioning with Control Signal of Sentence Quality

Jun 07, 2022

Abstract:In image captioning datasets, each image is aligned with several captions. Although the quality of these descriptions varies, existing captioning models treat them equally in the training process. In this paper, we propose a new control signal of sentence quality, which is taken as an additional input to the captioning model. By integrating the control signal information, captioning models are aware of the quality level of the target sentences and handle them differently. Moreover, we propose a novel reinforcement training method specially designed for the control signal of sentence quality: Quality-oriented Self-Annotated Training (Q-SAT). Equipped with the R-Drop strategy, models controlled by the highest quality level substantially surpass baseline models on accuracy-based evaluation metrics, which validates the effectiveness of our proposed methods.

Double Thompson Sampling in Finite stochastic Games

Feb 28, 2022

Abstract:We consider the trade-off between exploration and exploitation in a finite discounted Markov Decision Process whose state transition matrix is unknown. We propose a double Thompson sampling reinforcement learning algorithm (DTS) to solve this kind of problem. This algorithm achieves a total regret bound of $\tilde{\mathcal{O}}(D\sqrt{SAT})$ in time horizon $T$ with $S$ states, $A$ actions and diameter $D$. DTS consists of two parts. In the first, traditional part, we apply the posterior sampling method to the transition matrix based on a prior distribution. In the second part, we employ a count-based posterior update method to balance between the local optimal action and the long-term optimal action in order to find the global optimal game value. We establish a regret bound of $\tilde{\mathcal{O}}(\sqrt{T}/S^{2})$, which is, to our knowledge, by far the best regret bound for finite discounted Markov Decision Processes. Numerical results demonstrate the efficiency and superiority of our approach.
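The posterior sampling step mentioned in the abstract above can be illustrated with a small sketch. It assumes a Dirichlet prior over each row of the transition matrix, which is a standard choice for posterior sampling in tabular MDPs but is not stated in the abstract. The function names, the `prior` parameter, and the example counts are hypothetical. It covers only the sampling of a transition model from observed counts, not the DTS algorithm itself.

```python
import random

def sample_transition_row(counts, prior=1.0, rng=random):
    """Draw next-state probabilities for one (state, action) pair.

    counts[j] is the number of observed transitions into state j.
    Assumes a symmetric Dirichlet(prior) prior, so the posterior is
    Dirichlet(prior + counts).
    """
    # A Dirichlet sample is a vector of independent Gamma draws, normalised.
    draws = [rng.gammavariate(prior + c, 1.0) for c in counts]
    total = sum(draws)
    return [d / total for d in draws]

def sample_transition_model(count_table, prior=1.0, rng=random):
    """count_table[s][a] is the list of next-state counts for (s, a)."""
    return [[sample_transition_row(row, prior, rng) for row in per_state]
            for per_state in count_table]

# Two states, two actions; the counts are made up for illustration.
rng = random.Random(0)
counts = [[[8, 2], [1, 1]],
          [[0, 5], [3, 3]]]
model = sample_transition_model(counts, rng=rng)
```

Each call produces a different plausible transition model. Pairs with few observations vary more between draws, which is what drives exploration in posterior sampling methods.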

A Dual-Perception Graph Neural Network with Multi-hop Graph Generator

Oct 22, 2021

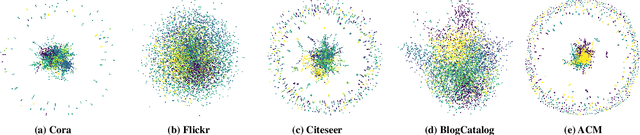

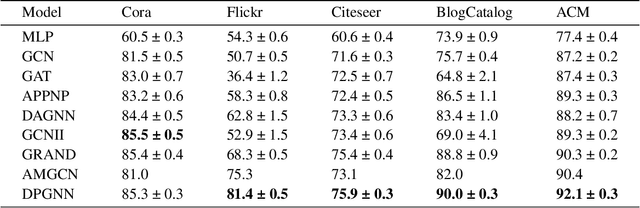

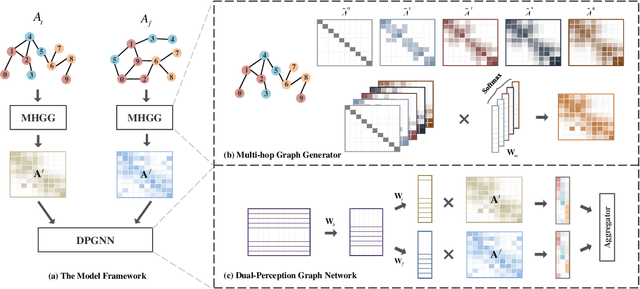

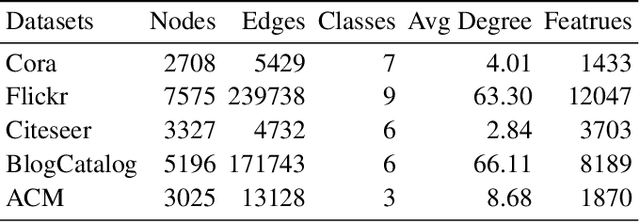

Abstract:Graph neural networks (GNNs) have drawn increasing attention in recent years and achieved remarkable performance in many graph-based tasks, especially in semi-supervised learning on graphs. However, most existing GNNs rely excessively on topological structures and aggregate multi-hop neighborhood information by simply stacking network layers, which may introduce superfluous noise, limit the expressive power of GNNs and ultimately lead to the over-smoothing problem. In light of this, we propose a novel Dual-Perception Graph Neural Network (DPGNN) to address these issues. In DPGNN, we utilize node features to construct a feature graph, and perform node representation learning on the original topology graph and the constructed feature graph simultaneously, which helps capture both the structural neighborhood information and the feature-related information. Furthermore, we design a Multi-Hop Graph Generator (MHGG), which applies a node-to-hop attention mechanism to aggregate node-specific multi-hop neighborhood information adaptively. Finally, we apply self-ensembling to form a consistent prediction for unlabeled node representations. Experimental results on five datasets with different topological structures demonstrate that our proposed DPGNN outperforms all the latest state-of-the-art models on all datasets, which proves the superiority and versatility of our model. The source code of our model is available at https://github.com.
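The abstract above does not say how the feature graph is built from node features. One common construction, assumed here purely for illustration, links each node to its k nearest neighbours by cosine similarity. The function names `cosine` and `knn_feature_graph` and the toy features are hypothetical and are not taken from the DPGNN code.

```python
import math

def cosine(u, v):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def knn_feature_graph(features, k):
    """Return undirected edges linking each node to its k most similar nodes."""
    edges = set()
    for i, fi in enumerate(features):
        scored = sorted(
            ((cosine(fi, fj), j) for j, fj in enumerate(features) if j != i),
            reverse=True,
        )
        for _, j in scored[:k]:
            # Store each edge once, with the smaller index first.
            edges.add((min(i, j), max(i, j)))
    return edges

# Four nodes with 2-D features: nodes 0 and 1 are alike, as are nodes 2 and 3.
features = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
graph = knn_feature_graph(features, k=1)
```

With `k=1` the toy graph links node 0 to node 1 and node 2 to node 3, regardless of how those nodes are connected in the original topology graph.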

Self-Annotated Training for Controllable Image Captioning

Oct 16, 2021

Abstract:The Controllable Image Captioning (CIC) task aims to generate captions conditioned on designated control signals. In this paper, we improve CIC from two aspects: 1) Existing reinforcement training methods are not applicable to structure-related CIC models due to the fact that the accuracy-based reward focuses mainly on contents rather than semantic structures. The lack of reinforcement training prevents the model from generating more accurate and controllable sentences. To solve the problem above, we propose a novel reinforcement training method for structure-related CIC models: Self-Annotated Training (SAT), where a recursive sampling mechanism (RSM) is designed to force the input control signal to match the actual output sentence. Extensive experiments conducted on MSCOCO show that our SAT method improves C-Transformer (XE) on CIDEr-D score from 118.6 to 130.1 in the length-control task and from 132.2 to 142.7 in the tense-control task, while maintaining more than 99$\%$ matching accuracy with the control signal. 2) We introduce a new control signal: sentence quality. Equipped with it, CIC models are able to generate captions of different quality levels as needed. Experiments show that without additional information of ground truth captions, models controlled by the highest level of sentence quality perform much better in accuracy than baseline models.

Generating Human Readable Transcript for Automatic Speech Recognition with Pre-trained Language Model

Feb 22, 2021

Abstract:Modern Automatic Speech Recognition (ASR) systems can achieve high performance in terms of recognition accuracy. However, a perfectly accurate transcript can still be challenging to read due to disfluency, filler words, and other errata common in spoken communication. Many downstream tasks and human readers rely on the output of the ASR system; therefore, errors introduced by the speaker and ASR system alike will be propagated to the next task in the pipeline. In this work, we propose an ASR post-processing model that aims to transform the incorrect and noisy ASR output into a readable text for humans and downstream tasks. We leverage the Metadata Extraction (MDE) corpus to construct a task-specific dataset for our study. Since the dataset is small, we propose a novel data augmentation method and use a two-stage training strategy to fine-tune the RoBERTa pre-trained model. On the constructed test set, our model outperforms a production two-step pipeline-based post-processing method by a large margin of 13.26 on readability-aware WER (RA-WER) and 17.53 on BLEU metrics. Human evaluation also demonstrates that our method can generate more human-readable transcripts than the baseline method.
