Guoheng Huang

Layer-Wise Feature Metric of Semantic-Pixel Matching for Few-Shot Learning

Nov 10, 2024

Abstract:In Few-Shot Learning (FSL), traditional metric-based approaches often rely on global metrics to compute similarity. However, in natural scenes, the spatial arrangement of key instances is often inconsistent across images. This spatial misalignment can result in mismatched semantic pixels, leading to inaccurate similarity measurements. To address this issue, we propose a novel method called the Layer-Wise Features Metric of Semantic-Pixel Matching (LWFM-SPM) to make finer comparisons. Our method enhances model performance through two key modules: (1) the Layer-Wise Embedding (LWE) Module, which refines the cross-correlation of image pairs to generate well-focused feature maps for each layer; (2)the Semantic-Pixel Matching (SPM) Module, which aligns critical pixels based on semantic embeddings using an assignment algorithm. We conducted extensive experiments to evaluate our method on four widely used few-shot classification benchmarks: miniImageNet, tieredImageNet, CUB-200-2011, and CIFAR-FS. The results indicate that LWFM-SPM achieves competitive performance across these benchmarks. Our code will be publicly available on https://github.com/Halo2Tang/Code-for-LWFM-SPM.

IMAN: An Adaptive Network for Robust NPC Mortality Prediction with Missing Modalities

Oct 24, 2024Abstract:Accurate prediction of mortality in nasopharyngeal carcinoma (NPC), a complex malignancy particularly challenging in advanced stages, is crucial for optimizing treatment strategies and improving patient outcomes. However, this predictive process is often compromised by the high-dimensional and heterogeneous nature of NPC-related data, coupled with the pervasive issue of incomplete multi-modal data, manifesting as missing radiological images or incomplete diagnostic reports. Traditional machine learning approaches suffer significant performance degradation when faced with such incomplete data, as they fail to effectively handle the high-dimensionality and intricate correlations across modalities. Even advanced multi-modal learning techniques like Transformers struggle to maintain robust performance in the presence of missing modalities, as they lack specialized mechanisms to adaptively integrate and align the diverse data types, while also capturing nuanced patterns and contextual relationships within the complex NPC data. To address these problem, we introduce IMAN: an adaptive network for robust NPC mortality prediction with missing modalities.

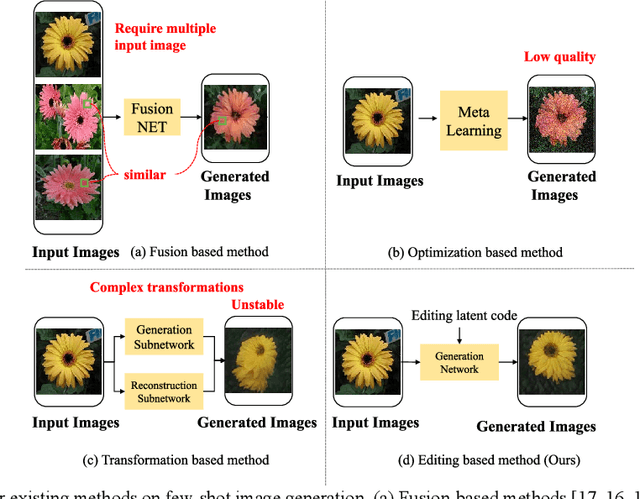

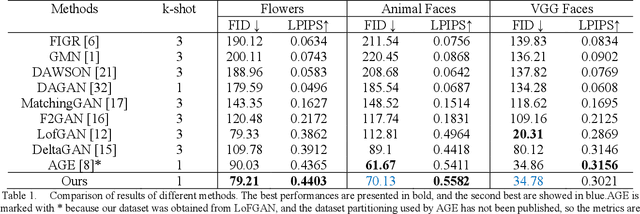

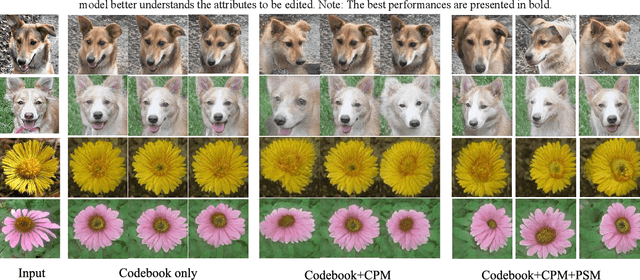

TAGE: Trustworthy Attribute Group Editing for Stable Few-shot Image Generation

Oct 23, 2024

Abstract:Generative Adversarial Networks (GANs) have emerged as a prominent research focus for image editing tasks, leveraging the powerful image generation capabilities of the GAN framework to produce remarkable results.However, prevailing approaches are contingent upon extensive training datasets and explicit supervision, presenting a significant challenge in manipulating the diverse attributes of new image classes with limited sample availability. To surmount this hurdle, we introduce TAGE, an innovative image generation network comprising three integral modules: the Codebook Learning Module (CLM), the Code Prediction Module (CPM) and the Prompt-driven Semantic Module (PSM). The CPM module delves into the semantic dimensions of category-agnostic attributes, encapsulating them within a discrete codebook. This module is predicated on the concept that images are assemblages of attributes, and thus, by editing these category-independent attributes, it is theoretically possible to generate images from unseen categories. Subsequently, the CPM module facilitates naturalistic image editing by predicting indices of category-independent attribute vectors within the codebook. Additionally, the PSM module generates semantic cues that are seamlessly integrated into the Transformer architecture of the CPM, enhancing the model's comprehension of the targeted attributes for editing. With these semantic cues, the model can generate images that accentuate desired attributes more prominently while maintaining the integrity of the original category, even with a limited number of samples. We have conducted extensive experiments utilizing the Animal Faces, Flowers, and VGGFaces datasets. The results of these experiments demonstrate that our proposed method not only achieves superior performance but also exhibits a high degree of stability when compared to other few-shot image generation techniques.

Lagrange Duality and Compound Multi-Attention Transformer for Semi-Supervised Medical Image Segmentation

Sep 12, 2024Abstract:Medical image segmentation, a critical application of semantic segmentation in healthcare, has seen significant advancements through specialized computer vision techniques. While deep learning-based medical image segmentation is essential for assisting in medical diagnosis, the lack of diverse training data causes the long-tail problem. Moreover, most previous hybrid CNN-ViT architectures have limited ability to combine various attentions in different layers of the Convolutional Neural Network. To address these issues, we propose a Lagrange Duality Consistency (LDC) Loss, integrated with Boundary-Aware Contrastive Loss, as the overall training objective for semi-supervised learning to mitigate the long-tail problem. Additionally, we introduce CMAformer, a novel network that synergizes the strengths of ResUNet and Transformer. The cross-attention block in CMAformer effectively integrates spatial attention and channel attention for multi-scale feature fusion. Overall, our results indicate that CMAformer, combined with the feature fusion framework and the new consistency loss, demonstrates strong complementarity in semi-supervised learning ensembles. We achieve state-of-the-art results on multiple public medical image datasets. Example code are available at: \url{https://github.com/lzeeorno/Lagrange-Duality-and-CMAformer}.

ASSNet: Adaptive Semantic Segmentation Network for Microtumors and Multi-Organ Segmentation

Sep 12, 2024

Abstract:Medical image segmentation, a crucial task in computer vision, facilitates the automated delineation of anatomical structures and pathologies, supporting clinicians in diagnosis, treatment planning, and disease monitoring. Notably, transformers employing shifted window-based self-attention have demonstrated exceptional performance. However, their reliance on local window attention limits the fusion of local and global contextual information, crucial for segmenting microtumors and miniature organs. To address this limitation, we propose the Adaptive Semantic Segmentation Network (ASSNet), a transformer architecture that effectively integrates local and global features for precise medical image segmentation. ASSNet comprises a transformer-based U-shaped encoder-decoder network. The encoder utilizes shifted window self-attention across five resolutions to extract multi-scale features, which are then propagated to the decoder through skip connections. We introduce an augmented multi-layer perceptron within the encoder to explicitly model long-range dependencies during feature extraction. Recognizing the constraints of conventional symmetrical encoder-decoder designs, we propose an Adaptive Feature Fusion (AFF) decoder to complement our encoder. This decoder incorporates three key components: the Long Range Dependencies (LRD) block, the Multi-Scale Feature Fusion (MFF) block, and the Adaptive Semantic Center (ASC) block. These components synergistically facilitate the effective fusion of multi-scale features extracted by the decoder while capturing long-range dependencies and refining object boundaries. Comprehensive experiments on diverse medical image segmentation tasks, including multi-organ, liver tumor, and bladder tumor segmentation, demonstrate that ASSNet achieves state-of-the-art results. Code and models are available at: \url{https://github.com/lzeeorno/ASSNet}.

Multi-scale Quaternion CNN and BiGRU with Cross Self-attention Feature Fusion for Fault Diagnosis of Bearing

May 25, 2024Abstract:In recent years, deep learning has led to significant advances in bearing fault diagnosis (FD). Most techniques aim to achieve greater accuracy. However, they are sensitive to noise and lack robustness, resulting in insufficient domain adaptation and anti-noise ability. The comparison of studies reveals that giving equal attention to all features does not differentiate their significance. In this work, we propose a novel FD model by integrating multi-scale quaternion convolutional neural network (MQCNN), bidirectional gated recurrent unit (BiGRU), and cross self-attention feature fusion (CSAFF). We have developed innovative designs in two modules, namely MQCNN and CSAFF. Firstly, MQCNN applies quaternion convolution to multi-scale architecture for the first time, aiming to extract the rich hidden features of the original signal from multiple scales. Then, the extracted multi-scale information is input into CSAFF for feature fusion, where CSAFF innovatively incorporates cross self-attention mechanism to enhance discriminative interaction representation within features. Finally, BiGRU captures temporal dependencies while a softmax layer is employed for fault classification, achieving accurate FD. To assess the efficacy of our approach, we experiment on three public datasets (CWRU, MFPT, and Ottawa) and compare it with other excellent methods. The results confirm its state-of-the-art, which the average accuracies can achieve up to 99.99%, 100%, and 99.21% on CWRU, MFPT, and Ottawa datasets. Moreover, we perform practical tests and ablation experiments to validate the efficacy and robustness of the proposed approach. Code is available at https://github.com/mubai011/MQCCAF.

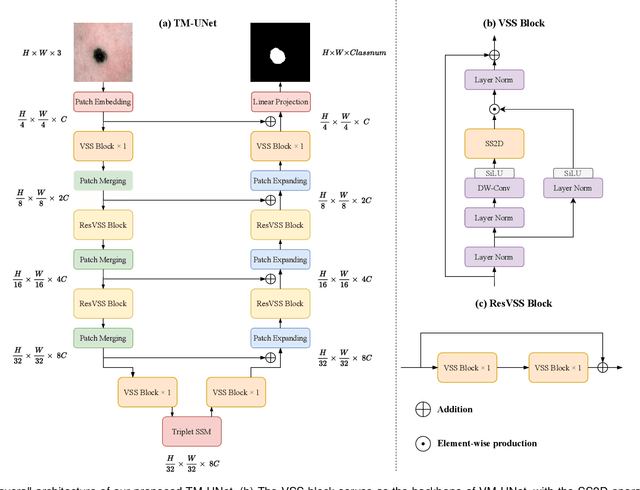

Rotate to Scan: UNet-like Mamba with Triplet SSM Module for Medical Image Segmentation

Apr 01, 2024

Abstract:Image segmentation holds a vital position in the realms of diagnosis and treatment within the medical domain. Traditional convolutional neural networks (CNNs) and Transformer models have made significant advancements in this realm, but they still encounter challenges because of limited receptive field or high computing complexity. Recently, State Space Models (SSMs), particularly Mamba and its variants, have demonstrated notable performance in the field of vision. However, their feature extraction methods may not be sufficiently effective and retain some redundant structures, leaving room for parameter reduction. Motivated by previous spatial and channel attention methods, we propose Triplet Mamba-UNet. The method leverages residual VSS Blocks to extract intensive contextual features, while Triplet SSM is employed to fuse features across spatial and channel dimensions. We conducted experiments on ISIC17, ISIC18, CVC-300, CVC-ClinicDB, Kvasir-SEG, CVC-ColonDB, and Kvasir-Instrument datasets, demonstrating the superior segmentation performance of our proposed TM-UNet. Additionally, compared to the previous VM-UNet, our model achieves a one-third reduction in parameters.

Medical Visual Prompting (MVP): A Unified Framework for Versatile and High-Quality Medical Image Segmentation

Apr 01, 2024

Abstract:Accurate segmentation of lesion regions is crucial for clinical diagnosis and treatment across various diseases. While deep convolutional networks have achieved satisfactory results in medical image segmentation, they face challenges such as loss of lesion shape information due to continuous convolution and downsampling, as well as the high cost of manually labeling lesions with varying shapes and sizes. To address these issues, we propose a novel medical visual prompting (MVP) framework that leverages pre-training and prompting concepts from natural language processing (NLP). The framework utilizes three key components: Super-Pixel Guided Prompting (SPGP) for superpixelating the input image, Image Embedding Guided Prompting (IEGP) for freezing patch embedding and merging with superpixels to provide visual prompts, and Adaptive Attention Mechanism Guided Prompting (AAGP) for pinpointing prompt content and efficiently adapting all layers. By integrating SPGP, IEGP, and AAGP, the MVP enables the segmentation network to better learn shape prompting information and facilitates mutual learning across different tasks. Extensive experiments conducted on five datasets demonstrate superior performance of this method in various challenging medical image tasks, while simplifying single-task medical segmentation models. This novel framework offers improved performance with fewer parameters and holds significant potential for accurate segmentation of lesion regions in various medical tasks, making it clinically valuable.

Adaptive Query Prompting for Multi-Domain Landmark Detection

Apr 01, 2024

Abstract:Medical landmark detection is crucial in various medical imaging modalities and procedures. Although deep learning-based methods have achieve promising performance, they are mostly designed for specific anatomical regions or tasks. In this work, we propose a universal model for multi-domain landmark detection by leveraging transformer architecture and developing a prompting component, named as Adaptive Query Prompting (AQP). Instead of embedding additional modules in the backbone network, we design a separate module to generate prompts that can be effectively extended to any other transformer network. In our proposed AQP, prompts are learnable parameters maintained in a memory space called prompt pool. The central idea is to keep the backbone frozen and then optimize prompts to instruct the model inference process. Furthermore, we employ a lightweight decoder to decode landmarks from the extracted features, namely Light-MLD. Thanks to the lightweight nature of the decoder and AQP, we can handle multiple datasets by sharing the backbone encoder and then only perform partial parameter tuning without incurring much additional cost. It has the potential to be extended to more landmark detection tasks. We conduct experiments on three widely used X-ray datasets for different medical landmark detection tasks. Our proposed Light-MLD coupled with AQP achieves SOTA performance on many metrics even without the use of elaborate structural designs or complex frameworks.

QEAN: Quaternion-Enhanced Attention Network for Visual Dance Generation

Mar 18, 2024Abstract:The study of music-generated dance is a novel and challenging Image generation task. It aims to input a piece of music and seed motions, then generate natural dance movements for the subsequent music. Transformer-based methods face challenges in time series prediction tasks related to human movements and music due to their struggle in capturing the nonlinear relationship and temporal aspects. This can lead to issues like joint deformation, role deviation, floating, and inconsistencies in dance movements generated in response to the music. In this paper, we propose a Quaternion-Enhanced Attention Network (QEAN) for visual dance synthesis from a quaternion perspective, which consists of a Spin Position Embedding (SPE) module and a Quaternion Rotary Attention (QRA) module. First, SPE embeds position information into self-attention in a rotational manner, leading to better learning of features of movement sequences and audio sequences, and improved understanding of the connection between music and dance. Second, QRA represents and fuses 3D motion features and audio features in the form of a series of quaternions, enabling the model to better learn the temporal coordination of music and dance under the complex temporal cycle conditions of dance generation. Finally, we conducted experiments on the dataset AIST++, and the results show that our approach achieves better and more robust performance in generating accurate, high-quality dance movements. Our source code and dataset can be available from https://github.com/MarasyZZ/QEAN and https://google.github.io/aistplusplus_dataset respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge