Eugenio Cuniato

Allocation for Omnidirectional Aerial Robots: Incorporating Power Dynamics

Dec 20, 2024

Abstract: Tilt-rotor aerial robots are more dynamic and versatile than their fixed-rotor counterparts, since the thrust vector and body orientation are decoupled. However, the coordination of servomotors and propellers (the allocation problem) is not trivial, especially when accounting for overactuation and actuator dynamics. We present and compare different methods of actuator allocation for tilt-rotor platforms, evaluating them on a real aerial robot performing dynamic trajectories. We extend the state-of-the-art geometric allocation into a differential allocation, which uses the platform's redundancy and does not suffer from the singularities typical of the geometric solution. We expand it by incorporating actuator dynamics and introducing propeller limit curves. These improve the modeling of propeller limits, automatically balancing their usage and allowing the platform to selectively activate and deactivate propellers during flight. We show that actuator dynamics and limits not only make the tuning of the allocation easier, but also allow it to track more dynamic oscillating trajectories with angular velocities up to 4 rad/s, compared to 2.8 rad/s for geometric methods.
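
As an illustration of the geometric allocation that the abstract above takes as its baseline, the following is a minimal sketch and not the paper's implementation. It assumes a hypothetical six-rotor layout with evenly spaced arms, each propeller tilting about its own arm axis, and it ignores propeller drag torque. The function names, the arm length, and the tilt sign convention are all assumptions made for this example. Each rotor's thrust is split into a lateral and a vertical component so that the wrench becomes linear in those components; a pseudo-inverse yields the components, from which thrust magnitudes and tilt angles are recovered.

```python
import numpy as np


def allocation_matrix(n_rotors=6, arm_length=0.3):
    """Static 6 x 2n matrix mapping per-rotor (lateral, vertical) thrust
    components to a body wrench [Fx, Fy, Fz, Tx, Ty, Tz].

    Assumed (hypothetical) geometry: arms evenly spaced in the body xy-plane,
    each rotor tilting about its arm axis; drag torque is neglected.
    """
    e_z = np.array([0.0, 0.0, 1.0])
    cols = []
    for i in range(n_rotors):
        psi = 2.0 * np.pi * i / n_rotors
        e_r = np.array([np.cos(psi), np.sin(psi), 0.0])   # arm direction
        e_t = np.array([-np.sin(psi), np.cos(psi), 0.0])  # tangential direction
        r = arm_length * e_r                              # rotor position
        # Column for the lateral component f_i * sin(alpha_i)
        cols.append(np.concatenate([e_t, np.cross(r, e_t)]))
        # Column for the vertical component f_i * cos(alpha_i)
        cols.append(np.concatenate([e_z, np.cross(r, e_z)]))
    return np.column_stack(cols)


def geometric_allocation(A, wrench):
    """Minimum-norm thrust components via pseudo-inverse, then recover
    thrust magnitudes and tilt angles for each rotor."""
    u = np.linalg.pinv(A) @ wrench
    f_s, f_c = u[0::2], u[1::2]          # lateral and vertical components
    thrust = np.hypot(f_s, f_c)          # thrust magnitude per rotor
    tilt = np.arctan2(f_s, f_c)          # tilt angle per rotor [rad]
    return thrust, tilt


if __name__ == "__main__":
    A = allocation_matrix()
    # Hover wrench for an assumed 3 kg platform (weight along body z).
    thrust, tilt = geometric_allocation(A, np.array([0.0, 0.0, 29.43, 0.0, 0.0, 0.0]))
    print("thrust [N]:", thrust)
    print("tilt [rad]:", tilt)
```

In this sketch the tilt angle comes from `arctan2` of the two components, so it becomes ill-defined when both components of a rotor approach zero. This is one plausible reading of the singularities the abstract attributes to the geometric solution, offered here only as an assumption.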

Evaluation of Human-Robot Interfaces based on 2D/3D Visual and Haptic Feedback for Aerial Manipulation

Oct 20, 2024

Abstract: Most telemanipulation systems for aerial robots provide the operator with only 2D screen visual information. The lack of richer information about the robot's status and environment can limit human awareness and, in turn, task performance. While the pilot's experience can often compensate for this reduced flow of information, providing richer feedback is expected to reduce the cognitive workload and offer a more intuitive experience overall. This work aims to understand the significance of providing additional pieces of information during aerial telemanipulation, namely (i) 3D immersive visual feedback about the robot's surroundings through mixed reality (MR) and (ii) 3D haptic feedback about the robot's interaction with the environment. To do so, we developed a human-robot interface able to provide this information. First, we demonstrate its potential in a real-world manipulation task requiring sub-centimeter-level accuracy. Then, we evaluate the individual effect of MR vision and haptic feedback on both dexterity and workload through a human subjects study involving a virtual block transportation task. Results show that both 3D MR vision and haptic feedback improve the operator's dexterity in the considered teleoperated aerial interaction tasks. Nevertheless, pilot experience remains the most significant factor.

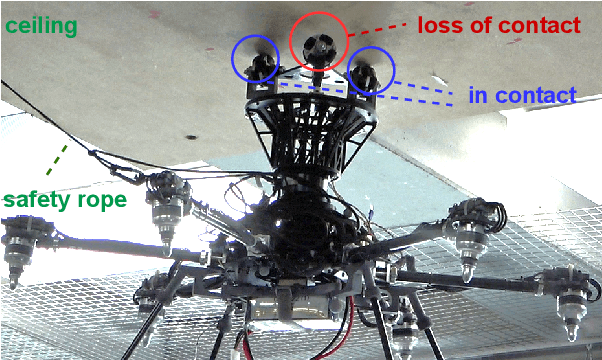

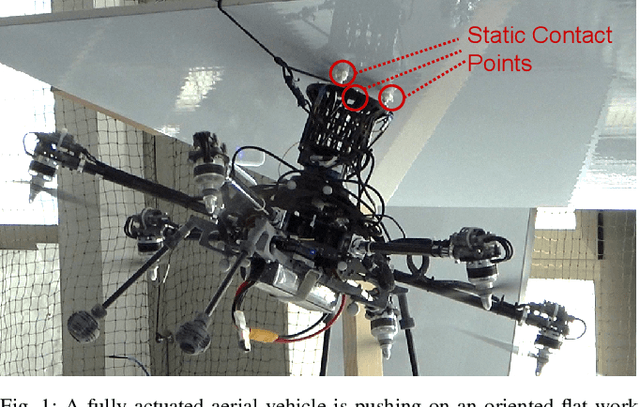

Multi-Wheeled Passive Sliding with Fully-Actuated Aerial Robots: Tip-Over Recovery and Avoidance

May 29, 2024

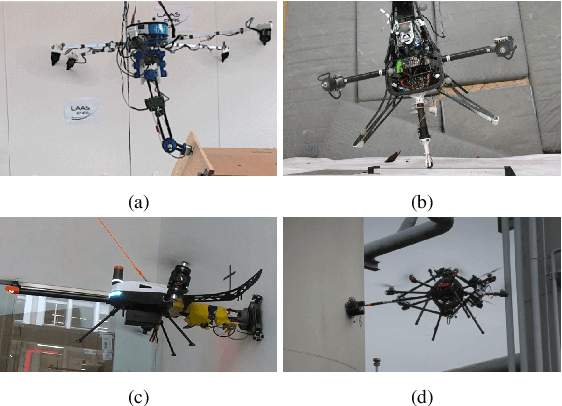

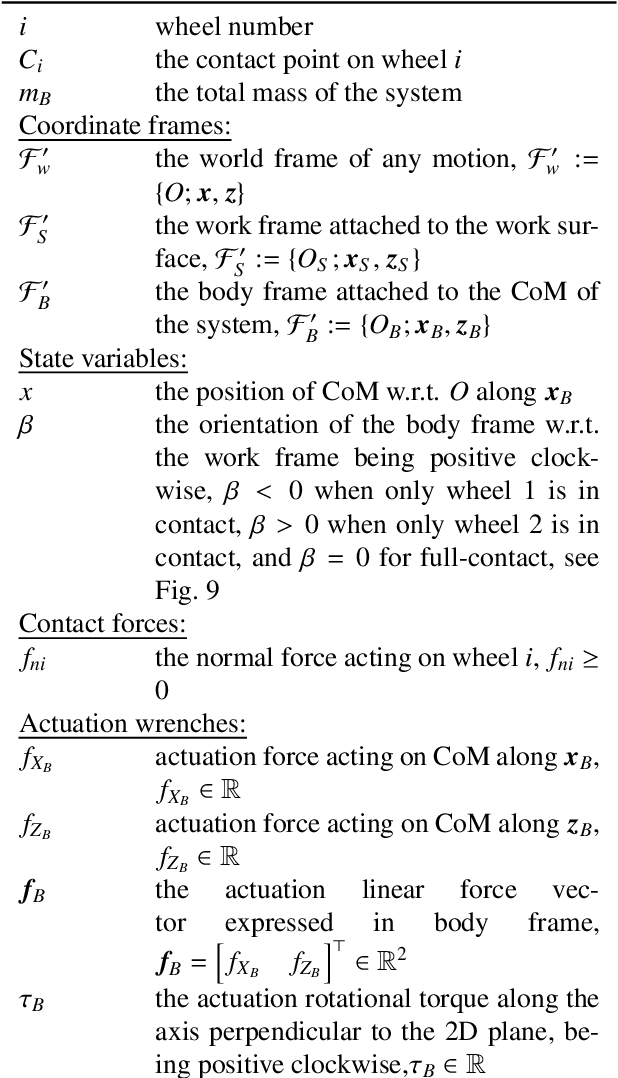

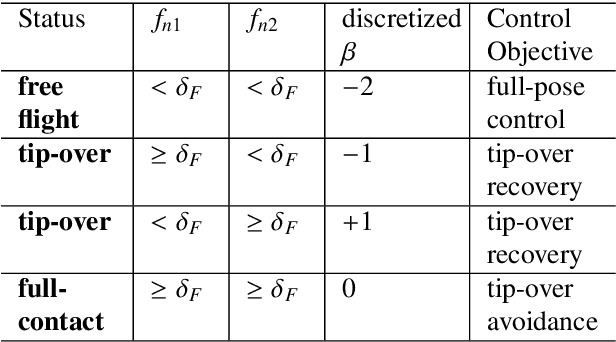

Abstract: Push-and-slide tasks carried out by fully-actuated aerial robots can be used for inspection and simple maintenance tasks at height, such as non-destructive testing and painting. Often, an end-effector based on multiple non-actuated contact wheels is used to contact the surface. This approach entails challenges in ensuring consistent wheel contact with a surface whose exact orientation and location might be uncertain due to sensor aliasing and drift. Using a standard full-pose controller dependent on the inaccurate surface position and orientation may cause wheels to lose contact during sliding, and subsequently lead to robot tip-over. To address the tip-over issue, we present two approaches: (1) tip-over avoidance guidelines for hardware design, and (2) control for tip-over recovery and avoidance. Physical experiments with a fully-actuated aerial vehicle were executed for a push-and-slide task on a flat surface. The resulting data is used in deriving tip-over avoidance guidelines and designing a simulator that closely captures real-world conditions. We then use the simulator to test the effectiveness and robustness of the proposed approaches in risky scenarios against uncertainties.

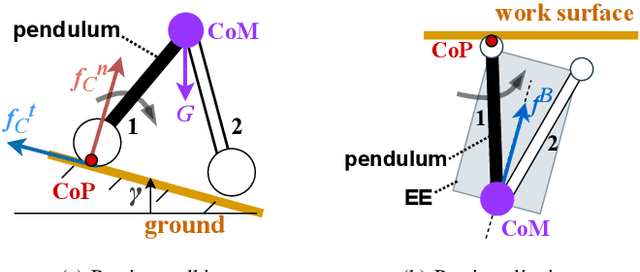

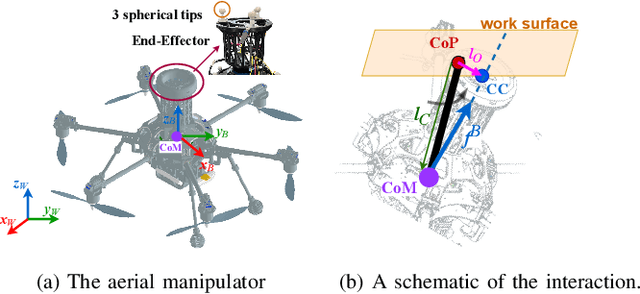

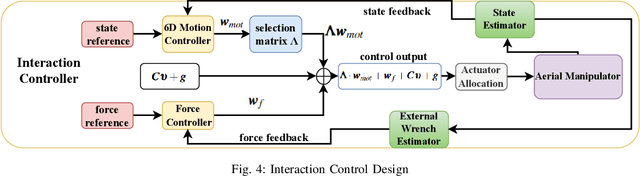

Passive Aligning Physical Interaction of Fully-Actuated Aerial Vehicles for Pushing Tasks

Feb 27, 2024

Abstract: Recently, the use of aerial manipulators for performing pushing tasks in non-destructive testing (NDT) applications has seen significant growth. Such operations entail physical interactions between the aerial robotic system and the environment. End-effectors with multiple contact points are often used for placing NDT sensors in contact with a surface to be inspected. Aligning the NDT sensor and the work surface while preserving contact requires that all available contact points at the end-effector tip are in contact with the work surface. With a standard full-pose controller, attitude errors often occur due to perturbations caused by modeling uncertainties, sensor noise, and environmental uncertainties. Even small attitude errors can cause a loss of contact points between the end-effector tip and the work surface. To preserve full alignment amidst these uncertainties, we propose a control strategy which selectively deactivates angular motion control and enables direct force control in specific directions. In particular, we derive two essential conditions to be met, such that the robot can passively align with flat work surfaces, achieving full alignment through the rotation along non-actively controlled axes. Additionally, these conditions serve as hardware design and control guidelines for effectively integrating the proposed control method for practical usage. Real-world experiments are conducted to validate both the control design and the guidelines.

Learning to Fly Omnidirectional Micro Aerial Vehicles with an End-To-End Control Network

Dec 08, 2023

Abstract: Overactuated tilt-rotor platforms offer many advantages over traditional fixed-arm drones, allowing the decoupling of the applied force from the attitude of the robot. This expands their application areas to aerial interaction and manipulation, and allows them to overcome disturbances such as from ground or wall effects by exploiting the additional degrees of freedom available to their controllers. However, the overactuation also complicates the control problem, especially if the motors that tilt the arms have slower dynamics than those spinning the propellers. Instead of building a complex model-based controller that takes all of these subtleties into account, we attempt to learn an end-to-end pose controller using reinforcement learning, and show its superior behavior in the presence of inertial and force disturbances compared to a state-of-the-art traditional controller.

Geranos: a Novel Tilted-Rotors Aerial Robot for the Transportation of Poles

Dec 04, 2023

Abstract: In challenging terrains, constructing structures such as antennas and cable-car masts often requires the use of helicopters to transport loads via ropes. The swinging of the load, exacerbated by wind, impairs positioning accuracy, therefore necessitating precise manual placement by ground crews. This increases costs and the risk of injuries. Challenging this paradigm, we present Geranos: a specialized multirotor Unmanned Aerial Vehicle (UAV) designed to enhance aerial transportation and assembly. Geranos demonstrates exceptional prowess in accurately positioning vertical poles, achieving this through an innovative integration of load transport and precision. Its unique ring design mitigates the impact of high pole inertia, while a lightweight two-part grasping mechanism ensures secure load attachment without active force. With four primary propellers countering gravity and four auxiliary ones enhancing lateral precision, Geranos achieves comprehensive position and attitude control around hovering. Our experimental demonstration mimicking antenna/cable-car mast installations showcases Geranos' ability to stack poles (3 kg, 2 m long) with remarkable sub-5 cm placement accuracy, without the need for manual human intervention.

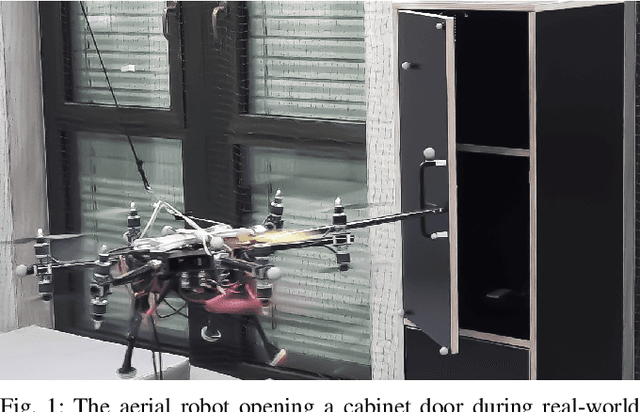

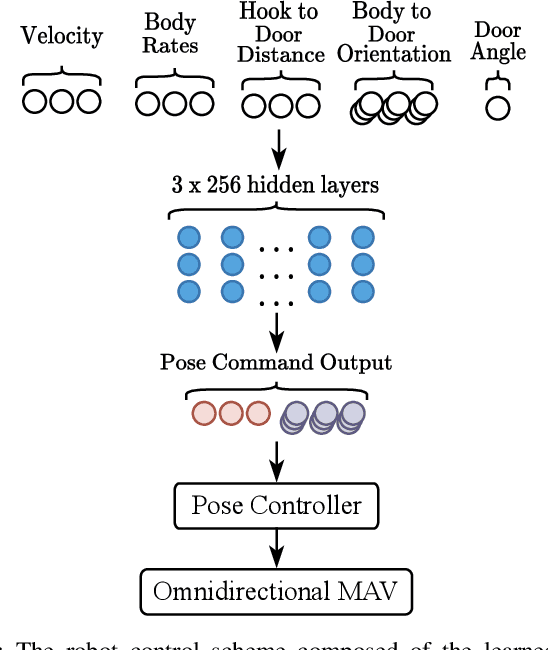

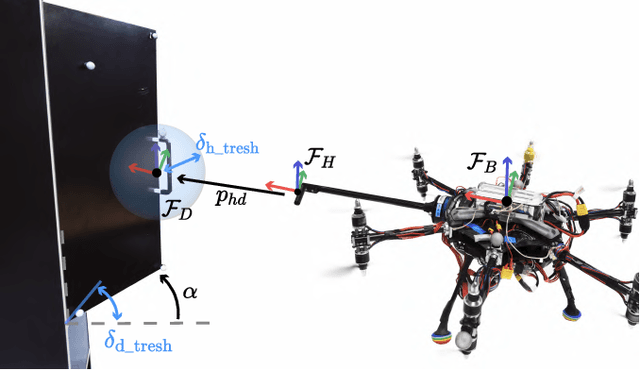

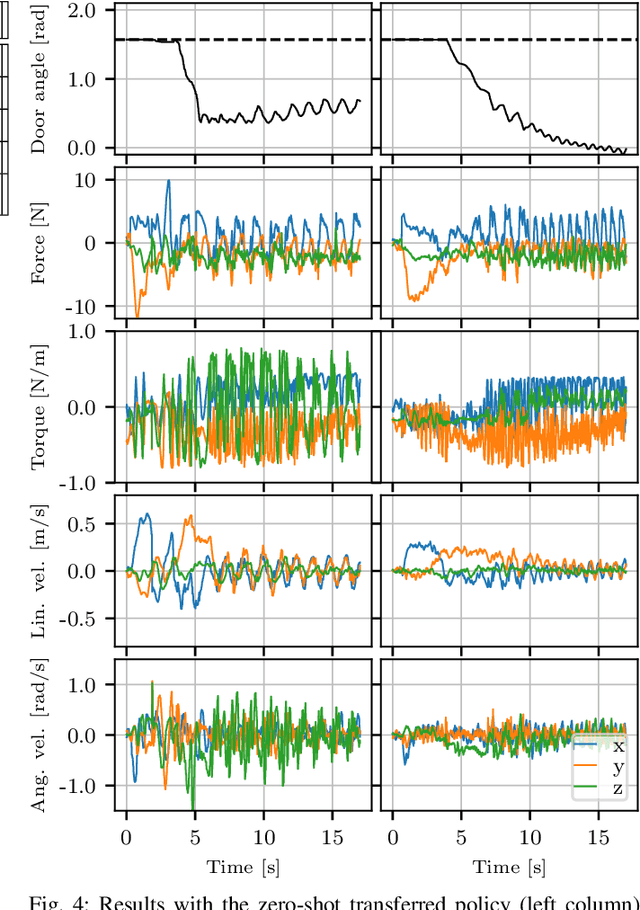

Learning to Open Doors with an Aerial Manipulator

Jul 28, 2023

Abstract: The field of aerial manipulation has seen rapid advances, transitioning from push-and-slide tasks to interaction with articulated objects. So far, when more complex actions are performed, the motion trajectory is usually handcrafted or a result of online optimization methods like Model Predictive Control (MPC) or Model Predictive Path Integral (MPPI) control. However, these methods rely on heuristics or model simplifications to efficiently run on onboard hardware, producing results in acceptable amounts of time. Moreover, they can be sensitive to disturbances and differences between the real environment and its simulated counterpart. In this work, we propose a Reinforcement Learning (RL) approach to learn motion behaviors for a manipulation task while producing policies that are robust to disturbances and modeling errors. Specifically, we train a policy to perform a door-opening task with an Omnidirectional Micro Aerial Vehicle (OMAV). The policy is trained in a physics simulator and experiments are presented both in simulation and running onboard the real platform, investigating the simulation-to-real-world transfer. We compare our method against a state-of-the-art MPPI solution, showing a considerable increase in robustness and speed.

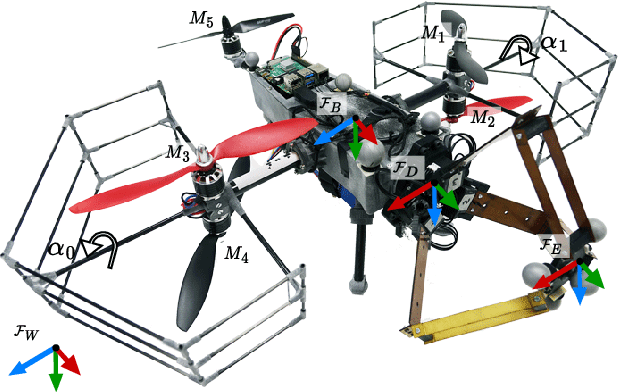

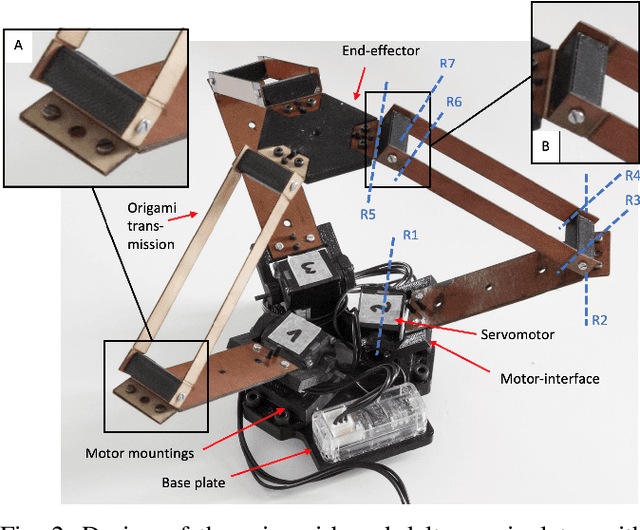

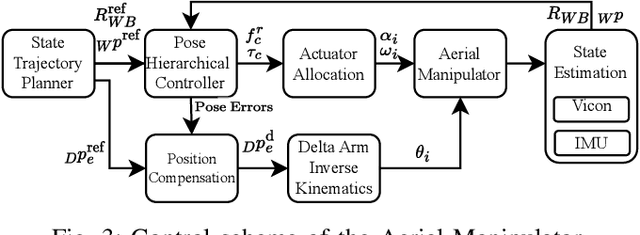

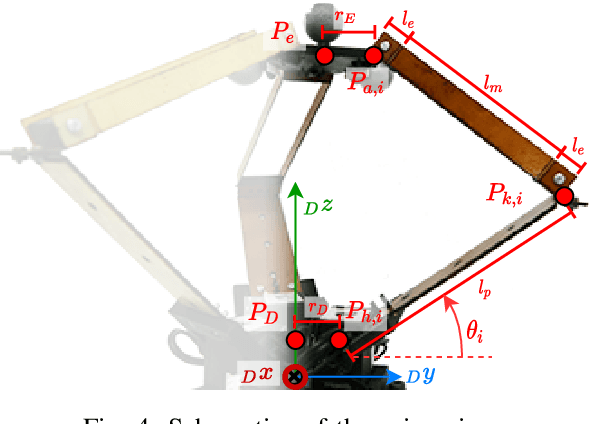

Design and Control of a Micro Overactuated Aerial Robot with an Origami Delta Manipulator

May 03, 2023

Abstract: This work presents the mechanical design and control of a novel small-size and lightweight Micro Aerial Vehicle (MAV) for aerial manipulation. To our knowledge, with a total take-off mass of only 2.0 kg, the proposed system is the most lightweight Aerial Manipulator (AM) that has 8 independently controllable DOF: 5 for the aerial platform and 3 for the articulated arm. We designed the robot to be fully-actuated in the body forward direction. This allows independent pitching and instantaneous force generation, improving the platform's performance during physical interaction. The robotic arm is an origami delta manipulator driven by three servomotors, enabling active motion compensation at the end-effector. Its composite multimaterial links help reduce the weight, while their flexibility allows for compliant aerial interaction with the environment. In particular, the arm's stiffness can be changed according to its configuration. We provide an in-depth discussion of the system design and characterize the stiffness of the delta arm. A control architecture to deal with the platform's overactuation while exploiting the delta arm is presented. Its capabilities are experimentally illustrated both in free flight and physical interaction, highlighting advantages and disadvantages of the origami's folding mechanism.

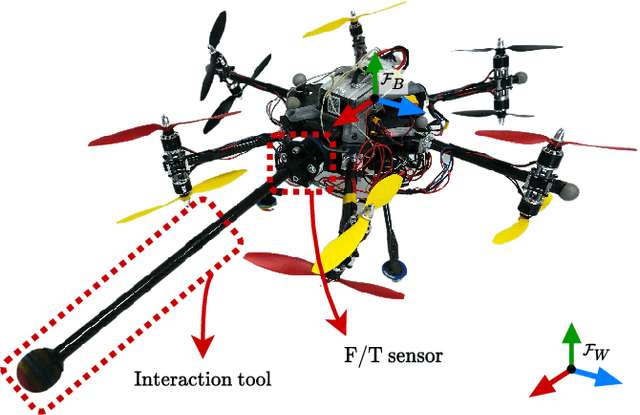

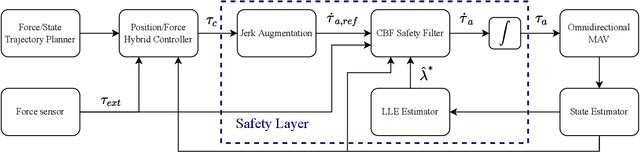

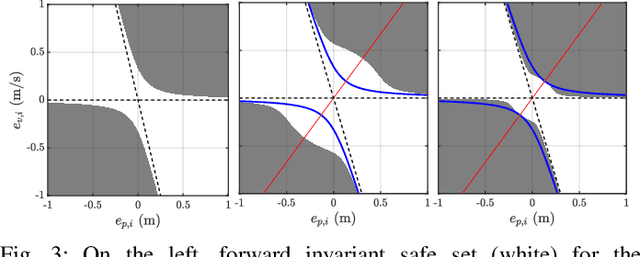

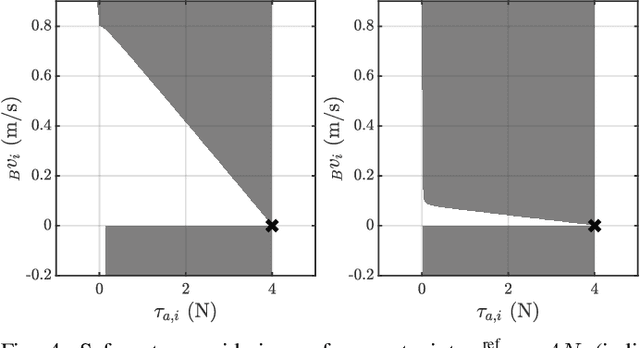

Power-based Safety Layer for Aerial Vehicles in Physical Interaction using Lyapunov Exponents

May 25, 2022

Abstract: As the performance of autonomous systems increases, safety concerns arise, especially when operating in non-structured environments. To deal with these concerns, this work presents a safety layer for mechanical systems that detects and responds to unstable dynamics caused by external disturbances. The safety layer is implemented independently and on top of already present nominal controllers, like pose or wrench tracking, and limits power flow when the system's response would lead to instability. This approach is based on the computation of the Largest Lyapunov Exponent (LLE) of the system's error dynamics, which represents a measure of the dynamics' divergence or convergence rate. By actively computing this metric, divergent and possibly dangerous system behaviors can be promptly detected. The LLE is then used in combination with Control Barrier Functions (CBFs) to impose power limit constraints on a jerk-controlled system. The proposed architecture is experimentally validated on an Omnidirectional Micro Aerial Vehicle (OMAV) both in free flight and interaction tasks.
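
To make the idea of a divergence or convergence rate concrete, the sketch below estimates a finite-time exponential rate from a window of sampled tracking errors by fitting a line to the logarithm of the error norm. It is a crude proxy under stated assumptions, not the LLE computation used in the paper: the function name `divergence_rate`, the window format, and the log-linear fit are all choices made for this example. A positive value suggests the error is growing over the window, a negative value that it is decaying.

```python
import numpy as np


def divergence_rate(errors, dt, eps=1e-12):
    """Estimate an exponential growth rate [1/s] of the error norm.

    errors : array of shape (N, d), one error vector per sample (assumed format)
    dt     : sampling period in seconds
    eps    : small constant to keep the logarithm finite for zero errors
    """
    errors = np.asarray(errors, dtype=float)
    errors = errors.reshape(len(errors), -1)      # accept (N,) or (N, d)
    norms = np.linalg.norm(errors, axis=1)        # ||e(t_k)|| per sample
    t = dt * np.arange(len(norms))
    # If ||e(t)|| ~ c * exp(rate * t), then log||e(t)|| is linear with slope rate.
    slope, _ = np.polyfit(t, np.log(norms + eps), 1)
    return slope


if __name__ == "__main__":
    dt = 0.01
    t = dt * np.arange(100)
    # Synthetic converging error, decaying like exp(-2 t).
    e = np.exp(-2.0 * t)[:, None] * np.array([1.0, 0.5])
    print("estimated rate:", divergence_rate(e, dt))
```

A safety layer along the lines described in the abstract could compare such a rate against a threshold before tightening a power limit; how that coupling with Control Barrier Functions is actually realized is not specified here and is left out of the sketch.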
