Dimitrios Kollias

Behaviour4All: in-the-wild Facial Behaviour Analysis Toolkit

Sep 26, 2024

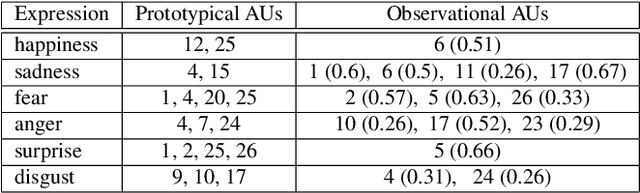

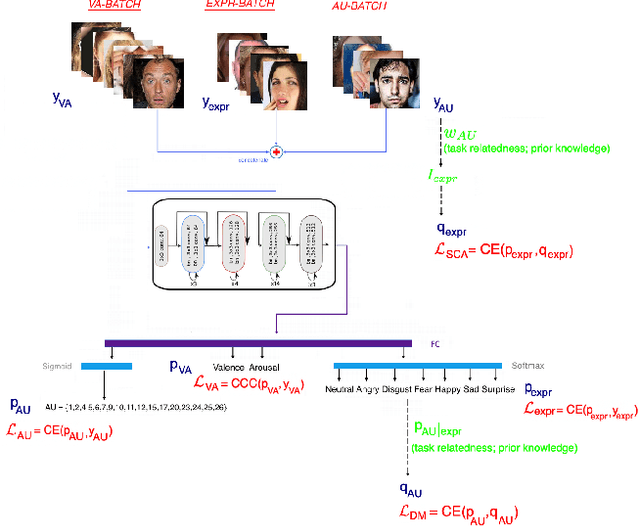

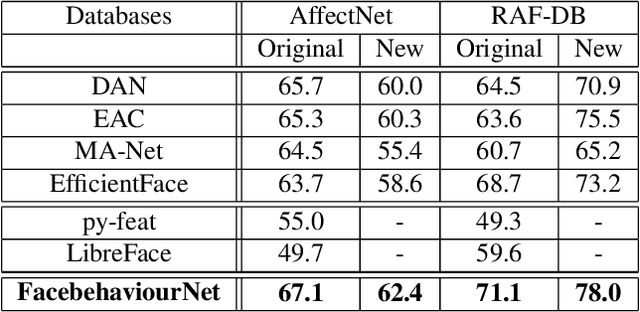

Abstract: In this paper, we introduce Behavior4All, a comprehensive, open-source toolkit for in-the-wild facial behavior analysis that integrates Face Localization, Valence-Arousal Estimation, Basic Expression Recognition and Action Unit Detection within a single framework. Available in both CPU-only and GPU-accelerated versions, Behavior4All leverages 12 large-scale, in-the-wild datasets consisting of over 5 million images from diverse demographic groups. It introduces a novel framework that leverages distribution matching and label co-annotation to address tasks with non-overlapping annotations, encoding prior knowledge of their relatedness. In the largest study of its kind, Behavior4All outperforms both state-of-the-art methods and existing toolkits in overall performance as well as fairness across all databases and tasks. It also demonstrates superior generalizability on unseen databases and on compound expression recognition. Finally, Behavior4All is many times faster than other toolkits.
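
The label co-annotation idea mentioned above can be illustrated with a minimal sketch: an image that carries only an expression label receives soft Action Unit pseudo-labels derived from a table of expression-AU relatedness. The table `EXPR_TO_AUS`, the AU list and the function `co_annotate` are hypothetical and chosen for illustration only; they are not the relatedness prior or code used by Behavior4All.

```python
# Hypothetical expression -> related-AU table (illustrative only,
# not the relatedness prior used by Behavior4All).
EXPR_TO_AUS: dict[str, set[int]] = {
    "happiness": {6, 12},
    "sadness": {1, 4, 15},
    "surprise": {1, 2, 26},
}

# Hypothetical set of AUs the model predicts, in output order.
AU_LIST: list[int] = [1, 2, 4, 6, 12, 15, 26]


def co_annotate(expression: str, au_list: list[int] = AU_LIST) -> list[float]:
    """Derive AU pseudo-labels for an image that only has an expression label.

    AUs listed as related to the expression get 1.0, all others 0.0.
    Expressions missing from the table (e.g. 'neutral') yield all zeros.
    """
    related = EXPR_TO_AUS.get(expression, set())
    return [1.0 if au in related else 0.0 for au in au_list]


print(co_annotate("happiness"))  # pseudo-labels for AU6 and AU12 set to 1.0
```

In a multi-task setting such pseudo-labels would let the AU head receive a training signal on expression-only data; how Behavior4All weights them against real AU annotations is described in the paper, not here.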

MRAC Track 1: 2nd Workshop on Multimodal, Generative and Responsible Affective Computing

Sep 11, 2024

Abstract: With the rapid advancements in multimodal generative technology, Affective Computing research has provoked discussion about the potential consequences of AI systems equipped with emotional intelligence. Affective Computing involves the design, evaluation, and implementation of Emotion AI and related technologies aimed at improving people's lives. Designing a computational model in affective computing requires vast amounts of multimodal data, including RGB images, video, audio, text, and physiological signals. Moreover, Affective Computing research is deeply engaged with ethical considerations at various stages, from training emotionally intelligent models on large-scale human data to deploying these models in specific applications. Fundamentally, the development of any AI system must prioritize its impact on humans, aiming to augment and enhance human abilities rather than replace them, while drawing inspiration from human intelligence in a safe and responsible manner. The MRAC 2024 Track 1 workshop seeks to extend these principles from controlled, small-scale lab environments to real-world, large-scale contexts, emphasizing responsible development. The workshop also aims to highlight the potential implications of generative technology, along with the ethical consequences of its use, to researchers and industry professionals. To the best of our knowledge, this is the first workshop series to comprehensively address the full spectrum of multimodal, generative affective computing from a responsible AI perspective, and this is the second iteration of this workshop. Webpage: https://react-ws.github.io/2024/

1M-Deepfakes Detection Challenge

Sep 11, 2024

Abstract: The detection and localization of deepfake content, particularly when small fake segments are seamlessly mixed with real videos, remains a significant challenge in digital media security. Based on the recently released AV-Deepfake1M dataset, which contains more than 1 million manipulated videos across more than 2,000 subjects, we introduce the 1M-Deepfakes Detection Challenge. This challenge is designed to engage the research community in developing advanced methods for detecting and localizing deepfake manipulations within this large-scale, highly realistic audio-visual dataset. Participants can access the AV-Deepfake1M dataset and are required to submit their inference results, which are evaluated with the metrics of the detection or localization task. The methodologies developed through the challenge will contribute to the development of next-generation deepfake detection and localization systems. Evaluation scripts, baseline models, and accompanying code will be available at https://github.com/ControlNet/AV-Deepfake1M.
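
Temporal localization metrics are commonly built on the intersection-over-union (IoU) between a predicted fake segment and a ground-truth fake segment. The abstract does not list the challenge's exact metrics, so the following is only a sketch of that building block. The function names and the 0.5 threshold are assumptions; the official evaluation scripts in the repository above are authoritative.

```python
def temporal_iou(pred: tuple[float, float], gt: tuple[float, float]) -> float:
    """IoU of two [start, end] time segments (e.g. in seconds)."""
    p_start, p_end = pred
    g_start, g_end = gt
    # Length of the overlap, zero if the segments are disjoint.
    inter = max(0.0, min(p_end, g_end) - max(p_start, g_start))
    union = (p_end - p_start) + (g_end - g_start) - inter
    return inter / union if union > 0 else 0.0


def is_true_positive(
    pred: tuple[float, float],
    gt_segments: list[tuple[float, float]],
    threshold: float = 0.5,  # hypothetical IoU threshold
) -> bool:
    """A predicted segment counts as a hit if it overlaps any fake segment enough."""
    return any(temporal_iou(pred, gt) >= threshold for gt in gt_segments)


print(temporal_iou((0.0, 2.0), (1.0, 3.0)))  # overlap 1 s, union 3 s
```

Averaging hits over several thresholds and confidence-ranked predictions would give AP-style scores; that aggregation is left out here.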

Rethinking Affect Analysis: A Protocol for Ensuring Fairness and Consistency

Aug 07, 2024

Abstract: Evaluating affect analysis methods presents challenges due to inconsistencies in database partitioning and evaluation protocols, leading to unfair and biased results. Previous studies claim continuous performance improvements, but our findings challenge such assertions. Using these insights, we propose a unified protocol for database partitioning that ensures fairness and comparability. We provide detailed demographic annotations (in terms of race, gender and age), evaluation metrics, and a common framework for expression recognition, action unit detection and valence-arousal estimation. We also rerun the methods with the new protocol and introduce new leaderboards to encourage future research in affect recognition with a fairer comparison. Our annotations, code, and pre-trained models are available on GitHub: https://github.com/dkollias/Fair-Consistent-Affect-Analysis

SAM2CLIP2SAM: Vision Language Model for Segmentation of 3D CT Scans for Covid-19 Detection

Jul 23, 2024

Abstract: This paper presents a new approach for effective segmentation of images that can be integrated into any model and methodology; the paradigm we choose is classification of medical images (3-D chest CT scans) for Covid-19 detection. Our approach includes a combination of vision-language models that segment the CT scans, which are then fed to a deep neural architecture, named RACNet, for Covid-19 detection. In particular, a novel framework, named SAM2CLIP2SAM, is introduced for segmentation that leverages the strengths of both Segment Anything Model (SAM) and Contrastive Language-Image Pre-Training (CLIP) to accurately segment the right and left lungs in CT scans, subsequently feeding these segmented outputs into RACNet for classification of COVID-19 and non-COVID-19 cases. First, SAM produces multiple part-based segmentation masks for each slice in the CT scan; then CLIP selects only the masks that are associated with the regions of interest (ROIs), i.e., the right and left lungs; finally, SAM is given these ROIs as prompts and generates the final segmentation mask for the lungs. Experiments on two Covid-19 annotated databases illustrate the improved performance obtained when our method is used for segmentation of the CT scans.
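
The middle step of the pipeline described above (keep only the masks that match the lungs, then turn them into prompts) can be sketched with NumPy alone. This assumes the CLIP image-text similarity of each candidate mask has already been computed and is passed in as `scores`; the functions `select_roi_masks` and `mask_to_box` and the choice of box prompts are hypothetical and not the authors' code.

```python
import numpy as np


def select_roi_masks(masks: np.ndarray, scores: np.ndarray, k: int = 2) -> np.ndarray:
    """Keep the k candidate masks with the highest (assumed) CLIP scores.

    masks:  (N, H, W) boolean array of SAM part masks for one CT slice.
    scores: (N,) similarity of each masked region to a lung text prompt.
    """
    order = np.argsort(scores)[::-1][:k]  # indices of the k best-scoring masks
    return masks[order]


def mask_to_box(mask: np.ndarray) -> tuple[int, int, int, int]:
    """Tight (x_min, y_min, x_max, y_max) box around a mask, usable as a box prompt."""
    ys, xs = np.nonzero(mask)
    if xs.size == 0:
        raise ValueError("empty mask has no bounding box")
    return int(xs.min()), int(ys.min()), int(xs.max()), int(ys.max())


# Toy example: three candidate masks on a 5x5 slice.
masks = np.zeros((3, 5, 5), dtype=bool)
masks[0, 1:3, 1:4] = True
masks[1, 0, 0] = True
masks[2, 2:5, 0:2] = True
scores = np.array([0.9, 0.1, 0.8])
boxes = [mask_to_box(m) for m in select_roi_masks(masks, scores)]
print(boxes)
```

In the described pipeline these ROIs would then be given back to SAM as prompts; that call is omitted because it depends on the SAM release and interface used.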

Robust Facial Reactions Generation: An Emotion-Aware Framework with Modality Compensation

Jul 23, 2024

Abstract: The objective of the Multiple Appropriate Facial Reaction Generation (MAFRG) task is to produce contextually appropriate and diverse listener facial behavioural responses based on the multimodal behavioural data of the conversational partner (i.e., the speaker). Current methodologies typically assume continuous availability of speech and facial modality data, neglecting real-world scenarios where these data may be intermittently unavailable, which often results in model failures. Furthermore, despite utilising advanced deep learning models to extract information from the speaker's multimodal inputs, these models fail to adequately leverage the speaker's emotional context, which is vital for eliciting appropriate facial reactions from human listeners. To address these limitations, we propose an Emotion-aware Modality Compensatory (EMC) framework. This versatile solution can be seamlessly integrated into existing models, thereby preserving their advantages while significantly enhancing performance and robustness in scenarios with missing modalities. Our framework ensures resilience when faced with missing modality data through the Compensatory Modality Alignment (CMA) module. It also generates more appropriate emotion-aware reactions via the Emotion-aware Attention (EA) module, which incorporates the speaker's emotional information throughout the entire encoding and decoding process. Experimental results demonstrate that our framework improves the appropriateness metric FRCorr by an average of 57.2% compared to the original model structure. When speech modality data is missing, the appropriateness of the generated reactions still improves, and when facial data is missing, performance degrades only minimally.

7th ABAW Competition: Multi-Task Learning and Compound Expression Recognition

Jul 04, 2024

Abstract: This paper describes the 7th Affective Behavior Analysis in-the-wild (ABAW) Competition, which is part of the respective Workshop held in conjunction with ECCV 2024. The 7th ABAW Competition addresses novel challenges in understanding human expressions and behaviors, which are crucial for the development of human-centered technologies. The Competition comprises two sub-challenges: i) Multi-Task Learning (the goal is to learn, in a multi-task learning setting, to simultaneously estimate two continuous affect dimensions, valence and arousal; recognise among the mutually exclusive classes of the 7 basic expressions and 'other'; and detect 12 Action Units); and ii) Compound Expression Recognition (the target is to recognise among 7 mutually exclusive compound expression classes). s-Aff-Wild2, a static version of the A/V Aff-Wild2 database that contains annotations for valence-arousal, expressions and Action Units, is used for the Multi-Task Learning Challenge; a part of C-EXPR-DB, an A/V in-the-wild database with compound expression annotations, is used for the Compound Expression Recognition Challenge. In this paper, we introduce the two challenges, detailing their datasets and the protocols followed for each. We also outline the evaluation metrics and highlight the baseline systems and their results. Additional information about the competition can be found at https://affective-behavior-analysis-in-the-wild.github.io/7th.
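
Continuous valence and arousal predictions in ABAW are evaluated with the Concordance Correlation Coefficient (CCC), CCC = 2·cov(x, y) / (var(x) + var(y) + (mean(x) − mean(y))²). The abstract does not spell out the metrics, so the block below is only a minimal sketch of CCC itself; how it is combined with the expression and AU F1 scores into the overall Multi-Task Learning score is defined in the paper.

```python
import numpy as np


def ccc(pred, gt) -> float:
    """Concordance Correlation Coefficient between two 1-D sequences.

    Uses population (biased) variance and covariance, so
    CCC = 2*cov / (var_pred + var_gt + (mean_pred - mean_gt)**2).
    """
    pred = np.asarray(pred, dtype=float)
    gt = np.asarray(gt, dtype=float)
    mp, mg = pred.mean(), gt.mean()
    cov = ((pred - mp) * (gt - mg)).mean()
    denom = pred.var() + gt.var() + (mp - mg) ** 2
    if denom == 0.0:
        # Both sequences are constant and equal: CCC is undefined.
        raise ValueError("CCC is undefined for two identical constant sequences")
    return float(2.0 * cov / denom)


# A constant offset lowers CCC even though Pearson correlation would be 1.
print(ccc([1.0, 2.0, 3.0], [2.0, 3.0, 4.0]))
```

Unlike Pearson correlation, CCC penalizes shifts in mean and scale, which is why a prediction offset by a constant scores below 1.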

Can Machine Learning Assist in Diagnosis of Primary Immune Thrombocytopenia? A feasibility study

May 31, 2024

Abstract: Primary Immune thrombocytopenia (ITP) is a rare autoimmune disease characterised by immune-mediated destruction of peripheral blood platelets, leading to low platelet counts and bleeding. The diagnosis and effective management of ITP are challenging because there is no established test to confirm the disease and no biomarker with which one can predict the response to treatment and outcome. In this work we conduct a feasibility study to check whether machine learning can be applied effectively to the diagnosis of ITP using routine blood tests and demographic data in a non-acute outpatient setting. Various ML models, including Logistic Regression, Support Vector Machine, k-Nearest Neighbor, Decision Tree and Random Forest, were applied to data from the UK Adult ITP Registry and a general hematology clinic. Two different approaches were investigated: a demographic-unaware and a demographic-aware one. We conduct extensive experiments to evaluate the predictive performance of these models and approaches, as well as their bias. The results revealed that Decision Tree and Random Forest models were both superior and fair, achieving nearly perfect predictive and fairness scores, with platelet count identified as the most significant variable. Models not provided with demographic information performed better in terms of predictive accuracy but showed lower fairness scores, illustrating a trade-off between predictive performance and fairness.
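
The trade-off described above requires a per-group view of performance. The abstract does not name the fairness metric used, so the following sketch assumes a simple, hypothetical score: the worst-group accuracy divided by the best-group accuracy, where 1.0 means parity. The function names are illustrative only.

```python
from collections import defaultdict


def per_group_accuracy(y_true, y_pred, groups) -> dict:
    """Accuracy computed separately for each demographic group label."""
    correct: dict = defaultdict(int)
    total: dict = defaultdict(int)
    for t, p, g in zip(y_true, y_pred, groups):
        total[g] += 1
        correct[g] += int(t == p)
    return {g: correct[g] / total[g] for g in total}


def fairness_ratio(y_true, y_pred, groups) -> float:
    """Hypothetical fairness score: worst-group / best-group accuracy (1.0 = parity)."""
    acc = per_group_accuracy(y_true, y_pred, groups)
    best = max(acc.values())
    return min(acc.values()) / best if best > 0 else 1.0


# Toy example: perfect on group "F", half right on group "M".
print(fairness_ratio([1, 0, 1, 0], [1, 0, 0, 0], ["F", "F", "M", "M"]))
```

A model can raise overall accuracy while lowering this ratio, which is the kind of trade-off the study reports between demographic-unaware and demographic-aware models.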

Common Corruptions for Enhancing and Evaluating Robustness in Air-to-Air Visual Object Detection

May 16, 2024

Abstract: The main barrier to achieving fully autonomous flights lies in autonomous aircraft navigation. Managing non-cooperative traffic presents the most important challenge in this problem. The most efficient strategy for handling non-cooperative traffic is based on monocular video processing through deep learning models. This study contributes to the literature on vision-based deep learning aircraft detection and tracking by investigating the impact of data corruption arising from environmental and hardware conditions on the effectiveness of these methods. More specifically, we designed 7 types of common corruptions for camera inputs, taking into account real-world flight conditions. By applying these corruptions to the Airborne Object Tracking (AOT) dataset, we constructed the first robustness benchmark dataset for air-to-air aerial object detection, named AOT-C. The corruptions included in this dataset cover a wide range of challenging conditions such as adverse weather and sensor noise. The second main contribution of this letter is an extensive experimental evaluation involving 8 diverse object detectors to explore the degradation in performance under escalating levels of corruption (domain shifts). Based on the evaluation results, the key observations are the following: 1) one-stage detectors of the YOLO family demonstrate better robustness; 2) Transformer-based and multi-stage detectors like Faster R-CNN are extremely vulnerable to corruptions; 3) robustness against corruptions is related to the generalization ability of models. The third main contribution is showing that fine-tuning on our augmented synthetic data improves the generalisation ability of the object detector in real-world flight experiments.
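
Corruption benchmarks of this kind apply each corruption at several increasing severity levels. The sketch below shows one plausible sensor-noise corruption, additive Gaussian noise, with five severities. The noise standard deviations in `NOISE_STD` and the function name are hypothetical and are not the parameters used to build AOT-C.

```python
from typing import Optional

import numpy as np

# Hypothetical noise std (as a fraction of full pixel range) for severities 1..5.
NOISE_STD = [0.02, 0.04, 0.06, 0.08, 0.10]


def gaussian_noise(
    image: np.ndarray, severity: int, rng: Optional[np.random.Generator] = None
) -> np.ndarray:
    """Add zero-mean Gaussian noise to a uint8 image at the given severity (1-5)."""
    if not 1 <= severity <= len(NOISE_STD):
        raise ValueError(f"severity must be in 1..{len(NOISE_STD)}")
    if rng is None:
        rng = np.random.default_rng()
    x = image.astype(np.float64) / 255.0  # scale to [0, 1]
    x = x + rng.normal(0.0, NOISE_STD[severity - 1], size=x.shape)
    # Clip back to the valid range and return a uint8 image of the same shape.
    return (np.clip(x, 0.0, 1.0) * 255.0).round().astype(np.uint8)


img = np.full((32, 32, 3), 128, dtype=np.uint8)  # flat grey test frame
noisy = [gaussian_noise(img, s, np.random.default_rng(0)) for s in range(1, 6)]
```

Evaluating a detector on each element of `noisy` (and on the other corruption types) would trace how performance degrades as severity increases.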

Bridging the Gap: Protocol Towards Fair and Consistent Affect Analysis

May 16, 2024

Abstract: The increasing integration of machine learning algorithms in daily life underscores the critical need for fairness and equity in their deployment. As these technologies play a pivotal role in decision-making, addressing biases across diverse subpopulation groups, including age, gender, and race, becomes paramount. Automatic affect analysis, at the intersection of physiology, psychology, and machine learning, has seen significant development. However, existing databases and methodologies lack uniformity, leading to biased evaluations. This work addresses these issues by analyzing six affective databases, annotating demographic attributes, and proposing a common protocol for database partitioning. Emphasis is placed on fairness in evaluations. Extensive experiments with baseline and state-of-the-art methods demonstrate the impact of these changes, revealing the inadequacy of prior assessments. The findings underscore the importance of considering demographic attributes in affect analysis research and provide a foundation for more equitable methodologies. Our annotations, code and pre-trained models are available at: https://github.com/dkollias/Fair-Consistent-Affect-Analysis
