Chin-Teng Lin

Institution One

Inter- and Intra-Subject Variability in EEG: A Systematic Survey

Feb 01, 2026

Abstract: Electroencephalography (EEG) underpins neuroscience, clinical neurophysiology, and brain-computer interfaces (BCIs), yet pronounced inter- and intra-subject variability limits reliability, reproducibility, and translation. This systematic review examines studies that quantified or modeled EEG variability across resting-state, event-related potential (ERP), and task-related/BCI paradigms (including motor imagery and SSVEP) in healthy and clinical cohorts. Across paradigms, inter-subject differences are typically larger than within-subject fluctuations, but both affect inference and model generalization. Stability is feature-dependent: alpha-band measures and individual alpha peak frequency are often relatively reliable, whereas higher-frequency and many connectivity-derived metrics show more heterogeneous reliability. ERP reliability varies by component, with P300 measures frequently showing moderate-to-good stability. We summarize the major sources of variability (biological, state-related, technical, and analytical), review common quantification and modeling approaches (e.g., ICC, CV, SNR, generalizability theory, and multivariate/learning-based methods), and provide recommendations for study design, reporting, and harmonization. Overall, EEG variability should be treated both as a practical constraint to manage and as a meaningful signal to leverage for precision neuroscience and robust neurotechnology.
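The survey lists the intraclass correlation coefficient (ICC) and the coefficient of variation (CV) among common reliability measures. As an illustration only, the sketch below computes ICC(3,1) (two-way mixed, consistency, single measurement, in the Shrout and Fleiss scheme) and a mean within-subject CV from a subjects × sessions table of one EEG feature. The ICC form, the function names, and the toy data are assumptions, not taken from the survey.

```python
from statistics import mean, stdev


def icc_3_1(scores):
    """ICC(3,1): two-way mixed effects, consistency, single measurement.

    scores[i][j] is one EEG feature (e.g. alpha power) for subject i in session j.
    """
    n = len(scores)        # subjects
    k = len(scores[0])     # sessions
    grand = mean(v for row in scores for v in row)
    row_means = [mean(row) for row in scores]
    col_means = [mean(row[j] for row in scores) for j in range(k)]

    ss_total = sum((v - grand) ** 2 for row in scores for v in row)
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)  # between-subject
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)  # between-session
    ss_err = ss_total - ss_rows - ss_cols                   # residual (within-subject noise)

    ms_rows = ss_rows / (n - 1)
    ms_err = ss_err / ((n - 1) * (k - 1))
    return (ms_rows - ms_err) / (ms_rows + (k - 1) * ms_err)


def mean_within_subject_cv(scores):
    """Average over subjects of (sample SD across sessions) / (mean across sessions)."""
    return mean(stdev(row) / mean(row) for row in scores)
```

For example, `icc_3_1([[1, 2], [2, 3], [3, 4]])` returns 1.0: every subject shifts by the same amount between sessions, so the residual term vanishes under the consistency definition even though session means differ.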

AEGIS: Human Attention-based Explainable Guidance for Intelligent Vehicle Systems

Apr 08, 2025

Abstract: Improving decision-making capabilities in Autonomous Intelligent Vehicles (AIVs) has been an active research topic in recent years. Despite advances, training machines to capture the regions of interest needed for comprehensive scene understanding, as human perception and reasoning do, remains a significant challenge. This study introduces a novel framework, Human Attention-based Explainable Guidance for Intelligent Vehicle Systems (AEGIS). AEGIS uses a pre-trained human attention model, derived from eye-tracking data, to guide reinforcement learning (RL) models in identifying the critical regions of interest for decision-making. By collecting 1.2 million frames from 20 participants across six scenarios, AEGIS pre-trains a model to predict human attention patterns.

Q-MARL: A quantum-inspired algorithm using neural message passing for large-scale multi-agent reinforcement learning

Mar 10, 2025

Abstract: Inspired by a graph-based technique in quantum chemistry that predicts molecular properties from the positions of atoms within molecules in three-dimensional space, we present Q-MARL, a completely decentralised learning architecture that supports very large-scale multi-agent reinforcement learning scenarios without the need for strong assumptions such as common rewards or agent order. The key is to treat each agent relative to its surrounding agents in an environment that is presumed to change dynamically. Hence, at each time step, an agent is the centre of its own neighbourhood and also a neighbour to many other agents. Each role is formulated as a sub-graph, and each sub-graph is used as a training sample. A message-passing neural network supports full-scale vertex and edge interaction within a local neighbourhood, while a parameter governing the depth of the sub-graphs eases the training burden. During testing, an agent's actions are locally ensembled across all the sub-graphs that contain it, resulting in robust decisions. Where other approaches struggle to manage 50 agents, Q-MARL can easily marshal thousands. A detailed theoretical analysis proves improvement and convergence, and simulations of typical collaborative and competitive scenarios show dramatically faster training and reduced training losses.
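A rough sketch of the sub-graph construction and local ensembling described above, assuming agents are linked when they lie within a fixed radius and that a policy returns per-action scores for every member of a sub-graph. The `policy` callable is a hypothetical stand-in for Q-MARL's message-passing network; the radius rule, the BFS depth cut-off, and simple score averaging are likewise assumptions.

```python
import math
from collections import deque


def neighbours(positions, radius):
    """Adjacency sets: agents within `radius` of each other are connected."""
    n = len(positions)
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for j in range(i + 1, n):
            if math.dist(positions[i], positions[j]) <= radius:
                adj[i].add(j)
                adj[j].add(i)
    return adj


def ego_subgraph(adj, centre, depth):
    """Agents reachable from `centre` within `depth` hops (breadth-first search)."""
    seen = {centre: 0}
    queue = deque([centre])
    while queue:
        u = queue.popleft()
        if seen[u] == depth:  # depth limit reached: do not expand further
            continue
        for v in adj[u]:
            if v not in seen:
                seen[v] = seen[u] + 1
                queue.append(v)
    return set(seen)


def ensemble_actions(adj, depth, policy, n_actions):
    """Average each agent's action scores over every sub-graph that contains it,
    then pick the highest-scoring action per agent."""
    totals = {i: [0.0] * n_actions for i in adj}
    counts = {i: 0 for i in adj}
    for centre in adj:  # every agent centres its own sub-graph
        members = ego_subgraph(adj, centre, depth)
        scores = policy(centre, members)  # hypothetical: {agent: [score per action]}
        for agent, s in scores.items():
            totals[agent] = [t + x for t, x in zip(totals[agent], s)]
            counts[agent] += 1
    return {
        i: max(range(n_actions), key=lambda a: totals[i][a] / counts[i])
        for i in adj
    }
```

Because each agent appears in its own sub-graph and in the sub-graphs of nearby agents, its final action pools several local views, which is the intuition behind the "locally ensembled" decisions in the abstract.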

Multi-Agent Coordination across Diverse Applications: A Survey

Feb 21, 2025

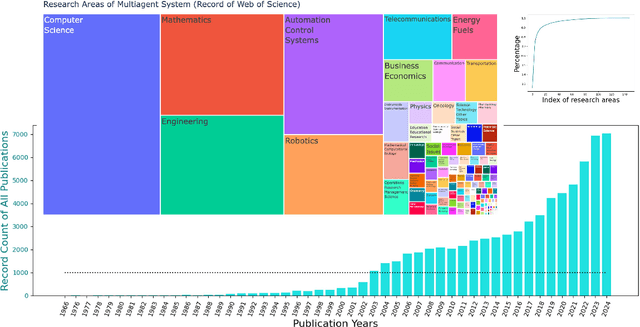

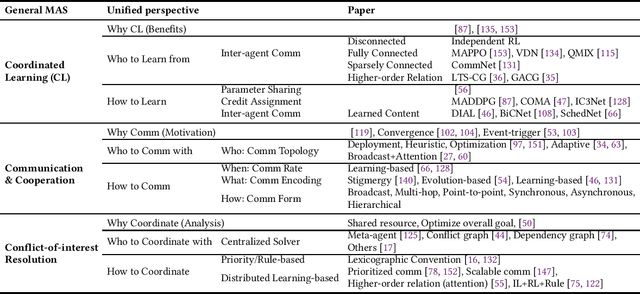

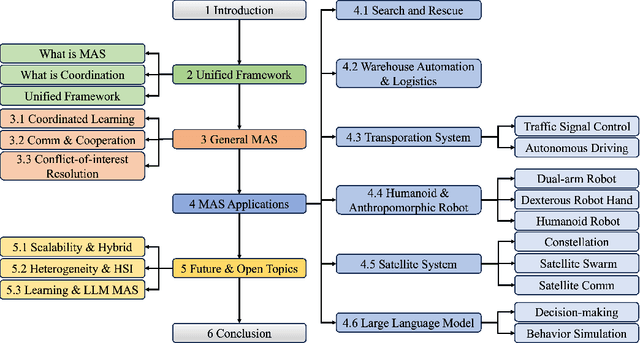

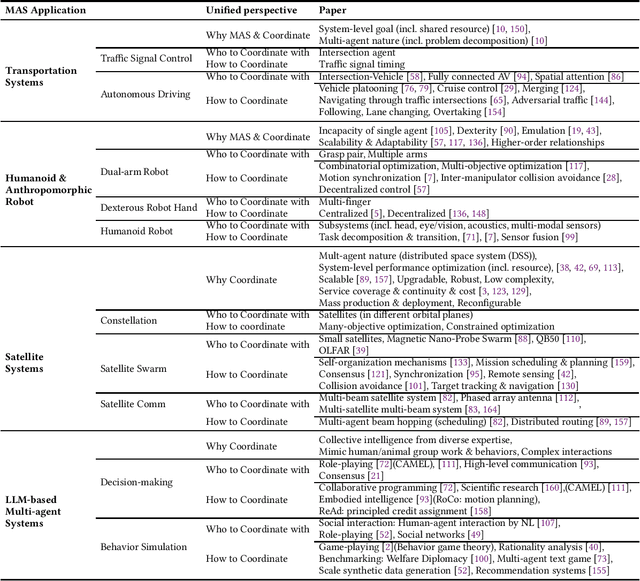

Abstract: Multi-agent coordination studies the mechanisms underlying the growing spread of diverse multi-agent systems (MAS) and has received increasing attention, driven by the expansion of emerging applications and rapid AI advances. This survey outlines the current state of coordination research across applications through a unified understanding that answers four fundamental coordination questions: (1) what is coordination; (2) why coordinate; (3) with whom to coordinate; and (4) how to coordinate. Our purpose is to explore existing ideas and expertise in coordination and their connections across diverse applications, while identifying and highlighting emerging and promising research directions. First, general coordination problems that are essential to varied applications are identified and analyzed. Second, a number of MAS applications are surveyed, ranging from widely studied domains, e.g., search and rescue, warehouse automation and logistics, and transportation systems, to emerging fields including humanoid and anthropomorphic robots, satellite systems, and large language models (LLMs). Finally, open challenges concerning the scalability, heterogeneity, and learning mechanisms of MAS are analyzed and discussed. In particular, we identify the hybridization of hierarchical and decentralized coordination, human-MAS coordination, and LLM-based MAS as promising future directions.

Can ChatGPT Diagnose Alzheimer's Disease?

Feb 10, 2025

Abstract: Can ChatGPT diagnose Alzheimer's Disease (AD)? AD is a devastating neurodegenerative condition that affects approximately 1 in 9 individuals aged 65 and older, profoundly impairing memory and cognitive function. This paper utilises 9,300 electronic health records (EHRs) with data from Magnetic Resonance Imaging (MRI) and cognitive tests to address an intriguing question: as a general-purpose task solver, can ChatGPT accurately detect AD using EHRs? We present an in-depth evaluation of ChatGPT using a black-box approach with zero-shot and multi-shot methods. This study examines ChatGPT's capability to analyse MRI and cognitive test results, as well as its potential as a diagnostic tool for AD. By automating aspects of the diagnostic process, this research opens the way to a transformative approach for the healthcare system, particularly in addressing disparities in resource-limited regions where AD specialists are scarce. It thus lays a foundation for a promising early-detection method that supports individuals with timely interventions, which are paramount for Quality of Life (QoL).

Neural Spelling: A Spell-Based BCI System for Language Neural Decoding

Jan 29, 2025

Abstract: Brain-computer interfaces (BCIs) offer a promising avenue for translating neural activity directly into text, eliminating the need for physical actions. However, existing non-invasive BCI systems have not covered the entire alphabet, limiting their practicality. In this paper, we propose a novel non-invasive EEG-based BCI system with a Curriculum-based Neural Spelling Framework, which first recognizes all 26 letters of the alphabet by decoding neural signals associated with handwriting and then applies Generative AI (GenAI) to enhance spell-based neural language decoding. Our approach combines the ease of handwriting with the accessibility of EEG technology, using advanced neural decoding algorithms and pre-trained large language models (LLMs) to translate EEG patterns into text with high accuracy. This system shows how GenAI can improve the performance of a typical spelling-based neural language decoding task and addresses the limitations of previous methods, offering a scalable and user-friendly solution for individuals with communication impairments and thereby expanding inclusive communication options.

Contrastive Masked Autoencoders for Character-Level Open-Set Writer Identification

Jan 21, 2025

Abstract: In digital forensics and document authentication, writer identification plays a crucial role in determining the authors of documents from their handwriting styles. The primary challenge in writer identification (writer-id) is the "open-set scenario", where the goal is to accurately recognize writers not seen during model training. Representation learning is key to overcoming this challenge, because it can capture distinctive handwriting features and thereby recognize styles not encountered during training. Building on this concept, this paper introduces Contrastive Masked Auto-Encoders (CMAE) for Character-level Open-Set Writer Identification. We merge Masked Auto-Encoders (MAE) with Contrastive Learning (CL) so that MAE captures sequential information while CL distinguishes diverse handwriting styles. Our model achieves state-of-the-art (SOTA) results on the CASIA online handwriting dataset, reaching a precision rate of 89.7%. Our study advances universal writer-id with a sophisticated representation learning approach, contributing to the evolving landscape of digital handwriting analysis and catering to the demands of an increasingly interconnected world.
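To make the MAE + CL combination concrete, here is a minimal sketch of a joint objective: a reconstruction error on masked positions plus an InfoNCE-style contrastive term over paired embeddings. InfoNCE with cosine similarity, the `temperature`, and the weight `lam` are assumptions; the abstract does not specify CMAE's exact losses, and all function names are hypothetical.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def info_nce(anchors, positives, temperature=0.1):
    """Contrastive term: anchors[i] should score highest against positives[i]
    relative to every other positives[j] (InfoNCE with cosine similarity)."""
    total = 0.0
    for i, a in enumerate(anchors):
        logits = [cosine(a, p) / temperature for p in positives]
        m = max(logits)  # subtract the max for a numerically stable log-sum-exp
        log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
        total += log_sum_exp - logits[i]  # equals -log softmax(logits)[i]
    return total / len(anchors)


def masked_mse(pred, target, mask):
    """MAE-style reconstruction error, averaged over masked positions only."""
    idx = [i for i, masked in enumerate(mask) if masked]
    return sum((pred[i] - target[i]) ** 2 for i in idx) / len(idx)


def cmae_loss(pred, target, mask, anchors, positives, lam=1.0):
    """Hypothetical joint objective: reconstruction + lam * contrastive."""
    return masked_mse(pred, target, mask) + lam * info_nce(anchors, positives)
```

In this reading, the reconstruction term pushes the encoder to model the sequential structure of handwriting, while the contrastive term pulls samples from the same writer together and pushes different writers apart, which is what open-set identification relies on.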

HFGaussian: Learning Generalizable Gaussian Human with Integrated Human Features

Nov 05, 2024

Abstract: Recent advances in radiance field rendering show promising results in 3D scene representation, where Gaussian splatting-based techniques have emerged as state-of-the-art owing to their quality and efficiency. Gaussian splatting is widely used for various applications, including 3D human representation. However, previous 3D Gaussian splatting methods either use parametric body models as additional information or fail to provide any underlying structure, such as human biomechanical features, which are essential for many applications. In this paper, we present a novel approach called HFGaussian that can estimate novel views and human features, such as the 3D skeleton, 3D key points, and dense pose, from sparse input images in real time at 25 FPS. The proposed method leverages a generalizable Gaussian splatting technique to represent the human subject and its associated features, enabling efficient and generalizable reconstruction. By incorporating a pose regression network and the feature splatting technique with Gaussian splatting, HFGaussian demonstrates improved capabilities over existing 3D human methods, showcasing the potential of 3D human representations with integrated biomechanics. We thoroughly evaluate HFGaussian against the latest state-of-the-art techniques in human Gaussian splatting and pose estimation, demonstrating its real-time, state-of-the-art performance.

Improving Trust Estimation in Human-Robot Collaboration Using Beta Reputation at Fine-grained Timescales

Nov 04, 2024

Abstract: When interacting with each other, humans adjust their behavior based on perceived trust. To achieve similar adaptability, robots must accurately estimate human trust at sufficiently fine-grained timescales during a human-robot collaboration task. A beta reputation is a popular way to formalize a mathematical estimate of human trust. However, it relies on binary performance, which updates trust estimates only after each task concludes. Additionally, the usual way to build a performance indicator is to manually craft a reward function, which is labor-intensive and time-consuming. These limitations prevent efficiently capturing continuous changes in trust at finer timescales throughout the collaboration task. Therefore, this paper presents a new framework for estimating human trust using a beta reputation at fine-grained timescales. To achieve granularity in the beta reputation, we use continuous reward values to update trust estimates at each timestep of a task. We construct a continuous reward function using maximum entropy optimization, eliminating the need for the laborious specification of a performance indicator. The proposed framework improves trust estimates by increasing accuracy, eliminates the need to manually craft a reward function, and advances toward more intelligent robots. The source code is publicly available at https://github.com/resuldagdanov/robot-learning-human-trust
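A minimal sketch of a beta-reputation trust estimate updated at every timestep from a continuous reward, assuming the reward has already been normalized to [0, 1] (the paper learns its reward function with maximum entropy optimization; here the reward is simply an input). Splitting each reward into `reward` units of positive and `1 - reward` units of negative evidence, and the optional `decay` forgetting factor, are illustrative assumptions rather than the paper's confirmed formulation.

```python
from dataclasses import dataclass


@dataclass
class BetaTrust:
    alpha: float = 1.0  # positive-evidence pseudo-count (uniform Beta(1, 1) prior)
    beta: float = 1.0   # negative-evidence pseudo-count
    decay: float = 1.0  # hypothetical forgetting factor; 1.0 means no forgetting

    def update(self, reward: float) -> float:
        """Fold one timestep's continuous reward in [0, 1] into the evidence
        counts and return the updated expected trust."""
        if not 0.0 <= reward <= 1.0:
            raise ValueError("reward must lie in [0, 1]")
        self.alpha = self.decay * self.alpha + reward
        self.beta = self.decay * self.beta + (1.0 - reward)
        return self.trust

    @property
    def trust(self) -> float:
        """Mean of Beta(alpha, beta), used as the trust estimate."""
        return self.alpha / (self.alpha + self.beta)
```

With rewards restricted to 0 or 1 and one update per task, this reduces to the classic binary beta reputation; feeding a fractional reward at every timestep is what lets the estimate move during a task rather than only after it ends.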

A Self-Constructing Multi-Expert Fuzzy System for High-dimensional Data Classification

Oct 17, 2024

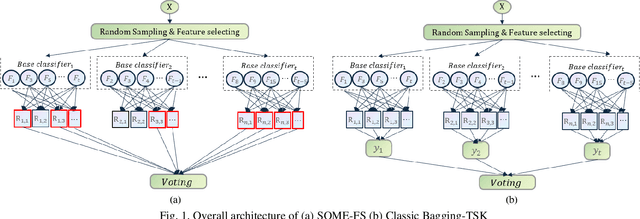

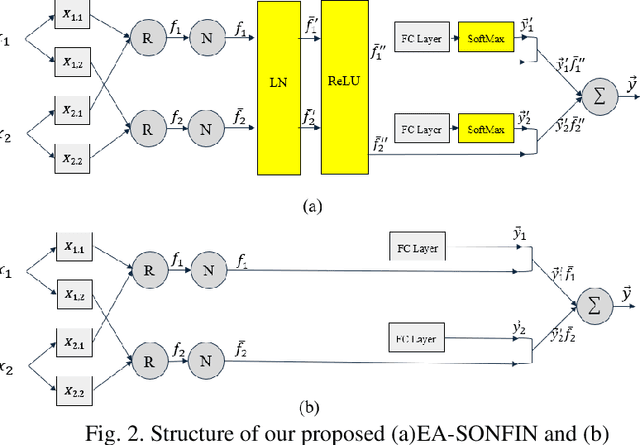

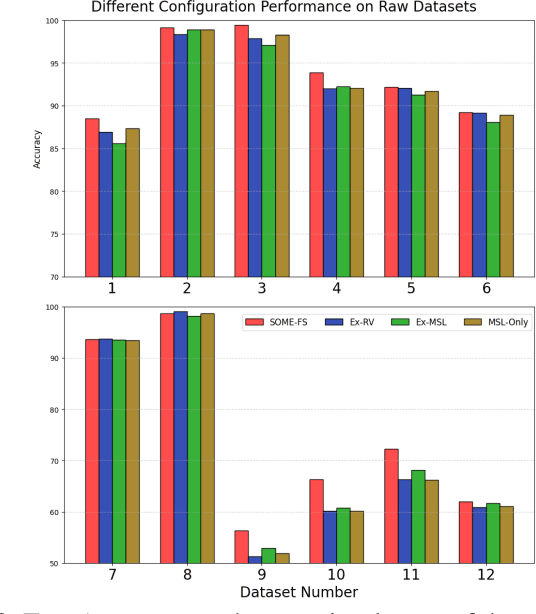

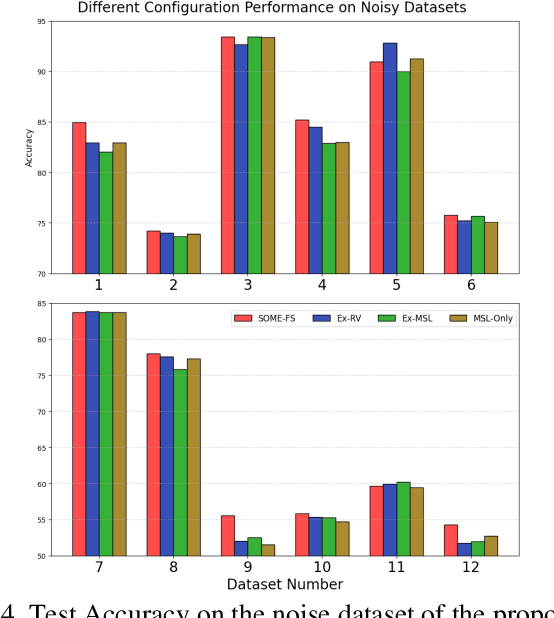

Abstract: Fuzzy Neural Networks (FNNs) are effective machine learning models for classification tasks, commonly based on the Takagi-Sugeno-Kang (TSK) fuzzy system. However, when faced with high-dimensional data, especially noisy data, FNNs encounter challenges such as vanishing gradients, excessive fuzzy rules, and limited access to prior knowledge. To address these challenges, we propose a novel fuzzy system, the Self-Constructing Multi-Expert Fuzzy System (SOME-FS). It combines two learning strategies: mixed structure learning and multi-expert advanced learning. The former enables each base classifier to determine its structure effectively without requiring prior knowledge, while the latter tackles vanishing gradients by enabling each rule to focus on its local region, thereby enhancing the robustness of the fuzzy classifiers. The overall ensemble architecture enhances the stability and prediction performance of the fuzzy system. Our experimental results demonstrate that SOME-FS is effective on high-dimensional tabular data, especially in dealing with uncertainty. Moreover, our stable rule mining process can identify the concise, core rules learned by SOME-FS.
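For orientation, a sketch of first-order TSK inference (the base model named above) plus a plain averaging ensemble over several experts. Computing rule firing strengths in log space is one common way to avoid the underflow that products of many Gaussian memberships can cause in high dimensions; it is an assumption shown for illustration, and this sketch does not implement SOME-FS's mixed structure learning or multi-expert advanced learning. The rule tuple layout and function names are hypothetical.

```python
import math


def tsk_predict(x, rules):
    """First-order TSK inference for one sample x.

    Each rule is (centres, widths, weights, bias): Gaussian memberships on every
    feature in the antecedent, and a linear consequent y = weights . x + bias.
    """
    # Log of the firing strength = sum of log Gaussian memberships (product in log space).
    log_fire = [
        sum(-((xi - c) ** 2) / (2 * s ** 2) for xi, c, s in zip(x, centres, widths))
        for centres, widths, _, _ in rules
    ]
    m = max(log_fire)
    fire = [math.exp(lf - m) for lf in log_fire]  # shift cancels after normalisation
    total = sum(fire)

    out = 0.0
    for f, (_, _, w, b) in zip(fire, rules):
        consequent = sum(wi * xi for wi, xi in zip(w, x)) + b
        out += (f / total) * consequent  # normalised firing strength weights each rule
    return out


def ensemble_predict(x, experts):
    """Hypothetical ensemble: average the outputs of several TSK rule bases."""
    return sum(tsk_predict(x, rules) for rules in experts) / len(experts)
```

The design choice worth noting: without the max-shift, a sample far from every rule centre in many dimensions can drive every raw firing strength to 0.0, making the normalisation divide by zero; with the shift, the strongest rule always contributes a strength of 1.0.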
