Amr Abdelraouf

PDB: Not All Drivers Are the Same -- A Personalized Dataset for Understanding Driving Behavior

Mar 09, 2025

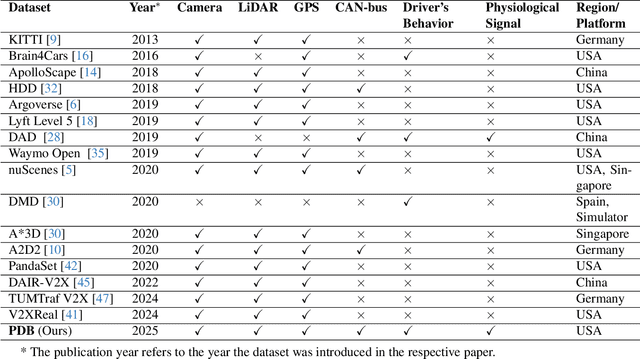

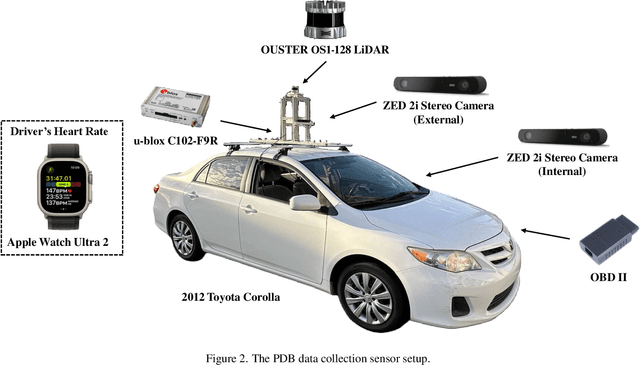

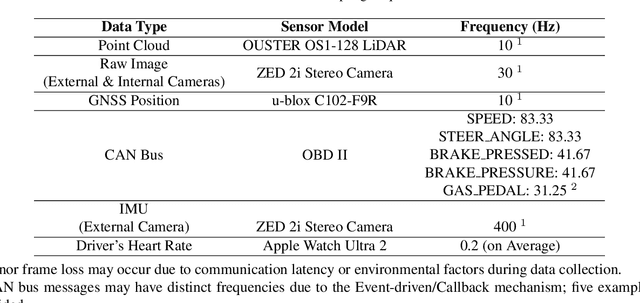

Abstract: Driving behavior is inherently personal, influenced by individual habits, decision-making styles, and physiological states. However, most existing datasets treat all drivers as homogeneous, overlooking driver-specific variability. To address this gap, we introduce the Personalized Driving Behavior (PDB) dataset, a multi-modal dataset designed to capture personalization in driving behavior under naturalistic driving conditions. Unlike conventional datasets, PDB minimizes external influences by maintaining consistent routes, vehicles, and lighting conditions across sessions. It includes data from a 128-line LiDAR, front-facing camera video, GNSS, a 9-axis IMU, the CAN bus (throttle, brake, steering angle), and driver-specific signals such as facial video and heart rate. The dataset features 12 participants, approximately 270,000 LiDAR frames, 1.6 million images, and 6.6 TB of raw sensor data. The processed trajectory dataset consists of 1,669 segments, each spanning 10 seconds with a 0.2-second interval. By explicitly capturing drivers' behavior, PDB serves as a unique resource for human factor analysis, driver identification, and personalized mobility applications, contributing to the development of human-centric intelligent transportation systems.
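To make the segment arithmetic concrete: a 10-second segment sampled every 0.2 seconds corresponds to 50 sampling intervals. The sketch below cuts a uniformly sampled series into fixed-length segments. It is illustrative only: the function name `split_into_segments`, the choice of non-overlapping windows, and dropping the incomplete tail are assumptions, not the PDB processing pipeline.

```python
def split_into_segments(samples, segment_duration_s=10.0, interval_s=0.2):
    """Cut a uniformly sampled series into non-overlapping fixed-length segments.

    Assumption: each segment holds duration / interval samples (50 here);
    any incomplete trailing segment is dropped.
    """
    samples_per_segment = round(segment_duration_s / interval_s)
    last_start = len(samples) - samples_per_segment
    return [
        samples[start:start + samples_per_segment]
        for start in range(0, last_start + 1, samples_per_segment)
    ]


# Hypothetical input: 120 samples, i.e. 24 seconds of data at 0.2 s spacing.
samples = list(range(120))
segments = split_into_segments(samples)
print(len(segments), len(segments[0]))
```

With 120 samples, two full segments of 50 samples are produced and the remaining 20 samples are discarded.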

Video Token Sparsification for Efficient Multimodal LLMs in Autonomous Driving

Sep 16, 2024

Abstract: Multimodal large language models (MLLMs) have demonstrated remarkable potential for enhancing scene understanding in autonomous driving systems through powerful logical reasoning capabilities. However, the deployment of these models faces significant challenges due to their substantial parameter sizes and computational demands, which often exceed the constraints of onboard computation. One major limitation arises from the large number of visual tokens required to capture fine-grained and long-context visual information, leading to increased latency and memory consumption. To address this issue, we propose Video Token Sparsification (VTS), a novel approach that leverages the inherent redundancy in consecutive video frames to significantly reduce the total number of visual tokens while preserving the most salient information. VTS employs a lightweight CNN-based proposal model to adaptively identify key frames and prune less informative tokens, effectively mitigating hallucinations and increasing inference throughput without compromising performance. We conduct comprehensive experiments on the DRAMA and LingoQA benchmarks, demonstrating the effectiveness of VTS in achieving up to a 33% improvement in inference throughput and a 28% reduction in memory usage compared to the baseline without compromising performance.
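The general idea of pruning less informative tokens can be sketched as keeping only the highest-scoring fraction of tokens while preserving their original order. This is a generic top-k illustration, not the VTS method: the saliency scores are assumed to be given (in VTS they would come from the CNN-based proposal model), and the name `prune_tokens` and the `keep_ratio` parameter are hypothetical.

```python
def prune_tokens(tokens, scores, keep_ratio=0.5):
    """Keep the top-scoring fraction of tokens, preserving original order.

    Assumption: `scores` holds one precomputed saliency value per token.
    """
    if len(tokens) != len(scores):
        raise ValueError("tokens and scores must have the same length")
    if not tokens:
        return []
    keep_count = max(1, int(len(tokens) * keep_ratio))
    # Indices of the highest-scoring tokens.
    ranked = sorted(range(len(tokens)), key=lambda i: scores[i], reverse=True)
    kept_indices = sorted(ranked[:keep_count])
    return [tokens[i] for i in kept_indices]


# Hypothetical tokens and scores for illustration.
tokens = ["t0", "t1", "t2", "t3"]
scores = [0.1, 0.9, 0.4, 0.8]
kept = prune_tokens(tokens, scores, keep_ratio=0.5)
print(kept)
```

Here half of the four tokens are kept, namely the two with the highest scores, returned in their original sequence order.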

Investigating Personalized Driving Behaviors in Dilemma Zones: Analysis and Prediction of Stop-or-Go Decisions

May 06, 2024

Abstract: Dilemma zones at signalized intersections present a commonly occurring but unsolved challenge for both drivers and traffic operators. Onsets of the yellow lights prompt varied responses from different drivers: some may brake abruptly, compromising the ride comfort, while others may accelerate, increasing the risk of red-light violations and potential safety hazards. Such diversity in drivers' stop-or-go decisions may result from not only surrounding traffic conditions, but also personalized driving behaviors. To this end, identifying personalized driving behaviors and integrating them into advanced driver assistance systems (ADAS) to mitigate the dilemma zone problem presents an intriguing scientific question. In this study, we employ a game engine-based (i.e., CARLA-enabled) driving simulator to collect high-resolution vehicle trajectories, incoming traffic signal phase and timing information, and stop-or-go decisions from four subject drivers in various scenarios. This approach allows us to analyze personalized driving behaviors in dilemma zones and develop a Personalized Transformer Encoder to predict individual drivers' stop-or-go decisions. The results show that the Personalized Transformer Encoder improves the accuracy of predicting driver decision-making in the dilemma zone by 3.7% to 12.6% compared to the Generic Transformer Encoder, and by 16.8% to 21.6% over the binary logistic regression model.

KI-GAN: Knowledge-Informed Generative Adversarial Networks for Enhanced Multi-Vehicle Trajectory Forecasting at Signalized Intersections

Apr 19, 2024

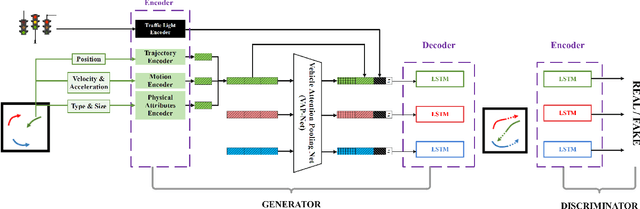

Abstract: Reliable prediction of vehicle trajectories at signalized intersections is crucial to urban traffic management and autonomous driving systems. However, it presents unique challenges, due to the complex roadway layout at intersections, involvement of traffic signal controls, and interactions among different types of road users. To address these issues, we present in this paper a novel model called Knowledge-Informed Generative Adversarial Network (KI-GAN), which integrates both traffic signal information and multi-vehicle interactions to predict vehicle trajectories accurately. Additionally, we propose a specialized attention pooling method that accounts for vehicle orientation and proximity at intersections. Based on the SinD dataset, our KI-GAN model is able to achieve an Average Displacement Error (ADE) of 0.05 and a Final Displacement Error (FDE) of 0.12 for a 6-second observation and 6-second prediction cycle. When the prediction window is extended to 9 seconds, the ADE and FDE values are 0.11 and 0.26, respectively. These results demonstrate the effectiveness of the proposed KI-GAN model in vehicle trajectory prediction under complex scenarios at signalized intersections, which represents a significant advancement in the target field.
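For readers unfamiliar with the two metrics, ADE is the mean Euclidean distance between predicted and ground-truth positions over all prediction steps, and FDE is that distance at the final step only. A minimal sketch for a single trajectory follows; the function name `displacement_errors` and the sample coordinates are illustrative and are not taken from the KI-GAN code or the SinD dataset.

```python
import math


def displacement_errors(predicted, ground_truth):
    """Return (ADE, FDE) for one trajectory given as lists of (x, y) points."""
    if not predicted or len(predicted) != len(ground_truth):
        raise ValueError("trajectories must be non-empty and of equal length")
    # Per-step Euclidean distance between prediction and ground truth.
    distances = [math.dist(p, g) for p, g in zip(predicted, ground_truth)]
    ade = sum(distances) / len(distances)  # average over all steps
    fde = distances[-1]                    # error at the final step
    return ade, fde


# Hypothetical three-step trajectories for illustration.
predicted = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
ground_truth = [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0)]
ade, fde = displacement_errors(predicted, ground_truth)
print(ade, fde)
```

In this example the per-step errors are 0, 1, and 2, so the average error is 1 and the final-step error is 2. Because errors typically accumulate over time, both metrics generally grow as the prediction horizon lengthens.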

LaMPilot: An Open Benchmark Dataset for Autonomous Driving with Language Model Programs

Dec 07, 2023

Abstract: We present LaMPilot, a novel framework for planning in the field of autonomous driving, rethinking the task as a code-generation process that leverages established behavioral primitives. This approach aims to address the challenge of interpreting and executing spontaneous user instructions such as "overtake the car ahead," which have typically posed difficulties for existing frameworks. We introduce the LaMPilot benchmark specifically designed to quantitatively evaluate the efficacy of Large Language Models (LLMs) in translating human directives into actionable driving policies. We then evaluate a wide range of state-of-the-art code generation language models on tasks from the LaMPilot Benchmark. The results of the experiments showed that GPT-4, with human feedback, achieved an impressive task completion rate of 92.7% and a minimal collision rate of 0.9%. To encourage further investigation in this area, our code and dataset will be made available.

Driving through the Concept Gridlock: Unraveling Explainability Bottlenecks in Automated Driving

Oct 26, 2023

Abstract: Concept bottleneck models have been successfully used for explainable machine learning by encoding information within the model with a set of human-defined concepts. In the context of human-assisted or autonomous driving, explainability models can help user acceptance and understanding of decisions made by the autonomous vehicle, which can be used to rationalize and explain driver or vehicle behavior. We propose a new approach using concept bottlenecks as visual features for control command predictions and explanations of user and vehicle behavior. We learn a human-understandable concept layer that we use to explain sequential driving scenes while learning vehicle control commands. This approach can then be used to determine whether a change in a preferred gap or steering commands from a human (or autonomous vehicle) is led by an external stimulus or change in preferences. We achieve competitive performance to latent visual features while gaining interpretability within our model setup.

Interaction-Aware Personalized Vehicle Trajectory Prediction Using Temporal Graph Neural Networks

Aug 16, 2023

Abstract: Accurate prediction of vehicle trajectories is vital for advanced driver assistance systems and autonomous vehicles. Existing methods mainly rely on generic trajectory predictions derived from large datasets, overlooking the personalized driving patterns of individual drivers. To address this gap, we propose an approach for interaction-aware personalized vehicle trajectory prediction that incorporates temporal graph neural networks. Our method utilizes Graph Convolution Networks (GCN) and Long Short-Term Memory (LSTM) to model the spatio-temporal interactions between target vehicles and their surrounding traffic. To personalize the predictions, we establish a pipeline that leverages transfer learning: the model is initially pre-trained on a large-scale trajectory dataset and then fine-tuned for each driver using their specific driving data. We employ human-in-the-loop simulation to collect personalized naturalistic driving trajectories and corresponding surrounding vehicle trajectories. Experimental results demonstrate the superior performance of our personalized GCN-LSTM model, particularly for longer prediction horizons, compared to its generic counterpart. Moreover, the personalized model outperforms individual models created without pre-training, emphasizing the significance of pre-training on a large dataset to avoid overfitting. By incorporating personalization, our approach enhances trajectory prediction accuracy.

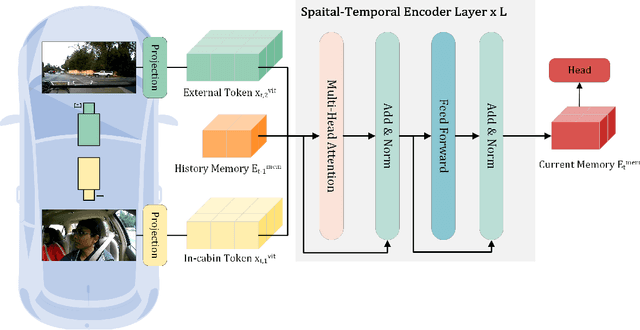

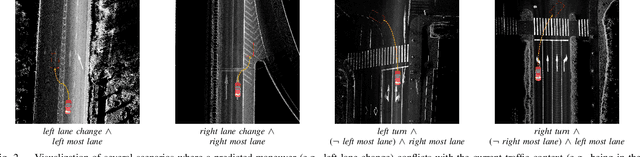

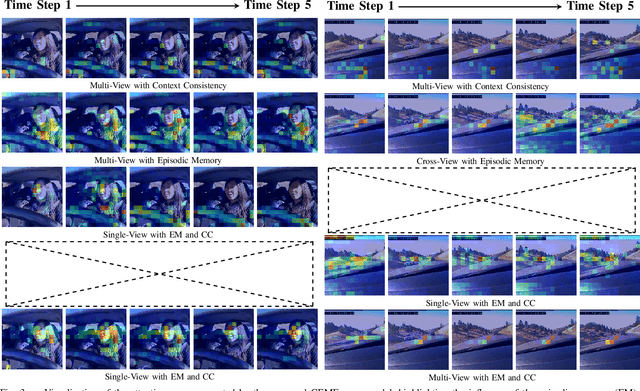

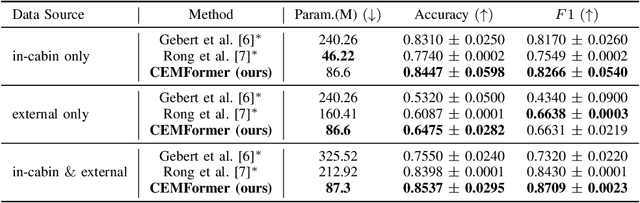

CEMFormer: Learning to Predict Driver Intentions from In-Cabin and External Cameras via Spatial-Temporal Transformers

May 13, 2023

Abstract: Driver intention prediction seeks to anticipate drivers' actions by analyzing their behaviors with respect to surrounding traffic environments. Existing approaches primarily focus on late-fusion techniques and neglect the importance of maintaining consistency between predictions and prevailing driving contexts. In this paper, we introduce a new framework called Cross-View Episodic Memory Transformer (CEMFormer), which employs spatio-temporal transformers to learn unified memory representations for improved driver intention prediction. Specifically, we develop a spatial-temporal encoder to integrate information from both in-cabin and external camera views, along with episodic memory representations to continuously fuse historical data. Furthermore, we propose a novel context-consistency loss that incorporates driving context as an auxiliary supervision signal to improve prediction performance. Comprehensive experiments on the Brain4Cars dataset demonstrate that CEMFormer consistently outperforms existing state-of-the-art methods in driver intention prediction.

M$^2$DAR: Multi-View Multi-Scale Driver Action Recognition with Vision Transformer

May 13, 2023

Abstract: Ensuring traffic safety and preventing accidents is a critical goal in daily driving, where the advancement of computer vision technologies can be leveraged to achieve this goal. In this paper, we present a multi-view, multi-scale framework for naturalistic driving action recognition and localization in untrimmed videos, namely M$^2$DAR, with a particular focus on detecting distracted driving behaviors. Our system features a weight-sharing, multi-scale Transformer-based action recognition network that learns robust hierarchical representations. Furthermore, we propose a new election algorithm consisting of aggregation, filtering, merging, and selection processes to refine the preliminary results from the action recognition module across multiple views. Extensive experiments conducted on the 7th AI City Challenge Track 3 dataset demonstrate the effectiveness of our approach, where we achieved an overlap score of 0.5921 on the A2 test set. Our source code is available at https://github.com/PurdueDigitalTwin/M2DAR.

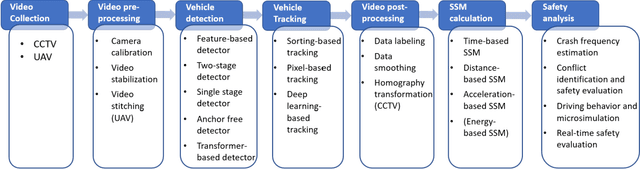

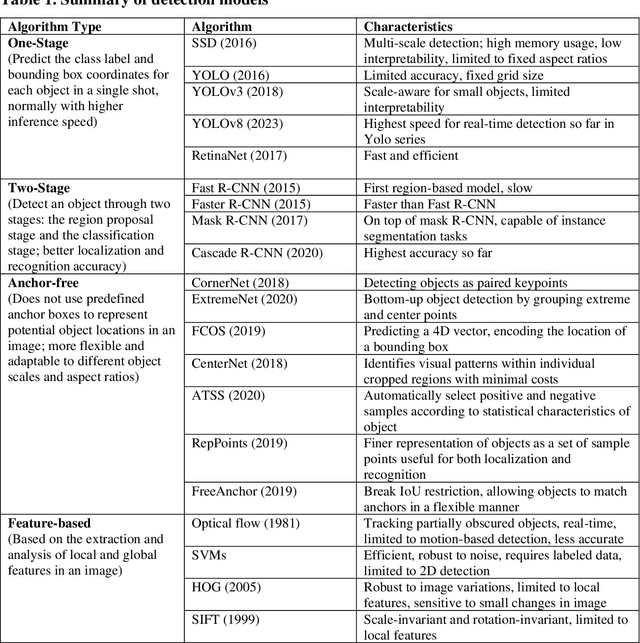

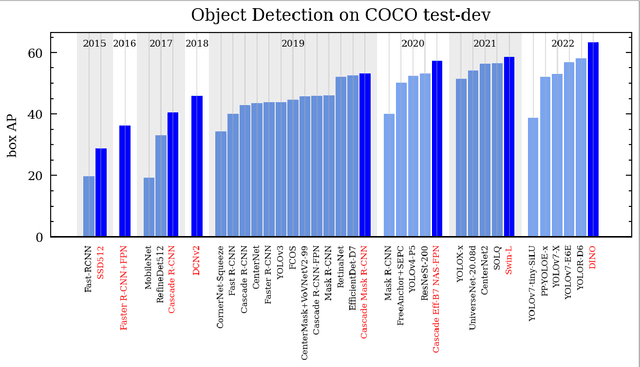

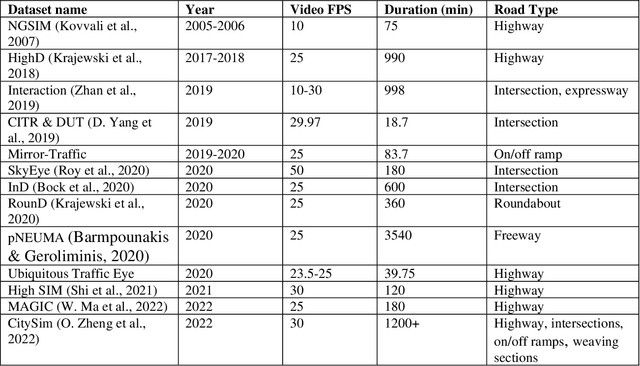

Advances and Applications of Computer Vision Techniques in Vehicle Trajectory Generation and Surrogate Traffic Safety Indicators

Mar 27, 2023

Abstract: The application of Computer Vision (CV) techniques has greatly stimulated microscopic traffic safety analysis from the perspective of traffic conflicts and near misses, which are usually measured using Surrogate Safety Measures (SSMs). However, as video processing and traffic safety modeling are two separate research domains and few studies have focused on systematically bridging the gap between them, it is necessary to provide transportation researchers and practitioners with corresponding guidance. With this aim in mind, this paper focuses on reviewing the applications of CV techniques in traffic safety modeling using SSMs and suggesting the best way forward. The CV algorithms that are used for vehicle detection and tracking, from early approaches to the state-of-the-art models, are summarized at a high level. Then, the video pre-processing and post-processing techniques for vehicle trajectory extraction are introduced. A detailed review of SSMs for vehicle trajectory data, along with their application to traffic safety analysis, is presented. Finally, practical issues in traffic video processing and SSM-based safety analysis are discussed, and the available or potential solutions are provided. This review is expected to assist transportation researchers and engineers with the selection of suitable CV techniques for video processing, and the usage of SSMs for various traffic safety research objectives.
