Yonghao Xu

GeoMMBench and GeoMMAgent: Toward Expert-Level Multimodal Intelligence in Geoscience and Remote Sensing

Apr 10, 2026

Abstract: Recent advances in multimodal large language models (MLLMs) have accelerated progress in domain-oriented AI, yet their development in geoscience and remote sensing (RS) remains constrained by distinctive challenges: wide-ranging disciplinary knowledge, heterogeneous sensor modalities, and a fragmented spectrum of tasks. To bridge these gaps, we introduce GeoMMBench, a comprehensive multimodal question-answering benchmark covering diverse RS disciplines, sensors, and tasks, enabling broader and more rigorous evaluation than prior benchmarks. Using GeoMMBench, we assess 36 open-source and proprietary large language models, uncovering systematic deficiencies in domain knowledge, perceptual grounding, and reasoning--capabilities essential for expert-level geospatial interpretation. Beyond evaluation, we propose GeoMMAgent, a multi-agent framework that strategically integrates retrieval, perception, and reasoning through domain-specific RS models and tools. Extensive experimental results demonstrate that GeoMMAgent significantly outperforms standalone LLMs, underscoring the importance of tool-augmented agents for dynamically tackling complex geoscience and RS challenges.

DisasterInsight: A Multimodal Benchmark for Function-Aware and Grounded Disaster Assessment

Jan 26, 2026

Abstract: Timely interpretation of satellite imagery is critical for disaster response, yet existing vision-language benchmarks for remote sensing largely focus on coarse labels and image-level recognition, overlooking the functional understanding and instruction robustness required in real humanitarian workflows. We introduce DisasterInsight, a multimodal benchmark designed to evaluate vision-language models (VLMs) on realistic disaster analysis tasks. DisasterInsight restructures the xBD dataset into approximately 112K building-centered instances and supports instruction-diverse evaluation across multiple tasks, including building-function classification, damage-level and disaster-type classification, counting, and structured report generation aligned with humanitarian assessment guidelines. To establish domain-adapted baselines, we propose DI-Chat, obtained by fine-tuning existing VLM backbones on disaster-specific instruction data using parameter-efficient Low-Rank Adaptation (LoRA). Extensive experiments on state-of-the-art generic and remote-sensing VLMs reveal substantial performance gaps across tasks, particularly in damage understanding and structured report generation. DI-Chat achieves significant improvements on damage-level and disaster-type classification as well as report generation quality, while building-function classification remains challenging for all evaluated models. DisasterInsight provides a unified benchmark for studying grounded multimodal reasoning in disaster imagery.
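To make the LoRA idea mentioned in the abstract concrete, here is a minimal pure-Python sketch of a single adapted linear layer. It follows the standard LoRA formulation (a frozen weight `W` plus a scaled low-rank update `B @ A`); the dimensions, rank, and `alpha` value are illustrative assumptions and are not taken from DI-Chat, whose configuration the abstract does not state.

```python
import random

def matvec(m, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]

def lora_forward(W, A, B, x, alpha):
    """Compute W x + (alpha / r) * B (A x), where r is the LoRA rank."""
    r = len(A)
    base = matvec(W, x)               # frozen pretrained path
    update = matvec(B, matvec(A, x))  # trainable low-rank path
    scale = alpha / r
    return [b + scale * u for b, u in zip(base, update)]

# Illustrative sizes only (assumptions, not values from the paper).
d_in, d_out, r = 8, 8, 2
rng = random.Random(0)
W = [[rng.gauss(0, 1) for _ in range(d_in)] for _ in range(d_out)]  # frozen
A = [[rng.gauss(0, 0.01) for _ in range(d_in)] for _ in range(r)]   # trainable
B = [[0.0] * r for _ in range(d_out)]                               # trainable, zero-initialized

x = [float(i + 1) for i in range(d_in)]
frozen_out = matvec(W, x)
adapted_out = lora_forward(W, A, B, x, alpha=16)

trainable_params = r * (d_in + d_out)  # parameters in A and B
full_params = d_in * d_out             # parameters in W
print(trainable_params, full_params)
```

Because `B` starts at zero, the adapted layer initially reproduces the frozen layer exactly, and only `A` and `B` receive gradient updates during fine-tuning. The parameter saving grows with layer width, since the adapter scales with `r * (d_in + d_out)` rather than `d_in * d_out`.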

Towards Realistic Remote Sensing Dataset Distillation with Discriminative Prototype-guided Diffusion

Jan 22, 2026

Abstract: Recent years have witnessed the remarkable success of deep learning in remote sensing image interpretation, driven by the availability of large-scale benchmark datasets. However, this reliance on massive training data also brings two major challenges: (1) high storage and computational costs, and (2) the risk of data leakage, especially when sensitive categories are involved. To address these challenges, this study introduces the concept of dataset distillation into the field of remote sensing image interpretation for the first time. Specifically, we train a text-to-image diffusion model to condense a large-scale remote sensing dataset into a compact and representative distilled dataset. To improve the discriminative quality of the synthesized samples, we propose classifier-driven guidance that injects a classification consistency loss from a pre-trained model into the diffusion training process. In addition, considering the rich semantic complexity of remote sensing imagery, we perform latent space clustering on training samples to select representative and diverse prototypes as visual style guidance, while using a vision-language model to provide aggregated text descriptions. Experiments on three high-resolution remote sensing scene classification benchmarks show that the proposed method can distill realistic and diverse samples for downstream model training. Code and pre-trained models are available online (https://github.com/YonghaoXu/DPD).

VLM2GeoVec: Toward Universal Multimodal Embeddings for Remote Sensing

Dec 12, 2025

Abstract: Satellite imagery differs fundamentally from natural images: its aerial viewpoint, very high resolution, diverse scale variations, and abundance of small objects demand both region-level spatial reasoning and holistic scene understanding. Current remote-sensing approaches remain fragmented between dual-encoder retrieval models, which excel at large-scale cross-modal search but cannot interleave modalities, and generative assistants, which support region-level interpretation but lack scalable retrieval capabilities. We propose VLM2GeoVec, an instruction-following, single-encoder vision-language model trained contrastively to embed interleaved inputs (images, text, bounding boxes, and geographic coordinates) in a unified vector space. Our single encoder interleaves all inputs into one joint embedding trained with a contrastive loss, eliminating multi-stage pipelines and task-specific modules. To evaluate its versatility, we introduce RSMEB, a novel benchmark covering key remote-sensing embedding applications: scene classification; cross-modal search; compositional retrieval; visual-question answering; visual grounding and region-level reasoning; and semantic geospatial retrieval. On RSMEB, it achieves 26.6% P@1 on region-caption retrieval (+25 pp vs. dual-encoder baselines), 32.5% P@1 on referring-expression retrieval (+19 pp), and 17.8% P@1 on semantic geo-localization retrieval (over 3× prior best), while matching or exceeding specialized baselines on conventional tasks such as scene classification and cross-modal retrieval. VLM2GeoVec unifies scalable retrieval with region-level spatial reasoning, enabling cohesive multimodal analysis in remote sensing. We will publicly release the code, checkpoints, and data upon acceptance.
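The P@1 figures quoted above measure how often the top-ranked candidate is the correct one. As a minimal sketch of that metric, assuming cosine similarity over embeddings and exactly one correct candidate per query, the following uses hypothetical toy vectors; the function names are illustrative and are not part of any RSMEB tooling.

```python
import math

def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def precision_at_1(queries, candidates, gold):
    """Fraction of queries whose most similar candidate is the gold index."""
    hits = 0
    for query, gold_index in zip(queries, gold):
        best = max(range(len(candidates)),
                   key=lambda i: cosine(query, candidates[i]))
        hits += int(best == gold_index)
    return hits / len(queries)

# Hypothetical 2-D embeddings; real embeddings would come from an encoder.
candidates = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
queries = [[0.9, 0.1], [0.2, 0.8], [1.0, 0.1]]
gold = [0, 1, 2]  # index of the correct candidate for each query

p_at_1 = precision_at_1(queries, candidates, gold)
print(f"P@1 = {p_at_1:.3f}")
```

In this toy setup the third query is closer to candidate 0 than to its gold candidate 2, so two of three queries are retrieved correctly.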

Comparing Satellite Data for Next-Day Wildfire Predictability

Mar 11, 2025

Abstract: Multiple studies have performed next-day fire prediction using satellite imagery. Two main satellites are used to detect wildfires: MODIS and VIIRS. Both satellites provide fire mask products, called MOD14 and VNP14, respectively. Studies have used one or the other, but there has been no comparison between them to determine which might be more suitable for next-day fire prediction. In this paper, we first evaluate how well VIIRS and MODIS data can be used to forecast wildfire spread one day ahead. We find that the model using VIIRS as input and VNP14 as target achieves the best results. Interestingly, the model using MODIS as input and VNP14 as target performs significantly better than using VNP14 as input and MOD14 as target. Next, we discuss why MOD14 might be harder to use for predicting next-day fires. We find that the MOD14 fire mask is highly stochastic and does not correlate with reasonable fire spread patterns. This is detrimental for machine learning tasks, as the model learns irrational patterns. Therefore, we conclude that MOD14 is unsuitable for next-day fire prediction and that VNP14 is a much better option. However, using MODIS input and VNP14 as target, we achieve a significant improvement in predictability. This indicates that an improved fire detection model is possible for MODIS. The full code and dataset are available online: https://github.com/justuskarlsson/wildfire-mod14-vnp14

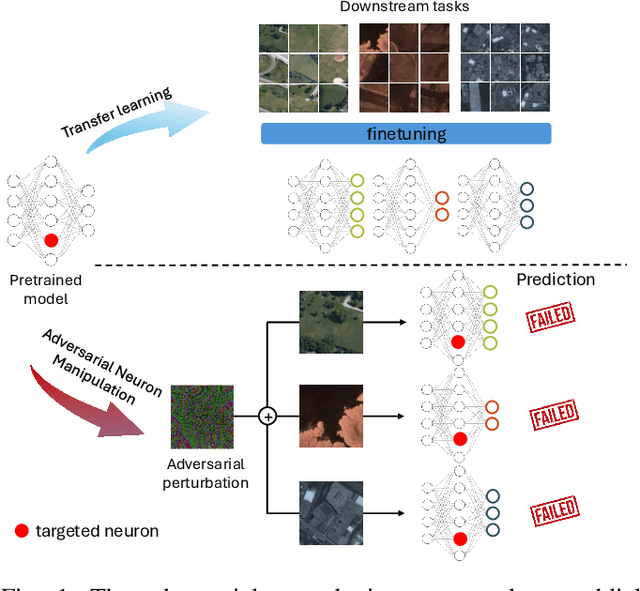

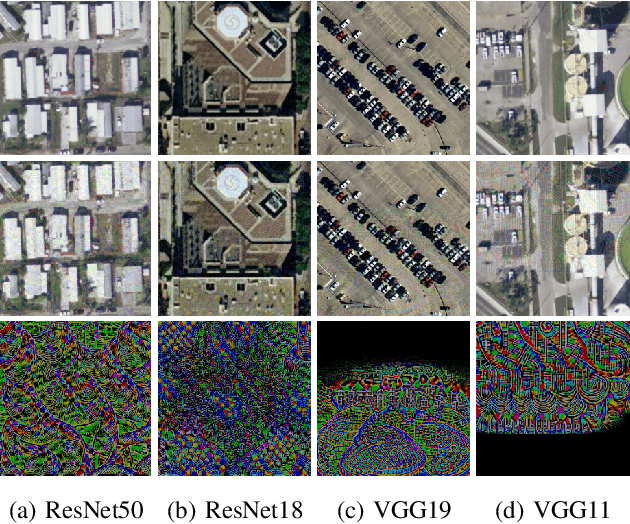

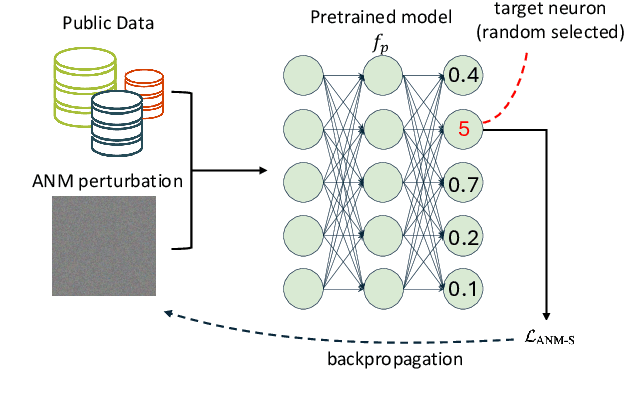

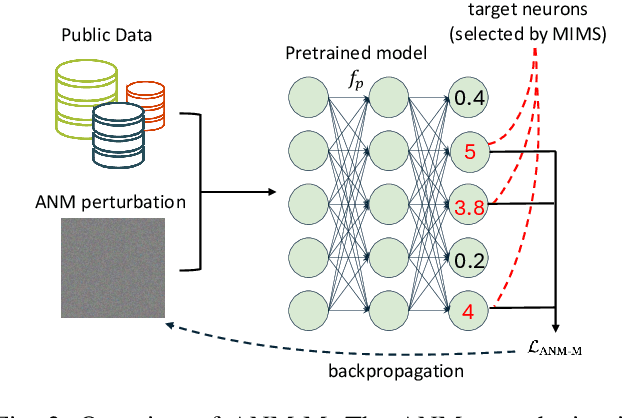

On the Adversarial Vulnerabilities of Transfer Learning in Remote Sensing

Jan 20, 2025

Abstract: The use of pretrained models from general computer vision tasks is widespread in remote sensing, significantly reducing training costs and improving performance. However, this practice also introduces vulnerabilities to downstream tasks, where publicly available pretrained models can be used as a proxy to compromise downstream models. This paper presents a novel Adversarial Neuron Manipulation method, which generates transferable perturbations by selectively manipulating single or multiple neurons in pretrained models. Unlike existing attacks, this method eliminates the need for domain-specific information, making it more broadly applicable and efficient. By targeting multiple fragile neurons, the perturbations achieve superior attack performance, revealing critical vulnerabilities in deep learning models. Experiments on diverse models and remote sensing datasets validate the effectiveness of the proposed method. This low-access adversarial neuron manipulation technique highlights a significant security risk in transfer learning models, emphasizing the urgent need for more robust defenses in their design when addressing safety-critical remote sensing tasks.

Sen2Fire: A Challenging Benchmark Dataset for Wildfire Detection using Sentinel Data

Mar 26, 2024

Abstract: Utilizing satellite imagery for wildfire detection presents substantial potential for practical applications. To advance the development of machine learning algorithms in this domain, our study introduces the Sen2Fire dataset--a challenging satellite remote sensing dataset tailored for wildfire detection. This dataset is curated from Sentinel-2 multi-spectral data and Sentinel-5P aerosol product, comprising a total of 2466 image patches. Each patch has a size of 512×512 pixels with 13 bands. Given the distinctive sensitivities of various wavebands to wildfire responses, our research focuses on optimizing wildfire detection by evaluating different wavebands and employing a combination of spectral indices, such as normalized burn ratio (NBR) and normalized difference vegetation index (NDVI). The results suggest that, in contrast to using all bands for wildfire detection, selecting specific band combinations yields superior performance. Additionally, our study underscores the positive impact of integrating Sentinel-5P aerosol data for wildfire detection. The code and dataset are available online (https://zenodo.org/records/10881058).
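The two spectral indices named in the abstract are both normalized differences of two bands. The sketch below uses their standard definitions, NDVI = (NIR - Red) / (NIR + Red) and NBR = (NIR - SWIR2) / (NIR + SWIR2); for Sentinel-2 these are commonly computed from bands B8, B4, and B12, though the abstract does not state the exact bands used. The reflectance values are hypothetical, chosen only to illustrate the sign of each index.

```python
def normalized_difference(a, b, eps=1e-12):
    """Return (a - b) / (a + b), or 0.0 when the denominator is near zero."""
    denom = a + b
    if abs(denom) < eps:
        return 0.0
    return (a - b) / denom

def ndvi(nir, red):
    """Normalized difference vegetation index."""
    return normalized_difference(nir, red)

def nbr(nir, swir2):
    """Normalized burn ratio."""
    return normalized_difference(nir, swir2)

# Hypothetical surface reflectances (not taken from the Sen2Fire dataset).
healthy = {"red": 0.05, "nir": 0.45, "swir2": 0.10}
burned = {"red": 0.10, "nir": 0.15, "swir2": 0.30}

for name, px in (("healthy", healthy), ("burned", burned)):
    print(name,
          round(ndvi(px["nir"], px["red"]), 3),
          round(nbr(px["nir"], px["swir2"]), 3))
```

Healthy vegetation reflects strongly in the near infrared, so both indices are high; burned surfaces lose NIR reflectance and gain SWIR reflectance, which pushes NBR toward negative values.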

There Are No Data Like More Data- Datasets for Deep Learning in Earth Observation

Oct 30, 2023

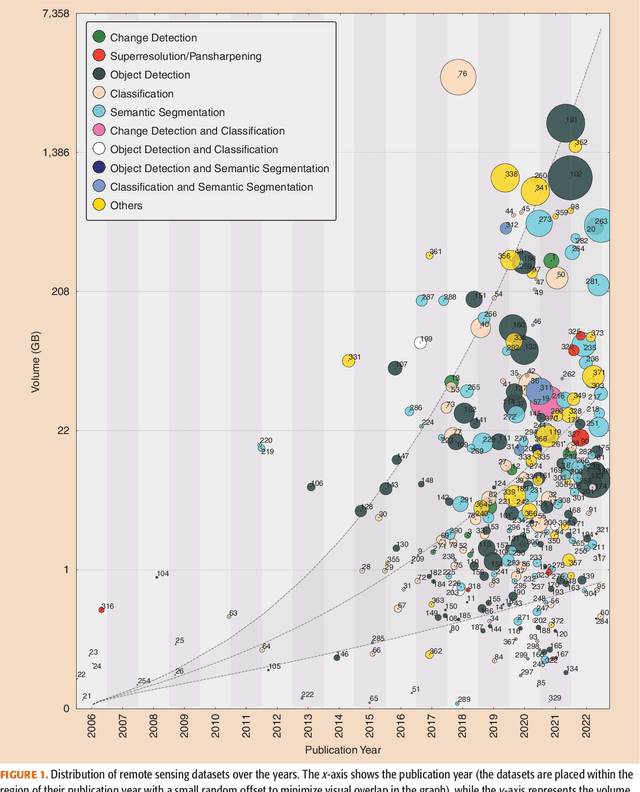

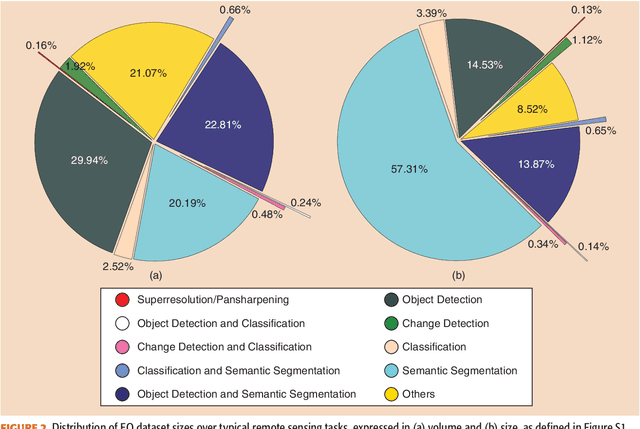

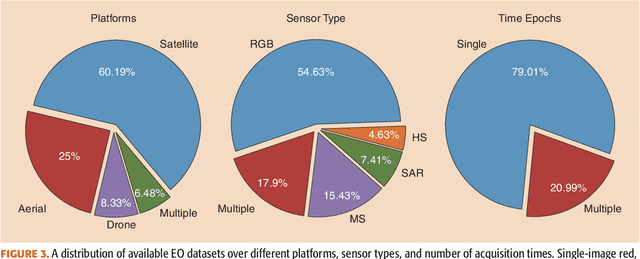

Abstract: Carefully curated and annotated datasets are the foundation of machine learning, with particularly data-hungry deep neural networks forming the core of what is often called Artificial Intelligence (AI). Due to the massive success of deep learning applied to Earth Observation (EO) problems, the focus of the community has been largely on the development of ever-more sophisticated deep neural network architectures and training strategies, largely ignoring the overall importance of datasets. For that purpose, numerous task-specific datasets have been created that were largely ignored by previously published review articles on AI for Earth observation. With this article, we want to change the perspective and put machine learning datasets dedicated to Earth observation data and applications into the spotlight. Based on a review of the historical developments, currently available resources are described and a perspective for future developments is formed. We hope to contribute to an understanding that the nature of our data is what distinguishes the Earth observation community from many other communities that apply deep learning techniques to image data, and that a detailed understanding of EO data peculiarities is among the core competencies of our discipline.

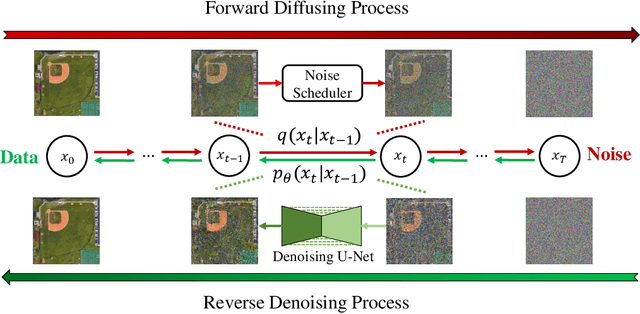

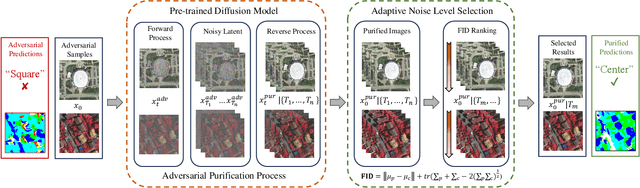

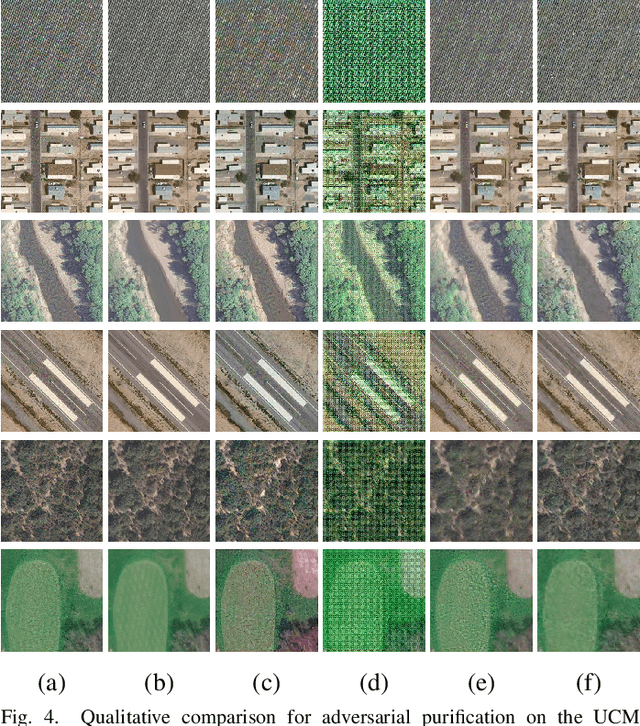

Universal Adversarial Defense in Remote Sensing Based on Pre-trained Denoising Diffusion Models

Aug 02, 2023

Abstract: Deep neural networks (DNNs) have achieved tremendous success in many remote sensing (RS) applications, yet they remain vulnerable to adversarial perturbations. Unfortunately, current adversarial defense approaches in RS studies usually suffer from performance fluctuation and unnecessary re-training costs due to the need for prior knowledge of the adversarial perturbations among RS data. To circumvent these challenges, we propose a universal adversarial defense approach in RS imagery (UAD-RS) using pre-trained diffusion models to defend common DNNs against multiple unknown adversarial attacks. Specifically, the generative diffusion models are first pre-trained on different RS datasets to learn generalized representations in various data domains. After that, a universal adversarial purification framework is developed using the forward and reverse process of the pre-trained diffusion models to purify the perturbations from adversarial samples. Furthermore, an adaptive noise level selection (ANLS) mechanism is built to capture the optimal noise level of the diffusion model that can achieve the best purification results closest to the clean samples according to their Fréchet Inception Distance (FID) in deep feature space. As a result, only a single pre-trained diffusion model is needed for the universal purification of adversarial samples on each dataset, which significantly alleviates the re-training efforts and maintains high performance without prior knowledge of the adversarial perturbations. Experiments on four heterogeneous RS datasets regarding scene classification and semantic segmentation verify that UAD-RS outperforms state-of-the-art adversarial purification approaches with a universal defense against seven commonly existing adversarial perturbations. Code and pre-trained models are available online (https://github.com/EricYu97/UAD-RS).
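The forward half of the purification pipeline described above can be illustrated with the standard DDPM noising step, x_t = sqrt(ᾱ_t) x_0 + sqrt(1 - ᾱ_t) ε. The sketch below assumes a linear beta schedule with commonly used default endpoints; these values, the function names, and the toy pixel list are assumptions and do not come from UAD-RS. The learned reverse (denoising) process and the FID-based noise level selection are not shown.

```python
import math
import random

def alpha_bar(t, num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative product of (1 - beta_s) for s = 1..t under a linear schedule."""
    prod = 1.0
    for s in range(1, t + 1):
        beta = beta_start + (beta_end - beta_start) * (s - 1) / (num_steps - 1)
        prod *= 1.0 - beta
    return prod

def forward_diffuse(x0, t, rng):
    """Sample x_t from q(x_t | x_0) by mixing the input with Gaussian noise."""
    ab = alpha_bar(t)
    return [math.sqrt(ab) * x + math.sqrt(1.0 - ab) * rng.gauss(0.0, 1.0)
            for x in x0]

rng = random.Random(0)
adversarial_pixels = [0.52, 0.48, 0.51, 0.49]  # hypothetical perturbed input
for t in (0, 100, 500):
    noisy = forward_diffuse(adversarial_pixels, t, rng)
    print(t, round(alpha_bar(t), 4), [round(v, 3) for v in noisy])
```

The noise level `t` controls the trade-off that a selection mechanism such as ANLS has to balance: a larger `t` drowns out more of the adversarial perturbation but also destroys more of the original image content that the reverse process must then restore.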

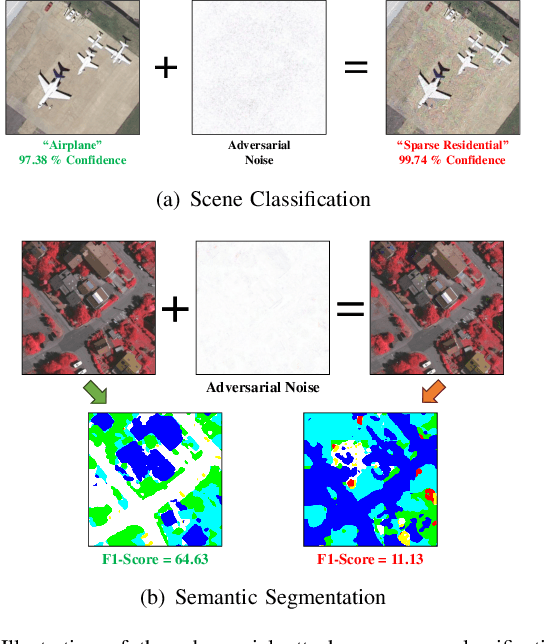

AI Security for Geoscience and Remote Sensing: Challenges and Future Trends

Dec 19, 2022

Abstract: Recent advances in artificial intelligence (AI) have significantly intensified research in the geoscience and remote sensing (RS) field. AI algorithms, especially deep learning-based ones, have been developed and applied widely to RS data analysis. The successful application of AI covers almost all aspects of Earth observation (EO) missions, from low-level vision tasks like super-resolution, denoising, and inpainting, to high-level vision tasks like scene classification, object detection, and semantic segmentation. While AI techniques enable researchers to observe and understand the Earth more accurately, the vulnerability and uncertainty of AI models deserve further attention, considering that many geoscience and RS tasks are highly safety-critical. This paper reviews the current development of AI security in the geoscience and RS field, covering the following five important aspects: adversarial attack, backdoor attack, federated learning, uncertainty, and explainability. Moreover, the potential opportunities and trends are discussed to provide insights for future research. To the best of the authors' knowledge, this paper is the first attempt to provide a systematic review of AI security-related research in the geoscience and RS community. Available code and datasets are also listed in the paper to move this vibrant field of research forward.
