Yiping Lu

On the Width Scaling of Neural Optimizers Under Matrix Operator Norms I: Row/Column Normalization and Hyperparameter Transfer

Mar 10, 2026

Abstract: A central question in modern deep learning is how to design optimizers whose behavior remains stable as the network width $w$ increases. We address this question by interpreting several widely used neural-network optimizers, including \textrm{AdamW} and \textrm{Muon}, as instances of steepest descent under matrix operator norms. This perspective links optimizer geometry with the Lipschitz structure of the network forward map, and enables width-independent control of both Lipschitz and smoothness constants. However, steepest-descent rules induced by standard $p \to q$ operator norms lack layerwise composability and therefore cannot provide width-independent bounds in deep architectures. We overcome this limitation by introducing a family of mean-normalized operator norms, denoted $\pmean \to \qmean$, that admit layerwise composability, yield width-independent smoothness bounds, and give rise to practical optimizers such as \emph{rescaled} \textrm{AdamW}, row normalization, and column normalization. The resulting width-aware learning-rate scaling rules recover $\mu$P scaling~\cite{yang2021tensor} as a special case and provide a principled mechanism for cross-width learning-rate transfer across a broad class of optimizers. We further show that \textrm{Muon} can suffer an $\mathcal{O}(\sqrt{w})$ worst-case growth in the smoothness constant, whereas a new family of row-normalized optimizers we propose achieves width-independent smoothness guarantees. Based on these observations, we propose MOGA (Matrix Operator Geometry Aware), a width-aware optimizer based only on row/column-wise normalization that enables stable learning-rate transfer across model widths. Large-scale pre-training on GPT-2 and LLaMA shows that MOGA, especially with row normalization, is competitive with Muon while being notably faster in large-token and low-loss regimes.
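To make "row/column-wise normalization" concrete, here is a minimal sketch, assuming it means rescaling each row (or column) of a gradient matrix to unit Euclidean norm. The abstract does not give the exact update rule, the width-dependent scaling factor, or any hyperparameters, so the function names `row_normalize` and `column_normalize` and the `eps` parameter are hypothetical, and this is not the MOGA optimizer itself.

```python
import math

def row_normalize(grad, eps=1e-8):
    """Rescale each row of a 2-D gradient (list of lists) to roughly unit L2 norm.

    Assumption: this is one plausible reading of "row normalization";
    the paper's actual rule may include extra width-dependent factors.
    """
    out = []
    for row in grad:
        norm = math.sqrt(sum(x * x for x in row))
        out.append([x / (norm + eps) for x in row])
    return out

def column_normalize(grad, eps=1e-8):
    """Same idea applied to columns: transpose, normalize rows, transpose back."""
    cols = [list(c) for c in zip(*grad)]
    normalized = row_normalize(cols, eps)
    return [list(r) for r in zip(*normalized)]

g = [[3.0, 4.0], [0.0, 2.0]]
print(row_normalize(g))
print(column_normalize(g))
```

After normalization every row (or column) contributes an update of the same magnitude, which is the kind of per-row control the abstract associates with width-independent behavior.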

A Covering Framework for Offline POMDPs Learning using Belief Space Metric

Mar 03, 2026

Abstract: In off-policy evaluation (OPE) for partially observable Markov decision processes (POMDPs), an agent must infer hidden states from past observations, which exacerbates both the curse of horizon and the curse of memory in existing OPE methods. This paper introduces a novel covering analysis framework that exploits the intrinsic metric structure of the belief space (distributions over latent states) to relax traditional coverage assumptions. By assuming that value-relevant functions are Lipschitz continuous in the belief space, we derive error bounds that mitigate exponential blow-ups in horizon and memory length. Our unified analysis technique applies to a broad class of OPE algorithms, yielding concrete error bounds and coverage requirements expressed in terms of belief-space metrics rather than raw history coverage. We illustrate the improved sample efficiency of this framework via two case studies: the double-sampling Bellman error minimization algorithm and the memory-based future-dependent value functions (FDVF). In both cases, our coverage definition based on the belief-space metric yields tighter bounds.

On the Power of (Approximate) Reward Models for Inference-Time Scaling

Feb 01, 2026

Abstract: Inference-time scaling has recently emerged as a powerful paradigm for improving the reasoning capability of large language models. Among various approaches, Sequential Monte Carlo (SMC) has become a particularly important framework, enabling iterative generation, evaluation, rejection, and resampling of intermediate reasoning trajectories. A central component in this process is the reward model, which evaluates partial solutions and guides the allocation of computation during inference. However, in practice, true reward models are never available. All deployed systems rely on approximate reward models, raising a fundamental question: Why and when do approximate reward models suffice for effective inference-time scaling? In this work, we provide a theoretical answer. We identify the Bellman error of the approximate reward model as the key quantity governing the effectiveness of SMC-based inference-time scaling. For a reasoning process of length $T$, we show that if the Bellman error of the approximate reward model is bounded by $O(1/T)$, then combining this reward model with SMC reduces the computational complexity of reasoning from exponential in $T$ to polynomial in $T$. This yields an exponential improvement in inference efficiency despite using only approximate rewards.
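The generate, evaluate, and resample loop described in the abstract can be sketched generically as follows. This is a toy illustration of a standard SMC loop, not the paper's algorithm: the names `smc_search`, `extend`, and `reward` are hypothetical, and weighting particles by the exponentiated approximate reward is an assumption made here for concreteness.

```python
import math
import random

def smc_search(extend, reward, num_particles, horizon, seed=0):
    """Toy Sequential Monte Carlo over partial trajectories.

    extend(partial, rng) -> next step to append (hypothetical proposal).
    reward(partial)      -> approximate score of a partial trajectory.
    """
    rng = random.Random(seed)
    particles = [[] for _ in range(num_particles)]
    for _ in range(horizon):
        # Generate: extend every partial trajectory by one step.
        particles = [p + [extend(p, rng)] for p in particles]
        # Evaluate: score each partial trajectory with the approximate reward.
        scores = [reward(p) for p in particles]
        top = max(scores)
        # Subtract the max before exponentiating for numerical stability.
        weights = [math.exp(s - top) for s in scores]
        # Resample: low-weight trajectories tend to be dropped,
        # high-weight ones tend to be duplicated.
        chosen = rng.choices(particles, weights=weights, k=num_particles)
        particles = [list(p) for p in chosen]
    return particles

# Toy usage: steps are bits, and the stand-in reward counts the ones.
final = smc_search(
    extend=lambda p, rng: rng.choice([0, 1]),
    reward=lambda p: float(sum(p)),
    num_particles=8,
    horizon=5,
)
print(final)
```

The total work is `num_particles * horizon` reward evaluations, which is the kind of polynomial budget the abstract contrasts with exhaustive search over all length-$T$ trajectories.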

RAGEN: Understanding Self-Evolution in LLM Agents via Multi-Turn Reinforcement Learning

Apr 24, 2025

Abstract: Training large language models (LLMs) as interactive agents presents unique challenges, including long-horizon decision making and interaction with stochastic environment feedback. While reinforcement learning (RL) has enabled progress in static tasks, multi-turn agent RL training remains underexplored. We propose StarPO (State-Thinking-Actions-Reward Policy Optimization), a general framework for trajectory-level agent RL, and introduce RAGEN, a modular system for training and evaluating LLM agents. Our study on three stylized environments reveals three core findings. First, our agent RL training shows a recurring mode, Echo Trap, characterized by reward variance cliffs and gradient spikes; we address this with StarPO-S, a stabilized variant with trajectory filtering, critic incorporation, and decoupled clipping. Second, we find that the shaping of RL rollouts benefits from diverse initial states, medium interaction granularity, and more frequent sampling. Third, we show that without fine-grained, reasoning-aware reward signals, agent reasoning hardly emerges through multi-turn RL, and agents may show shallow strategies or hallucinated thoughts. Code and environments are available at https://github.com/RAGEN-AI/RAGEN.

Physics-Informed Inference Time Scaling via Simulation-Calibrated Scientific Machine Learning

Apr 22, 2025

Abstract: High-dimensional partial differential equations (PDEs) pose significant computational challenges across fields ranging from quantum chemistry to economics and finance. Although scientific machine learning (SciML) techniques offer approximate solutions, they often suffer from bias and neglect crucial physical insights. Inspired by inference-time scaling strategies in language models, we propose Simulation-Calibrated Scientific Machine Learning (SCaSML), a physics-informed framework that dynamically refines and debiases the SciML predictions during inference by enforcing the physical laws. SCaSML leverages newly derived physical laws that quantify systematic errors and employs Monte Carlo solvers based on the Feynman-Kac and Elworthy-Bismut-Li formulas to dynamically correct the prediction. Both numerical and theoretical analyses confirm enhanced convergence rates via compute-optimal inference methods. Our numerical experiments demonstrate that SCaSML reduces errors by 20-50% compared to the base surrogate model, establishing it as the first algorithm to refine approximate solutions to high-dimensional PDEs during inference. Code of SCaSML is available at https://github.com/Francis-Fan-create/SCaSML.
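As background for the Monte Carlo solvers mentioned above, the simplest instance of the Feynman-Kac formula is the one-dimensional heat equation $u_t = \tfrac{1}{2} u_{xx}$ with $u(0, x) = g(x)$, whose solution is $u(t, x) = \mathbb{E}[g(x + W_t)]$ for a Brownian motion $W$. The sketch below estimates that expectation by sampling. It is a textbook illustration only, not the SCaSML correction procedure, and the function name `heat_solution_mc` and its parameters are hypothetical.

```python
import math
import random

def heat_solution_mc(g, x, t, num_samples=20000, seed=0):
    """Monte Carlo estimate of u(t, x) = E[g(x + W_t)].

    Solves u_t = 0.5 * u_xx with initial condition u(0, .) = g,
    using W_t ~ Normal(0, t), i.e. sqrt(t) times a standard normal.
    """
    rng = random.Random(seed)
    scale = math.sqrt(t)
    total = 0.0
    for _ in range(num_samples):
        total += g(x + scale * rng.gauss(0.0, 1.0))
    return total / num_samples

# For g(y) = y**2 the exact solution is u(t, x) = x**2 + t.
estimate = heat_solution_mc(lambda y: y * y, x=1.0, t=0.5)
print(estimate, "vs exact", 1.0 ** 2 + 0.5)
```

The estimator's cost depends on the number of samples rather than on a spatial grid, which is why sampling-based solvers of this kind are attractive in high dimensions.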

What is a Sketch-and-Precondition Derivation for Low-Rank Approximation? Inverse Power Error or Inverse Power Estimation?

Feb 11, 2025

Abstract: Randomized sketching accelerates large-scale numerical linear algebra by reducing computational complexity. While the traditional sketch-and-solve approach reduces the problem size directly through sketching, the sketch-and-precondition method leverages sketching to construct a computationally friendly preconditioner. This preconditioner improves the convergence speed of iterative solvers applied to the original problem, maintaining accuracy in the full space. Furthermore, the convergence rate of the solver improves at least linearly with the sketch size. Despite its potential, developing a sketch-and-precondition framework for randomized algorithms in low-rank matrix approximation remains an open challenge. We introduce the Error-Powered Sketched Inverse Iteration (EPSI) Method, which runs sketched Newton iteration on the Lagrange form, as a sketch-and-precondition variant for randomized low-rank approximation. Our method achieves theoretical guarantees, including a convergence rate that improves at least linearly with the sketch size.

Which Spaces can be Embedded in $L_p$-type Reproducing Kernel Banach Space? A Characterization via Metric Entropy

Oct 16, 2024

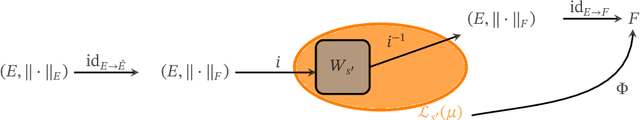

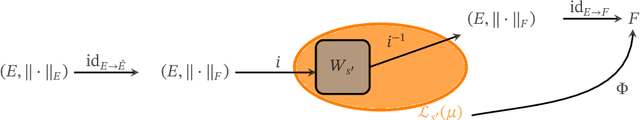

Abstract: In this paper, we establish a novel connection between the metric entropy growth and the embeddability of function spaces into reproducing kernel Hilbert/Banach spaces. Metric entropy characterizes the information complexity of function spaces and has implications for their approximability and learnability. Classical results show that embedding a function space into a reproducing kernel Hilbert space (RKHS) implies a bound on its metric entropy growth. Surprisingly, we prove a \textbf{converse}: a bound on the metric entropy growth of a function space allows its embedding into an $L_p$-type Reproducing Kernel Banach Space (RKBS). This shows that the $L_p$-type RKBS provides a broad modeling framework for learnable function classes with controlled metric entropies. Our results shed new light on the power and limitations of kernel methods for learning complex function spaces.

Which Spaces can be Embedded in $\mathcal{L}_p$-type Reproducing Kernel Banach Space? A Characterization via Metric Entropy

Oct 14, 2024

Abstract: In this paper, we establish a novel connection between the metric entropy growth and the embeddability of function spaces into reproducing kernel Hilbert/Banach spaces. Metric entropy characterizes the information complexity of function spaces and has implications for their approximability and learnability. Classical results show that embedding a function space into a reproducing kernel Hilbert space (RKHS) implies a bound on its metric entropy growth. Surprisingly, we prove a \textbf{converse}: a bound on the metric entropy growth of a function space allows its embedding into an $\mathcal{L}_p$-type Reproducing Kernel Banach Space (RKBS). This shows that the $\mathcal{L}_p$-type RKBS provides a broad modeling framework for learnable function classes with controlled metric entropies. Our results shed new light on the power and limitations of kernel methods for learning complex function spaces.

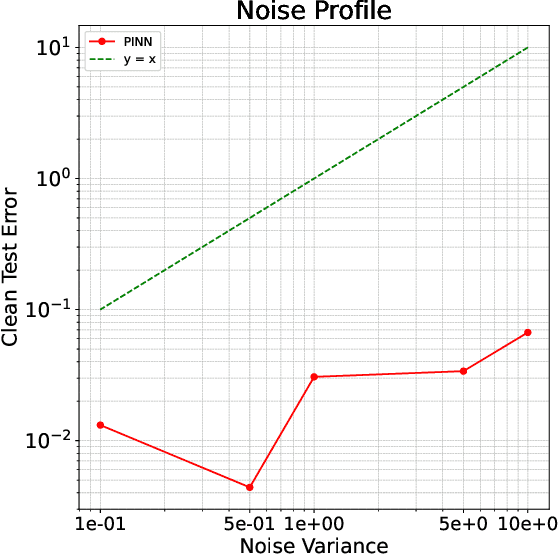

Benign overfitting in Fixed Dimension via Physics-Informed Learning with Smooth Inductive Bias

Jun 16, 2024

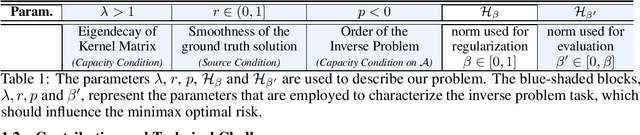

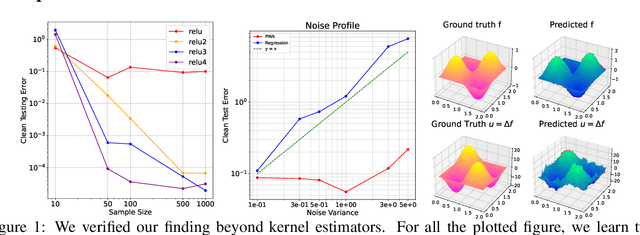

Abstract: Recent advances in machine learning have inspired a surge of research into reconstructing specific quantities of interest from measurements that comply with certain physical laws. These efforts focus on inverse problems that are governed by partial differential equations (PDEs). In this work, we develop an asymptotic Sobolev norm learning curve for kernel ridge(less) regression when addressing (elliptic) linear inverse problems. Our results show that the PDE operators in the inverse problem can stabilize the variance and even lead to benign overfitting for fixed-dimensional problems, exhibiting behavior different from that of regression problems. In addition, our investigation demonstrates the impact of various inductive biases introduced by minimizing different Sobolev norms as a form of implicit regularization. For the regularized least squares estimator, we find that all considered inductive biases can achieve the optimal convergence rate, provided the regularization parameter is appropriately chosen. The convergence rate is in fact independent of the choice of (smooth enough) inductive bias for both ridge and ridgeless regression. Surprisingly, our smoothness requirement recovers the condition found in the Bayesian setting and extends the conclusion to minimum-norm interpolation estimators.

Orthogonal Bootstrap: Efficient Simulation of Input Uncertainty

May 01, 2024

Abstract: Bootstrap is a popular methodology for simulating input uncertainty. However, it can be computationally expensive when the number of samples is large. We propose a new approach called \textbf{Orthogonal Bootstrap} that reduces the number of required Monte Carlo replications. We decompose the target being simulated into two parts: the \textit{non-orthogonal part}, which has a closed-form result known as the Infinitesimal Jackknife, and the \textit{orthogonal part}, which is easier to simulate. We theoretically and numerically show that Orthogonal Bootstrap significantly reduces the computational cost of Bootstrap while improving empirical accuracy and maintaining the same width of the constructed interval.
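For context, the baseline that the abstract aims to make cheaper is the standard bootstrap, whose cost grows with the number of Monte Carlo replications. The sketch below shows a plain percentile bootstrap interval for a sample mean. It is not the Orthogonal Bootstrap decomposition, and the function name `bootstrap_mean_interval` and its parameters are hypothetical.

```python
import random

def bootstrap_mean_interval(data, num_replications=1000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the mean of `data`.

    Each replication resamples the data with replacement and records the
    resampled mean; the interval is read off the sorted replicate means.
    """
    rng = random.Random(seed)
    n = len(data)
    means = []
    for _ in range(num_replications):
        resample = [data[rng.randrange(n)] for _ in range(n)]
        means.append(sum(resample) / n)
    means.sort()
    lower = means[int((alpha / 2) * num_replications)]
    upper = means[int((1 - alpha / 2) * num_replications) - 1]
    return lower, upper

data = [float(i) for i in range(1, 11)]
print(bootstrap_mean_interval(data))
```

Every replication costs a full pass over the data, so the total cost is proportional to `num_replications * len(data)`; this is the expense the abstract refers to when the number of samples is large.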
