Xinyang Wang

Marketing and Commercialization Center, JD.com

Transcending Classical Neural Network Boundaries: A Quantum-Classical Synergistic Paradigm for Seismic Data Processing

Mar 25, 2026

Abstract: In recent years, a number of neural-network (NN) methods have performed well in seismic data processing tasks such as denoising, interpolation, and frequency-band extension. However, these methods rely on stacked perceptrons and standard activation functions, which limits the representational capacity of deep-learning models and makes it difficult to capture the complex, non-stationary dynamics of seismic wavefields. Unlike classical perceptron-stacked NNs, which are fundamentally confined to real-valued Euclidean spaces, quantum NNs leverage the exponential state space of quantum mechanics to map features into high-dimensional Hilbert spaces, transcending the representational boundary of classical NNs. Based on this insight, we propose a quantum-classical synergistic generative adversarial network (QC-GAN) for seismic data processing, which serves as the first application of quantum NNs in seismic exploration. In QC-GAN, a quantum pathway exploits high-order feature correlations, while a convolutional pathway specializes in extracting the waveform structures of seismic wavefields. Furthermore, we design a QC feature complementarity loss to enforce feature orthogonality in the proposed QC-GAN. This loss function ensures that the two pathways encode non-overlapping information, enriching the capacity of feature representation. Overall, by synergistically integrating the quantum and convolutional pathways, the proposed QC-GAN breaks the representational bottleneck inherent in classical GANs. Experimental results on denoising and interpolation tasks demonstrate that QC-GAN preserves wavefield continuity and amplitude-phase information under complex noise conditions.
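One way to read the feature-orthogonality idea in the QC-GAN abstract is as a penalty on the cosine similarity between the features of the two pathways. The sketch below is an assumption, not the paper's formulation: the function name `complementarity_loss`, the choice of squared cosine similarity, and the list-of-vectors batch layout are all hypothetical.

```python
import math


def complementarity_loss(quantum_feats, conv_feats, eps=1e-12):
    """Mean squared cosine similarity between paired feature vectors.

    Hypothetical stand-in for a feature complementarity loss: the value is
    near 0 when each pair of vectors is orthogonal and near 1 when each pair
    is parallel, so minimizing it pushes the two pathways apart.
    """
    if len(quantum_feats) != len(conv_feats) or not quantum_feats:
        raise ValueError("both batches must be non-empty and the same length")
    total = 0.0
    for q, c in zip(quantum_feats, conv_feats):
        dot = sum(a * b for a, b in zip(q, c))
        norm_q = math.sqrt(sum(a * a for a in q))
        norm_c = math.sqrt(sum(b * b for b in c))
        cos = dot / (norm_q * norm_c + eps)  # eps guards against zero vectors
        total += cos * cos
    return total / len(quantum_feats)


# Orthogonal features give a loss near 0; parallel features give a loss near 1.
orthogonal = complementarity_loss([[1.0, 0.0]], [[0.0, 1.0]])
parallel = complementarity_loss([[1.0, 2.0]], [[2.0, 4.0]])
```

Squaring the cosine treats anti-parallel features as overlapping too, which matches the stated goal of non-overlapping information; whether the paper does the same is not stated in the abstract.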

Unifying Language-Action Understanding and Generation for Autonomous Driving

Mar 02, 2026

Abstract: Vision-Language-Action (VLA) models are emerging as a promising paradigm for end-to-end autonomous driving, valued for their potential to leverage world knowledge and reason about complex driving scenes. However, existing methods suffer from two critical limitations: a persistent misalignment between language instructions and action outputs, and the inherent inefficiency of typical auto-regressive action generation. In this paper, we introduce LinkVLA, a novel architecture that directly addresses these challenges to enhance both alignment and efficiency. First, we establish a structural link by unifying language and action tokens into a shared discrete codebook, processed within a single multi-modal model. This structurally enforces cross-modal consistency from the ground up. Second, to create a deep semantic link, we introduce an auxiliary action understanding objective that trains the model to generate descriptive captions from trajectories, fostering a bidirectional language-action mapping. Finally, we replace the slow, step-by-step generation with a two-step coarse-to-fine generation method (C2F) that efficiently decodes the action sequence, reducing inference time by 86%. Experiments on closed-loop driving benchmarks show consistent gains in instruction-following accuracy and driving performance, alongside reduced inference latency.
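The shared discrete codebook described in the LinkVLA abstract can be pictured as action tokens that occupy ID slots directly after the text vocabulary. The sketch below only illustrates that idea under stated assumptions: the vocabulary size, bin count, value range, and function names are hypothetical, and the paper's actual tokenizer may differ.

```python
TEXT_VOCAB_SIZE = 32000       # hypothetical size of the language vocabulary
NUM_ACTION_BINS = 256         # hypothetical number of discrete action bins
ACTION_RANGE = (-10.0, 10.0)  # hypothetical range of one action coordinate


def action_to_token(value):
    """Quantize a continuous action value into a token ID placed after the text IDs."""
    lo, hi = ACTION_RANGE
    clipped = min(max(value, lo), hi)
    bin_index = int((clipped - lo) / (hi - lo) * NUM_ACTION_BINS)
    bin_index = min(bin_index, NUM_ACTION_BINS - 1)  # the upper edge maps to the last bin
    return TEXT_VOCAB_SIZE + bin_index


def token_to_action(token_id):
    """Map an action token ID back to the center of its bin."""
    if not TEXT_VOCAB_SIZE <= token_id < TEXT_VOCAB_SIZE + NUM_ACTION_BINS:
        raise ValueError("not an action token")
    lo, hi = ACTION_RANGE
    width = (hi - lo) / NUM_ACTION_BINS
    return lo + (token_id - TEXT_VOCAB_SIZE + 0.5) * width


# A mixed sequence: two made-up text token IDs followed by two action tokens.
sequence = [101, 2057, action_to_token(1.23), action_to_token(-4.5)]
```

Because both modalities live in one ID space, a single model can read and emit either kind of token; the reconstruction error of an action value is bounded by half a bin width.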

A Consistency-Improved LiDAR-Inertial Bundle Adjustment

Feb 06, 2026

Abstract: Simultaneous Localization and Mapping (SLAM) using 3D LiDAR has emerged as a cornerstone of autonomous navigation in robotics. While feature-based SLAM systems have achieved impressive results by leveraging edge and planar structures, they often suffer from estimator inconsistency associated with feature parameterization and estimated covariance. In this work, we present a consistency-improved LiDAR-inertial bundle adjustment (BA) with a tailored parameterization and estimator. First, we propose a stereographic-projection representation that parameterizes the planar and edge features, and conduct a comprehensive observability analysis to support its integration with a consistent estimator. Second, we implement a LiDAR-inertial BA with a Maximum a Posteriori (MAP) formulation and First-Estimate Jacobians (FEJ) to preserve the accuracy of the estimated covariance and the observability properties of the system. Last, we apply our proposed BA method to a LiDAR-inertial odometry system.
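To illustrate why a stereographic projection suits direction-like feature parameters, the sketch below maps a unit vector (for example a plane normal) to two unconstrained coordinates and back. It is the textbook projection from the south pole, offered as an assumption about the general idea; the paper's exact parameterization of planar and edge features is not given in the abstract, and the function names are hypothetical.

```python
import math


def stereographic_project(n):
    """Project a unit vector n = (x, y, z) from the south pole onto the plane z = 0."""
    x, y, z = n
    if math.isclose(z, -1.0):
        raise ValueError("the projection is undefined at the south pole")
    return (x / (1.0 + z), y / (1.0 + z))


def stereographic_unproject(uv):
    """Recover the unit vector from its two stereographic coordinates."""
    u, v = uv
    s = u * u + v * v
    return (2.0 * u / (1.0 + s), 2.0 * v / (1.0 + s), (1.0 - s) / (1.0 + s))


# Round trip on a normalized direction.
raw = (1.0, 2.0, 2.0)
length = math.sqrt(sum(c * c for c in raw))
normal = tuple(c / length for c in raw)
recovered = stereographic_unproject(stereographic_project(normal))
```

The two coordinates carry exactly the two degrees of freedom of a direction, so an optimizer can update them freely without a unit-norm constraint.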

Learning-Enhanced Safeguard Control for High-Relative-Degree Systems: Robust Optimization under Disturbances and Faults

Jan 26, 2025

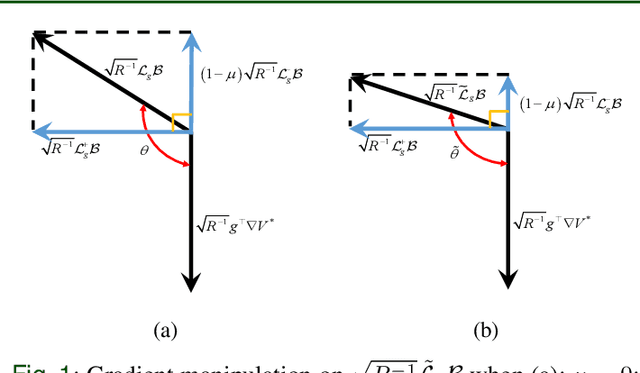

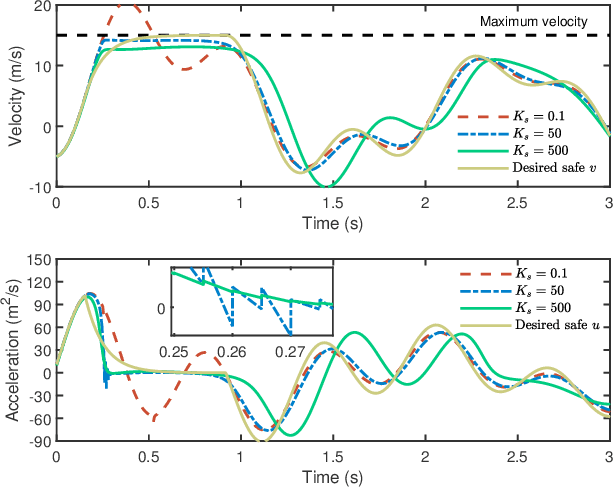

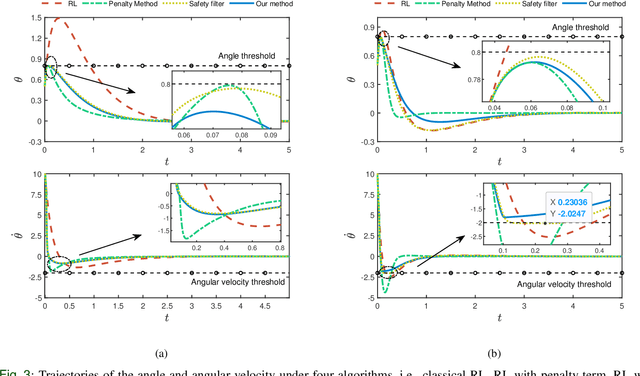

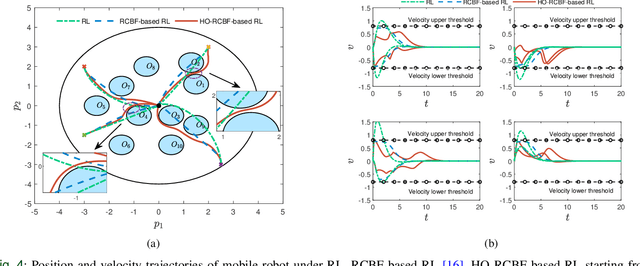

Abstract: Merely pursuing performance may compromise safety, while a conservative policy for safe exploration degrades performance. How to balance safety and performance in learning-based control problems is an interesting yet challenging issue. This paper aims to enhance system performance with a safety guarantee when solving reinforcement learning (RL)-based optimal control problems for nonlinear systems subject to high-relative-degree state constraints and unknown time-varying disturbances and actuator faults. First, to combine control barrier functions (CBFs) with RL, a new type of CBF, termed the high-order reciprocal control barrier function (HO-RCBF), is proposed to handle high-relative-degree constraints during the learning process. Then, the concept of gradient similarity is proposed to quantify the relationship between the gradient of safety and the gradient of performance. Finally, gradient manipulation and adaptive mechanisms are introduced in the safe RL framework to enhance performance with a safety guarantee. Two simulation examples illustrate that the proposed safe RL framework can address high-relative-degree constraints, enhance safety robustness, and improve system performance.
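The gradient-similarity idea in the safeguard-control abstract can be sketched with cosine similarity, followed by a simple conflict-resolution step. Both pieces are assumptions: the abstract does not define its similarity measure, and the projection below is the well-known PCGrad-style projection swapped in for illustration, not the paper's gradient manipulation. All function names are hypothetical.

```python
import math


def gradient_similarity(g_perf, g_safe, eps=1e-12):
    """Cosine similarity between the performance and safety gradients."""
    dot = sum(p * s for p, s in zip(g_perf, g_safe))
    norm_p = math.sqrt(sum(p * p for p in g_perf))
    norm_s = math.sqrt(sum(s * s for s in g_safe))
    return dot / (norm_p * norm_s + eps)


def resolve_conflict(g_perf, g_safe):
    """Drop the part of g_perf that opposes g_safe (PCGrad-style projection).

    If the two gradients do not conflict (non-negative dot product), the
    performance gradient is returned unchanged.
    """
    dot = sum(p * s for p, s in zip(g_perf, g_safe))
    if dot >= 0.0:
        return list(g_perf)
    scale = dot / sum(s * s for s in g_safe)
    return [p - scale * s for p, s in zip(g_perf, g_safe)]


g_perf = [1.0, 0.0]
g_safe = [-1.0, 1.0]
similarity = gradient_similarity(g_perf, g_safe)  # negative: the objectives conflict
adjusted = resolve_conflict(g_perf, g_safe)       # orthogonal to g_safe afterwards
```

A negative similarity flags updates that would trade safety for performance; after the projection, the adjusted performance step no longer moves against the safety gradient.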

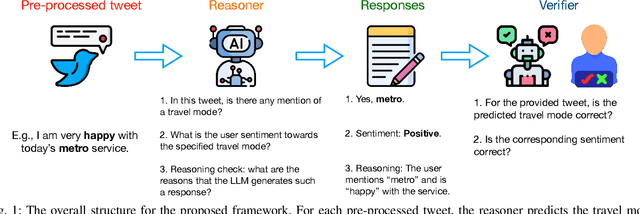

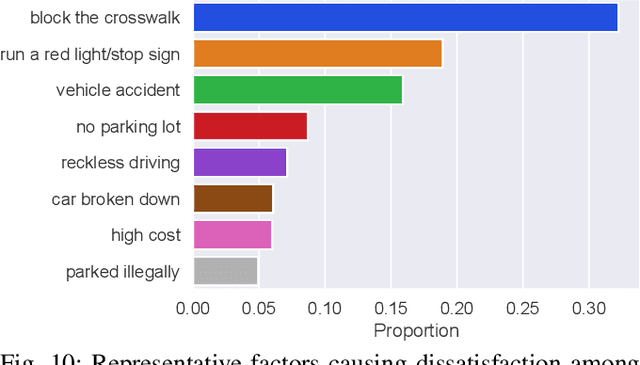

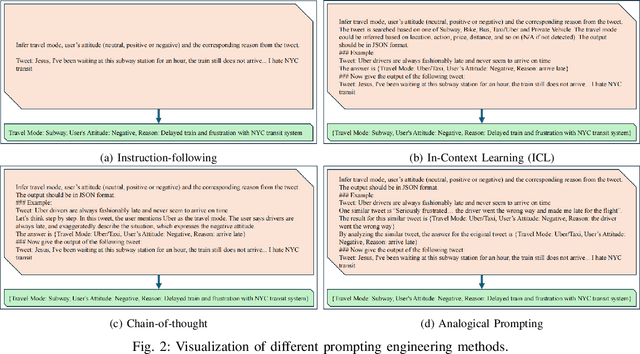

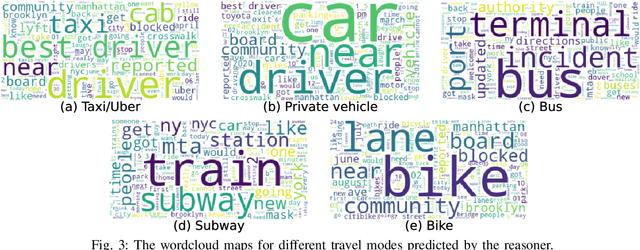

From Twitter to Reasoner: Understand Mobility Travel Modes and Sentiment Using Large Language Models

Nov 04, 2024

Abstract: Social media has become an important platform for people to express their opinions about transportation services and infrastructure, which holds the potential for researchers to gain a deeper understanding of individuals' travel choices, for transportation operators to improve service quality, and for policymakers to regulate mobility services. A significant challenge, however, lies in the unstructured nature of social media data. In other words, textual data such as social media posts are not labeled, and large-scale manual annotation is cost-prohibitive. In this study, we introduce a novel methodological framework that uses Large Language Models (LLMs) to infer the travel modes mentioned in social media posts and to reason about people's attitudes toward those travel modes, without the need for manual annotation. We compare different LLMs along with various prompt engineering methods in light of human assessment and LLM verification. We find that most social media posts express negative rather than positive sentiments. We thus identify the factors contributing to these negative posts and, accordingly, propose recommendations to traffic operators and policymakers.

MePT: Multi-Representation Guided Prompt Tuning for Vision-Language Model

Aug 19, 2024

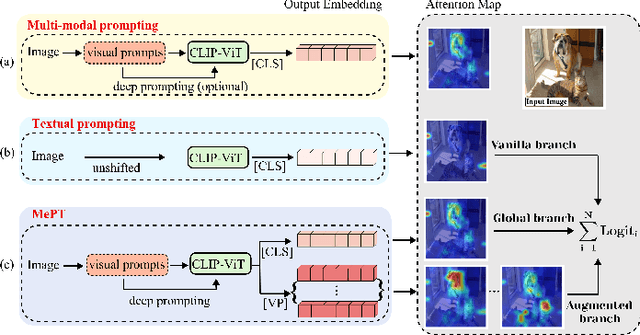

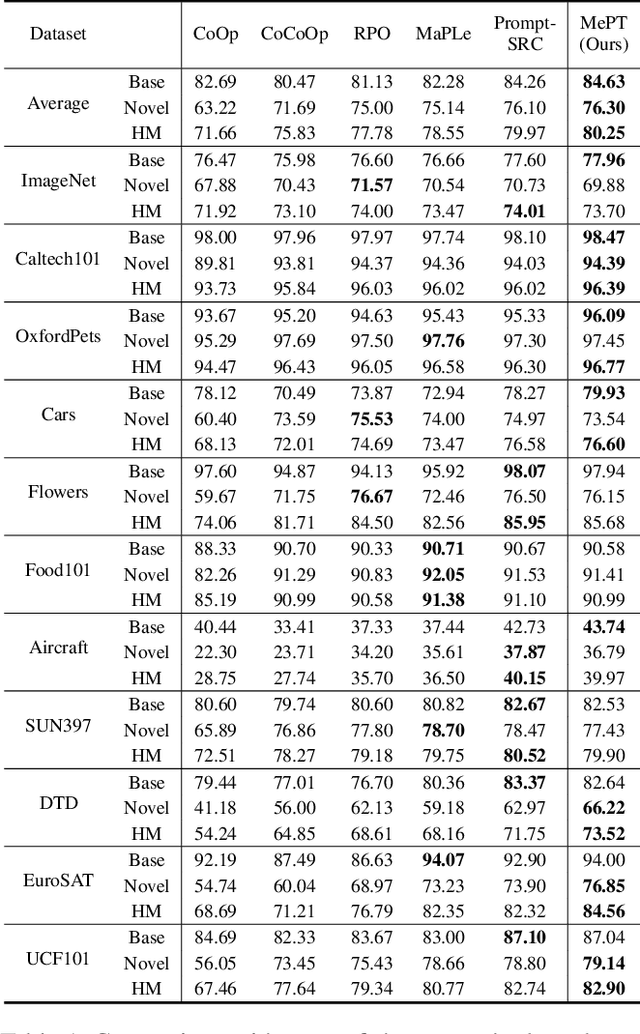

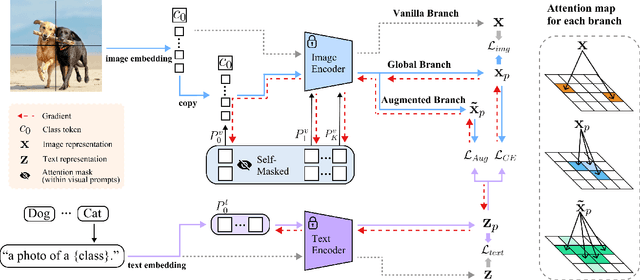

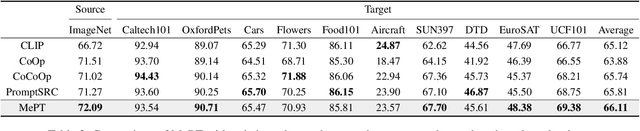

Abstract: Recent advancements in pre-trained Vision-Language Models (VLMs) have highlighted the significant potential of prompt tuning for adapting these models to a wide range of downstream tasks. However, existing prompt tuning methods typically map an image to a single representation, limiting the model's ability to capture the diverse ways an image can be described. To address this limitation, we investigate the impact of visual prompts on the model's generalization capability and introduce a novel method termed Multi-Representation Guided Prompt Tuning (MePT). Specifically, MePT employs a three-branch framework that focuses on diverse salient regions, uncovering the inherent knowledge within images, which is crucial for robust generalization. Further, we employ efficient self-ensemble techniques to integrate these versatile image representations, allowing MePT to learn conditional, marginal, and fine-grained distributions effectively. We validate the effectiveness of MePT through extensive experiments, demonstrating significant improvements on both base-to-novel class prediction and domain generalization tasks.

Pruner: An Efficient Cross-Platform Tensor Compiler with Dual Awareness

Feb 04, 2024

Abstract: Tensor program optimization on Deep Learning Accelerators (DLAs) is critical for efficient model deployment. Although search-based Deep Learning Compilers (DLCs) have achieved significant performance gains compared to manual methods, they still suffer from the persistent challenges of low search efficiency and poor cross-platform adaptability. In this paper, we propose $\textbf{Pruner}$, following hardware/software co-design principles to hierarchically boost tensor program optimization. Pruner comprises two primary components: a Parameterized Static Analyzer ($\textbf{PSA}$) and a Pattern-aware Cost Model ($\textbf{PaCM}$). The former serves as a hardware-aware and formulaic performance analysis tool, guiding the pruning of the search space, while the latter enables the performance prediction of tensor programs according to the critical data-flow patterns. Furthermore, to ensure effective cross-platform adaptation, we design a Momentum Transfer Learning ($\textbf{MTL}$) strategy using a Siamese network, which establishes a bidirectional feedback mechanism to improve the robustness of the pre-trained cost model. The extensive experimental results demonstrate the effectiveness and advancement of the proposed Pruner in various tensor program tuning tasks across both online and offline scenarios, with low resource overhead. The code is available at https://github.com/qiaolian9/Pruner.

Rethinking Large-scale Pre-ranking System: Entire-chain Cross-domain Models

Oct 12, 2023

Abstract: Industrial systems such as recommender systems and online advertising have been widely equipped with multi-stage architectures, which are divided into several cascaded modules, including matching, pre-ranking, ranking, and re-ranking. Pre-ranking is a critical bridge between matching and ranking, yet existing pre-ranking approaches mainly suffer from the sample selection bias (SSB) problem because they ignore the entire-chain data dependence, resulting in sub-optimal performance. In this paper, we rethink the pre-ranking system from the perspective of the entire sample space and propose Entire-chain Cross-domain Models (ECM), which leverage samples from all cascaded stages to effectively alleviate the SSB problem. In addition, we design a fine-grained neural structure named ECMM to further improve pre-ranking accuracy. Specifically, we propose a cross-domain multi-tower neural network to comprehensively predict the result of each stage, and introduce a sub-network routing strategy with $L_0$ regularization to reduce computational costs. Evaluations on real-world large-scale traffic logs demonstrate that our pre-ranking models outperform SOTA methods while keeping time consumption within an acceptable level, achieving a better trade-off between efficiency and effectiveness.

* 5 pages, 2 figures
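The $L_0$-regularized sub-network routing mentioned in the ECM abstract is commonly made differentiable with hard-concrete gates (Louizos, Welling, and Kingma, 2018). The sketch below assumes that technique purely for illustration; the abstract does not say which relaxation the authors use, and the constants and function names here are the usual defaults and hypothetical names, not values from the paper.

```python
import math

# Usual hard-concrete defaults (assumed, not taken from the ECM paper).
GAMMA, ZETA, BETA = -0.1, 1.1, 2.0 / 3.0


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def expected_l0(log_alphas):
    """Expected number of open gates: the differentiable L0 penalty."""
    shift = BETA * math.log(-GAMMA / ZETA)
    return sum(sigmoid(a - shift) for a in log_alphas)


def deterministic_gate(log_alpha):
    """Inference-time gate: stretched sigmoid clamped to the interval [0, 1]."""
    stretched = sigmoid(log_alpha) * (ZETA - GAMMA) + GAMMA
    return min(1.0, max(0.0, stretched))


# Three hypothetical routing gates: one closed, one undecided, one open.
gate_params = [-10.0, 0.0, 10.0]
gates = [deterministic_gate(a) for a in gate_params]
penalty = expected_l0(gate_params)
```

Gates that clamp to exactly zero let the corresponding sub-network be skipped at serving time, which is how a penalty of this kind can reduce computation rather than only shrink weights.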
