Rosie Jones

Cem Mil Podcasts: A Spoken Portuguese Document Corpus

Sep 23, 2022

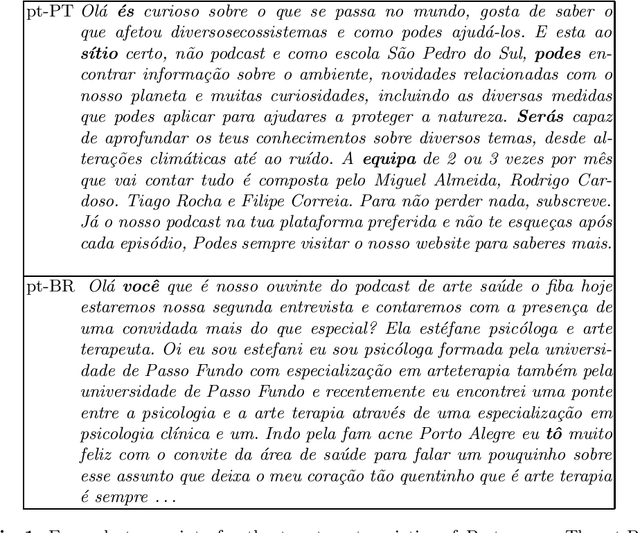

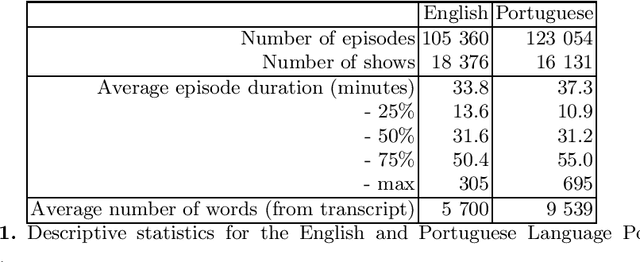

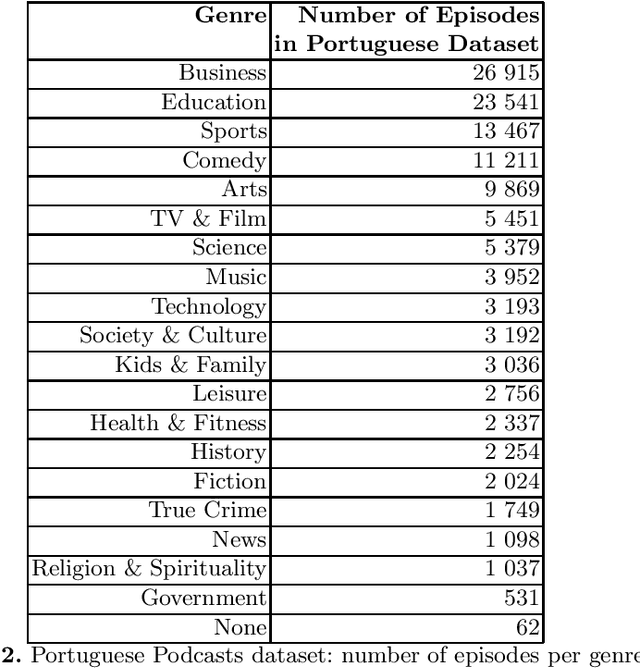

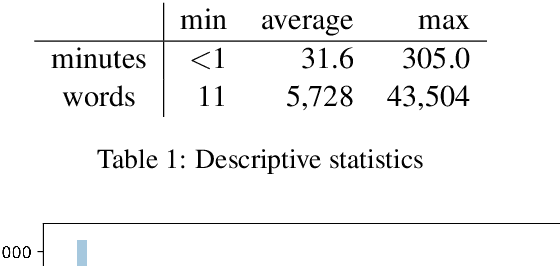

Abstract:This document describes the Portuguese language podcast dataset released by Spotify for academic research purposes. We give an overview of how the data was sampled, some basic statistics over the collection, as well as brief information of distribution over Brazilian and Portuguese dialects.

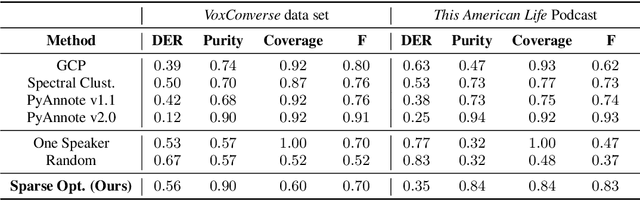

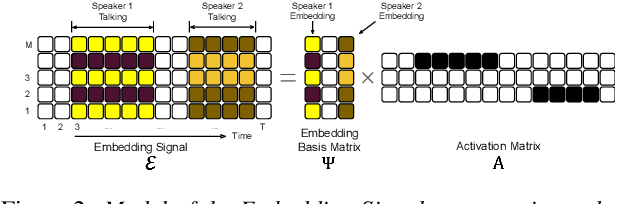

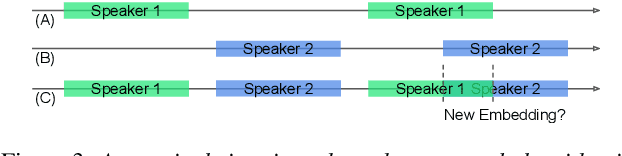

Unsupervised Speaker Diarization that is Agnostic to Language, Overlap-Aware, and Tuning Free

Jul 25, 2022

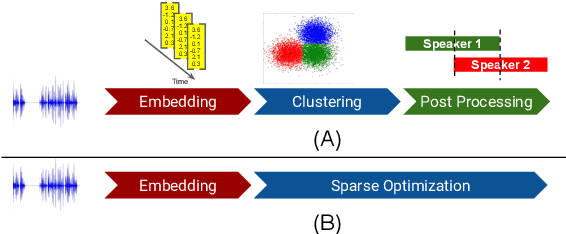

Abstract:Podcasts are conversational in nature and speaker changes are frequent -- requiring speaker diarization for content understanding. We propose an unsupervised technique for speaker diarization without relying on language-specific components. The algorithm is overlap-aware and does not require information about the number of speakers. Our approach shows 79% improvement on purity scores (34% on F-score) against the Google Cloud Platform solution on podcast data.

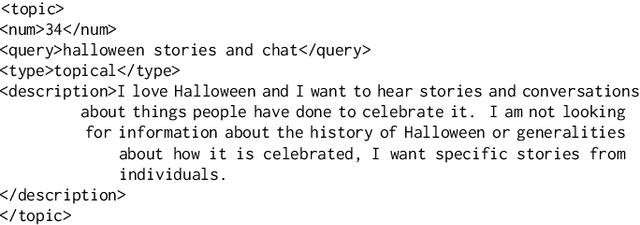

Podcast Metadata and Content: Episode Relevance andAttractiveness in Ad Hoc Search

Aug 25, 2021

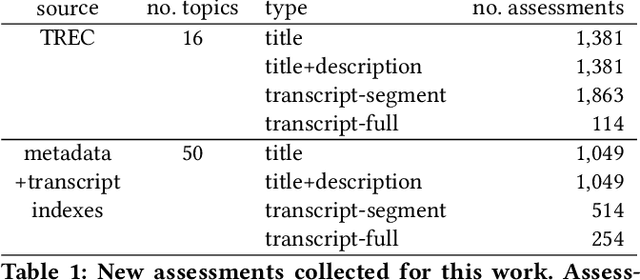

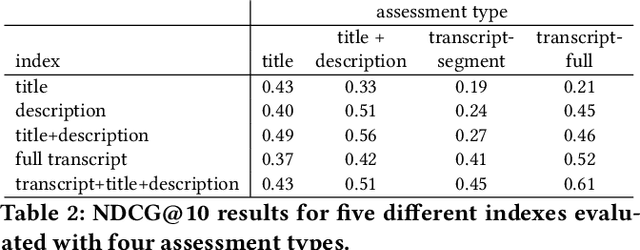

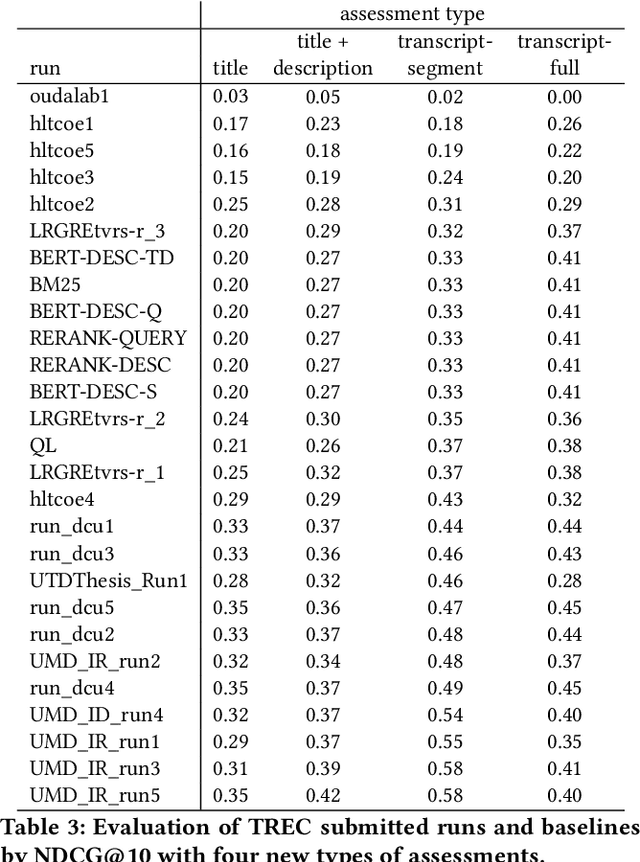

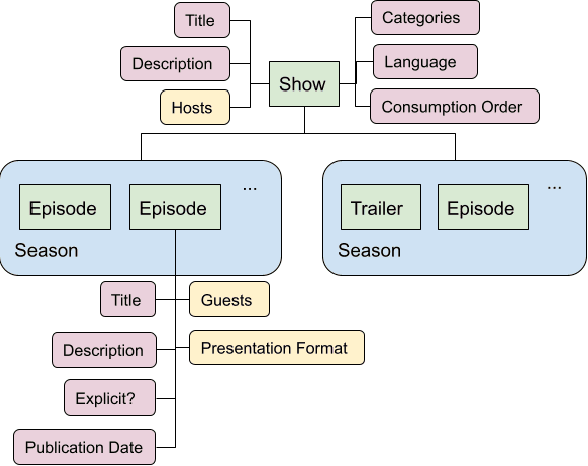

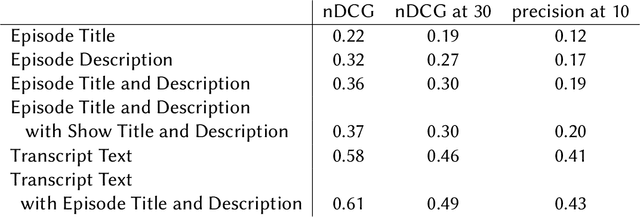

Abstract:Rapidly growing online podcast archives contain diverse content on a wide range of topics. These archives form an important resource for entertainment and professional use, but their value can only be realized if users can rapidly and reliably locate content of interest. Search for relevant content can be based on metadata provided by content creators, but also on transcripts of the spoken content itself. Excavating relevant content from deep within these audio streams for diverse types of information needs requires varying the approach to systems prototyping. We describe a set of diverse podcast information needs and different approaches to assessing retrieved content for relevance. We use these information needs in an investigation of the utility and effectiveness of these information sources. Based on our analysis, we recommend approaches for indexing and retrieving podcast content for ad hoc search.

Current Challenges and Future Directions in Podcast Information Access

Jun 17, 2021

Abstract:Podcasts are spoken documents across a wide-range of genres and styles, with growing listenership across the world, and a rapidly lowering barrier to entry for both listeners and creators. The great strides in search and recommendation in research and industry have yet to see impact in the podcast space, where recommendations are still largely driven by word of mouth. In this perspective paper, we highlight the many differences between podcasts and other media, and discuss our perspective on challenges and future research directions in the domain of podcast information access.

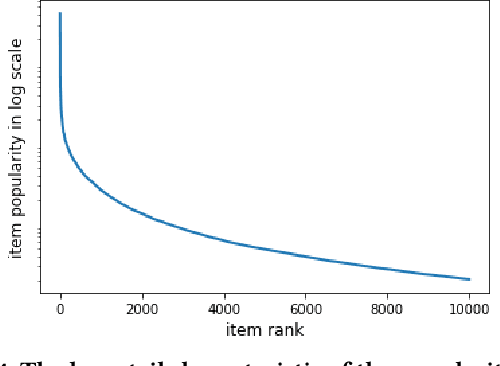

Modeling Language Usage and Listener Engagement in Podcasts

Jun 11, 2021

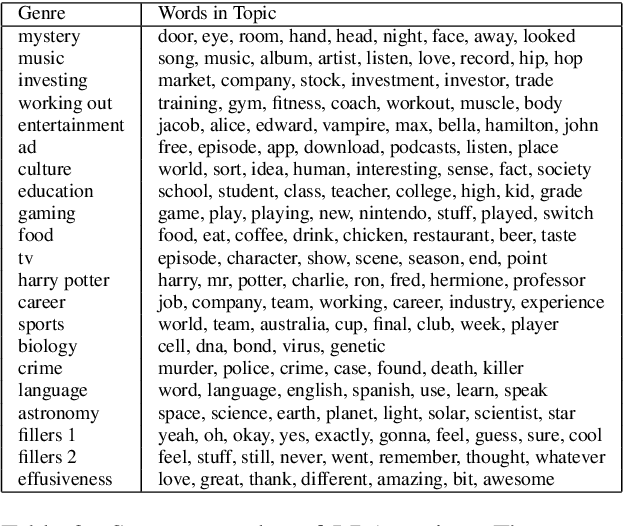

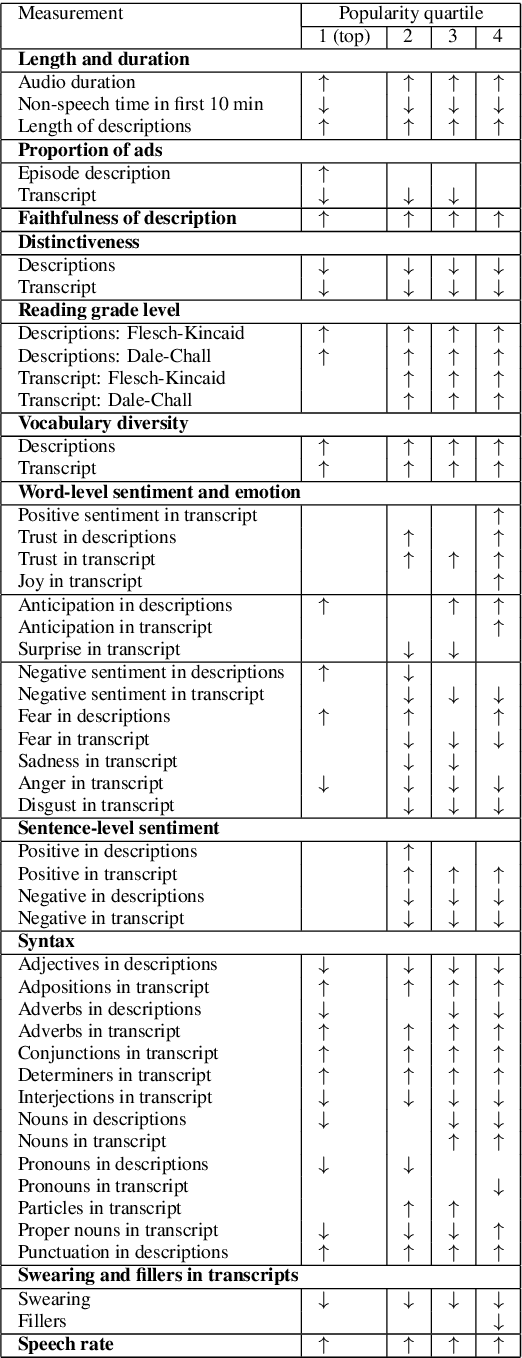

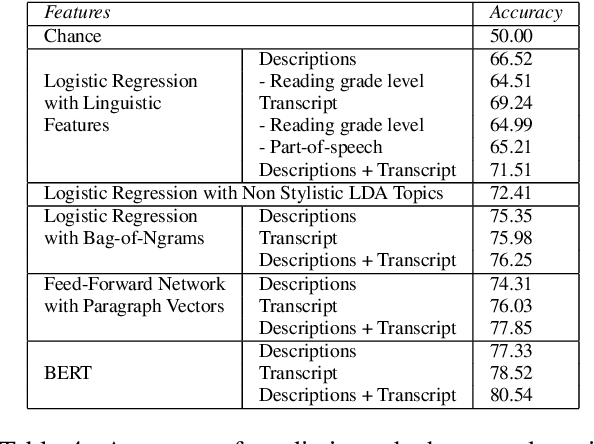

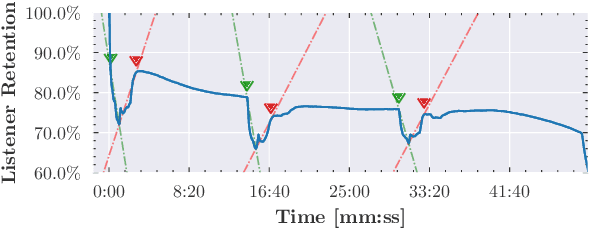

Abstract:While there is an abundance of popular writing targeted to podcast creators on how to speak in ways that engage their listeners, there has been little data-driven analysis of podcasts that relates linguistic style with listener engagement. In this paper, we investigate how various factors -- vocabulary diversity, distinctiveness, emotion, and syntax, among others -- correlate with engagement, based on analysis of the creators' written descriptions and transcripts of the audio. We build models with different textual representations, and show that the identified features are highly predictive of engagement. Our analysis tests popular wisdom about stylistic elements in high-engagement podcasts, corroborating some aspects, and adding new perspectives on others.

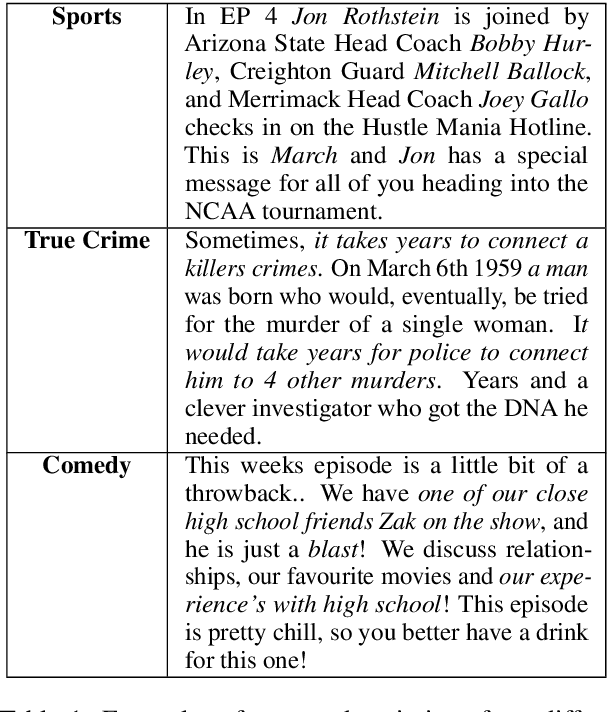

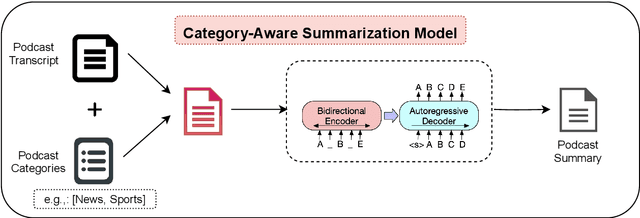

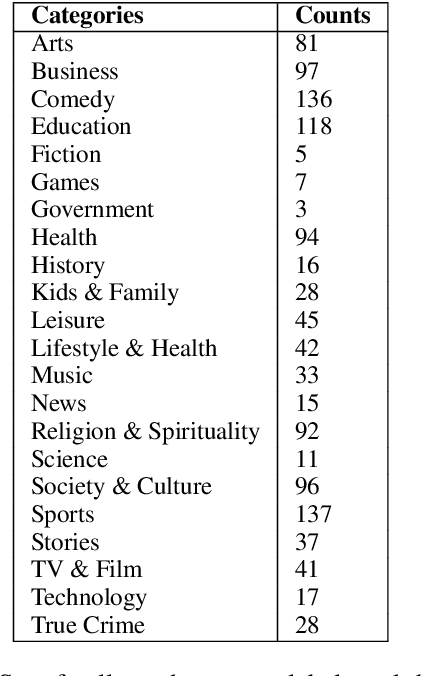

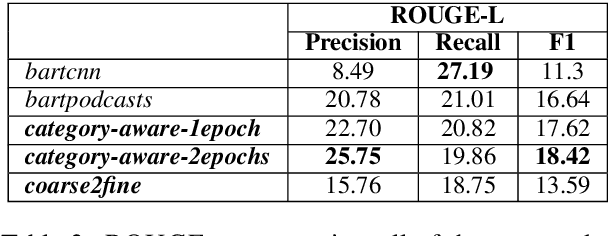

Spotify at TREC 2020: Genre-Aware Abstractive Podcast Summarization

Apr 07, 2021

Abstract:This paper contains the description of our submissions to the summarization task of the Podcast Track in TREC (the Text REtrieval Conference) 2020. The goal of this challenge was to generate short, informative summaries that contain the key information present in a podcast episode using automatically generated transcripts of the podcast audio. Since podcasts vary with respect to their genre, topic, and granularity of information, we propose two summarization models that explicitly take genre and named entities into consideration in order to generate summaries appropriate to the style of the podcasts. Our models are abstractive, and supervised using creator-provided descriptions as ground truth summaries. The results of the submitted summaries show that our best model achieves an aggregate quality score of 1.58 in comparison to the creator descriptions and a baseline abstractive system which both score 1.49 (an improvement of 9%) as assessed by human evaluators.

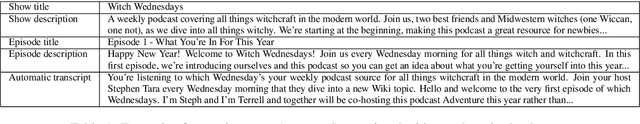

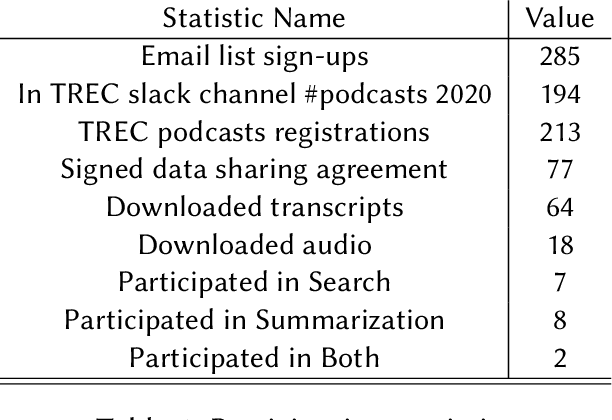

TREC 2020 Podcasts Track Overview

Mar 29, 2021

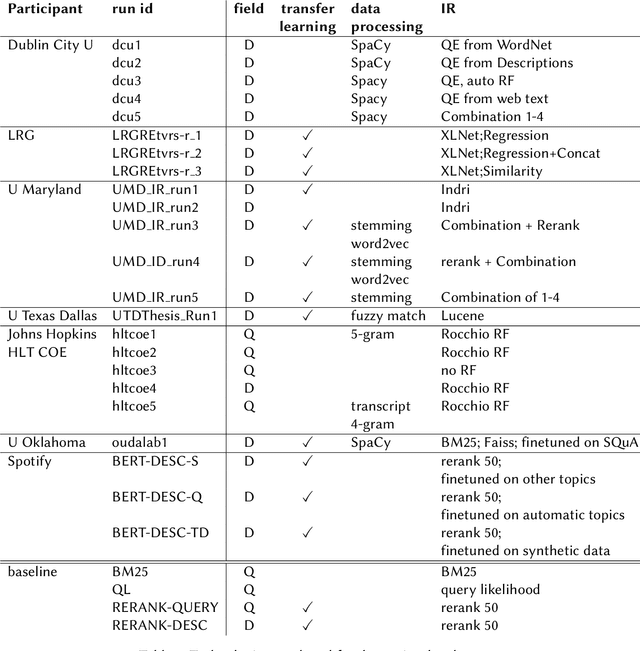

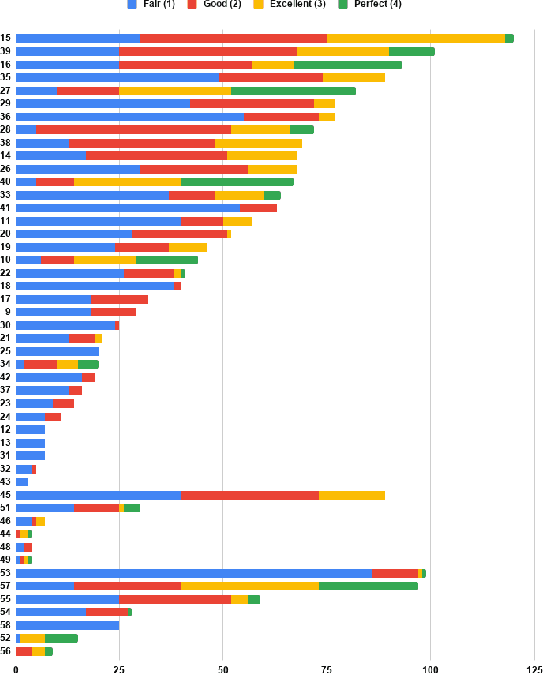

Abstract:The Podcast Track is new at the Text Retrieval Conference (TREC) in 2020. The podcast track was designed to encourage research into podcasts in the information retrieval and NLP research communities. The track consisted of two shared tasks: segment retrieval and summarization, both based on a dataset of over 100,000 podcast episodes (metadata, audio, and automatic transcripts) which was released concurrently with the track. The track generated considerable interest, attracted hundreds of new registrations to TREC and fifteen teams, mostly disjoint between search and summarization, made final submissions for assessment. Deep learning was the dominant experimental approach for both search experiments and summarization. This paper gives an overview of the tasks and the results of the participants' experiments. The track will return to TREC 2021 with the same two tasks, incorporating slight modifications in response to participant feedback.

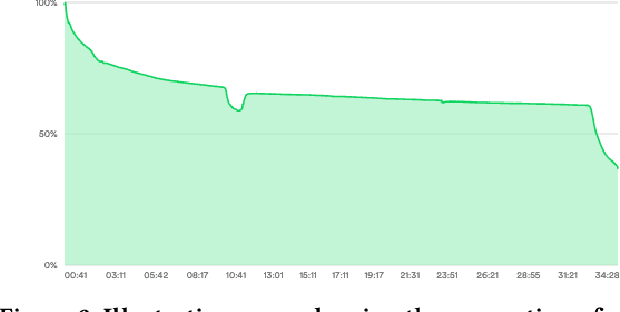

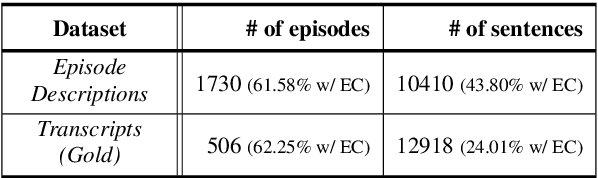

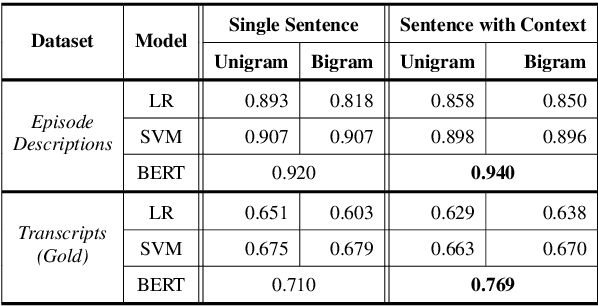

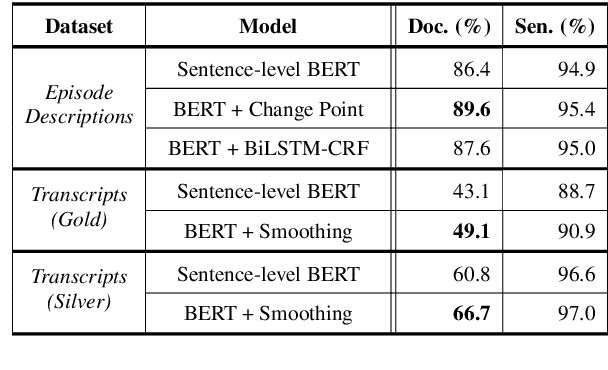

Detecting Extraneous Content in Podcasts

Mar 03, 2021

Abstract:Podcast episodes often contain material extraneous to the main content, such as advertisements, interleaved within the audio and the written descriptions. We present classifiers that leverage both textual and listening patterns in order to detect such content in podcast descriptions and audio transcripts. We demonstrate that our models are effective by evaluating them on the downstream task of podcast summarization and show that we can substantively improve ROUGE scores and reduce the extraneous content generated in the summaries.

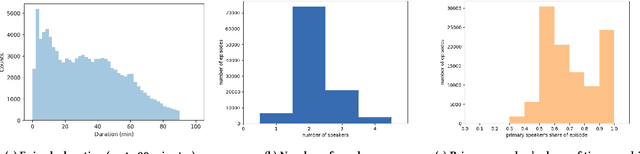

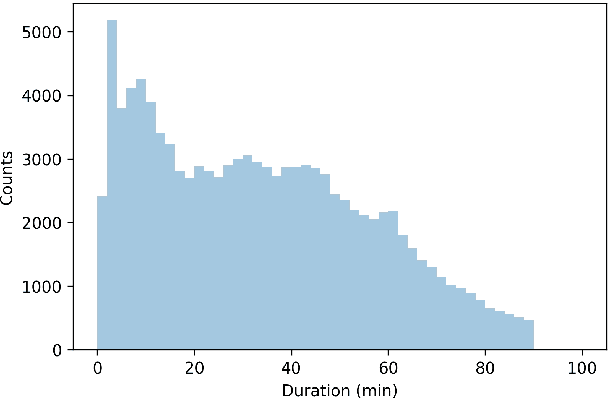

The Spotify Podcasts Dataset

Apr 08, 2020

Abstract:Podcasts are a relatively new form of audio media. Episodes appear on a regular cadence, and come in many different formats and levels of formality. They can be formal news journalism or conversational chat; fiction or non-fiction. They are rapidly growing in popularity and yet have been relatively little studied. As an audio format, podcasts are more varied in style and production types than, say, broadcast news, and contain many more genres than typically studied in video research. The medium is therefore a rich domain with many research avenues for the IR and NLP communities. We present the Spotify Podcasts Dataset, a set of approximately 100K podcast episodes comprised of raw audio files along with accompanying ASR transcripts. This represents over 47,000 hours of transcribed audio, and is an order of magnitude larger than previous speech-to-text corpora.

Online Learning with Pairwise Loss Functions

Jan 22, 2013Abstract:Efficient online learning with pairwise loss functions is a crucial component in building large-scale learning system that maximizes the area under the Receiver Operator Characteristic (ROC) curve. In this paper we investigate the generalization performance of online learning algorithms with pairwise loss functions. We show that the existing proof techniques for generalization bounds of online algorithms with a univariate loss can not be directly applied to pairwise losses. In this paper, we derive the first result providing data-dependent bounds for the average risk of the sequence of hypotheses generated by an arbitrary online learner in terms of an easily computable statistic, and show how to extract a low risk hypothesis from the sequence. We demonstrate the generality of our results by applying it to two important problems in machine learning. First, we analyze two online algorithms for bipartite ranking; one being a natural extension of the perceptron algorithm and the other using online convex optimization. Secondly, we provide an analysis for the risk bound for an online algorithm for supervised metric learning.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge