Quanying Liu

Understanding Generalization from Embedding Dimension and Distributional Convergence

Jan 30, 2026Abstract:Deep neural networks often generalize well despite heavy over-parameterization, challenging classical parameter-based analyses. We study generalization from a representation-centric perspective and analyze how the geometry of learned embeddings controls predictive performance for a fixed trained model. We show that population risk can be bounded by two factors: (i) the intrinsic dimension of the embedding distribution, which determines the convergence rate of empirical embedding distribution to the population distribution in Wasserstein distance, and (ii) the sensitivity of the downstream mapping from embeddings to predictions, characterized by Lipschitz constants. Together, these yield an embedding-dependent error bound that does not rely on parameter counts or hypothesis class complexity. At the final embedding layer, architectural sensitivity vanishes and the bound is dominated by embedding dimension, explaining its strong empirical correlation with generalization performance. Experiments across architectures and datasets validate the theory and demonstrate the utility of embedding-based diagnostics.

Local Intrinsic Dimension of Representations Predicts Alignment and Generalization in AI Models and Human Brain

Jan 30, 2026Abstract:Recent work has found that neural networks with stronger generalization tend to exhibit higher representational alignment with one another across architectures and training paradigms. In this work, we show that models with stronger generalization also align more strongly with human neural activity. Moreover, generalization performance, model--model alignment, and model--brain alignment are all significantly correlated with each other. We further show that these relationships can be explained by a single geometric property of learned representations: the local intrinsic dimension of embeddings. Lower local dimension is consistently associated with stronger model--model alignment, stronger model--brain alignment, and better generalization, whereas global dimension measures fail to capture these effects. Finally, we find that increasing model capacity and training data scale systematically reduces local intrinsic dimension, providing a geometric account of the benefits of scaling. Together, our results identify local intrinsic dimension as a unifying descriptor of representational convergence in artificial and biological systems.

SLIM-Brain: A Data- and Training-Efficient Foundation Model for fMRI Data Analysis

Dec 26, 2025Abstract:Foundation models are emerging as a powerful paradigm for fMRI analysis, but current approaches face a dual bottleneck of data- and training-efficiency. Atlas-based methods aggregate voxel signals into fixed regions of interest, reducing data dimensionality but discarding fine-grained spatial details, and requiring extremely large cohorts to train effectively as general-purpose foundation models. Atlas-free methods, on the other hand, operate directly on voxel-level information - preserving spatial fidelity but are prohibitively memory- and compute-intensive, making large-scale pre-training infeasible. We introduce SLIM-Brain (Sample-efficient, Low-memory fMRI Foundation Model for Human Brain), a new atlas-free foundation model that simultaneously improves both data- and training-efficiency. SLIM-Brain adopts a two-stage adaptive design: (i) a lightweight temporal extractor captures global context across full sequences and ranks data windows by saliency, and (ii) a 4D hierarchical encoder (Hiera-JEPA) learns fine-grained voxel-level representations only from the top-$k$ selected windows, while deleting about 70% masked patches. Extensive experiments across seven public benchmarks show that SLIM-Brain establishes new state-of-the-art performance on diverse tasks, while requiring only 4 thousand pre-training sessions and approximately 30% of GPU memory comparing to traditional voxel-level methods.

ChineseEEG-2: An EEG Dataset for Multimodal Semantic Alignment and Neural Decoding during Reading and Listening

Aug 06, 2025Abstract:EEG-based neural decoding requires large-scale benchmark datasets. Paired brain-language data across speaking, listening, and reading modalities are essential for aligning neural activity with the semantic representation of large language models (LLMs). However, such datasets are rare, especially for non-English languages. Here, we present ChineseEEG-2, a high-density EEG dataset designed for benchmarking neural decoding models under real-world language tasks. Building on our previous ChineseEEG dataset, which focused on silent reading, ChineseEEG-2 adds two active modalities: Reading Aloud (RA) and Passive Listening (PL), using the same Chinese corpus. EEG and audio were simultaneously recorded from four participants during ~10.7 hours of reading aloud. These recordings were then played to eight other participants, collecting ~21.6 hours of EEG during listening. This setup enables speech temporal and semantic alignment across the RA and PL modalities. ChineseEEG-2 includes EEG signals, precise audio, aligned semantic embeddings from pre-trained language models, and task labels. Together with ChineseEEG, this dataset supports joint semantic alignment learning across speaking, listening, and reading. It enables benchmarking of neural decoding algorithms and promotes brain-LLM alignment under multimodal language tasks, especially in Chinese. ChineseEEG-2 provides a benchmark dataset for next-generation neural semantic decoding.

Scale-Invariance Drives Convergence in AI and Brain Representations

Jun 13, 2025Abstract:Despite variations in architecture and pretraining strategies, recent studies indicate that large-scale AI models often converge toward similar internal representations that also align with neural activity. We propose that scale-invariance, a fundamental structural principle in natural systems, is a key driver of this convergence. In this work, we propose a multi-scale analytical framework to quantify two core aspects of scale-invariance in AI representations: dimensional stability and structural similarity across scales. We further investigate whether these properties can predict alignment performance with functional Magnetic Resonance Imaging (fMRI) responses in the visual cortex. Our analysis reveals that embeddings with more consistent dimension and higher structural similarity across scales align better with fMRI data. Furthermore, we find that the manifold structure of fMRI data is more concentrated, with most features dissipating at smaller scales. Embeddings with similar scale patterns align more closely with fMRI data. We also show that larger pretraining datasets and the inclusion of language modalities enhance the scale-invariance properties of embeddings, further improving neural alignment. Our findings indicate that scale-invariance is a fundamental structural principle that bridges artificial and biological representations, providing a new framework for evaluating the structural quality of human-like AI systems.

Synthesizing Images on Perceptual Boundaries of ANNs for Uncovering and Manipulating Human Perceptual Variability

May 06, 2025

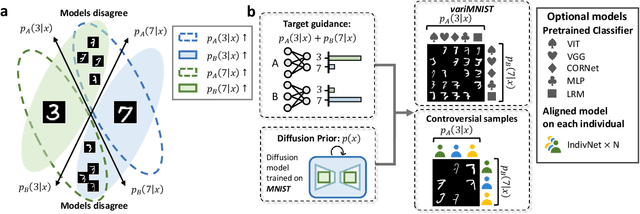

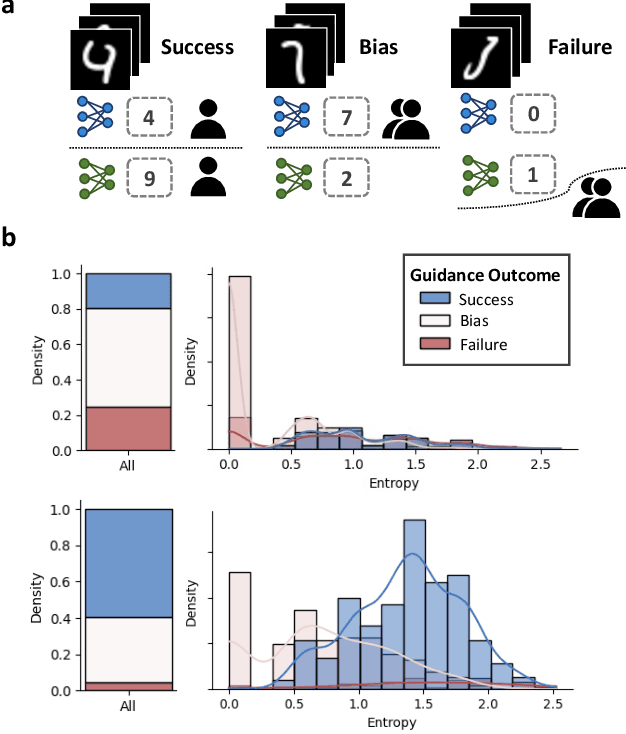

Abstract:Human decision-making in cognitive tasks and daily life exhibits considerable variability, shaped by factors such as task difficulty, individual preferences, and personal experiences. Understanding this variability across individuals is essential for uncovering the perceptual and decision-making mechanisms that humans rely on when faced with uncertainty and ambiguity. We present a computational framework BAM (Boundary Alignment & Manipulation framework) that combines perceptual boundary sampling in ANNs and human behavioral experiments to systematically investigate this phenomenon. Our perceptual boundary sampling algorithm generates stimuli along ANN decision boundaries that intrinsically induce significant perceptual variability. The efficacy of these stimuli is empirically validated through large-scale behavioral experiments involving 246 participants across 116,715 trials, culminating in the variMNIST dataset containing 19,943 systematically annotated images. Through personalized model alignment and adversarial generation, we establish a reliable method for simultaneously predicting and manipulating the divergent perceptual decisions of pairs of participants. This work bridges the gap between computational models and human individual difference research, providing new tools for personalized perception analysis.

Dynamic-Attention-based EEG State Transition Modeling for Emotion Recognition

Nov 07, 2024

Abstract:Electroencephalogram (EEG)-based emotion decoding can objectively quantify people's emotional state and has broad application prospects in human-computer interaction and early detection of emotional disorders. Recently emerging deep learning architectures have significantly improved the performance of EEG emotion decoding. However, existing methods still fall short of fully capturing the complex spatiotemporal dynamics of neural signals, which are crucial for representing emotion processing. This study proposes a Dynamic-Attention-based EEG State Transition (DAEST) modeling method to characterize EEG spatiotemporal dynamics. The model extracts spatiotemporal components of EEG that represent multiple parallel neural processes and estimates dynamic attention weights on these components to capture transitions in brain states. The model is optimized within a contrastive learning framework for cross-subject emotion recognition. The proposed method achieved state-of-the-art performance on three publicly available datasets: FACED, SEED, and SEED-V. It achieved 75.4% accuracy in the binary classification of positive and negative emotions and 59.3% in nine-class discrete emotion classification on the FACED dataset, 88.1% in the three-class classification of positive, negative, and neutral emotions on the SEED dataset, and 73.6% in five-class discrete emotion classification on the SEED-V dataset. The learned EEG spatiotemporal patterns and dynamic transition properties offer valuable insights into neural dynamics underlying emotion processing.

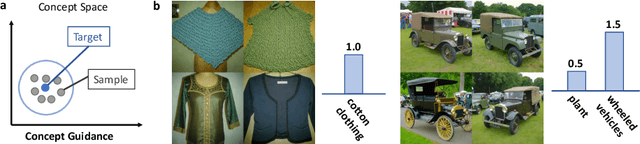

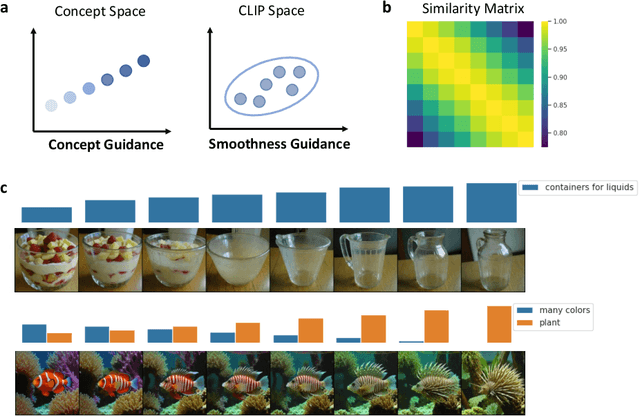

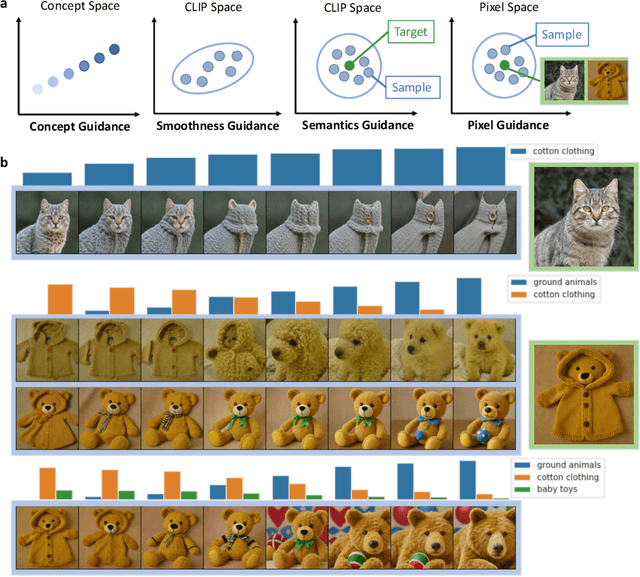

CoCoG-2: Controllable generation of visual stimuli for understanding human concept representation

Jul 20, 2024

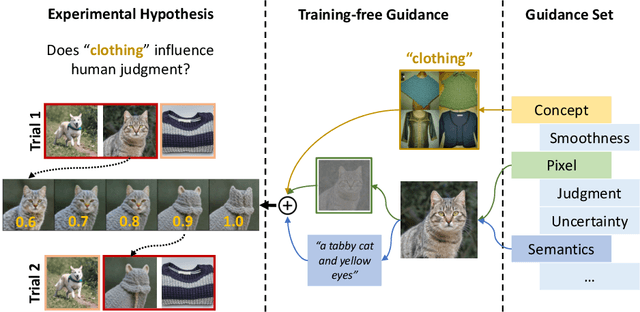

Abstract:Humans interpret complex visual stimuli using abstract concepts that facilitate decision-making tasks such as food selection and risk avoidance. Similarity judgment tasks are effective for exploring these concepts. However, methods for controllable image generation in concept space are underdeveloped. In this study, we present a novel framework called CoCoG-2, which integrates generated visual stimuli into similarity judgment tasks. CoCoG-2 utilizes a training-free guidance algorithm to enhance generation flexibility. CoCoG-2 framework is versatile for creating experimental stimuli based on human concepts, supporting various strategies for guiding visual stimuli generation, and demonstrating how these stimuli can validate various experimental hypotheses. CoCoG-2 will advance our understanding of the causal relationship between concept representations and behaviors by generating visual stimuli. The code is available at \url{https://github.com/ncclab-sustech/CoCoG-2}.

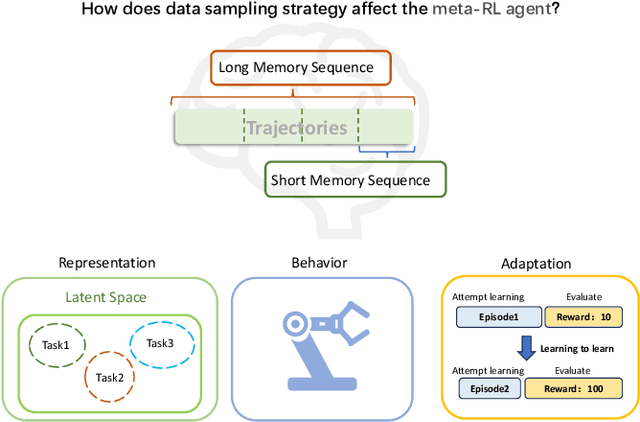

Memory Sequence Length of Data Sampling Impacts the Adaptation of Meta-Reinforcement Learning Agents

Jun 18, 2024

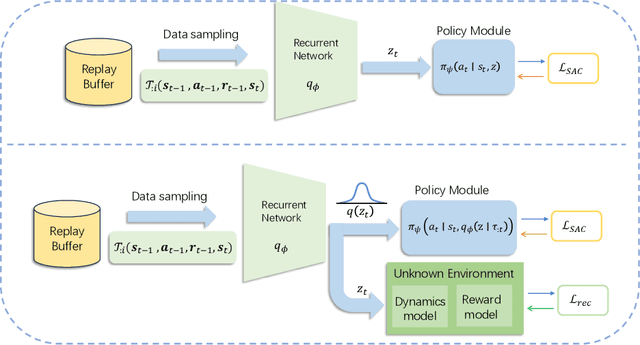

Abstract:Fast adaptation to new tasks is extremely important for embodied agents in the real world. Meta-reinforcement learning (meta-RL) has emerged as an effective method to enable fast adaptation in unknown environments. Compared to on-policy meta-RL algorithms, off-policy algorithms rely heavily on efficient data sampling strategies to extract and represent the historical trajectories. However, little is known about how different data sampling methods impact the ability of meta-RL agents to represent unknown environments. Here, we investigate the impact of data sampling strategies on the exploration and adaptability of meta-RL agents. Specifically, we conducted experiments with two types of off-policy meta-RL algorithms based on Thompson sampling and Bayes-optimality theories in continuous control tasks within the MuJoCo environment and sparse reward navigation tasks. Our analysis revealed the long-memory and short-memory sequence sampling strategies affect the representation and adaptive capabilities of meta-RL agents. We found that the algorithm based on Bayes-optimality theory exhibited more robust and better adaptability than the algorithm based on Thompson sampling, highlighting the importance of appropriate data sampling strategies for the agent's representation of an unknown environment, especially in the case of sparse rewards.

CoCoG: Controllable Visual Stimuli Generation based on Human Concept Representations

Apr 25, 2024Abstract:A central question for cognitive science is to understand how humans process visual objects, i.e, to uncover human low-dimensional concept representation space from high-dimensional visual stimuli. Generating visual stimuli with controlling concepts is the key. However, there are currently no generative models in AI to solve this problem. Here, we present the Concept based Controllable Generation (CoCoG) framework. CoCoG consists of two components, a simple yet efficient AI agent for extracting interpretable concept and predicting human decision-making in visual similarity judgment tasks, and a conditional generation model for generating visual stimuli given the concepts. We quantify the performance of CoCoG from two aspects, the human behavior prediction accuracy and the controllable generation ability. The experiments with CoCoG indicate that 1) the reliable concept embeddings in CoCoG allows to predict human behavior with 64.07\% accuracy in the THINGS-similarity dataset; 2) CoCoG can generate diverse objects through the control of concepts; 3) CoCoG can manipulate human similarity judgment behavior by intervening key concepts. CoCoG offers visual objects with controlling concepts to advance our understanding of causality in human cognition. The code of CoCoG is available at \url{https://github.com/ncclab-sustech/CoCoG}.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge