Philipp Terhörst

A Responsible Face Recognition Approach for Small and Mid-Scale Systems Through Personalized Neural Networks

May 26, 2025

Abstract:Traditional face recognition systems rely on extracting fixed face representations, known as templates, to store and verify identities. These representations are typically generated by neural networks that often lack explainability and raise concerns regarding fairness and privacy. In this work, we propose a novel model-template (MOTE) approach that replaces vector-based face templates with small personalized neural networks. This design enables more responsible face recognition for small and medium-scale systems. During enrollment, MOTE creates a dedicated binary classifier for each identity, trained to determine whether an input face matches the enrolled identity. Each classifier is trained using only a single reference sample, along with synthetically balanced samples to allow adjusting fairness at the level of a single individual during enrollment. Extensive experiments across multiple datasets and recognition systems demonstrate substantial improvements in fairness and particularly in privacy. Although the method increases inference time and storage requirements, it presents a strong solution for small- and mid-scale applications where fairness and privacy are critical.

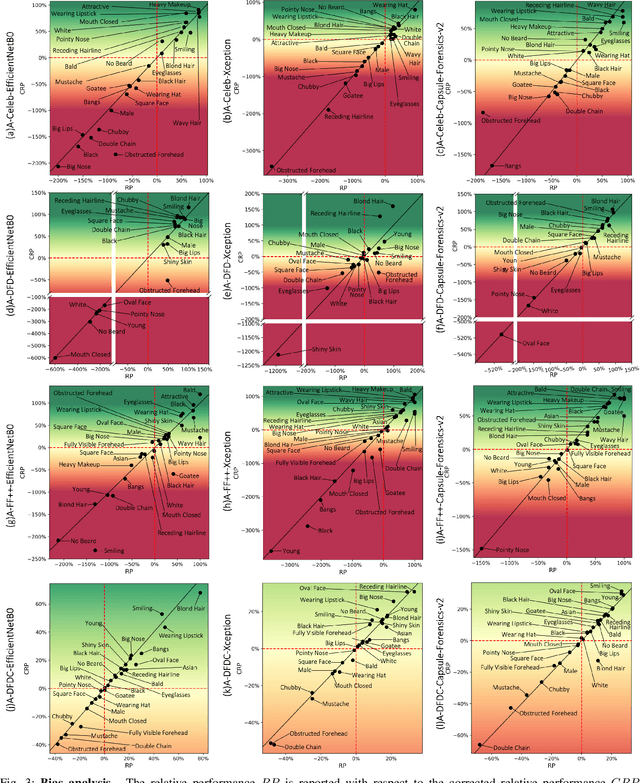

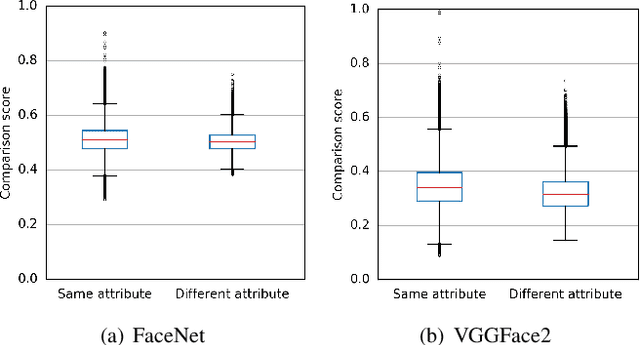

On the "Illusion" of Gender Bias in Face Recognition: Explaining the Fairness Issue Through Non-demographic Attributes

Jan 21, 2025

Abstract:Face recognition systems (FRS) exhibit significant accuracy differences based on the user's gender. Since such a gender gap reduces the trustworthiness of FRS, more recent efforts have tried to find the causes. However, these studies make use of manually selected, correlated, and small-sized sets of facial features to support their claims. In this work, we analyse gender bias in face recognition by successfully extending the search domain to decorrelated combinations of 40 non-demographic facial characteristics. First, we propose a toolchain to effectively decorrelate and aggregate facial attributes to enable a less-biased gender analysis on large-scale data. Second, we introduce two new fairness metrics to measure fairness with and without context. Based on these grounds, we thirdly present a novel unsupervised algorithm able to reliably identify attribute combinations that lead to vanishing bias when used as filter predicates for balanced testing datasets. The experiments show that the gender gap vanishes when images of male and female subjects share specific attributes, clearly indicating that the issue is not a question of biology but of the social definition of appearance. These findings could reshape our understanding of fairness in face biometrics and provide insights into FRS, helping to address gender bias issues.

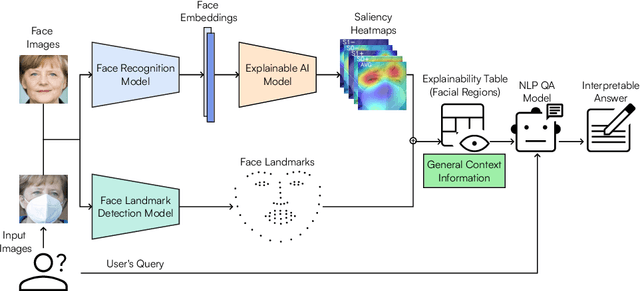

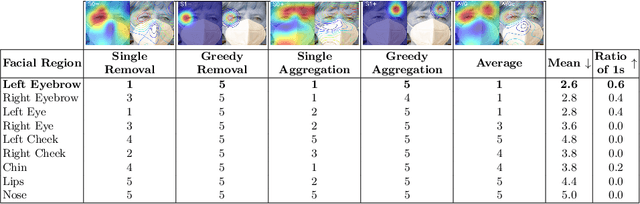

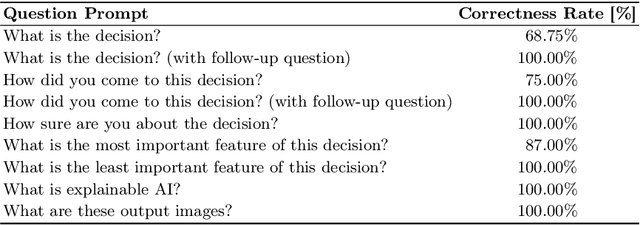

From Pixels to Words: Leveraging Explainability in Face Recognition through Interactive Natural Language Processing

Sep 24, 2024

Abstract:Face Recognition (FR) has advanced significantly with the development of deep learning, achieving high accuracy in several applications. However, the lack of interpretability of these systems raises concerns about their accountability, fairness, and reliability. In the present study, we propose an interactive framework to enhance the explainability of FR models by combining model-agnostic Explainable Artificial Intelligence (XAI) and Natural Language Processing (NLP) techniques. The proposed framework is able to accurately answer various questions of the user through an interactive chatbot. In particular, the explanations generated by our proposed method are in the form of natural language text and visual representations, which for example can describe how different facial regions contribute to the similarity measure between two faces. This is achieved through the automatic analysis of the output's saliency heatmaps of the face images and a BERT question-answering model, providing users with an interface that facilitates a comprehensive understanding of the FR decisions. The proposed approach is interactive, allowing the users to ask questions to get more precise information based on the user's background knowledge. More importantly, in contrast to previous studies, our solution does not decrease the face recognition performance. We demonstrate the effectiveness of the method through different experiments, highlighting its potential to make FR systems more interpretable and user-friendly, especially in sensitive applications where decision-making transparency is crucial.

Efficient Explainable Face Verification based on Similarity Score Argument Backpropagation

Apr 26, 2023

Abstract:Explainable Face Recognition is gaining growing attention as the use of the technology is gaining ground in security-critical applications. Understanding why two face images are matched or not matched by a given face recognition system is important to operators, users, and developers to increase trust, accountability, develop better systems, and highlight unfair behavior. In this work, we propose xSSAB, an approach to back-propagate similarity score-based arguments that support or oppose the face matching decision to visualize spatial maps that indicate similar and dissimilar areas as interpreted by the underlying FR model. Furthermore, we present Patch-LFW, a new explainable face verification benchmark that enables, along with a novel evaluation protocol, the first quantitative evaluation of the validity of similarity and dissimilarity maps in explainable face recognition approaches. We compare our efficient approach to state-of-the-art approaches, demonstrating a superior trade-off between efficiency and performance. The code as well as the proposed Patch-LFW is publicly available at: https://github.com/marcohuber/xSSAB.

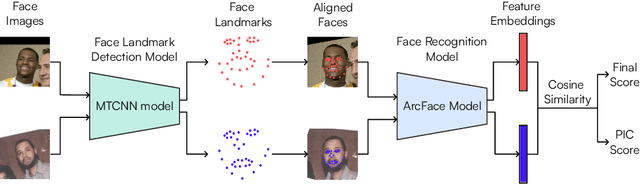

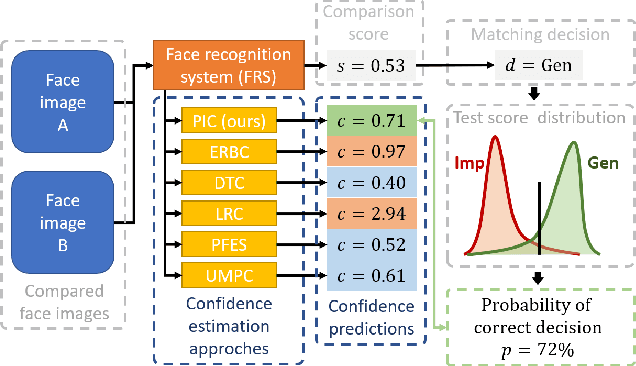

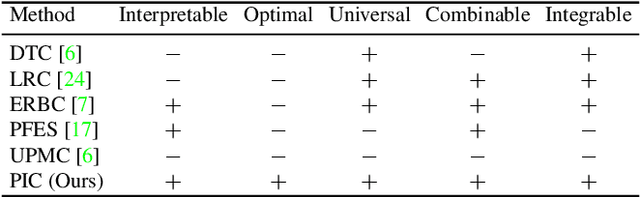

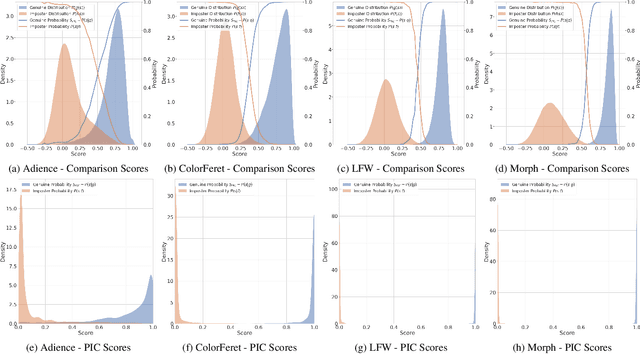

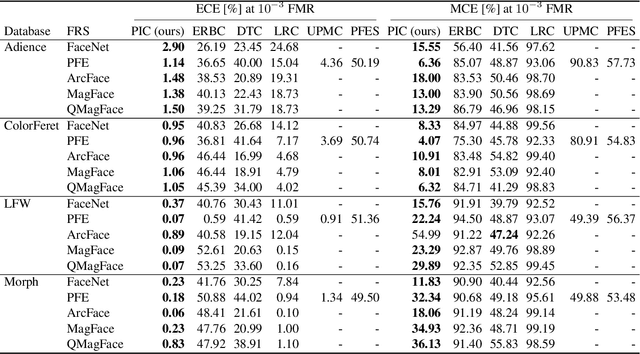

PIC-Score: Probabilistic Interpretable Comparison Score for Optimal Matching Confidence in Single- and Multi-Biometric (Face) Recognition

Nov 22, 2022

Abstract:In the context of biometrics, matching confidence refers to the confidence that a given matching decision is correct. Since many biometric systems operate in critical decision-making processes, such as in forensic investigations, accurately and reliably stating the matching confidence becomes of high importance. Previous works on biometric confidence estimation can well differentiate between high and low confidence, but lack interpretability. Therefore, they do not provide accurate probabilistic estimates of the correctness of a decision. In this work, we propose a probabilistic interpretable comparison (PIC) score that accurately reflects the probability that the score originates from samples of the same identity. We prove that the proposed approach provides optimal matching confidence. Contrary to other approaches, it can also optimally combine multiple samples in a joint PIC score which further increases the recognition and confidence estimation performance. In the experiments, the proposed PIC approach is compared against all available biometric confidence estimation methods on four publicly available databases and five state-of-the-art face recognition systems. The results demonstrate that PIC has a significantly more accurate probabilistic interpretation than similar approaches and is highly effective for multi-biometric recognition. The code is publicly available.
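To make the idea of a probabilistic comparison score concrete, the following is a minimal sketch of how a raw comparison score could be mapped to a same-identity probability with Bayes' rule. It is not the PIC estimator from the paper: the Gaussian score models, the equal prior, the function names, and the toy calibration scores are all assumptions made for illustration.

```python
import math
from statistics import mean, stdev


def gaussian_pdf(x, mu, sigma):
    """Density of a normal distribution N(mu, sigma^2) at x."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def same_identity_probability(score, genuine_scores, imposter_scores,
                              prior_genuine=0.5):
    """Posterior probability that `score` stems from a genuine pair.

    Assumption: genuine and imposter scores are each modelled by a
    Gaussian fitted to calibration scores (hypothetical modelling choice).
    """
    p_gen = gaussian_pdf(score, mean(genuine_scores),
                         stdev(genuine_scores)) * prior_genuine
    p_imp = gaussian_pdf(score, mean(imposter_scores),
                         stdev(imposter_scores)) * (1.0 - prior_genuine)
    return p_gen / (p_gen + p_imp)


# Toy calibration scores, invented for this example.
genuine = [0.72, 0.80, 0.65, 0.77, 0.70]
imposter = [0.10, 0.22, 0.05, 0.18, 0.15]

p_high = same_identity_probability(0.75, genuine, imposter)
p_low = same_identity_probability(0.12, genuine, imposter)
```

In this toy setting a score close to the genuine calibration scores yields a probability near 1 and a score close to the imposter scores yields a probability near 0, which is the kind of directly interpretable output the abstract describes.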

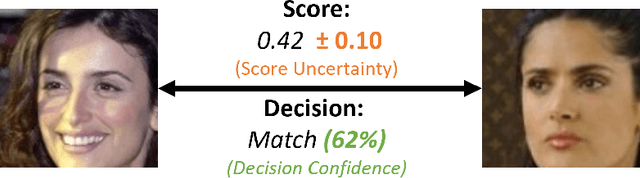

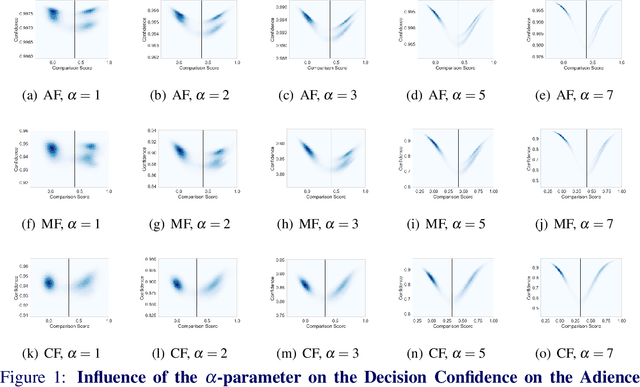

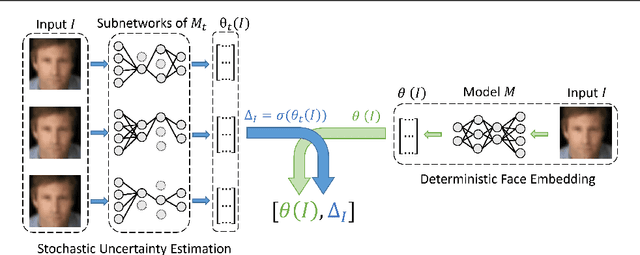

Stating Comparison Score Uncertainty and Verification Decision Confidence Towards Transparent Face Recognition

Oct 19, 2022

Abstract:Face Recognition (FR) is increasingly used in critical verification decisions and thus, there is a need for assessing the trustworthiness of such decisions. The confidence of a decision is often based on the overall performance of the model or on the image quality. We propose to propagate model uncertainties to scores and decisions in an effort to increase the transparency of verification decisions. This work presents two contributions. First, we propose an approach to estimate the uncertainty of face comparison scores. Second, we introduce a confidence measure of the system's decision to provide insights into the verification decision. The suitability of the comparison score uncertainties and the verification decision confidences has been experimentally demonstrated on three face recognition models and two datasets.

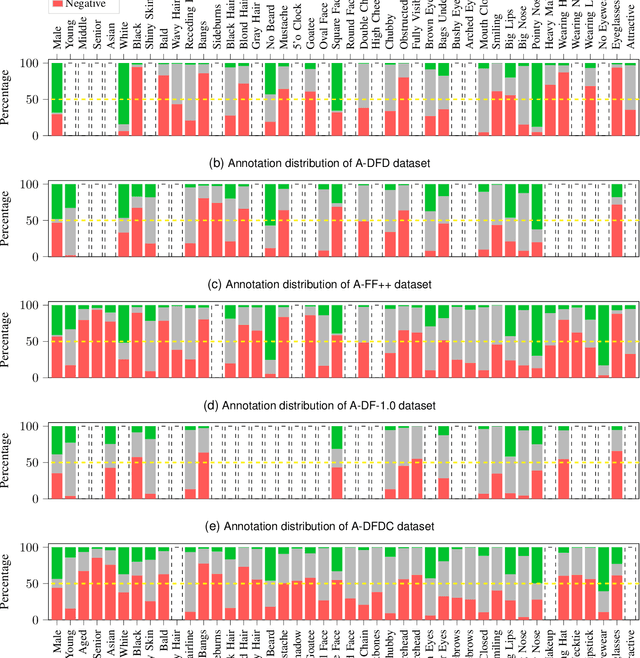

A Comprehensive Analysis of AI Biases in DeepFake Detection With Massively Annotated Databases

Aug 11, 2022

Abstract:In recent years, image and video manipulations with DeepFake have become a severe concern for security and society. Therefore, many detection models and databases have been proposed to detect DeepFake data reliably. However, there is an increased concern that these models and training databases might be biased and thus, cause DeepFake detectors to fail. In this work, we tackle these issues by (a) providing large-scale demographic and non-demographic attribute annotations of 41 different attributes for five popular DeepFake datasets and (b) comprehensively analysing AI-bias of multiple state-of-the-art DeepFake detection models on these databases. The investigation analyses the influence of a large variety of distinctive attributes (from over 65M labels) on the detection performance, including demographic (age, gender, ethnicity) and non-demographic (hair, skin, accessories, etc.) information. The results indicate that investigated databases lack diversity and, more importantly, show that the utilised DeepFake detection models are strongly biased towards many investigated attributes. Moreover, the results show that the models' decision-making might be based on several questionable (biased) assumptions, such as whether a person is smiling or wearing a hat. Depending on the application of such DeepFake detection methods, these biases can lead to generalizability, fairness, and security issues. We hope that the findings of this study and the annotation databases will help to evaluate and mitigate bias in future DeepFake detection techniques. Our annotation datasets are made publicly available.

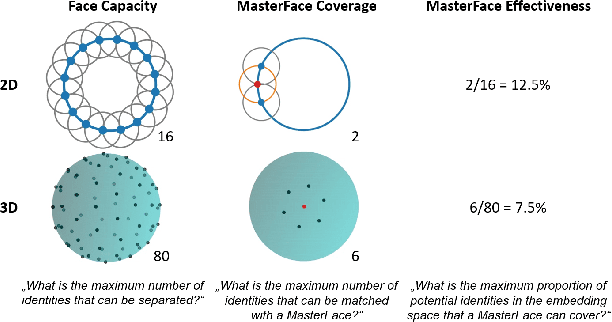

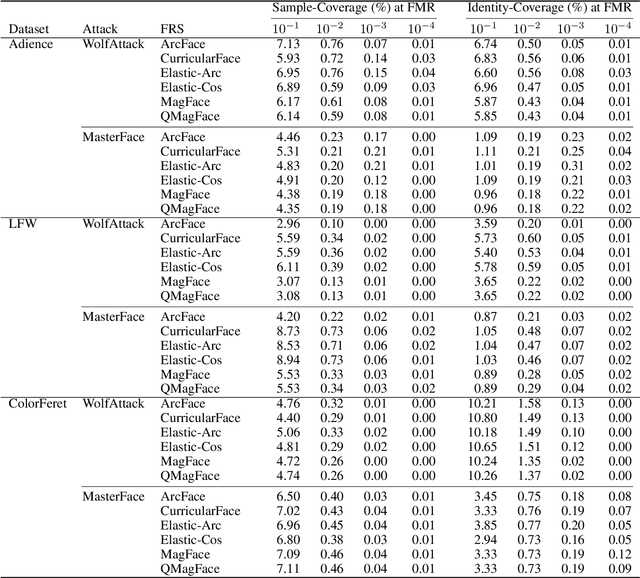

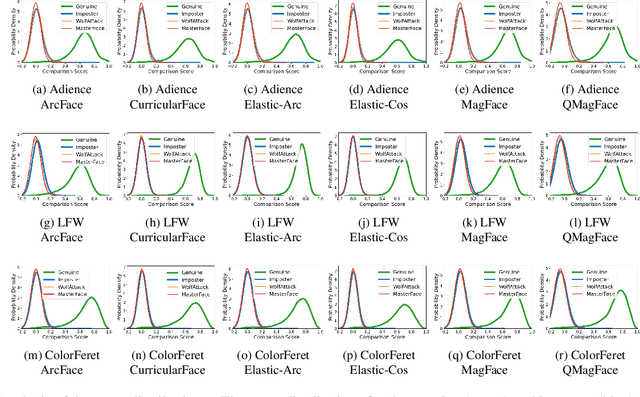

On the (Limited) Generalization of MasterFace Attacks and Its Relation to the Capacity of Face Representations

Apr 01, 2022

Abstract:A MasterFace is a face image that can successfully match against a large portion of the population. Since their generation does not require access to the information of the enrolled subjects, MasterFace attacks represent a potential security risk for widely-used face recognition systems. Previous works proposed methods for generating such images and demonstrated that these attacks can strongly compromise face recognition. However, previous works followed evaluation settings consisting of older recognition models, limited cross-dataset and cross-model evaluations, and the use of low-scale testing data. This makes it hard to state the generalizability of these attacks. In this work, we comprehensively analyse the generalizability of MasterFace attacks in empirical and theoretical investigations. The empirical investigations include the use of six state-of-the-art FR models, cross-dataset and cross-model evaluation protocols, and utilizing testing datasets of significantly higher size and variance. The results indicate a low generalizability when MasterFaces are trained on a different face recognition model than the one used for testing. In these cases, the attack performance is similar to zero-effort imposter attacks. In the theoretical investigations, we define and estimate the face capacity and the maximum MasterFace coverage under the assumption that identities in the face space are well separated. The current trend of increasing the fairness and generalizability in face recognition indicates that the vulnerability of future systems might further decrease. Future works might analyse the utility of MasterFaces for understanding and enhancing the robustness of face recognition models.
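As a small illustration of what "matching against a large portion of the population" means in practice, the sketch below computes the share of enrolled templates that a single candidate template matches at a given decision threshold. The use of cosine similarity, the function names, the threshold, and the two-dimensional toy templates are assumptions for this example and are not taken from the paper's evaluation protocol.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def coverage(candidate, enrolled_templates, threshold):
    """Fraction of enrolled templates matched by `candidate`.

    A template counts as matched when its similarity to the candidate
    reaches the decision threshold (hypothetical matching rule).
    """
    matches = sum(
        1 for template in enrolled_templates
        if cosine_similarity(candidate, template) >= threshold
    )
    return matches / len(enrolled_templates)


# Four toy identities spread over a 2-D "face space" (invented data).
enrolled = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
candidate = [1.0, 1.0]

share = coverage(candidate, enrolled, threshold=0.5)
```

With these toy vectors the candidate matches two of the four enrolled identities. Well-separated identities limit how many templates one image can sit close to, which is the intuition behind bounding the maximum coverage.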

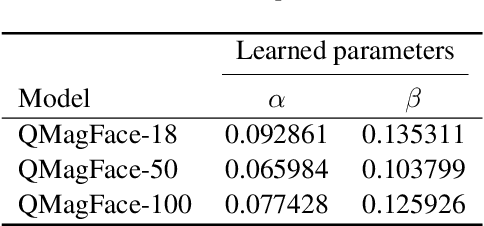

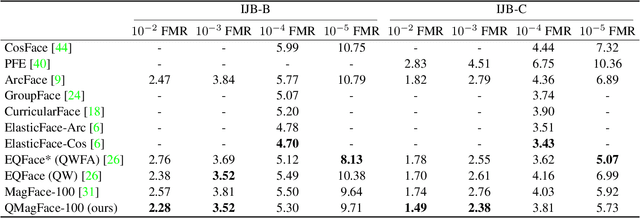

QMagFace: Simple and Accurate Quality-Aware Face Recognition

Nov 30, 2021

Abstract:Face recognition systems have to deal with large variabilities (such as different poses, illuminations, and expressions) that might lead to incorrect matching decisions. These variabilities can be measured in terms of face image quality which is defined over the utility of a sample for recognition. Previous works on face recognition either do not employ this valuable information or make use of non-inherently fit quality estimates. In this work, we propose a simple and effective face recognition solution (QMagFace) that combines a quality-aware comparison score with a recognition model based on a magnitude-aware angular margin loss. The proposed approach includes model-specific face image qualities in the comparison process to enhance the recognition performance under unconstrained circumstances. Exploiting the linearity between the qualities and their comparison scores induced by the utilized loss, our quality-aware comparison function is simple and highly generalizable. The experiments conducted on several face recognition databases and benchmarks demonstrate that the introduced quality-awareness leads to consistent improvements in the recognition performance. Moreover, the proposed QMagFace approach performs especially well under challenging circumstances, such as cross-pose, cross-age, or cross-quality. Consequently, it leads to state-of-the-art performances on several face recognition benchmarks, such as 98.50% on AgeDB, 83.95% on XQLFW, and 98.74% on CFP-FP. The code for QMagFace is publicly available.

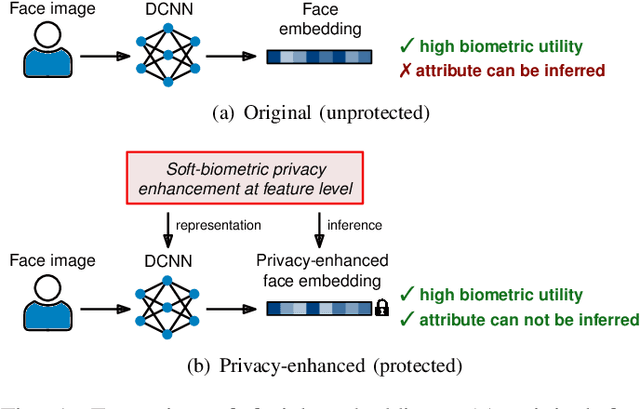

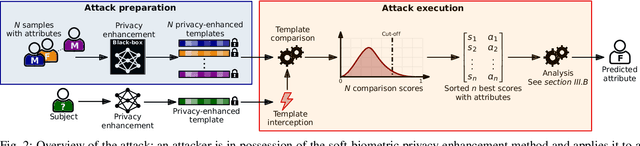

An Attack on Feature Level-based Facial Soft-biometric Privacy Enhancement

Nov 24, 2021

Abstract:In the recent past, different researchers have proposed novel privacy-enhancing face recognition systems designed to conceal soft-biometric information at feature level. These works have reported impressive results, but usually do not consider specific attacks in their analysis of privacy protection. In most cases, the privacy protection capabilities of these schemes are tested through simple machine learning-based classifiers and visualisations of dimensionality reduction tools. In this work, we introduce an attack on feature level-based facial soft-biometric privacy-enhancement techniques. The attack is based on two observations: (1) to achieve high recognition accuracy, certain similarities between facial representations have to be retained in their privacy-enhanced versions; (2) highly similar facial representations usually originate from face images with similar soft-biometric attributes. Based on these observations, the proposed attack compares a privacy-enhanced face representation against a set of privacy-enhanced face representations with known soft-biometric attributes. Subsequently, the best obtained similarity scores are analysed to infer the unknown soft-biometric attributes of the attacked privacy-enhanced face representation. That is, the attack only requires a relatively small database of arbitrary face images and the privacy-enhancing face recognition algorithm as a black-box. In the experiments, the attack is applied to two representative approaches which have previously been reported to reliably conceal the gender in privacy-enhanced face representations. It is shown that the presented attack is able to circumvent the privacy enhancement to a considerable degree and is able to correctly classify gender with an accuracy of up to approximately 90% for both of the analysed privacy-enhancing face recognition systems.
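The attack logic described above can be sketched in a few lines. The abstract states only that the best similarity scores against a labelled reference set are analysed; the majority vote over the k most similar references, the cosine similarity, the function names, and the toy vectors below are assumptions made for illustration.

```python
import math
from collections import Counter


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def infer_attribute(probe, references, labels, k=3):
    """Guess the probe's attribute from its k most similar references.

    `probe` and `references` stand for privacy-enhanced representations
    produced by the same black-box system; `labels` holds the known
    attribute of each reference. Majority voting is an assumed rule.
    """
    scored = sorted(
        ((cosine_similarity(probe, ref), label)
         for ref, label in zip(references, labels)),
        key=lambda pair: pair[0],
        reverse=True,
    )
    top_labels = [label for _, label in scored[:k]]
    return Counter(top_labels).most_common(1)[0][0]


# Toy reference set with two attribute classes (invented data).
references = [[1.0, 0.1], [0.9, 0.2], [0.1, 1.0], [0.2, 0.9]]
labels = ["A", "A", "B", "B"]

predicted = infer_attribute([0.95, 0.15], references, labels, k=3)
```

The sketch needs no access to the internals of the privacy-enhancing system. It relies only on the two observations in the abstract: similarity structure survives the enhancement, and similar representations tend to share soft-biometric attributes.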
