Lu Dai

VideoAfford: Grounding 3D Affordance from Human-Object-Interaction Videos via Multimodal Large Language Model

Feb 10, 2026

Abstract:3D affordance grounding aims to highlight the actionable regions on 3D objects, which is crucial for robotic manipulation. Previous research primarily focused on learning affordance knowledge from static cues such as language and images, which struggle to provide sufficient dynamic interaction context that can reveal temporal and causal cues. To alleviate this predicament, we collect a comprehensive video-based 3D affordance dataset, VIDA, which contains 38K human-object-interaction videos covering 16 affordance types, 38 object categories, and 22K point clouds. Based on VIDA, we propose a strong baseline: VideoAfford, which activates multimodal large language models with additional affordance segmentation capabilities, enabling both world knowledge reasoning and fine-grained affordance grounding within a unified framework. To enhance action understanding capability, we leverage a latent action encoder to extract dynamic interaction priors from HOI videos. Moreover, we introduce a spatial-aware loss function to enable VideoAfford to obtain comprehensive 3D spatial knowledge. Extensive experimental evaluations demonstrate that our model significantly outperforms well-established methods and exhibits strong open-world generalization with affordance reasoning abilities. All datasets and code will be publicly released to advance research in this area.

Deep Probabilistic Modeling of User Behavior for Anomaly Detection via Mixture Density Networks

May 13, 2025

Abstract:To improve the identification of potential anomaly patterns in complex user behavior, this paper proposes an anomaly detection method based on a deep mixture density network. The method constructs a Gaussian mixture model parameterized by a neural network, enabling conditional probability modeling of user behavior. It effectively captures the multimodal distribution characteristics commonly present in behavioral data. Unlike traditional classifiers that rely on fixed thresholds or a single decision boundary, this approach defines an anomaly scoring function based on probability density using negative log-likelihood. This significantly enhances the model's ability to detect rare and unstructured behaviors. Experiments are conducted on the real-world network user dataset UNSW-NB15. A series of performance comparisons and stability validation experiments are designed. These cover multiple evaluation aspects, including Accuracy, F1-score, AUC, and loss fluctuation. The results show that the proposed method outperforms several advanced neural network architectures in both performance and training stability. This study provides a more expressive and discriminative solution for user behavior modeling and anomaly detection. It strongly promotes the application of deep probabilistic modeling techniques in the fields of network security and intelligent risk control.
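As a rough illustration of the scoring idea in this abstract, the sketch below computes the negative log-likelihood of a scalar observation under a one-dimensional Gaussian mixture and uses it as an anomaly score. It is a minimal sketch, not the paper's implementation: the function name `gaussian_mixture_nll` and the mixture parameters are hypothetical, and the parameters are hard-coded here where a mixture density network would predict them from the input.

```python
import math


def gaussian_mixture_nll(x, weights, means, stds):
    """Negative log-likelihood of scalar x under a 1-D Gaussian mixture."""
    # Log of each weighted component density: log(w) + log N(x | m, s^2).
    log_terms = [
        math.log(w) - math.log(s) - 0.5 * math.log(2 * math.pi)
        - 0.5 * ((x - m) / s) ** 2
        for w, m, s in zip(weights, means, stds)
    ]
    # Log-sum-exp keeps the sum stable when densities are very small.
    peak = max(log_terms)
    log_density = peak + math.log(sum(math.exp(t - peak) for t in log_terms))
    return -log_density


# Hypothetical mixture parameters standing in for a network's output.
weights = [0.7, 0.3]
means = [0.0, 5.0]
stds = [1.0, 0.5]

typical_score = gaussian_mixture_nll(0.1, weights, means, stds)
rare_score = gaussian_mixture_nll(12.0, weights, means, stds)
```

An observation far from every mixture component (here `12.0`) receives a higher score than one near a mode, so a cutoff on the score would flag it as anomalous. How that cutoff is chosen is not stated in the abstract.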

Contrastive and Variational Approaches in Self-Supervised Learning for Complex Data Mining

Apr 05, 2025

Abstract:Complex data mining has wide application value in many fields, especially in feature extraction and classification tasks on unlabeled data. This paper proposes an algorithm based on self-supervised learning and verifies its effectiveness through experiments. Among the optimizer and learning-rate settings tested, the AdamW optimizer with a learning rate of 0.002 performed best on all evaluation metrics, indicating that adaptive optimization can improve model performance in complex data mining tasks. In addition, an ablation experiment analyzed the contribution of each module. The results show that contrastive learning, variational modules, and data augmentation strategies play a key role in the generalization ability and robustness of the model. Convergence curves of the loss function show that the method converges stably during training and avoids serious overfitting. Further experimental results show that the model adapts well to different data sets, extracts high-quality features from unlabeled data, and improves classification accuracy. Under different data distribution conditions, the method still maintains high detection accuracy, demonstrating its applicability in complex data environments. This study analyzed the role of self-supervised learning methods in complex data mining through systematic experiments and verified their advantages in improving feature extraction quality, optimizing classification performance, and enhancing model stability.

Federated Learning for Cross-Domain Data Privacy: A Distributed Approach to Secure Collaboration

Mar 31, 2025

Abstract:This paper proposes a data privacy protection framework based on federated learning, which aims to realize effective cross-domain data collaboration under the premise of ensuring data privacy through distributed learning. Federated learning greatly reduces the risk of privacy breaches by training the model locally on each client and sharing only model parameters rather than raw data. The experiment verifies the high efficiency and privacy protection ability of federated learning under different data sources through the simulation of medical, financial, and user data. The results show that federated learning can not only maintain high model performance in a multi-domain data environment but also ensure effective protection of data privacy. The research in this paper provides a new technical path for cross-domain data collaboration and promotes the application of large-scale data analysis and machine learning while protecting privacy.
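The parameter-sharing step described in this abstract can be sketched as a server-side weighted average of client parameters. The abstract does not name its aggregation rule, so the sketch assumes the common FedAvg-style weighting by local dataset size. The function name `federated_average` and the toy values are hypothetical.

```python
def federated_average(client_params, client_sizes):
    """Average client parameter vectors, weighted by local dataset size."""
    total = sum(client_sizes)
    n_params = len(client_params[0])
    # Only parameters reach the server; raw client data never leaves the client.
    return [
        sum(params[i] * size for params, size in zip(client_params, client_sizes)) / total
        for i in range(n_params)
    ]


# Hypothetical parameters from three clients after one round of local training.
client_params = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
client_sizes = [100, 100, 200]

global_params = federated_average(client_params, client_sizes)
```

In a full training loop the server would send `global_params` back to the clients for the next round of local training.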

SePer: Measure Retrieval Utility Through The Lens Of Semantic Perplexity Reduction

Mar 05, 2025

Abstract:Large Language Models (LLMs) have demonstrated improved generation performance by incorporating externally retrieved knowledge, a process known as retrieval-augmented generation (RAG). Despite the potential of this approach, existing studies evaluate RAG effectiveness by 1) assessing retrieval and generation components jointly, which obscures retrieval's distinct contribution, or 2) examining retrievers using traditional metrics such as NDCG, which creates a gap in understanding retrieval's true utility in the overall generation process. To address the above limitations, in this work, we introduce an automatic evaluation method that measures retrieval quality through the lens of information gain within the RAG framework. Specifically, we propose Semantic Perplexity (SePer), a metric that captures the LLM's internal belief about the correctness of the retrieved information. We quantify the utility of retrieval by the extent to which it reduces semantic perplexity post-retrieval. Extensive experiments demonstrate that SePer not only aligns closely with human preferences but also offers a more precise and efficient evaluation of retrieval utility across diverse RAG scenarios.

Improve Dense Passage Retrieval with Entailment Tuning

Oct 21, 2024

Abstract:A retrieval module can be plugged into many downstream NLP tasks to improve their performance, such as open-domain question answering and retrieval-augmented generation. The key to a retrieval system is to calculate relevance scores for query-passage pairs. However, the definition of relevance is often ambiguous. We observed that a major class of relevance aligns with the concept of entailment in NLI tasks. Based on this observation, we designed a method called entailment tuning to improve the embeddings of dense retrievers. Specifically, we unify the form of retrieval data and NLI data using existence claims as a bridge. Then, we train retrievers to predict the claims entailed in a passage with a variant of the masked prediction task. Our method can be efficiently plugged into current dense retrieval methods, and experiments show its effectiveness.

Integrating Medical Imaging and Clinical Reports Using Multimodal Deep Learning for Advanced Disease Analysis

May 23, 2024

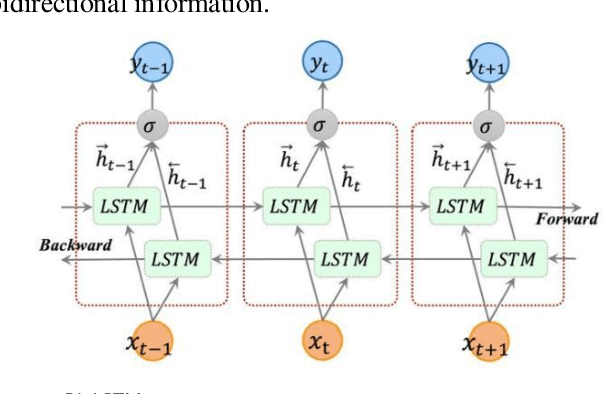

Abstract:In this paper, an innovative multimodal deep learning model is proposed to deeply integrate heterogeneous information from medical images and clinical reports. First, for medical images, convolutional neural networks are used to extract high-dimensional features and capture key visual information such as lesion details, texture, and spatial distribution. Second, for clinical report text, a bidirectional long short-term memory network combined with an attention mechanism is used for deep semantic understanding, so that key statements related to the disease are accurately captured. The two sets of features interact and are integrated through a purpose-designed multimodal fusion layer to realize joint representation learning of image and text. In the empirical study, we selected a large medical image database covering a variety of diseases, combined with corresponding clinical reports, for model training and validation. According to the experimental results, the proposed multimodal deep learning model demonstrated substantial superiority in disease classification, lesion localization, and clinical description generation.

Real-Time Go-Around Prediction: A case study of JFK airport

May 18, 2024

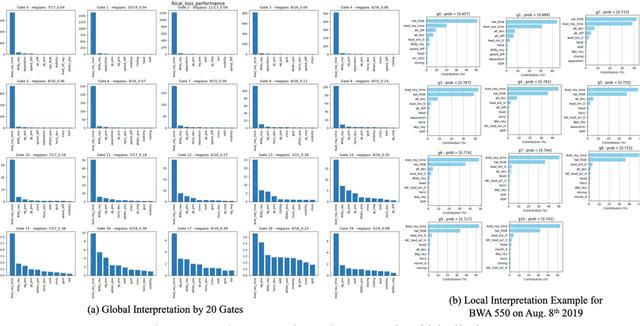

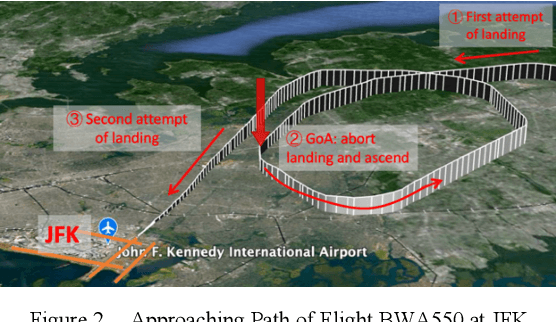

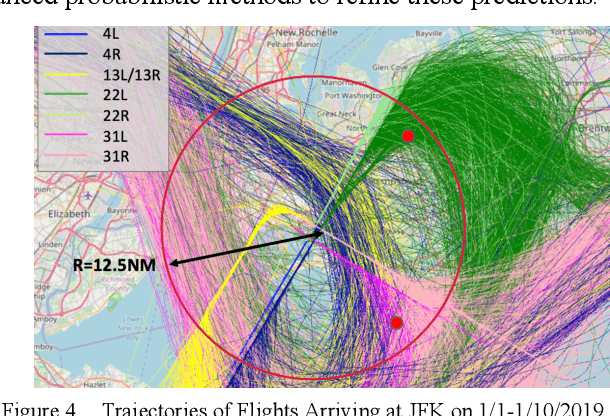

Abstract:In this paper, we employ a long short-term memory (LSTM) model to predict the real-time go-around probability as an arrival flight approaches JFK airport and is within 10 nm of the landing runway threshold. We further develop methods to examine the causes of go-around occurrences from both a global view and an individual flight perspective. According to our results, in-trail spacing and simultaneous runway operation appear to be the top factors contributing to overall go-around occurrences. We then integrate these pre-trained models and analyses with real-time data streaming, and finally develop a demo web-based user interface that combines the components designed previously into a real-time tool that can eventually be used by flight crews and other line personnel to identify situations in which there is a high risk of a go-around.

* https://www.icrat.org/

Cloth2Body: Generating 3D Human Body Mesh from 2D Clothing

Sep 28, 2023

Abstract:In this paper, we define and study a new problem, Cloth2Body, whose goal is to generate 3D human body meshes from a 2D clothing image. Unlike the existing human mesh recovery problem, Cloth2Body needs to address new challenges raised by the partial observation of the input and the high diversity of the output. There are three specific challenges: first, how to locate and pose human bodies inside the clothes; second, how to effectively estimate body shapes from various clothing types; and finally, how to generate diverse and plausible results from a 2D clothing image. To this end, we propose an end-to-end framework that can accurately estimate a 3D body mesh, parameterized by pose and shape, from a 2D clothing image. Along this line, we first utilize Kinematics-aware Pose Estimation to estimate body pose parameters. A 3D skeleton is employed as a proxy, followed by an inverse kinematics module to boost the estimation accuracy. We additionally design an adaptive depth trick to better align the re-projected 3D mesh with the 2D clothing image by disentangling the effects of object size and camera extrinsics. Next, we propose Physics-informed Shape Estimation to estimate body shape parameters. 3D shape parameters are predicted based on partial body measurements estimated from the RGB image, which not only improves pixel-wise human-cloth alignment but also enables flexible user editing. Finally, we design an Evolution-based Pose Generation method, a skeleton transplanting method inspired by genetic algorithms, to generate diverse and reasonable poses during inference. As shown by experimental results on both synthetic and real-world data, the proposed framework achieves state-of-the-art performance and can effectively recover natural and diverse 3D body meshes from 2D images that align well with clothing.

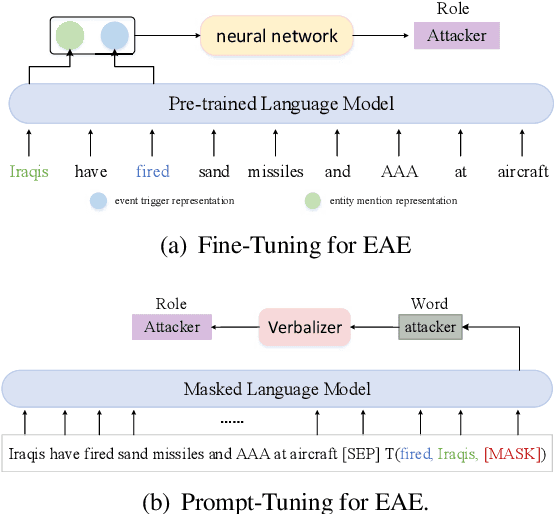

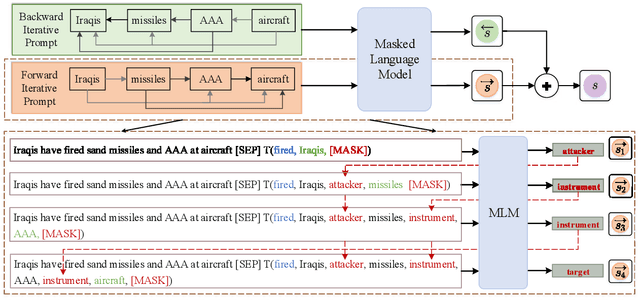

Bi-Directional Iterative Prompt-Tuning for Event Argument Extraction

Oct 28, 2022

Abstract:Recently, prompt-tuning has attracted growing interest in event argument extraction (EAE). However, the existing prompt-tuning methods have not achieved satisfactory performance due to the lack of consideration of entity information. In this paper, we propose a bi-directional iterative prompt-tuning method for EAE, where the EAE task is treated as a cloze-style task to take full advantage of entity information and pre-trained language models (PLMs). Furthermore, our method explores event argument interactions by introducing the argument roles of contextual entities into prompt construction. Since the template and the verbalizer are two crucial components of a cloze-style prompt, we propose to utilize the semantic knowledge of role labels to construct a semantic verbalizer and design three kinds of templates for the EAE task. Experiments on the ACE 2005 English dataset with standard and low-resource settings show that the proposed method significantly outperforms the peer state-of-the-art methods. Our code is available at https://github.com/HustMinsLab/BIP.
