Inwook Shim

SyNeT: Synthetic Negatives for Traversability Learning

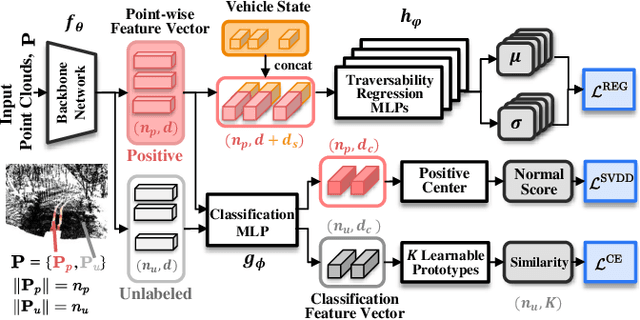

Feb 03, 2026

Abstract: Reliable traversability estimation is crucial for autonomous robots to navigate complex outdoor environments safely. Existing self-supervised learning frameworks rely primarily on positive and unlabeled data. However, the lack of explicit negative data remains a critical limitation, because it hinders the model's ability to identify diverse non-traversable regions accurately. To address this issue, we introduce a method that explicitly constructs synthetic negatives, which represent plausible but non-traversable regions, and integrates them into vision-based traversability learning. Our approach is formulated as a training strategy that can be integrated into both Positive-Unlabeled (PU) and Positive-Negative (PN) frameworks without modifying the inference architecture. To complement standard pixel-wise metrics, we introduce an object-centric false positive rate (FPR) evaluation that analyzes predictions in the regions where synthetic negatives are inserted. This evaluation provides an indirect measure of the model's ability to consistently identify non-traversable regions without additional manual labeling. Extensive experiments on both public and self-collected datasets demonstrate that our approach significantly enhances robustness and generalization across diverse environments. The source code and demonstration videos will be made publicly available.
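The object-centric FPR described in this abstract can be illustrated with a small sketch. The sketch makes three assumptions:

- the model outputs a binary traversability prediction;
- there is one binary mask per inserted synthetic negative;
- an object counts as a false positive when at least half of its pixels are predicted traversable.

The function name `object_centric_fpr` and the `min_fraction` criterion are hypothetical, and the paper's exact definition may differ.

```python
import numpy as np


def object_centric_fpr(pred_traversable, object_masks, min_fraction=0.5):
    """Fraction of inserted synthetic negatives that the model calls traversable.

    pred_traversable: (H, W) bool array, True where the model predicts traversable.
    object_masks: list of (H, W) bool arrays, one per inserted synthetic negative.
    min_fraction: hypothetical criterion; an object is a false positive when at
        least this fraction of its pixels is predicted traversable.
    """
    valid = [m for m in object_masks if m.any()]  # ignore empty masks
    if not valid:
        raise ValueError("need at least one non-empty synthetic-negative mask")
    false_positives = 0
    for mask in valid:
        overlap = np.logical_and(pred_traversable, mask).sum() / mask.sum()
        if overlap >= min_fraction:
            false_positives += 1
    return false_positives / len(valid)


# Example: left half predicted traversable; one inserted object on each half.
pred = np.zeros((4, 4), dtype=bool)
pred[:, :2] = True
obj_left = np.zeros((4, 4), dtype=bool)
obj_left[0:2, 0:2] = True   # entirely inside the traversable prediction
obj_right = np.zeros((4, 4), dtype=bool)
obj_right[2:4, 2:4] = True  # entirely inside the non-traversable prediction
print(object_centric_fpr(pred, [obj_left, obj_right]))  # 0.5
```

Counting whole objects rather than pixels keeps a large inserted object from dominating the metric.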

SceneAware: Scene-Constrained Pedestrian Trajectory Prediction with LLM-Guided Walkability

Jun 17, 2025

Abstract: Accurate prediction of pedestrian trajectories is essential for applications in robotics and surveillance systems. While existing approaches primarily focus on social interactions between pedestrians, they often overlook the rich environmental context that significantly shapes human movement patterns. In this paper, we propose SceneAware, a novel framework that explicitly incorporates scene understanding to enhance trajectory prediction accuracy. Our method leverages a Vision Transformer (ViT) scene encoder to process environmental context from static scene images, while Multi-modal Large Language Models (MLLMs) generate binary walkability masks that distinguish between accessible and restricted areas during training. We combine a Transformer-based trajectory encoder with the ViT-based scene encoder, capturing both temporal dynamics and spatial constraints. The framework integrates collision penalty mechanisms that discourage predicted trajectories from violating physical boundaries, ensuring physically plausible predictions. SceneAware is implemented in both deterministic and stochastic variants. Comprehensive experiments on the ETH/UCY benchmark datasets show that our approach outperforms state-of-the-art methods, with more than 50% improvement over previous models. Our analysis based on different trajectory categories shows that the model performs consistently well across various types of pedestrian movement. This highlights the importance of using explicit scene information and shows that our scene-aware approach is both effective and reliable in generating accurate and physically plausible predictions. Code is available at: https://github.com/juho127/SceneAware.
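One way to sketch a collision penalty is as the fraction of predicted positions that land on non-walkable cells of the binary walkability mask. This sketch assumes that trajectories are expressed in the mask's pixel coordinates and that each position is looked up in its nearest cell. `collision_penalty` is a hypothetical name, and the paper's penalty term may take a different form.

```python
import numpy as np


def collision_penalty(traj_px, walkable):
    """Fraction of predicted trajectory points that fall on non-walkable cells.

    traj_px: (T, 2) array of (x, y) pixel coordinates of one predicted trajectory.
    walkable: (H, W) bool mask, True where the walkability mask allows walking.
    Points outside the image are counted as violations (an assumption).
    """
    traj_px = np.asarray(traj_px, dtype=float)
    h, w = walkable.shape
    cols = np.round(traj_px[:, 0]).astype(int)
    rows = np.round(traj_px[:, 1]).astype(int)
    inside = (rows >= 0) & (rows < h) & (cols >= 0) & (cols < w)
    violations = ~inside
    # Look up the mask only for in-bounds points.
    violations[inside] = ~walkable[rows[inside], cols[inside]]
    return violations.mean()
```

The mask lookup is not differentiable. A training loss would therefore need a soft variant, so this version is better suited to scoring predictions after the fact.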

Addressing Relative Pose Impact on UWB Localization: Dataset Introduction and Analysis

Jul 04, 2024

Abstract: UWB has recently gained renewed attention as an auxiliary sensor for robot localization because of its compactness and ease of distance measurement. Consequently, research on UWB-based localization and UWB datasets has grown. Despite this broad interest, there is a lack of UWB datasets that thoroughly analyze the performance of UWB ranging measurements. To address this issue, our paper introduces a UWB dataset that examines the relative pose factors affecting UWB ranging measurements. To the best of our knowledge, our dataset is the first to analyze these factors while rigorously providing precise ground-truth UWB poses. The dataset is accessible at https://github.com/cjhhalla/RCV_uwb_dataset.
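Analyzing relative pose factors requires two quantities for each range measurement: the ground-truth distance and the tag's bearing as seen from the anchor. The sketch below computes both under simplifying assumptions:

- all positions share one world frame;
- the anchor's orientation is reduced to a yaw angle.

The function name `ranging_error_and_azimuth` is hypothetical and is not part of the dataset's tooling.

```python
import numpy as np


def ranging_error_and_azimuth(anchor_pos, anchor_yaw, tag_pos, measured_range):
    """Return (ranging error [m], tag azimuth in the anchor frame [deg]).

    anchor_pos, tag_pos: (3,) ground-truth positions in a common world frame [m].
    anchor_yaw: anchor heading about the world z-axis [rad] (yaw-only assumption).
    measured_range: distance reported by the UWB module [m].
    """
    delta = np.asarray(tag_pos, dtype=float) - np.asarray(anchor_pos, dtype=float)
    true_range = np.linalg.norm(delta)
    # Rotate the world-frame offset by -yaw to express it in the anchor frame.
    c, s = np.cos(-anchor_yaw), np.sin(-anchor_yaw)
    x_local = c * delta[0] - s * delta[1]
    y_local = s * delta[0] + c * delta[1]
    azimuth_deg = np.degrees(np.arctan2(y_local, x_local))
    return measured_range - true_range, azimuth_deg
```

Binning the returned errors by azimuth, or by distance, is one simple way to expose how relative pose affects ranging accuracy.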

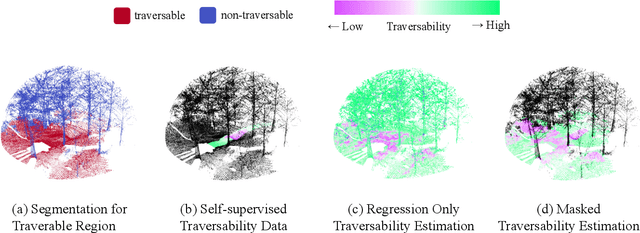

Learning Off-Road Terrain Traversability with Self-Supervisions Only

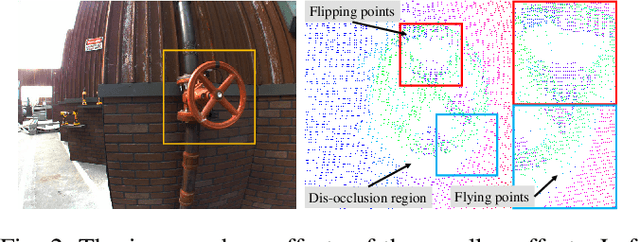

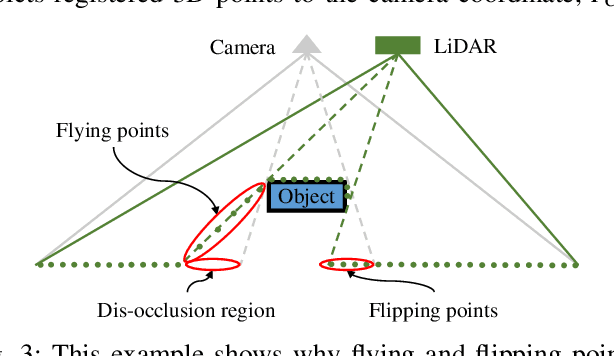

May 30, 2023

Abstract: For autonomous driving in off-road environments, terrain traversability estimates must be reliable and accurate across diverse conditions. However, learning-based approaches often yield unreliable results when confronted with unfamiliar contexts, and it is difficult to obtain new manual annotations each time new circumstances arise. In this paper, we introduce a method for learning traversability from images that uses only self-supervision and no manual labels, enabling it to learn traversability easily in new circumstances. To this end, we first generate self-supervised traversability labels from past driving trajectories by labeling regions traversed by the vehicle as highly traversable. Using these self-supervised labels, we then train a neural network that identifies terrain that is safe to traverse from an image using a one-class classification algorithm. Additionally, we compensate for the limitations of self-supervised labels by incorporating self-supervised visual representation learning. For a comprehensive evaluation, we collect data in a variety of driving environments and perceptual conditions and show that our method produces reliable estimates across these environments. The experimental results also show that our method outperforms other self-supervised traversability estimation methods and achieves performance comparable to supervised learning methods trained on manually labeled data.
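The one-class step can be illustrated with a center-distance scorer in the spirit of Deep SVDD, applied to fixed feature vectors. This is an assumption made for illustration. The abstract does not name the one-class algorithm, and in practice the network that produces the features would also be trained. `CenterOneClass` and its `quantile` threshold are hypothetical.

```python
import numpy as np


class CenterOneClass:
    """Hypothetical one-class scorer fitted only on traversed (positive) regions.

    Features closer to the positive center than the chosen quantile of
    positive distances are labeled traversable.
    """

    def fit(self, pos_feats, quantile=0.95):
        pos_feats = np.asarray(pos_feats, dtype=float)
        self.center = pos_feats.mean(axis=0)
        dists = np.sum((pos_feats - self.center) ** 2, axis=1)
        self.radius = np.quantile(dists, quantile)
        return self

    def score(self, feats):
        # Squared distance to the center; larger means less traversable.
        return np.sum((np.asarray(feats, dtype=float) - self.center) ** 2, axis=1)

    def predict(self, feats):
        return self.score(feats) <= self.radius
```

This scorer needs no negative examples, which matches the setting where only traversed regions carry labels.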

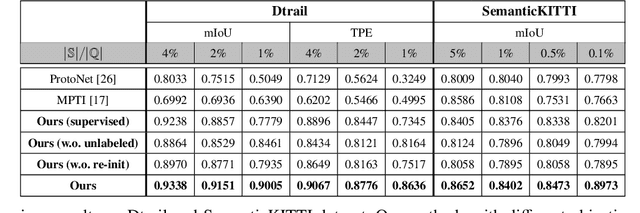

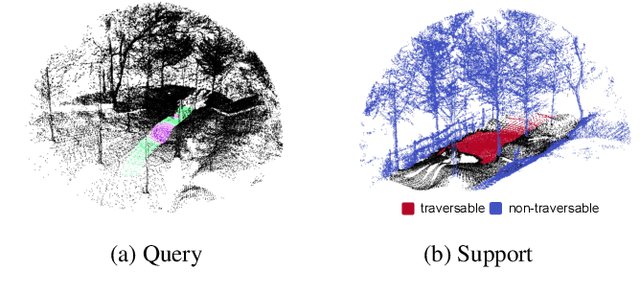

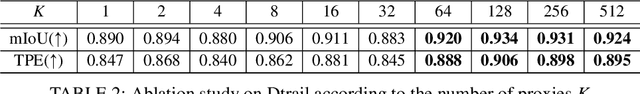

Uncertainty Reduction for 3D Point Cloud Self-Supervised Traversability Estimation

Nov 21, 2022

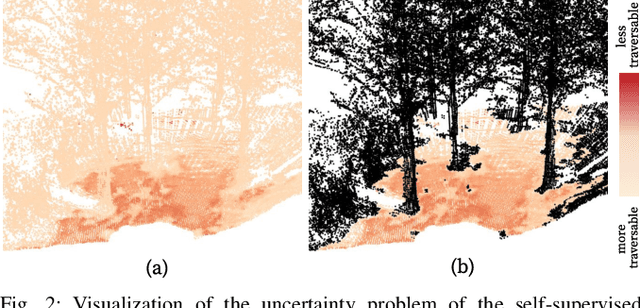

Abstract: Traversability estimation in off-road environments requires a robust perception system. Recently, approaches that learn traversability estimation from past vehicle experience in a self-supervised manner have emerged, as they can greatly reduce human labeling costs and labeling errors. Nonetheless, self-supervised traversability estimation suffers from inherent uncertainties caused by the scarcity of negative information. Negative data are rarely harvested because the system can be severely damaged while logging them. To mitigate the uncertainty, we introduce a method that incorporates unlabeled data in order to leverage the uncertainty. First, we design a learning architecture that takes query and support data as input. Second, unlabeled data are assigned based on their proximity in the metric space. Third, we introduce a new metric for measuring uncertainty. We evaluated our approach on our own dataset, 'Dtrail', which contains a wide variety of negative data.
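One possible reading of the proximity-based assignment works as follows:

- unlabeled embeddings close to the prototype of the positive support set become pseudo-positives;
- distant embeddings become pseudo-negatives;
- the rest stay unassigned.

The prototype (mean embedding), the `near`/`far` thresholds, and the function name `assign_unlabeled` are all hypothetical.

```python
import numpy as np


def assign_unlabeled(support_pos, unlabeled, near=1.0, far=3.0):
    """Hypothetical proximity-based assignment of unlabeled embeddings.

    support_pos: (N, D) embeddings of traversed (positive) support samples.
    unlabeled: (M, D) embeddings without labels.
    Returns an int array: 1 = pseudo-positive, 0 = pseudo-negative,
    -1 = ambiguous (left unassigned).
    """
    prototype = np.asarray(support_pos, dtype=float).mean(axis=0)
    dist = np.linalg.norm(np.asarray(unlabeled, dtype=float) - prototype, axis=1)
    labels = np.full(len(dist), -1, dtype=int)
    labels[dist <= near] = 1
    labels[dist >= far] = 0
    return labels
```

Leaving the band between `near` and `far` unassigned avoids forcing labels where the metric space itself is uncertain.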

ScaTE: A Scalable Framework for Self-Supervised Traversability Estimation in Unstructured Environments

Sep 14, 2022

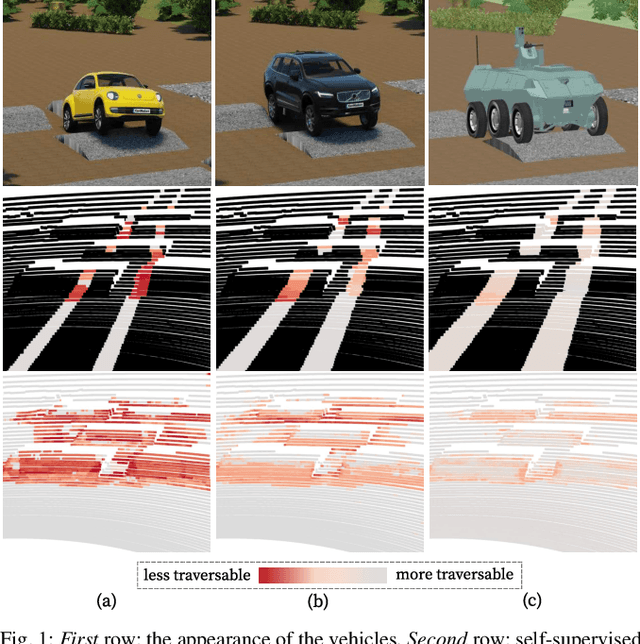

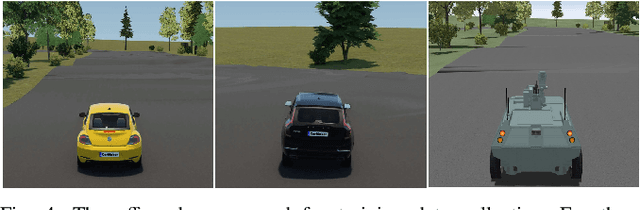

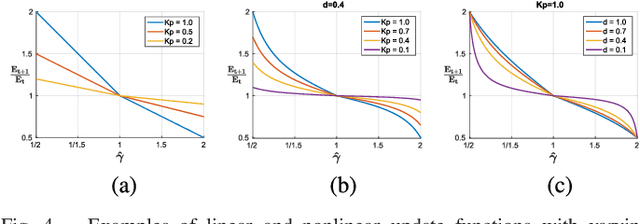

Abstract: For autonomous vehicles to navigate unstructured environments safely and successfully, terrain traversability should depend on each vehicle's driving capabilities. Actual driving experience can be utilized in a self-supervised fashion to learn vehicle-specific traversability. However, existing methods for learning self-supervised traversability do not scale well to learning the traversability of various vehicles. In this work, we introduce a scalable framework for learning self-supervised traversability, which can learn traversability directly from vehicle-terrain interaction without any human supervision. We train a neural network that predicts, from 3D point clouds, the proprioceptive experience that a vehicle would undergo. Using a novel positive-unlabeled (PU) learning method, the network simultaneously identifies non-traversable regions where estimates can be overconfident. Using driving data from various vehicles gathered in simulation and the real world, we show that our framework can learn the self-supervised traversability of each of these vehicles. By integrating our framework with a model predictive controller, we demonstrate that the estimated traversability results in effective navigation that enables distinct maneuvers based on the driving characteristics of each vehicle. In addition, experimental results validate the ability of our method to identify and avoid non-traversable regions.
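The abstract does not spell out ScaTE's PU learning method. For reference, the sketch below implements a different, standard technique: the non-negative PU (nnPU) risk estimator of Kiryo et al. (2017) with the sigmoid loss. It assumes that the class prior `prior` is known.

```python
import numpy as np


def sigmoid_loss(scores, y):
    # l(z, y) = 1 / (1 + exp(y * z)): near 0 when sign(z) matches y.
    return 1.0 / (1.0 + np.exp(y * np.asarray(scores, dtype=float)))


def nnpu_risk(pos_scores, unl_scores, prior):
    """Non-negative PU risk (Kiryo et al., 2017) with the sigmoid loss.

    pos_scores: classifier outputs g(x) on labeled positive samples.
    unl_scores: classifier outputs g(x) on unlabeled samples.
    prior: assumed class prior pi = P(y = +1) in the unlabeled data.
    """
    risk_pos = prior * sigmoid_loss(pos_scores, 1).mean()
    # Negative-class risk estimated from unlabeled data, minus the positive share.
    risk_neg = (sigmoid_loss(unl_scores, -1).mean()
                - prior * sigmoid_loss(pos_scores, -1).mean())
    # Clamping at zero prevents the estimate from going negative (overfitting).
    return risk_pos + max(0.0, risk_neg)
```

Kiryo et al. also take a corrective gradient step when the negative-risk term drops below zero during training. That detail is omitted here.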

SLiDE: Self-supervised LiDAR De-snowing through Reconstruction Difficulty

Aug 08, 2022

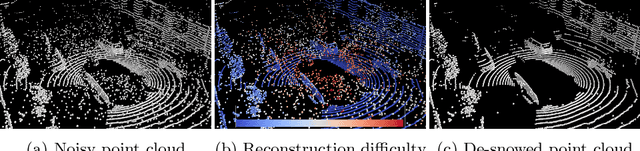

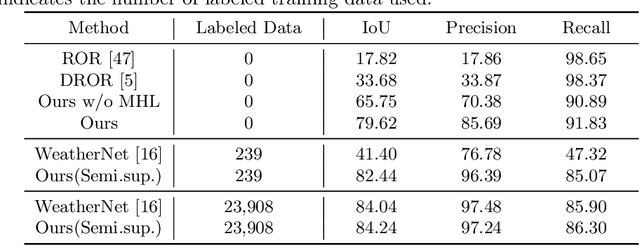

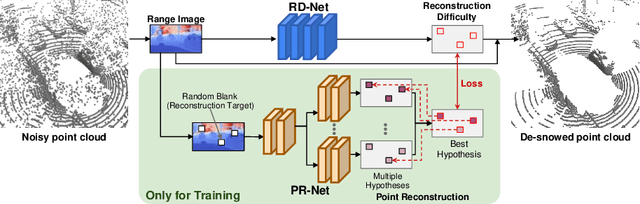

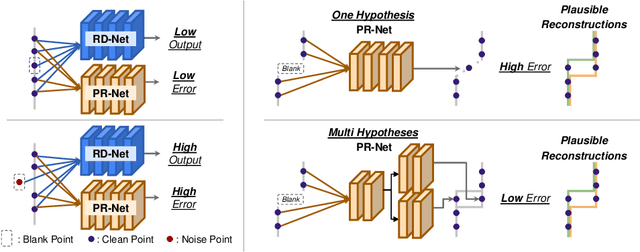

Abstract: LiDAR is widely used to capture accurate 3D outdoor scene structures. However, LiDAR produces many undesirable noise points in snowy weather, which hamper the analysis of meaningful 3D scene structures. Semantic segmentation with snow labels would be a straightforward solution for removing them, but it requires laborious point-wise annotation. To address this problem, we propose a novel self-supervised learning framework for snow point removal in LiDAR point clouds. Our method exploits a structural characteristic of the noise points: low spatial correlation with their neighbors. Our method consists of two deep neural networks. The Point Reconstruction Network (PR-Net) reconstructs each point from its neighbors. The Reconstruction Difficulty Network (RD-Net) predicts, for each point, how difficult it is for PR-Net to reconstruct; we call this quantity reconstruction difficulty. With simple post-processing, our method effectively detects snow points without any labels. Our method achieves state-of-the-art performance among label-free approaches and is comparable to the fully supervised method. Moreover, we demonstrate that our method can be exploited as a pretext task to improve the label efficiency of supervised de-snowing training.
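The intuition behind reconstruction difficulty can be shown with a non-learned stand-in. Each point is reconstructed as the mean of its k nearest neighbors, and the reconstruction error is treated as the difficulty. This replaces PR-Net and RD-Net with a fixed rule, and `flag_snow` and its threshold are hypothetical. The sketch illustrates the structural cue rather than the method itself.

```python
import numpy as np


def reconstruction_error(points, k=4):
    """Distance between each point and the mean of its k nearest neighbors.

    A crude, non-learned stand-in for PR-Net: isolated points (such as snow)
    are poorly explained by their neighbors and receive large errors.
    Brute-force O(N^2) neighbor search, so only suitable for small clouds.
    """
    points = np.asarray(points, dtype=float)
    dist = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=-1)
    np.fill_diagonal(dist, np.inf)  # a point may not reconstruct itself
    knn = np.argsort(dist, axis=1)[:, :k]
    recon = points[knn].mean(axis=1)
    return np.linalg.norm(points - recon, axis=1)


def flag_snow(points, k=4, threshold=0.5):
    # Simple post-processing: threshold the error (hypothetical threshold).
    return reconstruction_error(points, k) > threshold
```

On a flat surface, each interior point coincides with the mean of its neighbors. A point floating in free space does not.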

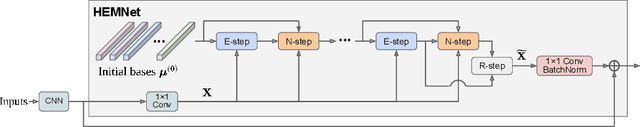

Improving Gradient Flow with Unrolled Highway Expectation Maximization

Dec 09, 2020

Abstract: Integrating model-based machine learning methods into deep neural architectures allows one to leverage both the expressive power of deep neural nets and the ability of model-based methods to incorporate domain-specific knowledge. In particular, many works have employed the expectation maximization (EM) algorithm as an unrolled, layer-wise structure that is trained jointly with a backbone neural network. However, it is difficult to train the backbone network discriminatively by backpropagating through the EM iterations, as they are prone to the vanishing gradient problem. To address this issue, we propose Highway Expectation Maximization Networks (HEMNet), which consist of unrolled iterations of the generalized EM (GEM) algorithm based on the Newton-Raphson method. HEMNet features scaled skip connections, or highways, along the depth of the unrolled architecture. These improve gradient flow during backpropagation while incurring negligible additional computation and memory costs compared to standard unrolled EM. Furthermore, HEMNet preserves the underlying EM procedure, thereby fully retaining the convergence properties of the original EM algorithm. We achieve significant performance improvements on several semantic segmentation benchmarks and empirically show that HEMNet effectively alleviates gradient decay.
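A highway update on unrolled EM can be sketched for a Gaussian mixture with shared isotropic variance, a common choice in unrolled EM layers. The sketch assumes that the highway is a convex combination of the previous means and the plain EM update, weighted by a step size `eta`; setting `eta = 1` recovers standard EM. The function name and defaults are hypothetical, and HEMNet's Newton-Raphson derivation is not reproduced here.

```python
import numpy as np


def softmax(x, axis):
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def highway_em(features, means, n_iters=3, eta=0.5, temperature=1.0):
    """Unrolled EM with a scaled skip ("highway") connection on the means.

    features: (N, D) pixel features; means: (K, D) initial component means.
    eta: step size; 1.0 gives plain EM, smaller values keep more of the old means.
    """
    features = np.asarray(features, dtype=float)
    means = np.asarray(means, dtype=float)
    for _ in range(n_iters):
        # E-step: responsibilities from negative squared distances.
        sq_dist = ((features[:, None, :] - means[None, :, :]) ** 2).sum(-1)
        resp = softmax(-sq_dist / temperature, axis=1)  # (N, K)
        # M-step: responsibility-weighted means.
        em_means = resp.T @ features / resp.sum(axis=0)[:, None]
        # Highway step: blend the previous means with the EM update.
        means = (1.0 - eta) * means + eta * em_means
    return means
```

Each iteration passes `(1 - eta) * means` directly to the next one. Gradients therefore have a path through the unrolled layers that bypasses the softmax responsibilities.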

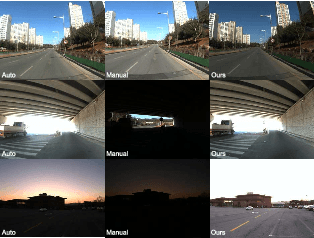

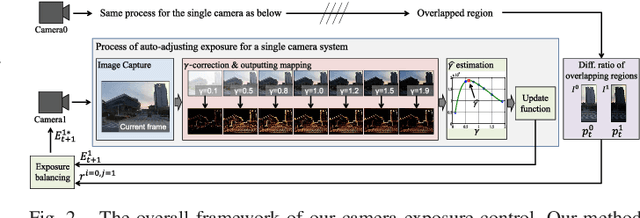

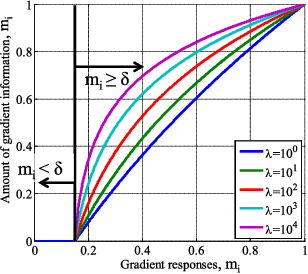

Gradient-based Camera Exposure Control for Outdoor Mobile Platforms

Jun 13, 2018

Abstract: We introduce a novel method to automatically adjust camera exposure for image processing and computer vision applications on mobile robot platforms. Most image processing algorithms rely heavily on low-level image features that are based mainly on local gradient information. We therefore argue that the quantity of image gradients can determine the proper exposure level, allowing a camera to capture important image features robustly under varying illumination. We then extend this concept to a multi-camera system and present a new control algorithm that achieves both brightness consistency between adjacent cameras and a proper exposure level for each camera. We implement our prototype system with off-the-shelf machine-vision cameras and demonstrate the effectiveness of the proposed algorithms on practical applications, including pedestrian detection, visual odometry, surround-view imaging, panoramic imaging, and stereo matching.
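The idea that gradient quantity should drive exposure can be sketched by scoring candidate captures and keeping the best one. The score below log-compresses gradient magnitudes, so many moderate edges count more than a few strong ones. The sketch rests on several assumptions:

- `lam`, `delta`, and the function names are hypothetical;
- exposure is modeled as a gain followed by saturation;
- the paper's actual metric and multi-camera control loop are not reproduced.

```python
import numpy as np


def gradient_quantity(image, lam=100.0, delta=0.0):
    """Log-compressed sum of gradient magnitudes (assumed metric).

    image: (H, W) float array with intensities in [0, 1].
    lam: hypothetical gain; larger values compress strong gradients more.
    delta: hypothetical noise threshold; weaker gradients are ignored.
    """
    image = np.asarray(image, dtype=float)
    gx = np.diff(image, axis=1)[:-1, :]  # (H-1, W-1) horizontal differences
    gy = np.diff(image, axis=0)[:, :-1]  # (H-1, W-1) vertical differences
    mag = np.hypot(gx, gy)
    kept = mag[mag >= delta]
    # log1p rewards many moderate gradients over a few large ones.
    return np.log1p(lam * (kept - delta)).sum()


def pick_exposure(captures, lam=100.0, delta=0.0):
    """Return the exposure setting whose capture has the largest gradient quantity.

    captures: dict mapping an exposure setting to the image captured with it.
    """
    return max(captures, key=lambda e: gradient_quantity(captures[e], lam, delta))


# Example: exposure modeled as a simple gain followed by sensor saturation.
scene = np.tile([0.05, 0.10, 0.05, 0.10, 0.8, 0.9, 0.8, 0.9], (4, 1))
captures = {e: np.clip(e * scene, 0.0, 1.0) for e in (0.5, 1.0, 2.0)}
print(pick_exposure(captures))  # 1.0: gain 2.0 saturates the bright half
```

A plain sum of gradient magnitudes would barely separate these captures. The log compression is what penalizes losing the bright half's texture to saturation.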

Vision System and Depth Processing for DRC-HUBO+

Sep 28, 2015

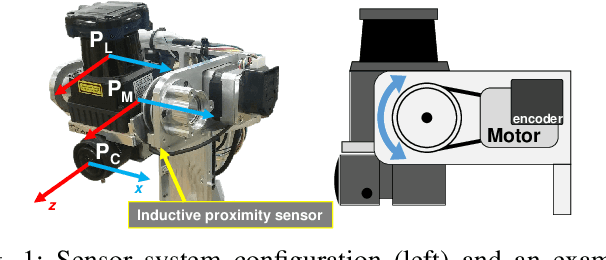

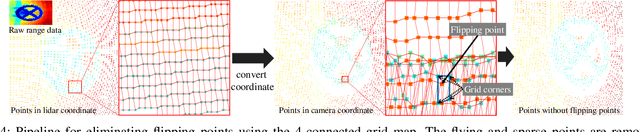

Abstract: This paper presents a vision system and a depth processing algorithm for DRC-HUBO+, the winner of the 2015 DRC Finals. Our system is designed to capture 3D information about scenes and objects reliably under challenging environmental conditions. We also propose a depth-map upsampling method that produces an outlier-free depth map by explicitly handling depth outliers. Our system is well suited to robots that interact with the real world and require accurate object detection and pose estimation. We evaluate our depth processing algorithm against state-of-the-art algorithms on several synthetic and real-world datasets.
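Explicit outlier handling can be illustrated with a local-median test applied before upsampling. A depth sample is invalidated when it deviates from the median of its valid neighbors by more than a tolerance. The window radius, the tolerance, and the function name `reject_depth_outliers` are hypothetical, and the upsampling step itself is omitted.

```python
import numpy as np


def reject_depth_outliers(depth, radius=1, tol=0.5):
    """Invalidate depth samples that disagree with their local median.

    depth: (H, W) float array in meters; 0 marks missing samples.
    radius: half-size of the square neighborhood (hypothetical default).
    tol: maximum allowed deviation from the neighborhood median [m].
    Returns a copy in which outliers are set to 0.
    """
    depth = np.asarray(depth, dtype=float)
    h, w = depth.shape
    cleaned = depth.copy()
    for r in range(h):
        for c in range(w):
            if depth[r, c] <= 0:
                continue  # already missing
            window = depth[max(0, r - radius):r + radius + 1,
                           max(0, c - radius):c + radius + 1]
            valid = window[window > 0]  # includes the center sample itself
            if abs(depth[r, c] - np.median(valid)) > tol:
                cleaned[r, c] = 0.0
    return cleaned
```

The median is used because a single spurious sample cannot shift it the way it would shift a mean.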
