Hak-Keung Lam

Towards deployment-centric multimodal AI beyond vision and language

Apr 04, 2025

Abstract:Multimodal artificial intelligence (AI) integrates diverse types of data via machine learning to improve understanding, prediction, and decision-making across disciplines such as healthcare, science, and engineering. However, most multimodal AI advances focus on models for vision and language data, while their deployability remains a key challenge. We advocate a deployment-centric workflow that incorporates deployment constraints early to reduce the likelihood of undeployable solutions, complementing data-centric and model-centric approaches. We also emphasise deeper integration across multiple levels of multimodality and multidisciplinary collaboration to significantly broaden the research scope beyond vision and language. To facilitate this approach, we identify common multimodal-AI-specific challenges shared across disciplines and examine three real-world use cases: pandemic response, self-driving car design, and climate change adaptation, drawing expertise from healthcare, social science, engineering, science, sustainability, and finance. By fostering multidisciplinary dialogue and open research practices, our community can accelerate deployment-centric development for broad societal impact.

Constrained Proximal Policy Optimization

May 23, 2023

Abstract:The problem of constrained reinforcement learning (CRL) holds significant importance as it provides a framework for addressing critical safety satisfaction concerns in the field of reinforcement learning (RL). However, with the introduction of constraint satisfaction, the current CRL methods necessitate the utilization of second-order optimization or primal-dual frameworks with additional Lagrangian multipliers, resulting in increased complexity and inefficiency during implementation. To address these issues, we propose a novel first-order feasible method named Constrained Proximal Policy Optimization (CPPO). By treating the CRL problem as a probabilistic inference problem, our approach integrates the Expectation-Maximization framework to solve it through two steps: 1) calculating the optimal policy distribution within the feasible region (E-step), and 2) conducting a first-order update to adjust the current policy towards the optimal policy obtained in the E-step (M-step). We establish the relationship between the probability ratios and KL divergence to convert the E-step into a convex optimization problem. Furthermore, we develop an iterative heuristic algorithm from a geometric perspective to solve this problem. Additionally, we introduce a conservative update mechanism to overcome the constraint violation issue that occurs in the existing feasible region method. Empirical evaluations conducted in complex and uncertain environments validate the effectiveness of our proposed method, as it performs at least as well as other baselines.
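The abstract mentions a relationship between probability ratios and KL divergence. As a rough illustration only, the sketch below checks one standard identity for discrete distributions, KL(q || p) = E_p[r log r] with r = q / p. Whether this is the exact relation used in CPPO is an assumption; the function names and the toy distributions are hypothetical.

```python
import math

def kl_from_ratios(p, ratios):
    """KL(q || p) computed as E_p[r * log r], where q[i] = ratios[i] * p[i]."""
    return sum(pi * r * math.log(r) for pi, r in zip(p, ratios) if r > 0)

def kl_direct(q, p):
    """Textbook definition: sum_i q[i] * log(q[i] / p[i])."""
    return sum(qi * math.log(qi / pi) for qi, pi in zip(q, p) if qi > 0)

# Toy "old" policy p and candidate policy q over three actions (made-up numbers).
p = [0.5, 0.3, 0.2]
q = [0.4, 0.4, 0.2]
ratios = [qi / pi for qi, pi in zip(q, p)]

kl_a = kl_from_ratios(p, ratios)
kl_b = kl_direct(q, p)
```

Both functions return the same value, which shows that a bound on the KL divergence can be expressed purely in terms of the probability ratios sampled under the old policy.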

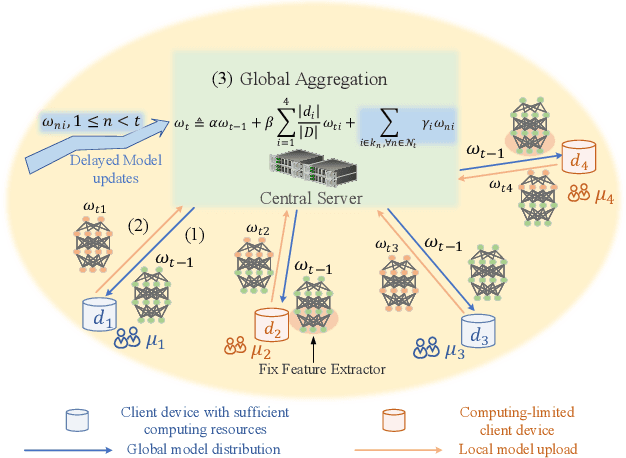

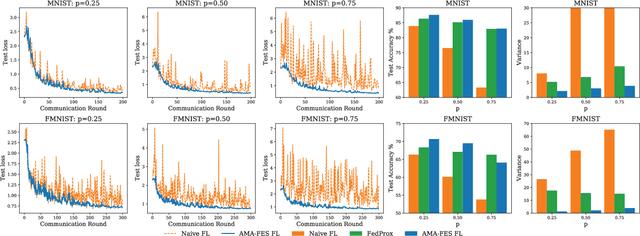

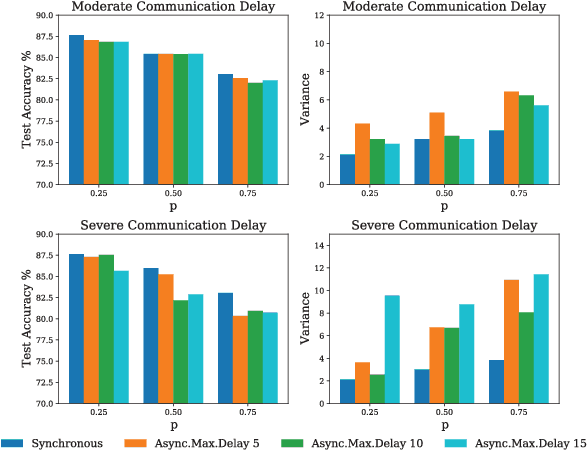

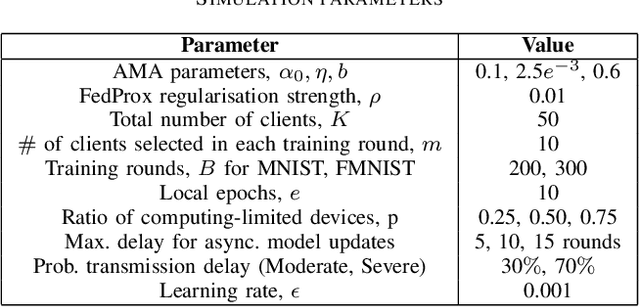

Distributed Learning in Heterogeneous Environment: federated learning with adaptive aggregation and computation reduction

Feb 16, 2023

Abstract:Although federated learning has achieved many breakthroughs recently, the heterogeneous nature of the learning environment greatly limits its performance and hinders its real-world applications. The heterogeneous data, time-varying wireless conditions and computing-limited devices are three main challenges, which often result in an unstable training process and degraded accuracy. Herein, we propose strategies to address these challenges. Targeting the heterogeneous data distribution, we propose a novel adaptive mixing aggregation (AMA) scheme that mixes the model updates from previous rounds with current rounds to avoid large model shifts and thus maintain training stability. We further propose a novel staleness-based weighting scheme for the asynchronous model updates caused by the dynamic wireless environment. Lastly, we propose a novel CPU-friendly computation-reduction scheme based on transfer learning by sharing the feature extractor (FES) and letting the computing-limited devices update only the classifier. The simulation results show that the proposed framework outperforms existing state-of-the-art solutions and increases the test accuracy and training stability by up to 2.38% and 93.10%, respectively. Additionally, the proposed framework can tolerate a communication delay of up to 15 rounds under a moderate delay environment without significant accuracy degradation.
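To make the aggregation idea concrete, here is a minimal sketch of mixing the previous global model with a staleness-weighted average of client models. It is not the paper's AMA scheme: the polynomial decay in `staleness_weight`, the fixed `mix` coefficient (the paper's mixing is adaptive), and all names are assumptions chosen for illustration.

```python
def staleness_weight(staleness, decay=0.5):
    """Hypothetical weighting: updates that are more rounds old count less."""
    return (1.0 + staleness) ** (-decay)

def aggregate(prev_global, client_models, staleness, mix=0.5):
    """Blend the previous global model with a staleness-weighted client average.

    prev_global   : list of floats (flattened model parameters)
    client_models : list of parameter lists, one per client
    staleness     : rounds of delay for each client's update
    mix           : fixed share kept from the previous global model (assumption)
    """
    weights = [staleness_weight(s) for s in staleness]
    total = sum(weights)
    dim = len(prev_global)
    # Weighted average of client parameters, coordinate by coordinate.
    avg = [
        sum(w * m[i] for w, m in zip(weights, client_models)) / total
        for i in range(dim)
    ]
    # Mixing with the previous round damps large shifts in the global model.
    return [mix * g + (1.0 - mix) * a for g, a in zip(prev_global, avg)]

new_global = aggregate(
    prev_global=[0.0, 0.0],
    client_models=[[2.0, 2.0], [2.0, 2.0]],
    staleness=[0, 3],
)
```

A larger `mix` keeps the global model closer to the previous round, trading adaptation speed for stability.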

Improving COVID-19 CT Classification of CNNs by Learning Parameter-Efficient Representation

Aug 09, 2022

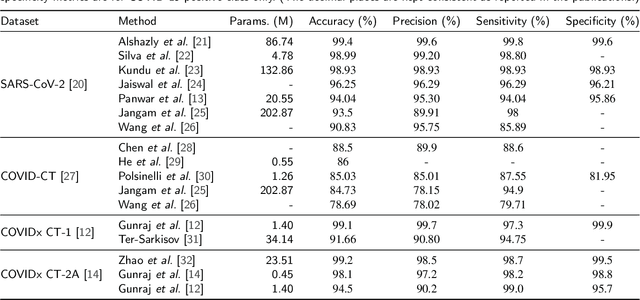

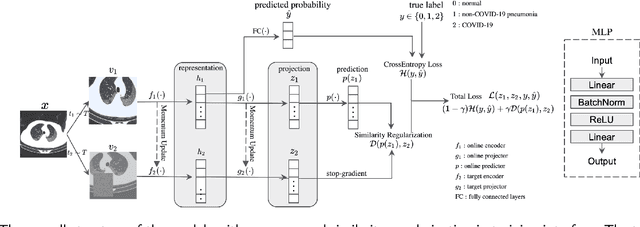

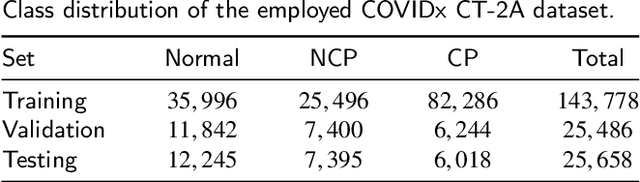

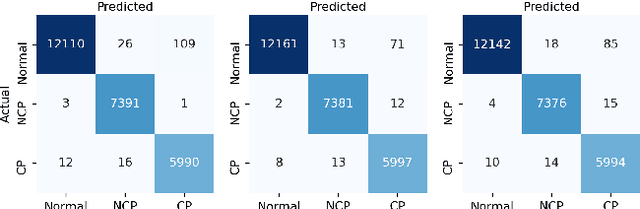

Abstract:The COVID-19 pandemic continues to spread rapidly over the world and causes a tremendous crisis in global human health and the economy. Its early detection and diagnosis are crucial for controlling the further spread. Many deep learning-based methods have been proposed to assist clinicians in automatic COVID-19 diagnosis based on computed tomography imaging. However, challenges remain, including low data diversity in existing datasets and unsatisfactory detection resulting from insufficient accuracy and sensitivity of deep learning models. To enhance the data diversity, we design augmentation techniques of incremental levels and apply them to the largest open-access benchmark dataset, COVIDx CT-2A. Meanwhile, similarity regularization (SR) derived from contrastive learning is proposed in this study to enable CNNs to learn more parameter-efficient representations, thus improving the accuracy and sensitivity of CNNs. The results on seven commonly used CNNs demonstrate that CNN performance can be consistently improved by applying the designed augmentation and SR techniques. In particular, DenseNet121 with SR achieves an average test accuracy of 99.44% in three trials for three-category classification, including normal, non-COVID-19 pneumonia, and COVID-19 pneumonia. The achieved precision, sensitivity, and specificity for the COVID-19 pneumonia category are 98.40%, 99.59%, and 99.50%, respectively. These statistics suggest that our method has surpassed the existing state-of-the-art methods on the COVIDx CT-2A dataset.

Time-Frequency Distributions of Heart Sound Signals: A Comparative Study using Convolutional Neural Networks

Aug 05, 2022

Abstract:Time-Frequency Distributions (TFDs) support heart sound characterisation and classification in early cardiac screening. However, despite the frequent use of TFDs in signal analysis, no study has comprehensively compared their performance in deep learning for automatic diagnosis. Furthermore, the combination of signal processing methods as inputs for Convolutional Neural Networks (CNNs) has proved to be a practical approach to increasing signal classification performance. Therefore, this study aimed to investigate the optimal use of single or combined TFDs as input for CNNs. The presented results revealed that: 1) The transformation of the heart sound signal into the TF domain achieves higher classification performance than using raw signals. Among the TFDs, the difference in performance was slight for all the CNN models (within $1.3\%$ in average accuracy). However, the continuous wavelet transform (CWT) and chirplet transform (CT) outperformed the rest. 2) An appropriate increase in CNN capacity and architecture optimisation can improve the performance, while the network architecture should not be overly complicated. Based on the ResNet or SEResNet family results, increasing the number of parameters and the depth of the structure does not noticeably improve the performance. 3) Combining TFDs as CNN inputs did not significantly improve the classification results. The findings of this study provide the knowledge for selecting TFDs as CNN input and designing CNN architecture for heart sound classification.
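As a small illustration of moving a 1-D signal into the time-frequency domain, the sketch below computes a short-time Fourier transform magnitude in plain Python. The STFT is used here only because it is simple to write; it is a stand-in and not necessarily one of the TFDs compared in the study. The frame length, hop size, sampling rate, and test tone are all assumptions.

```python
import cmath
import math

def stft_magnitude(signal, frame_len=64, hop=32):
    """Return a list of frames, each a list of magnitudes for bins 0..frame_len/2."""
    # Hann window reduces spectral leakage at the frame edges.
    window = [
        0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_len - 1))
        for n in range(frame_len)
    ]
    frames = []
    for start in range(0, len(signal) - frame_len + 1, hop):
        seg = [signal[start + n] * window[n] for n in range(frame_len)]
        spectrum = []
        for k in range(frame_len // 2 + 1):
            # Naive DFT of the windowed frame at frequency bin k.
            acc = sum(
                seg[n] * cmath.exp(-2j * math.pi * k * n / frame_len)
                for n in range(frame_len)
            )
            spectrum.append(abs(acc))
        frames.append(spectrum)
    return frames

# Synthetic 125 Hz tone sampled at 1000 Hz (a stand-in for a heart sound recording).
fs = 1000
tone = [math.sin(2 * math.pi * 125 * t / fs) for t in range(256)]
spec = stft_magnitude(tone)
peak_bins = [max(range(len(frame)), key=frame.__getitem__) for frame in spec]
```

The resulting 2-D array (frames by frequency bins) is the kind of image-like input a CNN can consume in place of the raw waveform.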

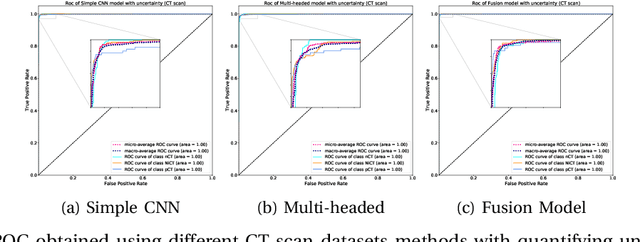

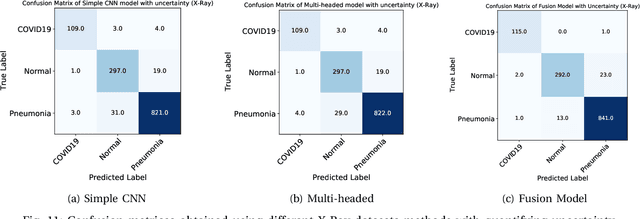

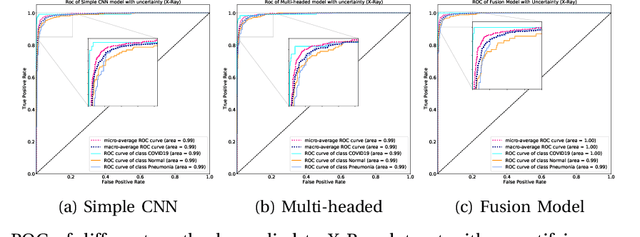

UncertaintyFuseNet: Robust Uncertainty-aware Hierarchical Feature Fusion with Ensemble Monte Carlo Dropout for COVID-19 Detection

May 22, 2021

Abstract:COVID-19 (coronavirus disease 2019) has infected more than 151 million people and caused approximately 3.17 million deaths around the world up to the present. The rapid spread of COVID-19 continues to threaten human life and health. Therefore, the development of computer-aided detection (CAD) systems based on machine and deep learning methods that can accurately differentiate COVID-19 from other diseases using chest computed tomography (CT) and X-ray datasets is essential and of immediate priority. Unlike most previous studies, which used either CT or X-ray images, we employed both data types with sufficient samples in implementation. Moreover, due to the extreme sensitivity of this pervasive virus, model uncertainty should be considered, while most previous studies have overlooked it. Therefore, we propose a novel powerful fusion model named $UncertaintyFuseNet$ that consists of an uncertainty module: Ensemble Monte Carlo (EMC) dropout. The obtained results prove the effectiveness of our proposed fusion for COVID-19 detection using CT scan and X-ray datasets. Also, our proposed $UncertaintyFuseNet$ model is significantly robust to noise and performs well with previously unseen data. The source codes and models of this study are available at: https://github.com/moloud1987/UncertaintyFuseNet-for-COVID-19-Classification.
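The uncertainty idea behind Monte Carlo dropout can be sketched in a few lines: keep dropout active at prediction time, run several stochastic forward passes, and read the spread of the outputs as an uncertainty estimate. The example below uses plain MC dropout on a toy linear model, not the paper's ensemble (EMC) variant or its network; the model, weights, and parameter names are hypothetical.

```python
import random
import statistics

def forward(x, weights, drop_p, rng):
    """One stochastic pass of a toy linear model with inverted dropout on inputs."""
    keep = 1.0 - drop_p
    total = 0.0
    for xi, wi in zip(x, weights):
        if rng.random() < keep:
            # Rescale kept units so the expected output matches the no-dropout case.
            total += xi * wi / keep
    return total

def mc_dropout_predict(x, weights, drop_p=0.5, passes=200, seed=0):
    """Return (mean prediction, variance across stochastic passes)."""
    rng = random.Random(seed)
    samples = [forward(x, weights, drop_p, rng) for _ in range(passes)]
    return statistics.fmean(samples), statistics.pvariance(samples)

x = [1.0, 2.0, 3.0]
w = [0.5, -0.25, 1.0]
mean_pred, var_pred = mc_dropout_predict(x, w)
det_mean, det_var = mc_dropout_predict(x, w, drop_p=0.0)
```

With `drop_p=0.0` every pass is identical, so the variance collapses to zero; with dropout enabled, a larger variance flags inputs on which the model is less certain.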

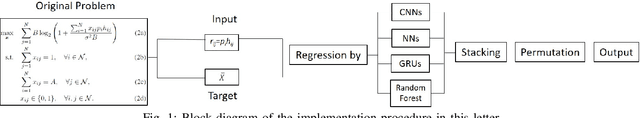

Channel Assignment in Uplink Wireless Communication using Machine Learning Approach

Jan 12, 2020

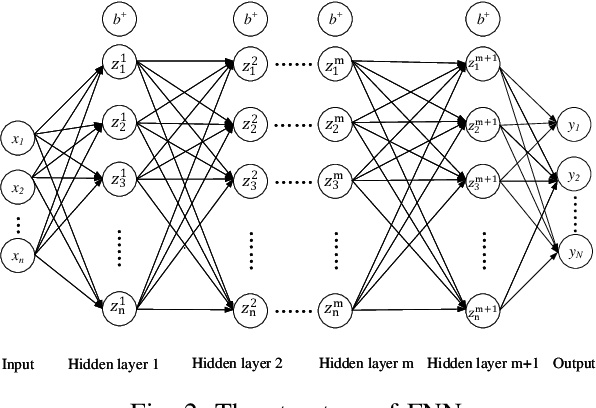

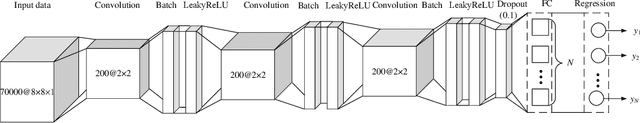

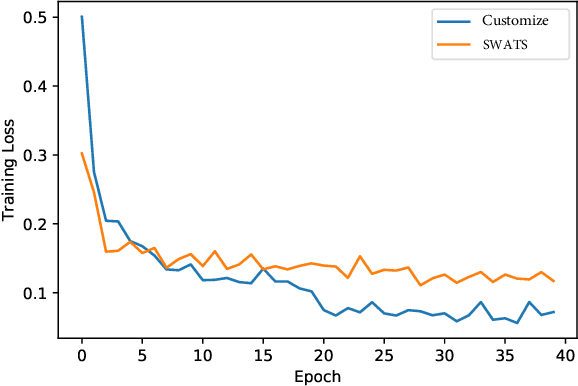

Abstract:This letter investigates a channel assignment problem in uplink wireless communication systems. Our goal is to maximize the sum rate of all users subject to integer channel assignment constraints. A convex-optimization-based algorithm is provided to obtain the optimal channel assignment, where a closed-form solution is obtained in each step. Because of the high computational complexity of the convex-optimization-based algorithm, machine learning approaches are employed to obtain computationally efficient solutions. More specifically, the data are generated by using the convex-optimization-based algorithm, and the original problem is converted to a regression problem, which is addressed by the integration of convolutional neural networks (CNNs), feed-forward neural networks (FNNs), random forest and gated recurrent unit networks (GRUs). The results demonstrate that the machine learning method largely reduces the computation time while slightly compromising prediction accuracy.
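For intuition about the underlying problem, the sketch below solves a tiny sum-rate maximization by exhaustive search. It is neither the letter's convex-optimization algorithm nor its learned regressor. It assumes each user gets exactly one distinct channel and that the per-user rate is log2(1 + SNR); both the constraint form and the SNR table are hypothetical.

```python
import itertools
import math

def best_assignment(snr):
    """Brute-force the user-to-channel permutation with the highest sum rate.

    snr[u][c] is the (assumed known) SNR of user u on channel c.
    """
    n = len(snr)
    best_perm, best_rate = None, -1.0
    for perm in itertools.permutations(range(n)):
        # perm[u] is the channel given to user u; each channel is used once.
        rate = sum(math.log2(1.0 + snr[u][perm[u]]) for u in range(n))
        if rate > best_rate:
            best_perm, best_rate = perm, rate
    return best_perm, best_rate

# Two users, two channels (made-up SNR values).
snr_table = [
    [3.0, 1.0],
    [1.0, 3.0],
]
assignment, sum_rate = best_assignment(snr_table)
```

Exhaustive search grows factorially with the number of users, which is the kind of cost that motivates replacing an exact solver with a fast learned predictor.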
