Frank Dellaert

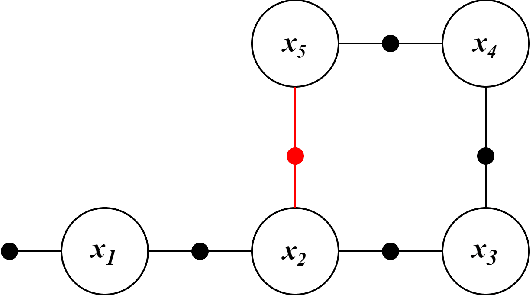

Variable Elimination in Hybrid Factor Graphs for Discrete-Continuous Inference & Estimation

Jan 02, 2026Abstract:Many hybrid problems in robotics involve both continuous and discrete components, and modeling them together for estimation tasks has been a long standing and difficult problem. Hybrid Factor Graphs give us a mathematical framework to model these types of problems, however existing approaches for solving them are based on approximations. In this work, we propose an efficient Hybrid Factor Graph framework alongwith a variable elimination algorithm to produce a hybrid Bayes network, which can then be used for exact Maximum A Posteriori estimation and marginalization over both sets of variables. Our approach first develops a novel hybrid Gaussian factor which can connect to both discrete and continuous variables, and a hybrid conditional which can represent multiple continuous hypotheses conditioned on the discrete variables. Using these representations, we derive the process of hybrid variable elimination under the Conditional Linear Gaussian scheme, giving us exact posteriors as hybrid Bayes network. To bound the number of discrete hypotheses, we use a tree-structured representation of the factors coupled with a simple pruning and probabilistic assignment scheme, which allows for tractable inference. We demonstrate the applicability of our framework on a SLAM dataset with ambiguous measurements, where discrete choices for the most likely measurement have to be made. Our demonstrated results showcase the accuracy, generality, and simplicity of our hybrid factor graph framework.

SALVe: Semantic Alignment Verification for Floorplan Reconstruction from Sparse Panoramas

Jun 27, 2024Abstract:We propose a new system for automatic 2D floorplan reconstruction that is enabled by SALVe, our novel pairwise learned alignment verifier. The inputs to our system are sparsely located 360$^\circ$ panoramas, whose semantic features (windows, doors, and openings) are inferred and used to hypothesize pairwise room adjacency or overlap. SALVe initializes a pose graph, which is subsequently optimized using GTSAM. Once the room poses are computed, room layouts are inferred using HorizonNet, and the floorplan is constructed by stitching the most confident layout boundaries. We validate our system qualitatively and quantitatively as well as through ablation studies, showing that it outperforms state-of-the-art SfM systems in completeness by over 200%, without sacrificing accuracy. Our results point to the significance of our work: poses of 81% of panoramas are localized in the first 2 connected components (CCs), and 89% in the first 3 CCs. Code and models are publicly available at https://github.com/zillow/salve.

Neural Visibility Field for Uncertainty-Driven Active Mapping

Jun 11, 2024Abstract:This paper presents Neural Visibility Field (NVF), a novel uncertainty quantification method for Neural Radiance Fields (NeRF) applied to active mapping. Our key insight is that regions not visible in the training views lead to inherently unreliable color predictions by NeRF at this region, resulting in increased uncertainty in the synthesized views. To address this, we propose to use Bayesian Networks to composite position-based field uncertainty into ray-based uncertainty in camera observations. Consequently, NVF naturally assigns higher uncertainty to unobserved regions, aiding robots to select the most informative next viewpoints. Extensive evaluations show that NVF excels not only in uncertainty quantification but also in scene reconstruction for active mapping, outperforming existing methods.

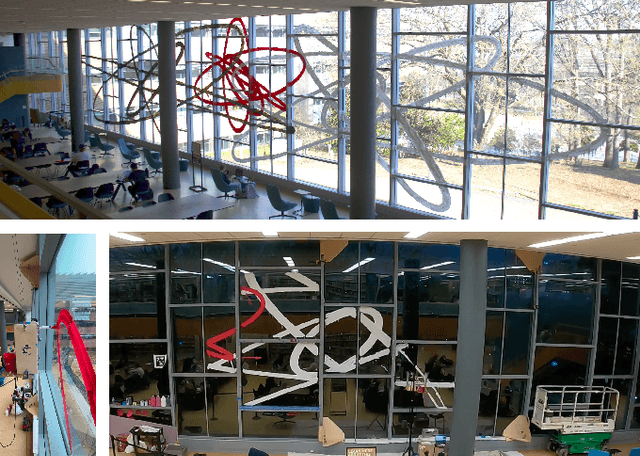

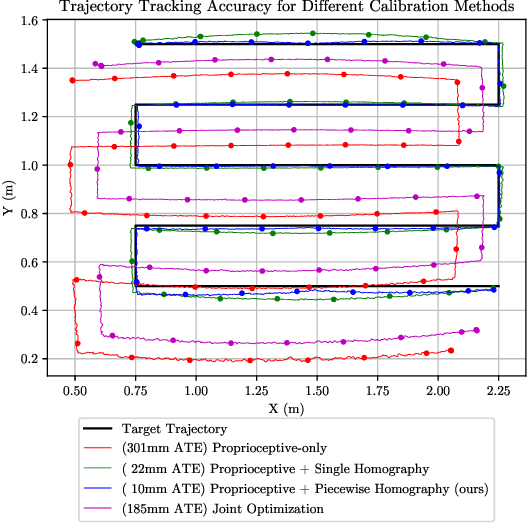

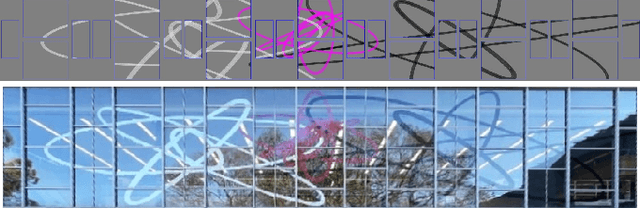

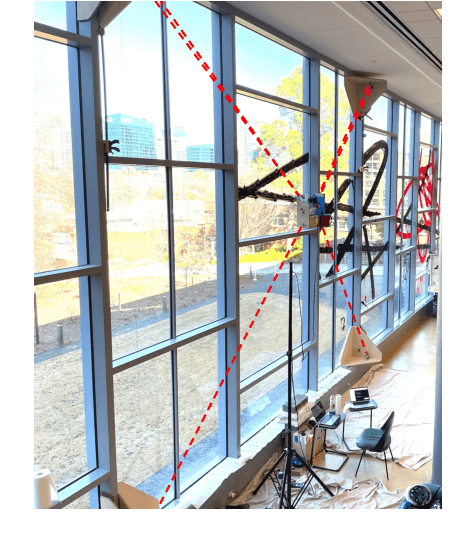

Architectural-Scale Artistic Brush Painting with a Hybrid Cable Robot

Mar 18, 2024

Abstract:Robot art presents an opportunity to both showcase and advance state-of-the-art robotics through the challenging task of creating art. Creating large-scale artworks in particular engages the public in a way that small-scale works cannot, and the distinct qualities of brush strokes contribute to an organic and human-like quality. Combining the large scale of murals with the strokes of the brush medium presents an especially impactful result, but also introduces unique challenges in maintaining precise, dextrous motion control of the brush across such a large workspace. In this work, we present the first robot to our knowledge that can paint architectural-scale murals with a brush. We create a hybrid robot consisting of a cable-driven parallel robot and 4 degree of freedom (DoF) serial manipulator to paint a 27m by 3.7m mural on windows spanning 2-stories of a building. We discuss our approach to achieving both the scale and accuracy required for brush-painting a mural through a combination of novel mechanical design elements, coordinated planning and control, and on-site calibration algorithms with experimental validations.

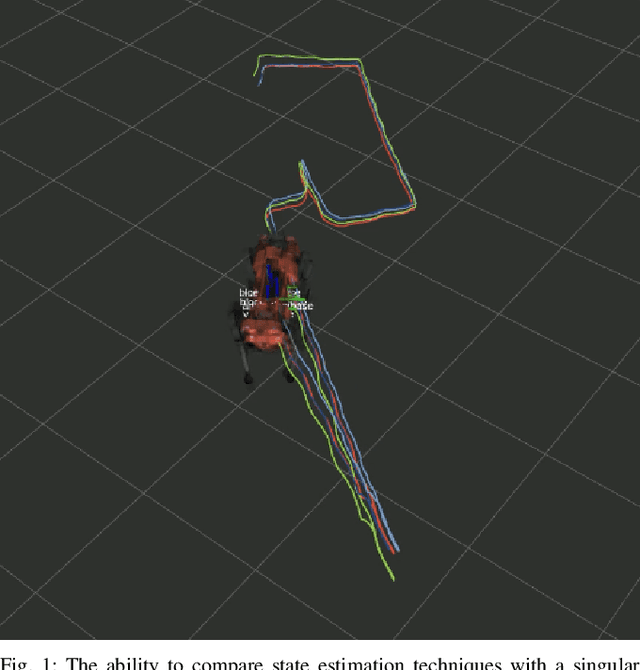

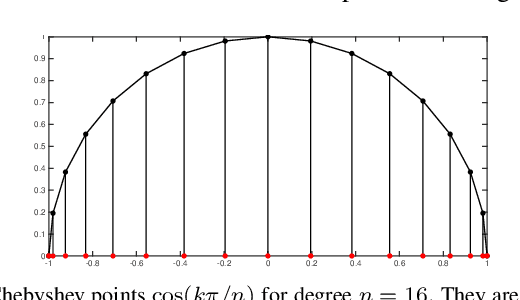

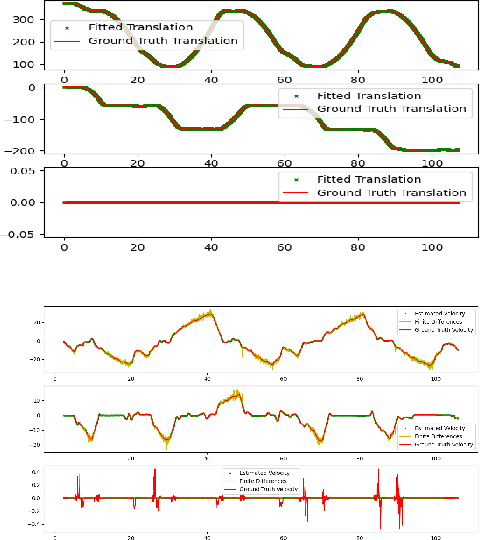

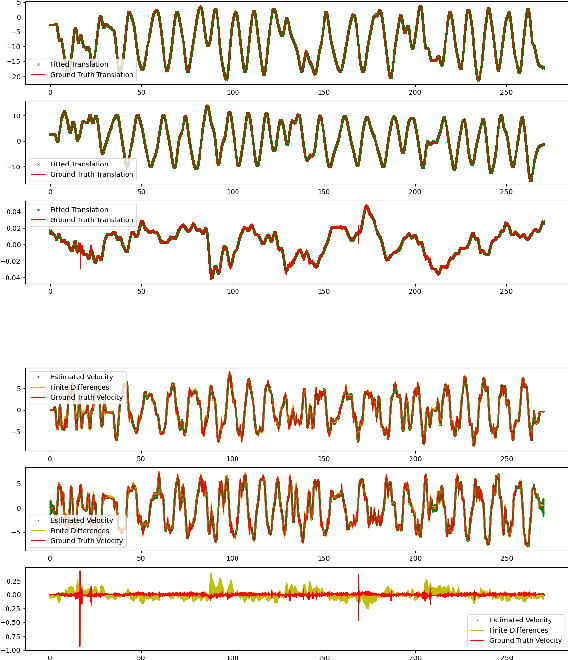

A Group Theoretic Metric for Robot State Estimation Leveraging Chebyshev Interpolation

Jan 30, 2024

Abstract:We propose a new metric for robot state estimation based on the recently introduced $\text{SE}_2(3)$ Lie group definition. Our metric is related to prior metrics for SLAM but explicitly takes into account the linear velocity of the state estimate, improving over current pose-based trajectory analysis. This has the benefit of providing a single, quantitative metric to evaluate state estimation algorithms against, while being compatible with existing tools and libraries. Since ground truth data generally consists of pose data from motion capture systems, we also propose an approach to compute the ground truth linear velocity based on polynomial interpolation. Using Chebyshev interpolation and a pseudospectral parameterization, we can accurately estimate the ground truth linear velocity of the trajectory in an optimal fashion with best approximation error. We demonstrate how this approach performs on multiple robotic platforms where accurate state estimation is vital, and compare it to alternative approaches such as finite differences. The pseudospectral parameterization also provides a means of trajectory data compression as an additional benefit. Experimental results show our method provides a valid and accurate means of comparing state estimation systems, which is also easy to interpret and report.

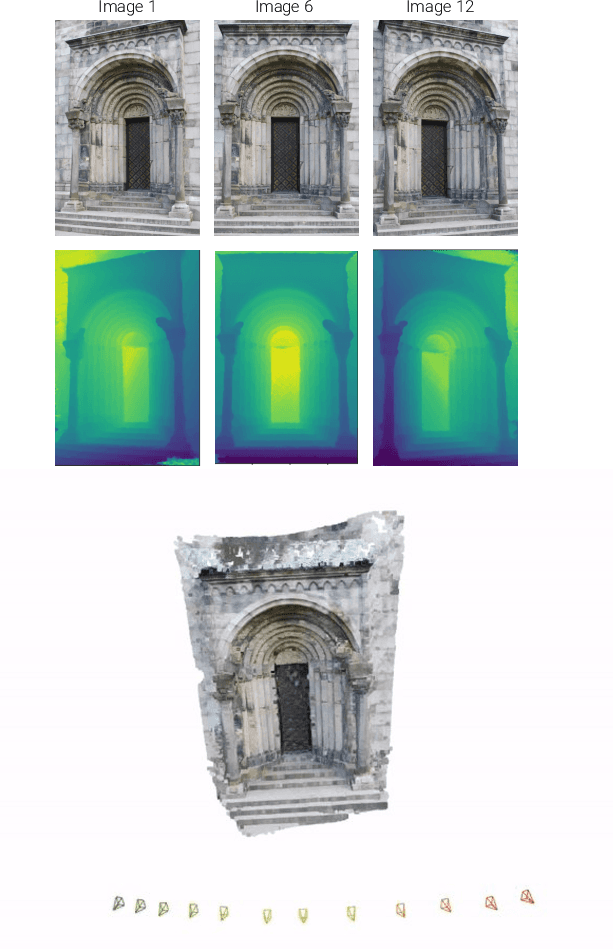

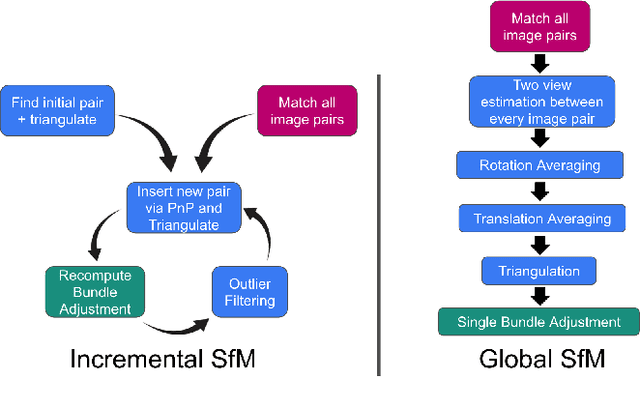

Distributed Global Structure-from-Motion with a Deep Front-End

Nov 30, 2023Abstract:While initial approaches to Structure-from-Motion (SfM) revolved around both global and incremental methods, most recent applications rely on incremental systems to estimate camera poses due to their superior robustness. Though there has been tremendous progress in SfM `front-ends' powered by deep models learned from data, the state-of-the-art (incremental) SfM pipelines still rely on classical SIFT features, developed in 2004. In this work, we investigate whether leveraging the developments in feature extraction and matching helps global SfM perform on par with the SOTA incremental SfM approach (COLMAP). To do so, we design a modular SfM framework that allows us to easily combine developments in different stages of the SfM pipeline. Our experiments show that while developments in deep-learning based two-view correspondence estimation do translate to improvements in point density for scenes reconstructed with global SfM, none of them outperform SIFT when comparing with incremental SfM results on a range of datasets. Our SfM system is designed from the ground up to leverage distributed computation, enabling us to parallelize computation on multiple machines and scale to large scenes.

Generalizing Trajectory Retiming to Quadratic Objective Functions

Sep 18, 2023Abstract:Trajectory retiming is the task of computing a feasible time parameterization to traverse a path. It is commonly used in the decoupled approach to trajectory optimization whereby a path is first found, then a retiming algorithm computes a speed profile that satisfies kino-dynamic and other constraints. While trajectory retiming is most often formulated with the minimum-time objective (i.e. traverse the path as fast as possible), it is not always the most desirable objective, particularly when we seek to balance multiple objectives or when bang-bang control is unsuitable. In this paper, we present a novel algorithm based on factor graph variable elimination that can solve for the global optimum of the retiming problem with quadratic objectives as well (e.g. minimize control effort or match a nominal speed by minimizing squared error), which may extend to arbitrary objectives with iteration. Our work extends prior works, which find only solutions on the boundary of the feasible region, while maintaining the same linear time complexity from a single forward-backward pass. We experimentally demonstrate that (1) we achieve better real-world robot performance by using quadratic objectives in place of the minimum-time objective, and (2) our implementation is comparable or faster than state-of-the-art retiming algorithms.

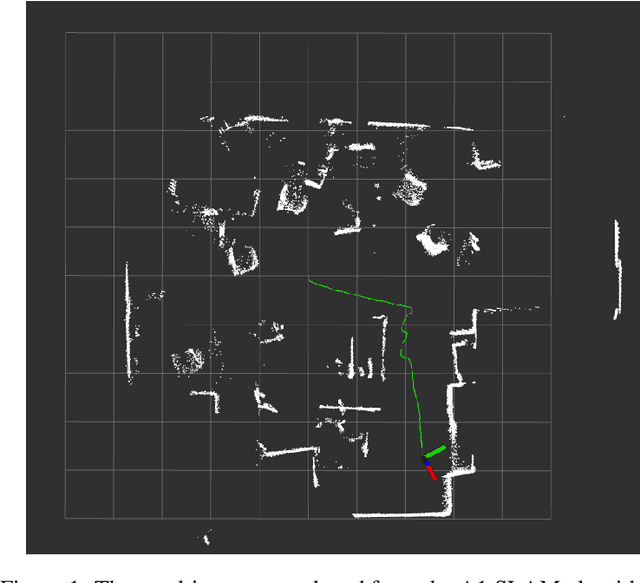

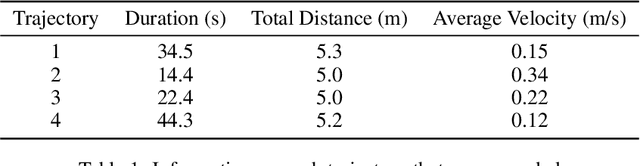

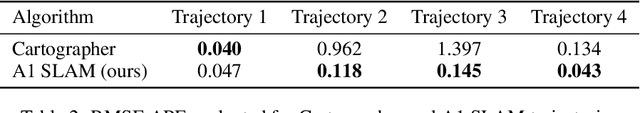

A1 SLAM: Quadruped SLAM using the A1's Onboard Sensors

Nov 26, 2022

Abstract:Quadrupeds are robots that have been of interest in the past few years due to their versatility in navigating across various terrain and utility in several applications. For quadrupeds to navigate without a predefined map a priori, they must rely on SLAM approaches to localize and build the map of the environment. Despite the surge of interest and research development in SLAM and quadrupeds, there still has yet to be an open-source package that capitalizes on the onboard sensors of an affordable quadruped. This motivates the A1 SLAM package, which is an open-source ROS package that provides the Unitree A1 quadruped with real-time, high performing SLAM capabilities using the default sensors shipped with the robot. A1 SLAM solves the PoseSLAM problem using the factor graph paradigm to optimize for the poses throughout the trajectory. A major design feature of the algorithm is using a sliding window of fully connected LiDAR odometry factors. A1 SLAM has been benchmarked against Google's Cartographer and has showed superior performance especially with trajectories experiencing aggressive motion.

Deep IMU Bias Inference for Robust Visual-Inertial Odometry with Factor Graphs

Nov 08, 2022

Abstract:Visual Inertial Odometry (VIO) is one of the most established state estimation methods for mobile platforms. However, when visual tracking fails, VIO algorithms quickly diverge due to rapid error accumulation during inertial data integration. This error is typically modeled as a combination of additive Gaussian noise and a slowly changing bias which evolves as a random walk. In this work, we propose to train a neural network to learn the true bias evolution. We implement and compare two common sequential deep learning architectures: LSTMs and Transformers. Our approach follows from recent learning-based inertial estimators, but, instead of learning a motion model, we target IMU bias explicitly, which allows us to generalize to locomotion patterns unseen in training. We show that our proposed method improves state estimation in visually challenging situations across a wide range of motions by quadrupedal robots, walking humans, and drones. Our experiments show an average 15% reduction in drift rate, with much larger reductions when there is total vision failure. Importantly, we also demonstrate that models trained with one locomotion pattern (human walking) can be applied to another (quadruped robot trotting) without retraining.

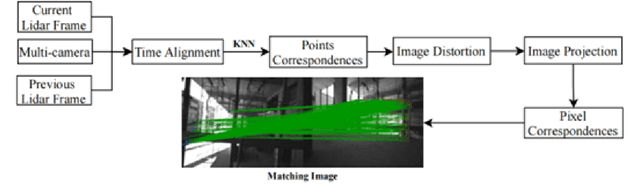

FAST-LIO, Then Bayesian ICP, Then GTSFM

Oct 05, 2022

Abstract:For the Hilti Challenge 2022, we created two systems, one building upon the other. The first system is FL2BIPS which utilizes the iEKF algorithm FAST-LIO2 and Bayesian ICP PoseSLAM, whereas the second system is GTSFM, a structure from motion pipeline with factor graph backend optimization powered by GTSAM

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge