Fei Hou

SharpNet: Enhancing MLPs to Represent Functions with Controlled Non-differentiability

Jan 27, 2026

Abstract:Multi-layer perceptrons (MLPs) are a standard tool for learning and function approximation, but they inherently yield outputs that are globally smooth. As a result, they struggle to represent functions that are continuous yet deliberately non-differentiable (i.e., with prescribed $C^0$ sharp features) without relying on ad hoc post-processing. We present SharpNet, a modified MLP architecture capable of encoding functions with user-defined sharp features by enriching the network with an auxiliary feature function, which is defined as the solution to a Poisson equation with jump Neumann boundary conditions. It is evaluated via an efficient local integral that is fully differentiable with respect to the feature locations, enabling our method to jointly optimize both the feature locations and the MLP parameters to recover the target functions/models. The $C^0$-continuity of SharpNet is precisely controllable, ensuring $C^0$-continuity at the feature locations and smoothness elsewhere. We validate SharpNet on 2D problems and 3D CAD model reconstruction, and compare it against several state-of-the-art baselines. In both types of tasks, SharpNet accurately recovers sharp edges and corners while maintaining smooth behavior away from those features, whereas existing methods tend to smooth out gradient discontinuities. Both qualitative and quantitative evaluations highlight the benefits of our approach.
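The idea of enriching an MLP with a feature function that is only $C^0$ at a chosen location can be illustrated with a toy 1D sketch. Everything here is an assumption made for illustration: the stand-in feature `|x - c|` (not the Poisson-based feature function from the abstract), the hand-picked weights, and the helper names `feature`, `net`, and `one_sided_slopes`. It only shows that a smooth network fed such a feature has a slope jump at `c` and is smooth elsewhere.

```python
import numpy as np

# Hand-picked weights for a tiny tanh network with inputs (x, g(x)).
# These values are hypothetical and chosen only to keep the example deterministic.
W1 = np.array([[1.0, -0.5],
               [0.5, 1.0]])   # rows: inputs (x, g); columns: hidden units
b1 = np.array([0.1, -0.2])
W2 = np.array([1.0, 1.0])

def feature(x, c):
    """Stand-in C^0 feature: smooth everywhere except a kink at x = c."""
    return np.abs(x - c)

def net(x, c):
    """Smooth MLP evaluated on the enriched input (x, feature(x, c))."""
    inp = np.stack([x, feature(x, c)], axis=-1)
    return np.tanh(inp @ W1 + b1) @ W2

def one_sided_slopes(x0, c, h=1e-5):
    """Finite-difference slopes just left and just right of x0."""
    x = np.array([x0 - h, x0, x0 + h])
    y = net(x, c)
    return (y[1] - y[0]) / h, (y[2] - y[1]) / h

left, right = one_sided_slopes(0.3, c=0.3)      # at the feature location
print("slope jump at c:", right - left)
left, right = one_sided_slopes(0.7, c=0.3)      # away from the feature
print("slope jump away from c:", right - left)
```

Because the kink enters only through the extra input, moving `c` moves the non-differentiable point without touching the network weights.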

Voronoi-Assisted Diffusion for Computing Unsigned Distance Fields from Unoriented Points

Oct 14, 2025

Abstract:Unsigned Distance Fields (UDFs) provide a flexible representation for 3D shapes with arbitrary topology, including open and closed surfaces, orientable and non-orientable geometries, and non-manifold structures. While recent neural approaches have shown promise in learning UDFs, they often suffer from numerical instability, high computational cost, and limited controllability. We present a lightweight, network-free method, Voronoi-Assisted Diffusion (VAD), for computing UDFs directly from unoriented point clouds. Our approach begins by assigning bi-directional normals to input points, guided by two Voronoi-based geometric criteria encoded in an energy function for optimal alignment. The aligned normals are then diffused to form an approximate UDF gradient field, which is subsequently integrated to recover the final UDF. Experiments demonstrate that VAD robustly handles watertight and open surfaces, as well as complex non-manifold and non-orientable geometries, while remaining computationally efficient and stable.

A Divide-and-Conquer Approach for Global Orientation of Non-Watertight Scene-Level Point Clouds Using 0-1 Integer Optimization

May 29, 2025

Abstract:Orienting point clouds is a fundamental problem in computer graphics and 3D vision, with applications in reconstruction, segmentation, and analysis. While significant progress has been made, existing approaches mainly focus on watertight, object-level 3D models. The orientation of large-scale, non-watertight 3D scenes remains an underexplored challenge. To address this gap, we propose DACPO (Divide-And-Conquer Point Orientation), a novel framework that leverages a divide-and-conquer strategy for scalable and robust point cloud orientation. Rather than attempting to orient an unbounded scene at once, DACPO segments the input point cloud into smaller, manageable blocks, processes each block independently, and integrates the results through a global optimization stage. For each block, we introduce a two-step process: estimating initial normal orientations by a randomized greedy method and refining them by an adapted iterative Poisson surface reconstruction. To achieve consistency across blocks, we model inter-block relationships using an undirected graph, where nodes represent blocks and edges connect spatially adjacent blocks. To reliably evaluate orientation consistency between adjacent blocks, we introduce the concept of the visible connected region, which defines the region over which visibility-based assessments are performed. The global integration is then formulated as a 0-1 integer-constrained optimization problem, with block flip states as binary variables. Despite the combinatorial nature of the problem, DACPO remains scalable by limiting the number of blocks (typically a few hundred for 3D scenes) involved in the optimization. Experiments on benchmark datasets demonstrate DACPO's strong performance, particularly in challenging large-scale, non-watertight scenarios where existing methods often fail. The source code is available at https://github.com/zd-lee/DACPO.
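The global integration step, with one binary flip variable per block and edges between adjacent blocks, can be sketched on a toy graph. This is a minimal illustration under stated assumptions, not the DACPO solver: the function name `best_flips`, the `+1`/`-1` edge labels (standing in for the visibility-based consistency assessment), and the exhaustive search are all hypothetical, and brute force is only viable for a handful of blocks.

```python
from itertools import product

def best_flips(num_blocks, edges):
    """Choose a 0/1 flip state per block that violates the fewest edges.

    edges: list of (i, j, agree), where agree is +1 if blocks i and j
    currently look consistently oriented and -1 if they look opposite.
    Block 0 is pinned to "not flipped" to remove the global sign ambiguity.
    """
    best, best_cost = None, None
    for rest in product((0, 1), repeat=num_blocks - 1):
        flips = (0,) + rest
        cost = 0
        for i, j, agree in edges:
            same_flip = flips[i] == flips[j]
            # An edge is satisfied when agreeing blocks share a flip state
            # and disagreeing blocks take different flip states.
            if (agree == 1) != same_flip:
                cost += 1
        if best_cost is None or cost < best_cost:
            best, best_cost = flips, cost
    return best, best_cost

# Four blocks in a ring: blocks 2 and 3 are oriented opposite to blocks 0 and 1.
edges = [(0, 1, 1), (1, 2, -1), (2, 3, 1), (0, 3, -1)]
flips, cost = best_flips(4, edges)
print(flips, cost)  # (0, 0, 1, 1) 0
```

For the few hundred blocks mentioned in the abstract, an integer programming solver would replace the exhaustive loop; the objective structure stays the same.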

GeoMaNO: Geometric Mamba Neural Operator for Partial Differential Equations

May 17, 2025

Abstract:The neural operator (NO) framework has emerged as a powerful tool for solving partial differential equations (PDEs). Recent NOs are dominated by the Transformer architecture, which offers NOs the capability to capture long-range dependencies in PDE dynamics. However, existing Transformer-based NOs suffer from quadratic complexity, lack geometric rigor, and thus suffer from sub-optimal performance on regular grids. As a remedy, we propose the Geometric Mamba Neural Operator (GeoMaNO) framework, which empowers NOs with Mamba's modeling capability, linear complexity, plus geometric rigor. We evaluate GeoMaNO's performance on multiple standard and popularly employed PDE benchmarks, spanning from Darcy flow problems to Navier-Stokes problems. GeoMaNO improves existing baselines in solution operator approximation by as much as 58.9%.

From Transparent to Opaque: Rethinking Neural Implicit Surfaces with $\alpha$-NeuS

Nov 08, 2024

Abstract:Traditional 3D shape reconstruction techniques from multi-view images, such as structure from motion and multi-view stereo, primarily focus on opaque surfaces. Similarly, recent advances in neural radiance fields and their variants also primarily address opaque objects, encountering difficulties with the complex lighting effects caused by transparent materials. This paper introduces $\alpha$-NeuS, a new method for simultaneously reconstructing thin transparent objects and opaque objects based on neural implicit surfaces (NeuS). Our method leverages the observation that transparent surfaces induce local extreme values in the learned distance fields during neural volumetric rendering, contrasting with opaque surfaces that align with zero level sets. Traditional iso-surfacing algorithms such as marching cubes, which rely on fixed iso-values, are ill-suited for this data. We address this by taking the absolute value of the distance field and developing an optimization method that extracts level sets corresponding to both non-negative local minima and zero iso-values. We prove that the reconstructed surfaces are unbiased for both transparent and opaque objects. To validate our approach, we construct a benchmark that includes both real-world and synthetic scenes, demonstrating its practical utility and effectiveness. Our data and code are publicly available at https://github.com/728388808/alpha-NeuS.
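The observation that opaque surfaces sit at zero crossings while transparent ones sit at positive local minima of the distance field can be illustrated in 1D. This is a hedged sketch, not the optimization method from the abstract: the synthetic field, the grid scan, the tolerance `tol`, and the name `surface_candidates` are assumptions chosen for illustration.

```python
import numpy as np

def surface_candidates(x, f, tol=1e-6):
    """Scan a sampled 1D distance field for local minima of |f|.

    Returns (position, kind) pairs, where kind is "zero" when |f| is
    numerically zero there and "local-min" when it is a positive minimum.
    """
    a = np.abs(f)
    hits = []
    for k in range(1, len(x) - 1):
        if a[k] < a[k - 1] and a[k] <= a[k + 1]:
            kind = "zero" if a[k] < tol else "local-min"
            hits.append((float(x[k]), kind))
    return hits

# Synthetic field: a sign change at x = 1 (opaque-like surface) and a
# positive dip of 0.2 at x = 3 (thin transparent-like surface).
x = np.linspace(0.0, 4.0, 401)
f = np.minimum(x - 1.0, np.abs(x - 3.0) + 0.2)

for pos, kind in surface_candidates(x, f):
    print(f"{pos:.2f} {kind}")
```

A fixed iso-value of zero would find only the first candidate, which is why taking the absolute value and looking for non-negative minima recovers both.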

Quasi-Medial Distance Field (Q-MDF): A Robust Method for Approximating and Discretizing Neural Medial Axis

Oct 23, 2024

Abstract:The medial axis, a lower-dimensional shape descriptor, plays an important role in the field of digital geometry processing. Despite its importance, robust computation of the medial axis transform from diverse inputs, especially point clouds with defects, remains a significant challenge. In this paper, we tackle the challenge by proposing a new implicit method that diverges from mainstream explicit medial axis computation techniques. Our key technical insight is that the difference between the signed distance field (SDF) and the medial field (MF) of a solid shape is the unsigned distance field (UDF) of the shape's medial axis. This allows for formulating medial axis computation as an implicit reconstruction problem. Utilizing a modified double covering method, we extract the medial axis as the zero level-set of the UDF. Extensive experiments show that our method has enhanced accuracy and robustness in learning a compact medial axis transform from thorny meshes and point clouds compared to existing methods.

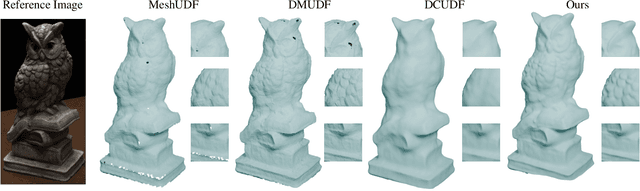

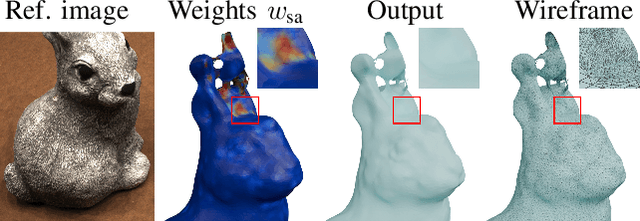

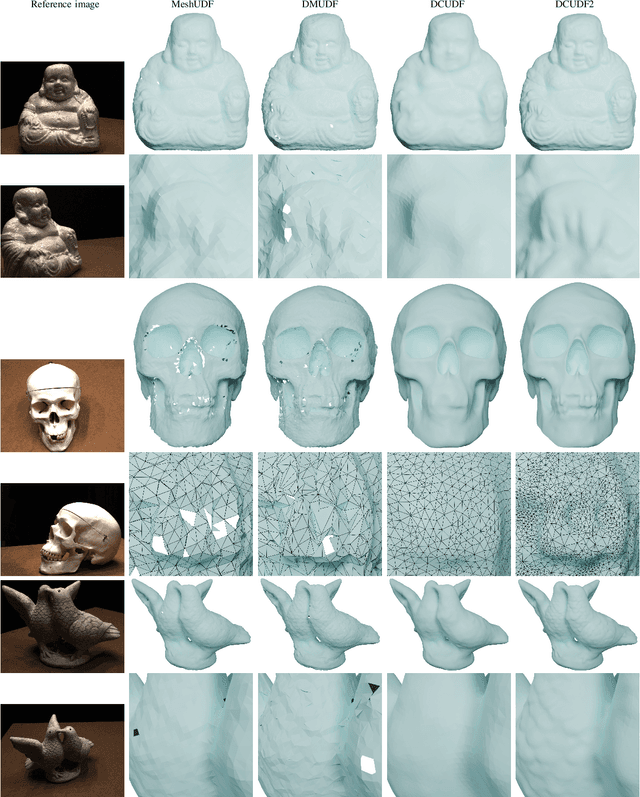

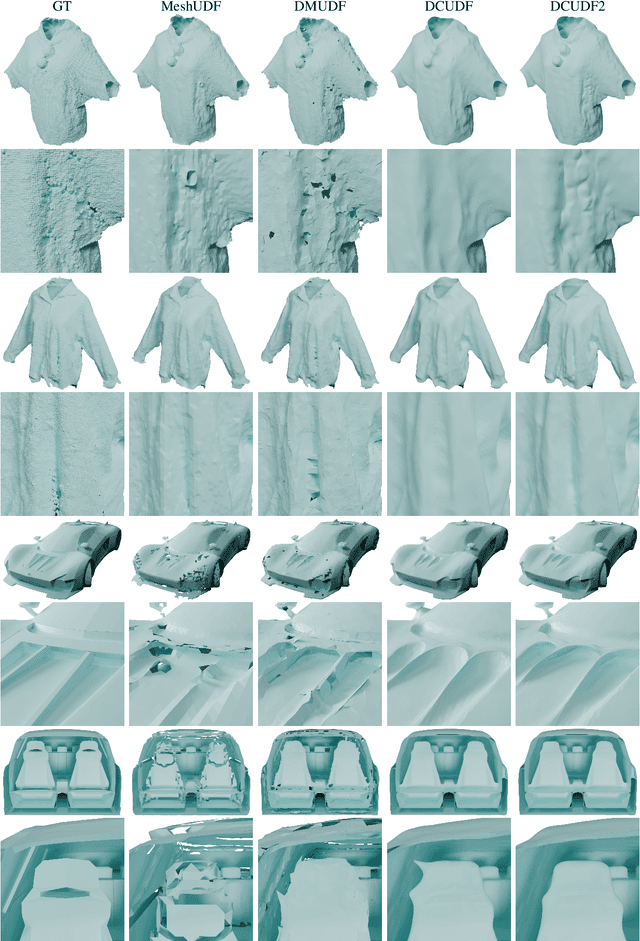

DCUDF2: Improving Efficiency and Accuracy in Extracting Zero Level Sets from Unsigned Distance Fields

Aug 30, 2024

Abstract:Unsigned distance fields (UDFs) allow for the representation of models with complex topologies, but extracting accurate zero level sets from these fields poses significant challenges, particularly in preserving topological accuracy and capturing fine geometric details. To overcome these issues, we introduce DCUDF2, an enhancement over DCUDF--the current state-of-the-art method--for extracting zero level sets from UDFs. Our approach utilizes an accuracy-aware loss function, enhanced with self-adaptive weights, to improve geometric quality significantly. We also propose a topology correction strategy that reduces the dependence on hyperparameters, increasing the robustness of our method. Furthermore, we develop new operations leveraging self-adaptive weights to boost runtime efficiency. Extensive experiments on surface extraction across diverse datasets demonstrate that DCUDF2 outperforms DCUDF and existing methods in both geometric fidelity and topological accuracy. We will make the source code publicly available.

UGrid: An Efficient-And-Rigorous Neural Multigrid Solver for Linear PDEs

Aug 09, 2024

Abstract:Numerical solvers of Partial Differential Equations (PDEs) are of fundamental significance to science and engineering. To date, the historical reliance on legacy techniques has circumscribed possible integration of big data knowledge and exhibits sub-optimal efficiency for certain PDE formulations, while data-driven neural methods typically lack mathematical guarantees of convergence and correctness. This paper articulates a mathematically rigorous neural solver for linear PDEs. The proposed UGrid solver, built upon the principled integration of U-Net and MultiGrid, comes with a mathematically rigorous proof of both convergence and correctness, and showcases high numerical accuracy, as well as strong generalization power to various input geometry/values and multiple PDE formulations. In addition, we devise a new residual loss metric, which enables unsupervised training and affords more stability and a larger solution space over the legacy losses.

Learning Unsigned Distance Fields from Local Shape Functions for 3D Surface Reconstruction

Jul 01, 2024

Abstract:Unsigned distance fields (UDFs) provide a versatile framework for representing a diverse array of 3D shapes, encompassing both watertight and non-watertight geometries. Traditional UDF learning methods typically require extensive training on large datasets of 3D shapes, which is costly and often necessitates hyperparameter adjustments for new datasets. This paper presents a novel neural framework, LoSF-UDF, for reconstructing surfaces from 3D point clouds by leveraging local shape functions to learn UDFs. We observe that 3D shapes manifest simple patterns within localized areas, prompting us to create a training dataset of point cloud patches characterized by mathematical functions that represent a continuum from smooth surfaces to sharp edges and corners. Our approach learns features within a specific radius around each query point and utilizes an attention mechanism to focus on the crucial features for UDF estimation. This method enables efficient and robust surface reconstruction from point clouds without the need for shape-specific training. Additionally, our method exhibits enhanced resilience to noise and outliers in point clouds compared to existing methods. We present comprehensive experiments and comparisons across various datasets, including synthetic and real-scanned point clouds, to validate our method's efficacy.

GS-Octree: Octree-based 3D Gaussian Splatting for Robust Object-level 3D Reconstruction Under Strong Lighting

Jun 26, 2024

Abstract:The 3D Gaussian Splatting technique has significantly advanced the construction of radiance fields from multi-view images, enabling real-time rendering. While point-based rasterization effectively reduces computational demands for rendering, it often struggles to accurately reconstruct the geometry of the target object, especially under strong lighting. To address this challenge, we introduce a novel approach that combines octree-based implicit surface representations with Gaussian splatting. Our method consists of four stages. Initially, it reconstructs a signed distance field (SDF) and a radiance field through volume rendering, encoding them in a low-resolution octree. The initial SDF represents the coarse geometry of the target object. Subsequently, it introduces 3D Gaussians as additional degrees of freedom, which are guided by the SDF. In the third stage, the optimized Gaussians further improve the accuracy of the SDF, allowing it to recover finer geometric details compared to the initial SDF obtained in the first stage. Finally, it adopts the refined SDF to further optimize the 3D Gaussians via splatting, eliminating those that contribute little to visual appearance. Experimental results show that our method, which leverages the distribution of 3D Gaussians with SDFs, reconstructs more accurate geometry, particularly in images with specular highlights caused by strong lighting.
