Amr Mohamed

Markovian Generation Chains in Large Language Models

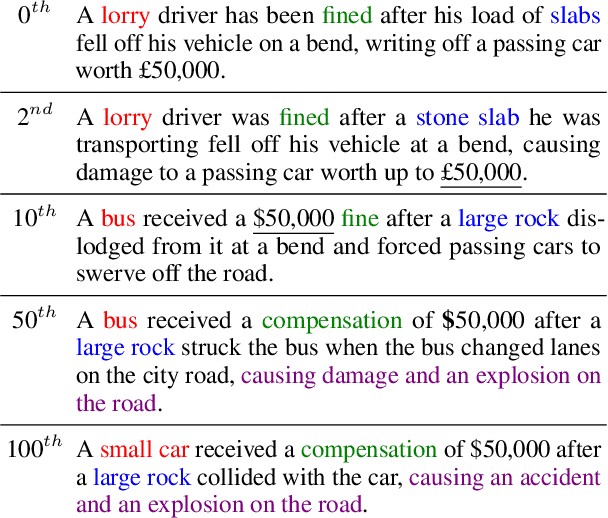

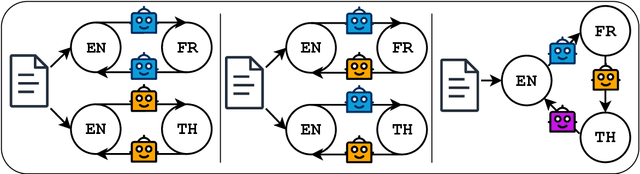

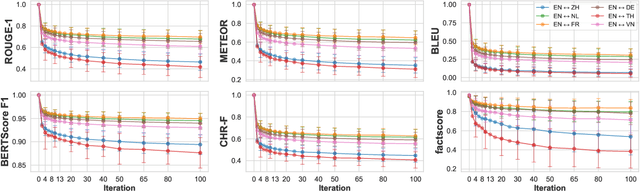

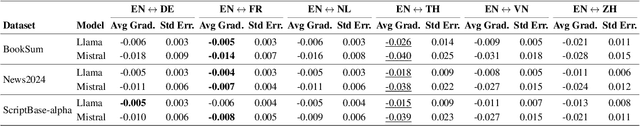

Mar 11, 2026

Abstract: The widespread use of large language models (LLMs) raises an important question: how do texts evolve when they are repeatedly processed by LLMs? In this paper, we define this iterative inference process as Markovian generation chains, where each step takes a specific prompt template and the previous output as input, without carrying over any memory of earlier steps. In iterative rephrasing and round-trip translation experiments, the output either converges to a small recurrent set or continues to produce novel sentences over a finite horizon. Through sentence-level Markov chain modeling and analysis of simulated data, we show that the iterative process can either increase or reduce sentence diversity, depending on factors such as the temperature parameter and the initial input sentence. These results offer insights into the dynamics of iterative LLM inference and their implications for multi-agent LLM systems.
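
The sentence-level Markov chain view can be illustrated with a small sketch. It assumes a hypothetical transition table `P_EXAMPLE`, where `P[s][s2]` is the probability that one memoryless rephrasing step maps sentence `s` to sentence `s2`. The states and probabilities are made up for illustration and are not data from the paper. Propagating the distribution step by step shows probability mass settling on a small recurrent set. Shannon entropy is used here as one possible diversity measure, which is an assumption rather than the paper's metric.

```python
import math
from collections import defaultdict

# Hypothetical sentence-level transition table: P[s][s2] is the probability that
# one memoryless generation step maps sentence s to sentence s2 (illustrative only).
Transitions = dict[str, dict[str, float]]

P_EXAMPLE: Transitions = {
    "A": {"B": 1.0},
    "B": {"C": 0.5, "D": 0.5},
    "C": {"D": 1.0},
    "D": {"C": 1.0},
}


def step(dist: dict[str, float], P: Transitions) -> dict[str, float]:
    """One step of the chain: p_{t+1}(s2) = sum_s p_t(s) * P[s][s2]."""
    nxt: dict[str, float] = defaultdict(float)
    for s, p in dist.items():
        for s2, q in P[s].items():
            nxt[s2] += p * q
    return dict(nxt)


def entropy_bits(dist: dict[str, float]) -> float:
    """Shannon entropy of a sentence distribution, used here as a diversity proxy."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)


def run_chain(start: str, P: Transitions, steps: int) -> list[dict[str, float]]:
    """Return the sentence distribution at every step, starting from one input."""
    dist = {start: 1.0}
    history = [dist]
    for _ in range(steps):
        dist = step(dist, P)
        history.append(dist)
    return history
```

Starting from `"A"`, the mass moves to `"B"` and then alternates between `"C"` and `"D"`, so `run_chain("A", P_EXAMPLE, 6)` ends in the two-sentence recurrent set {C, D} with 1 bit of entropy.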

IntAgent: NWDAF-Based Intent LLM Agent Towards Advanced Next Generation Networks

Jan 19, 2026

Abstract: Intent-based networks (IBNs) are gaining prominence as an innovative technology that automates network operations through high-level request statements that define what the network should achieve. In this work, we introduce IntAgent, an intelligent intent LLM agent that integrates NWDAF (Network Data Analytics Function) analytics and tools to fulfill the network operator's intents. Unlike previous approaches, we develop an intent tools engine directly within the NWDAF analytics engine, allowing our agent to use live network analytics to inform its reasoning and tool selection. We offer an enriched, 3GPP-compliant data source that enhances the dynamic, context-aware fulfillment of network operator goals, along with an MCP tools server for scheduling, monitoring, and analytics tools. We demonstrate the efficacy of our framework through two practical use cases, ML-based traffic prediction and scheduled policy enforcement, which validate IntAgent's ability to autonomously fulfill complex network intents.

Beyond Random Sampling: Efficient Language Model Pretraining via Curriculum Learning

Jun 12, 2025

Abstract: Curriculum learning has shown promise in improving training efficiency and generalization in various machine learning domains, yet its potential in pretraining language models remains underexplored; our work is the first systematic investigation in this area. We experimented with different settings, including vanilla curriculum learning, pacing-based sampling, and interleaved curricula, guided by six difficulty metrics spanning linguistic and information-theoretic perspectives. We train models under these settings and evaluate their performance on eight diverse benchmarks. Our experiments reveal that curriculum learning consistently improves convergence in early and mid-training phases, and can yield lasting gains of up to 3.5% when used as a warmup strategy. Notably, we identify compression ratio, lexical diversity, and readability as effective difficulty signals across settings. Our findings highlight the importance of data ordering in large-scale pretraining and provide actionable insights for scalable, data-efficient model development under realistic training scenarios.
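
Two of the difficulty signals named above, compression ratio and lexical diversity, are cheap to compute per sample. The sketch below is an illustration under stated assumptions, not the paper's implementation: it defines compression ratio as raw bytes divided by zlib-compressed bytes, lexical diversity as the type-token ratio over word tokens, and treats more compressible or less lexically diverse text as "easier" when ordering samples easy-to-hard for a vanilla curriculum. The function names are hypothetical.

```python
import re
import zlib


def compression_ratio(text: str) -> float:
    """Raw UTF-8 bytes divided by zlib-compressed bytes (higher = more redundant)."""
    raw = text.encode("utf-8")
    if not raw:
        return 0.0
    return len(raw) / len(zlib.compress(raw, 9))


def type_token_ratio(text: str) -> float:
    """Distinct lowercase word tokens divided by total tokens (higher = more diverse)."""
    tokens = re.findall(r"\w+", text.lower())
    return len(set(tokens)) / len(tokens) if tokens else 0.0


def curriculum_order(samples: list[str], signal: str = "compression") -> list[str]:
    """Return samples sorted easy-to-hard under an assumed difficulty signal."""
    if signal == "compression":
        # Assumption: more compressible text is easier, so it comes first.
        return sorted(samples, key=compression_ratio, reverse=True)
    if signal == "lexical_diversity":
        # Assumption: less lexically diverse text is easier, so it comes first.
        return sorted(samples, key=type_token_ratio)
    raise ValueError(f"unknown difficulty signal: {signal}")
```

A highly repetitive sentence compresses well and has a low type-token ratio, so both signals place it ahead of a sentence made of distinct technical terms.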

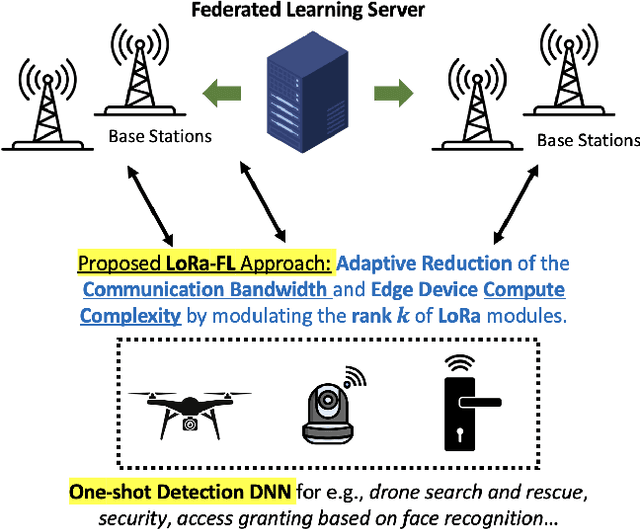

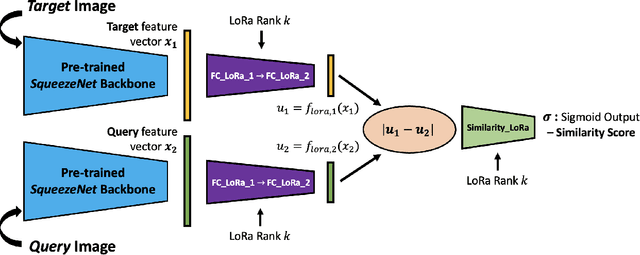

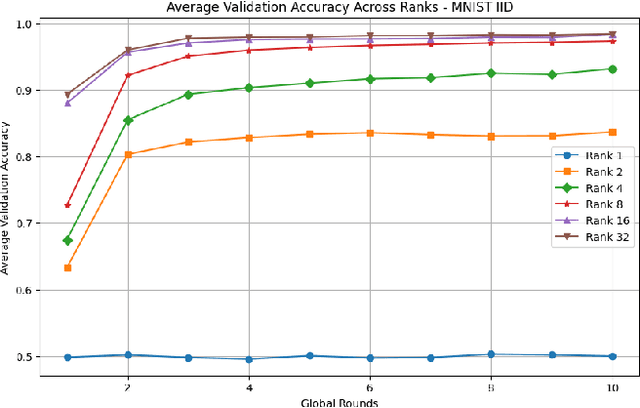

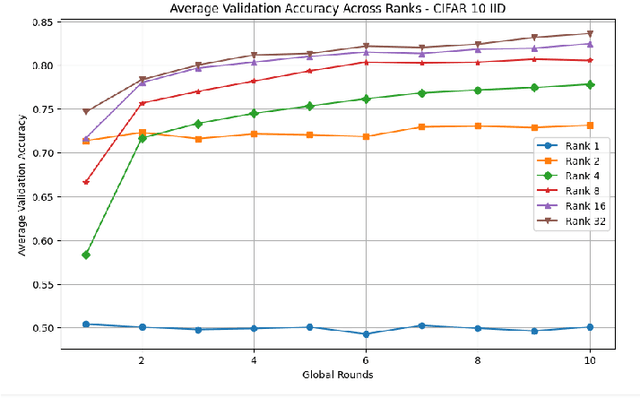

Federated Learning of Low-Rank One-Shot Image Detection Models in Edge Devices with Scalable Accuracy and Compute Complexity

Apr 23, 2025

Abstract: This paper introduces a novel federated learning framework, termed LoRa-FL, designed for training low-rank one-shot image detection models deployed on edge devices. By incorporating low-rank adaptation techniques into one-shot detection architectures, our method significantly reduces both computational and communication overhead while maintaining scalable accuracy. The proposed framework leverages federated learning to collaboratively train lightweight image recognition models, enabling rapid adaptation and efficient deployment across heterogeneous, resource-constrained devices. Experimental evaluations on the MNIST and CIFAR10 benchmark datasets, in both independent-and-identically-distributed (IID) and non-IID settings, demonstrate that our approach achieves competitive detection performance while significantly reducing communication bandwidth and compute complexity. This makes it a promising solution for adaptively reducing communication and compute overheads without sacrificing model accuracy.
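
The communication saving from low-rank adaptation follows from parameter counting: a full update to a d×k weight matrix has d·k entries, while rank-r factors B (d×r) and A (r×k) have r(d+k). The sketch below is not the LoRa-FL implementation. It computes both counts and performs a sample-weighted federated average (FedAvg) of each client's factors. Averaging B and A separately is an assumption of this sketch; note that the average of the factors is not, in general, equal to the average of the products B·A. The function names are hypothetical.

```python
Matrix = list[list[float]]


def lora_param_count(d: int, k: int, r: int) -> dict[str, int]:
    """Entries uploaded per layer: full d x k update vs. rank-r factors B (d x r) and A (r x k)."""
    return {"full": d * k, "low_rank": r * (d + k)}


def fedavg(client_mats: list[Matrix], n_samples: list[int]) -> Matrix:
    """Sample-weighted average of same-shaped matrices (FedAvg aggregation rule)."""
    total = sum(n_samples)
    rows, cols = len(client_mats[0]), len(client_mats[0][0])
    out = [[0.0] * cols for _ in range(rows)]
    for mat, n in zip(client_mats, n_samples):
        weight = n / total
        for i in range(rows):
            for j in range(cols):
                out[i][j] += weight * mat[i][j]
    return out


def aggregate_lora(
    clients: list[tuple[Matrix, Matrix]], n_samples: list[int]
) -> tuple[Matrix, Matrix]:
    """Average each client's (B, A) adapter factors separately, weighted by sample count."""
    bs = [b for b, _ in clients]
    as_ = [a for _, a in clients]
    return fedavg(bs, n_samples), fedavg(as_, n_samples)
```

For a 512×512 layer at rank 8, `lora_param_count(512, 512, 8)` gives 262,144 full parameters versus 8,192 low-rank parameters, a 32× reduction in what each client uploads per round.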

LLM as a Broken Telephone: Iterative Generation Distorts Information

Feb 27, 2025

Abstract: As large language models are increasingly responsible for online content, concerns arise about the impact of repeatedly processing their own outputs. Inspired by the "broken telephone" effect in chained human communication, this study investigates whether LLMs similarly distort information through iterative generation. Through translation-based experiments, we find that distortion accumulates over time, influenced by language choice and chain complexity. While degradation is inevitable, it can be mitigated through strategic prompting techniques. These findings contribute to discussions on the long-term effects of AI-mediated information propagation, raising important questions about the reliability of LLM-generated content in iterative workflows.

PDSR: Efficient UAV Deployment for Swift and Accurate Post-Disaster Search and Rescue

Oct 30, 2024

Abstract: This paper introduces a comprehensive framework for Post-Disaster Search and Rescue (PDSR) that aims to optimize search and rescue operations using Unmanned Aerial Vehicles (UAVs). The primary goal is to improve the precision and availability of sensing capabilities across various catastrophic scenarios. Central to this concept is the rapid deployment of UAV swarms equipped with diverse sensing, communication, and intelligence capabilities, functioning as an integrated system that combines multiple technologies and approaches to efficiently detect individuals buried beneath rubble or debris following a disaster. Within this framework, we propose an architectural solution and address the associated challenges to ensure optimal performance in real-world disaster scenarios. Using a multi-tier swarm architecture, the proposed framework aims to achieve complete coverage of damaged areas significantly faster than traditional methods. Furthermore, integrating multi-modal sensing data with machine learning for data fusion could enhance detection accuracy, ensuring precise identification of survivors.

Atlas-Chat: Adapting Large Language Models for Low-Resource Moroccan Arabic Dialect

Sep 26, 2024

Abstract: We introduce Atlas-Chat, the first-ever collection of large language models specifically developed for dialectal Arabic. Focusing on Moroccan Arabic, also known as Darija, we construct our instruction dataset by consolidating existing Darija language resources, creating novel datasets both manually and synthetically, and translating English instructions with stringent quality control. Atlas-Chat-9B and 2B models, fine-tuned on the dataset, exhibit superior ability in following Darija instructions and performing standard NLP tasks. Notably, our models outperform both state-of-the-art and Arabic-specialized LLMs such as LLaMa, Jais, and AceGPT, e.g., achieving a 13% performance boost over a larger 13B model on DarijaMMLU, part of our newly introduced evaluation suite for Darija covering both discriminative and generative tasks. Furthermore, we perform an experimental analysis of various fine-tuning strategies and base model choices to determine optimal configurations. All our resources are publicly accessible, and we believe our work offers comprehensive design methodologies for instruction-tuning low-resource language variants, which contemporary LLMs often neglect in favor of data-rich languages.

Object Depth and Size Estimation using Stereo-vision and Integration with SLAM

Sep 11, 2024

Abstract: Autonomous robots use simultaneous localization and mapping (SLAM) for efficient and safe navigation in various environments. LiDAR sensors are integral to these systems for object identification and localization. However, LiDAR systems, though effective in detecting solid objects (e.g., trash bins and bottles), struggle to identify semitransparent or non-tangible objects (e.g., fire, smoke, and steam) because of their poor reflective characteristics. Additionally, LiDAR fails to detect features such as navigation signs and often struggles to detect certain hazardous materials that lack a distinct surface for effective laser reflection. In this paper, we propose a highly accurate stereo-vision approach to complement LiDAR in autonomous robots. The system employs advanced stereo-vision-based object detection to detect both tangible and non-tangible objects and then uses simple machine learning to precisely estimate the depth and size of each object. The depth and size information is then integrated into the SLAM process to enhance the robot's navigation capabilities in complex environments. Our evaluation, conducted on an autonomous robot equipped with LiDAR and stereo-vision systems, demonstrates high accuracy in estimating an object's depth and size. A video illustration of the proposed scheme is available at https://www.youtube.com/watch?v=nusI6tA9eSk.
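
The abstract states that depth and size are estimated with simple machine learning; the sketch below instead shows the classical rectified-stereo geometry that such estimates are usually grounded in, and it is not the authors' model. It assumes an ideal pinhole camera pair with focal length f (pixels), baseline B (meters), and disparity d (pixels), so depth is Z = f·B/d, and an object spanning w pixels at that depth has metric extent W = w·Z/f. The function names and numbers are illustrative.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth Z = f * B / d for a rectified stereo pair under a pinhole camera model."""
    if disparity_px <= 0:
        # Zero disparity corresponds to a point at infinity; negative means a bad match.
        raise ValueError("disparity must be positive for a finite depth")
    return focal_px * baseline_m / disparity_px


def metric_size(size_px: float, depth_m: float, focal_px: float) -> float:
    """Back-project an image extent in pixels to meters at depth Z: W = w * Z / f."""
    return size_px * depth_m / focal_px
```

With f = 700 px, B = 0.12 m, and a measured disparity of 42 px, the object is about 2.0 m away; if it spans 140 px horizontally, its width is about 0.4 m.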

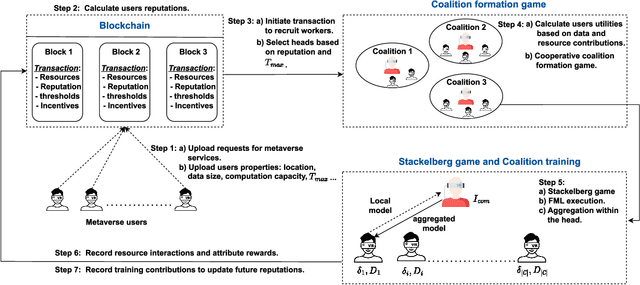

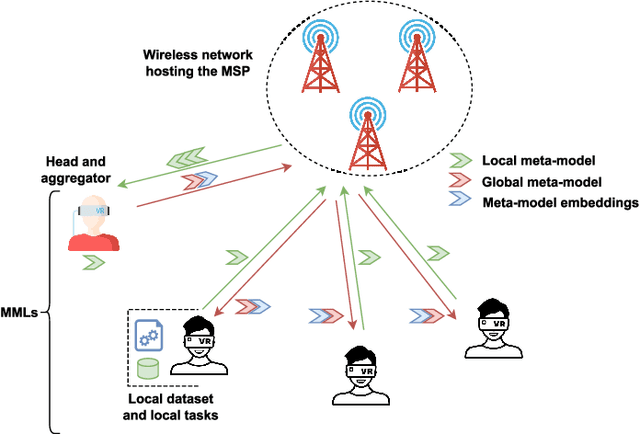

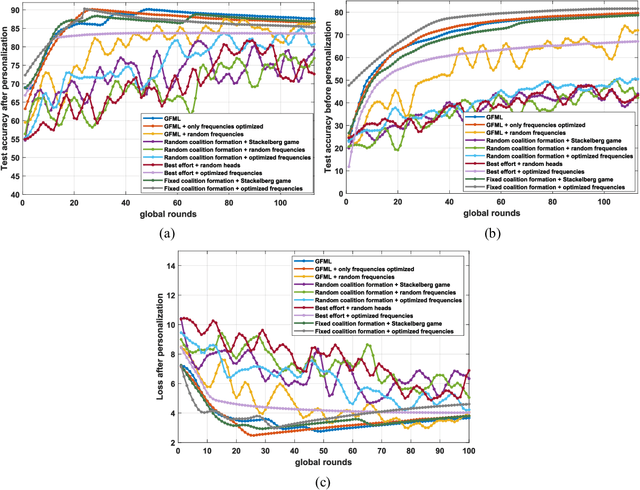

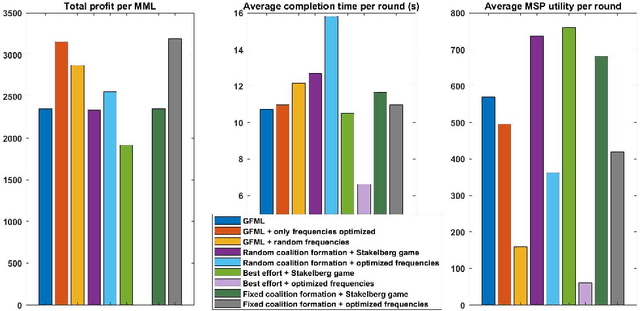

A Blockchain-based Reliable Federated Meta-learning for Metaverse: A Dual Game Framework

Aug 07, 2024

Abstract: The metaverse, envisioned as the next digital frontier for avatar-based virtual interaction, involves high-performance models. In this dynamic environment, users' tasks frequently shift, requiring fast model personalization despite limited data. This evolution consumes extensive resources and requires vast data volumes. To address this, meta-learning emerges as an invaluable tool for metaverse users, with federated meta-learning (FML) offering even more tailored solutions owing to its adaptive capabilities. However, the metaverse is characterized by user heterogeneity, with diverse data structures, varied tasks, and uneven sample sizes, potentially undermining global training outcomes due to statistical differences. Given this, there is an urgent need for smart coalition formation that accounts for these disparities. This paper introduces a dual game-theoretic framework for metaverse services involving meta-learners as workers to manage FML. A blockchain-based cooperative coalition formation game is crafted, grounded in a reputation metric, user similarity, and incentives. We also introduce a novel reputation system based on users' historical contributions and potential contributions to present tasks, leveraging correlations between past and new tasks. Finally, a Stackelberg game-based incentive mechanism is presented to attract reliable workers to participate in meta-learning, minimizing users' energy costs, increasing payoffs, boosting FML efficacy, and improving metaverse utility. Results show that our dual game framework outperforms best-effort, random, and non-uniform clustering schemes, improving training performance by up to 10%, cutting completion times by as much as 30%, enhancing metaverse utility by more than 25%, and offering up to a 5% boost in training efficiency over non-blockchain systems, effectively countering misbehaving users.

* Accepted in IEEE Internet of Things Journal

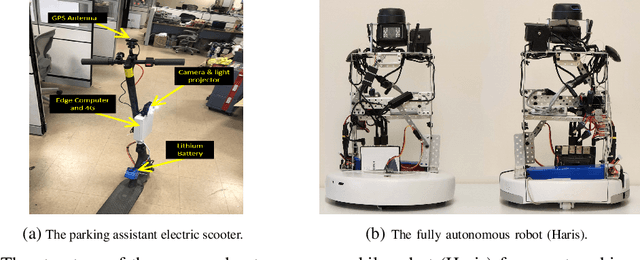

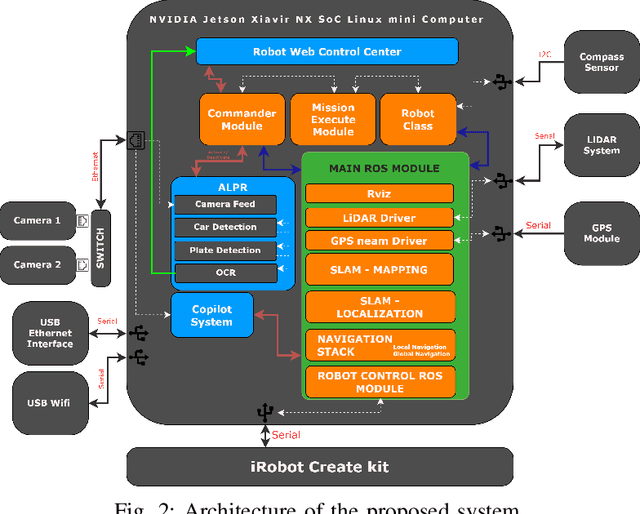

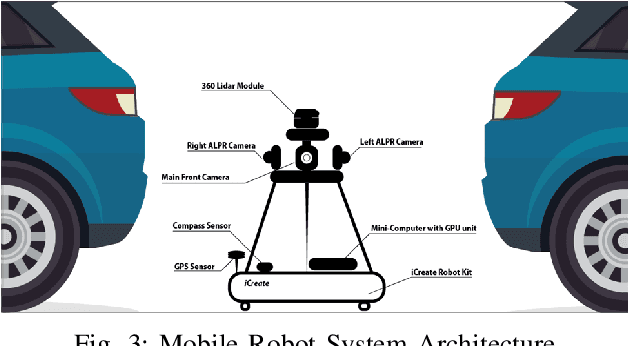

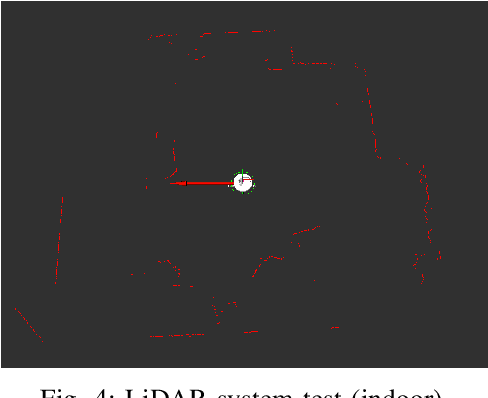

Haris: an Advanced Autonomous Mobile Robot for Smart Parking Assistance

Jan 31, 2024

Abstract: This paper presents Haris, an advanced autonomous mobile robot system for tracking the location of vehicles in crowded car parks using license plate recognition. The system employs simultaneous localization and mapping (SLAM) for autonomous navigation and precise mapping of the parking area, eliminating dependence on GPS. In addition, the system uses a computer vision framework for object detection and automatic license plate recognition (ALPR) to read license plate numbers and associate them with location data. This information is then synchronized with a back-end service and made accessible to users via a user-friendly mobile app, offering effortless vehicle location and alleviating congestion within the parking facility. The proposed system has the potential to improve the management of short-term, large outdoor parking areas in crowded places such as sports stadiums. A demo of the robot is available at https://youtu.be/ZkTCM35fxa0?si=QjggJuN7M1o3oifx.
