Fethi Filali

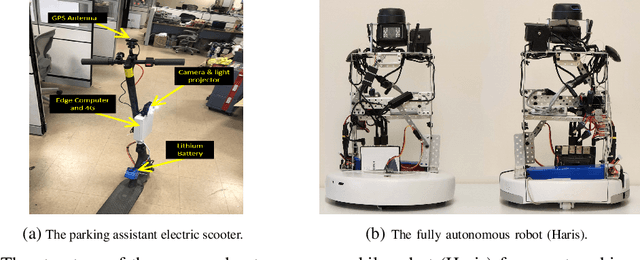

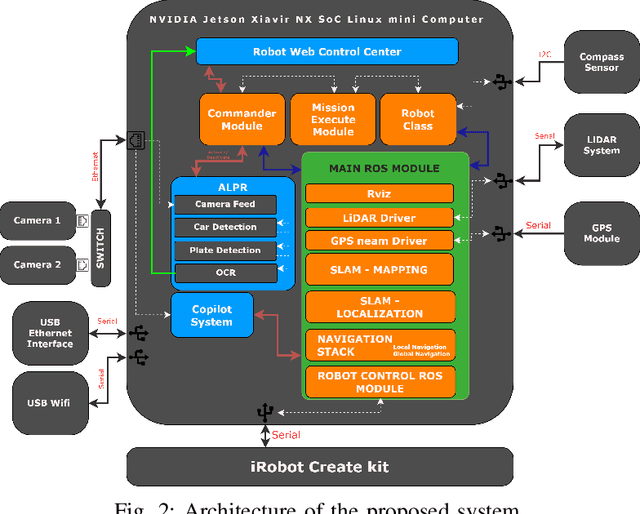

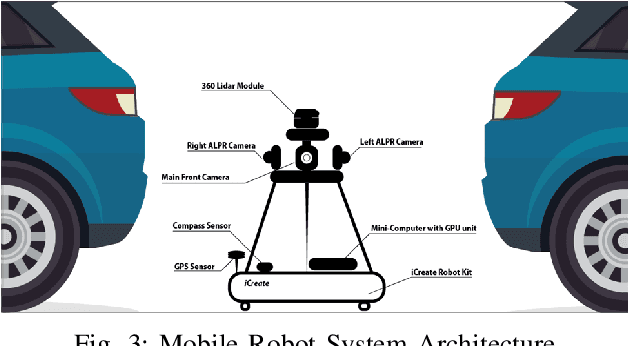

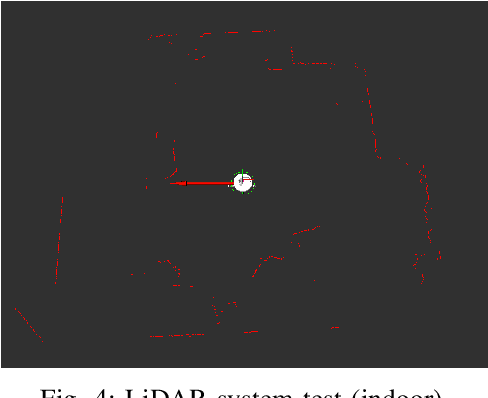

Haris: an Advanced Autonomous Mobile Robot for Smart Parking Assistance

Jan 31, 2024

Abstract:This paper presents Haris, an advanced autonomous mobile robot system for tracking the location of vehicles in crowded car parks using license plate recognition. The system employs simultaneous localization and mapping (SLAM) for autonomous navigation and precise mapping of the parking area, eliminating the need for GPS dependency. In addition, the system utilizes a sophisticated framework using computer vision techniques for object detection and automatic license plate recognition (ALPR) for reading and associating license plate numbers with location data. This information is subsequently synchronized with a back-end service and made accessible to users via a user-friendly mobile app, offering effortless vehicle location and alleviating congestion within the parking facility. The proposed system has the potential to improve the management of short-term large outdoor parking areas in crowded places such as sports stadiums. The demo of the robot can be found on https://youtu.be/ZkTCM35fxa0?si=QjggJuN7M1o3oifx.

Consistent Valid Physically-Realizable Adversarial Attack against Crowd-flow Prediction Models

Mar 05, 2023

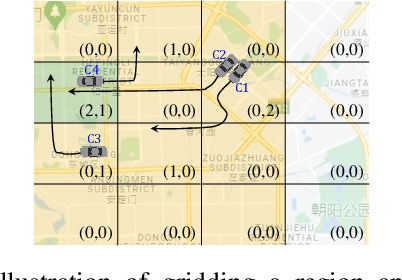

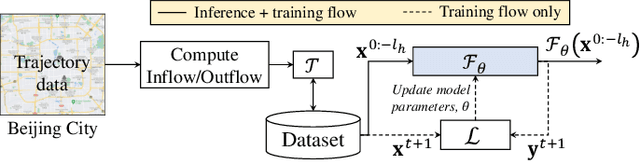

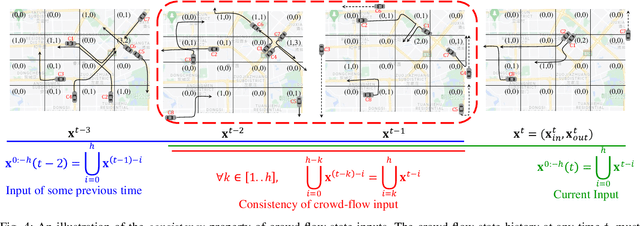

Abstract:Recent works have shown that deep learning (DL) models can effectively learn city-wide crowd-flow patterns, which can be used for more effective urban planning and smart city management. However, DL models have been known to perform poorly on inconspicuous adversarial perturbations. Although many works have studied these adversarial perturbations in general, the adversarial vulnerabilities of deep crowd-flow prediction models in particular have remained largely unexplored. In this paper, we perform a rigorous analysis of the adversarial vulnerabilities of DL-based crowd-flow prediction models under multiple threat settings, making three-fold contributions. (1) We propose CaV-detect by formally identifying two novel properties - Consistency and Validity - of the crowd-flow prediction inputs that enable the detection of standard adversarial inputs with 0% false acceptance rate (FAR). (2) We leverage universal adversarial perturbations and an adaptive adversarial loss to present adaptive adversarial attacks to evade CaV-detect defense. (3) We propose CVPR, a Consistent, Valid and Physically-Realizable adversarial attack, that explicitly inducts the consistency and validity priors in the perturbation generation mechanism. We find out that although the crowd-flow models are vulnerable to adversarial perturbations, it is extremely challenging to simulate these perturbations in physical settings, notably when CaV-detect is in place. We also show that CVPR attack considerably outperforms the adaptively modified standard attacks in FAR and adversarial loss metrics. We conclude with useful insights emerging from our work and highlight promising future research directions.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge