Abhinav K. Jha

SPASHT: An image-enhancement method for sparse-view MPI SPECT

Nov 09, 2025

Abstract:Single-photon emission computed tomography for myocardial perfusion imaging (MPI SPECT) is a widely used diagnostic tool for coronary artery disease. However, the procedure requires considerable scanning time, leading to patient discomfort and the potential for motion-induced artifacts. Reducing the number of projection views while keeping the time per view unchanged provides a mechanism to shorten the scanning time. However, this approach leads to increased sampling artifacts, higher noise, and hence limited image quality. To address these issues, we propose sparse-view SPECT image enhancement (SPASHT), which inherently trains the algorithm to improve performance on defect-detection tasks. We objectively evaluated SPASHT on the clinical task of detecting perfusion defects in a retrospective clinical study using data from patients who underwent MPI SPECT, where the defects were clinically realistic and synthetically inserted. The study was conducted for several reduced numbers of projection views, including 1/6, 1/3, and 1/2 of the typical number of projection views for MPI SPECT. Performance on the detection task was quantified using area under the receiver operating characteristic curve (AUC). Images obtained with SPASHT yielded significantly improved AUC compared to those obtained with the sparse-view protocol for all the considered reduced numbers of projection views. To further assess performance, a human observer study on the task of detecting perfusion defects was conducted. Results from the human observer study showed improved detection performance with images reconstructed using SPASHT compared to those from the sparse-view protocol. The results provide evidence of the efficacy of SPASHT in improving the quality of sparse-view MPI SPECT images and motivate further clinical validation.

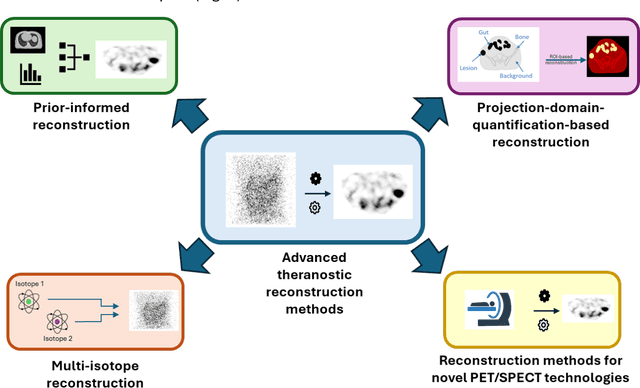

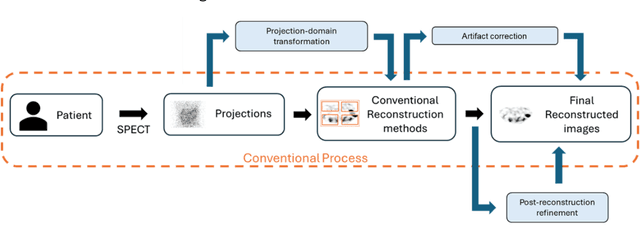

From Diagnosis to Therapy: Progress in SPECT and PET Reconstruction for Theranostics

Sep 09, 2025

Abstract:The theranostic paradigm enables personalization of treatment by selecting patients with a diagnostic radiopharmaceutical and monitoring therapy using a matched therapeutic isotope. This strategy relies on accurate image reconstruction of both pre-therapy and post-therapy images for patient selection and monitoring treatment. However, traditional reconstruction methods are hindered by challenges such as crosstalk in multi-isotope imaging and extremely low-count measurements in imaging of alpha- (α-) emitting therapies. Additionally, to fully realize the benefits of new imaging systems being developed for theranostic applications, advanced reconstruction techniques are needed. These needs, alongside the growing clinical adoption of theranostics, have spurred the development of novel PET and SPECT reconstruction algorithms. This review highlights recent progress and addresses critical challenges and unmet needs in theranostic image reconstruction.

A detection-task-specific deep-learning method to improve the quality of sparse-view myocardial perfusion SPECT images

Apr 22, 2025

Abstract:Myocardial perfusion imaging (MPI) with single-photon emission computed tomography (SPECT) is a widely used and cost-effective diagnostic tool for coronary artery disease. However, the lengthy scanning time in this imaging procedure can cause patient discomfort, motion artifacts, and potentially inaccurate diagnoses due to misalignment between the SPECT scans and the CT scans, which are acquired for attenuation compensation. Reducing the number of projection angles is a potential way to shorten scanning time, but this can adversely impact the quality of the reconstructed images. To address this issue, we propose a detection-task-specific deep-learning method for sparse-view MPI SPECT images. This method integrates an observer loss term that penalizes the loss of anthropomorphic channel features, with the goal of improving performance on the perfusion defect-detection task. We observed that, on the task of detecting myocardial perfusion defects, the proposed method yielded an area under the receiver operating characteristic (ROC) curve (AUC) significantly larger than the sparse-view protocol. Further, the proposed method was observed to restore the structure of the left ventricle wall, demonstrating the ability to overcome sparse-sampling artifacts. Our preliminary results motivate further evaluations of the method.

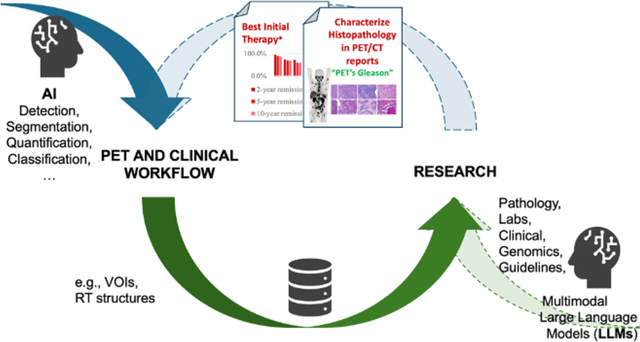

Nuclear Medicine Artificial Intelligence in Action: The Bethesda Report (AI Summit 2024)

Jun 03, 2024

Abstract:The 2nd SNMMI Artificial Intelligence (AI) Summit, organized by the SNMMI AI Task Force, took place in Bethesda, MD, on February 29 - March 1, 2024. Bringing together various community members and stakeholders, and following up on a prior successful 2022 AI Summit, the summit theme was: AI in Action. Six key topics included (i) an overview of prior and ongoing efforts by the AI task force, (ii) emerging needs and tools for computational nuclear oncology, (iii) new frontiers in large language and generative models, (iv) defining the value proposition for the use of AI in nuclear medicine, (v) open science including efforts for data and model repositories, and (vi) issues of reimbursement and funding. The primary efforts, findings, challenges, and next steps are summarized in this manuscript.

Observer study-based evaluation of TGAN architecture used to generate oncological PET images

Nov 28, 2023

Abstract:The application of computer-vision algorithms in medical imaging has increased rapidly in recent years. However, algorithm training is challenging due to limited sample sizes, lack of labeled samples, as well as privacy concerns regarding data sharing. To address these issues, we previously developed (Bergen et al. 2022) a synthetic PET dataset for Head and Neck (H&N) cancer using the temporal generative adversarial network (TGAN) architecture and evaluated its performance in segmenting lesions and identifying radiomics features in synthesized images. In this work, a two-alternative forced-choice (2AFC) observer study was performed to quantitatively evaluate the ability of human observers to distinguish between real and synthesized oncological PET images. In the study, eight trained readers, including two board-certified nuclear medicine physicians, read 170 real/synthetic image pairs presented as 2D transaxial slices using a dedicated web app. For each image pair, the observer was asked to identify the real image and input their confidence level on a 5-point Likert scale. P-values were computed using the binomial test and Wilcoxon signed-rank test. A heat map was used to compare the response accuracy distribution for the signed-rank test. Response accuracy for all observers ranged from 36.2% [27.9-44.4] to 63.1% [54.8-71.3]. Six out of eight observers did not identify the real image with statistical significance, indicating that the synthetic dataset was reasonably representative of oncological PET images. Overall, this study adds validity to the realism of our simulated H&N cancer dataset, which may be implemented in the future to train AI algorithms while favoring patient confidentiality and privacy protection.
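
The binomial test mentioned in the abstract above can be illustrated with a minimal sketch. It assumes a two-sided exact test against chance performance (probability 0.5 of picking the real image in a 2AFC trial), which the abstract does not specify. The function name and the response counts are hypothetical and are not data from the study.

```python
from math import comb


def binomial_two_sided_p(num_correct, num_trials):
    """Two-sided exact binomial p-value against chance (p = 0.5).

    Sums the probabilities of all outcomes that are no more likely than
    the observed one. This is an illustrative assumption about the test
    variant, not the study's documented procedure.
    """
    if not 0 <= num_correct <= num_trials:
        raise ValueError("num_correct must lie between 0 and num_trials")
    total = 2 ** num_trials
    # Probability of each possible number of correct responses under chance.
    probs = [comb(num_trials, k) / total for k in range(num_trials + 1)]
    observed = probs[num_correct]
    # Small relative tolerance guards against floating-point ties.
    p_value = sum(p for p in probs if p <= observed * (1 + 1e-12))
    return min(1.0, p_value)


if __name__ == "__main__":
    # Hypothetical reader: 100 correct identifications out of 170 pairs.
    print(binomial_two_sided_p(100, 170))
```

A large p-value for a reader means their accuracy is statistically indistinguishable from guessing, which is the outcome that supports the realism of the synthetic images.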

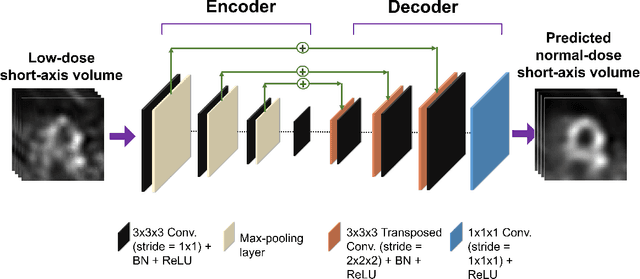

DEMIST: A deep-learning-based task-specific denoising approach for myocardial perfusion SPECT

Jun 14, 2023

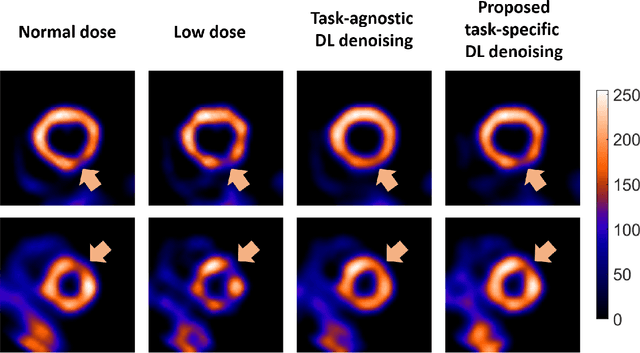

Abstract:There is an important need for methods to process myocardial perfusion imaging (MPI) SPECT images acquired at lower radiation dose and/or acquisition time such that the processed images improve observer performance on the clinical task of detecting perfusion defects. To address this need, we build upon concepts from model-observer theory and our understanding of the human visual system to propose a Detection task-specific deep-learning-based approach for denoising MPI SPECT images (DEMIST). The approach, while performing denoising, is designed to preserve features that influence observer performance on detection tasks. We objectively evaluated DEMIST on the task of detecting perfusion defects using a retrospective study with anonymized clinical data in patients who underwent MPI studies across two scanners (N = 338). The evaluation was performed at low-dose levels of 6.25%, 12.5%, and 25% using an anthropomorphic channelized Hotelling observer. Performance was quantified using area under the receiver operating characteristic curve (AUC). Images denoised with DEMIST yielded significantly higher AUC compared to corresponding low-dose images and images denoised with a commonly used task-agnostic DL-based denoising method. Similar results were observed with stratified analysis based on patient sex and defect type. Additionally, DEMIST improved visual fidelity of the low-dose images as quantified using root mean squared error and structural similarity index metric. A mathematical analysis revealed that DEMIST preserved features that assist in detection tasks while improving the noise properties, resulting in improved observer performance. The results provide strong evidence for further clinical evaluation of DEMIST to denoise low-count images in MPI SPECT.
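
As an illustration of the AUC figure of merit used above, the following minimal sketch computes the empirical (Mann-Whitney) AUC from observer test statistics. It is not the evaluation code from the study: the function name and the example scores are hypothetical, and the test statistics are assumed to have already been produced by some observer, such as a channelized Hotelling observer.

```python
def empirical_auc(defect_present_scores, defect_absent_scores):
    """Empirical AUC via the Mann-Whitney statistic.

    Returns the fraction of (defect-present, defect-absent) score pairs
    in which the defect-present case receives the higher test statistic.
    Tied pairs count as half a correct ranking.
    """
    if not defect_present_scores or not defect_absent_scores:
        raise ValueError("both score lists must be non-empty")
    correct = 0.0
    for present in defect_present_scores:
        for absent in defect_absent_scores:
            if present > absent:
                correct += 1.0
            elif present == absent:
                correct += 0.5
    return correct / (len(defect_present_scores) * len(defect_absent_scores))


if __name__ == "__main__":
    # Hypothetical observer test statistics, for illustration only.
    present = [0.9, 0.8, 0.4]
    absent = [0.5, 0.3, 0.2]
    print(empirical_auc(present, absent))
```

An AUC of 0.5 corresponds to chance-level detection and 1.0 to perfect separation of defect-present and defect-absent cases.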

A quality assurance framework for real-time monitoring of deep learning segmentation models in radiotherapy

May 19, 2023

Abstract:To safely deploy deep learning models in the clinic, a quality assurance framework is needed for routine or continuous monitoring of input-domain shift and the models' performance without ground truth contours. In this work, cardiac substructure segmentation was used as an example task to establish a QA framework. A benchmark dataset consisting of Computed Tomography (CT) images along with manual cardiac delineations of 241 patients was collected, including one 'common' image domain and five 'uncommon' domains. Segmentation models were tested on the benchmark dataset for an initial evaluation of model capacity and limitations. An image domain shift detector was developed by utilizing a trained Denoising Autoencoder (DAE) and two hand-engineered features. Another Variational Autoencoder (VAE) was also trained to estimate the shape quality of the auto-segmentation results. Using the extracted features from the image/segmentation pair as inputs, a regression model was trained to predict the per-patient segmentation accuracy, measured by Dice similarity coefficient (DSC). The framework was tested across 19 segmentation models to evaluate the generalizability of the entire framework. As a result, the predicted DSC of regression models achieved a mean absolute error (MAE) ranging from 0.036 to 0.046 with an averaged MAE of 0.041. When tested on the benchmark dataset, the performances of all segmentation models were not significantly affected by scanning parameters: FOV, slice thickness, and reconstruction kernels. For input images with Poisson noise, CNN-based segmentation models demonstrated a decreased DSC ranging from 0.07 to 0.41, while the transformer-based model was not significantly affected.
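
The two metrics named in the abstract above, the Dice similarity coefficient and the mean absolute error between predicted and measured DSC, can be sketched as follows. This is a minimal illustration on flattened binary masks, not the framework's code: the function names, the empty-mask convention, and the example values are all assumptions.

```python
def dice_coefficient(mask_a, mask_b):
    """Dice similarity coefficient 2|A ∩ B| / (|A| + |B|) for binary masks.

    Masks are flat sequences of 0/1 (or falsy/truthy) voxel labels.
    """
    if len(mask_a) != len(mask_b):
        raise ValueError("masks must have the same number of voxels")
    size_a = sum(1 for v in mask_a if v)
    size_b = sum(1 for v in mask_b if v)
    overlap = sum(1 for a, b in zip(mask_a, mask_b) if a and b)
    if size_a + size_b == 0:
        # Assumed convention: two empty masks are treated as a perfect match.
        return 1.0
    return 2.0 * overlap / (size_a + size_b)


def mean_absolute_error(predicted, actual):
    """Mean absolute difference between predicted and actual values."""
    if len(predicted) != len(actual) or not predicted:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(predicted)


if __name__ == "__main__":
    # Hypothetical auto-segmentation and manual contour, flattened to 1D.
    auto_mask = [1, 1, 0, 0]
    manual_mask = [1, 0, 1, 0]
    print(dice_coefficient(auto_mask, manual_mask))
    # Hypothetical predicted vs. measured per-patient DSC values.
    print(mean_absolute_error([0.80, 0.90], [0.85, 0.86]))
```

In a monitoring setting, the measured DSC is unavailable at deployment time, so the MAE can only be computed on a benchmark set where manual contours exist.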

Development and task-based evaluation of a scatter-window projection and deep learning-based transmission-less attenuation compensation method for myocardial perfusion SPECT

Mar 19, 2023

Abstract:Attenuation compensation (AC) is beneficial for visual interpretation tasks in single-photon emission computed tomography (SPECT) myocardial perfusion imaging (MPI). However, traditional AC methods require the availability of a transmission scan, most often a CT scan. This approach has the disadvantages of increased radiation dose, increased scanner cost, and the possibility of inaccurate diagnosis in cases of misregistration between the SPECT and CT images. Further, many SPECT systems do not include a CT component. To address these issues, we developed a Scatter-window projection and deep Learning-based AC (SLAC) method to perform AC without a separate transmission scan. To investigate the clinical efficacy of this method, we then objectively evaluated the performance of this method on the clinical task of detecting perfusion defects on MPI in a retrospective study with anonymized clinical SPECT/CT stress MPI images. The proposed method was compared with CT-based AC (CTAC) and no-AC (NAC) methods. Our results showed that the SLAC method yielded an almost overlapping receiver operating characteristic (ROC) plot and a similar area under the ROC (AUC) to the CTAC method on this task. These results demonstrate the capability of the SLAC method for transmission-less AC in SPECT and motivate further clinical evaluation.

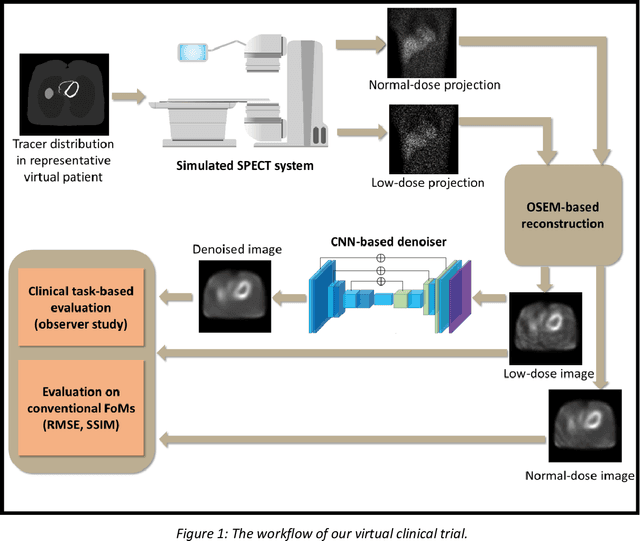

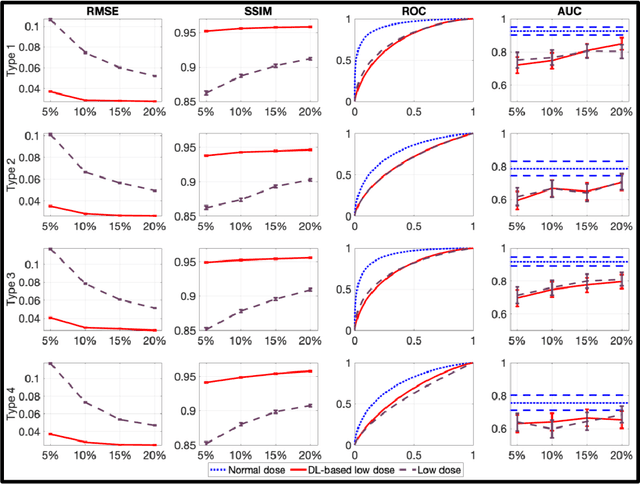

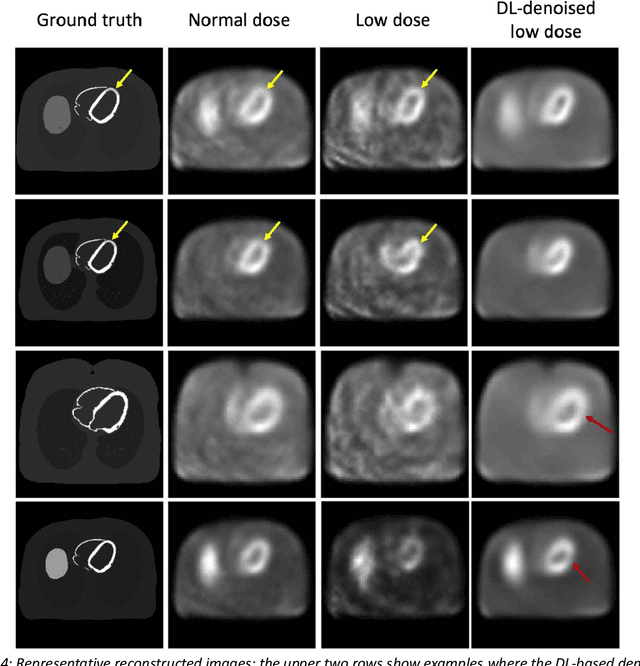

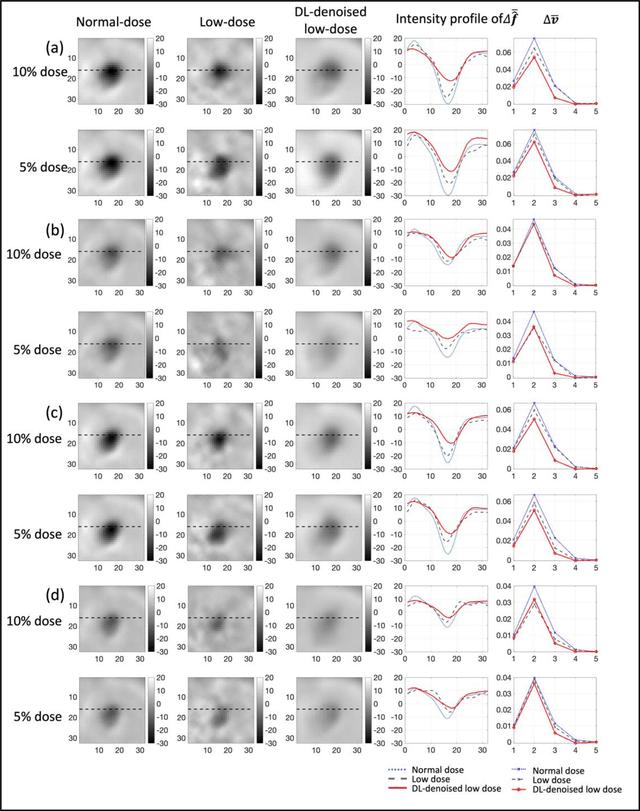

Need for Objective Task-based Evaluation of Deep Learning-Based Denoising Methods: A Study in the Context of Myocardial Perfusion SPECT

Mar 16, 2023

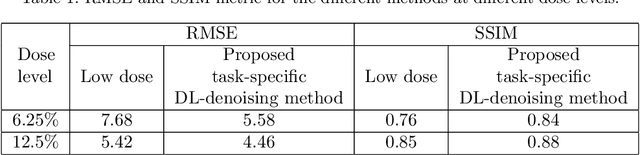

Abstract:Artificial intelligence-based methods have generated substantial interest in nuclear medicine. An area of significant interest has been using deep-learning (DL)-based approaches for denoising images acquired with lower doses, shorter acquisition times, or both. Objective evaluation of these approaches is essential for clinical application. DL-based approaches for denoising nuclear-medicine images have typically been evaluated using fidelity-based figures of merit (FoMs) such as RMSE and SSIM. However, these images are acquired for clinical tasks and thus should be evaluated based on their performance in these tasks. Our objectives were to (1) investigate whether evaluation with these FoMs is consistent with objective clinical-task-based evaluation; (2) provide a theoretical analysis for determining the impact of denoising on signal-detection tasks; (3) demonstrate the utility of virtual clinical trials (VCTs) to evaluate DL-based methods. A VCT to evaluate a DL-based method for denoising myocardial perfusion SPECT (MPS) images was conducted. The impact of DL-based denoising was evaluated using fidelity-based FoMs and AUC, which quantified performance on detecting perfusion defects in MPS images as obtained using a model observer with anthropomorphic channels. Based on fidelity-based FoMs, denoising using the considered DL-based method led to significantly superior performance. However, based on ROC analysis, denoising did not improve, and in fact, often degraded detection-task performance. The results motivate the need for objective task-based evaluation of DL-based denoising approaches. Further, this study shows how VCTs provide a mechanism to conduct such evaluations. Finally, our theoretical treatment reveals insights into the reasons for the limited performance of the denoising approach.

A task-specific deep-learning-based denoising approach for myocardial perfusion SPECT

Mar 01, 2023

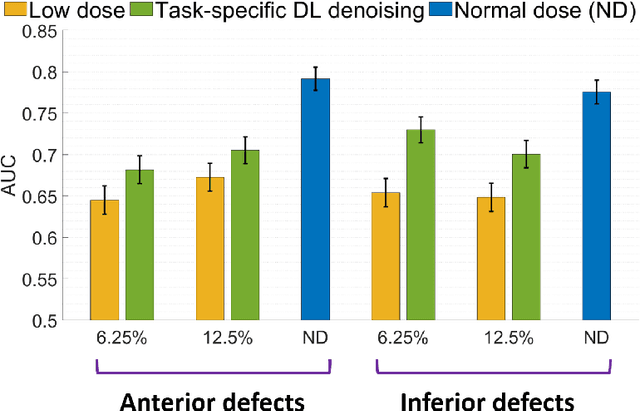

Abstract:Deep-learning (DL)-based methods have shown significant promise in denoising myocardial perfusion SPECT images acquired at low dose. For clinical application of these methods, evaluation on clinical tasks is crucial. Typically, these methods are designed to minimize some fidelity-based criterion between the predicted denoised image and some reference normal-dose image. However, while promising, studies have shown that these methods may have limited impact on the performance of clinical tasks in SPECT. To address this issue, we use concepts from the literature on model observers and our understanding of the human visual system to propose a DL-based denoising approach designed to preserve observer-related information for detection tasks. The proposed method was objectively evaluated on the task of detecting perfusion defects in myocardial perfusion SPECT images using a retrospective study with anonymized clinical data. Our results demonstrate that the proposed method yields improved performance on this detection task compared to using low-dose images. The results show that by preserving task-specific information, DL may provide a mechanism to improve observer performance in low-dose myocardial perfusion SPECT.
