Yunyu Xiao

Ret.

A Multi-Stage Large Language Model Framework for Extracting Suicide-Related Social Determinants of Health

Aug 07, 2025Abstract:Background: Understanding social determinants of health (SDoH) factors contributing to suicide incidents is crucial for early intervention and prevention. However, data-driven approaches to this goal face challenges such as long-tailed factor distributions, analyzing pivotal stressors preceding suicide incidents, and limited model explainability. Methods: We present a multi-stage large language model framework to enhance SDoH factor extraction from unstructured text. Our approach was compared to other state-of-the-art language models (i.e., pre-trained BioBERT and GPT-3.5-turbo) and reasoning models (i.e., DeepSeek-R1). We also evaluated how the model's explanations help people annotate SDoH factors more quickly and accurately. The analysis included both automated comparisons and a pilot user study. Results: We show that our proposed framework demonstrated performance boosts in the overarching task of extracting SDoH factors and in the finer-grained tasks of retrieving relevant context. Additionally, we show that fine-tuning a smaller, task-specific model achieves comparable or better performance with reduced inference costs. The multi-stage design not only enhances extraction but also provides intermediate explanations, improving model explainability. Conclusions: Our approach improves both the accuracy and transparency of extracting suicide-related SDoH from unstructured texts. These advancements have the potential to support early identification of individuals at risk and inform more effective prevention strategies.

Machine Learning Applications Related to Suicide in Military and Veterans: A Scoping Literature Review

May 18, 2025Abstract:Suicide remains one of the main preventable causes of death among active service members and veterans. Early detection and prediction are crucial in suicide prevention. Machine learning techniques have yielded promising results in this area recently. This study aims to assess and summarize current research and provides a comprehensive review regarding the application of machine learning techniques in assessing and predicting suicidal ideation, attempts, and mortality among members of military and veteran populations. A keyword search using PubMed, IEEE, ACM, and Google Scholar was conducted, and the PRISMA protocol was adopted for relevant study selection. Thirty-two articles met the inclusion criteria. These studies consistently identified risk factors relevant to mental health issues such as depression, post-traumatic stress disorder (PTSD), suicidal ideation, prior attempts, physical health problems, and demographic characteristics. Machine learning models applied in this area have demonstrated reasonable predictive accuracy. However, additional research gaps still exist. First, many studies have overlooked metrics that distinguish between false positives and negatives, such as positive predictive value and negative predictive value, which are crucial in the context of suicide prevention policies. Second, more dedicated approaches to handling survival and longitudinal data should be explored. Lastly, most studies focused on machine learning methods, with limited discussion of their connection to clinical rationales. In summary, machine learning analyses have identified a wide range of risk factors associated with suicide in military populations. The diversity and complexity of these factors also demonstrates that effective prevention strategies must be comprehensive and flexible.

Semi-supervised Node Importance Estimation with Informative Distribution Modeling for Uncertainty Regularization

Mar 26, 2025Abstract:Node importance estimation, a classical problem in network analysis, underpins various web applications. Previous methods either exploit intrinsic topological characteristics, e.g., graph centrality, or leverage additional information, e.g., data heterogeneity, for node feature enhancement. However, these methods follow the supervised learning setting, overlooking the fact that ground-truth node-importance data are usually partially labeled in practice. In this work, we propose the first semi-supervised node importance estimation framework, i.e., EASING, to improve learning quality for unlabeled data in heterogeneous graphs. Different from previous approaches, EASING explicitly captures uncertainty to reflect the confidence of model predictions. To jointly estimate the importance values and uncertainties, EASING incorporates DJE, a deep encoder-decoder neural architecture. DJE introduces distribution modeling for graph nodes, where the distribution representations derive both importance and uncertainty estimates. Additionally, DJE facilitates effective pseudo-label generation for the unlabeled data to enrich the training samples. Based on labeled and pseudo-labeled data, EASING develops effective semi-supervised heteroscedastic learning with varying node uncertainty regularization. Extensive experiments on three real-world datasets highlight the superior performance of EASING compared to competing methods. Codes are available via https://github.com/yankai-chen/EASING.

Two-layer retrieval augmented generation framework for low-resource medical question-answering: proof of concept using Reddit data

May 29, 2024

Abstract:Retrieval augmented generation (RAG) provides the capability to constrain generative model outputs, and mitigate the possibility of hallucination, by providing relevant in-context text. The number of tokens a generative large language model (LLM) can incorporate as context is finite, thus limiting the volume of knowledge from which to generate an answer. We propose a two-layer RAG framework for query-focused answer generation and evaluate a proof-of-concept for this framework in the context of query-focused summary generation from social media forums, focusing on emerging drug-related information. The evaluations demonstrate the effectiveness of the two-layer framework in resource constrained settings to enable researchers in obtaining near real-time data from users.

Uncovering Misattributed Suicide Causes through Annotation Inconsistency Detection in Death Investigation Notes

Mar 29, 2024

Abstract:Data accuracy is essential for scientific research and policy development. The National Violent Death Reporting System (NVDRS) data is widely used for discovering the patterns and causes of death. Recent studies suggested the annotation inconsistencies within the NVDRS and the potential impact on erroneous suicide-cause attributions. We present an empirical Natural Language Processing (NLP) approach to detect annotation inconsistencies and adopt a cross-validation-like paradigm to identify problematic instances. We analyzed 267,804 suicide death incidents between 2003 and 2020 from the NVDRS. Our results showed that incorporating the target state's data into training the suicide-crisis classifier brought an increase of 5.4% to the F-1 score on the target state's test set and a decrease of 1.1% on other states' test set. To conclude, we demonstrated the annotation inconsistencies in NVDRS's death investigation notes, identified problematic instances, evaluated the effectiveness of correcting problematic instances, and eventually proposed an NLP improvement solution.

Time-to-event modeling of subreddits transitions to r/SuicideWatch

Feb 13, 2023

Abstract:Recent data mining research has focused on the analysis of social media text, content and networks to identify suicide ideation online. However, there has been limited research on the temporal dynamics of users and suicide ideation. In this work, we use time-to-event modeling to identify which subreddits have a higher association with users transitioning to posting on r/suicidewatch. For this purpose we use a Cox proportional hazards model that takes as input text and subreddit network features and outputs a probability distribution for the time until a Reddit user posts on r/suicidewatch. In our analysis we find a number of statistically significant features that predict earlier transitions to r/suicidewatch. While some patterns match existing intuition, for example r/depression is positively associated with posting sooner on r/suicidewatch, others were more surprising (for example, the average time between a high risk post on r/Wishlist and a post on r/suicidewatch is 10.2 days). We then discuss these results as well as directions for future research.

Combating Health Misinformation in Social Media: Characterization, Detection, Intervention, and Open Issues

Nov 10, 2022

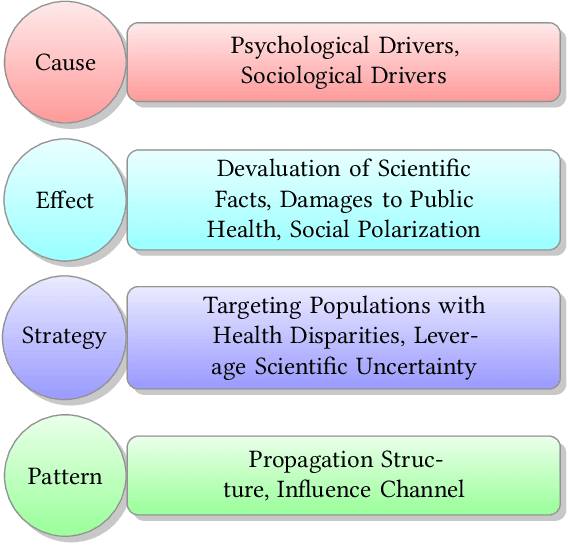

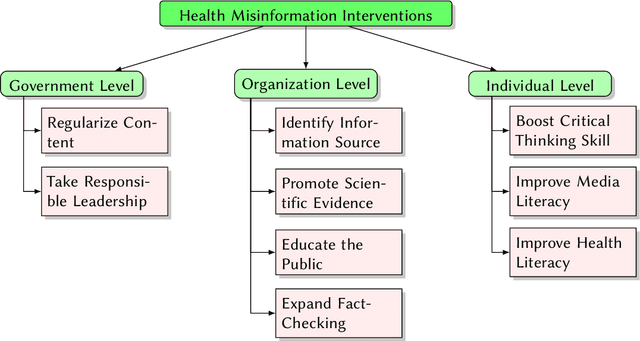

Abstract:Social media has been one of the main information consumption sources for the public, allowing people to seek and spread information more quickly and easily. However, the rise of various social media platforms also enables the proliferation of online misinformation. In particular, misinformation in the health domain has significant impacts on our society such as the COVID-19 infodemic. Therefore, health misinformation in social media has become an emerging research direction that attracts increasing attention from researchers of different disciplines. Compared to misinformation in other domains, the key differences of health misinformation include the potential of causing actual harm to humans' bodies and even lives, the hardness to identify for normal people, and the deep connection with medical science. In addition, health misinformation on social media has distinct characteristics from conventional channels such as television on multiple dimensions including the generation, dissemination, and consumption paradigms. Because of the uniqueness and importance of combating health misinformation in social media, we conduct this survey to further facilitate interdisciplinary research on this problem. In this survey, we present a comprehensive review of existing research about online health misinformation in different disciplines. Furthermore, we also systematically organize the related literature from three perspectives: characterization, detection, and intervention. Lastly, we conduct a deep discussion on the pressing open issues of combating health misinformation in social media and provide future directions for multidisciplinary researchers.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge