Yulia Sandamirskaya

Heterogeneous computing platform for real-time robotics

Jan 13, 2026

Abstract:After Industry 4.0 has embraced tight integration between machinery (OT), software (IT), and the Internet, creating a web of sensors, data, and algorithms in service of efficient and reliable production, a new concept of Society 5.0 is emerging, in which infrastructure of a city will be instrumented to increase reliability, efficiency, and safety. Robotics will play a pivotal role in enabling this vision that is pioneered by the NEOM initiative - a smart city, co-inhabited by humans and robots. In this paper we explore the computing platform that will be required to enable this vision. We show how we can combine neuromorphic computing hardware, exemplified by the Loihi2 processor used in conjunction with event-based cameras, for sensing and real-time perception and interaction with a local AI compute cluster (GPUs) for high-level language processing, cognition, and task planning. We demonstrate the use of this hybrid computing architecture in an interactive task, in which a humanoid robot plays a musical instrument with a human. Central to our design is the efficient and seamless integration of disparate components, ensuring that the synergy between software and hardware maximizes overall performance and responsiveness. Our proposed system architecture underscores the potential of heterogeneous computing architectures in advancing robotic autonomy and interactive intelligence, pointing toward a future where such integrated systems become the norm in complex, real-time applications.

Continual Learning for Autonomous Robots: A Prototype-based Approach

Mar 30, 2024

Abstract:Humans and animals learn throughout their lives from limited amounts of sensed data, both with and without supervision. Autonomous, intelligent robots of the future are often expected to do the same. The existing continual learning (CL) methods are usually not directly applicable to robotic settings: they typically require buffering and a balanced replay of training data. A few-shot online continual learning (FS-OCL) setting has been proposed to address more realistic scenarios where robots must learn from a non-repeated sparse data stream. To enable truly autonomous life-long learning, an additional challenge of detecting novelties and learning new items without supervision needs to be addressed. We address this challenge with our new prototype-based approach called Continually Learning Prototypes (CLP). In addition to being capable of FS-OCL learning, CLP also detects novel objects and learns them without supervision. To mitigate forgetting, CLP utilizes a novel metaplasticity mechanism that adapts the learning rate individually per prototype. CLP is rehearsal-free, hence does not require a memory buffer, and is compatible with neuromorphic hardware, characterized by ultra-low power consumption, real-time processing abilities, and on-chip learning. Indeed, we have open-sourced a simple version of CLP in the neuromorphic software framework Lava, targeting Intel's neuromorphic chip Loihi 2. We evaluate CLP on a robotic vision dataset, OpenLORIS. In a low-instance FS-OCL scenario, CLP shows state-of-the-art results. In the open world, CLP detects novelties with superior precision and recall and learns features of the detected novel classes without supervision, achieving a strong baseline of 99% base class and 65%/76% (5-shot/10-shot) novel class accuracy.
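
The abstract above names three ingredients: prototypes, unsupervised novelty detection, and a learning rate adapted per prototype. The following is a minimal sketch of how those ideas can fit together in a generic nearest-prototype learner. It is not the published CLP algorithm or the Lava implementation: the class name `PrototypeLearner`, the cosine-similarity threshold `novelty_threshold`, and the `1 / count` learning-rate schedule are all assumptions made for illustration.

```python
import math


class PrototypeLearner:
    """Toy rehearsal-free nearest-prototype learner (illustrative, not CLP itself)."""

    def __init__(self, novelty_threshold=0.8):
        # Hypothetical cut-off: inputs less similar than this to every
        # stored prototype are treated as novel.
        self.novelty_threshold = novelty_threshold
        self.prototypes = []  # unit-length feature vectors
        self.labels = []      # one label per prototype (None if unsupervised)
        self.counts = []      # number of samples absorbed by each prototype

    @staticmethod
    def _normalize(vector):
        norm = math.sqrt(sum(x * x for x in vector)) or 1.0
        return [x / norm for x in vector]

    def _best_match(self, x):
        """Return (index, cosine similarity) of the closest prototype."""
        best_index, best_sim = None, -1.0
        for i, proto in enumerate(self.prototypes):
            sim = sum(p * xi for p, xi in zip(proto, x))
            if sim > best_sim:
                best_index, best_sim = i, sim
        return best_index, best_sim

    def observe(self, features, label=None):
        """Process one sample; return (prototype index, was_novel)."""
        x = self._normalize(features)
        i, sim = self._best_match(x)
        is_novel = i is None or sim < self.novelty_threshold
        label_conflict = (
            not is_novel
            and label is not None
            and self.labels[i] is not None
            and self.labels[i] != label
        )
        if is_novel or label_conflict:
            # Allocate a new prototype instead of overwriting an old one.
            self.prototypes.append(x)
            self.labels.append(label)
            self.counts.append(1)
            return len(self.prototypes) - 1, True
        # Per-prototype learning rate: the more samples a prototype has
        # absorbed, the less a single new sample can move it.
        self.counts[i] += 1
        rate = 1.0 / self.counts[i]
        moved = [p + rate * (xi - p) for p, xi in zip(self.prototypes[i], x)]
        self.prototypes[i] = self._normalize(moved)
        if self.labels[i] is None and label is not None:
            self.labels[i] = label  # late supervision names an unlabeled prototype
        return i, False

    def predict(self, features):
        """Return the label of the closest prototype, or None if unknown."""
        i, _ = self._best_match(self._normalize(features))
        return None if i is None else self.labels[i]
```

In this sketch no past samples are stored, so there is no replay buffer; forgetting is limited only by the shrinking per-prototype rate, which is a much simpler stand-in for the metaplasticity mechanism the abstract describes.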

Neuromorphic force-control in an industrial task: validating energy and latency benefits

Mar 13, 2024

Abstract:As robots become smarter and more ubiquitous, optimizing the power consumption of intelligent compute becomes imperative towards ensuring the sustainability of technological advancements. Neuromorphic computing hardware makes use of biologically inspired neural architectures to achieve energy and latency improvements compared to conventional von Neumann computing architecture. Applying these benefits to robots has been demonstrated in several works in the field of neurorobotics, typically on relatively simple control tasks. Here, we introduce an example of neuromorphic computing applied to the real-world industrial task of object insertion. We trained a spiking neural network (SNN) to perform force-torque feedback control using a reinforcement learning approach in simulation. We then ported the SNN to the Intel neuromorphic research chip Loihi interfaced with a KUKA robotic arm. At inference time we show latency competitive with current CPU/GPU architectures and two orders of magnitude less energy usage in comparison to traditional low-energy edge hardware. We offer this example as a proof-of-concept implementation of a neuromorphic controller in a real-world robotic setting, highlighting the benefits of neuromorphic hardware for the development of intelligent controllers for robots.

NeuroBench: Advancing Neuromorphic Computing through Collaborative, Fair and Representative Benchmarking

Apr 15, 2023

Abstract:The field of neuromorphic computing holds great promise in terms of advancing computing efficiency and capabilities by following brain-inspired principles. However, the rich diversity of techniques employed in neuromorphic research has resulted in a lack of clear standards for benchmarking, hindering effective evaluation of the advantages and strengths of neuromorphic methods compared to traditional deep-learning-based methods. This paper presents a collaborative effort, bringing together members from academia and the industry, to define benchmarks for neuromorphic computing: NeuroBench. The goals of NeuroBench are to be a collaborative, fair, and representative benchmark suite developed by the community, for the community. In this paper, we discuss the challenges associated with benchmarking neuromorphic solutions, and outline the key features of NeuroBench. We believe that NeuroBench will be a significant step towards defining standards that can unify the goals of neuromorphic computing and drive its technological progress. Please visit neurobench.ai for the latest updates on the benchmark tasks and metrics.

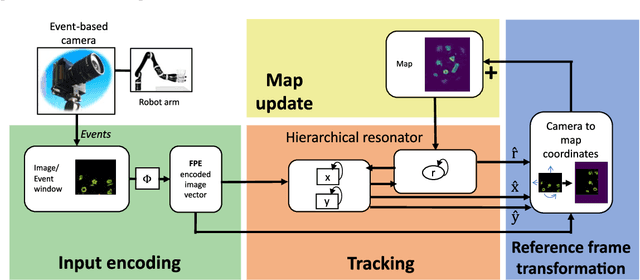

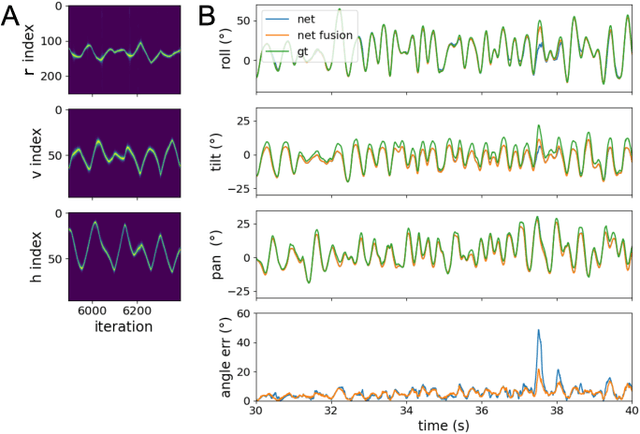

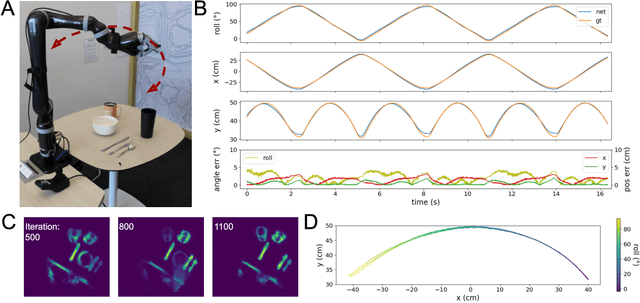

Neuromorphic Visual Odometry with Resonator Networks

Sep 05, 2022

Abstract:Autonomous agents require self-localization to navigate in unknown environments. They can use Visual Odometry (VO) to estimate self-motion and localize themselves using visual sensors. This motion-estimation strategy is not compromised by drift, as inertial sensors are, or by slippage, as wheel encoders are. However, VO with conventional cameras is computationally demanding, limiting its application in systems with strict low-latency, -memory, and -energy requirements. Using event-based cameras and neuromorphic computing hardware offers a promising low-power solution to the VO problem. However, conventional algorithms for VO are not readily convertible to neuromorphic hardware. In this work, we present a VO algorithm built entirely of neuronal building blocks suitable for neuromorphic implementation. The building blocks are groups of neurons representing vectors in the computational framework of Vector Symbolic Architecture (VSA), which was proposed as an abstraction layer to program neuromorphic hardware. The VO network we propose generates and stores a working memory of the presented visual environment. It updates this working memory while at the same time estimating the changing location and orientation of the camera. We demonstrate how VSA can be leveraged as a computing paradigm for neuromorphic robotics. Moreover, our results represent an important step towards using neuromorphic computing hardware for fast and power-efficient VO and the related task of simultaneous localization and mapping (SLAM). We validate this approach experimentally in a robotic task and with an event-based dataset, demonstrating state-of-the-art performance.

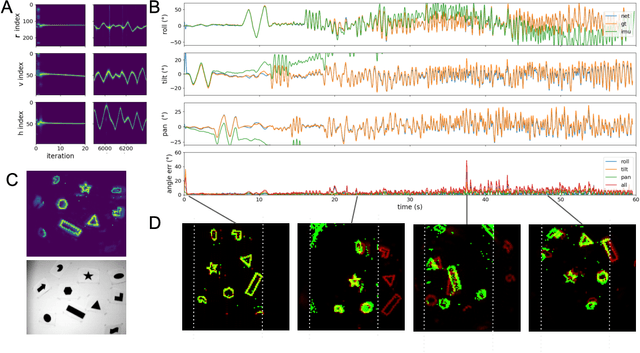

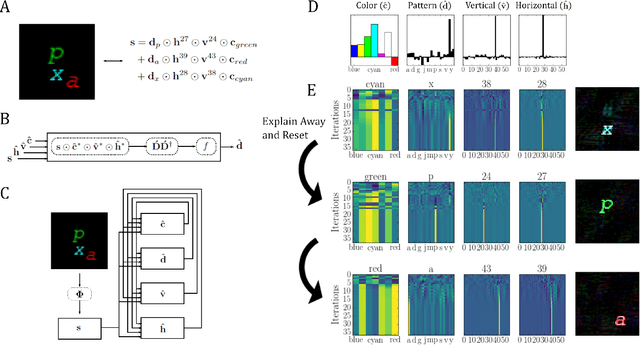

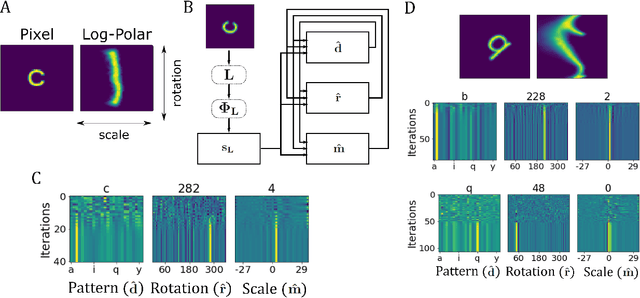

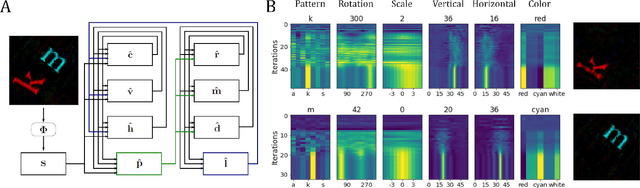

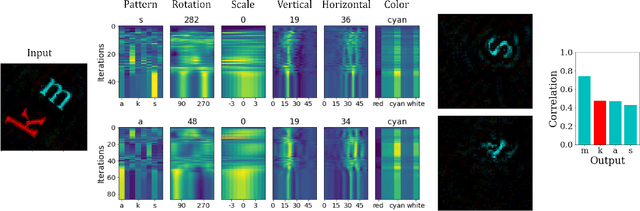

Neuromorphic Visual Scene Understanding with Resonator Networks

Aug 26, 2022

Abstract:Inferring the position of objects and their rigid transformations is still an open problem in visual scene understanding. Here we propose a neuromorphic solution that utilizes an efficient factorization network which is based on three key concepts: (1) a computational framework based on Vector Symbolic Architectures (VSA) with complex-valued vectors; (2) the design of Hierarchical Resonator Networks (HRN) to deal with the non-commutative nature of translation and rotation in visual scenes, when both are used in combination; (3) the design of a multi-compartment spiking phasor neuron model for implementing complex-valued vector binding on neuromorphic hardware. The VSA framework uses vector binding operations to produce generative image models in which binding acts as the equivariant operation for geometric transformations. A scene can therefore be described as a sum of vector products, which in turn can be efficiently factorized by a resonator network to infer objects and their poses. The HRN enables the definition of a partitioned architecture in which vector binding is equivariant for horizontal and vertical translation within one partition, and for rotation and scaling within the other partition. The spiking neuron model allows the resonator network to be mapped onto efficient and low-power neuromorphic hardware. In this work, we demonstrate our approach using synthetic scenes composed of simple 2D shapes undergoing rigid geometric transformations and color changes. A companion paper demonstrates this approach in real-world application scenarios for machine vision and robotics.
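
The phrase "a sum of vector products" can be made concrete with a small sketch of complex-valued VSA algebra. It assumes one common convention that the abstract does not spell out: vectors of unit-magnitude phasors, binding as element-wise multiplication, and unbinding as multiplication by the complex conjugate. All function names are hypothetical. The sketch covers only the algebra; it does not implement a resonator network (which searches for the factors when none are known) or the spiking phasor neuron model.

```python
import cmath
import math
import random


def random_phasor_vector(dim, rng):
    """Vector of unit-magnitude complex numbers with random phases."""
    return [cmath.exp(1j * rng.uniform(-cmath.pi, cmath.pi)) for _ in range(dim)]


def bind(a, b):
    """Bind two vectors by element-wise multiplication (phases add)."""
    return [x * y for x, y in zip(a, b)]


def unbind(s, key):
    """Undo binding with a known key by multiplying with its conjugate."""
    return [x * y.conjugate() for x, y in zip(s, key)]


def bundle(*vectors):
    """Superimpose several vectors by element-wise addition."""
    return [sum(components) for components in zip(*vectors)]


def similarity(a, b):
    """Real part of the normalized inner product; near 0 for unrelated vectors."""
    dot = sum(x * y.conjugate() for x, y in zip(a, b))
    norm_a = math.sqrt(sum(abs(x) ** 2 for x in a))
    norm_b = math.sqrt(sum(abs(y) ** 2 for y in b))
    return dot.real / (norm_a * norm_b)


rng = random.Random(0)
dim = 2048
shape_a, shape_b = random_phasor_vector(dim, rng), random_phasor_vector(dim, rng)
pose_a, pose_b = random_phasor_vector(dim, rng), random_phasor_vector(dim, rng)

# A two-object "scene": each object is a shape vector bound to a pose vector.
scene = bundle(bind(shape_a, pose_a), bind(shape_b, pose_b))

# Querying the scene with a known pose recovers a noisy copy of its shape.
recovered = unbind(scene, pose_a)
```

Here `similarity(recovered, shape_a)` is high while `similarity(recovered, shape_b)` stays close to zero, because the second object turns into pseudo-random noise after unbinding with the wrong pose. The resonator network in the abstract addresses the harder case where neither the shape nor the pose is given in advance.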

What does it mean to represent? Mental representations as falsifiable memory patterns

Mar 11, 2022

Abstract:Representation is a key notion in neuroscience and artificial intelligence (AI). However, a longstanding philosophical debate highlights that specifying what counts as representation is trickier than it seems. With this brief opinion paper we would like to bring the philosophical problem of representation to attention and provide an implementable solution. We note that causal and teleological approaches often assumed by neuroscientists and engineers fail to provide a satisfactory account of representation. We sketch an alternative according to which representations correspond to inferred latent structures in the world, identified on the basis of conditional patterns of activation. These structures are assumed to have certain properties objectively, which allows for planning, prediction, and detection of unexpected events. We illustrate our proposal with the simulation of a simple neural network model. We believe this stronger notion of representation could inform future research in neuroscience and AI.

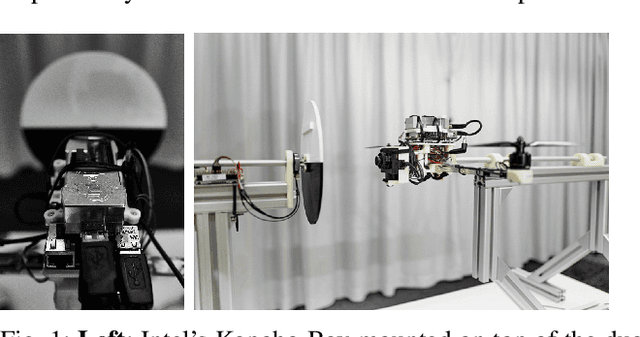

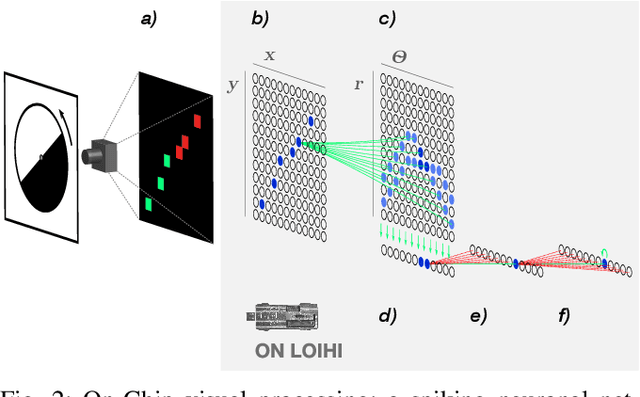

Event-driven Vision and Control for UAVs on a Neuromorphic Chip

Aug 19, 2021

Abstract:Event-based vision sensors achieve up to three orders of magnitude better speed vs. power consumption trade-off in high-speed control of UAVs compared to conventional image sensors. Event-based cameras produce a sparse stream of events that can be processed more efficiently and with a lower latency than images, enabling ultra-fast vision-driven control. Here, we explore how an event-based vision algorithm can be implemented as a spiking neuronal network on a neuromorphic chip and used in a drone controller. We show how seamless integration of event-based perception on chip leads to even faster control rates and lower latency. In addition, we demonstrate how online adaptation of the SNN controller can be realised using on-chip learning. Our spiking neuronal network on chip is the first example of a neuromorphic vision-based controller solving a high-speed UAV control task. The excellent scalability of processing in neuromorphic hardware opens the possibility to solve more challenging visual tasks in the future and integrate visual perception in fast control loops.

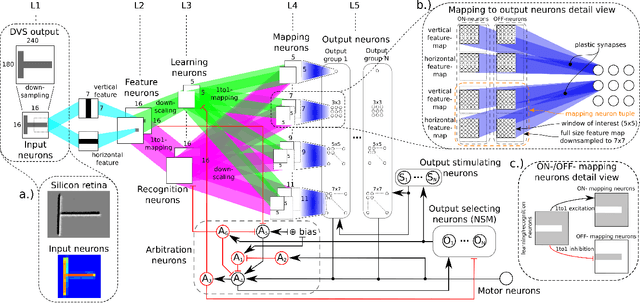

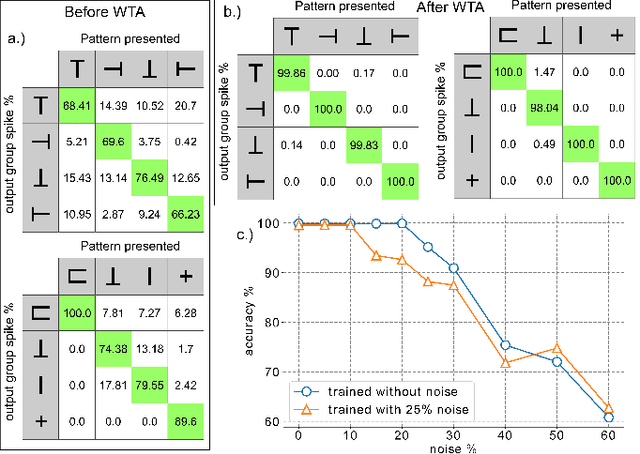

Visual Pattern Recognition with on On-chip Learning: towards a Fully Neuromorphic Approach

Aug 08, 2020

Abstract:We present a spiking neural network (SNN) for visual pattern recognition with on-chip learning on neuromorphic hardware. We show how this network can learn simple visual patterns composed of horizontal and vertical bars sensed by a Dynamic Vision Sensor, using a local spike-based plasticity rule. During recognition, the network classifies the pattern's identity while at the same time estimating its location and scale. We build on previous work that used learning with neuromorphic hardware in the loop and demonstrate that the proposed network can properly operate with on-chip learning, demonstrating a complete neuromorphic pattern learning and recognition setup. Our results show that the network is robust against noise on the input (no accuracy drop when adding 130% noise) and against up to 20% noise in the neuron parameters.

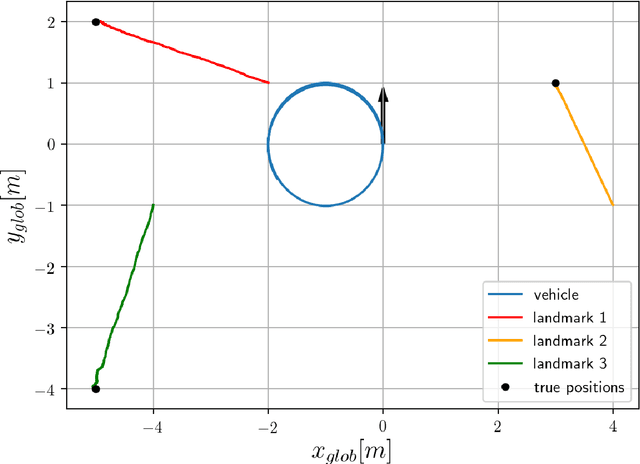

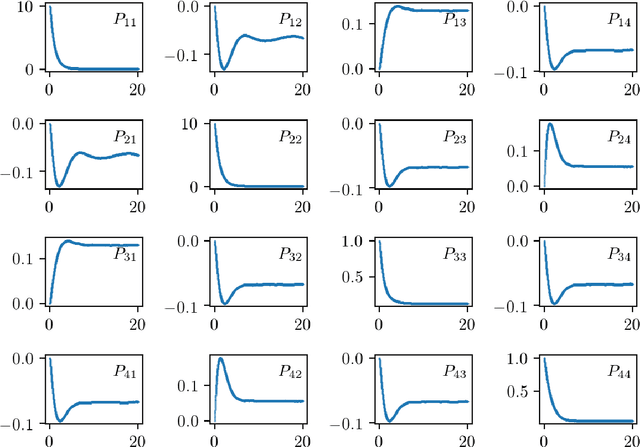

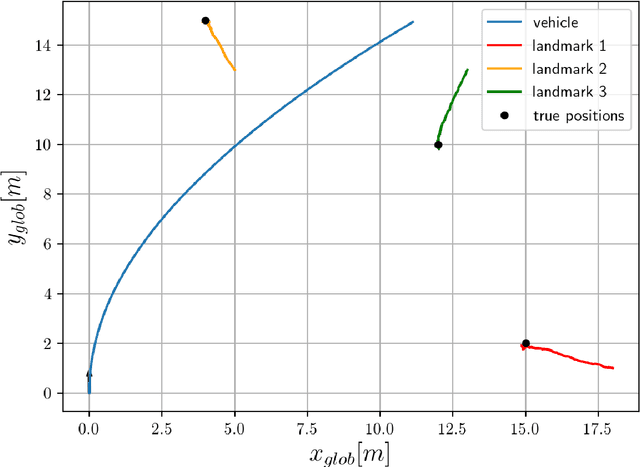

Numerical and experimental realization of analytical SLAM

Nov 15, 2019

Abstract:An analytical approach to the SLAM problem was introduced in recent years. In our work, we investigate the method numerically, with the motivation of using the algorithm in real hardware experiments. We perform a robustness test of the algorithm and apply it to robotic hardware in two different setups. In one, we try to recover a map of the environment using bearing angle measurements and radial distance measurements. The other setup uses only bearing angle information.
