Younes Samih

DialectalArabicMMLU: Benchmarking Dialectal Capabilities in Arabic and Multilingual Language Models

Oct 31, 2025

Abstract:We present DialectalArabicMMLU, a new benchmark for evaluating the performance of large language models (LLMs) across Arabic dialects. While recently developed Arabic and multilingual benchmarks have advanced LLM evaluation for Modern Standard Arabic (MSA), dialectal varieties remain underrepresented despite their prevalence in everyday communication. DialectalArabicMMLU extends the MMLU-Redux framework through manual translation and adaptation of 3K multiple-choice question-answer pairs into five major dialects (Syrian, Egyptian, Emirati, Saudi, and Moroccan), yielding a total of 15K QA pairs across 32 academic and professional domains (22K QA pairs when also including English and MSA). The benchmark enables systematic assessment of LLM reasoning and comprehension beyond MSA, supporting both task-based and linguistic analysis. We evaluate 19 open-weight Arabic and multilingual LLMs (1B-13B parameters) and report substantial performance variation across dialects, revealing persistent gaps in dialectal generalization. DialectalArabicMMLU provides the first unified, human-curated resource for measuring dialectal understanding in Arabic, thus promoting more inclusive evaluation and future model development.

Large Language Models as Code Executors: An Exploratory Study

Oct 10, 2024

Abstract:The capabilities of Large Language Models (LLMs) have significantly evolved, extending from natural language processing to complex tasks like code understanding and generation. We expand the scope of LLMs' capabilities to a broader context, using LLMs to execute code snippets to obtain the output. This paper pioneers the exploration of LLMs as code executors, where code snippets are directly fed to the models for execution, and outputs are returned. We are the first to comprehensively examine this feasibility across various LLMs, including OpenAI's o1, GPT-4o, GPT-3.5, DeepSeek, and Qwen-Coder. Notably, the o1 model achieved over 90% accuracy in code execution, while others demonstrated lower accuracy levels. Furthermore, we introduce an Iterative Instruction Prompting (IIP) technique that processes code snippets line by line, enhancing the accuracy of weaker models by an average of 7.22% (with the highest improvement of 18.96%) and an absolute average improvement of 3.86% against CoT prompting (with the highest improvement of 19.46%). Our study not only highlights the transformative potential of LLMs in coding but also lays the groundwork for future advancements in automated programming and the completion of complex tasks.
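The abstract above says only that IIP processes code snippets line by line. As a rough illustration of what line-by-line prompting could look like, the sketch below builds one prompt per source line. The function name `build_stepwise_prompts` and the prompt wording are hypothetical and are not taken from the paper; no model is called.

```python
def build_stepwise_prompts(code: str) -> list[str]:
    """Build one prompt per non-empty source line (illustrative only).

    Each prompt shows the lines handled so far and asks for the effect
    of the next line. The wording is an assumption, not the paper's.
    """
    lines = [ln for ln in code.splitlines() if ln.strip()]
    prompts = []
    for i, line in enumerate(lines, start=1):
        # Lines before the current one, or a placeholder for the first step.
        executed = "\n".join(lines[: i - 1]) or "(none)"
        prompts.append(
            f"Lines already executed:\n{executed}\n\n"
            f"Now execute line {i}: {line}\n"
            "Report the updated variable values and any printed output."
        )
    return prompts


snippet = "x = 1\n\ny = x + 2\nprint(y)"
for prompt in build_stepwise_prompts(snippet):
    print(prompt)
    print("---")
```

A flat pass over source lines like this ignores control flow such as loops and branches, so it should be read as a toy view of the idea rather than a working executor.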

Can a Multichoice Dataset be Repurposed for Extractive Question Answering?

Apr 26, 2024

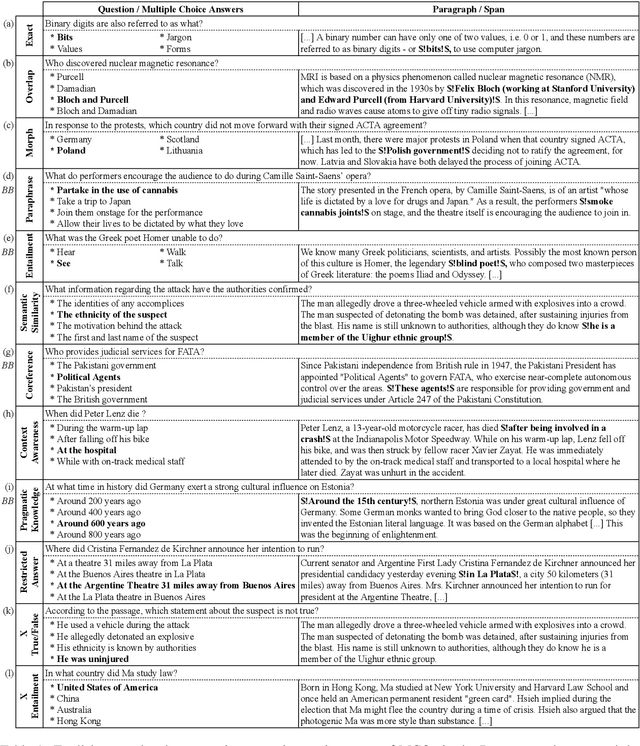

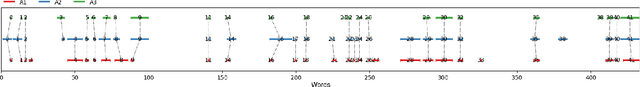

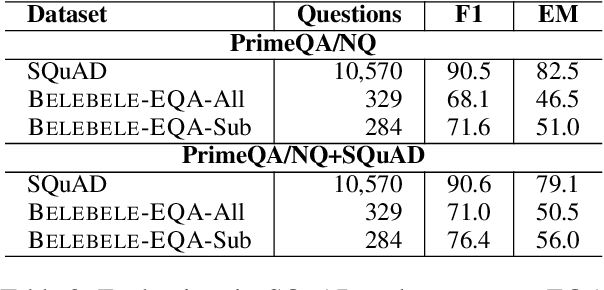

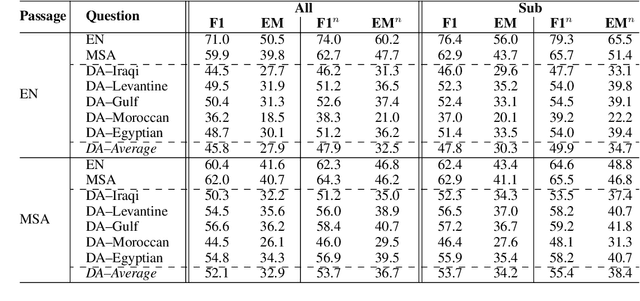

Abstract:The rapid evolution of Natural Language Processing (NLP) has favored major languages such as English, leaving a significant gap for many others due to limited resources. This is especially evident in the context of data annotation, a task whose importance cannot be underestimated, but which is time-consuming and costly. Thus, any dataset for resource-poor languages is precious, in particular when it is task-specific. Here, we explore the feasibility of repurposing existing datasets for a new NLP task: we repurposed the Belebele dataset (Bandarkar et al., 2023), which was designed for multiple-choice question answering (MCQA), to enable extractive QA (EQA) in the style of machine reading comprehension. We present annotation guidelines and a parallel EQA dataset for English and Modern Standard Arabic (MSA). We also present QA evaluation results for several monolingual and cross-lingual QA pairs including English, MSA, and five Arabic dialects. Our aim is to enable others to adapt our approach for the 120+ other language variants in Belebele, many of which are deemed under-resourced. We also conduct a thorough analysis and share our insights from the process, which we hope will contribute to a deeper understanding of the challenges and the opportunities associated with task reformulation in NLP research.

Multilingual Nonce Dependency Treebanks: Understanding how LLMs represent and process syntactic structure

Nov 13, 2023

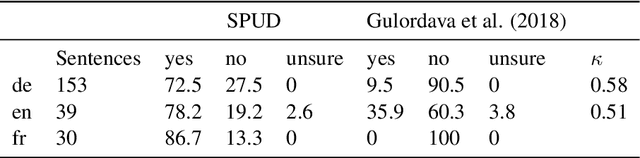

Abstract:We introduce SPUD (Semantically Perturbed Universal Dependencies), a framework for creating nonce treebanks for the multilingual Universal Dependencies (UD) corpora. SPUD data satisfies syntactic argument structure, provides syntactic annotations, and ensures grammaticality via language-specific rules. We create nonce data in Arabic, English, French, German, and Russian, and demonstrate two use cases of SPUD treebanks. First, we investigate the effect of nonce data on word co-occurrence statistics, as measured by perplexity scores of autoregressive (ALM) and masked language models (MLM). We find that ALM scores are significantly more affected by nonce data than MLM scores. Second, we show how nonce data affects the performance of syntactic dependency probes. We replicate the findings of Müller-Eberstein et al. (2022) on nonce test data and show that the performance declines on both MLMs and ALMs with respect to the original test data. However, a majority of the performance is kept, suggesting that the probe indeed learns syntax independently from semantics.
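For readers unfamiliar with the perplexity scores mentioned above, the following is a minimal sketch of the standard definition for an autoregressive model: the exponential of the negative mean token log-probability. The helper name `perplexity` and the toy probabilities are assumptions for illustration; the abstract does not state how scores were computed for masked models.

```python
import math


def perplexity(token_log_probs: list[float]) -> float:
    """Perplexity = exp(-(1/N) * sum of natural-log token probabilities)."""
    if not token_log_probs:
        raise ValueError("need at least one token log-probability")
    return math.exp(-sum(token_log_probs) / len(token_log_probs))


# Toy example: three tokens with made-up probabilities 0.5, 0.25, 0.125.
log_probs = [math.log(0.5), math.log(0.25), math.log(0.125)]
print(perplexity(log_probs))
```

Lower values mean the model finds the sequence more predictable, so a jump in perplexity on nonce sentences indicates reliance on word co-occurrence statistics.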

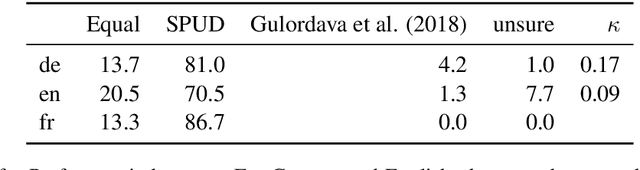

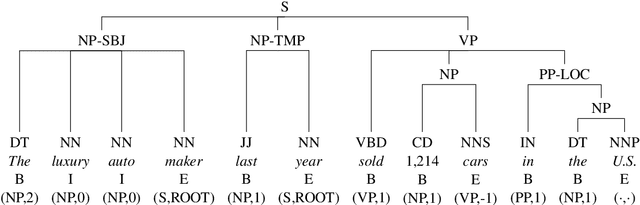

Probing for Constituency Structure in Neural Language Models

Apr 13, 2022

Abstract:In this paper, we investigate to what extent contextual neural language models (LMs) implicitly learn syntactic structure. More concretely, we focus on constituent structure as represented in the Penn Treebank (PTB). Using standard probing techniques based on diagnostic classifiers, we assess the accuracy of representing constituents of different categories within the neuron activations of an LM such as RoBERTa. In order to make sure that our probe focuses on syntactic knowledge and not on implicit semantic generalizations, we also experiment on a PTB version that is obtained by randomly replacing constituents with each other while keeping syntactic structure, i.e., a semantically ill-formed but syntactically well-formed version of the PTB. We find that 4 pretrained transformer LMs obtain high performance on our probing tasks even on manipulated data, suggesting that semantic and syntactic knowledge in their representations can be separated and that constituency information is in fact learned by the LM. Moreover, we show that a complete constituency tree can be linearly separated from LM representations.

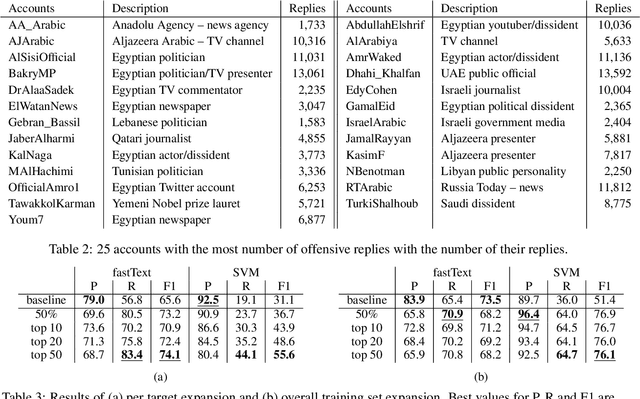

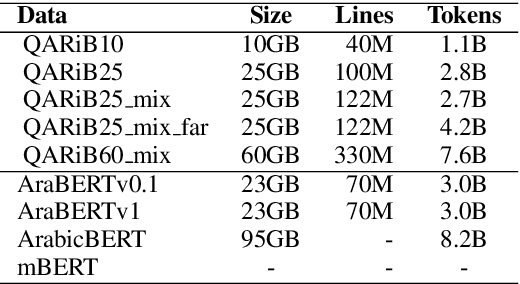

Automatic Expansion and Retargeting of Arabic Offensive Language Training

Nov 18, 2021

Abstract:Rampant use of offensive language on social media has led to recent efforts on automatic identification of such language. Though offensive language has general characteristics, attacks on specific entities may exhibit distinct phenomena such as malicious alterations in the spelling of names. In this paper, we present a method for identifying entity-specific offensive language. We employ two key insights, namely that replies on Twitter often imply opposition and that some accounts are persistent in their offensiveness towards specific targets. Using our methodology, we are able to collect thousands of targeted offensive tweets. We show the efficacy of the approach on Arabic tweets with 13% and 79% relative F1-measure improvement in entity-specific offensive language detection when using deep-learning-based and support-vector-machine-based classifiers, respectively. Further, expanding the training set with automatically identified offensive tweets directed at multiple entities can improve F1-measure by 48%.
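Since the abstract above reports relative F1 improvements, a small sketch of the difference between relative and absolute improvement may help when reading the figures. The function names and the example scores are made up for illustration and are not results from the paper.

```python
def relative_improvement(baseline: float, improved: float) -> float:
    """Gain expressed as a fraction of the baseline score."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (improved - baseline) / baseline


def absolute_improvement(baseline: float, improved: float) -> float:
    """Gain expressed in raw score points."""
    return improved - baseline


# Hypothetical scores: a baseline F1 of 0.50 that rises to 0.565.
base_f1, new_f1 = 0.50, 0.565
print(f"relative: {relative_improvement(base_f1, new_f1):.1%}")
print(f"absolute: {absolute_improvement(base_f1, new_f1):.3f}")
```

The same absolute gain gives a larger relative figure when the baseline is low, which is worth keeping in mind when comparing improvements across classifiers with different starting points.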

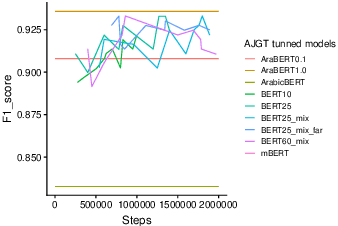

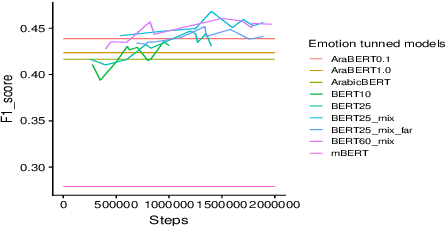

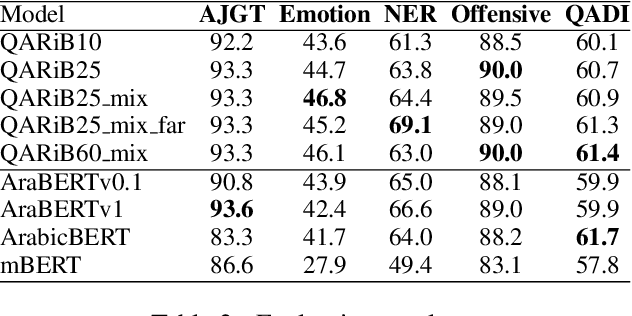

Pre-Training BERT on Arabic Tweets: Practical Considerations

Feb 21, 2021

Abstract:Pretraining Bidirectional Encoder Representations from Transformers (BERT) for downstream NLP tasks is a non-trivial task. We pretrained 5 BERT models that differ in the size of their training sets, mixture of formal and informal Arabic, and linguistic preprocessing. All are intended to support Arabic dialects and social media. The experiments highlight the centrality of data diversity and the efficacy of linguistically aware segmentation. They also highlight that more data or more training steps do not necessarily yield better models. Our new models achieve new state-of-the-art results on several downstream tasks. The resulting models are released to the community under the name QARiB.

Arabic Dialect Identification in the Wild

May 15, 2020

Abstract:We present QADI, an automatically collected dataset of tweets belonging to a wide range of country-level Arabic dialects, covering 18 different countries in the Middle East and North Africa region. Our method for building this dataset relies on applying multiple filters to identify users who belong to different countries based on their account descriptions and to eliminate tweets that are either written in Modern Standard Arabic or contain inappropriate language. The resultant dataset contains 540k tweets from 2,525 users who are evenly distributed across 18 Arab countries. Using intrinsic evaluation, we show that the labels of a set of randomly selected tweets are 91.5% accurate. For extrinsic evaluation, we are able to build effective country-level dialect identification on tweets with a macro-averaged F1-score of 60.6% across 18 classes.
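The macro-averaged F1-score cited above weights every class equally regardless of how many examples it has. A minimal pure-Python sketch of the metric follows; the helper name `macro_f1` and the three toy country labels are assumptions for illustration, not QADI data.

```python
from collections import Counter


def macro_f1(gold: list[str], pred: list[str]) -> float:
    """Compute F1 per class, then take the unweighted mean over classes."""
    labels = sorted(set(gold) | set(pred))
    tp, fp, fn = Counter(), Counter(), Counter()
    for g, p in zip(gold, pred):
        if g == p:
            tp[g] += 1
        else:
            fp[p] += 1  # predicted class gains a false positive
            fn[g] += 1  # true class gains a false negative
    scores = []
    for label in labels:
        denom = 2 * tp[label] + fp[label] + fn[label]
        # F1 = 2TP / (2TP + FP + FN); defined as 0 when the class never appears.
        scores.append(2 * tp[label] / denom if denom else 0.0)
    return sum(scores) / len(scores)


# Toy labels only.
gold = ["EG", "EG", "SA", "MA", "MA", "MA"]
pred = ["EG", "SA", "SA", "MA", "MA", "EG"]
print(round(macro_f1(gold, pred), 3))
```

Because each class contributes equally, weak performance on a few dialects pulls the macro average down even if the frequent classes are classified well.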

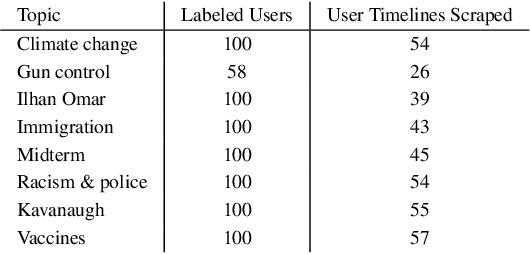

A Few Topical Tweets are Enough for Effective User-Level Stance Detection

Apr 07, 2020

Abstract:Stance detection entails ascertaining the position of a user towards a target, such as an entity, topic, or claim. Recent work that employs unsupervised classification has shown that performing stance detection on vocal Twitter users, who have many tweets on a target, can yield very high accuracy (over 98%). However, such methods perform poorly or fail completely for less vocal users, who may have authored only a few tweets about a target. In this paper, we tackle stance detection for such users using two approaches. In the first approach, we improve user-level stance detection by representing tweets using contextualized embeddings, which capture latent meanings of words in context. We show that this approach outperforms two strong baselines and achieves 89.6% accuracy and 91.3% macro F-measure on eight controversial topics. In the second approach, we expand the tweets of a given user using their Twitter timeline tweets, and then we perform unsupervised classification of the user, which entails clustering a user with other users in the training set. This approach achieves 95.6% accuracy and 93.1% macro F-measure.

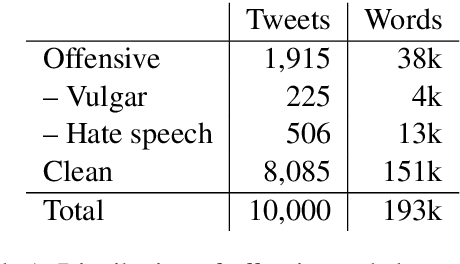

Arabic Offensive Language on Twitter: Analysis and Experiments

Apr 05, 2020

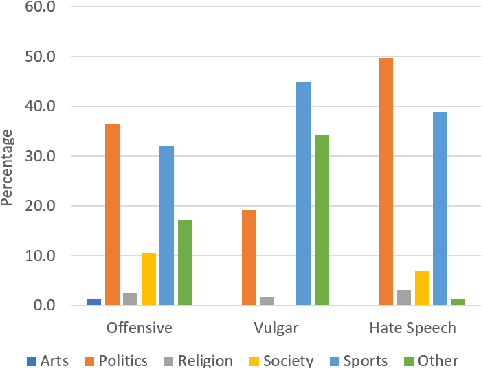

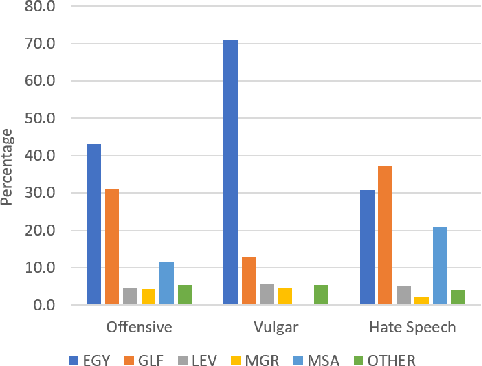

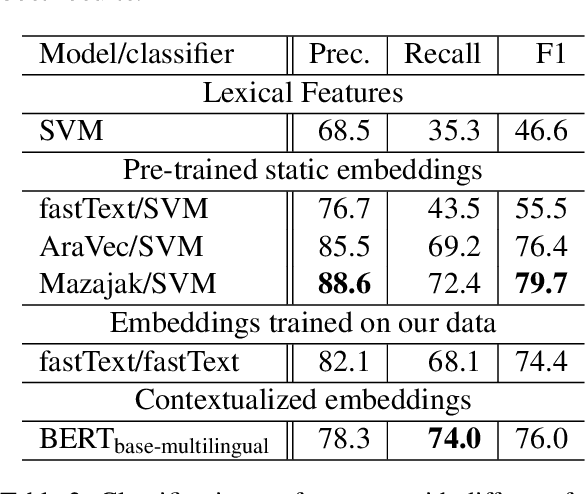

Abstract:Detecting offensive language on Twitter has many applications ranging from detecting/predicting bullying to measuring polarization. In this paper, we focus on building effective Arabic offensive tweet detection. We introduce a method for building an offensive dataset that is not biased by topic, dialect, or target. We produce the largest Arabic dataset to date with special tags for vulgarity and hate speech. Next, we analyze the dataset to determine which topics, dialects, and gender are most associated with offensive tweets and how Arabic speakers use offensive language. Lastly, we conduct a large battery of experiments to produce strong results (F1 = 79.7) on the dataset using Support Vector Machine techniques.
