Yihong Wang

Predicting Neo-Adjuvant Chemotherapy Response in Triple-Negative Breast Cancer Using Pre-Treatment Histopathologic Images

May 20, 2025

Abstract:Triple-negative breast cancer (TNBC) is an aggressive subtype defined by the lack of estrogen receptor (ER), progesterone receptor (PR), and human epidermal growth factor receptor 2 (HER2) expression, resulting in limited targeted treatment options. Neoadjuvant chemotherapy (NACT) is the standard treatment for early-stage TNBC, with pathologic complete response (pCR) serving as a key prognostic marker; however, only 40-50% of patients with TNBC achieve pCR. Accurate prediction of NACT response is crucial to optimize therapy, avoid ineffective treatments, and improve patient outcomes. In this study, we developed a deep learning model to predict NACT response using pre-treatment hematoxylin and eosin (H&E)-stained biopsy images. Our model achieved promising results in five-fold cross-validation (accuracy: 82%, AUC: 0.86, F1-score: 0.84, sensitivity: 0.85, specificity: 0.81, precision: 0.80). Analysis of model attention maps in conjunction with multiplexed immunohistochemistry (mIHC) data revealed that regions of high predictive importance consistently colocalized with tumor areas showing elevated PD-L1 expression, CD8+ T-cell infiltration, and CD163+ macrophage density, all established biomarkers of treatment response. Our findings indicate that incorporating IHC-derived immune profiling data could substantially improve model interpretability and predictive performance. Furthermore, this approach may accelerate the discovery of novel histopathological biomarkers for NACT and advance the development of personalized treatment strategies for TNBC patients.
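The metrics quoted in this abstract (accuracy, sensitivity, specificity, precision, F1-score) all derive from the four cells of a binary confusion matrix. The sketch below shows those standard definitions. The function name and the counts are hypothetical and are not taken from the paper, whose reported values come from five-fold cross-validation and cannot be reconstructed from the abstract alone.

```python
def classification_metrics(tp, fp, tn, fn):
    """Compute standard binary-classification metrics from confusion counts.

    tp/fn: responders (e.g. pCR) predicted correctly / missed.
    tn/fp: non-responders predicted correctly / flagged wrongly.
    """
    sensitivity = tp / (tp + fn)          # true-positive rate (recall)
    specificity = tn / (tn + fp)          # true-negative rate
    precision = tp / (tp + fp)            # positive predictive value
    accuracy = (tp + tn) / (tp + fp + tn + fn)
    f1 = 2 * precision * sensitivity / (precision + sensitivity)
    return {
        "accuracy": accuracy,
        "sensitivity": sensitivity,
        "specificity": specificity,
        "precision": precision,
        "f1": f1,
    }


# Hypothetical counts for a single validation fold (illustrative only).
metrics = classification_metrics(tp=40, fp=10, tn=40, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

AUC is not included because it requires the model's continuous scores rather than thresholded predictions.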

Large Language Model Can Be a Foundation for Hidden Rationale-Based Retrieval

Dec 21, 2024

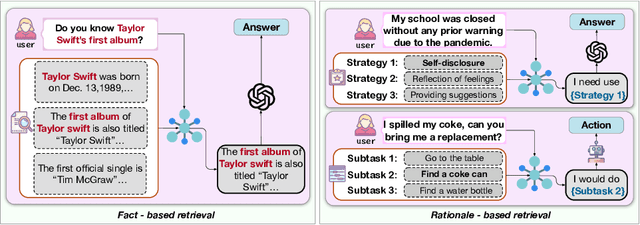

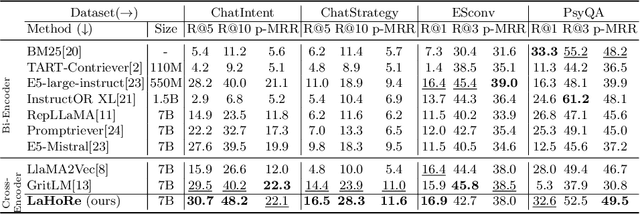

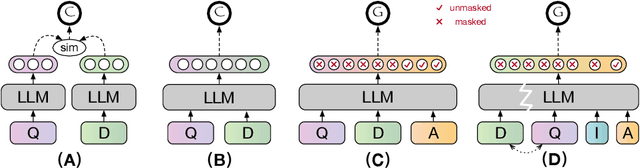

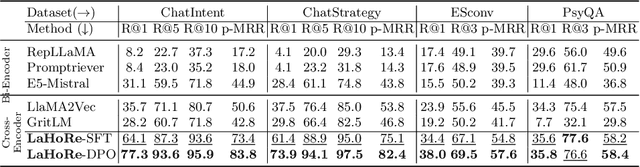

Abstract:Despite recent advances in Retrieval-Augmented Generation (RAG) systems, most retrieval methodologies are developed for factual retrieval, which assumes that the query and positive documents are semantically similar. In this paper, we instead propose and study a more challenging type of retrieval task, called hidden rationale retrieval, in which the query and document are not similar but their relation can be inferred through reasoning chains, logical relationships, or empirical experience. To address such problems, an instruction-tuned large language model (LLM) with a cross-encoder architecture could be a reasonable choice. To further strengthen pioneering LLM-based retrievers, we design a special instruction that transforms the retrieval task into a generative task by prompting the LLM to answer a binary-choice question. The model can be fine-tuned with direct preference optimization (DPO). The framework is also optimized for computational efficiency with no performance degradation. We name this retrieval framework RaHoRe and verify its superior zero-shot and fine-tuned performance on Emotional Support Conversation (ESC) compared with previous retrieval work. Our study suggests the potential to employ LLMs as a foundation for a wider scope of retrieval tasks. Our code, models, and datasets are available at https://github.com/flyfree5/LaHoRe.
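One way to picture retrieval cast as a binary-choice question is to turn the model's logits for the two answer tokens into a relevance score and rank candidates by it. The sketch below is an assumption for illustration, not the paper's implementation: the abstract does not state how the score is computed, and the function names, candidate IDs, and logit values are all hypothetical.

```python
import math


def binary_choice_score(logit_yes, logit_no):
    """Softmax probability of the 'yes' option over the two answer logits."""
    m = max(logit_yes, logit_no)          # subtract the max for numerical stability
    e_yes = math.exp(logit_yes - m)
    e_no = math.exp(logit_no - m)
    return e_yes / (e_yes + e_no)


def rank_documents(candidate_logits):
    """Sort candidates by descending relevance score.

    candidate_logits maps a document ID to a (logit_yes, logit_no) pair that
    an LLM would produce for the prompt built from the query and that document.
    """
    scored = [
        (doc_id, binary_choice_score(yes, no))
        for doc_id, (yes, no) in candidate_logits.items()
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical logits for three candidate replies to one query.
candidate_logits = {
    "reply_a": (2.0, 0.5),
    "reply_b": (-1.0, 1.0),
    "reply_c": (0.3, 0.3),
}
ranking = rank_documents(candidate_logits)
for doc_id, score in ranking:
    print(doc_id, round(score, 3))
```

In a real system the logit pairs would come from one forward pass of the LLM per query-document pair, which is the cross-encoder cost the abstract's efficiency optimization is meant to reduce.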

Alleviating Hallucinations in Large Language Models with Scepticism Modeling

Sep 10, 2024

Abstract:Hallucination is a major challenge for large language models (LLMs) and prevents their adoption in diverse fields. Uncertainty estimation could be used to alleviate the damage caused by hallucinations. The human emotion of skepticism could be useful for enhancing a model's ability of self-estimation. Inspired by this observation, we propose a new approach called Skepticism Modeling (SM). This approach is formalized by combining token and logit information for self-estimation. We construct doubt-emotion-aware data, perform continual pre-training, and then fine-tune the LLMs to improve their ability of self-estimation. Experimental results demonstrate that this new approach effectively enhances a model's ability to estimate its uncertainty, and out-of-domain experiments validate its generalization to other tasks.

LB-KBQA: Large-language-model and BERT based Knowledge-Based Question and Answering System

Feb 09, 2024

Abstract:Generative Artificial Intelligence (AI), because of its emergent abilities, has empowered various fields. One of its typical application fields is large language models (LLMs), whose natural language understanding capability is dramatically improved compared with conventional AI-based methods. Natural language understanding capability has always been a barrier to the intent recognition performance of the Knowledge-Based-Question-and-Answer (KBQA) system, a barrier that arises from linguistic diversity and newly appearing intents. Conventional AI-based methods for intent recognition can be divided into semantic parsing-based and model-based approaches. However, both approaches suffer from limited resources in intent recognition. To address this issue, we propose a novel KBQA system based on a Large Language Model (LLM) and BERT (LB-KBQA). With the help of generative AI, our proposed method can detect newly appearing intents and acquire new knowledge. In experiments on financial domain question answering, our model has demonstrated superior effectiveness.

ITRE: Low-light Image Enhancement Based on Illumination Transmission Ratio Estimation

Oct 08, 2023

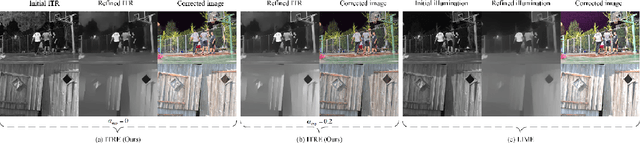

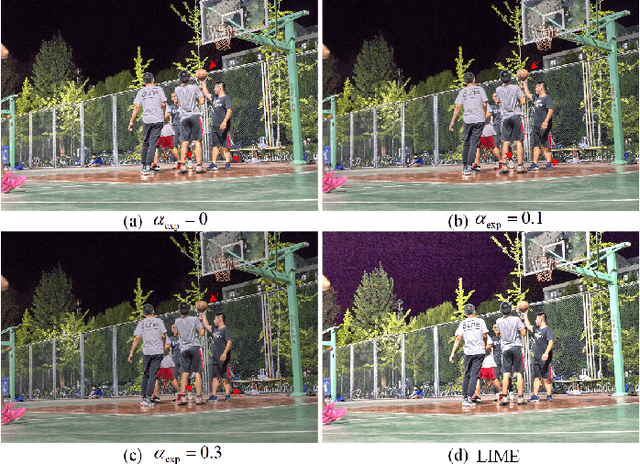

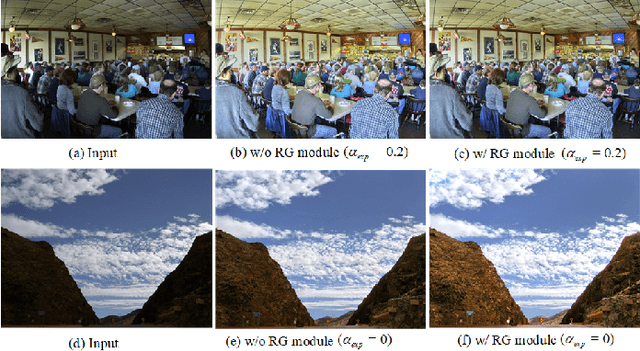

Abstract:Noise, artifacts, and over-exposure are significant challenges in the field of low-light image enhancement. Existing methods often struggle to address these issues simultaneously. In this paper, we propose a novel Retinex-based method, called ITRE, which suppresses noise and artifacts from the origin of the model and prevents over-exposure throughout the enhancement process. Specifically, we assume that there must exist a pixel which is least disturbed by low light among pixels of the same color. First, we cluster the pixels in the RGB color space to find the Illumination Transmission Ratio (ITR) matrix of the whole image, which ensures that noise is not easily over-amplified. Next, we consider the ITR of the image as the initial illumination transmission map to construct a base model for the refined transmission map, which prevents artifacts. Additionally, we design an over-exposure module that captures the fundamental characteristics of pixel over-exposure and seamlessly integrate it into the base model. Finally, there is a possibility of weak enhancement when the inter-class distance of pixels with the same color is too small. To counteract this, we design a Robust-Guard module that safeguards the robustness of the image enhancement process. Extensive experiments demonstrate the effectiveness of our approach in suppressing noise, preventing artifacts, and controlling the over-exposure level simultaneously. Our method shows superior performance in qualitative and quantitative evaluations compared with state-of-the-art methods.

Computer-Aided Diagnosis of Label-Free 3-D Optical Coherence Microscopy Images of Human Cervical Tissue

Sep 17, 2018

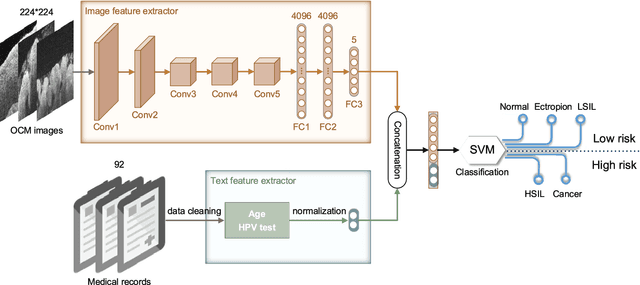

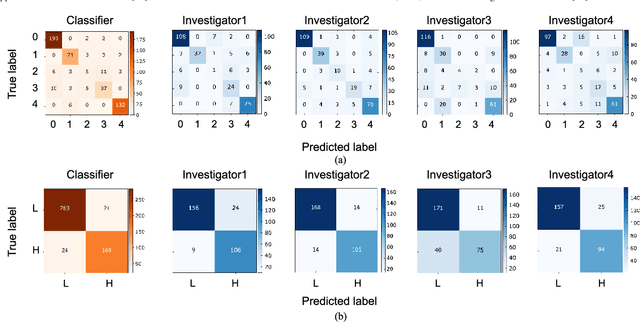

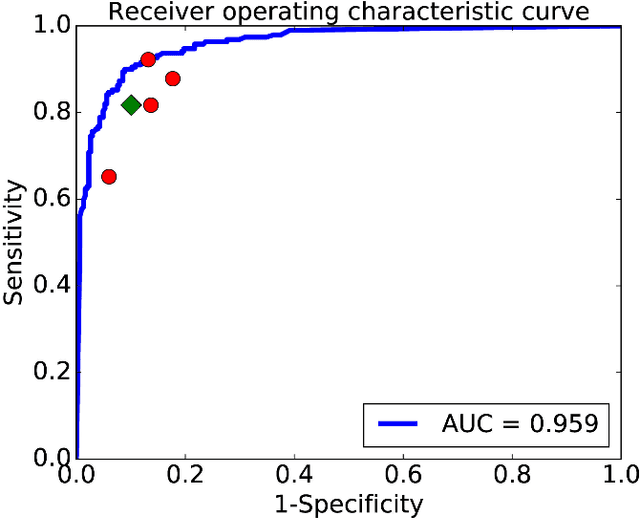

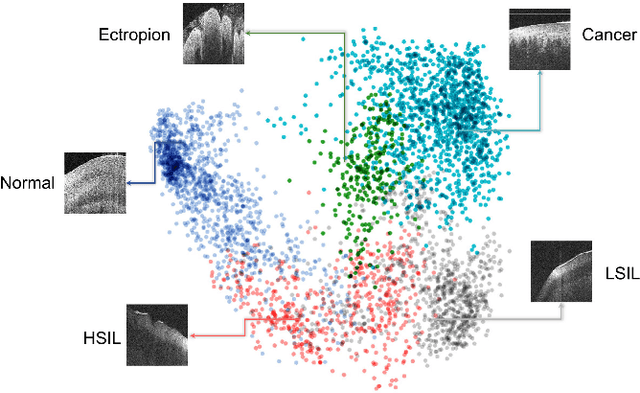

Abstract:Objective: Ultrahigh-resolution optical coherence microscopy (OCM) has recently demonstrated its potential for accurate diagnosis of human cervical diseases. One major challenge for clinical adoption, however, is the steep learning curve clinicians need to overcome to interpret OCM images. Developing an intelligent technique for computer-aided diagnosis (CADx) to accurately interpret OCM images will facilitate clinical adoption of the technology and improve patient care. Methods: 497 high-resolution 3-D OCM volumes (600 cross-sectional images each) were collected from 159 ex vivo specimens of 92 female patients. OCM image features were extracted using a convolutional neural network (CNN) model, concatenated with patient information (e.g., age, HPV results), and classified using a support vector machine classifier. Ten-fold cross-validation was used to test the performance of the CADx method in a five-class classification task and a binary classification task. Results: An 88.3 ± 4.9% classification accuracy was achieved for five fine-grained classes of cervical tissue, namely normal, ectropion, low-grade and high-grade squamous intraepithelial lesions (LSIL and HSIL), and cancer. In the binary classification task (low-risk [normal, ectropion and LSIL] vs. high-risk [HSIL and cancer]), the CADx method achieved an area-under-the-curve (AUC) value of 0.959 with an 86.7 ± 11.4% sensitivity and 93.5 ± 3.8% specificity. Conclusion: The proposed deep-learning based CADx method outperformed three human experts. It was also able to identify morphological characteristics in OCM images that were consistent with histopathological interpretations. Significance: Label-free OCM imaging, combined with deep-learning based CADx methods, holds great promise for use in clinical settings for the effective screening and diagnosis of cervical diseases.
