Yazhan Zhang

Motion-R3: Fast and Accurate Motion Annotation via Representation-based Representativeness Ranking

Apr 04, 2023

Abstract: In this paper, we follow a data-centric philosophy and propose a novel motion annotation method based on the inherent representativeness of motion data in a given dataset. Specifically, we propose a Representation-based Representativeness Ranking (R3) method that ranks all motion data in a given dataset according to their representativeness in a learned motion representation space. We further propose a novel dual-level motion contrastive learning method to learn the motion representation space in a more informative way. Thanks to its high efficiency, our method is particularly responsive to frequent requirement changes and enables agile development of motion annotation models. Experimental results on the HDM05 dataset against state-of-the-art methods demonstrate the superiority of our method.
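
The abstract does not say how representativeness is scored, so the following is only a minimal sketch of the ranking idea under a stated assumption: a sample counts as more representative when its embedding has a higher mean cosine similarity to all other embeddings. The function names and the toy 2-D embeddings are hypothetical and are not taken from the paper.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def rank_by_representativeness(embeddings):
    """Return sample indices ordered from most to least representative.

    Assumption (not from the paper): representativeness is the mean
    cosine similarity of a sample to every other sample.
    """
    n = len(embeddings)
    scores = []
    for i in range(n):
        total = sum(
            cosine(embeddings[i], embeddings[j]) for j in range(n) if j != i
        )
        scores.append(total / (n - 1))
    return sorted(range(n), key=lambda i: scores[i], reverse=True)


# Toy embeddings: three similar samples and one outlier (index 3).
embeddings = [[1.0, 0.0], [0.9, 0.1], [0.8, 0.2], [0.0, 1.0]]
order = rank_by_representativeness(embeddings)
print(order)
```

Under this assumed score, an annotator would label the samples at the front of `order` first, and the outlier lands at the end of the ranking.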

Diverse Motion In-betweening with Dual Posture Stitching

Mar 25, 2023

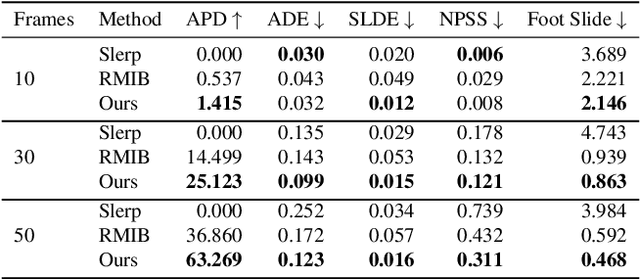

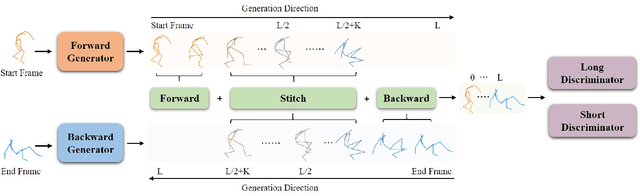

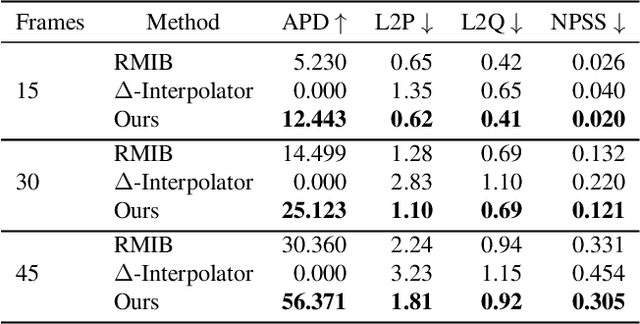

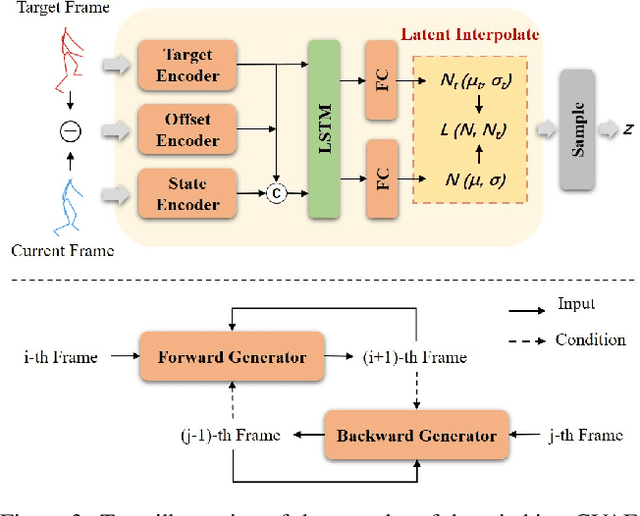

Abstract: In-betweening is a technique for generating transitions given initial and target character states. The majority of existing works require multiple (often $>10$) frames as input, which are not always accessible. Our work deals with a focused yet challenging problem: generating the transition when given exactly two frames (only the first and last). To cope with this challenging scenario, we implement a bi-directional scheme that generates forward and backward transitions from the start and end frames with two adversarial autoregressive networks, and stitches them in the middle of the transition, where there is no strict ground truth. The autoregressive networks, based on conditional variational autoencoders (CVAE), are optimized by searching for a pair of optimal latent codes that minimize a novel stitching loss between their outputs. Results show that our method achieves higher motion quality and more diverse results than existing methods on both the LaFAN1 and Human3.6m datasets.

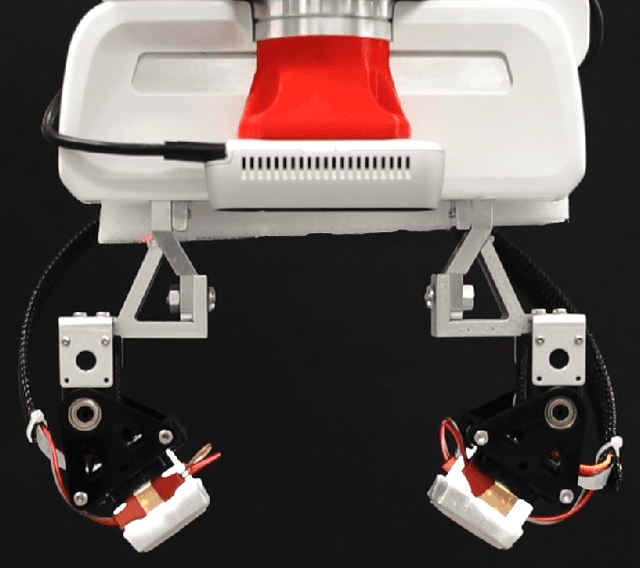

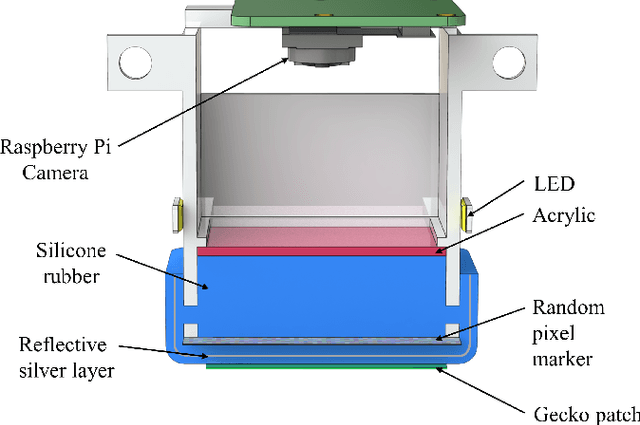

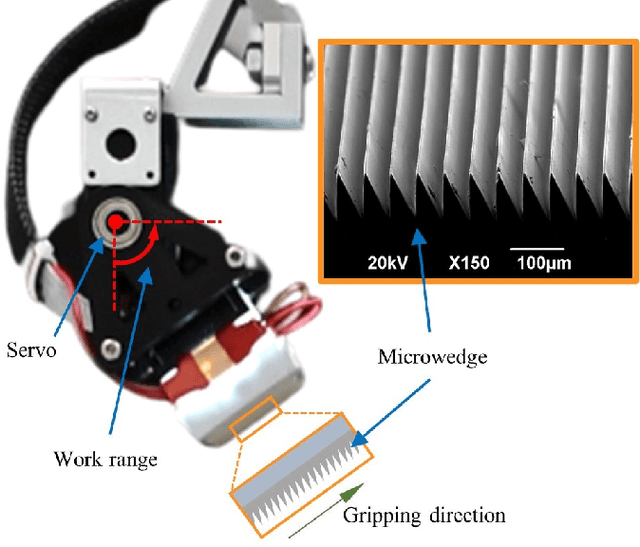

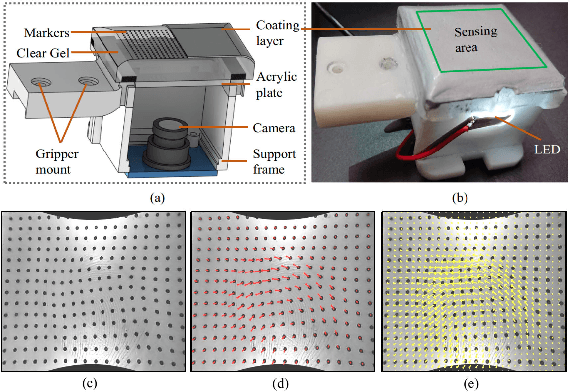

Viko: An Adaptive Gecko Gripper with Vision-based Tactile Sensor

May 03, 2021

Abstract: Monitoring the state of contact is essential for robotic devices, especially grippers that implement gecko-inspired adhesives, where intimate contact is crucial for a firm attachment. However, due to the lack of deformable sensors, few have demonstrated tactile sensing for gecko grippers. We present Viko, an adaptive gecko gripper that utilizes vision-based tactile sensors to monitor contact state. The sensor provides high-resolution real-time measurements of contact area and shear force. Moreover, the sensor is adaptive, low-cost, and compact. We integrated gecko-inspired adhesives into the sensor surface without impeding its adaptiveness and performance. Using a robotic arm, we evaluate the performance of the gripper in a series of grasping tests. The gripper has a maximum payload of 8 N even at a low fingertip pitch angle of 30 degrees. We also showcase the gripper's ability to adjust fingertip pose for better contact using sensor feedback. Further, everyday object picking is presented as a demonstration of the gripper's adaptiveness.

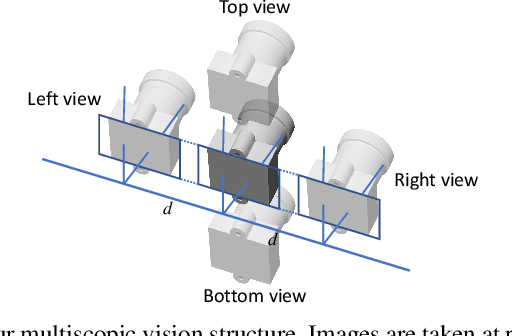

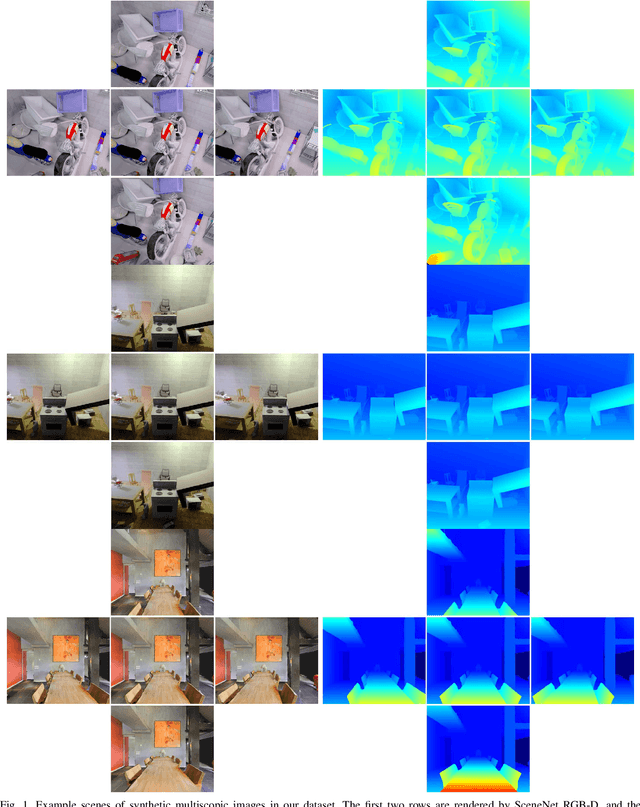

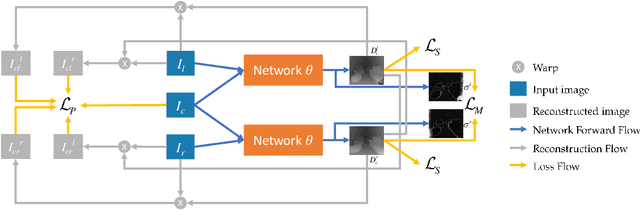

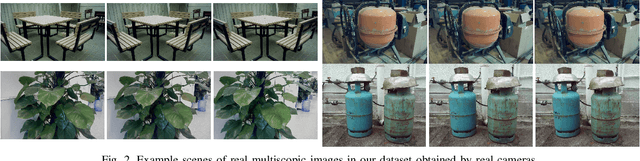

Stereo Matching by Self-supervision of Multiscopic Vision

Apr 09, 2021

Abstract: Self-supervised learning for depth estimation possesses several advantages over supervised learning. Its benefits, namely no need for ground-truth depth, online fine-tuning, and better generalization with unlimited data, attract researchers to seek self-supervised solutions. In this work, we propose a new self-supervised framework for stereo matching that utilizes multiple images captured at aligned camera positions. A cross photometric loss, an uncertainty-aware mutual-supervision loss, and a new smoothness loss are introduced to optimize the network in learning disparity maps end-to-end without ground-truth depth information. To train this framework, we build a new multiscopic dataset consisting of synthetic images rendered by 3D engines and real images captured by real cameras. After being trained with only the synthetic images, our network performs well in unseen outdoor scenes. Our experiments show that our model obtains better disparity maps than previous unsupervised methods on the KITTI dataset and is comparable to supervised methods when generalized to unseen data. Our source code and dataset will be made public, and more results are provided in the supplement.
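
To make the idea of photometric self-supervision concrete, here is a minimal sketch of a generic L1 photometric reconstruction loss on a single rectified scanline. It is not the paper's cross photometric loss, whose exact form the abstract does not give. The function names and toy intensities are hypothetical, and the sketch assumes the usual rectified-stereo convention that a left pixel at column `x` with disparity `d` matches the right pixel at column `x - d`.

```python
def warp_right_to_left(right_row, disparity_row):
    """Reconstruct the left scanline by sampling the right one at x - d.

    Uses linear interpolation and clamps sample positions to the image.
    """
    width = len(right_row)
    reconstructed = []
    for x in range(width):
        src = x - disparity_row[x]
        src = min(max(src, 0.0), width - 1.0)  # clamp to valid columns
        x0 = int(src)                          # floor, since src >= 0
        x1 = min(x0 + 1, width - 1)
        w = src - x0
        reconstructed.append((1.0 - w) * right_row[x0] + w * right_row[x1])
    return reconstructed


def photometric_l1(left_row, right_row, disparity_row):
    """Mean absolute difference between the left row and its reconstruction."""
    recon = warp_right_to_left(right_row, disparity_row)
    return sum(abs(a - b) for a, b in zip(left_row, recon)) / len(left_row)


# Toy scanlines: the left row is the right row shifted by 2 pixels.
right = [10.0, 20.0, 30.0, 40.0, 50.0, 60.0]
left = [10.0, 10.0, 10.0, 20.0, 30.0, 40.0]

loss_good = photometric_l1(left, right, [2.0] * 6)  # correct disparity
loss_bad = photometric_l1(left, right, [0.0] * 6)   # wrong disparity
print(loss_good, loss_bad)
```

The correct disparity reproduces the left row and drives the loss down, which is the signal a network can learn from when no ground-truth depth is available.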

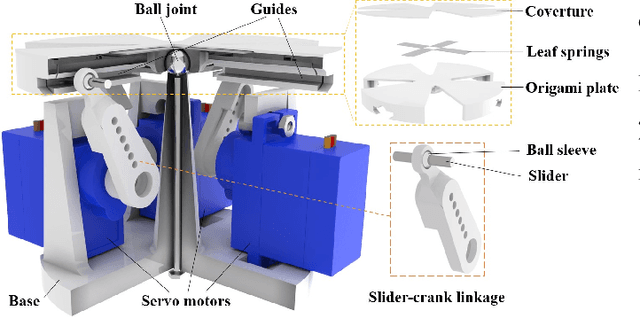

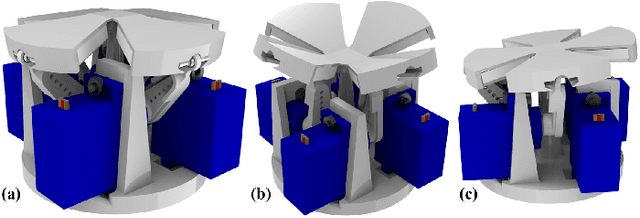

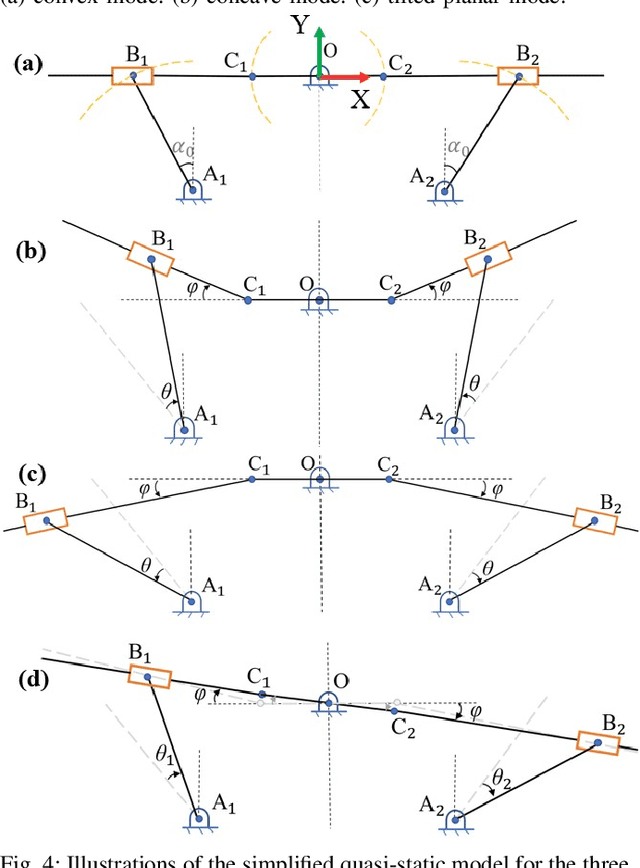

Origami-based Shape Morphing Fingertip to Enhance Grasping Stability and Dexterity

Oct 10, 2020

Abstract: Adaptation to various scene configurations and object properties, as well as stability and dexterity in robotic grasping manipulation, remain far from fully explored. This work presents an origami-based shape morphing fingertip design to actively tackle the grasping stability and dexterity problems. The proposed fingertip uses origami as its skeleton, providing degrees of freedom at desired positions, and motor-driven four-bar linkages as its transmission components to keep the fingertip compact. Three morphing types that are commonly observed and essential in robotic grasping are studied and validated with geometrical modeling. Experiments, including grasping an object with convex point contact to pivot or perform pinch grasping, reorienting a grasped object, and enveloping grasping with concave fingertip surfaces, demonstrate the advantages of our fingertip over conventional parallel grippers. They also demonstrate that active adaptation to different grasped objects and manipulation tasks enhances both grasping stability and dexterity. Video is available at youtu.be/jJoJ3xnDdVk/.

Towards Learning to Detect and Predict Contact Events on Vision-based Tactile Sensors

Oct 09, 2019

Abstract: In essence, a successful grasp boils down to correct responses to multiple contact events between fingertips and objects. In most scenarios, tactile sensing is adequate to distinguish contact events. Due to the high dimensionality of tactile information, classifying spatiotemporal tactile signals using conventional model-based methods is difficult. In this work, we propose to predict and classify tactile signals using deep learning methods, seeking to enhance the adaptability of the robotic grasp system to external event changes that may lead to grasping failure. We develop a deep learning framework and collect 6650 tactile image sequences with a vision-based tactile sensor, and the neural network is integrated into a contact-event-based robotic grasping system. In grasping experiments, we achieved a 52% increase in object lifting success rate with contact detection, and significantly higher robustness under unexpected loads with slip prediction compared with open-loop grasps. This demonstrates that integrating the proposed framework into a robotic grasping system substantially improves picking success rate and the capability to withstand external disturbances.

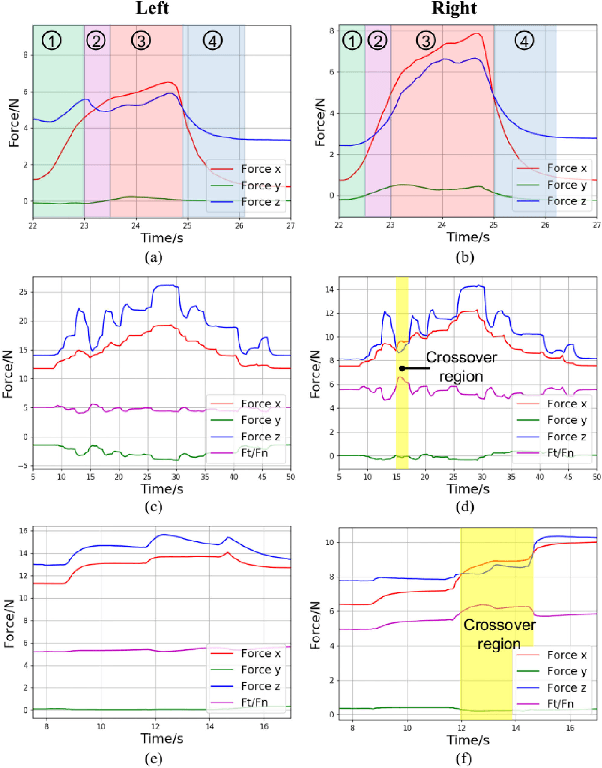

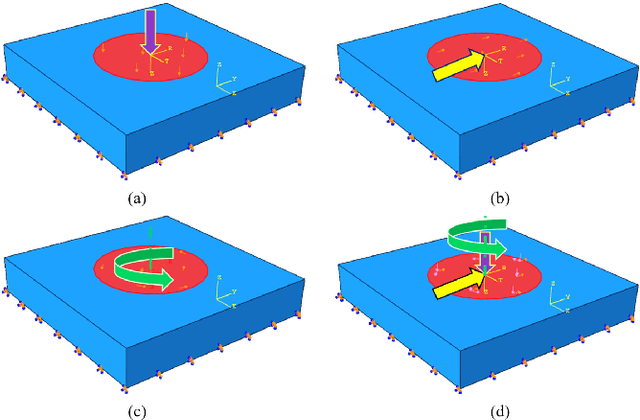

Effective Estimation of Contact Force and Torque for Vision-based Tactile Sensor with Helmholtz-Hodge Decomposition

Jun 22, 2019

Abstract: Retrieving rich contact information from robotic tactile sensing has been a challenging yet significant task for the effective perception of the properties of objects that the robot interacts with. This work is dedicated to developing an algorithm to estimate contact force and torque for vision-based tactile sensors. We first introduce observations of the contact deformation patterns of hyperelastic materials under ideal single-axial loads in simulation. Then, based on these observations, we propose a method of estimating surface forces and torque from the contact deformation vector field with the Helmholtz-Hodge Decomposition (HHD) algorithm. Extensive calibration and baseline-comparison experiments follow to verify the effectiveness of the proposed method in terms of prediction error and variance. The proposed algorithm is further integrated into a contact force visualization module as well as a closed-loop adaptive grasp force control framework, and is shown to be useful both in visualizing contact stability and in a minimum-force grasping task.
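
The Helmholtz-Hodge Decomposition splits a vector field into a curl-free part and a divergence-free part. The sketch below does not perform the full decomposition and is not the paper's algorithm. It only computes the mean divergence and mean curl of a 2-D displacement field with central differences, the two quantities that distinguish those parts. The function name, the grid layout (`u[i][j]` is the x-displacement at row `i`, column `j`), and the toy fields are assumptions made for illustration.

```python
def mean_divergence_and_curl(u, v, h=1.0):
    """Mean divergence and curl of a 2-D field over interior grid points.

    u[i][j], v[i][j]: x- and y-displacement at row i (y) and column j (x).
    h: grid spacing. Central differences are used, so borders are skipped.
    """
    rows, cols = len(u), len(u[0])
    div_sum = 0.0
    curl_sum = 0.0
    count = 0
    for i in range(1, rows - 1):
        for j in range(1, cols - 1):
            du_dx = (u[i][j + 1] - u[i][j - 1]) / (2 * h)
            du_dy = (u[i + 1][j] - u[i - 1][j]) / (2 * h)
            dv_dx = (v[i][j + 1] - v[i][j - 1]) / (2 * h)
            dv_dy = (v[i + 1][j] - v[i - 1][j]) / (2 * h)
            div_sum += du_dx + dv_dy
            curl_sum += dv_dx - du_dy
            count += 1
    return div_sum / count, curl_sum / count


n, c = 5, 2  # 5x5 grid centred at (c, c)

# Pure rotation about the centre: (u, v) = (-y, x).
rot_u = [[-(i - c) for j in range(n)] for i in range(n)]
rot_v = [[(j - c) for j in range(n)] for i in range(n)]

# Pure radial expansion from the centre: (u, v) = (x, y).
exp_u = [[(j - c) for j in range(n)] for i in range(n)]
exp_v = [[(i - c) for j in range(n)] for i in range(n)]

rot_div, rot_curl = mean_divergence_and_curl(rot_u, rot_v)
exp_div, exp_curl = mean_divergence_and_curl(exp_u, exp_v)
print(rot_div, rot_curl, exp_div, exp_curl)
```

A rotational marker pattern shows up purely as curl, and a radial pattern purely as divergence. Relating these components to torque and force would still require the calibration the abstract describes.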

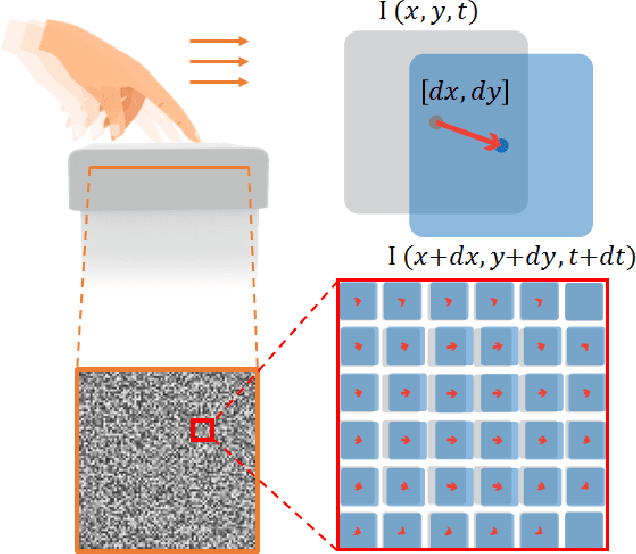

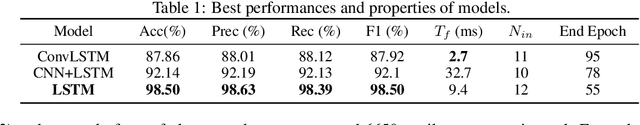

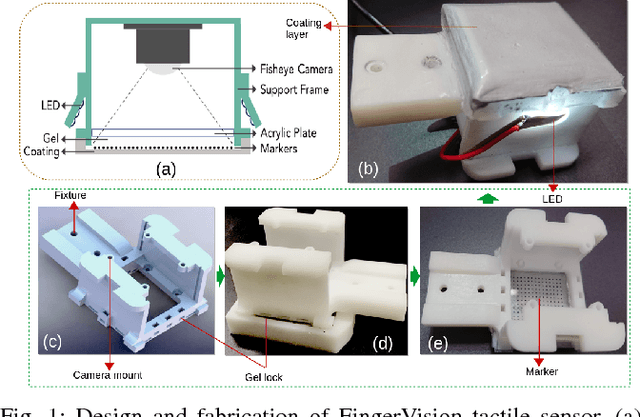

FingerVision Tactile Sensor Design and Slip Detection Using Convolutional LSTM Network

Oct 05, 2018

Abstract: Tactile sensing is essential to the human perception system, and likewise to robots. In this paper, we develop a novel optical-based tactile sensor, "FingerVision", with effective signal processing algorithms. The sensor is composed of a soft skin with an embedded marker array bonded to a rigid frame, and a web camera with a fisheye lens. When the skin is excited by a contact force, the camera tracks the movements of the markers and a deformation field is obtained. Compared to existing tactile sensors, our sensor features a compact footprint, high resolution, and ease of fabrication. In addition, utilizing the deformation field estimation, we propose a slip classification framework based on convolutional Long Short-Term Memory (convolutional LSTM) networks. The data collection process takes advantage of the human sense of slip: a human hand holds 12 daily objects, interacts with the sensor skin, and labels the data as slip or non-slip based on the human feeling of slip. Our slip classification framework achieves a high accuracy of 97.62% on the test dataset. It is expected to significantly enhance the stability of robot grasping, leading to better contact force control, finer object interaction, and more active sensing manipulation.
