Xuhan Zhu

StreamingClaw Technical Report

Mar 23, 2026Abstract:Applications such as embodied intelligence rely on a real-time perception-decision-action closed loop, posing stringent challenges for streaming video understanding. However, current agents suffer from fragmented capabilities, such as supporting only offline video understanding, lacking long-term multimodal memory mechanisms, or struggling to achieve real-time reasoning and proactive interaction under streaming inputs. These shortcomings have become a key bottleneck for preventing them from sustaining perception, making real-time decisions, and executing actions in real-world environments. To alleviate these issues, we propose StreamingClaw, a unified agent framework for streaming video understanding and embodied intelligence. It is also an OpenClaw-compatible framework that supports real-time, multimodal streaming interaction. StreamingClaw integrates five core capabilities: (1) It supports real-time streaming reasoning. (2) It supports reasoning about future events and proactive interaction under the online evolution of interaction objectives. (3) It supports multimodal long-term storage, hierarchical evolution, and efficient retrieval of shared memory across multiple agents. (4) It supports a closed-loop of perception-decision-action. In addition to conventional tools and skills, it also provides streaming tools and action-centric skills tailored for real-world physical environments. (5) It is compatible with the OpenClaw framework, allowing it to fully leverage the resources and support of the open-source community. With these designs, StreamingClaw integrates online real-time reasoning, multimodal long-term memory, and proactive interaction within a unified framework. Moreover, by translating decisions into executable actions, it enables direct control of the physical world, supporting practical deployment of embodied interaction.

EXPLORE-Bench: Egocentric Scene Prediction with Long-Horizon Reasoning

Mar 12, 2026Abstract:Multimodal large language models (MLLMs) are increasingly considered as a foundation for embodied agents, yet it remains unclear whether they can reliably reason about the long-term physical consequences of actions from an egocentric viewpoint. We study this gap through a new task, Egocentric Scene Prediction with LOng-horizon REasoning: given an initial-scene image and a sequence of atomic action descriptions, a model is asked to predict the final scene after all actions are executed. To enable systematic evaluation, we introduce EXPLORE-Bench, a benchmark curated from real first-person videos spanning diverse scenarios. Each instance pairs long action sequences with structured final-scene annotations, including object categories, visual attributes, and inter-object relations, which supports fine-grained, quantitative assessment. Experiments on a range of proprietary and open-source MLLMs reveal a significant performance gap to humans, indicating that long-horizon egocentric reasoning remains a major challenge. We further analyze test-time scaling via stepwise reasoning and show that decomposing long action sequences can improve performance to some extent, while incurring non-trivial computational overhead. Overall, EXPLORE-Bench provides a principled testbed for measuring and advancing long-horizon reasoning for egocentric embodied perception.

UV R-CNN: Stable and Efficient Dense Human Pose Estimation

Nov 04, 2022Abstract:Dense pose estimation is a dense 3D prediction task for instance-level human analysis, aiming to map human pixels from an RGB image to a 3D surface of the human body. Due to a large amount of surface point regression, the training process appears to be easy to collapse compared to other region-based human instance analyzing tasks. By analyzing the loss formulation of the existing dense pose estimation model, we introduce a novel point regression loss function, named Dense Points} loss to stable the training progress, and a new balanced loss weighting strategy to handle the multi-task losses. With the above novelties, we propose a brand new architecture, named UV R-CNN. Without auxiliary supervision and external knowledge from other tasks, UV R-CNN can handle many complicated issues in dense pose model training progress, achieving 65.0% $AP_{gps}$ and 66.1% $AP_{gpsm}$ on the DensePose-COCO validation subset with ResNet-50-FPN feature extractor, competitive among the state-of-the-art dense human pose estimation methods.

Killing Two Birds with One Stone:Efficient and Robust Training of Face Recognition CNNs by Partial FC

Mar 28, 2022

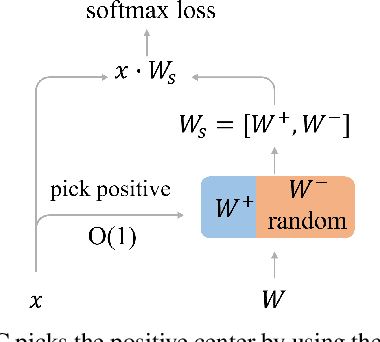

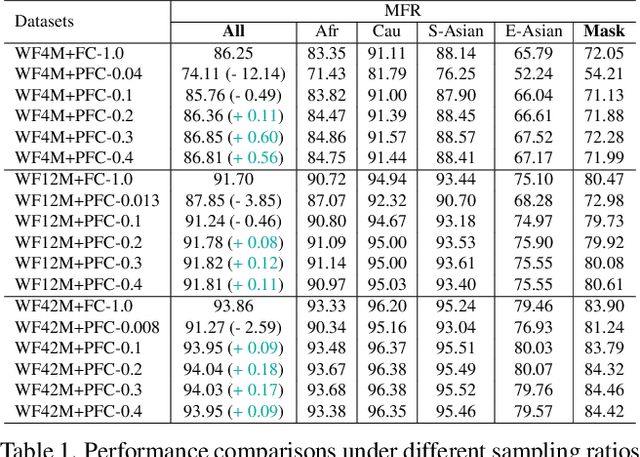

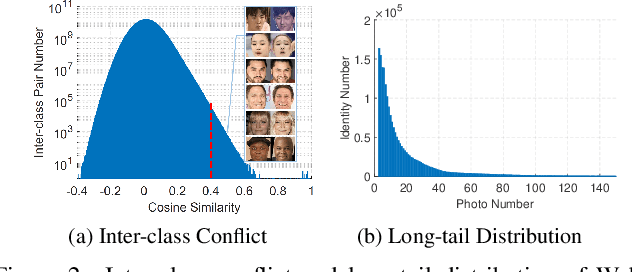

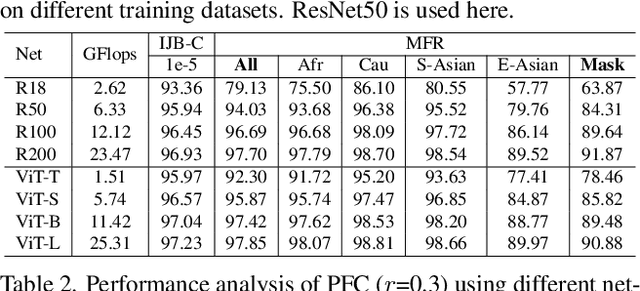

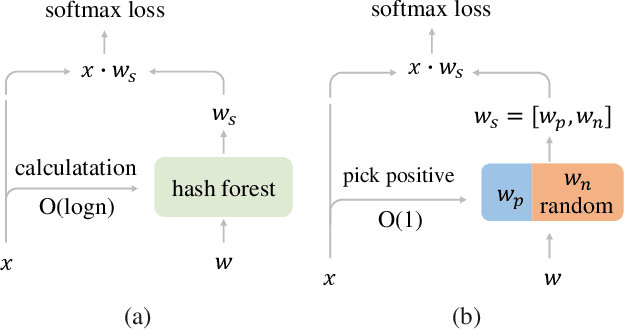

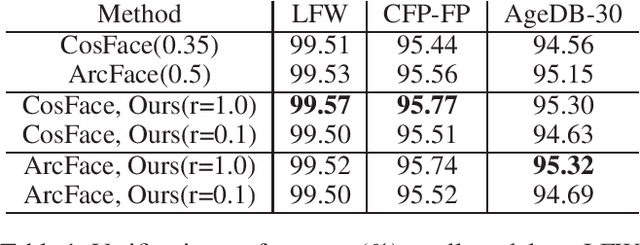

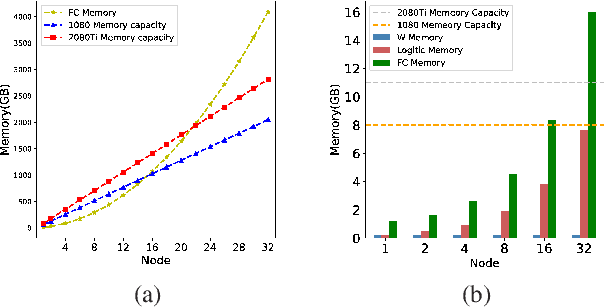

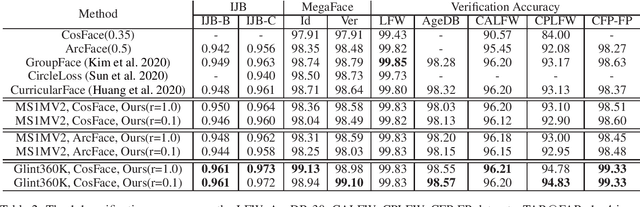

Abstract:Learning discriminative deep feature embeddings by using million-scale in-the-wild datasets and margin-based softmax loss is the current state-of-the-art approach for face recognition. However, the memory and computing cost of the Fully Connected (FC) layer linearly scales up to the number of identities in the training set. Besides, the large-scale training data inevitably suffers from inter-class conflict and long-tailed distribution. In this paper, we propose a sparsely updating variant of the FC layer, named Partial FC (PFC). In each iteration, positive class centers and a random subset of negative class centers are selected to compute the margin-based softmax loss. All class centers are still maintained throughout the whole training process, but only a subset is selected and updated in each iteration. Therefore, the computing requirement, the probability of inter-class conflict, and the frequency of passive update on tail class centers, are dramatically reduced. Extensive experiments across different training data and backbones (e.g. CNN and ViT) confirm the effectiveness, robustness and efficiency of the proposed PFC. The source code is available at \https://github.com/deepinsight/insightface/tree/master/recognition.

* 8 pages, 16 figures

Partial FC: Training 10 Million Identities on a Single Machine

Oct 11, 2020

Abstract:Face recognition has been an active and vital topic among computer vision community for a long time. Previous researches mainly focus on loss functions used for facial feature extraction network, among which the improvements of softmax-based loss functions greatly promote the performance of face recognition. However, the contradiction between the drastically increasing number of face identities and the shortage of GPU memories is gradually becoming irreconcilable. In this paper, we thoroughly analyze the optimization goal of softmax-based loss functions and the difficulty of training massive identities. We find that the importance of negative classes in softmax function in face representation learning is not as high as we previously thought. The experiment demonstrates no loss of accuracy when training with only 10\% randomly sampled classes for the softmax-based loss functions, compared with training with full classes using state-of-the-art models on mainstream benchmarks. We also implement a very efficient distributed sampling algorithm, taking into account model accuracy and training efficiency, which uses only eight NVIDIA RTX2080Ti to complete classification tasks with tens of millions of identities. The code of this paper has been made available https://github.com/deepinsight/insightface/tree/master/recognition/partial_fc.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge