Xiangru Huang

3DGS$^2$-TR: Scalable Second-Order Trust-Region Method for 3D Gaussian Splatting

Jan 30, 2026Abstract:We propose 3DGS$^2$-TR,a second-order optimizer for accelerating the scene training problem in 3D Gaussian Splatting (3DGS). Unlike existing second-order approaches that rely on explicit or dense curvature representations, such as 3DGS-LM (Höllein et al., 2025) or 3DGS2 (Lan et al., 2025), our method approximates curvature using only the diagonal of the Hessian matrix, efficiently via Hutchinson's method. Our approach is fully matrix-free and has the same complexity as ADAM (Kingma, 2024), $O(n)$ in both computation and memory costs. To ensure stable optimization in the presence of strong nonlinearity in the 3DGS rasterization process, we introduce a parameter-wise trust-region technique based on the squared Hellinger distance, regularizing updates to Gaussian parameters. Under identical parameter initialization and without densification, 3DGS$^2$-TR is able to achieve better reconstruction quality on standard datasets, using 50% fewer training iterations compared to ADAM, while incurring less than 1GB of peak GPU memory overhead (17% more than ADAM and 85% less than 3DGS-LM), enabling scalability to very large scenes and potentially to distributed training settings.

ReWeaver: Towards Simulation-Ready and Topology-Accurate Garment Reconstruction

Jan 23, 2026Abstract:High-quality 3D garment reconstruction plays a crucial role in mitigating the sim-to-real gap in applications such as digital avatars, virtual try-on and robotic manipulation. However, existing garment reconstruction methods typically rely on unstructured representations, such as 3D Gaussian Splats, struggling to provide accurate reconstructions of garment topology and sewing structures. As a result, the reconstructed outputs are often unsuitable for high-fidelity physical simulation. We propose ReWeaver, a novel framework for topology-accurate 3D garment and sewing pattern reconstruction from sparse multi-view RGB images. Given as few as four input views, ReWeaver predicts seams and panels as well as their connectivities in both the 2D UV space and the 3D space. The predicted seams and panels align precisely with the multi-view images, yielding structured 2D--3D garment representations suitable for 3D perception, high-fidelity physical simulation, and robotic manipulation. To enable effective training, we construct a large-scale dataset GCD-TS, comprising multi-view RGB images, 3D garment geometries, textured human body meshes and annotated sewing patterns. The dataset contains over 100,000 synthetic samples covering a wide range of complex geometries and topologies. Extensive experiments show that ReWeaver consistently outperforms existing methods in terms of topology accuracy, geometry alignment and seam-panel consistency.

KeyframeFace: From Text to Expressive Facial Keyframes

Dec 12, 2025Abstract:Generating dynamic 3D facial animation from natural language requires understanding both temporally structured semantics and fine-grained expression changes. Existing datasets and methods mainly focus on speech-driven animation or unstructured expression sequences and therefore lack the semantic grounding and temporal structures needed for expressive human performance generation. In this work, we introduce KeyframeFace, a large-scale multimodal dataset designed for text-to-animation research through keyframe-level supervision. KeyframeFace provides 2,100 expressive scripts paired with monocular videos, per-frame ARKit coefficients, contextual backgrounds, complex emotions, manually defined keyframes, and multi-perspective annotations based on ARKit coefficients and images via Large Language Models (LLMs) and Multimodal Large Language Models (MLLMs). Beyond the dataset, we propose the first text-to-animation framework that explicitly leverages LLM priors for interpretable facial motion synthesis. This design aligns the semantic understanding capabilities of LLMs with the interpretable structure of ARKit's coefficients, enabling high-fidelity expressive animation. KeyframeFace and our LLM-based framework together establish a new foundation for interpretable, keyframe-guided, and context-aware text-to-animation. Code and data are available at https://github.com/wjc12345123/KeyframeFace.

VidCRAFT3: Camera, Object, and Lighting Control for Image-to-Video Generation

Feb 12, 2025Abstract:Recent image-to-video generation methods have demonstrated success in enabling control over one or two visual elements, such as camera trajectory or object motion. However, these methods are unable to offer control over multiple visual elements due to limitations in data and network efficacy. In this paper, we introduce VidCRAFT3, a novel framework for precise image-to-video generation that enables control over camera motion, object motion, and lighting direction simultaneously. To better decouple control over each visual element, we propose the Spatial Triple-Attention Transformer, which integrates lighting direction, text, and image in a symmetric way. Since most real-world video datasets lack lighting annotations, we construct a high-quality synthetic video dataset, the VideoLightingDirection (VLD) dataset. This dataset includes lighting direction annotations and objects of diverse appearance, enabling VidCRAFT3 to effectively handle strong light transmission and reflection effects. Additionally, we propose a three-stage training strategy that eliminates the need for training data annotated with multiple visual elements (camera motion, object motion, and lighting direction) simultaneously. Extensive experiments on benchmark datasets demonstrate the efficacy of VidCRAFT3 in producing high-quality video content, surpassing existing state-of-the-art methods in terms of control granularity and visual coherence. All code and data will be publicly available.

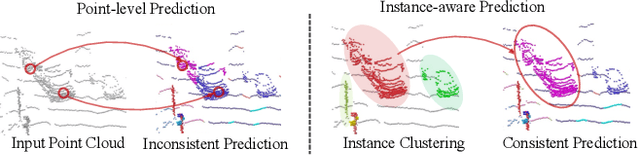

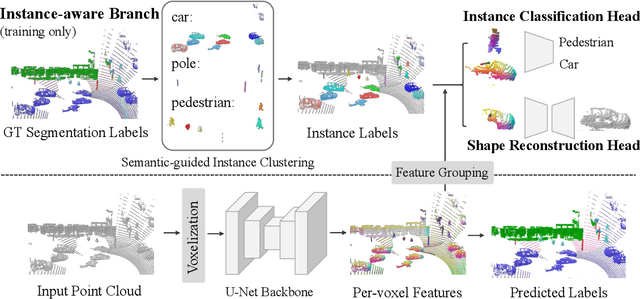

Instance-aware 3D Semantic Segmentation powered by Shape Generators and Classifiers

Nov 21, 2023

Abstract:Existing 3D semantic segmentation methods rely on point-wise or voxel-wise feature descriptors to output segmentation predictions. However, these descriptors are often supervised at point or voxel level, leading to segmentation models that can behave poorly at instance-level. In this paper, we proposed a novel instance-aware approach for 3D semantic segmentation. Our method combines several geometry processing tasks supervised at instance-level to promote the consistency of the learned feature representation. Specifically, our methods use shape generators and shape classifiers to perform shape reconstruction and classification tasks for each shape instance. This enforces the feature representation to faithfully encode both structural and local shape information, with an awareness of shape instances. In the experiments, our method significantly outperform existing approaches in 3D semantic segmentation on several public benchmarks, such as Waymo Open Dataset, SemanticKITTI and ScanNetV2.

GenCorres: Consistent Shape Matching via Coupled Implicit-Explicit Shape Generative Models

Apr 20, 2023

Abstract:This paper introduces GenCorres, a novel unsupervised joint shape matching (JSM) approach. The basic idea of GenCorres is to learn a parametric mesh generator to fit an unorganized deformable shape collection while constraining deformations between adjacent synthetic shapes to preserve geometric structures such as local rigidity and local conformality. GenCorres presents three appealing advantages over existing JSM techniques. First, GenCorres performs JSM among a synthetic shape collection whose size is much bigger than the input shapes and fully leverages the data-driven power of JSM. Second, GenCorres unifies consistent shape matching and pairwise matching (i.e., by enforcing deformation priors between adjacent synthetic shapes). Third, the generator provides a concise encoding of consistent shape correspondences. However, learning a mesh generator from an unorganized shape collection is challenging. It requires a good initial fitting to each shape and can easily get trapped by local minimums. GenCorres addresses this issue by learning an implicit generator from the input shapes, which provides intermediate shapes between two arbitrary shapes. We introduce a novel approach for computing correspondences between adjacent implicit surfaces and force the correspondences to preserve geometric structures and be cycle-consistent. Synthetic shapes of the implicit generator then guide initial fittings (i.e., via template-based deformation) for learning the mesh generator. Experimental results show that GenCorres considerably outperforms state-of-the-art JSM techniques on benchmark datasets. The synthetic shapes of GenCorres preserve local geometric features and yield competitive performance gains against state-of-the-art deformable shape generators.

LiDAR-Based 3D Object Detection via Hybrid 2D Semantic Scene Generation

Apr 04, 2023Abstract:Bird's-Eye View (BEV) features are popular intermediate scene representations shared by the 3D backbone and the detector head in LiDAR-based object detectors. However, little research has been done to investigate how to incorporate additional supervision on the BEV features to improve proposal generation in the detector head, while still balancing the number of powerful 3D layers and efficient 2D network operations. This paper proposes a novel scene representation that encodes both the semantics and geometry of the 3D environment in 2D, which serves as a dense supervision signal for better BEV feature learning. The key idea is to use auxiliary networks to predict a combination of explicit and implicit semantic probabilities by exploiting their complementary properties. Extensive experiments show that our simple yet effective design can be easily integrated into most state-of-the-art 3D object detectors and consistently improves upon baseline models.

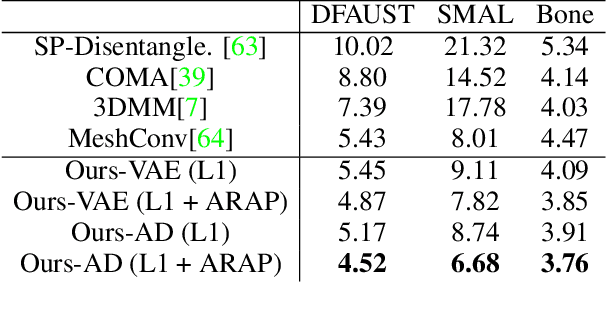

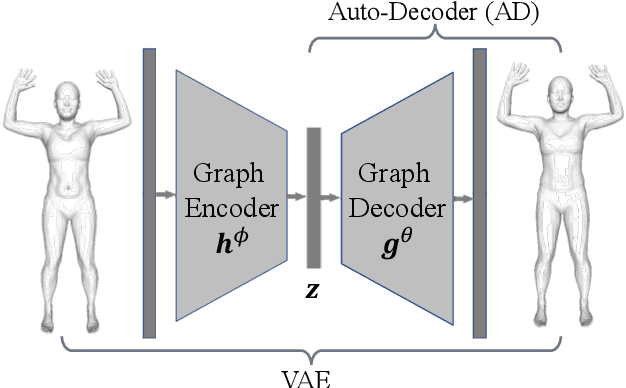

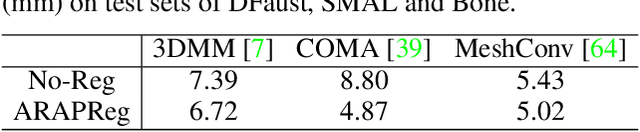

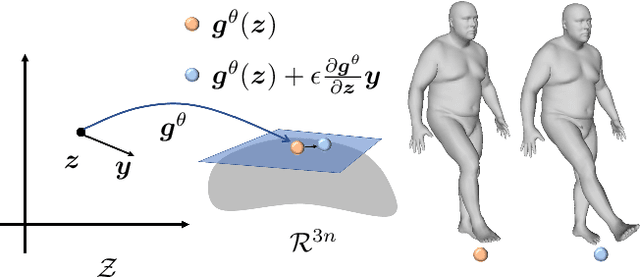

ARAPReg: An As-Rigid-As Possible Regularization Loss for Learning Deformable Shape Generators

Aug 21, 2021

Abstract:This paper introduces an unsupervised loss for training parametric deformation shape generators. The key idea is to enforce the preservation of local rigidity among the generated shapes. Our approach builds on an approximation of the as-rigid-as possible (or ARAP) deformation energy. We show how to develop the unsupervised loss via a spectral decomposition of the Hessian of the ARAP energy. Our loss nicely decouples pose and shape variations through a robust norm. The loss admits simple closed-form expressions. It is easy to train and can be plugged into any standard generation models, e.g., variational auto-encoder (VAE) and auto-decoder (AD). Experimental results show that our approach outperforms existing shape generation approaches considerably on public benchmark datasets of various shape categories such as human, animal and bone.

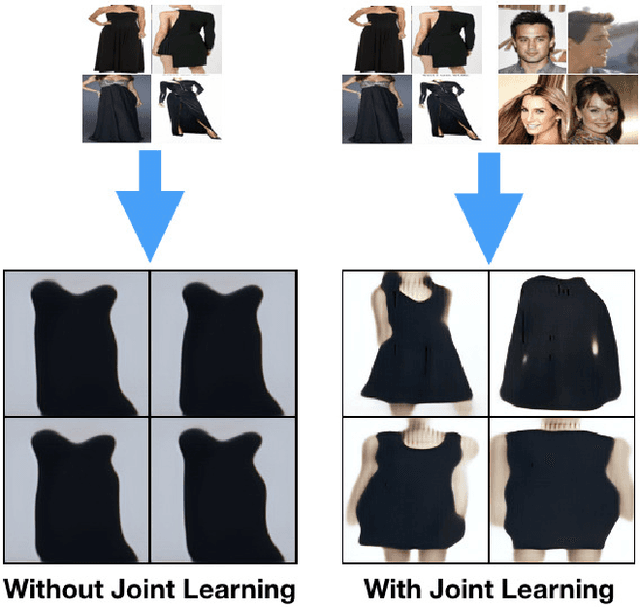

Joint Learning of Neural Networks via Iterative Reweighted Least Squares

May 16, 2019

Abstract:In this paper, we introduce the problem of jointly learning feed-forward neural networks across a set of relevant but diverse datasets. Compared to learning a separate network from each dataset in isolation, joint learning enables us to extract correlated information across multiple datasets to significantly improve the quality of learned networks. We formulate this problem as joint learning of multiple copies of the same network architecture and enforce the network weights to be shared across these networks. Instead of hand-encoding the shared network layers, we solve an optimization problem to automatically determine how layers should be shared between each pair of datasets. Experimental results show that our approach outperforms baselines without joint learning and those using pretraining-and-fine-tuning. We show the effectiveness of our approach on three tasks: image classification, learning auto-encoders, and image generation.

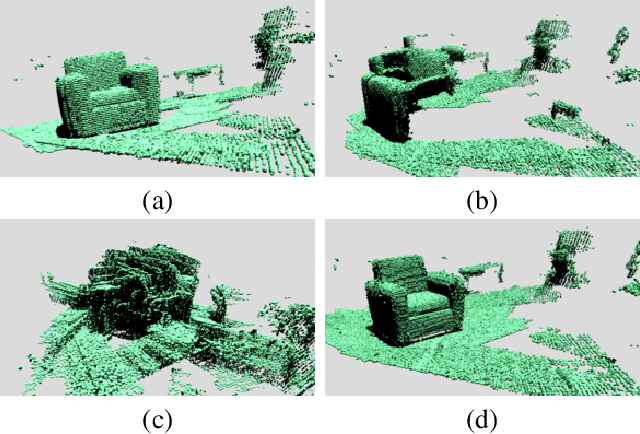

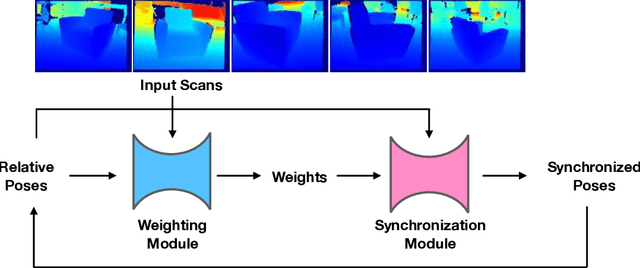

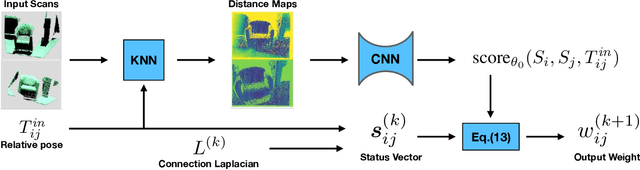

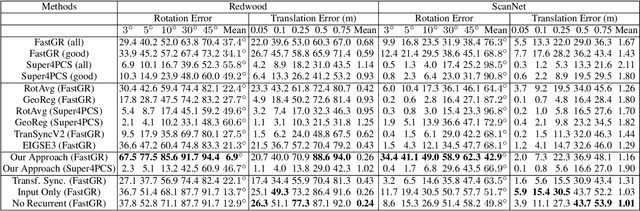

Learning Transformation Synchronization

Jan 27, 2019

Abstract:Reconstructing the 3D model of a physical object typically requires us to align the depth scans obtained from different camera poses into the same coordinate system. Solutions to this global alignment problem usually proceed in two steps. The first step estimates relative transformations between pairs of scans using an off-the-shelf technique. Due to limited information presented between pairs of scans, the resulting relative transformations are generally noisy. The second step then jointly optimizes the relative transformations among all input depth scans. A natural constraint used in this step is the cycle-consistency constraint, which allows us to prune incorrect relative transformations by detecting inconsistent cycles. The performance of such approaches, however, heavily relies on the quality of the input relative transformations. Instead of merely using the relative transformations as the input to perform transformation synchronization, we propose to use a neural network to learn the weights associated with each relative transformation. Our approach alternates between transformation synchronization using weighted relative transformations and predicting new weights of the input relative transformations using a neural network. We demonstrate the usefulness of this approach across a wide range of datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge