Tzyy-Ping Jung

Graph Representations for Reading Comprehension Analysis using Large Language Model and Eye-Tracking Biomarker

Jul 16, 2025

Abstract:Reading comprehension is a fundamental skill in human cognitive development. With the advancement of Large Language Models (LLMs), there is a growing need to compare how humans and LLMs understand language across different contexts and apply this understanding to functional tasks such as inference, emotion interpretation, and information retrieval. Our previous work used LLMs and human biomarkers to study the reading comprehension process. The results showed that the biomarkers corresponding to words with high and low relevance to the inference target, as labeled by the LLMs, exhibited distinct patterns, particularly when validated using eye-tracking data. However, focusing solely on individual words limited the depth of understanding, which made the conclusions somewhat simplistic despite their potential significance. This study used an LLM-based AI agent to group words from a reading passage into nodes and edges, forming a graph-based text representation based on semantic meaning and question-oriented prompts. We then compared the distribution of eye fixations on important nodes and edges. Our findings indicate that LLMs exhibit high consistency in language understanding at the level of graph topological structure. These results build on our previous findings and offer insights into effective human-AI co-learning strategies.

From Theory to Application: Fine-Tuning Large EEG Model with Real-World Stress Data

May 29, 2025

Abstract:Recent advancements in Large Language Models have inspired the development of foundation models across various domains. In this study, we evaluate the efficacy of Large EEG Models (LEMs) by fine-tuning LaBraM, a state-of-the-art foundation EEG model, on a real-world stress classification dataset collected in a graduate classroom. Unlike previous studies that primarily evaluate LEMs using data from controlled clinical settings, our work assesses their applicability to real-world environments. We train a binary classifier that distinguishes between normal and elevated stress states using resting-state EEG data recorded from 18 graduate students during a class session. The best-performing fine-tuned model achieves a balanced accuracy of 90.47% with a 5-second window, significantly outperforming traditional stress classifiers in both accuracy and inference efficiency. We further evaluate the robustness of the fine-tuned LEM under random data shuffling and reduced channel counts. These results demonstrate the capability of LEMs to effectively process real-world EEG data and highlight their potential to revolutionize brain-computer interface applications by shifting the focus from model-centric to data-centric design.
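The windowing and the balanced-accuracy metric mentioned in this abstract can be illustrated with a minimal sketch. The sampling rate, recording length, labels, and function names below are hypothetical and are not taken from the paper; only the 5-second window length and the binary (normal vs. elevated stress) setup come from the abstract.

```python
def split_into_windows(n_samples, sfreq, window_sec):
    """Return (start, stop) sample indices of non-overlapping windows."""
    step = int(sfreq * window_sec)
    return [(s, s + step) for s in range(0, n_samples - step + 1, step)]


def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall, so each class counts equally."""
    recalls = []
    for c in sorted(set(y_true)):
        idx = [i for i, y in enumerate(y_true) if y == c]
        hits = sum(1 for i in idx if y_pred[i] == c)
        recalls.append(hits / len(idx))
    return sum(recalls) / len(recalls)


# Hypothetical recording: 30 s at an assumed 200 Hz, cut into 5-second windows.
windows = split_into_windows(n_samples=6000, sfreq=200, window_sec=5)

# Hypothetical per-window labels: 0 = normal, 1 = elevated stress.
y_true = [0, 0, 0, 0, 1, 1]
y_pred = [0, 0, 0, 1, 1, 0]
score = balanced_accuracy(y_true, y_pred)
print(len(windows), score)
```

Balanced accuracy averages the recall of each class, which keeps a majority-class predictor from scoring well when the two stress states are unevenly represented.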

ChatGPT-BCI: Word-Level Neural State Classification Using GPT, EEG, and Eye-Tracking Biomarkers in Semantic Inference Reading Comprehension

Sep 27, 2023

Abstract:With the recent explosion of large language models (LLMs), such as Generative Pretrained Transformers (GPT), the need to understand the ability of humans and machines to comprehend semantic language meaning has entered a new phase. This requires interdisciplinary research that bridges the fields of cognitive science and natural language processing (NLP). This pilot study aims to provide insights into individuals' neural states during a semantic relation reading-comprehension task. We propose jointly analyzing LLMs, eye-gaze, and electroencephalographic (EEG) data to study how the brain processes words with varying degrees of relevance to a keyword during reading. We also use a feature engineering approach to improve the fixation-related EEG data classification while participants read words with high versus low relevance to the keyword. The best validation accuracy in this word-level classification is over 60% across 12 subjects. Words of high relevance to the inference keyword had significantly more eye fixations per word: 1.0584 compared to 0.6576 when excluding no-fixation words, and 1.5126 compared to 1.4026 when including them. This study represents the first attempt to classify brain states at a word level using LLM knowledge. It provides valuable insights into human cognitive abilities and the realm of Artificial General Intelligence (AGI), and offers guidance for developing potential reading-assisted technologies.

Using EEG Signals to Assess Workload during Memory Retrieval in a Real-world Scenario

May 14, 2023

Abstract:Objective: The Electroencephalogram (EEG) is gaining popularity as a physiological measure for neuroergonomics in human factor studies because it is objective, less prone to bias, and capable of assessing the dynamics of cognitive states. This study investigated the associations between memory workload and EEG during participants' typical office tasks on a single-monitor and dual-monitor arrangement. We expect a higher memory workload for the single-monitor arrangement. Approach: We designed an experiment that mimics the scenario of a subject performing some office work and examined whether the subjects experienced various levels of memory workload in two different office setups: 1) a single-monitor setup and 2) a dual-monitor setup. We used EEG band power, mutual information, and coherence as features to train machine learning models to classify high versus low memory workload states. Main results: The study results showed that these characteristics exhibited significant differences that were consistent across all participants. We also verified the robustness and consistency of these EEG signatures in a different data set collected during a Sternberg task in a prior study. Significance: The study found the EEG correlates of memory workload across individuals, demonstrating the effectiveness of using EEG analysis in conducting real-world neuroergonomic studies.

IC-U-Net: A U-Net-based Denoising Autoencoder Using Mixtures of Independent Components for Automatic EEG Artifact Removal

Nov 22, 2021

Abstract:Electroencephalography (EEG) signals are often contaminated with artifacts. It is imperative to develop a practical and reliable artifact removal method to prevent misinterpretations of neural signals and underperformance of brain-computer interfaces. This study developed a new artifact removal method, IC-U-Net, which is based on the U-Net architecture for removing pervasive EEG artifacts and reconstructing brain sources. The IC-U-Net was trained using mixtures of brain and non-brain sources decomposed by independent component analysis and employed an ensemble of loss functions to model complex signal fluctuations in EEG recordings. The effectiveness of the proposed method in recovering brain sources and removing various artifacts (e.g., eye blinks/movements, muscle activities, and line/channel noises) was demonstrated in a simulation study and three real-world EEG datasets collected at rest and while driving and walking. IC-U-Net is user-friendly and publicly available, does not require parameter tuning or artifact type designations, and has no limitations on channel numbers. Given the increasing need to image natural brain dynamics in a mobile setting, IC-U-Net offers a promising end-to-end solution for automatically removing artifacts from EEG recordings.

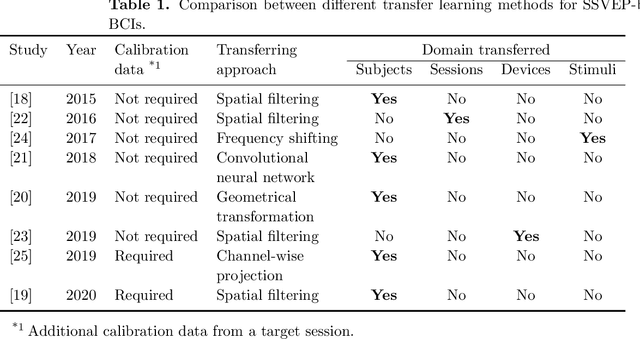

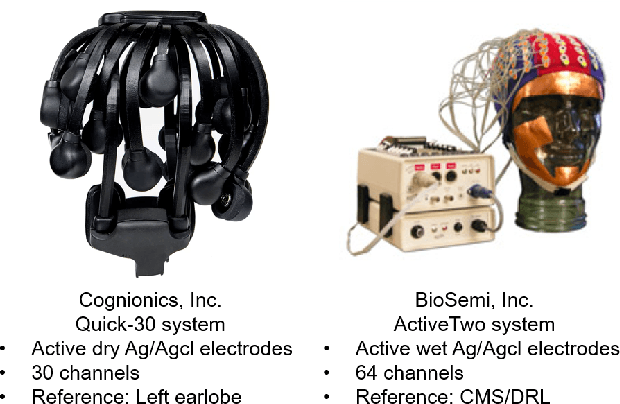

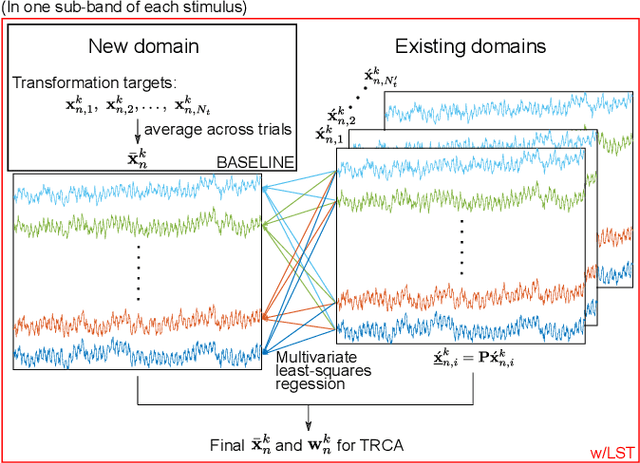

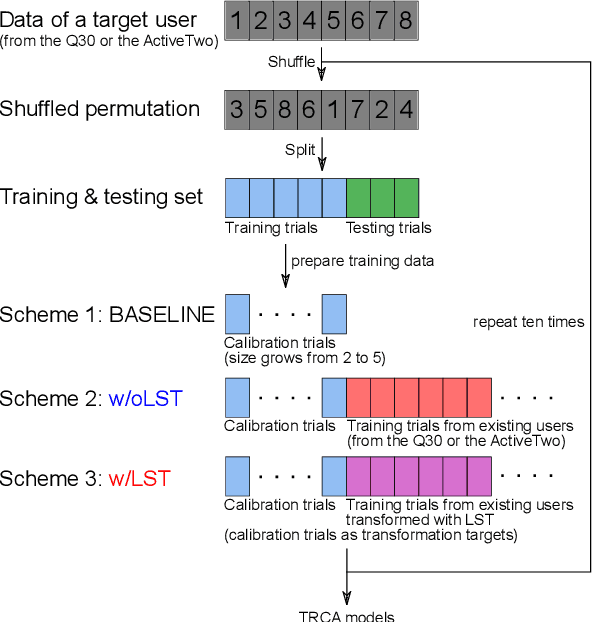

Boosting Template-based SSVEP Decoding by Cross-domain Transfer Learning

Feb 10, 2021

Abstract:Objective: This study aims to establish a generalized transfer-learning framework for boosting the performance of steady-state visual evoked potential (SSVEP)-based brain-computer interfaces (BCIs) by leveraging cross-domain data transferring. Approach: We enhanced the state-of-the-art template-based SSVEP decoding through incorporating a least-squares transformation (LST)-based transfer learning to leverage calibration data across multiple domains (sessions, subjects, and EEG montages). Main results: Study results verified the efficacy of LST in obviating the variability of SSVEPs when transferring existing data across domains. Furthermore, the LST-based method achieved significantly higher SSVEP-decoding accuracy than the standard task-related component analysis (TRCA)-based method and the non-LST naive transfer-learning method. Significance: This study demonstrated the capability of the LST-based transfer learning to leverage existing data across subjects and/or devices with an in-depth investigation of its rationale and behavior in various circumstances. The proposed framework significantly improved the SSVEP decoding accuracy over the standard TRCA approach when calibration data are limited. Its performance in calibration reduction could facilitate plug-and-play SSVEP-based BCIs and further practical applications.
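As a hedged illustration of what a least-squares transformation can look like, consider a generic formulation: given a source-domain trial $\mathbf{X}_s$ and a target-domain template $\mathbf{X}_t$ (both channels by samples), find the spatial transform $\mathbf{P}$ that best maps one onto the other. The symbols here are assumptions chosen for illustration, and the paper's exact formulation may differ.

$$
\mathbf{P}^{*} = \arg\min_{\mathbf{P}} \left\lVert \mathbf{X}_t - \mathbf{P}\,\mathbf{X}_s \right\rVert_F^{2}
= \mathbf{X}_t \mathbf{X}_s^{\top} \left( \mathbf{X}_s \mathbf{X}_s^{\top} \right)^{-1}
$$

The closed form assumes $\mathbf{X}_s \mathbf{X}_s^{\top}$ is invertible. The transformed trial $\mathbf{P}^{*}\mathbf{X}_s$ can then be pooled with target-domain calibration data, and because $\mathbf{P}$ need not be square, the source and target montages may have different channel counts.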

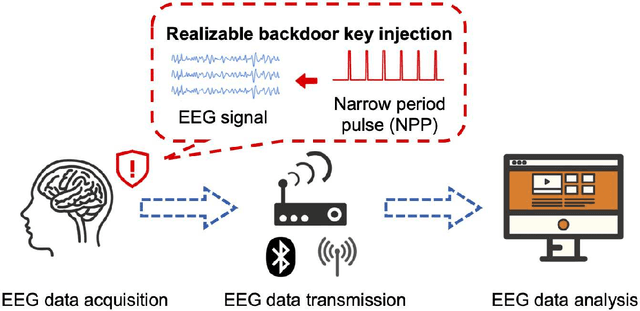

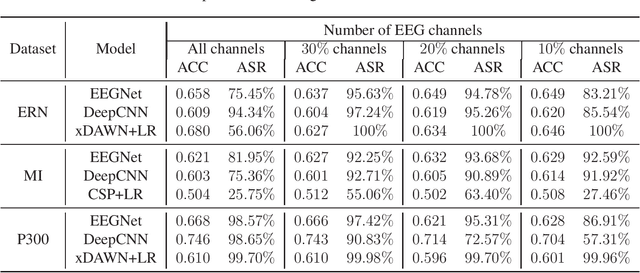

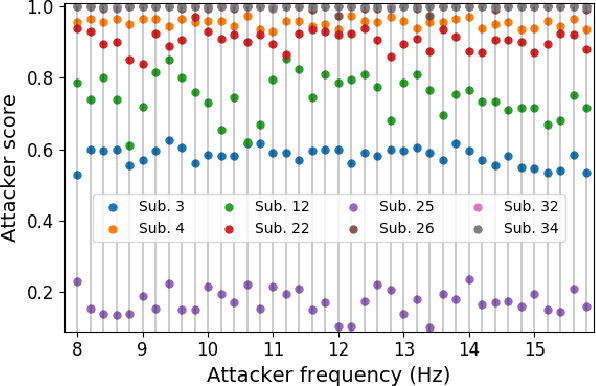

EEG-Based Brain-Computer Interfaces Are Vulnerable to Backdoor Attacks

Oct 30, 2020

Abstract:Research and development of electroencephalogram (EEG) based brain-computer interfaces (BCIs) have advanced rapidly, partly due to the wide adoption of sophisticated machine learning approaches for decoding the EEG signals. However, recent studies have shown that machine learning algorithms are vulnerable to adversarial attacks, e.g., the attacker can add tiny adversarial perturbations to a test sample to fool the model, or poison the training data to insert a secret backdoor. Previous research has shown that adversarial attacks are also possible for EEG-based BCIs. However, only adversarial perturbations have been considered, and the approaches are theoretically sound but very difficult to implement in practice. This article proposes using a narrow period pulse for poisoning attacks on EEG-based BCIs, which is more feasible in practice and has never been considered before. One can create dangerous backdoors in the machine learning model by injecting poisoning samples into the training set. Test samples with the backdoor key will then be classified into the target class specified by the attacker. What most distinguishes our approach from previous ones is that the backdoor key does not need to be synchronized with the EEG trials, making it very easy to implement. The effectiveness and robustness of the backdoor attack approach are demonstrated, highlighting a critical security concern for EEG-based BCIs.

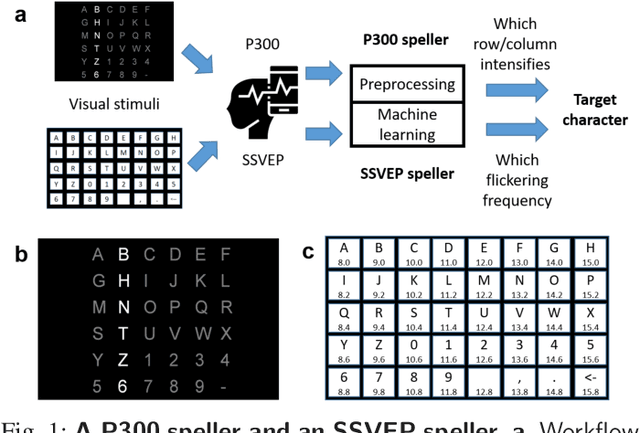

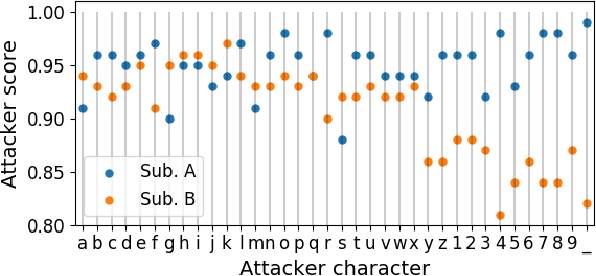

Tiny Noise Can Make an EEG-Based Brain-Computer Interface Speller Output Anything

Mar 04, 2020

Abstract:An electroencephalogram (EEG) based brain-computer interface (BCI) speller allows a user to input text to a computer by thought. It is particularly useful to severely disabled individuals, e.g., amyotrophic lateral sclerosis patients, who have no other effective means of communication with another person or a computer. Most studies so far focused on making EEG-based BCI spellers faster and more reliable; however, few have considered their security. Here we show that P300 and steady-state visual evoked potential BCI spellers are very vulnerable, i.e., they can be severely attacked by adversarial perturbations, which are too tiny to be noticed when added to EEG signals, but can mislead the spellers to spell anything the attacker wants. The consequence could range from mere user frustration to severe misdiagnosis in clinical applications. We hope our research can attract more attention to the security of EEG-based BCI spellers, and more broadly, EEG-based BCIs, which has received little attention before.

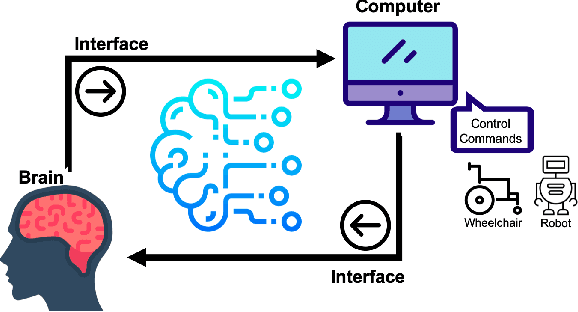

EEG-based Brain-Computer Interfaces: A Survey of Recent Studies on Signal Sensing Technologies and Computational Intelligence Approaches and their Applications

Jan 28, 2020

Abstract:Brain-Computer Interface (BCI) is a powerful communication tool between users and systems, which enhances the capability of the human brain in communicating and interacting with the environment directly. Advances in neuroscience and computer science in the past decades have led to exciting developments in BCI, thereby making BCI a top interdisciplinary research area in computational neuroscience and intelligence. Recent technological advances such as wearable sensing devices, real-time data streaming, machine learning, and deep learning approaches have increased interest in electroencephalographic (EEG) based BCI for translational and healthcare applications. Many people benefit from EEG-based BCIs, which facilitate continuous monitoring of fluctuations in cognitive states under monotonous tasks in the workplace or at home. In this study, we survey the recent literature on EEG signal sensing technologies and computational intelligence approaches in BCI applications, filling gaps in the systematic summaries of the past five years (2015-2019). Specifically, we first review the current status of BCI and its significant obstacles. Then, we present advanced signal sensing and enhancement technologies to collect and clean EEG signals, respectively. Furthermore, we demonstrate state-of-the-art computational intelligence techniques, including interpretable fuzzy models, transfer learning, deep learning, and combinations, to monitor, maintain, or track human cognitive states and operating performance in prevalent applications. Finally, we present a couple of innovative BCI-inspired healthcare applications and discuss some future research directions in EEG-based BCIs.

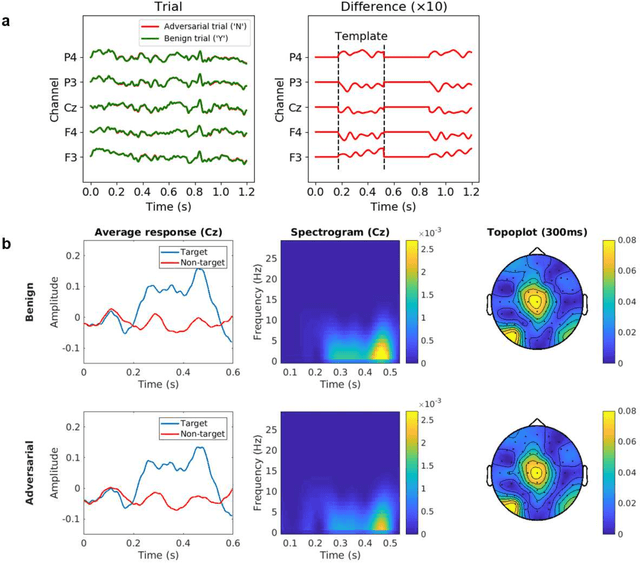

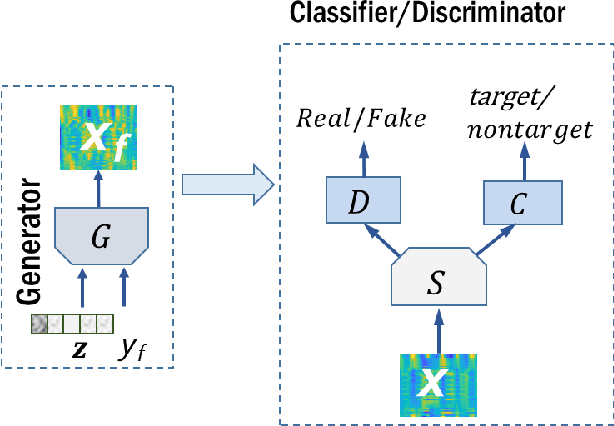

Modeling EEG data distribution with a Wasserstein Generative Adversarial Network to predict RSVP Events

Nov 11, 2019

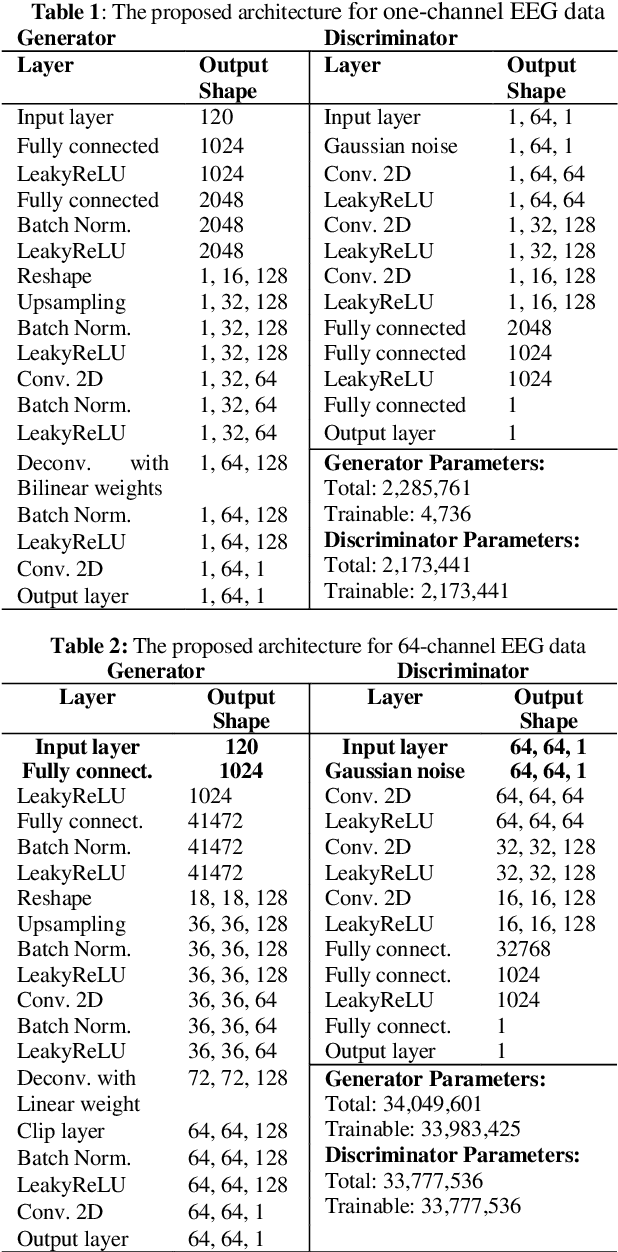

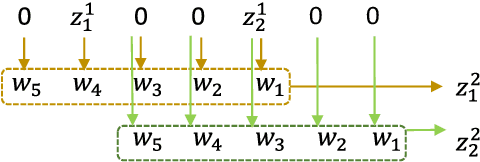

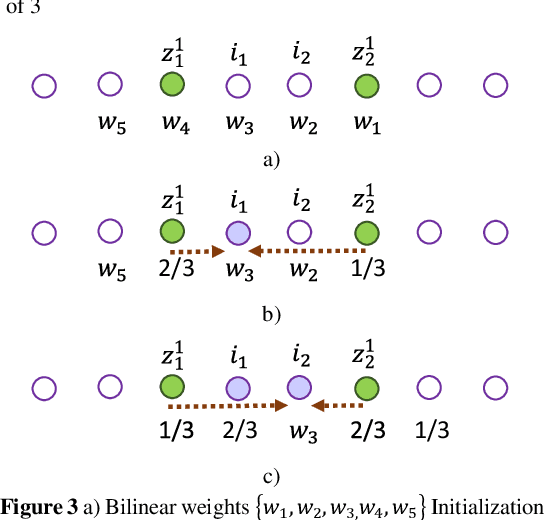

Abstract:Electroencephalography (EEG) data are difficult to obtain due to complex experimental setups and reduced comfort with prolonged wearing. This makes it challenging to train powerful deep learning models with limited EEG data. Being able to generate EEG data computationally could address this limitation. We propose a novel Wasserstein Generative Adversarial Network with gradient penalty (WGAN-GP) to synthesize EEG data. This network addresses several modeling challenges of simulating time-series EEG data including frequency artifacts and training instability. We further extended this network to a class-conditioned variant that also includes a classification branch to perform event-related classification. We trained the proposed networks to generate one- and 64-channel data resembling EEG signals routinely seen in a rapid serial visual presentation (RSVP) experiment and demonstrated the validity of the generated samples. We also tested intra-subject cross-session classification performance for classifying the RSVP target events and showed that class-conditioned WGAN-GP can achieve improved event-classification performance over EEGNet.
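For orientation, the standard WGAN-GP critic objective is shown below. This is the generic formulation, not necessarily the exact loss used in this paper, whose class-conditioned variant adds a classification branch not shown here.

$$
L_D = \mathbb{E}_{\tilde{x} \sim P_g}\left[ D(\tilde{x}) \right]
- \mathbb{E}_{x \sim P_r}\left[ D(x) \right]
+ \lambda \, \mathbb{E}_{\hat{x} \sim P_{\hat{x}}}\left[ \left( \left\lVert \nabla_{\hat{x}} D(\hat{x}) \right\rVert_2 - 1 \right)^{2} \right]
$$

Here $P_r$ and $P_g$ are the real and generated data distributions, $\hat{x} = \epsilon x + (1 - \epsilon)\tilde{x}$ with $\epsilon \sim U[0, 1]$ is sampled along straight lines between real and generated samples, and $\lambda$ weights the gradient penalty that pushes the critic toward unit gradient norm.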
