Swati Gupta

Improved Regret Guarantees for Online Mirror Descent using a Portfolio of Mirror Maps

Feb 13, 2026Abstract:OMD and its variants give a flexible framework for OCO where the performance depends crucially on the choice of the mirror map. While the geometries underlying OPGD and OEG, both special cases of OMD, are well understood, it remains a challenging open question on how to construct an optimal mirror map for any given constrained set and a general family of loss functions, e.g., sparse losses. Motivated by parameterizing a near-optimal set of mirror maps, we consider a simpler question: is it even possible to obtain polynomial gains in regret by using mirror maps for geometries that interpolate between $L_1$ and $L_2$, which may not be possible by restricting to only OEG ($L_1$) or OPGD ($L_2$). Our main result answers this question positively. We show that mirror maps based on block norms adapt better to the sparsity of loss functions, compared to previous $L_p$ (for $p \in [1, 2]$) interpolations. In particular, we construct a family of online convex optimization instances in $\mathbb{R}^d$, where block norm-based mirror maps achieve a provable polynomial (in $d$) improvement in regret over OEG and OPGD for sparse loss functions. We then turn to the setting in which the sparsity level of the loss functions is unknown. In this case, the choice of geometry itself becomes an online decision problem. We first show that naively switching between OEG and OPGD can incur linear regret, highlighting the intrinsic difficulty of geometry selection. To overcome this issue, we propose a meta-algorithm based on multiplicative weights that dynamically selects among a family of uniform block norms. We show that this approach effectively tunes OMD to the sparsity of the losses, yielding adaptive regret guarantees. Overall, our results demonstrate that online mirror-map selection can significantly enhance the ability of OMD to exploit sparsity in online convex optimization.

AnyView: Synthesizing Any Novel View in Dynamic Scenes

Jan 23, 2026Abstract:Modern generative video models excel at producing convincing, high-quality outputs, but struggle to maintain multi-view and spatiotemporal consistency in highly dynamic real-world environments. In this work, we introduce \textbf{AnyView}, a diffusion-based video generation framework for \emph{dynamic view synthesis} with minimal inductive biases or geometric assumptions. We leverage multiple data sources with various levels of supervision, including monocular (2D), multi-view static (3D) and multi-view dynamic (4D) datasets, to train a generalist spatiotemporal implicit representation capable of producing zero-shot novel videos from arbitrary camera locations and trajectories. We evaluate AnyView on standard benchmarks, showing competitive results with the current state of the art, and propose \textbf{AnyViewBench}, a challenging new benchmark tailored towards \emph{extreme} dynamic view synthesis in diverse real-world scenarios. In this more dramatic setting, we find that most baselines drastically degrade in performance, as they require significant overlap between viewpoints, while AnyView maintains the ability to produce realistic, plausible, and spatiotemporally consistent videos when prompted from \emph{any} viewpoint. Results, data, code, and models can be viewed at: https://tri-ml.github.io/AnyView/

Mixed-Integer Projections for Automated Data Correction of EMRs Improve Predictions of Sepsis among Hospitalized Patients

Aug 21, 2023Abstract:Machine learning (ML) models are increasingly pivotal in automating clinical decisions. Yet, a glaring oversight in prior research has been the lack of proper processing of Electronic Medical Record (EMR) data in the clinical context for errors and outliers. Addressing this oversight, we introduce an innovative projections-based method that seamlessly integrates clinical expertise as domain constraints, generating important meta-data that can be used in ML workflows. In particular, by using high-dimensional mixed-integer programs that capture physiological and biological constraints on patient vitals and lab values, we can harness the power of mathematical "projections" for the EMR data to correct patient data. Consequently, we measure the distance of corrected data from the constraints defining a healthy range of patient data, resulting in a unique predictive metric we term as "trust-scores". These scores provide insight into the patient's health status and significantly boost the performance of ML classifiers in real-life clinical settings. We validate the impact of our framework in the context of early detection of sepsis using ML. We show an AUROC of 0.865 and a precision of 0.922, that surpasses conventional ML models without such projections.

TACOS: Topology-Aware Collective Algorithm Synthesizer for Distributed Training

Apr 11, 2023

Abstract:Collective communications are an indispensable part of distributed training. Running a topology-aware collective algorithm is crucial for optimizing communication performance by minimizing congestion. Today such algorithms only exist for a small set of simple topologies, limiting the topologies employed in training clusters and handling irregular topologies due to network failures. In this paper, we propose TACOS, an automated topology-aware collective synthesizer for arbitrary input network topologies. TACOS synthesized 3.73x faster All-Reduce algorithm over baselines, and synthesized collective algorithms for 512-NPU system in just 6.1 minutes.

Artificial Intelligence/Operations Research Workshop 2 Report Out

Apr 10, 2023

Abstract:This workshop Report Out focuses on the foundational elements of trustworthy AI and OR technology, and how to ensure all AI and OR systems implement these elements in their system designs. Four sessions on various topics within Trustworthy AI were held, these being Fairness, Explainable AI/Causality, Robustness/Privacy, and Human Alignment and Human-Computer Interaction. Following discussions of each of these topics, workshop participants also brainstormed challenge problems which require the collaboration of AI and OR researchers and will result in the integration of basic techniques from both fields to eventually benefit societal needs.

Tree DNN: A Deep Container Network

Dec 07, 2022

Abstract:Multi-Task Learning (MTL) has shown its importance at user products for fast training, data efficiency, reduced overfitting etc. MTL achieves it by sharing the network parameters and training a network for multiple tasks simultaneously. However, MTL does not provide the solution, if each task needs training from a different dataset. In order to solve the stated problem, we have proposed an architecture named TreeDNN along with it's training methodology. TreeDNN helps in training the model with multiple datasets simultaneously, where each branch of the tree may need a different training dataset. We have shown in the results that TreeDNN provides competitive performance with the advantage of reduced ROM requirement for parameter storage and increased responsiveness of the system by loading only specific branch at inference time.

Reusing Combinatorial Structure: Faster Iterative Projections over Submodular Base Polytopes

Jun 22, 2021

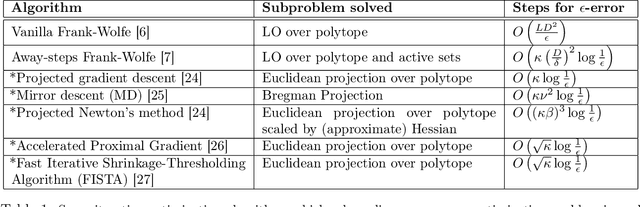

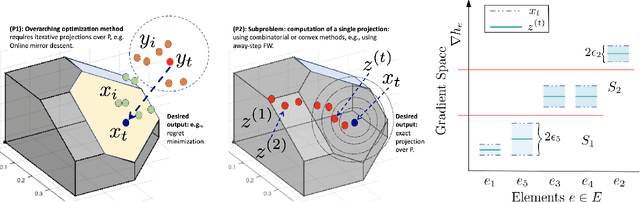

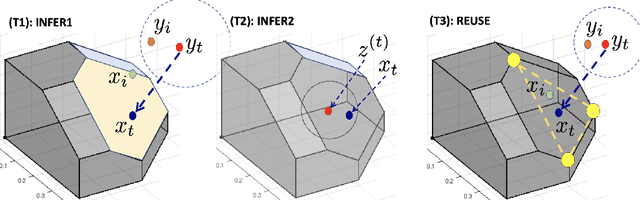

Abstract:Optimization algorithms such as projected Newton's method, FISTA, mirror descent and its variants enjoy near-optimal regret bounds and convergence rates, but suffer from a computational bottleneck of computing "projections'' in potentially each iteration (e.g., $O(T^{1/2})$ regret of online mirror descent). On the other hand, conditional gradient variants solve a linear optimization in each iteration, but result in suboptimal rates (e.g., $O(T^{3/4})$ regret of online Frank-Wolfe). Motivated by this trade-off in runtime v/s convergence rates, we consider iterative projections of close-by points over widely-prevalent submodular base polytopes $B(f)$. We develop a toolkit to speed up the computation of projections using both discrete and continuous perspectives. We subsequently adapt the away-step Frank-Wolfe algorithm to use this information and enable early termination. For the special case of cardinality based submodular polytopes, we improve the runtime of computing certain Bregman projections by a factor of $\Omega(n/\log(n))$. Our theoretical results show orders of magnitude reduction in runtime in preliminary computational experiments.

Taming Wild Price Fluctuations: Monotone Stochastic Convex Optimization with Bandit Feedback

Mar 16, 2021

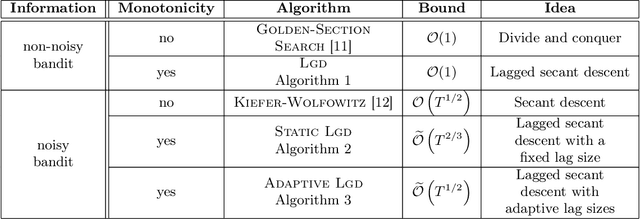

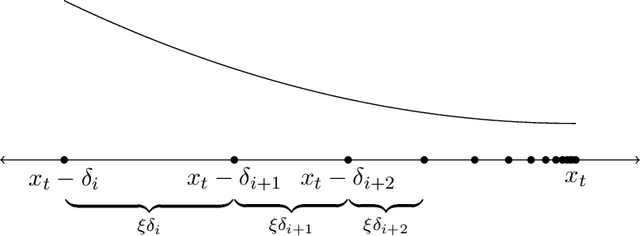

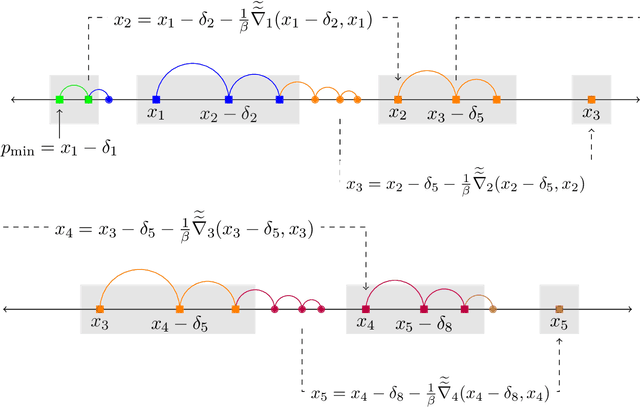

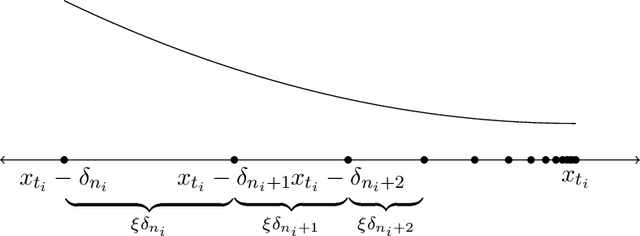

Abstract:Prices generated by automated price experimentation algorithms often display wild fluctuations, leading to unfavorable customer perceptions and violations of individual fairness: e.g., the price seen by a customer can be significantly higher than what was seen by her predecessors, only to fall once again later. To address this concern, we propose demand learning under a monotonicity constraint on the sequence of prices, within the framework of stochastic convex optimization with bandit feedback. Our main contribution is the design of the first sublinear-regret algorithms for monotonic price experimentation for smooth and strongly concave revenue functions under noisy as well as noiseless bandit feedback. The monotonicity constraint presents a unique challenge: since any increase (or decrease) in the decision-levels is final, an algorithm needs to be cautious in its exploration to avoid over-shooting the optimum. At the same time, minimizing regret requires that progress be made towards the optimum at a sufficient pace. Balancing these two goals is particularly challenging under noisy feedback, where obtaining sufficiently accurate gradient estimates is expensive. Our key innovation is to utilize conservative gradient estimates to adaptively tailor the degree of caution to local gradient information, being aggressive far from the optimum and being increasingly cautious as the prices approach the optimum. Importantly, we show that our algorithms guarantee the same regret rates (up to logarithmic factors) as the best achievable rates of regret without the monotonicity requirement.

Multi-Threshold Attention U-Net based Model for Multimodal Brain Tumor Segmentation in MRI scans

Jan 29, 2021

Abstract:Gliomas are one of the most frequent brain tumors and are classified into high grade and low grade gliomas. The segmentation of various regions such as tumor core, enhancing tumor etc. plays an important role in determining severity and prognosis. Here, we have developed a multi-threshold model based on attention U-Net for identification of various regions of the tumor in magnetic resonance imaging (MRI). We propose a multi-path segmentation and built three separate models for the different regions of interest. The proposed model achieved mean Dice Coefficient of 0.59, 0.72, and 0.61 for enhancing tumor, whole tumor and tumor core respectively on the training dataset. The same model gave mean Dice Coefficient of 0.57, 0.73, and 0.61 on the validation dataset and 0.59, 0.72, and 0.57 on the test dataset.

Walking in the Shadow: A New Perspective on Descent Directions for Constrained Minimization

Jun 15, 2020

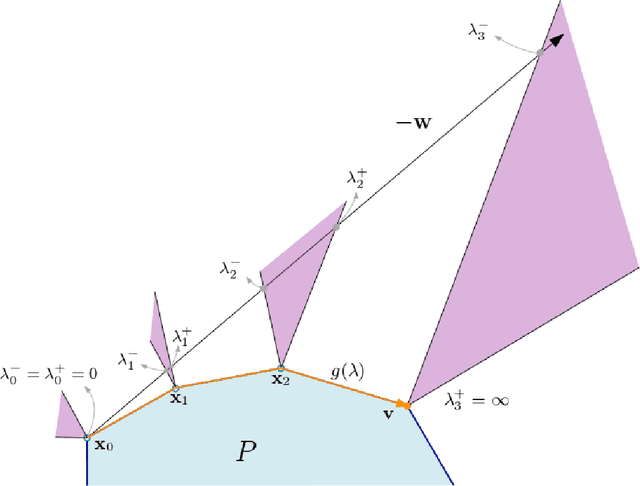

Abstract:Descent directions such as movement towards Frank-Wolfe vertices, away steps, in-face away steps and pairwise directions have been an important design consideration in conditional gradient descent (CGD) variants. In this work, we attempt to demystify the impact of movement in these directions towards attaining constrained minimizers. The best local direction of descent is the directional derivative of the projection of the gradient, which we refer to as the $\textit{shadow}$ of the gradient. We show that the continuous-time dynamics of moving in the shadow are equivalent to those of PGD however non-trivial to discretize. By projecting gradients in PGD, one not only ensures feasibility but also is able to "wrap" around the convex region. We show that Frank-Wolfe (FW) vertices in fact recover the maximal wrap one can obtain by projecting gradients, thus providing a new perspective to these steps. We also claim that the shadow steps give the best direction of descent emanating from the convex hull of all possible away-vertices. Opening up the PGD movements in terms of shadow steps gives linear convergence, dependent on the number of faces. We combine these insights into a novel $S\small{HADOW}$-$CG$ method that uses FW steps (i.e., wrap around the polytope) and shadow steps (i.e., optimal local descent direction), while enjoying linear convergence. Our analysis develops properties of directional derivatives of projections (which may be of independent interest), while providing a unifying view of various descent directions in the CGD literature.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge