Shiwei Wu

Beyond Token Eviction: Mixed-Dimension Budget Allocation for Efficient KV Cache Compression

Mar 21, 2026Abstract:Key-value (KV) caching is widely used to accelerate transformer inference, but its memory cost grows linearly with input length, limiting long-context deployment. Existing token eviction methods reduce memory by discarding less important tokens, which can be viewed as a coarse form of dimensionality reduction that assigns each token either zero or full dimension. We propose MixedDimKV, a mixed-dimension KV cache compression method that allocates dimensions to tokens at a more granular level, and MixedDimKV-H, which further integrates head-level importance information. Experiments on long-context benchmarks show that MixedDimKV outperforms prior KV cache compression methods that do not rely on head-level importance profiling. When equipped with the same head-level importance information, MixedDimKV-H consistently outperforms HeadKV. Notably, our approach achieves comparable performance to full attention on LongBench with only 6.25% of the KV cache. Furthermore, in the Needle-in-a-Haystack test, our solution maintains 100% accuracy at a 50K context length while using as little as 0.26% of the cache.

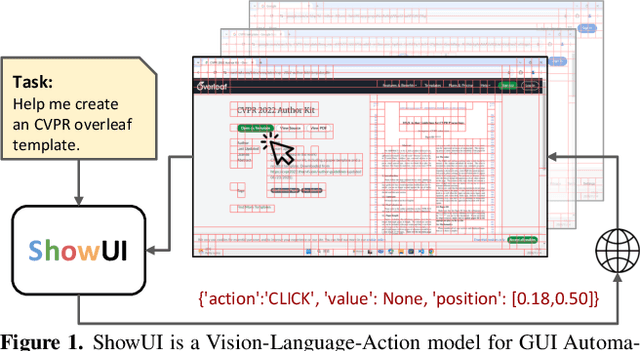

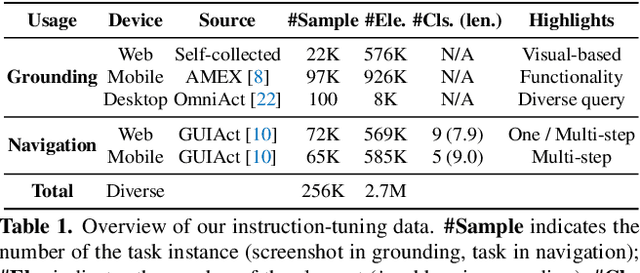

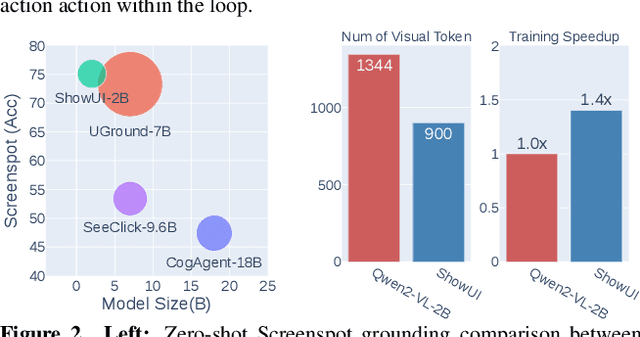

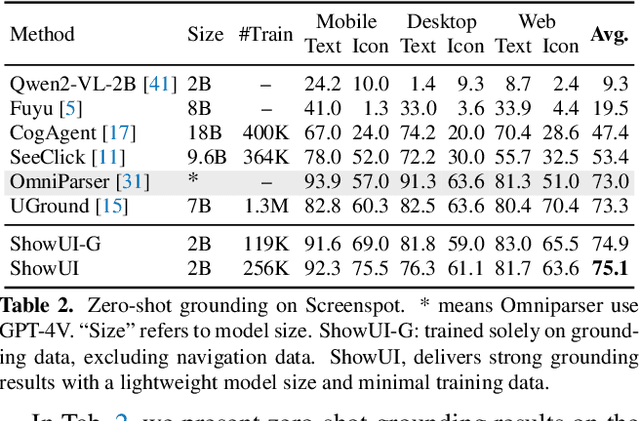

ShowUI: One Vision-Language-Action Model for GUI Visual Agent

Nov 26, 2024

Abstract:Building Graphical User Interface (GUI) assistants holds significant promise for enhancing human workflow productivity. While most agents are language-based, relying on closed-source API with text-rich meta-information (e.g., HTML or accessibility tree), they show limitations in perceiving UI visuals as humans do, highlighting the need for GUI visual agents. In this work, we develop a vision-language-action model in digital world, namely ShowUI, which features the following innovations: (i) UI-Guided Visual Token Selection to reduce computational costs by formulating screenshots as an UI connected graph, adaptively identifying their redundant relationship and serve as the criteria for token selection during self-attention blocks; (ii) Interleaved Vision-Language-Action Streaming that flexibly unifies diverse needs within GUI tasks, enabling effective management of visual-action history in navigation or pairing multi-turn query-action sequences per screenshot to enhance training efficiency; (iii) Small-scale High-quality GUI Instruction-following Datasets by careful data curation and employing a resampling strategy to address significant data type imbalances. With above components, ShowUI, a lightweight 2B model using 256K data, achieves a strong 75.1% accuracy in zero-shot screenshot grounding. Its UI-guided token selection further reduces 33% of redundant visual tokens during training and speeds up the performance by 1.4x. Navigation experiments across web Mind2Web, mobile AITW, and online MiniWob environments further underscore the effectiveness and potential of our model in advancing GUI visual agents. The models are available at https://github.com/showlab/ShowUI.

VideoLLM-MoD: Efficient Video-Language Streaming with Mixture-of-Depths Vision Computation

Aug 29, 2024

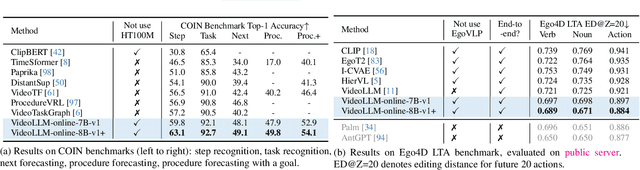

Abstract:A well-known dilemma in large vision-language models (e.g., GPT-4, LLaVA) is that while increasing the number of vision tokens generally enhances visual understanding, it also significantly raises memory and computational costs, especially in long-term, dense video frame streaming scenarios. Although learnable approaches like Q-Former and Perceiver Resampler have been developed to reduce the vision token burden, they overlook the context causally modeled by LLMs (i.e., key-value cache), potentially leading to missed visual cues when addressing user queries. In this paper, we introduce a novel approach to reduce vision compute by leveraging redundant vision tokens "skipping layers" rather than decreasing the number of vision tokens. Our method, VideoLLM-MoD, is inspired by mixture-of-depths LLMs and addresses the challenge of numerous vision tokens in long-term or streaming video. Specifically, for each transformer layer, we learn to skip the computation for a high proportion (e.g., 80\%) of vision tokens, passing them directly to the next layer. This approach significantly enhances model efficiency, achieving approximately \textasciitilde42\% time and \textasciitilde30\% memory savings for the entire training. Moreover, our method reduces the computation in the context and avoid decreasing the vision tokens, thus preserving or even improving performance compared to the vanilla model. We conduct extensive experiments to demonstrate the effectiveness of VideoLLM-MoD, showing its state-of-the-art results on multiple benchmarks, including narration, forecasting, and summarization tasks in COIN, Ego4D, and Ego-Exo4D datasets.

Leveraging Entity Information for Cross-Modality Correlation Learning: The Entity-Guided Multimodal Summarization

Aug 06, 2024Abstract:The rapid increase in multimedia data has spurred advancements in Multimodal Summarization with Multimodal Output (MSMO), which aims to produce a multimodal summary that integrates both text and relevant images. The inherent heterogeneity of content within multimodal inputs and outputs presents a significant challenge to the execution of MSMO. Traditional approaches typically adopt a holistic perspective on coarse image-text data or individual visual objects, overlooking the essential connections between objects and the entities they represent. To integrate the fine-grained entity knowledge, we propose an Entity-Guided Multimodal Summarization model (EGMS). Our model, building on BART, utilizes dual multimodal encoders with shared weights to process text-image and entity-image information concurrently. A gating mechanism then combines visual data for enhanced textual summary generation, while image selection is refined through knowledge distillation from a pre-trained vision-language model. Extensive experiments on public MSMO dataset validate the superiority of the EGMS method, which also prove the necessity to incorporate entity information into MSMO problem.

VideoLLM-online: Online Video Large Language Model for Streaming Video

Jun 17, 2024

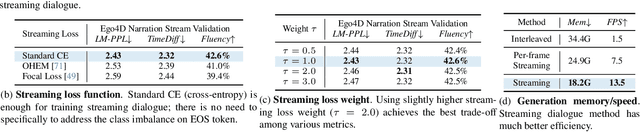

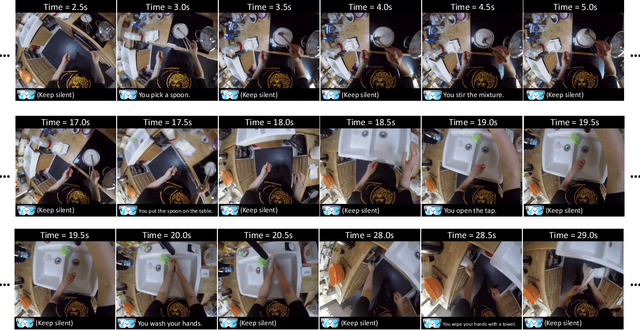

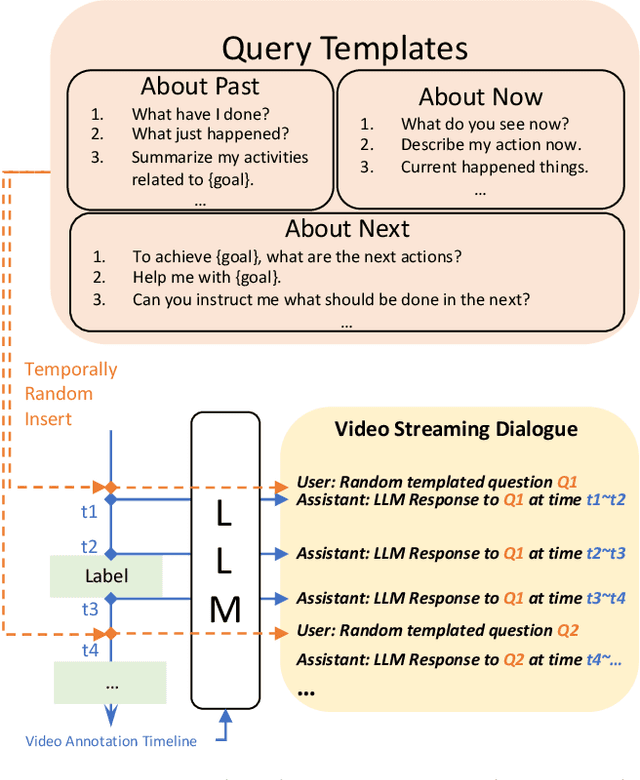

Abstract:Recent Large Language Models have been enhanced with vision capabilities, enabling them to comprehend images, videos, and interleaved vision-language content. However, the learning methods of these large multimodal models typically treat videos as predetermined clips, making them less effective and efficient at handling streaming video inputs. In this paper, we propose a novel Learning-In-Video-Stream (LIVE) framework, which enables temporally aligned, long-context, and real-time conversation within a continuous video stream. Our LIVE framework comprises comprehensive approaches to achieve video streaming dialogue, encompassing: (1) a training objective designed to perform language modeling for continuous streaming inputs, (2) a data generation scheme that converts offline temporal annotations into a streaming dialogue format, and (3) an optimized inference pipeline to speed up the model responses in real-world video streams. With our LIVE framework, we built VideoLLM-online model upon Llama-2/Llama-3 and demonstrate its significant advantages in processing streaming videos. For instance, on average, our model can support streaming dialogue in a 5-minute video clip at over 10 FPS on an A100 GPU. Moreover, it also showcases state-of-the-art performance on public offline video benchmarks, such as recognition, captioning, and forecasting. The code, model, data, and demo have been made available at https://showlab.github.io/videollm-online.

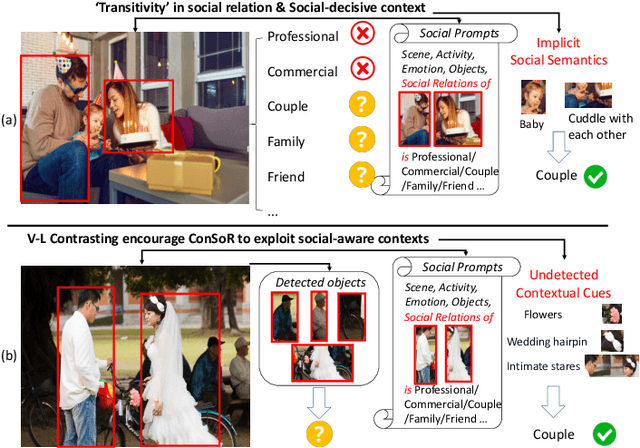

From a Social Cognitive Perspective: Context-aware Visual Social Relationship Recognition

Jun 12, 2024

Abstract:People's social relationships are often manifested through their surroundings, with certain objects or interactions acting as symbols for specific relationships, e.g., wedding rings, roses, hugs, or holding hands. This brings unique challenges to recognizing social relationships, requiring understanding and capturing the essence of these contexts from visual appearances. However, current methods of social relationship understanding rely on the basic classification paradigm of detected persons and objects, which fails to understand the comprehensive context and often overlooks decisive social factors, especially subtle visual cues. To highlight the social-aware context and intricate details, we propose a novel approach that recognizes \textbf{Con}textual \textbf{So}cial \textbf{R}elationships (\textbf{ConSoR}) from a social cognitive perspective. Specifically, to incorporate social-aware semantics, we build a lightweight adapter upon the frozen CLIP to learn social concepts via our novel multi-modal side adapter tuning mechanism. Further, we construct social-aware descriptive language prompts (e.g., scene, activity, objects, emotions) with social relationships for each image, and then compel ConSoR to concentrate more intensively on the decisive visual social factors via visual-linguistic contrasting. Impressively, ConSoR outperforms previous methods with a 12.2\% gain on the People-in-Social-Context (PISC) dataset and a 9.8\% increase on the People-in-Photo-Album (PIPA) benchmark. Furthermore, we observe that ConSoR excels at finding critical visual evidence to reveal social relationships.

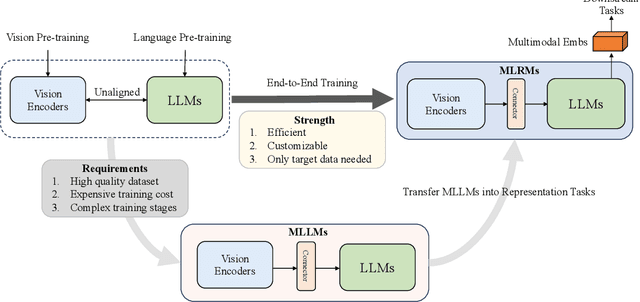

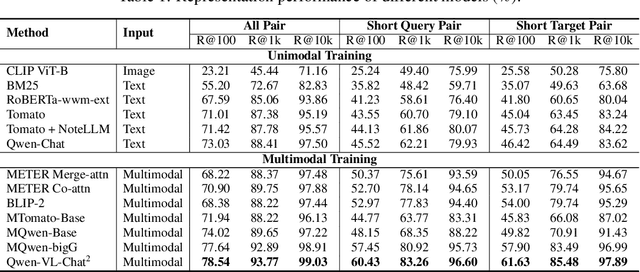

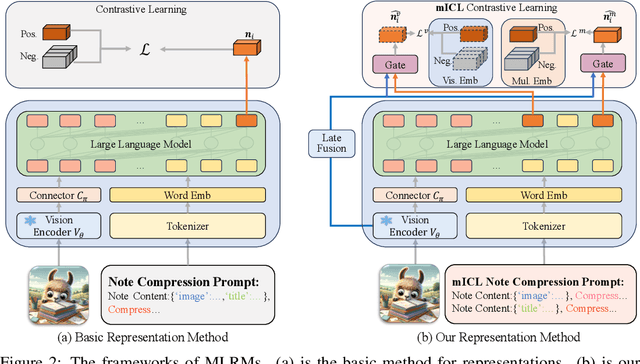

NoteLLM-2: Multimodal Large Representation Models for Recommendation

May 27, 2024

Abstract:Large Language Models (LLMs) have demonstrated exceptional text understanding. Existing works explore their application in text embedding tasks. However, there are few works utilizing LLMs to assist multimodal representation tasks. In this work, we investigate the potential of LLMs to enhance multimodal representation in multimodal item-to-item (I2I) recommendations. One feasible method is the transfer of Multimodal Large Language Models (MLLMs) for representation tasks. However, pre-training MLLMs usually requires collecting high-quality, web-scale multimodal data, resulting in complex training procedures and high costs. This leads the community to rely heavily on open-source MLLMs, hindering customized training for representation scenarios. Therefore, we aim to design an end-to-end training method that customizes the integration of any existing LLMs and vision encoders to construct efficient multimodal representation models. Preliminary experiments show that fine-tuned LLMs in this end-to-end method tend to overlook image content. To overcome this challenge, we propose a novel training framework, NoteLLM-2, specifically designed for multimodal representation. We propose two ways to enhance the focus on visual information. The first method is based on the prompt viewpoint, which separates multimodal content into visual content and textual content. NoteLLM-2 adopts the multimodal In-Content Learning method to teach LLMs to focus on both modalities and aggregate key information. The second method is from the model architecture, utilizing a late fusion mechanism to directly fuse visual information into textual information. Extensive experiments have been conducted to validate the effectiveness of our method.

NoteLLM: A Retrievable Large Language Model for Note Recommendation

Mar 04, 2024

Abstract:People enjoy sharing "notes" including their experiences within online communities. Therefore, recommending notes aligned with user interests has become a crucial task. Existing online methods only input notes into BERT-based models to generate note embeddings for assessing similarity. However, they may underutilize some important cues, e.g., hashtags or categories, which represent the key concepts of notes. Indeed, learning to generate hashtags/categories can potentially enhance note embeddings, both of which compress key note information into limited content. Besides, Large Language Models (LLMs) have significantly outperformed BERT in understanding natural languages. It is promising to introduce LLMs into note recommendation. In this paper, we propose a novel unified framework called NoteLLM, which leverages LLMs to address the item-to-item (I2I) note recommendation. Specifically, we utilize Note Compression Prompt to compress a note into a single special token, and further learn the potentially related notes' embeddings via a contrastive learning approach. Moreover, we use NoteLLM to summarize the note and generate the hashtag/category automatically through instruction tuning. Extensive validations on real scenarios demonstrate the effectiveness of our proposed method compared with the online baseline and show major improvements in the recommendation system of Xiaohongshu.

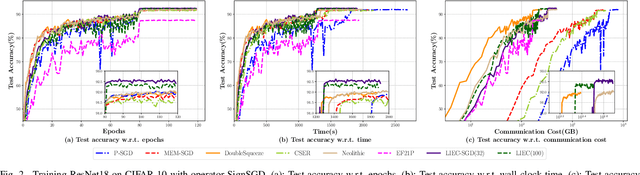

Communication-Efficient Distributed Learning with Local Immediate Error Compensation

Feb 19, 2024

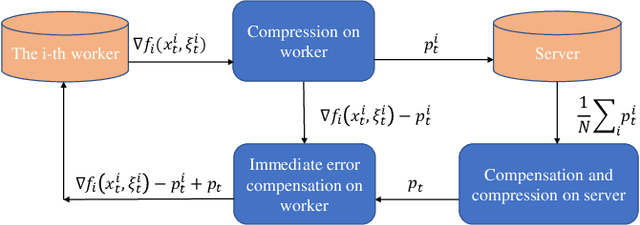

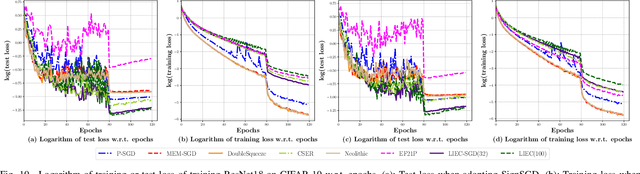

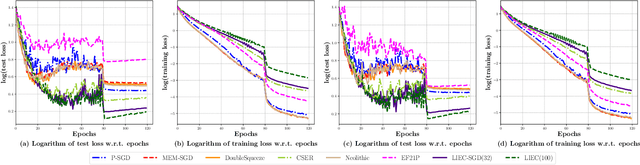

Abstract:Gradient compression with error compensation has attracted significant attention with the target of reducing the heavy communication overhead in distributed learning. However, existing compression methods either perform only unidirectional compression in one iteration with higher communication cost, or bidirectional compression with slower convergence rate. In this work, we propose the Local Immediate Error Compensated SGD (LIEC-SGD) optimization algorithm to break the above bottlenecks based on bidirectional compression and carefully designed compensation approaches. Specifically, the bidirectional compression technique is to reduce the communication cost, and the compensation technique compensates the local compression error to the model update immediately while only maintaining the global error variable on the server throughout the iterations to boost its efficacy. Theoretically, we prove that LIEC-SGD is superior to previous works in either the convergence rate or the communication cost, which indicates that LIEC-SGD could inherit the dual advantages from unidirectional compression and bidirectional compression. Finally, experiments of training deep neural networks validate the effectiveness of the proposed LIEC-SGD algorithm.

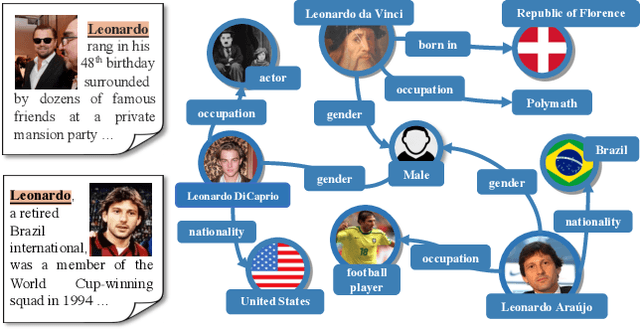

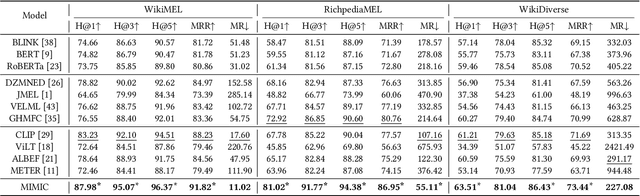

Multi-Grained Multimodal Interaction Network for Entity Linking

Jul 19, 2023

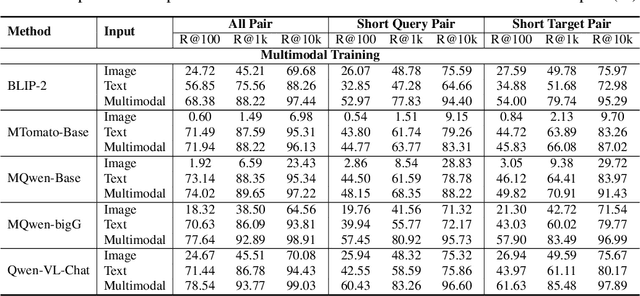

Abstract:Multimodal entity linking (MEL) task, which aims at resolving ambiguous mentions to a multimodal knowledge graph, has attracted wide attention in recent years. Though large efforts have been made to explore the complementary effect among multiple modalities, however, they may fail to fully absorb the comprehensive expression of abbreviated textual context and implicit visual indication. Even worse, the inevitable noisy data may cause inconsistency of different modalities during the learning process, which severely degenerates the performance. To address the above issues, in this paper, we propose a novel Multi-GraIned Multimodal InteraCtion Network $\textbf{(MIMIC)}$ framework for solving the MEL task. Specifically, the unified inputs of mentions and entities are first encoded by textual/visual encoders separately, to extract global descriptive features and local detailed features. Then, to derive the similarity matching score for each mention-entity pair, we device three interaction units to comprehensively explore the intra-modal interaction and inter-modal fusion among features of entities and mentions. In particular, three modules, namely the Text-based Global-Local interaction Unit (TGLU), Vision-based DuaL interaction Unit (VDLU) and Cross-Modal Fusion-based interaction Unit (CMFU) are designed to capture and integrate the fine-grained representation lying in abbreviated text and implicit visual cues. Afterwards, we introduce a unit-consistency objective function via contrastive learning to avoid inconsistency and model degradation. Experimental results on three public benchmark datasets demonstrate that our solution outperforms various state-of-the-art baselines, and ablation studies verify the effectiveness of designed modules.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge